FPPS: An FPGA-Based Point Cloud Processing System

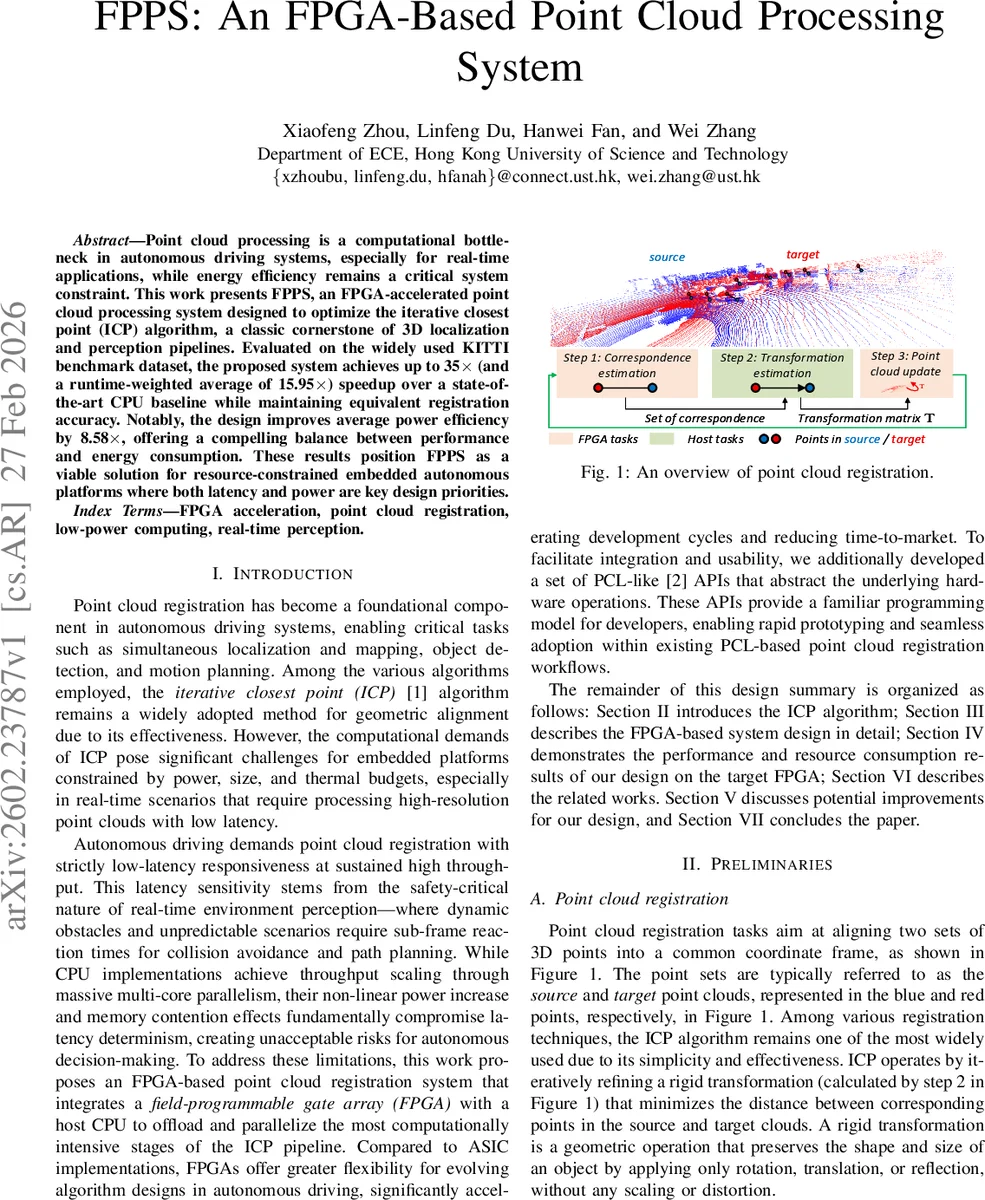

Point cloud processing is a computational bottleneck in autonomous driving systems, especially for real-time applications, while energy efficiency remains a critical system constraint. This work presents FPPS, an FPGA-accelerated point cloud processing system designed to optimize the iterative closest point (ICP) algorithm, a classic cornerstone of 3D localization and perception pipelines. Evaluated on the widely used KITTI benchmark dataset, the proposed system achieves up to 35$\times$ (and a runtime-weighted average of 15.95x) speedup over a state-of-the-art CPU baseline while maintaining equivalent registration accuracy. Notably, the design improves average power efficiency by 8.58x, offering a compelling balance between performance and energy consumption. These results position FPPS as a viable solution for resource-constrained embedded autonomous platforms where both latency and power are key design priorities.

💡 Research Summary

The paper presents FPPS, a hardware‑accelerated point‑cloud registration framework that offloads the most computationally intensive stages of the Iterative Closest Point (ICP) algorithm to an FPGA. The authors target autonomous‑driving platforms where low latency, high accuracy, and strict power budgets are simultaneously required.

System Architecture

FPPS adopts a heterogeneous architecture consisting of a host CPU and an FPGA‑based accelerator on an AMD Alveo U50 card. The CPU handles data movement, control flow, and API calls, while the FPGA implements a pipelined ICP kernel that includes (1) nearest‑neighbor (NN) search, (2) covariance accumulation, and (3) singular‑value decomposition (SVD) for rigid‑body transformation estimation. Two on‑chip BRAM buffers store source and target point clouds, enabling the entire NN search to be performed without external memory accesses.

Nearest‑Neighbor Engine

The NN engine is the core of the accelerator. It follows a streaming model with four concurrent stages: data reading, distance computation, distance comparison, and result accumulation. Source points are loaded into a register buffer; target points are partitioned and broadcast to a systolic array of processing elements (PEs). Each PE computes Euclidean distances in parallel for a batch of candidate points (≈130 k candidates per source point). A hierarchical comparator tree selects the minimum distance and its corresponding target index. The selected correspondence is immediately forwarded to the accumulator, preserving a fully deterministic latency.

Transformation Estimation

Accumulated correspondences are used to build the covariance matrix, which is then decomposed by an FPGA‑implemented SVD using the device’s abundant DSP resources. Fixed‑point arithmetic and careful scaling maintain numerical stability while reducing resource usage. The resulting rotation matrix R and translation vector t are combined into a 4 × 4 homogeneous transform that updates the source cloud for the next ICP iteration.

Software Interface

To ease adoption, the authors provide a set of PCL‑compatible APIs (e.g., setInputSource, setInputTarget, align). These wrappers hide the hardware details, allowing developers to replace a pure‑CPU ICP call with a single function that internally launches the FPGA kernel.

Experimental Setup

Evaluation uses the KITTI odometry benchmark (sequences 00–09). Each frame samples 4 096 points from the source cloud and runs up to 50 ICP iterations. The host is an 11th‑gen Intel Core i5‑11400; the CPU baseline runs a state‑of‑the‑art PCL ICP implementation on an Intel Xeon Gold 6246R. Power is measured with PowerTOP v2.11.

Results

- Accuracy: Average RMSE across sequences differs by ≤0.01 m between CPU‑only and CPU+FPGA, confirming numerical equivalence.

- Latency: Per‑frame processing time drops from 3.7 s–7.0 s (CPU) to 0.14 s–0.54 s (FPGA), yielding speed‑ups ranging from 4.8× to 35.4×.

- Power Efficiency: The FPGA design consumes 28 W (14 W static + 14 W dynamic) plus 2.3 W host power, achieving 8.58× higher performance‑per‑watt compared with the 16.3 W CPU baseline.

- Resource Utilization: On the U50, the design occupies 71.9 % of LUTs, 50.6 % of FFs, 45.6 % of BRAM, and 80.1 % of DSPs within a single Super Logic Region, leaving the other SLR free for future extensions.

Design Choices

The authors deliberately avoid k‑d‑tree based NN search because its sequential traversal introduces latency and control‑flow complexity unsuitable for deep pipelining. Instead, a fully parallel systolic array provides deterministic timing and scales with the FPGA’s fabric.

Related Work Comparison

Prior FPGA ICP accelerators either target low‑resolution indoor scenes, rely on approximate KNN, or require static graph structures that limit adaptability. FPPS distinguishes itself by accelerating the full ICP pipeline with exact NN, preserving PCL compatibility, and delivering orders‑of‑magnitude speed‑up while maintaining accuracy—attributes essential for real‑time autonomous driving.

Conclusion

FPPS demonstrates that a carefully crafted FPGA accelerator can meet the stringent latency, accuracy, and power constraints of modern autonomous‑driving perception stacks. By integrating a streaming NN engine, on‑chip covariance accumulation, and FPGA‑based SVD, and by exposing a familiar software API, the system offers a practical path for deploying high‑performance 3‑D registration on resource‑constrained embedded platforms. Future work may explore scaling to larger point clouds, dynamic reconfiguration for varying workloads, and integration with other perception modules such as SLAM or object detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment