Shuffle Mamba: State Space Models with Random Shuffle for Multi-Modal Image Fusion

Multi-modal image fusion integrates complementary information from different modalities to produce enhanced and informative images. Although State-Space Models such as Mamba are proficient at long-range modeling with linear complexity, most Mamba-based approaches use fixed scanning strategies, which can introduce biased prior information. To mitigate this issue, we propose a novel Bayesian-inspired scanning strategy called Random Shuffle, supplemented by a theoretically feasible inverse shuffle that maintains information coordination invariance, to eliminate the biases associated with fixed-sequence scanning. Based on this transformation pair, we design a customized Shuffle Mamba framework that covers modality-aware information representation and cross-modality information interaction across the spatial and channel axes, ensuring robust interaction and an unbiased global receptive field for multi-modal image fusion. Furthermore, we develop a testing methodology based on Monte-Carlo averaging so that the model’s output aligns more closely with its expected value. Extensive experiments across multiple multi-modal image fusion tasks demonstrate the effectiveness of the proposed method, which yields excellent fusion quality compared with state-of-the-art alternatives. The code is available at https://github.com/caoke-963/Shuffle-Mamba.

💡 Research Summary

The paper introduces Shuffle Mamba, a framework that addresses the bias that fixed scanning orders introduce into state‑space models (SSMs) such as Mamba when they are applied to two‑dimensional visual data. Conventional Mamba‑based vision models flatten image patches into a 1‑D sequence and scan them in a fixed order. Because the scan is causal, each token aggregates context only from the tokens that precede it, so the amount of context a patch receives is dictated by where the fixed order places it rather than by image content, leading to an unbalanced, order‑dependent global perception. To eliminate this bias, the authors propose a Bayesian‑inspired Random Shuffle scanning strategy: input patches are randomly permuted before being fed into the Mamba block, and an inverse shuffle restores the original spatial order after processing. In expectation over permutations, this pair of transformations yields an unbiased global receptive field without adding parameters or raising the computational cost above the linear complexity of SSMs.
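
To make the shuffle/inverse‑shuffle pair concrete, here is a minimal sketch in plain Python. It assumes a toy scalar linear recurrence (`causal_scan`) as a stand‑in for Mamba's selective scan, and every function name is a hypothetical illustration, not the authors' code. It shows two things: in a causal scan, output t depends only on inputs 0..t, so scan position determines context; and gathering tokens with a random permutation, scanning, then scattering the results back with the inverse permutation returns every output to its original spatial coordinate.

```python
import random
from typing import Callable, List, Sequence

Scan = Callable[[Sequence[float]], List[float]]


def causal_scan(x: Sequence[float], a: float = 0.9, b: float = 1.0) -> List[float]:
    """Toy stand-in for an SSM scan (assumption): h_t = a * h_{t-1} + b * x_t, y_t = h_t.

    Output t depends only on x[0..t], so a token's context is set by its
    position in the scan order.
    """
    h, out = 0.0, []
    for v in x:
        h = a * h + b * v
        out.append(h)
    return out


def random_permutation(n: int, rng: random.Random) -> List[int]:
    """Sample a uniformly random ordering of token indices 0..n-1."""
    perm = list(range(n))
    rng.shuffle(perm)
    return perm


def inverse_permutation(perm: Sequence[int]) -> List[int]:
    """Return inv with inv[perm[i]] = i, i.e. original index -> shuffled position."""
    inv = [0] * len(perm)
    for i, p in enumerate(perm):
        inv[p] = i
    return inv


def shuffled_scan(x: Sequence[float], scan: Scan, rng: random.Random) -> List[float]:
    """Hypothetical helper: random shuffle -> scan -> inverse shuffle."""
    perm = random_permutation(len(x), rng)
    x_shuf = [x[p] for p in perm]  # position i now holds original token perm[i]
    y_shuf = scan(x_shuf)
    inv = inverse_permutation(perm)
    return [y_shuf[inv[j]] for j in range(len(x))]  # put token j's output back at j


if __name__ == "__main__":
    rng = random.Random(0)
    x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
    # A position-wise op is unaffected by shuffle + inverse shuffle.
    print(shuffled_scan(x, lambda s: [2.0 * v for v in s], rng))  # [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
    # A causal scan is affected: each token's output depends on the sampled order.
    print(shuffled_scan(x, causal_scan, rng))
```

Because every call samples a new permutation, a token's causal‑scan output varies from call to call; no single order is privileged, which is the property the Random Shuffle strategy relies on.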

Shuffle Mamba comprises three key modules: (1) the Random Mamba Block, which implements the basic shuffle/inverse‑shuffle pipeline; (2) the Random Channel Interactive Mamba Block, which applies random shuffling along the channel dimension to promote cross‑spectral interaction; and (3) the Random Modal Interactive Mamba Block, which facilitates information exchange between modalities (e.g., PAN and MS, or CT and MRI). During training, each mini‑batch is processed with an independently sampled random permutation, so the model never learns a deterministic spatial prior. At inference time, Monte‑Carlo averaging is used: several forward passes, each with a freshly sampled shuffle and its inverse, are performed and their outputs averaged. This approximates the theoretical expectation over permutations and reduces the variance that a single random permutation would introduce.
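
The Monte‑Carlo test procedure can be sketched in the same way. This is a minimal, self‑contained illustration under stated assumptions: a toy scalar recurrence again stands in for the Mamba block, and `shuffled_pass` and `monte_carlo_inference` are hypothetical names, not part of the released code. Each pass samples a fresh permutation, scans the shuffled sequence, and scatters the outputs back to their original positions; the prediction is the mean over `num_samples` passes.

```python
import random
from typing import Callable, List, Sequence

Scan = Callable[[Sequence[float]], List[float]]


def toy_scan(x: Sequence[float], a: float = 0.9) -> List[float]:
    """Toy causal recurrence h_t = a * h_{t-1} + x_t (assumed stand-in for an SSM)."""
    h, out = 0.0, []
    for v in x:
        h = a * h + v
        out.append(h)
    return out


def shuffled_pass(x: Sequence[float], scan: Scan, rng: random.Random) -> List[float]:
    """One stochastic forward pass: random shuffle, scan, inverse shuffle."""
    perm = list(range(len(x)))
    rng.shuffle(perm)
    y_shuf = scan([x[p] for p in perm])
    y = [0.0] * len(x)
    for i, p in enumerate(perm):
        y[p] = y_shuf[i]  # scatter back: output computed for original token p
    return y


def monte_carlo_inference(
    x: Sequence[float], scan: Scan, num_samples: int = 16, seed: int = 0
) -> List[float]:
    """Average num_samples independent shuffled passes to estimate the expectation."""
    if num_samples < 1:
        raise ValueError("num_samples must be >= 1")
    rng = random.Random(seed)
    total = [0.0] * len(x)
    for _ in range(num_samples):
        y = shuffled_pass(x, scan, rng)
        total = [t + v for t, v in zip(total, y)]
    return [t / num_samples for t in total]


if __name__ == "__main__":
    x = [1.0] * 8
    # For a constant input, every token is equally likely to land at each scan
    # position, so the exact expectation is the mean of the ordered scan output.
    exact = sum(toy_scan(x)) / len(x)
    for k in (1, 16, 256):
        est = monte_carlo_inference(x, toy_scan, num_samples=k)
        worst = max(abs(v - exact) for v in est)
        print(f"K={k:4d}  max |estimate - expectation| = {worst:.3f}")
```

Averaging K independent passes shrinks the standard error roughly as 1/√K, so the design choice is a trade‑off between test‑time cost (K forward passes) and how closely the output matches the expectation.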

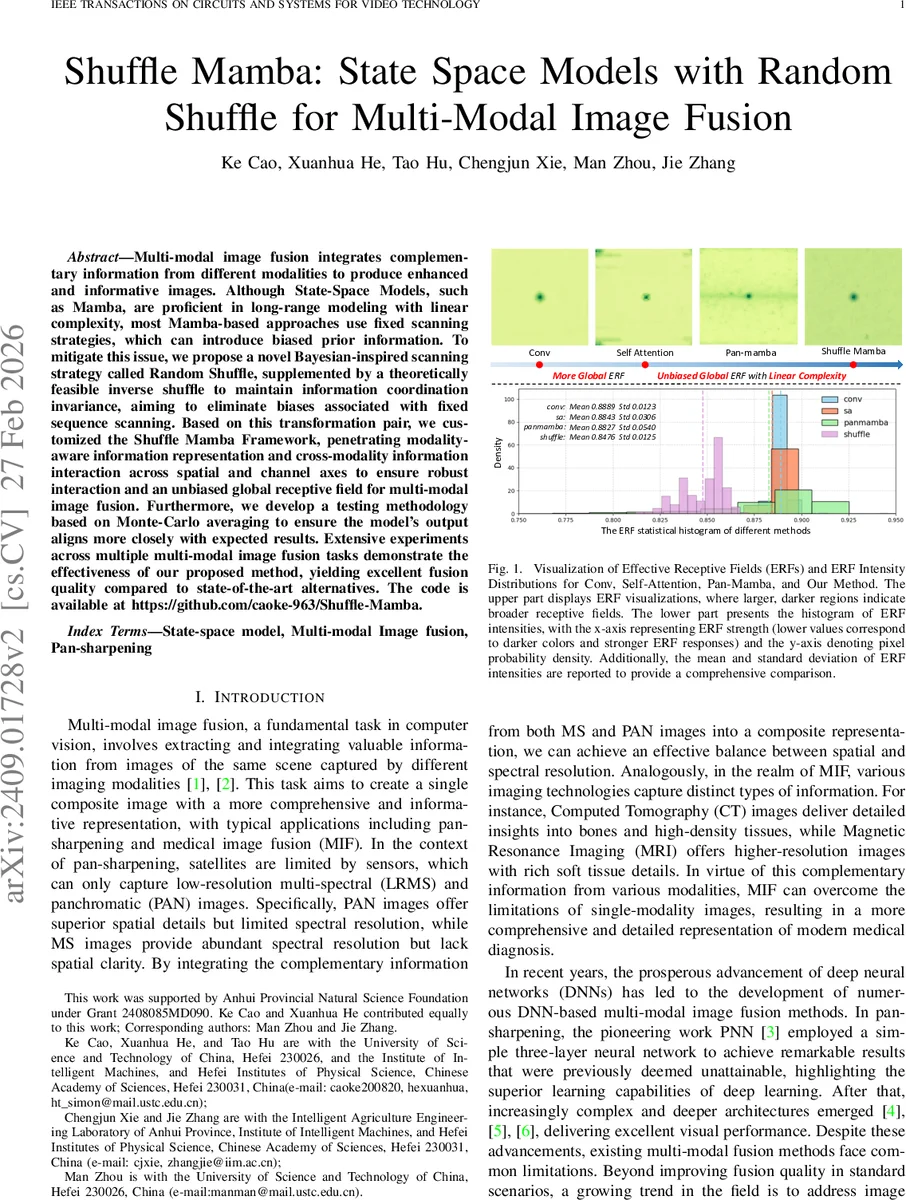

Extensive experiments on two representative multi‑modal fusion tasks, pan‑sharpening (low‑resolution multispectral + high‑resolution panchromatic) and medical image fusion (CT + MRI), show that Shuffle Mamba outperforms state‑of‑the‑art baselines, including Pan‑Mamba, transformer‑based fusion networks, and classic CNN approaches. Quantitative metrics (PSNR, SSIM, SAM, ERGAS) and qualitative visual assessments confirm superior spectral fidelity and spatial detail preservation. Moreover, Effective Receptive Field (ERF) analysis shows that Shuffle Mamba yields a left‑shifted intensity distribution with lower mean and variance, which the authors interpret as a more uniform and stronger global correlation across all spatial locations.
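
For reference, three of the metrics named above can be computed as follows. This is a minimal plain‑Python sketch using common textbook definitions, not necessarily the evaluation code used in the paper; conventions vary across toolkits (data range for PSNR, SAM reported in degrees, and the resolution ratio in ERGAS, assumed here to default to 1/4 for a 4× pan‑sharpening setting). Images are assumed to be lists of bands, each band a flattened list of pixel values; SSIM is omitted for brevity.

```python
import math
from typing import Sequence

Image = Sequence[Sequence[float]]  # assumed layout: bands x flattened pixels


def psnr(ref: Image, est: Image, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB over all bands and pixels."""
    n = sum(len(band) for band in ref)
    mse = sum((r - e) ** 2 for rb, eb in zip(ref, est) for r, e in zip(rb, eb)) / n
    return math.inf if mse == 0.0 else 10.0 * math.log10(max_val ** 2 / mse)


def sam_degrees(ref: Image, est: Image) -> float:
    """Mean spectral angle (degrees) between per-pixel spectral vectors."""
    num_pixels = len(ref[0])
    total = 0.0
    for p in range(num_pixels):
        u = [band[p] for band in ref]
        v = [band[p] for band in est]
        norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
        if norm == 0.0:
            continue  # assumption: an all-zero spectrum contributes a zero angle
        cos = sum(a * b for a, b in zip(u, v)) / norm
        total += math.acos(max(-1.0, min(1.0, cos)))  # clamp floating-point overshoot
    return math.degrees(total / num_pixels)


def ergas(ref: Image, est: Image, ratio: float = 0.25) -> float:
    """ERGAS = 100 * ratio * sqrt(mean_k (RMSE_k / mean_k)^2).

    ratio is the PAN/MS pixel-size ratio (assumed 1/4); reference bands are
    assumed to have a nonzero mean.
    """
    acc = 0.0
    for rb, eb in zip(ref, est):
        rmse = math.sqrt(sum((r - e) ** 2 for r, e in zip(rb, eb)) / len(rb))
        acc += (rmse / (sum(rb) / len(rb))) ** 2
    return 100.0 * ratio * math.sqrt(acc / len(ref))
```

Higher PSNR is better, while lower SAM (spectral distortion) and lower ERGAS (global relative error) are better; SAM is invariant to per‑pixel intensity scaling, which is why it is paired with intensity‑sensitive metrics.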

In summary, the work provides a theoretically sound and practically efficient solution to the scanning bias problem in SSM‑based vision models. By leveraging random shuffling and Monte‑Carlo testing, Shuffle Mamba achieves unbiased global modeling with linear complexity, setting a new benchmark for multi‑modal image fusion and opening avenues for further integration of stochastic scanning strategies into other SSM or transformer architectures.
