FuxiShuffle: An Adaptive and Resilient Shuffle Service for Distributed Data Processing on Alibaba Cloud

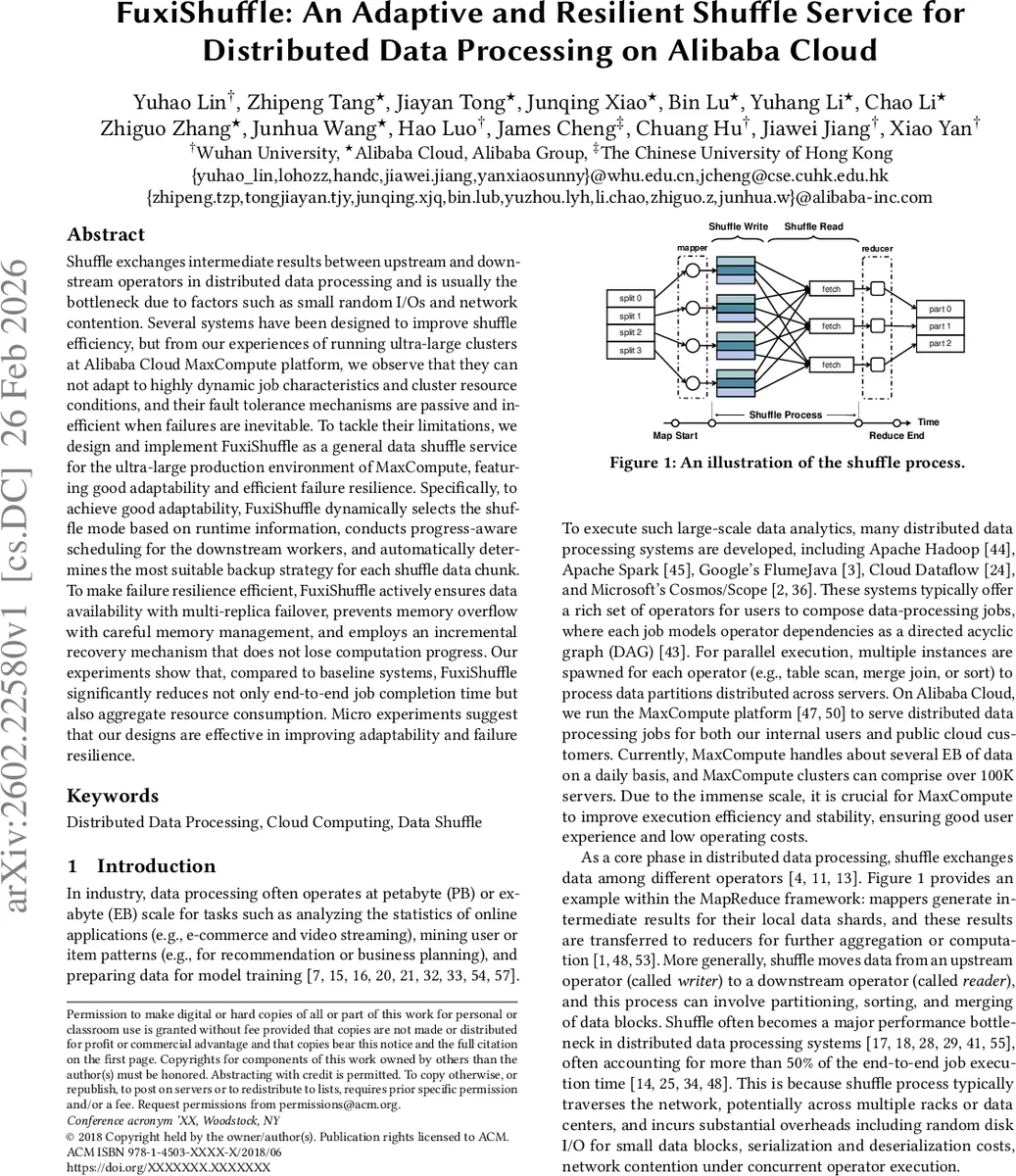

Shuffle exchanges intermediate results between upstream and downstream operators in distributed data processing and is usually the bottleneck due to factors such as small random I/Os and network contention. Several systems have been designed to improve shuffle efficiency, but from our experience running ultra-large clusters on the Alibaba Cloud MaxCompute platform, we observe that they cannot adapt to highly dynamic job characteristics and cluster resource conditions, and that their fault tolerance mechanisms are passive and inefficient even though failures are inevitable. To address these limitations, we design and implement FuxiShuffle as a general data shuffle service for the ultra-large production environment of MaxCompute, featuring good adaptability and efficient failure resilience. Specifically, to achieve good adaptability, FuxiShuffle dynamically selects the shuffle mode based on runtime information, conducts progress-aware scheduling for the downstream workers, and automatically determines the most suitable backup strategy for each shuffle data chunk. To make failure resilience efficient, FuxiShuffle actively ensures data availability with multi-replica failover, prevents memory overflow with careful memory management, and employs an incremental recovery mechanism that does not lose computation progress. Our experiments show that, compared to baseline systems, FuxiShuffle significantly reduces not only end-to-end job completion time but also aggregate resource consumption. Micro experiments suggest that our designs are effective in improving adaptability and failure resilience.

💡 Research Summary

The paper presents FuxiShuffle, a shuffle service designed for the massive-scale data‑processing environment of Alibaba Cloud’s MaxCompute platform. Shuffle, the phase that moves intermediate results from upstream operators (writers) to downstream operators (readers), is a well‑known bottleneck in distributed systems because it often involves many small random I/O operations and heavy network traffic. Existing solutions such as HDShuffle, iShuffle, Magnet, and others either fix the shuffle mode (always in‑memory or always on‑disk) or rely on static scheduling policies (staged or gang scheduling). These approaches cannot adapt to the highly dynamic job characteristics (varying data sizes, execution times, skew) and to the constantly changing resource conditions (memory pressure, network congestion) that are typical in production clusters with tens of thousands of nodes and exabyte‑scale daily workloads.

FuxiShuffle addresses two fundamental shortcomings: poor adaptability and inefficient fault tolerance. Its design introduces three adaptive mechanisms that operate across the entire shuffle lifecycle. First, a dynamic shuffle‑mode selector uses an execution‑time threshold that is continuously tuned according to the amount of idle memory in the cluster. Jobs whose tasks are short and data‑light are automatically assigned to in‑memory shuffle, while long‑running or large‑data tasks fall back to on‑disk shuffle, thereby preventing OOM while exploiting memory when it is abundant. Second, the service replaces static staged or gang scheduling with “progress‑aware” (or progressive) scheduling. Downstream readers are launched incrementally as soon as a configurable fraction of the upstream writers have produced data, and the scheduler continuously balances reader‑writer concurrency based on real‑time progress and resource usage. This eliminates the long idle periods of staged scheduling and the wasteful idle readers of gang scheduling. Third, for each shuffle partition FuxiShuffle decides at runtime whether to perform aggregated writing (to improve throughput) or to create replicated backups (to improve reliability). The decision is driven by partition size, skew, and the job’s SLA, allowing the system to trade off performance and robustness on a per‑partition basis.
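The first two mechanisms can be illustrated with a minimal sketch. It is not FuxiShuffle's implementation: the names `TaskEstimate`, `runtime_threshold_s`, `select_shuffle_mode`, and `readers_to_launch`, the linear threshold formula, and every default value (60 s base threshold, 256 MiB size cap, 20 % start fraction) are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass
class TaskEstimate:
    """Hypothetical per-task estimates available at scheduling time."""
    expected_runtime_s: float
    expected_shuffle_bytes: int


def runtime_threshold_s(idle_memory_fraction: float,
                        base_threshold_s: float = 60.0) -> float:
    """Assumed tuning rule: the threshold grows linearly with idle cluster memory."""
    frac = min(max(idle_memory_fraction, 0.0), 1.0)  # clamp to [0, 1]
    return base_threshold_s * 2.0 * frac


def select_shuffle_mode(task: TaskEstimate,
                        idle_memory_fraction: float,
                        max_in_memory_bytes: int = 256 * 1024 * 1024) -> str:
    """Short, data-light tasks shuffle in memory; everything else goes to disk."""
    threshold = runtime_threshold_s(idle_memory_fraction)
    if (task.expected_runtime_s <= threshold
            and task.expected_shuffle_bytes <= max_in_memory_bytes):
        return "in_memory"
    return "on_disk"


def readers_to_launch(writers_done: int, writers_total: int,
                      readers_total: int, start_fraction: float = 0.2) -> int:
    """Progress-aware launch: no readers until start_fraction of writers have
    finished, then a reader count proportional to writer progress (rounded up)."""
    if writers_total <= 0 or writers_done < start_fraction * writers_total:
        return 0
    done = min(writers_done, writers_total)
    # Integer ceiling of readers_total * done / writers_total.
    return (readers_total * done + writers_total - 1) // writers_total


if __name__ == "__main__":
    small = TaskEstimate(expected_runtime_s=30, expected_shuffle_bytes=10 * 1024 * 1024)
    print(select_shuffle_mode(small, idle_memory_fraction=0.5))
    print(select_shuffle_mode(small, idle_memory_fraction=0.1))
    print(readers_to_launch(writers_done=5, writers_total=10, readers_total=8))
```

With these assumed defaults, the same 30-second task is placed in memory when half of the cluster memory is idle and on disk when only 10 % is idle, which mirrors the fallback behaviour described above. A real selector would presumably derive its threshold from measured cluster state rather than a fixed linear rule.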

On the fault‑tolerance side, FuxiShuffle introduces proactive resilience. Writers are grouped by destination partition and assigned to a Shuffle Agent; each agent is replicated, forming “Shuffle Agent Groups” that spread network load and eliminate single points of failure. Memory is managed with a priority‑based eviction policy that pre‑emptively frees space before OOM occurs. Most importantly, the service implements incremental recovery: when a writer fails, only the affected partition is recomputed, while readers that have already consumed other partitions continue without interruption. This avoids the costly “partial re‑execution” pattern where both upstream and downstream tasks are killed and restarted, a problem that the authors observed in 11 k daily jobs, 36 % of which suffered more than ten re‑executions.
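The incremental recovery idea can be sketched as a small planning function. This is an illustrative model under stated assumptions, not the paper's algorithm: `ShuffleState`, `plan_incremental_recovery`, and the bookkeeping fields are hypothetical, and the sketch assumes that a partition already fully consumed by its readers does not need to be recomputed.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class ShuffleState:
    """Hypothetical bookkeeping for one shuffle stage."""
    # partition id -> id of the writer that produced it
    partition_owner: Dict[int, str]
    # partition ids that readers have already fully consumed
    consumed: Set[int] = field(default_factory=set)


def plan_incremental_recovery(state: ShuffleState,
                              failed_writer: str) -> Tuple[List[int], List[int]]:
    """Return (partitions to recompute, partitions left untouched).

    Only the failed writer's partitions that have not been consumed yet are
    recomputed; partitions owned by healthy writers are never restarted.
    """
    lost = {p for p, w in state.partition_owner.items() if w == failed_writer}
    to_recompute = sorted(lost - state.consumed)
    unaffected = sorted(set(state.partition_owner) - lost)
    return to_recompute, unaffected


if __name__ == "__main__":
    state = ShuffleState(
        partition_owner={0: "w1", 1: "w1", 2: "w2", 3: "w3"},
        consumed={0, 2},
    )
    print(plan_incremental_recovery(state, failed_writer="w1"))
```

In this example writer `w1` owned partitions 0 and 1, and partition 0 had already been consumed, so only partition 1 is scheduled for recomputation while the readers of partitions 2 and 3 keep running. Restarting every upstream and downstream task instead would correspond to the re-execution pattern the summary describes as costly.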

The implementation integrates tightly with MaxCompute and Spark on MaxCompute. Managers (Job Manager and Shuffle Service Manager) orchestrate workers and agents, while agents on compute and storage nodes handle data collection, aggregation, and transmission. The system uses RPC for control messages, Zookeeper for metadata coordination, and RocksDB for local block storage. In production, the cluster comprises over 100 k servers, processes several exabytes of shuffle data per day, and runs tens of millions of DAG jobs.

Extensive evaluation on this production environment shows dramatic improvements. Compared with baseline shuffle implementations, FuxiShuffle reduces end‑to‑end job completion time by an average of 76.36 % and cuts aggregate resource consumption (CPU, network, storage I/O) by 67.14 %. Under a single point‑of‑failure scenario, performance degrades by less than 10 %, and even under continuous disturbances the system maintains high throughput and low failure rates. Micro‑benchmarks isolate the contribution of each component: dynamic mode selection raises the proportion of in‑memory shuffles by 45 % and cuts I/O wait time by 30 %; progressive scheduling improves concurrent writer‑reader utilization by 1.8×; dynamic backup reduces replication overhead by 40 % while keeping data loss at zero; incremental recovery lowers total re‑execution counts by 85 %.

The authors also discuss operational insights: real‑time resource monitoring is essential for adaptive decisions; per‑partition replication policies must be tuned to balance cost and reliability; fast health‑checking and state synchronization among replicas are critical for low‑latency failover; and the design principles are applicable to other distributed processing engines such as Flink, Presto, or Dask.

In summary, FuxiShuffle delivers a practical, production‑grade shuffle service that simultaneously optimizes performance, resource efficiency, and fault resilience in ultra‑large cloud clusters. Its adaptive mode selection, progress‑aware scheduling, dynamic data‑layout strategy, and incremental recovery together form a cohesive framework that overcomes the limitations of prior shuffle systems and sets a new benchmark for large‑scale data‑processing infrastructures.
