Not Just How Much, But Where: Decomposing Epistemic Uncertainty into Per-Class Contributions

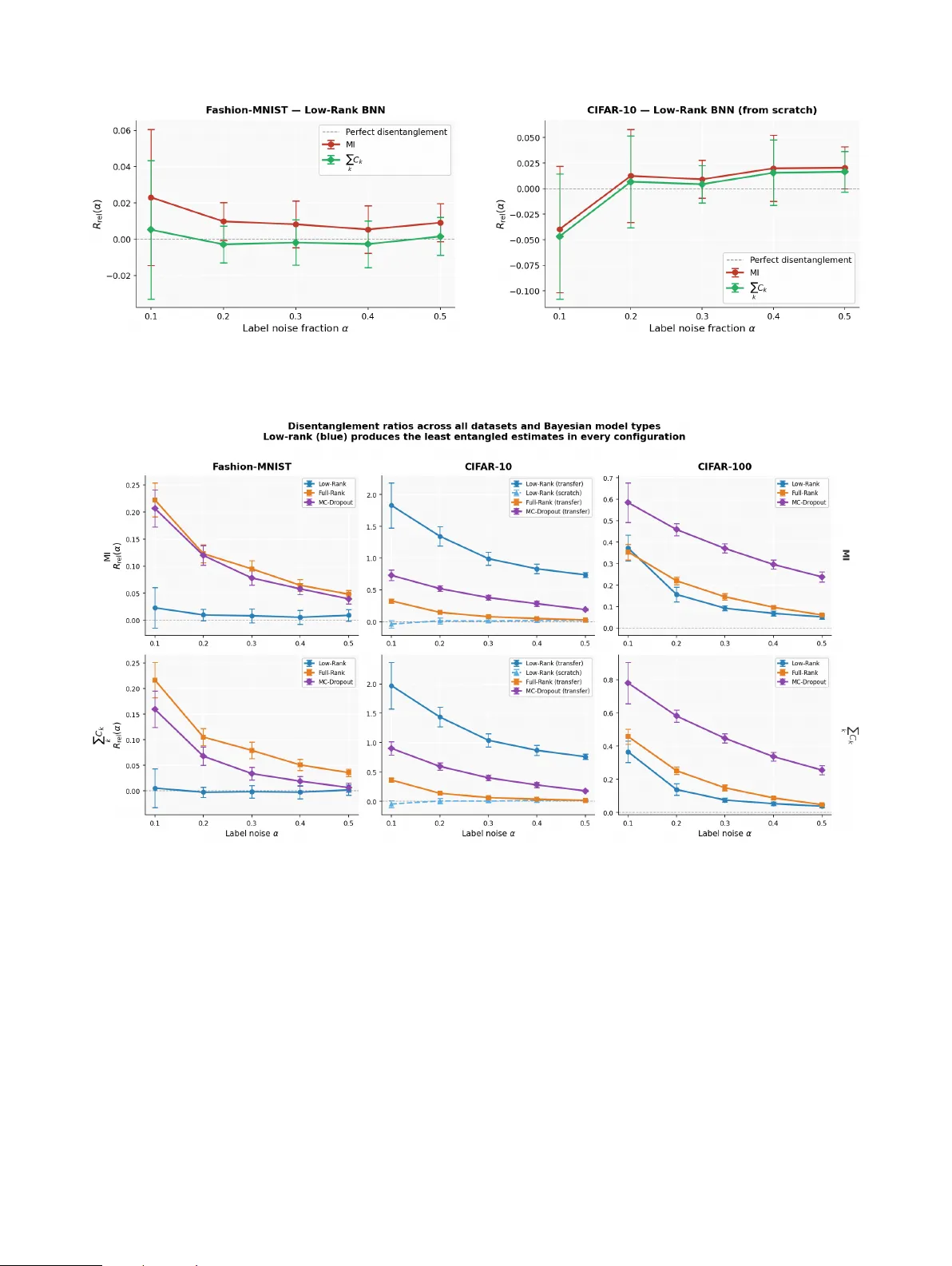

In safety-critical classification, the cost of failure is often asymmetric, yet Bayesian deep learning summarises epistemic uncertainty with a single scalar, mutual information (MI), that cannot distinguish whether a model's ignorance involves a beni…

Authors: Mame Diarra Toure, David A. Stephens
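
As a minimal sketch of the scalar the abstract refers to, the snippet below estimates mutual information from a set of sampled class-probability vectors, using the standard identity MI = (entropy of the mean prediction) minus (mean entropy of the individual predictions). This is not the per-class decomposition the paper proposes. The function name `mutual_information`, the `eps` smoothing constant, and the toy arrays are assumptions made for illustration.

```python
import numpy as np

def mutual_information(probs, eps=1e-12):
    """Scalar MI estimate from sampled predictions.

    probs: array of shape (S, K) holding S sampled probability
    vectors over K classes (hypothetical input layout).
    """
    probs = np.asarray(probs, dtype=float)
    mean_p = probs.mean(axis=0)
    # Entropy of the averaged prediction (total uncertainty).
    total = -np.sum(mean_p * np.log(mean_p + eps))
    # Average entropy of each sampled prediction (expected data uncertainty).
    expected = -np.mean(np.sum(probs * np.log(probs + eps), axis=1))
    return total - expected

# Toy case A: the two samples disagree between classes 0 and 1.
disagree_01 = np.array([[0.90, 0.05, 0.05],
                        [0.05, 0.90, 0.05]])
# Toy case B: the two samples disagree between classes 0 and 2.
disagree_02 = np.array([[0.90, 0.05, 0.05],
                        [0.05, 0.05, 0.90]])

mi_01 = mutual_information(disagree_01)
mi_02 = mutual_information(disagree_02)
print(mi_01, mi_02)
```

Both toy cases yield the same MI because the scalar is unchanged when class labels are permuted, so it carries no information about which classes the disagreement involves.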