On the Equivalence of Random Network Distillation, Deep Ensembles, and Bayesian Inference

Uncertainty quantification is central to the safe and efficient deployment of deep learning models, yet many computationally practical methods lack rigorous theoretical motivation. Random network distillation (RND) is a lightweight technique tha…

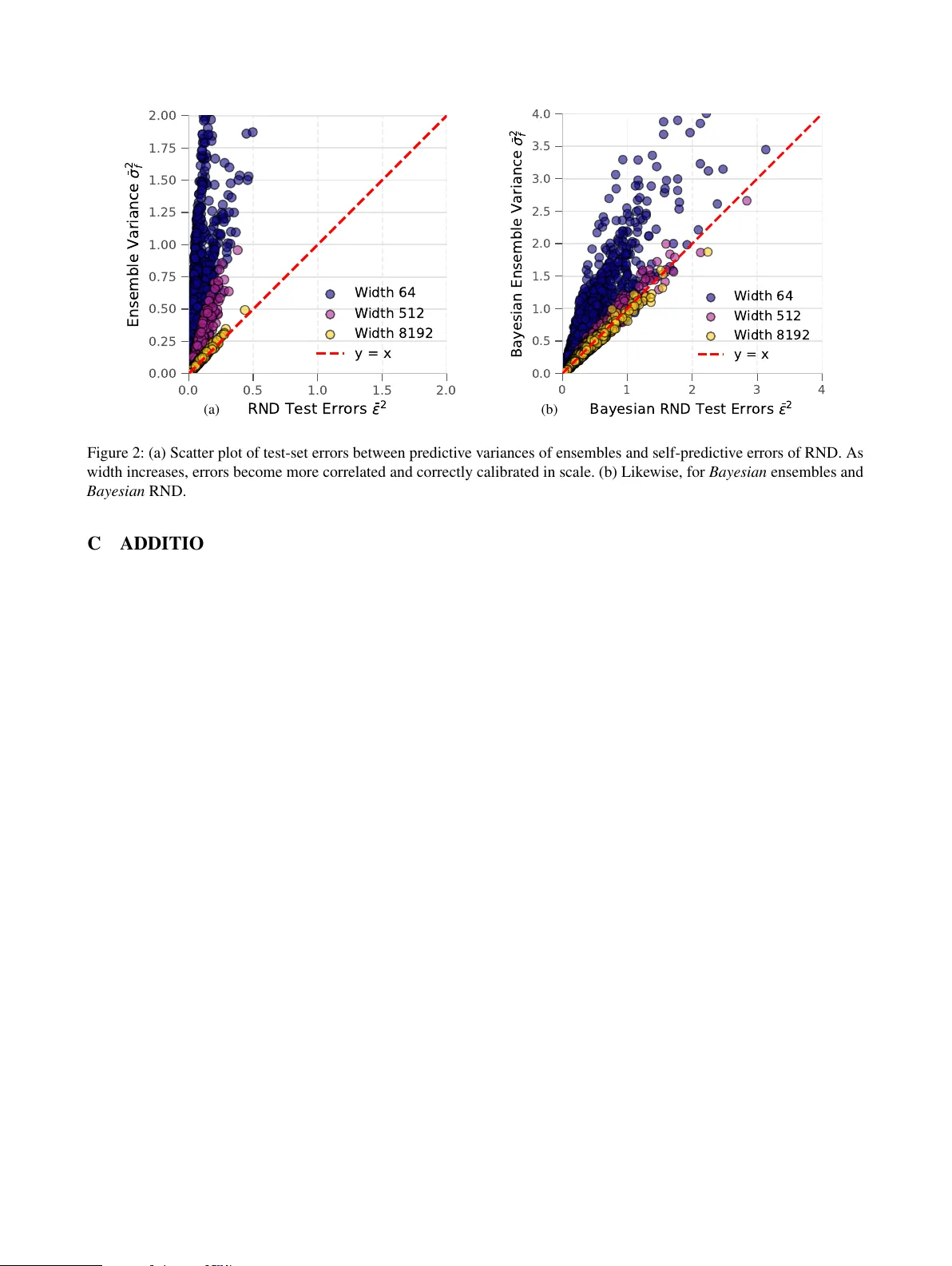

Authors: Moritz A. Zanger, Yijun Wu, Pascal R. Van der Vaart