Bridging Geometric and Semantic Foundation Models for Generalized Monocular Depth Estimation

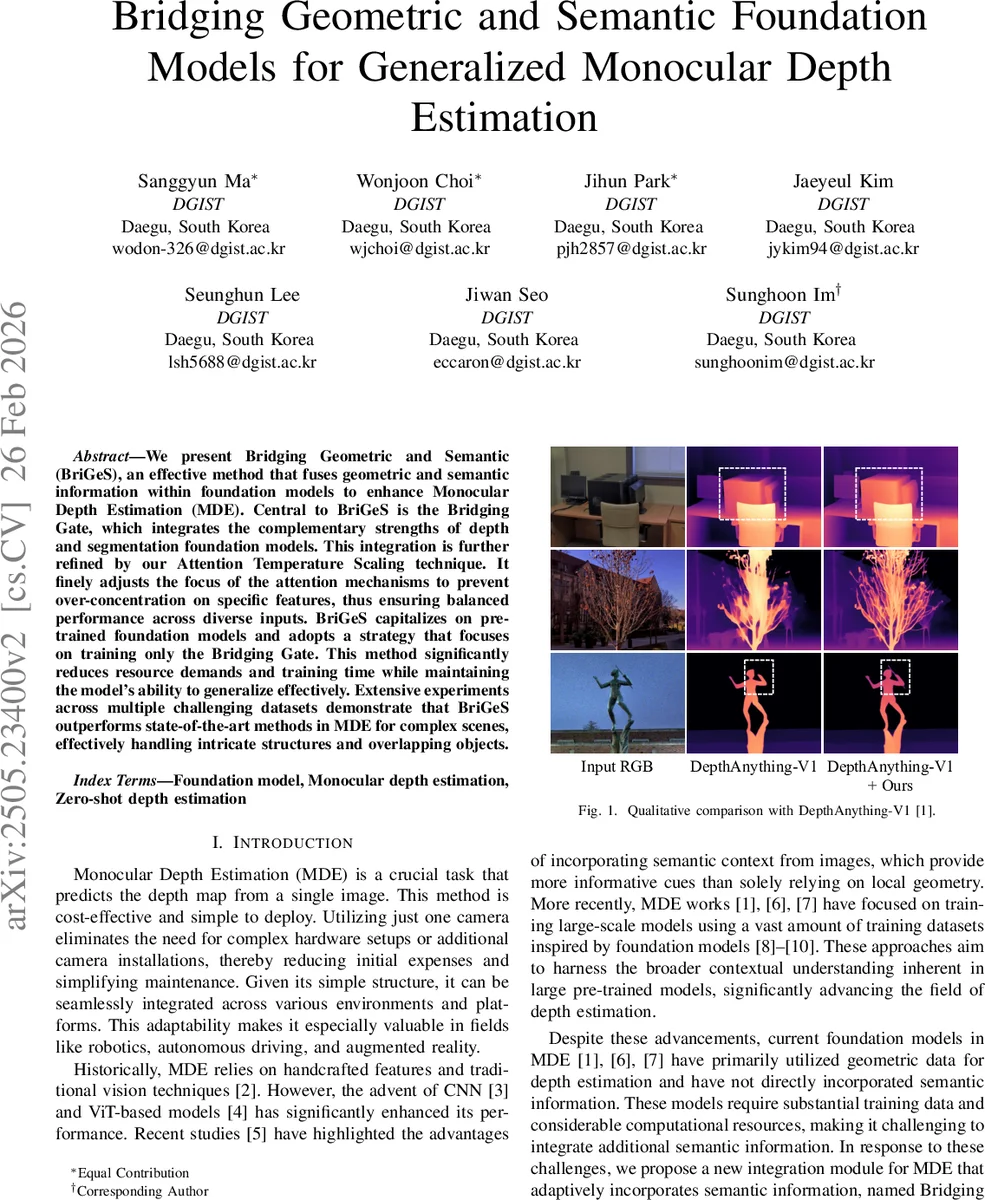

We present Bridging Geometric and Semantic (BriGeS), an effective method that fuses geometric and semantic information within foundation models to enhance Monocular Depth Estimation (MDE). Central to BriGeS is the Bridging Gate, which integrates the complementary strengths of depth and segmentation foundation models. This integration is further refined by our Attention Temperature Scaling technique. It finely adjusts the focus of the attention mechanisms to prevent over-concentration on specific features, thus ensuring balanced performance across diverse inputs. BriGeS capitalizes on pre-trained foundation models and adopts a strategy that focuses on training only the Bridging Gate. This method significantly reduces resource demands and training time while maintaining the model’s ability to generalize effectively. Extensive experiments across multiple challenging datasets demonstrate that BriGeS outperforms state-of-the-art methods in MDE for complex scenes, effectively handling intricate structures and overlapping objects.

💡 Research Summary

**

The paper introduces BriGeS (Bridging Geometric and Semantic), a novel framework that enhances monocular depth estimation (MDE) by fusing geometric cues from a depth foundation model with semantic cues from a segmentation foundation model. The core component, the Bridging Gate, performs a two‑stage attention‑based fusion: a cross‑attention block where depth features act as queries and semantic features serve as keys and values, followed by a self‑attention block that refines the fused representation. This design enables the depth stream to directly attend to semantic context, improving depth predictions especially around object boundaries, thin structures, and ambiguous regions.

A key challenge when merging two modalities is the tendency of attention maps to become overly concentrated on a few dominant regions, neglecting peripheral information. To mitigate this, the authors propose Attention Temperature Scaling (ATS). By introducing a temperature factor τ (>1) into the scaled‑dot‑product softmax, the distribution of attention scores is softened, encouraging a more uniform focus across the entire feature map. Empirical ablations show τ = 2.5 yields the best trade‑off between concentration and dispersion.

Training efficiency is a central theme. Both the depth encoder (E_d) from DepthAnything and the segmentation encoder (E_s) from SegmentAnything are kept frozen, as is the DepthAnything decoder (D_d). Only the parameters of the Bridging Gate are updated. This drastically reduces data and compute requirements: the authors train on a curated subset (~600 k images) of large‑scale datasets, using only 1–2 epochs on 8 RTX Titan GPUs. Model sizes remain modest (244 M for the base version, 744 M for the large version) despite the added gate.

Extensive experiments evaluate zero‑shot relative depth (AbsRel, δ₁) on KITTI, NYUv2, ETH3D, and DIODE, as well as metric depth on the high‑resolution DA‑2K benchmark. Across all datasets, BriGeS consistently outperforms the baseline DepthAnything models. For example, on the DepthAnything‑V1‑Base backbone, AbsRel improves from 0.080 to 0.074 and δ₁ from 0.941 to 0.947; on the large backbone, the gains are similar. The average reduction in AbsRel over the four benchmarks is about 7 %, with a striking 15 % reduction on DIODE, indicating superior handling of complex geometry. On DA‑2K, BriGeS achieves the highest accuracy among both relative and metric depth methods, confirming its generalization to unseen high‑resolution scenes.

Ablation studies dissect the contributions of the Bridging Gate and ATS. Removing the gate reverts performance to that of the original DepthAnything, while adding the gate alone yields noticeable gains. The combination of gate plus ATS delivers the best results, confirming that both modules are complementary. Qualitative visualizations demonstrate that BriGeS preserves thin power lines, delicate tree branches, and fine object boundaries that other methods blur or miss.

In summary, BriGeS makes three primary contributions: (1) a lightweight, attention‑based fusion module (Bridging Gate) that integrates geometric and semantic representations; (2) a temperature‑scaling mechanism that balances attention focus; and (3) an efficient training paradigm that freezes large pre‑trained encoders and only learns the fusion layer. These innovations collectively enable high‑quality depth estimation with minimal additional resources, making the approach attractive for real‑time applications such as robotics, autonomous driving, and augmented reality.

Comments & Academic Discussion

Loading comments...

Leave a Comment