DECODE: Dual-Enhanced Conditioned Diffusion for EEG Forecasting

Forecasting Electroencephalography (EEG) signals during cognitive events remains a fundamental challenge in neuroscience and Brain-Computer Interfaces (BCIs), as existing methods struggle to capture both the stochastic nature of neural dynamics and th…

Authors: Mehran Shabanpour, Sadaf Khademi, Konstantinos N Plataniotis, Arash Mohammadi

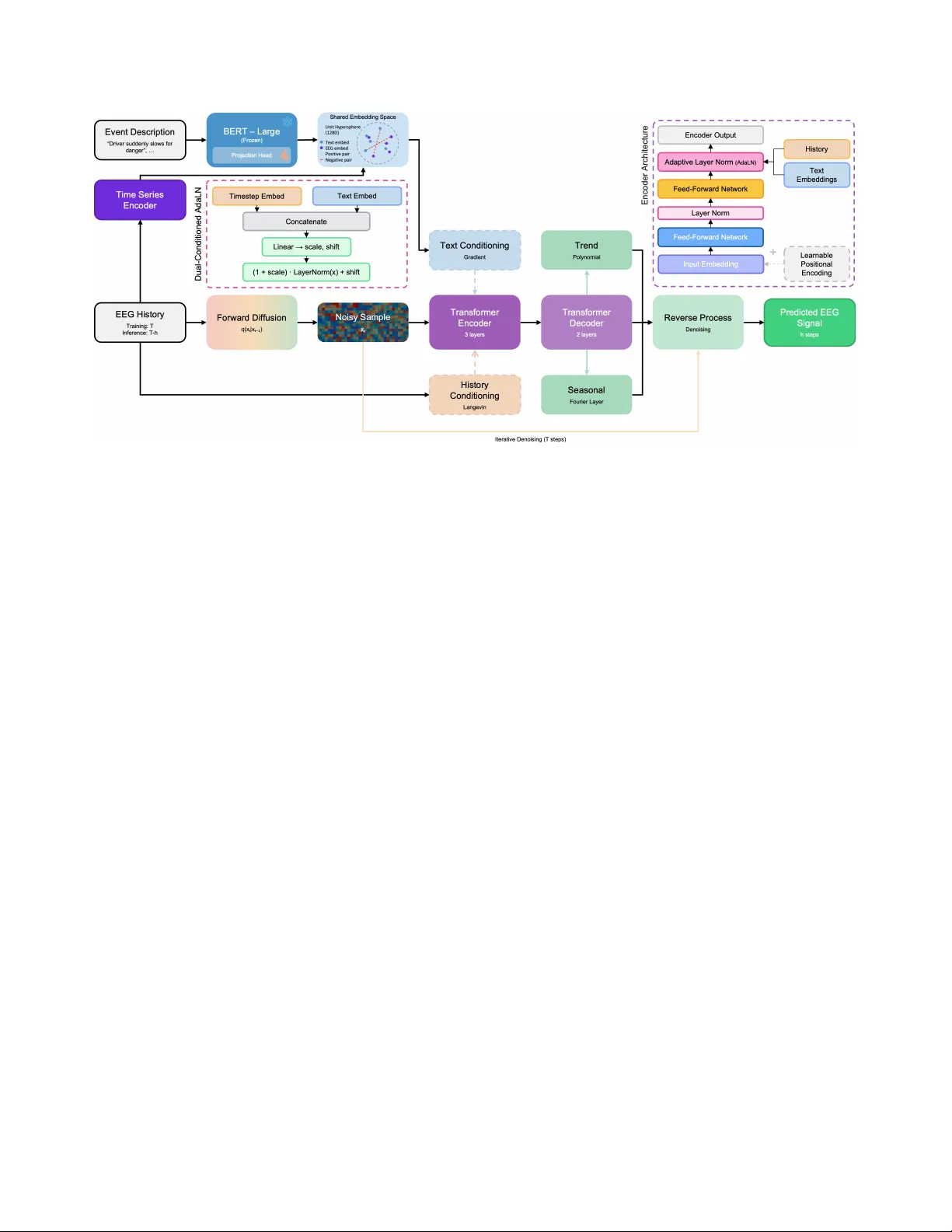

Mehran Shabanpour†, Sadaf Khademi†, Konstantinos N Plataniotis‡, and Arash Mohammadi†

† Concordia Institute for Information Systems Engineering (CIISE), Concordia University, Canada.
‡ Department of Electrical and Computer Engineering, University of Toronto, Canada.

This work was partially supported by the Natural Sciences and Engineering Research Council (NSERC) of Canada; NSERC Discovery Grant RGPIN-2023-05654.

ABSTRACT

Forecasting Electroencephalography (EEG) signals during cognitive events remains a fundamental challenge in neuroscience and Brain-Computer Interfaces (BCIs), as existing methods struggle to capture both the stochastic nature of neural dynamics and the semantic context of behavioral tasks. We present the Dual-Enhanced COnditioned Diffusion (DECODE) for EEG, a novel framework that unifies semantic guidance from natural language descriptions with temporal dynamics from historical signals to generate event-specific neural responses. DECODE leverages pre-trained language models to condition the diffusion process on rich textual descriptions of cognitive events, while maintaining temporal coherence through history-based Langevin dynamics. Evaluated on a real-world driving task dataset with five distinct behaviors, DECODE achieves sub-microvolt prediction accuracy (MAE = 0.626 µV) over 75-timestep horizons while maintaining well-calibrated uncertainty estimates. Our framework demonstrates that natural language can effectively bridge high-level cognitive descriptions and low-level neural dynamics, opening new possibilities for zero-shot generalization to novel behaviors and interpretable BCIs. By generating physiologically plausible, event-specific EEG trajectories conditioned on semantic descriptions, DECODE establishes a new paradigm for understanding and predicting context-dependent neural activity.

Index Terms: EEG Forecasting, Conditional Diffusion Models, Brain-computer interfaces, Semantic Guidance.

1. INTRODUCTION

The ability to accurately forecast future brain patterns based on historical neural signals has become increasingly critical for applications ranging from seizure prediction to real-time cognitive load assessment and brain-state-dependent stimulation protocols [1]. In this context, Electroencephalography (EEG) signals provide a window into the complex dynamics of brain activity, offering unprecedented opportunities for understanding cognitive states, predicting neural responses, and developing Brain-Computer Interfaces (BCIs). The inherently non-stationary, multi-scale, and subject-specific nature of EEG signals, however, presents fundamental challenges that have limited the effectiveness of traditional forecasting approaches.

The complexity of EEG forecasting extends beyond simple time series prediction. Neural signals exhibit intricate spatio-temporal correlations across multiple frequency bands [2, 3], with dynamics that vary across individuals, cognitive states, and environmental contexts. Recent studies have demonstrated that cognitive events and behavioral responses manifest as distinct patterns in EEG recordings, particularly in high-stakes scenarios such as driving tasks where split-second neural responses can determine critical outcomes [4, 5]. These observations underscore the need for forecasting models that can capture both the stochastic nature of neural dynamics and the deterministic patterns associated with specific cognitive events.

Traditional approaches to EEG forecasting, including autoregressive models and Recurrent Neural Networks (RNNs), have shown limited success in capturing the full distribution of possible future trajectories, particularly when conditioned on complex cognitive events [6].
While these methods can estimate conditional means effectively, they struggle to model the uncertainty and multi-modal nature of future EEG states. This limitation becomes especially pronounced in real-world applications where understanding the range of possible neural responses is as important as predicting the most likely outcome.

This paper addresses that limitation by building on recent advances in generative modeling, in particular diffusion models, which have revolutionized time series forecasting [7, 8]. Unlike traditional discriminative approaches, diffusion models learn to reverse a gradual noising process, allowing them to capture the full conditional distribution of future states while maintaining temporal coherence [9, 10]. For EEG forecasting, this capability is particularly valuable because it enables the generation of physiologically plausible signals that respect the complex spectral and spatial characteristics of neural activity.

Literature Review: Diffusion models for time series forecasting have progressed from early conditional approaches such as TimeGrad [22], which suffered from error accumulation and limited long-range dependencies, to self-supervised masking strategies such as CSDI and SSSD, which enable parallel generation [11, 8]. More recent works have focused on enhancing conditioning and representation: TimeDiff [12] addresses boundary issues, TMDM [7] leverages transformer architectures to capture uncertainty in multivariate forecasting tasks, Diffusion-TS [8] preserves trend and seasonality via Fourier-based losses, CN-Diff [13] integrates nonlinear time-dependent transformations to capture complex temporal patterns, and D3U [14] separates deterministic and uncertain components for improved probabilistic forecasting.
Despite these advances, existing methods primarily rely on historical observations or simple categorical conditions, lacking the flexibility to incorporate rich semantic information about future events.

In EEG forecasting, traditional approaches relied on linear autoregressive models, which remain practical but perform poorly for long-range predictions. Recent deep learning methods, such as WaveNet [6], enhance conditional probability modeling of future samples, particularly for theta and alpha bands. Multi-channel EEG forecasting, despite its importance for capturing whole-brain dynamics, has received limited attention. EEGDIF [1] addressed this gap with a diffusion model achieving correlation coefficients above 0.77 across 16 channels. However, most approaches remain limited to resting-state or seizure EEG, with little focus on event-conditioned forecasting in complex cognitive tasks.

Fig. 1. Architecture of the proposed dual-conditioned diffusion model for event-specific EEG forecasting. The DECODE framework integrates semantic guidance from natural language event descriptions (top path) with history-based temporal conditioning (bottom path) through a hierarchical diffusion process. Text descriptions are encoded via frozen BERT-large and projected to a shared embedding space, while historical EEG signals undergo forward diffusion before processing through encoder-decoder transformers with interpretable decomposition.

Application of diffusion models to EEG data presents unique challenges due to the signals' complex spatio-temporal structure and subject-specific variations. EEGDfus [9] introduced a dual-branch CNN-Transformer architecture for denoising, highlighting the importance of multi-scale feature extraction. DiffEEG [15] leveraged diffusion-based data augmentation conditioned on short-time Fourier spectrograms to address class imbalance in seizure prediction.
Other approaches focus on EEG reconstruction and synthesis: STAD [10] uses multi-scale Transformers for super-resolution reconstruction, and classifier-free guidance has been used to generate subject-, session-, and class-specific Event-Related Potentials (ERPs) [16].

Understanding EEG dynamics during cognitive tasks provides crucial context for developing event-specific forecasting models. EEG studies in driving tasks have identified distinct neural signatures associated with different driving behaviors. In particular, beta-band Event-Related Desynchronization/Synchronization (ERD/ERS) and fronto-parietal activity reflect motor preparation and cognitive load during driving, highlighting their relevance for incorporating task-specific neural dynamics into forecasting models [4, 5].

Contributions: We propose a novel conditional diffusion framework for event-specific EEG forecasting that incorporates semantic information to guide signal generation, enabling preemptive safety interventions in semi-autonomous vehicles. Our key insight is that natural language descriptions of events contain rich contextual information that can guide the diffusion process toward generating event-appropriate neural responses. This intuition goes beyond discrete event labels, capturing the richness of cognitive events [4]. In summary, the paper makes the following contributions:

• The Dual-Enhanced COnditioned Diffusion (DECODE) framework is introduced, which is the first diffusion-based EEG forecasting model that simultaneously leverages natural language descriptions for semantic guidance and historical signals for temporal coherence. The proposed dual conditioning mechanism addresses the critical challenge of latent space collapse when generating signals with subtle inter-class differences.
• A Semantic-Neural Bridge (SNB) is introduced, built on a contrastive learning approach that aligns Bidirectional Encoder Representations from Transformers (BERT)-encoded text descriptions with EEG temporal patterns. The SNB provides zero-shot capabilities to handle novel behavioral conditions and continuous interpolation between cognitive states, both of which are unavailable in existing discrete-label approaches.

The proposed DECODE is evaluated over challenging real-world scenarios, achieving significant improvements in deterministic metrics with particular emphasis on event-specific prediction accuracy. More specifically, DECODE achieves a sub-microvolt prediction accuracy of 0.626 µV while maintaining well-calibrated uncertainty estimates (CRPS < 0.72). It outperformed its closest comparable method by approximately 24% on multi-channel event-related forecasting tasks.

2. THE DECODE FRAMEWORK

We consider the task of conditional EEG signal forecasting: given a historical EEG sequence $x_{1:T-h} \in \mathbb{R}^{(T-h) \times d}$ and a natural language description $c$ of an upcoming neural event, we aim to predict the future EEG signals $x_{T-h+1:T} \in \mathbb{R}^{h \times d}$ that correspond to the described event. Here, $T$ denotes the total sequence length, $h$ represents the forecasting horizon, and $d$ is the number of EEG channels. Next, the dataset is briefly introduced, followed by the detailed development of the DECODE framework.

2.1. Dataset Overview

We utilize the Multimodal Physiological Dataset for Driving Behaviour (MPDB) [4], which contains synchronized EEG recordings from 35 participants performing naturalistic driving tasks in a high-fidelity simulator. The dataset captures five distinct driving behaviors: braking, turning, lane changing, acceleration, and stable driving (baseline condition), with event markers precisely aligned to behavioral onsets.
For this study, we focus exclusively on the 59-channel EEG data sampled at 1000 Hz. Each trial spans 2 seconds (500 ms pre-stimulus to 1500 ms post-stimulus) around the event marker. The raw dataset comprises approximately 5,700 trials across all participants and conditions.

2.2. Pre-processing and Data Preparation

EEG data from 30 participants across five driving tasks were preprocessed using artifact rejection, bandpass filtering (0.1–30 Hz), baseline correction, and average referencing. ERPs were extracted from 4,308 trials, and the 14 electrodes most sensitive to driving events were selected by computing mean amplitudes within a 0-500 ms post-stimulus window across all conditions and ranking channels by their absolute deviation from baseline. For event-specific forecasting, the selected electrodes were Fpz, P7, P8, T8, PO7, O1, PO8, PO5, CP2, Pz, C2, CP3, CP4, and FC4, capturing both frontal positivity and central-parietal negativity. Time-frequency analysis was performed across theta, alpha, beta, and low gamma bands using Hilbert-based power estimation. ERD/ERS was computed as the percentage power change from a pre-stimulus baseline to an active window, and independent t-tests with Cohen's d effect sizes identified band-condition pairs with the highest discriminative power for forecasting cognitive-motor states.

Fig. 2. Representative EEG forecasting results for electrodes P7 and Pz, with historical signal (blue) providing temporal context up to timestep 1000 and the model generating probabilistic forecasts (orange) compared to ground truth (purple). The topographic map shows grand-average voltage distribution (0-500 ms post-stimulus) with red indicating positive and blue indicating negative potentials.

2.3. Base Diffusion Model

Our approach builds upon the Diffusion-TS architecture [8], employing a denoising diffusion probabilistic model with interpretable decomposition.
The forward diffusion process gradually corrupts the clean signal $x_0$ through $T$ timesteps according to

$$q(x_t \mid x_{t-1}) = \mathcal{N}\big(x_t;\ \sqrt{1 - \beta_t}\, x_{t-1},\ \beta_t I\big), \quad (1)$$

where $\beta_t$ follows a cosine schedule [17]. The reverse process learns to denoise through a transformer-based architecture that explicitly models trend and seasonal components

$$\hat{x}_0(x_t, t, \theta) = V_{tr}^{t} + \sum_{i=1}^{D} S_{i,t} + R, \quad (2)$$

where $V_{tr}^{t}$ represents the trend component modeled via polynomial regression in a low-frequency space, $S_{i,t}$ captures seasonal patterns through Fourier synthesis layers that select dominant frequencies via top-$k$ amplitude selection, and $R$ denotes the residual component [8]. The model employs an encoder-decoder transformer with adaptive layer normalization conditioned on the diffusion timestep, where each decoder block progressively refines the decomposition through cross-attention with encoded representations. Figure 1 illustrates the complete architecture of our dual-conditioned diffusion framework.

2.4. Text-Guided Semantic Conditioning

To prevent latent space collapse and enable semantic control over generated EEG patterns, we introduce a text conditioning mechanism using pre-trained language models. We employ BERT-large [18] to encode natural language event descriptions $\{c_k\}_{k=1}^{K}$ into a shared embedding space with time series representations. As shown in the top pathway of Figure 1, the text encoder $f_{text}$ projects BERT's pooler output through a learned projection head

$$e_{text} = \mathrm{Normalize}\big(W_{proj} \cdot \mathrm{BERT}(c) + b_{proj}\big), \quad (3)$$

where normalization ensures that embeddings lie on the unit sphere. Correspondingly, the time series encoder $f_{ts}$ maps EEG sequences through convolutional layers followed by projection to the same embedding space.
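As a minimal sketch of the projection step in Eq. (3), assuming a plain linear head followed by L2 normalization, the snippet below uses random vectors as stand-ins for BERT pooler outputs. The embedding width of 64, the weight initialization, and the helper name `project_and_normalize` are illustrative assumptions and are not taken from the paper; only the 1024-dimensional pooler size of BERT-large is a known property of that model.

```python
import numpy as np

def project_and_normalize(pooled, w_proj, b_proj, eps=1e-12):
    """Linear projection followed by row-wise L2 normalization (cf. Eq. 3)."""
    z = pooled @ w_proj.T + b_proj
    norms = np.linalg.norm(z, axis=-1, keepdims=True)
    return z / np.maximum(norms, eps)  # eps guards against division by zero

rng = np.random.default_rng(0)
bert_dim = 1024    # hidden size of BERT-large
embed_dim = 64     # assumed width of the shared embedding space
n_events = 5       # five driving behaviors in the dataset

# Random stand-ins for BERT pooler outputs, one row per event description.
pooled = rng.standard_normal((n_events, bert_dim))
w_proj = rng.standard_normal((embed_dim, bert_dim)) * 0.02  # hypothetical init
b_proj = np.zeros(embed_dim)

e_text = project_and_normalize(pooled, w_proj, b_proj)

# On the unit sphere, dot products are cosine similarities.
sim = e_text @ e_text.T
```

Because every row of `e_text` has unit norm, the dot product between a time series embedding and a text embedding can be read directly as a cosine similarity, which is what a temperature-scaled contrastive objective operates on.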
We optimize a contrastive objective based on the InfoNCE loss [19]

$$\mathcal{L}_{contrast} = -\log \frac{\exp\big(e_{ts} \cdot e_{text}^{+} / \tau\big)}{\sum_{j} \exp\big(e_{ts} \cdot e_{text}^{j} / \tau\big)}, \quad (4)$$

where $\tau$ is a learned temperature parameter and $e_{text}^{+}$ denotes the correct text embedding. This alignment creates semantically meaningful regions in the latent space, preventing mode collapse while maintaining behavioral coherence.

2.5. Dual Conditioning Mechanism

During inference, we employ a dual conditioning strategy that combines history-based Langevin dynamics with text-based gradient guidance. For history conditioning, we apply Langevin sampling to enforce consistency with observed signals $x_{obs}$ through iterative refinement

$$x'_{t-1} = x_{t-1} + \eta_h \nabla_{x_t} \Big[ \alpha \, \| x_{obs} - \hat{x}_{obs} \|^{2} + \gamma \log p(x_{t-1} \mid x_t) \Big], \quad (5)$$

where $\eta_h$ controls the step size, $\alpha$ weights reconstruction fidelity, and $\gamma$ balances generation fluency [20]. The number of Langevin steps $K$ is adaptively scheduled based on the diffusion timestep, with more iterations at higher noise levels where structural decisions are made. For text conditioning, we compute gradients of the log-probability with respect to the predicted class:

$$\nabla_{x_t} \log p(c \mid x_t) = \nabla_{x_t} \mathrm{LogSoftmax}\big( f_{ts}(x_t) \cdot E_{text}^{\top} / \tau \big)[k], \quad (6)$$

where $E_{text}$ contains pre-computed embeddings for all event descriptions and $k$ indexes the target event. The final update combines both guidance signals with tuned weights to ensure history dominates local dynamics while text provides semantic drift toward appropriate behavioral manifolds:

$$x_{t-1} = \mu_\theta(x_t, t) + \sigma_t z + \lambda_h g_{history} + \lambda_t g_{text}. \quad (7)$$

This hierarchical conditioning creates a Riemannian metric on the latent manifold where geodesics follow natural behavioral transitions while maintaining semantic coherence.

3. EXPERIMENTAL RESULTS

Our experiments employed a dual-conditioning diffusion model with a Transformer-based architecture consisting of 3 encoder layers, 2 decoder layers, a hidden dimension of 96, and 4 attention heads for multivariate EEG forecasting. The diffusion process utilized 500 training and 200 sampling timesteps, with a cosine beta schedule and $L_1$ loss optimization. Training proceeded for 12,000 epochs on sequences of length 1,075 with 59-dimensional features, using the Adam optimizer (learning rate = $1 \times 10^{-5}$) and Exponential Moving Average (EMA) with decay rate 0.995 for stabilization. The dual conditioning mechanism balanced history-based Langevin dynamics with text-guided gradient conditioning using frozen BERT-large embeddings.

Fig. 3. Mean Absolute Error (MAE) over time across the 75-timestep prediction horizon.

Data Characteristics and Augmentation Strategy: EEG data presents unique challenges compared to conventional time series benchmarks. While datasets like Traffic and Electricity [21] represent continuous temporal processes with arbitrary segmentation preserving long-range dependencies, EEG consists of genuinely independent trials with no inter-trial causal relationships. Each trial represents a discrete experimental event with statistical independence, eliminating inter-trial temporal dependencies and forcing the model to learn generalizable patterns purely from within-trial dynamics. We addressed this through sliding window augmentation with 64-timestep strides, ensuring each window contained at least one event marker. This increased the effective training set size by approximately 15-fold while preserving temporal structure. The subtle differences between EEG patterns across driving events, evidenced by the moderate 83.59% classification accuracy, present particular challenges for unconditional diffusion models, which are prone to mode collapse when class boundaries are ambiguous.
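The sliding-window augmentation described above can be sketched as follows. This is an illustrative reading, not the authors' pipeline: the helper name `sliding_windows`, the toy recording length, and the single marker position are assumptions, while the window length of 1,075, the stride of 64, and the 59 channels are the values quoted in the text. Windows that contain no event marker are discarded.

```python
import numpy as np

def sliding_windows(signal, markers, window, stride):
    """Cut overlapping windows and keep those containing >= 1 event marker.

    signal  : array of shape (n_samples, n_channels)
    markers : sample indices at which events occur
    Returns the stacked windows and their start indices.
    """
    markers = np.sort(np.asarray(markers))
    windows, starts = [], []
    for start in range(0, signal.shape[0] - window + 1, stride):
        stop = start + window
        # Number of markers falling in the half-open interval [start, stop).
        n_in = (np.searchsorted(markers, stop, side="left")
                - np.searchsorted(markers, start, side="left"))
        if n_in > 0:
            windows.append(signal[start:stop])
            starts.append(start)
    if not windows:
        return np.empty((0, window, signal.shape[1])), []
    return np.stack(windows), starts

rng = np.random.default_rng(0)
recording = rng.standard_normal((4000, 59))  # toy recording, 59 channels
event_markers = [1500]                       # hypothetical event onset (sample index)

windows, starts = sliding_windows(recording, event_markers,
                                  window=1075, stride=64)
print(windows.shape, starts[0], starts[-1])
```

With a stride much shorter than the window, neighboring windows overlap heavily, so a single event yields many training examples in which the marker appears at different offsets.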
Neural Response Characterization: The oscillatory dynamics of individual electrodes were captured, and the grand-average topographic distributions (Fig. 2) revealed distinctive frontal-parietal dissociation patterns, with frontal positivity and parietal negativity modulations following an urgency-dependent hierarchy. Table 1 presents the quantitative statistical analysis of neural responses across driving events. Brake events elicited the strongest cortical responses across both ERP and spectral domains, consistent with their higher cognitive demands for rapid motor inhibition and decision-making. Notably, our spectral analysis revealed ERS rather than the classical desynchronization typically associated with motor tasks, suggesting enhanced cortical engagement during complex driving behaviors. Beta and low gamma bands emerged as the most discriminative features (Cohen's d > 0.18), with synchronization magnitudes directly correlating with behavioral urgency. Accordingly, we selected the low-gamma band for subsequent analyses due to its strong discriminative power. This unexpected ERS pattern may reflect the heightened attentional demands and sensorimotor integration required for real-time vehicular control, distinguishing these naturalistic driving tasks from simpler motor paradigms.

Table 1. Statistical analysis of neural response characteristics across driving events.

| Measure | Event | Value | p | d |
|---|---|---|---|---|
| ERP Modulation (µV) | | | | |
| Frontal (Fz) | Brake | +1.06 | < .001 | – |
| | Turn | +0.81 | .015 | – |
| | Lane Change | +0.70 | .005 | – |
| Parietal (Pz) | Brake | -1.42 | < .001 | – |
| Event-Related Synchronization (%) | | | | |
| Beta (13-30 Hz) | Brake | +16.0 | .0002 | 0.18 |
| Gamma (30-45 Hz) | Brake | +27.7 | < .0001 | 0.23 |

Note: p-values indicate significance levels as follows: p < 0.05 (significant), p < 0.01 (highly significant), and p < 0.001 (very highly significant).

Forecasting Evaluation: Our approach represents a novel paradigm in EEG forecasting, and differences in signal amplitude, prediction and history window length, and frequency bands make direct comparison with other studies challenging. The most relevant baseline, Pankka et al.'s WaveNet approach [6], achieved MAE values of 1.0 ± 1.1 µV for theta and 0.9 ± 1.1 µV for alpha bands over 150 ms (75 time steps) horizons on single-channel resting-state data. We further benchmarked TimeGrad [22] and PatchTST [23], two complementary state-of-the-art time series forecasting models, on our dataset, obtaining average MAEs of 0.759 µV and 0.733 µV, respectively. In comparison, our model achieves an MAE of 0.626 ± 0.29 µV for the low gamma band at 75-timestep horizons on multi-channel event-related recordings with semantic conditioning. As Fig. 3 shows, MAE degrades as the forecast horizon increases, reflecting the inherent trade-off between temporal reach and prediction accuracy as the model operates under progressively weaker observational constraints.

Ablation Analysis: We conduct ablation studies to identify the individual contribution of history conditioning and to determine how its integration with text guidance drives overall performance. History conditioning alone (MAE = 0.704 µV) provides temporal coherence but lacks event-specific guidance. Combining it with text guidance lowers the MAE to 0.626 µV, a reduction of approximately 11%, which supports our architectural choices. The learned embeddings support continuous interpolation between behavioral conditions, suggesting potential for generating intermediate cognitive states not explicitly present in training data.
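To make the idea of interpolating between behavioral conditions concrete, the sketch below blends two unit-norm text embeddings. The paper does not specify an interpolation scheme; spherical linear interpolation (slerp) is assumed here because the embeddings are normalized to the unit sphere, and the 64-dimensional vectors `e_brake` and `e_turn` are random, hypothetical stand-ins for learned embeddings.

```python
import numpy as np

def slerp(a, b, w):
    """Spherical linear interpolation between two vectors, with w in [0, 1].

    Inputs are normalized first, so the result stays on the unit sphere.
    """
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    omega = np.arccos(np.clip(np.dot(a, b), -1.0, 1.0))  # angle between a and b
    if np.sin(omega) < 1e-8:
        # Directions are (nearly) identical or opposite: fall back to an endpoint.
        return a if w < 0.5 else b
    return (np.sin((1.0 - w) * omega) * a + np.sin(w * omega) * b) / np.sin(omega)

rng = np.random.default_rng(0)
e_brake = rng.standard_normal(64)  # hypothetical embedding of a "brake" description
e_turn = rng.standard_normal(64)   # hypothetical embedding of a "turn" description

# An embedding halfway between the two conditions.
e_mid = slerp(e_brake, e_turn, 0.5)
```

Linear blending followed by re-normalization would be another reasonable choice; slerp simply keeps the angular step uniform as `w` moves from 0 to 1.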
This capability, combined with the model's ability to maintain semantic separation through text embeddings while preserving temporal coherence through history conditioning, addresses the fundamental challenge of generating context-dependent neural signals with subtle inter-class differences.

4. CONCLUSION

This work introduces DECODE, a dual-enhanced conditional diffusion framework that unifies semantic guidance and temporal dynamics for event-specific EEG forecasting. By combining natural language descriptions with history-based temporal conditioning, we enable generation of physiologically plausible and behaviorally coherent neural trajectories, achieving sub-microvolt prediction accuracy with well-calibrated uncertainty estimates. The continuous nature of learned embeddings indicates potential for generating intermediate cognitive states through embedding interpolation, while the probabilistic formulation with calibrated uncertainty makes the approach suitable for safety-critical BCI applications. The iterative denoising paradigm offers an unexplored advantage for real-time BCIs: early termination of the reverse process yields partially denoised signals that may preserve sufficient task-relevant information while dramatically reducing inference latency, suggesting future work should investigate optimal noise-fidelity trade-offs for time-critical neural interfaces.

5. REFERENCES

[1] Zekun Jiang, Wei Dai, Qu Wei, Ziyuan Qin, Kang Li, and Le Zhang, "EEG-DIF: Early warning of epileptic seizures through generative diffusion model-based multi-channel EEG signals forecasting," in Proceedings of the 15th ACM International Conference on Bioinformatics, Computational Biology and Health Informatics, Shenzhen, China, 2024, ACM, pp. 71:1–71:1.
[2] Wenwen Chang, Weiliang Meng, Guanghui Yan, Bingtao Zhang, Hao Luo, Rui Gao, and Zhifei Yang, "Driving EEG based multilayer dynamic brain network analysis for steering process," Expert Systems with Applications, vol. 207, pp. 118121, 2022.
[3] Giovanni M. Di Liberto, Michele Barsotti, Giovanni Vecchiato, Jonas Ambeck-Madsen, Maria Del Vecchio, Pietro Avanzini, and Luca Ascari, "Robust anticipation of continuous steering actions from electroencephalographic data during simulated driving," Scientific Reports, vol. 11, no. 1, pp. 23383, December 2021.
[4] Xiaoming Tao, Dingcheng Gao, Wenqi Zhang, Tianqi Liu, Bing Du, Shanghang Zhang, and Yanjun Qin, "A multimodal physiological dataset for driving behaviour analysis," Scientific Data, vol. 11, no. 1, pp. 378, April 2024.
[5] Prithila Angkan, Behnam Behinaein, Zunayed Mahmud, Anubhav Bhatti, Dirk Rodenburg, Paul Hungler, and Ali Etemad, "Multimodal brain–computer interface for in-vehicle driver cognitive load measurement: Dataset and baselines," IEEE Transactions on Intelligent Transportation Systems, vol. 25, no. 6, pp. 5949–5964, 2024.
[6] H. Pankka, J. Lehtinen, R.J. Ilmoniemi, and T. Roine, "Enhanced EEG forecasting: A probabilistic deep learning approach," Neural Computation, vol. 37, no. 4, pp. 793–814, March 2025.
[7] Yuxin Li, Wenchao Chen, Xinyue Hu, Bo Chen, and Mingyuan Zhou, "Transformer-modulated diffusion models for probabilistic multivariate time series forecasting," in The Twelfth International Conference on Learning Representations, ICLR, 2024.
[8] Xinyu Yuan and Yan Qiao, "Diffusion-TS: Interpretable diffusion for general time series generation," arXiv preprint, 2024.
[9] Xiaoyang Huang, Chang Li, Aiping Liu, Ruobing Qian, and Xun Chen, "EEGDfus: A conditional diffusion model for fine-grained EEG denoising," IEEE Journal of Biomedical and Health Informatics, vol. 29, no. 4, pp. 2557–2569, 2025.
[10] Shuqiang Wang, Tong Zhou, Yanyan Shen, Ye Li, Guoheng Huang, and Yong Hu, "Generative AI enables EEG super-resolution via spatio-temporal adaptive diffusion learning," IEEE Transactions on Consumer Electronics, 2025.
[11] Weiwei Ye, Zhuopeng Xu, and Ning Gui, "Non-stationary diffusion for probabilistic time series forecasting," arXiv preprint, 2025.
[12] Lifeng Shen and James Kwok, "Non-autoregressive conditional diffusion models for time series prediction," in International Conference on Machine Learning, PMLR, 2023, pp. 31016–31029.
[13] J. Rishi, G.V.S. Mothish, and D. Subramani, "Conditional diffusion model with nonlinear data transformation for time series forecasting," in Proceedings of the Forty-second International Conference on Machine Learning, ICML, 2025.
[14] Q. Li, Z. Zhang, L. Yao, Z. Li, T. Zhong, and Y. Zhang, "Diffusion-based decoupled deterministic and uncertain framework for probabilistic multivariate time series forecasting," in Proceedings of the Thirteenth International Conference on Learning Representations, ICLR, 2025.
[15] Kai Shu, Le Wu, Yuchang Zhao, Aiping Liu, Ruobing Qian, and Xun Chen, "Data augmentation for seizure prediction with generative diffusion model," IEEE Transactions on Cognitive and Developmental Systems, vol. 17, no. 3, pp. 577–591, July 2025.
[16] Guido Klein, Pierre Guetschel, Gianluigi Silvestri, and Michael Tangermann, "Synthesizing EEG signals from event-related potential paradigms with conditional diffusion models," in Proceedings of the International Conference Published by Verlag der Technischen Universität Graz, Verlag der Technischen Universität Graz, 2024.
[17] Alex Nichol and Prafulla Dhariwal, "Improved denoising diffusion probabilistic models," CoRR, vol. abs/2102.09672, 2021.
[18] Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova, "BERT: Pre-training of deep bidirectional transformers for language understanding," in Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), Association for Computational Linguistics, 2019.
[19] Aäron van den Oord, Yazhe Li, and Oriol Vinyals, "Representation learning with contrastive predictive coding," CoRR, vol. abs/1807.03748, 2018.
[20] Jiaming Song, Chenlin Meng, and Stefano Ermon, "Denoising diffusion implicit models," in Proceedings of the International Conference on Learning Representations (ICLR), 2021.
[21] Haoyi Zhou, Shanghang Zhang, Jieqi Peng, Shuai Zhang, Jianxin Li, Hui Xiong, and Wancai Zhang, "Informer: Beyond efficient transformer for long sequence time-series forecasting," arXiv preprint arXiv:2012.07436, 2020.
[22] Kashif Rasul, Calvin Seward, Ingmar Schuster, and Roland Vollgraf, "Autoregressive denoising diffusion models for multivariate probabilistic time series forecasting," in The 38th International Conference on Machine Learning, ICML, 2021.
[23] Yuqi Nie, Nam H. Nguyen, Phanwadee Sinthong, and Jayant Kalagnanam, "A time series is worth 64 words: Long-term forecasting with transformers," in The Eleventh International Conference on Learning Representations, ICLR, 2023.
