Macro-Micro Inference: Robust Synaptic Classification via Spike-Triggered Extrapolation


Authors: Emilio De Santis

Dipartimento di Matematica, Sapienza Università di Roma, Piazzale Aldo Moro 5, 00185 Rome, Italy
desantis@mat.uniroma1.it, emilio.desantis@uniroma1.it
March 19, 2026

Abstract
This work introduces a framework for reconstructing the interaction graph of neuronal networks modeled as multivariate point processes. The methodology performs bivariate inference, identifying synaptic links exclusively from the spike trains of a pair of neurons, without requiring observations of the remaining network activity. We propose a Macro-Micro Extrapolation algorithm to address data sparsity at the micro-scale, inferring synaptic interactions in the limit $\Delta \to 0^+$. A key contribution is the Spike-Triggered Estimator, which leverages the local reset property of Galves-Löcherbach dynamics to decouple local synaptic jumps from higher-order network contributions, significantly reducing estimation variance and eliminating spurious dependencies on baseline firing intensities. By employing an adaptive hybrid logic that switches between sample averaging and our novel Pyramid Extrapolation, we ensure robust classification of excitatory, inhibitory, and null connections even in low signal-to-noise regimes. The framework's scalability and precision are validated by numerical results on dense cliques and structured layered networks, achieving perfect classification accuracy across diverse topological motifs.

Keywords: Neuronal networks, multivariate point processes, stochastic processes with memory of variable length, interaction graphs, statistical model selection.

1 Introduction
The identification of the underlying interaction graph between neurons, based on the recording of spike train time series, remains a fundamental challenge in neuroscience [1, 3, 4, 5].
Understanding how the activity of one neuron influences another, through excitatory or inhibitory connections, requires statistical models capable of capturing ultra-rapid temporal dynamics, which are often confounded by high variance in low-intensity regimes. In recent years, functional connectivity has been studied primarily through models that integrate diverse observed signals, such as brain rhythms and neuronal spikes [13]. Our approach, however, focuses exclusively on spiking data, the only signal typically available in large-scale extracellular recordings.

Dedicated to the memory of Antonio Galves (1943–2023), a mentor and collaborator whose brilliance continues to guide this field.

We focus our study on the continuous-time Galves-Löcherbach model (see [7, 10]) to present our techniques for identifying synaptic connections between neurons. Although we believe our methods are sufficiently general and robust, in this paper we apply them to a single model to avoid complexities that would obscure the simulation methods. We highlight, as a key strength, that our statistics identify the presence of a synaptic link between two specific neurons using only their spiking information, an information set that is, in a sense, minimal. Specifically, this work builds upon the research in [1], providing a refined construction of the estimators and extending the analytical framework to include rigorous statistical error bounds for synaptic classification.

A primary difficulty inherent to the methodology in [1] is that the statistical validity of the inference is strictly tied to the choice of an observation window $\Delta$ that is small enough to minimize the approximation bias. This requirement, in turn, leads to extreme sparsity of the associated counting measure, as the statistics defined in [1] are based on the ratio between sums of Bernoulli random variables whose success parameters are $\Theta(\Delta^2)$.
Consequently, since asymptotic unbiasedness of the estimator constrains the analysis to such a micro-scale, an exceptionally long observation time would be required to obtain statistically significant results.

To address the statistical challenges of micro-scale analysis for synaptic connection classification, this paper introduces a structured framework based on two distinct statistics and two different methodological approaches, leading to four possible analytical combinations. The first statistic is a modified version of the estimator established in [1]. The second statistic introduces a window sampling scheme in which observation windows are dynamically anchored to the spikes of the target neuron, using the local reset property of the Galves-Löcherbach dynamics. The second statistic is new, while the first can be seen as a rescaling by a factor $\Delta^{-1}$ of the statistic defined in [1]. While this rescaling is less relevant for direct inference at a single fixed $\Delta$, it becomes fundamental when applying the macro-micro extrapolation algorithm [12, 8, 9]. Indeed, the original statistic proposed in [1] vanishes as $\Delta \to 0^+$, which would lead to an uninformative, degenerate limit regardless of the connection type. In contrast, the rescaling ensures that the statistics converge toward well-defined limits that depend on the type of synaptic interaction (non-zero for excitatory and inhibitory links), instead of collapsing to zero for every pair. This effectively "blows up" the microscopic signal, allowing the identification of excitatory, inhibitory, or null connections in the asymptotic regime.

Table 1: Inference Framework: Statistics and Methods
Sampling scheme / Method   | Single-scale       | Macro-Micro Extrapolation
Fixed Grid Sampling        | Single small $\Delta$ | Extrapolation
Spike-Triggered Sampling   | Single small $\Delta$ | Extrapolation

The methods summarized in Table 1 represent different balances between theoretical generality and statistical efficiency.
While fixed grid sampling (top row) maintains a standard approach to point-process discretization, in this work we exclusively investigate the Spike-Triggered Sampling scheme (bottom row). This choice is mathematically motivated by the local reset property of the Galves-Löcherbach dynamics: by anchoring the observation windows to the spikes of the target neuron, the membrane potential is sampled from a known state. This conditioning significantly reduces the estimator's variance and makes the synaptic jump easier to separate statistically from the contributions of the rest of the network. Consequently, we focus on the two approaches in the second row: the single-scale spike-triggered estimator, which provides a rigorous benchmark under minimal assumptions, and the Macro-Micro Extrapolation, which leverages the regularity of statistical observables across different scales. The latter employs an adaptive hybrid logic, switching between mid-point averaging and pyramid extrapolation, to recover the intercept at the micro-scale limit even when direct measurements are dominated by sparsity.

It is also important to note that recent models have been designed to better integrate biological neuronal behavior, such as axonal conduction delays and membrane potential leakage (see, e.g., [2]). The methodologies described in this work are designed to be effective beyond the specific cases presented here; preliminary tests on the model proposed in [2] already suggest that the adaptive extrapolation logic maintains its robustness even in the presence of more complex membrane dynamics and non-zero transmission latencies. Furthermore, the Galves-Löcherbach stochastic model has also been applied to social networks (see [6, 11]) to model fast consensus and metastability in polarized networks, highlighting the relevance of this model in various contexts.
The article is organized as follows. In Section 2, we define the stochastic neural model based on the Galves-Löcherbach framework. Section 3 introduces the core of our methodological shift: the Spike-Triggered Estimator, highlighting its advantages over traditional fixed-grid sampling. In Section 4, we derive the statistical properties of the estimator. Section 5 provides rigorous bounds on the conditional probabilities related to our estimators. We also analytically identify a critical window width ($\Delta_A$) required for single-scale identification, noting that such a resolution is often too fine for practical computational applications. Section 6 is dedicated to sample complexity and the derivation of error bounds. Section 7 provides numerical validation of the single-scale estimator, confirming the theoretical predictions. Section 8 introduces the Macro-Micro Strategy and the Pyramid Extrapolation logic to overcome the limitations of the ultra-fine sampling window. Section 9 stresses the framework under extreme noise conditions using a minimal architecture. Section 10 demonstrates its scalability and robustness in fully interactive and layered networks up to $N = 60$. Section 11 summarizes our findings and outlines future research directions.

2 The Continuous-Time Galves-Löcherbach Model
Having defined the general scope of our inference framework, we now establish the formal mathematical setting by describing the stochastic dynamics of the neuronal network. Following the framework of [10], we model the neural network as a family of interacting point processes $(N_i, i \in I)$ defined on a common probability space $(\Omega, \mathcal{F}, P)$, where $I$ is a finite set of neurons. For each neuron $i \in I$, $N_i$ is a random counting measure on $(\mathbb{R}_+, \mathcal{B}(\mathbb{R}_+))$. For any interval $A = (s, t] \subset \mathbb{R}_+$, $N_i(A)$ represents the number of spikes emitted by neuron $i$ during the time interval $A$.
We assume that the processes are simple, meaning that $P(N_i(\{t\}) \le 1 \text{ for all } t \ge 0) = 1$. The sequence of spike times $(T_k^i)_{k \ge 1}$ of neuron $i$ is defined recursively, with the convention $T_0^i := 0$, as
$$T_k^i = \inf\{t > T_{k-1}^i : N_i((T_{k-1}^i, t]) = 1\}. \tag{1}$$
The process $N_i$ can be expressed as $N_i(A) = \sum_{k=1}^{\infty} \mathbf{1}_A(T_k^i)$. For each neuron $i \in I$, the firing intensity $\lambda_i(t)$ is given by
$$\lambda_i(t) = \varphi_i(u_i(t)), \tag{2}$$
where $u_i(t)$ represents the membrane potential and $\varphi_i : \mathbb{R} \to \mathbb{R}_+$ is a non-negative, non-decreasing function. The interaction between neurons is mediated by synaptic weights $w_{j\to i}$. For each neuron $i \in I$, we denote its pre-synaptic neighborhood by $\mathcal{V}_i := \{j \in I : w_{j\to i} \neq 0\}$, partitioned into the excitatory subset $\mathcal{V}_i^+$ ($w_{j\to i} > 0$) and the inhibitory subset $\mathcal{V}_i^-$ ($w_{j\to i} < 0$). We assume a maximum in-degree $d$, such that $|\mathcal{V}_i| \le d$.

The vector of membrane potentials $u(t) = (u_i(t))_{i \in I}$ defines the state space of the network; given the current potentials, the dynamics satisfy the Markov property. For any $t \ge 0$, the potential of neuron $i$ is uniquely determined by the initial state $u(0)$ and the subsequent spikes:
$$u_i(t) = u_i(0)\,\mathbf{1}_{\{N_i([0,t)) = 0\}} + \sum_{j \in \mathcal{V}_i} w_{j\to i}\, N_j\big((L_i(t) \vee 0,\, t)\big), \tag{3}$$
where $L_i(t) = \sup\{s < t : N_i(\{s\}) = 1\}$ tracks the most recent reset (with $\sup\emptyset = 0$). Whenever neuron $i$ spikes, its potential $u_i(t)$ is reset to zero, effectively erasing the influence of its previous history. This Markovian formulation is particularly advantageous for our Spike-Triggered approach: by anchoring our observation windows to the spikes of neuron $i$, we sample the Markov process at the exact moment at which the potential $u_i$ is known to be zero.

To formalize the information flow, we introduce two filtrations. Let $\mathcal{F}_t$ be the $\sigma$-algebra representing the full state of the system up to time $t$.
Conversely, let $\mathcal{H}_t \subset \mathcal{F}_t$ be the $\sigma$-algebra generated exclusively by the observable spike trains up to time $t$.

Remark 1. By the tower property of conditional expectation, any spiking event $E_t$ relates to the observable history as $P(E_t \mid \mathcal{H}_t) = E[P(E_t \mid \mathcal{F}_t) \mid \mathcal{H}_t]$. In the Galves-Löcherbach model, the reset mechanism ensures that conditioning on $\mathcal{H}_t$ effectively captures the information necessary to describe the system's dynamics: by (3), once neuron $i$ has spiked at least once, $u_i(t)$ depends only on the weights and on the spikes observed since its last reset.

Following [1], we assume:

Assumption 1. The firing intensity functions $\varphi_i : \mathbb{R} \to \mathbb{R}_+$ satisfy:
1. non-decreasing;
2. bounded away from 0: $\min_{i \in I} \inf_{u \in \mathbb{R}} \varphi_i(u) \ge \alpha > 0$;
3. bounded from above: $\max_{i \in I} \sup_{u \in \mathbb{R}} \varphi_i(u) \le \beta < +\infty$;
4. minimum synaptic jump: $\min_{j \in \mathcal{V}_i} |\varphi_i(w_{j\to i}) - \varphi_i(0)| \ge \delta$ for all $i \in I$;
5. maximum in-degree: $\max_{i \in I} |\mathcal{V}_i| \le d$.
We assume that $\alpha, \beta, \delta \in \mathbb{R}_+$ and $d \in \mathbb{N}$ are fixed, known values.

Finally, to establish a benchmark for our inference, we partition the observation period into disjoint blocks of duration $3\Delta$. For any $k \in \mathbb{N}$ and neurons $i \neq j$, we define the Fixed Scheme events:
$$A_k^i(\Delta) = \{N_i((3k-3)\Delta, (3k-2)\Delta] > 0\}, \tag{4}$$
$$B_k^i(\Delta) = A_k^i(\Delta) \cap \{N_i((3k-2)\Delta, (3k-1)\Delta] > 0\}, \tag{5}$$
$$C_k^{j\to i}(\Delta) = A_k^i(\Delta) \cap \{N_j((3k-2)\Delta, (3k-1)\Delta] > 0\}, \tag{6}$$
$$D_k^{j\to i}(\Delta) = C_k^{j\to i}(\Delta) \cap \{N_i((3k-1)\Delta, 3k\Delta] > 0\}. \tag{7}$$
In this framework, $B_k^i$ is the bursting event, $C_k^{j\to i}$ the trigger event, and $D_k^{j\to i}$ the interaction event.

3 Spike-Triggered Sampling Scheme
In the following, we denote by $A_k^i, B_k^i, C_k^{j\to i}, D_k^{j\to i}$ the events associated with the Fixed Scheme, where the index $k$ refers to disjoint temporal blocks of fixed duration. Conversely, we employ the calligraphic notation $\mathcal{A}_k^i, \mathcal{B}_k^i, \mathcal{C}_k^{j\to i}, \mathcal{D}_k^{j\to i}$ for the Spike-Triggered Scheme introduced in this section.
In this framework, the index $k$ refers to two sequences of stopping times $(\tau_k^i)_{k \in \mathbb{N}}$ and $(\sigma_k^{j\to i})_{k \in \mathbb{N}}$ derived from the firing history of neurons $i$ and $j$. These stopping times serve as dynamic triggers for the observation windows, allowing a recursive definition of the sampling events that exploits the local reset property.

3.1 Baseline Triggered Scheme for $\mathcal{A}^i$ and $\mathcal{B}^i$
Let $(T_\ell^i)_{\ell \ge 1}$ be the ordered sequence of spike times of neuron $i$. We define a sequence of trigger times $(\tau_k^i)_{k \ge 1}$ and the corresponding sampled events through the following recursive design:
1. Initialization:
$$\tau_1^i := T_1^i. \tag{8}$$
2. Definition of triggered events: for each trigger $\tau_k^i$, we define
• the reference event $\mathcal{A}_k^i$, marking the reset of the membrane potential:
$$\mathcal{A}_k^i := \{N_i(\{\tau_k^i\}) = 1\}; \tag{9}$$
• the bursting event $\mathcal{B}_k^i(\Delta)$, occurring if neuron $i$ emits at least one subsequent spike within the window $(\tau_k^i, \tau_k^i + \Delta]$:
$$\mathcal{B}_k^i(\Delta) := \{N_i(\tau_k^i, \tau_k^i + \Delta] > 0\}. \tag{10}$$
3. Conditional recursive update: the next trigger time $\tau_{k+1}^i$ is determined by the outcome of $\mathcal{B}_k^i(\Delta)$:
• If $\mathcal{B}_k^i(\Delta)$ occurs: let $T_{k,\mathrm{burst}}^i$ be the first spike in $(\tau_k^i, \tau_k^i + \Delta]$. To preserve the reset condition for the next trial, we skip this spike and set
$$\tau_{k+1}^i := \inf\{T_\ell^i : T_\ell^i > T_{k,\mathrm{burst}}^i\}. \tag{11}$$
• If $\mathcal{B}_k^i(\Delta)$ does not occur: the next trigger is the first spike occurring after the current horizon $\tau_k^i + \Delta$:
$$\tau_{k+1}^i := \inf\{T_\ell^i : T_\ell^i > \tau_k^i + \Delta\}. \tag{12}$$

3.2 Interaction Triggered Scheme for $\mathcal{C}^{j\to i}$ and $\mathcal{D}^{j\to i}$
We define a sequence of trigger times $(\sigma_k^{j\to i})_{k \ge 1}$ dedicated to the interaction analysis, where each trigger coincides with a spike of the target neuron $i$.
1. Initialization and reset: the first interaction trigger is $\sigma_1^{j\to i} := T_1^i$. At this instant, the membrane potential $u_i$ is reset to zero, providing a controlled state for the trial.
2. Causal triggering (detection of $j$): for each $\sigma_k^{j\to i}$, we search for the first spike of neuron $j$ after the trigger:
$$T_{k,*}^j := \inf\{T_h^j : T_h^j > \sigma_k^{j\to i}\}. \tag{13}$$
The trigger event $\mathcal{C}_k^{j\to i}(\Delta)$ is satisfied if this spike falls within the window:
$$\mathcal{C}_k^{j\to i}(\Delta) := \{T_{k,*}^j \le \sigma_k^{j\to i} + \Delta\}. \tag{14}$$
3. Conditional observation of $\mathcal{D}_k^{j\to i}(\Delta)$: if $\mathcal{C}_k^{j\to i}(\Delta)$ occurs, a secondary window of length $\Delta$ is opened at $T_{k,*}^j$. The interaction success occurs if neuron $i$ fires within it:
$$\mathcal{D}_k^{j\to i}(\Delta) := \mathcal{C}_k^{j\to i}(\Delta) \cap \{N_i(T_{k,*}^j, T_{k,*}^j + \Delta] > 0\}. \tag{15}$$
4. Recursive update rule: the next trigger $\sigma_{k+1}^{j\to i}$ is determined as follows:
• If $\mathcal{C}_k^{j\to i}(\Delta)$ is not satisfied: the trial is aborted and the next trigger is
$$\sigma_{k+1}^{j\to i} := \inf\{T_\ell^i : T_\ell^i > \sigma_k^{j\to i} + \Delta\}. \tag{16}$$
• If $\mathcal{C}_k^{j\to i}(\Delta)$ is satisfied:
– Case success ($\mathcal{D}_k^{j\to i}(\Delta)$ occurs): let $T_{k,\mathrm{react}}^i := \inf\{T_\ell^i : T_\ell^i > T_{k,*}^j\}$. We skip this reaction spike and set
$$\sigma_{k+1}^{j\to i} := \inf\{T_\ell^i : T_\ell^i > T_{k,\mathrm{react}}^i\}. \tag{17}$$
– Case failure ($\mathcal{D}_k^{j\to i}(\Delta)$ does not occur): the next trigger is
$$\sigma_{k+1}^{j\to i} := \inf\{T_\ell^i : T_\ell^i > T_{k,*}^j + \Delta\}. \tag{18}$$
The sequence $(\sigma_k^{j\to i})_{k \ge 1}$ consists of stopping times with respect to the filtration $(\mathcal{F}_t)_{t \ge 0}$. Crucially, because each trigger is chosen to coincide with a spike of the target neuron $i$, the membrane potential $u_i(t)$ is reset at the start of each trial. This local reset mechanism ensures that trials are decoupled, providing the probabilistic foundation required to apply concentration inequalities to the Spike-Triggered Estimator.

4 The Estimators $\hat G^{j\to i}(\Delta)$ and $\hat{\mathcal{G}}^{j\to i}(\Delta)$
The sampling protocols described in Section 3 allow for the construction of rescaled statistics designed to isolate the functional influence between neurons using pairwise observations.
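The two recursive protocols of Section 3 can be sketched as a single pass over sorted spike-time lists. The following is a minimal, hypothetical Python sketch rather than the author's implementation: the helper names (`first_after`, `baseline_trials`, `interaction_trials`, `spike_triggered_estimate`) are illustrative, and the end-of-recording cutoff `t_end`, used to discard trials whose windows are not fully observed, is our own assumption. The last function combines the resulting counts as in the Spike-Triggered Estimator (20).

```python
from bisect import bisect_right


def first_after(spikes, t):
    """First spike time strictly greater than t in a sorted list, or None."""
    k = bisect_right(spikes, t)
    return spikes[k] if k < len(spikes) else None


def baseline_trials(spikes_i, window, t_end):
    """Outcomes of the bursting events B_k (Section 3.1), one bool per trigger tau_k."""
    outcomes = []
    tau = spikes_i[0] if spikes_i else None            # (8): tau_1 = T_1^i
    while tau is not None and tau + window <= t_end:   # assumed censoring rule
        nxt = first_after(spikes_i, tau)
        burst = nxt is not None and nxt <= tau + window   # (10)
        outcomes.append(burst)
        if burst:
            tau = first_after(spikes_i, nxt)              # (11): skip the burst spike
        else:
            tau = first_after(spikes_i, tau + window)     # (12)
    return outcomes


def interaction_trials(spikes_i, spikes_j, window, t_end):
    """Outcomes (C_k, D_k) of the interaction scheme (Section 3.2)."""
    outcomes = []
    sigma = spikes_i[0] if spikes_i else None              # sigma_1 = T_1^i
    while sigma is not None and sigma + 2 * window <= t_end:  # assumed censoring rule
        t_j = first_after(spikes_j, sigma)                  # (13)
        c = t_j is not None and t_j <= sigma + window       # (14)
        if not c:
            outcomes.append((False, False))
            sigma = first_after(spikes_i, sigma + window)   # (16): trial aborted
            continue
        react = first_after(spikes_i, t_j)
        d = react is not None and react <= t_j + window     # (15)
        outcomes.append((True, d))
        if d:
            sigma = first_after(spikes_i, react)            # (17): skip reaction spike
        else:
            sigma = first_after(spikes_i, t_j + window)     # (18)
    return outcomes


def spike_triggered_estimate(b_outcomes, cd_outcomes, window, delta):
    """Estimator (20); A_k holds in every baseline trial, so its denominator is m0."""
    m0 = len(b_outcomes)
    n_c = sum(1 for c, _ in cd_outcomes if c)
    n_d = sum(1 for _, d in cd_outcomes if d)
    if m0 == 0 or n_c == 0:
        raise ValueError("need at least one baseline trial and one active trial")
    return (n_d / n_c - sum(b_outcomes) / m0) / (window * delta)
```

For instance, with `spikes_i = [1.0, 1.05, 2.0, 3.0, 3.3]`, `spikes_j = [1.02, 2.5, 3.05]` and a window of 0.1, the scan yields four baseline trials (one burst) and four interaction triggers, two of which are active and one successful, so with $\delta = 1$ the estimate is $(1/2 - 1/4)/0.1 = 2.5$.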
For a given pair of neurons $(j, i)$, the Fixed Scheme Estimator is defined as
$$\hat G_{m_0,m_1}^{j\to i}(\Delta) := \frac{1}{\Delta\delta}\left(\frac{\sum_{k=1}^{m_1}\mathbf{1}_{D_k^{j\to i}(\Delta)}}{\sum_{k=1}^{m_1}\mathbf{1}_{C_k^{j\to i}(\Delta)}} - \frac{\sum_{k=1}^{m_0}\mathbf{1}_{B_k^{i}(\Delta)}}{\sum_{k=1}^{m_0}\mathbf{1}_{A_k^{i}(\Delta)}}\right), \tag{19}$$
whereas the Spike-Triggered Estimator is defined as
$$\hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta) := \frac{1}{\Delta\delta}\left(\frac{\sum_{k=1}^{m_1}\mathbf{1}_{\mathcal{D}_k^{j\to i}(\Delta)}}{\sum_{k=1}^{m_1}\mathbf{1}_{\mathcal{C}_k^{j\to i}(\Delta)}} - \frac{\sum_{k=1}^{m_0}\mathbf{1}_{\mathcal{B}_k^{i}(\Delta)}}{m_0}\right). \tag{20}$$
Since every trigger $\tau_k^i$ is a spike of neuron $i$, the reference event $\mathcal{A}_k^i$ occurs in every trial, which is why the baseline denominator in (20) equals $m_0$.

4.1 Conditional Probabilities and Ergodic Means
We define the theoretical quantities for a representative trial ($k = 1$), which depend on the specific realization of the history up to the trigger times. For the Fixed Scheme, the theoretical random variable is given by
$$G^{j\to i}(\Delta, \mathcal{F}_{\sigma_1^{j\to i}}, \mathcal{F}'_{\tau_1^i}) := \frac{1}{\Delta\delta}\left(\frac{P(D_1^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_1^{j\to i}})}{P(C_1^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_1^{j\to i}})} - \frac{P(B_1^{i}(\Delta)\mid\mathcal{F}'_{\tau_1^i})}{P(A_1^{i}(\Delta)\mid\mathcal{F}'_{\tau_1^i})}\right), \tag{21}$$
and analogously, for the Spike-Triggered approach,
$$\mathcal{G}^{j\to i}(\Delta, \mathcal{F}_{\sigma_1^{j\to i}}, \mathcal{F}'_{\tau_1^i}) := \frac{1}{\Delta\delta}\left(\frac{P(\mathcal{D}_1^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_1^{j\to i}})}{P(\mathcal{C}_1^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_1^{j\to i}})} - \frac{P(\mathcal{B}_1^{i}(\Delta)\mid\mathcal{F}'_{\tau_1^i})}{1}\right). \tag{22}$$
In these expressions, the interaction term is conditioned on the history $\mathcal{F}$ at the trigger time $\sigma_1^{j\to i}$, while the baseline term is conditioned on the history $\mathcal{F}'$ at the trigger time $\tau_1^i$. Under the invariant measures $\mu$ and $\nu$ (corresponding to fixed and spike-triggered sampling, respectively), the expected values are
$$G^{j\to i}(\Delta) := E_\mu[G^{j\to i}(\Delta, \mathcal{F}, \mathcal{F}')], \tag{23}$$
$$\mathcal{G}^{j\to i}(\Delta) := E_\nu[\mathcal{G}^{j\to i}(\Delta, \mathcal{F}, \mathcal{F}')]. \tag{24}$$
By ergodicity, which holds for this class of processes under Assumption 1 as proven in [10], the empirical estimators converge almost surely to their respective ergodic means:
$$\hat G_{m_0,m_1}^{j\to i}(\Delta) \xrightarrow[m_0,m_1\to\infty]{\text{a.s.}} G^{j\to i}(\Delta), \qquad \hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta) \xrightarrow[m_0,m_1\to\infty]{\text{a.s.}} \mathcal{G}^{j\to i}(\Delta). \tag{25}$$

5 Probabilistic Bounds
We study the range of values that the random variable $P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i})$ can take, depending on the history of the process up to the stopping time $\tau_k^i$.

Lemma 1 (Baseline Probability Estimates). Under Assumption 1, for any trigger time $\tau_k^i$, the random variable $P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i})$ satisfies the following inequalities almost surely:
$$(1 - e^{-\varphi_i(0)\Delta})\,e^{-\beta|\mathcal{V}_i|\Delta} \le P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i}) \le (1 - e^{-\varphi_i(0)\Delta})\,e^{-\alpha|\mathcal{V}_i|\Delta} + (1 - e^{-\beta\Delta})(1 - e^{-\beta|\mathcal{V}_i|\Delta}). \tag{26}$$

Proof. Recall from Section 3 that the event $\mathcal{B}_k^i(\Delta)$ represents neuron $i$ emitting at least one spike in the interval $(\tau_k^i, \tau_k^i + \Delta]$. Since the probabilities are conditioned on $\mathcal{F}_{\tau_k^i}$, all subsequent inequalities hold almost surely ($P$-a.s.). By the reset property of the model, $u_i(\tau_k^i) = 0$ a.s.; hence, the firing intensity at the beginning of the interval is $\lambda_i(\tau_k^i) = \varphi_i(0)$.

To derive the lower bound, let $N_{\mathcal{V}_i}(\tau_k^i, \tau_k^i + \Delta]$ be the point process representing the spikes of all pre-synaptic neurons $j \in \mathcal{V}_i$. We introduce a dominating Poisson process $\mathcal{P}^*$ with constant intensity $\beta|\mathcal{V}_i|$. The following inequalities hold:
$$\begin{aligned} P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i}) &\ge P(\mathcal{B}_k^i(\Delta)\cap\{N_{\mathcal{V}_i}(\tau_k^i, \tau_k^i+\Delta] = 0\}\mid\mathcal{F}_{\tau_k^i}) \\ &\ge P(\mathcal{B}_k^i(\Delta)\cap\{\mathcal{P}^*(\tau_k^i, \tau_k^i+\Delta] = 0\}\mid\mathcal{F}_{\tau_k^i}) \\ &= (1 - e^{-\varphi_i(0)\Delta})\,e^{-\beta|\mathcal{V}_i|\Delta}, \end{aligned} \tag{27}$$
where the second inequality follows from the stochastic dominance of $\mathcal{P}^*$ over $N_{\mathcal{V}_i}$.

For the upper bound, we partition the event $\mathcal{B}_k^i(\Delta)$ according to the activity of $N_{\mathcal{V}_i}(\tau_k^i, \tau_k^i + \Delta]$.
Using a dominated Poisson process $\mathcal{P}_*$ (intensity $\alpha|\mathcal{V}_i|$) and the dominating process $\mathcal{P}^*$, we obtain
$$\begin{aligned} P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i}) &= P(\mathcal{B}_k^i(\Delta)\cap\{N_{\mathcal{V}_i}(\tau_k^i, \tau_k^i+\Delta] = 0\}\mid\mathcal{F}_{\tau_k^i}) + P(\mathcal{B}_k^i(\Delta)\cap\{N_{\mathcal{V}_i}(\tau_k^i, \tau_k^i+\Delta] > 0\}\mid\mathcal{F}_{\tau_k^i}) \\ &\le P(\mathcal{B}_k^i(\Delta)\cap\{\mathcal{P}_*(\tau_k^i, \tau_k^i+\Delta] = 0\}\mid\mathcal{F}_{\tau_k^i}) + P(\mathcal{B}_k^i(\Delta)\cap\{\mathcal{P}^*(\tau_k^i, \tau_k^i+\Delta] > 0\}\mid\mathcal{F}_{\tau_k^i}) \\ &\le (1 - e^{-\varphi_i(0)\Delta})\,e^{-\alpha|\mathcal{V}_i|\Delta} + (1 - e^{-\beta\Delta})(1 - e^{-\beta|\mathcal{V}_i|\Delta}). \end{aligned} \tag{28}$$
In (28), the second term is bounded by setting the firing intensity of neuron $i$ to $\beta$ whenever the neighborhood is active.

The bounds in (26) can be simplified via a Taylor expansion with the Lagrange form of the remainder. For any $x \ge 0$,
$$1 - x \le e^{-x} \le 1 - x + \frac{x^2}{2}. \tag{29}$$
By combining Lemma 1 and (29) with the assumption $|\mathcal{V}_i| \le d$, we obtain the following result.

Corollary 1. Under the same assumptions as Lemma 1,
$$\varphi_i(0)\Delta - \left(d + \tfrac{1}{2}\right)\beta^2\Delta^2 \le P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i}) \le \varphi_i(0)\Delta + d\beta^2\Delta^2. \tag{30}$$

Lemma 2 quantifies the modulation of the bursting probability induced by a pre-synaptic event from neuron $j$.

Lemma 2 (Probability Ratios for Connectivity). Let $\Delta > 0$. For any target neuron $i$ and candidate pre-synaptic neuron $j$, the conditional probability of an interaction success satisfies the following bounds $P$-a.s.:
1. No connection ($j \notin \mathcal{V}_i$):
$$(1 - e^{-\varphi_i(0)\Delta})\,e^{-2|\mathcal{V}_i|\beta\Delta} \le \frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \le (1 - e^{-\varphi_i(0)\Delta}) + (1 - e^{-\beta\Delta})(1 - e^{-2\beta|\mathcal{V}_i|\Delta}). \tag{31}$$
2. Excitatory connection ($j \in \mathcal{V}_i^+$):
$$\frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \ge (1 - e^{-(\varphi_i(0)+\delta)\Delta})\,e^{-2\beta(|\mathcal{V}_i|-1)\Delta}. \tag{32}$$
3. Inhibitory connection ($j \in \mathcal{V}_i^-$):
$$\frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \le (1 - e^{-(\varphi_i(0)-\delta)\Delta}) + (1 - e^{-\beta\Delta})(1 - e^{-2\beta(|\mathcal{V}_i|-1)\Delta}). \tag{33}$$

Proof.
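Since $\mathcal{D}_k^{j\to i}(\Delta) \subseteq \mathcal{C}_k^{j\to i}(\Delta)$, the ratio becomes
$$\frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} = P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{C}_k^{j\to i}(\Delta), \mathcal{F}_{\sigma_k^{j\to i}}). \tag{34}$$
Recalling the definition (13), we condition on the position $t^*$ of the first spike of neuron $j$ after the trigger and study
$$P(\mathcal{D}_k^{j\to i}(\Delta)\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}), \qquad t^* \in (\sigma_k^{j\to i}, \sigma_k^{j\to i} + \Delta]. \tag{35}$$
Lower bound, $j \notin \mathcal{V}_i$. Let $E_k^{j\to i}(t^*)$ be the event that all pre-synaptic neurons in $\mathcal{V}_i$ remain silent in $(\sigma_k^{j\to i}, t^* + \Delta]$, an interval of length at most $2\Delta$. Using a dominating Poisson process $\mathcal{P}^*$ with intensity $\beta|\mathcal{V}_i|$, the following holds:
$$\begin{aligned} P(\mathcal{D}_k^{j\to i}(\Delta)\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) &\ge P(\mathcal{D}_k^{j\to i}(\Delta)\cap E_k^{j\to i}(t^*)\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) \\ &\ge P(\mathcal{D}_k^{j\to i}(\Delta)\cap\{\mathcal{P}^*(\sigma_k^{j\to i}, t^*+\Delta] = 0\}\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) \\ &\ge (1 - e^{-\varphi_i(0)\Delta})\,e^{-2\beta|\mathcal{V}_i|\Delta}. \end{aligned} \tag{36}$$
Upper bound, $j \notin \mathcal{V}_i$. Decomposing $\mathcal{D}_k^{j\to i}(\Delta)$ via $E_k^{j\to i}(t^*)$ and its complement,
$$P(\mathcal{D}_k^{j\to i}(\Delta)\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) \le P(\mathcal{D}_k^{j\to i}(\Delta)\mid E_k^{j\to i}(t^*), T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) + P(\mathcal{D}_k^{j\to i}(\Delta)\cap(E_k^{j\to i}(t^*))^c\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}). \tag{37}$$
On $E_k^{j\to i}(t^*)$, since $j \notin \mathcal{V}_i$, the intensity $\lambda_i$ equals $\varphi_i(0)$. For the second term, independent dominating Poisson processes $\mathcal{P}^*$ (intensity $\beta|\mathcal{V}_i|$) and $\mathcal{P}^{**}$ (intensity $\beta$) yield
$$P(\mathcal{D}_k^{j\to i}(\Delta)\mid T_{k,*}^j = t^*, \mathcal{F}_{\sigma_k^{j\to i}}) \le (1 - e^{-\varphi_i(0)\Delta}) + P(\mathcal{P}^{**}(\Delta) \ge 1)\cdot P(\mathcal{P}^*(2\Delta) \ge 1) = (1 - e^{-\varphi_i(0)\Delta}) + (1 - e^{-\beta\Delta})(1 - e^{-2\beta|\mathcal{V}_i|\Delta}). \tag{38}$$
Excitatory connection, $j \in \mathcal{V}_i^+$. After the firing of neuron $j$, and as long as the other pre-synaptic neurons remain silent, the intensity of neuron $i$ satisfies $\lambda_i \ge \varphi_i(0) + \delta$ by items 1 and 4 of Assumption 1. Replacing $|\mathcal{V}_i|$ with $|\mathcal{V}_i| - 1$ in the exponent, to account for the neighborhood excluding $j$, and repeating the lower-bound argument above yields (32).
Inhibitory connection, $j \in \mathcal{V}_i^-$. The argument is analogous: we use the upper bound $\varphi_i(0) - \delta$ on the firing intensity of neuron $i$ after the spike of $j$ and follow the same steps as in the excitatory case.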
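The exponential bounds of Lemma 2 can be evaluated numerically to see why a small window is needed: the excitatory lower bound (32) lies above the null upper bound in (31), and the inhibitory upper bound (33) lies below the null lower bound, only when $\Delta$ is small enough. The Python sketch below is illustrative; it assumes $\varphi_i(0) = 3$ and $\beta = 5$ (the values of Section 7.1) together with the hypothetical choices $\delta = 1$ and $|\mathcal{V}_i| = 2$, and the function names are our own.

```python
import math


def null_bounds(phi0, beta, v, dt):
    """Bounds (31) on P(D | C, F) when j is not pre-synaptic to i; v = |V_i|."""
    lower = (1 - math.exp(-phi0 * dt)) * math.exp(-2 * v * beta * dt)
    upper = (1 - math.exp(-phi0 * dt)) + (1 - math.exp(-beta * dt)) * (
        1 - math.exp(-2 * beta * v * dt))
    return lower, upper


def excitatory_lower(phi0, delta, beta, v, dt):
    """Lower bound (32) for an excitatory link j -> i (j belongs to V_i, so v >= 1)."""
    return (1 - math.exp(-(phi0 + delta) * dt)) * math.exp(-2 * beta * (v - 1) * dt)


def inhibitory_upper(phi0, delta, beta, v, dt):
    """Upper bound (33) for an inhibitory link j -> i (v >= 1)."""
    return (1 - math.exp(-(phi0 - delta) * dt)) + (1 - math.exp(-beta * dt)) * (
        1 - math.exp(-2 * beta * (v - 1) * dt))


def bounds_separate(phi0, delta, beta, v, dt):
    """True if the bound intervals of the three connection types do not overlap."""
    null_lo, null_hi = null_bounds(phi0, beta, v, dt)
    return (excitatory_lower(phi0, delta, beta, v, dt) > null_hi
            and inhibitory_upper(phi0, delta, beta, v, dt) < null_lo)


for dt in (0.001, 0.01, 0.1):
    print(dt, bounds_separate(phi0=3.0, delta=1.0, beta=5.0, v=2, dt=dt))
```

With these values the three intervals are disjoint at $\Delta = 0.001$ but overlap at $\Delta = 0.01$ and $\Delta = 0.1$, which is consistent with the need for a very fine window at a single scale.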
5.1 Explicit Linear-Quadratic Bounds
Building on the results of Lemma 2, we simplify the exponential bounds into a more manageable linear-quadratic form. Using a Taylor expansion with Lagrange remainder and $|\mathcal{V}_i| \le d$, we obtain the following result.

Corollary 2. Under the assumptions of Lemma 2, the ratio of conditional probabilities for any pair of neurons $(j, i)$ satisfies the following explicit bounds almost surely ($P$-a.s.):
1. No connection ($j \notin \mathcal{V}_i$):
$$\varphi_i(0)\Delta - \left(2d + \tfrac{1}{2}\right)\beta^2\Delta^2 \le \frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \le \varphi_i(0)\Delta + 2d\beta^2\Delta^2. \tag{39}$$
2. Excitatory connection ($j \in \mathcal{V}_i^+$):
$$\frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \ge (\varphi_i(0) + \delta)\Delta - \left(2d - \tfrac{1}{2}\right)\beta^2\Delta^2. \tag{40}$$
3. Inhibitory connection ($j \in \mathcal{V}_i^-$):
$$\frac{P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})}{P(\mathcal{C}_k^{j\to i}(\Delta)\mid\mathcal{F}_{\sigma_k^{j\to i}})} \le (\varphi_i(0) - \delta)\Delta + (2d - 2)\beta^2\Delta^2. \tag{41}$$

Proof. These inequalities are derived by applying (29) to the terms of Lemma 2. Specifically, for the lower bounds of the form $(1 - e^{-\lambda\Delta})e^{-\gamma\Delta}$, we use
$$(1 - e^{-\lambda\Delta})e^{-\gamma\Delta} \ge \left(\lambda\Delta - \frac{\lambda^2\Delta^2}{2}\right)(1 - \gamma\Delta) = \lambda\Delta - \left(\lambda\gamma + \frac{\lambda^2}{2}\right)\Delta^2 + \frac{\lambda^2\gamma}{2}\Delta^3. \tag{42}$$
By dropping the positive $\Delta^3$ term and substituting the upper bounds $\lambda \le \beta$ and $\gamma \le 2d\beta$ (or $\gamma \le 2(d-1)\beta$), we obtain the constants $\beta^2(2d + 0.5)$ and $\beta^2(2(d-1) + 0.5) = \beta^2(2d - 1.5)$; the latter is smaller than the constant $\beta^2(2d - 0.5)$ appearing in (40), so (40) holds a fortiori. For the upper bounds, which involve terms like $(1 - e^{-\lambda\Delta}) + (1 - e^{-\beta\Delta})(1 - e^{-\gamma\Delta})$, we use
$$(1 - e^{-\lambda\Delta}) + (1 - e^{-\beta\Delta})(1 - e^{-\gamma\Delta}) \le \lambda\Delta + (\beta\Delta)(\gamma\Delta) = \lambda\Delta + \beta\gamma\Delta^2. \tag{43}$$
Substituting $\gamma = 2d\beta$ (or $2(d-1)\beta$) yields the constants $2d\beta^2$ and $2(d-1)\beta^2$. The validity of these bounds for any $\Delta > 0$ is guaranteed by the Taylor expansion with Lagrange remainder, which justifies omitting the remainder in the lower and upper bounds, respectively.
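As a sanity check of Corollaries 1 and 2, the following Python sketch evaluates the exponential bounds of Lemmas 1 and 2 and the linear-quadratic bounds (30) and (39) to (41) on a grid of windows $\Delta$, for every neighborhood size $1 \le |\mathcal{V}_i| \le d$, and verifies that the linear-quadratic bounds are always the looser ones. The parameter values ($\alpha = 1$, $\beta = 5$, $\varphi_i(0) = 3$ as in Section 7.1, plus the hypothetical $\delta = 1$ and $d = 3$, chosen so that $\alpha \le \varphi_i(0) - \delta$ and $\varphi_i(0) + \delta \le \beta$) and the function name are illustrative assumptions; a check on a finite grid complements, but does not replace, the proof.

```python
import math


def e(x):
    """Shorthand for 1 - exp(-x)."""
    return 1 - math.exp(-x)


def corollaries_hold(phi0, alpha, beta, delta, d, grid):
    """Check that bounds (30) and (39)-(41) enclose Lemmas 1 and 2 on a grid of windows."""
    for dt in grid:
        for v in range(1, d + 1):  # v = |V_i| <= d
            # Lemma 1 (26) versus Corollary 1 (30)
            lem1_lo = e(phi0 * dt) * math.exp(-beta * v * dt)
            lem1_hi = e(phi0 * dt) * math.exp(-alpha * v * dt) + e(beta * dt) * e(beta * v * dt)
            if lem1_lo < phi0 * dt - (d + 0.5) * beta**2 * dt**2:
                return False
            if lem1_hi > phi0 * dt + d * beta**2 * dt**2:
                return False
            # Lemma 2, null case (31) versus (39)
            null_lo = e(phi0 * dt) * math.exp(-2 * v * beta * dt)
            null_hi = e(phi0 * dt) + e(beta * dt) * e(2 * beta * v * dt)
            if null_lo < phi0 * dt - (2 * d + 0.5) * beta**2 * dt**2:
                return False
            if null_hi > phi0 * dt + 2 * d * beta**2 * dt**2:
                return False
            # Lemma 2, excitatory (32) versus (40) and inhibitory (33) versus (41)
            exc_lo = e((phi0 + delta) * dt) * math.exp(-2 * beta * (v - 1) * dt)
            inh_hi = e((phi0 - delta) * dt) + e(beta * dt) * e(2 * beta * (v - 1) * dt)
            if exc_lo < (phi0 + delta) * dt - (2 * d - 0.5) * beta**2 * dt**2:
                return False
            if inh_hi > (phi0 - delta) * dt + (2 * d - 2) * beta**2 * dt**2:
                return False
    return True


grid = (1e-3, 1e-2, 0.05, 0.1, 0.5, 1.0)
print(corollaries_hold(phi0=3.0, alpha=1.0, beta=5.0, delta=1.0, d=3, grid=grid))
```

For large $\Delta$ the lower linear-quadratic bounds become negative and the upper ones exceed 1, so the check is only informative for small windows, which is the regime of interest.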
5.2 Rigorous Bounds for $\mathcal{G}^{j\to i}(\Delta)$
By combining the results of Corollaries 1 and 2, we derive formal bounds for the scaled random variable $\mathcal{G}^{j\to i}(\Delta, \cdot)$ defined in (22). This quantity isolates the synaptic influence by subtracting the baseline firing probability and normalizing by $\delta\Delta$. To ensure that the identification of the connectivity is not affected by finite-window effects, we introduce the operational condition
$$9d\beta^2\Delta \le \delta. \tag{44}$$
Under this constraint, the gap between the probability ratios for different connection types remains strictly positive and proportional to $\delta\Delta$, so that the quadratic error does not obscure the excitatory or inhibitory signal.

Theorem 1 (Stability of Interaction Estimators). Under the scaling condition (44), both the random variable $\mathcal{G}^{j\to i}(\Delta, \mathcal{F}_{\sigma_1^{j\to i}}, \mathcal{F}'_{\tau_1^i})$ and its mean $\mathcal{G}^{j\to i}(\Delta)$ satisfy the following inequalities (written for the mean):
1. If $j \notin \mathcal{V}_i$, then
$$|\mathcal{G}^{j\to i}(\Delta)| \le \frac{(3d + 0.5)\beta^2}{\delta}\,\Delta. \tag{45}$$
2. If $j \in \mathcal{V}_i^+$, then
$$\mathcal{G}^{j\to i}(\Delta) \ge 1 - \frac{3d\beta^2}{\delta}\,\Delta. \tag{46}$$
3. If $j \in \mathcal{V}_i^-$, then
$$\mathcal{G}^{j\to i}(\Delta) \le -1 + \frac{(3d - 1.5)\beta^2}{\delta}\,\Delta. \tag{47}$$

Proof. The bounds are established by substituting the linear-quadratic expansions into the definition (22) of the random interaction strength. Note that the ratios represent the conditional probabilities of a spike occurring within a window $\Delta$, given the occurrence of the respective trigger events. For the excitatory case ($j \in \mathcal{V}_i^+$), applying the estimates of Corollaries 1 and 2, we obtain
$$\delta\Delta\,\mathcal{G}^{j\to i}(\Delta, \cdot) \ge (\varphi_i(0) + \delta)\Delta - \beta^2(2d - 0.5)\Delta^2 - \left(\varphi_i(0)\Delta + d\beta^2\Delta^2\right) = \delta\Delta - \beta^2(3d - 0.5)\Delta^2.$$
Dividing by $\delta\Delta$ yields
$$\mathcal{G}^{j\to i}(\Delta, \mathcal{F}_{\sigma_1^{j\to i}}, \mathcal{F}'_{\tau_1^i}) \ge 1 - \frac{\beta^2(3d - 0.5)}{\delta}\,\Delta,$$
which implies (46). The uniformity of these bounds with respect to the history $(\mathcal{F}_t)_{t \ge 0}$, guaranteed by the reset property at the times $\sigma_k^{j\to i}$ and $\tau_k^i$, allows us to extend the result to the expected value $\mathcal{G}^{j\to i}(\Delta)$ via the stationary measure $E_\nu[\cdot]$.
The null and inhibitory cases follow by analogous arguments.

These results allow us to establish a statistical criterion for the identification of excitatory, inhibitory, and null connections.

Corollary 3 (Topology Identification). For the observation window $\Delta_A = \frac{\delta}{9d\beta^2}$, the function $\mathcal{G}^{j\to i}(\Delta)$ provides an explicit separation of the network topology:
1. No connection ($j \notin \mathcal{V}_i$):
$$|\mathcal{G}^{j\to i}(\Delta_A)| \le \frac{1}{3} + \frac{1}{18d}. \tag{48}$$
2. Excitatory connection ($j \in \mathcal{V}_i^+$):
$$\mathcal{G}^{j\to i}(\Delta_A) \ge \frac{2}{3}. \tag{49}$$
3. Inhibitory connection ($j \in \mathcal{V}_i^-$):
$$\mathcal{G}^{j\to i}(\Delta_A) \le -\frac{2}{3} - \frac{1}{6d}. \tag{50}$$

5.3 Comparison with Previous Identification Bounds
We compare the analytical bounds established in Corollaries 1 and 2 with the results presented in Lemma 3 of [1]. The estimates derived in this work provide a sharper characterization of the interaction function $\mathcal{G}^{j\to i}(\Delta)$ for the following reasons.
1. Estimator construction. The estimator in [1] is defined on a fixed-time grid, whereas the current estimator is constructed using the stopping times $(\sigma_k^{j\to i}, \tau_k^i)$. By the reset property, this construction fixes the initial intensity at $\lambda_i = \varphi_i(0)$, removing the dependence on the history of the process for the target neuron $i$. This leads to sharper analytical bounds and more stable statistics than the fixed-grid approach.
2. Analytical refinement of the bounds. In Lemma 3 of [1], the error estimates depended on the lower bound $\alpha$ of the firing intensities. The refined analytical treatment in the current work removes the dependence on $\alpha$ from the denominators, providing a more direct representation of the synaptic interaction.
Comparing the error constants $C_{2022}$ with the constants $C_{2026}$ from Corollaries 1 and 2, we observe a systematic reduction in the error terms. Even assuming $\varphi_i(0) = \alpha$, which yields the most favorable estimates for the previous framework, the bounds compare as follows:
1. Baseline firing probability $P(\mathcal{B}_k^i(\Delta)\mid\mathcal{F}_{\tau_k^i})$:
• Lower bound: $C_{2026} = (d + 0.5)\beta^2$ vs. $C_{2022} = 3d\beta^2$
• Upper bound: $C_{2026} = d\beta^2$ vs. $C_{2022} \ge 4d\beta^3/\alpha$
2. Probability ratio $P(\mathcal{D}_k^{j\to i}(\Delta)\mid\mathcal{C}_k^{j\to i}(\Delta), \mathcal{F}_{\sigma_k^{j\to i}})$:
• Excitatory case ($j \in \mathcal{V}_i^+$):
– Lower bound: $C_{2026} = (2d - 0.5)\beta^2$ vs. $C_{2022} \ge 5d\beta^3/\alpha$
• Inhibitory case ($j \in \mathcal{V}_i^-$):
– Upper bound: $C_{2026} = (2d - 2)\beta^2$ vs. $C_{2022} \ge 5d\beta^4/\alpha^2$
• Null case ($j \notin \mathcal{V}_i$):
– Lower bound: $C_{2026} = (2d + 0.5)\beta^2$ vs. $C_{2022} \ge 5d\beta^3/\alpha$
– Upper bound: $C_{2026} = 2d\beta^2$ vs. $C_{2022} \ge 5d\beta^4/\alpha^2$

5.4 Spike-Triggered Estimator with a Single-Scale Window
The empirical estimator $\hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta_A)$ is employed to classify the synaptic interaction between any pair of neurons $(j, i)$. We define the following statistical classifier:
$$\hat S_{ij}(m_0, m_1) := \begin{cases} 1 & \text{if } \hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta_A) > \frac{1}{2}, \\ -1 & \text{if } \hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta_A) < -\frac{1}{2}, \\ 0 & \text{if } |\hat{\mathcal{G}}_{m_0,m_1}^{j\to i}(\Delta_A)| \le \frac{1}{2}. \end{cases} \tag{51}$$
By Corollary 3, we obtain
$$\mathcal{G}^{j\to i}(\Delta_A) \in \begin{cases} \left[\frac{2}{3}, +\infty\right) \subset \left(\frac{1}{2}, +\infty\right) & \text{if } j \to i \text{ is excitatory}, \\ \left[-\frac{1}{3} - \frac{1}{18d}, \frac{1}{3} + \frac{1}{18d}\right] \subset \left[-\frac{1}{2}, \frac{1}{2}\right] & \text{if } j \text{ is not pre-synaptic to } i, \\ \left(-\infty, -\frac{2}{3} - \frac{1}{6d}\right] \subset \left(-\infty, -\frac{1}{2}\right) & \text{if } j \to i \text{ is inhibitory}. \end{cases} \tag{52}$$
By combining (25) and (52), we conclude that the statistical classifier $\hat S_{ij}(m_0, m_1)$ is asymptotically correct: the probability of a classification error vanishes as $m_0, m_1 \to \infty$. As illustrated in Figure 1, the decision space is partitioned by the thresholds $\zeta_\pm = \pm 1/2$ into three regions: excitatory, null, and inhibitory.

[Figure 1: number line for $\hat{\mathcal{G}}^{j\to i}(\Delta_A)$ showing the thresholds $\pm 1/2$; the inhibitory semi-ray, null region, and excitatory semi-ray; the theoretical bounds $-\frac{2}{3} - \frac{1}{6d}$, $\pm(\frac{1}{3} + \frac{1}{18d})$, and $\frac{2}{3}$; and the gaps separating bounds from thresholds.]
Figure 1: Classification map for topology identification. The decision space is partitioned by thresholds $\zeta_\pm = \pm 1/2$.
The blue gaps represen t the safety margins that acco mmo date the statistical error of the estimator ˆ G j → i (∆ A ) , ensuring it remains within the correct decision region relative to the theoretical bounds. 6 Sample Complexit y and Robustness Analysis While Section 5 established the asymptotic correctness of the Spik e-T riggered Estimator, w e no w address its sample complexity . This analysis determ ines the num b er of trigger even ts m 1 required to ensure that the statistical fluctuations of ˆ G j → i (∆) do not cross the decision thresholds defined in (51). 13 6.1 V ariance of the T riggered Statistic The estimator ˆ G j → i m 0 ,m 1 (∆) is a combination of Bernoulli trials. Crucially , the Spik e-T riggered Sc heme ensures that trials are decoupled through the reset prop ert y . The estimation error is dominated by the interaction term: ˆ Φ j → i m 1 (∆) = P m 1 k =1 1 D j → i k (∆) P m 1 k =1 1 C j → i k (∆) . (53) Let M = P m 1 k =1 1 C j → i k (∆) b e the num ber of "activ e" trials where neuron j actually fired after the trigger. Since D k ⊆ C k , the term ˆ Φ is an empirical mean of M Bernoulli v ariables with success probabilit y p ≈ P ( D | C ) . 6.2 Concen tration and Sample Complexity T o ensure that the classification ˆ S ij is correct with high probabilit y 1 − q , w e require the deviation | ˆ G − G | to b e smaller than the safet y gap iden tified in Figure 1. By applying Bennett’s inequalit y to the decoupled trials, w e find that the num ber of required active trials M (∆) scales with the in verse of the signal: M (∆) ≥ 2 ln(2 /q ) σ 2 (∆) h ( η ) , (54) where σ 2 (∆) ≈ p (1 − p ) is the v ariance. In the small- ∆ regime, p ≈ ( ϕ i (0) + δ )∆ , making the v ariance proportional to ∆ . 6.3 T otal T rigger Requiremen ts A k ey result of this work is the calculation of the total sampling effort m 1 . 
Since the event $C$ (neuron $j$ firing within $\Delta$ after the trigger) occurs with probability $P(C) \approx \alpha\Delta$, we must observe many triggers to collect enough active trials $M$. The total number of triggers $m_1$ scales as
$$m_1(\Delta) \approx \frac{M(\Delta)}{P(C)} = \Theta\!\left(\frac{1}{\alpha^2\Delta^2}\right). \tag{55}$$
This quadratic dependence on $(\alpha\Delta)^{-1}$ is the price paid for the high resolution of the continuous-time model.

6.4 Parameter Calibration and Stability

Using the window $\Delta_A = \frac{\delta}{9d\beta^2}$ from Corollary 3 and substituting the dimensionless ratios $s = \alpha/\beta$ and $\tau = \delta/\beta$, the total required number of trigger events $m_1^*$ is
$$m_1^* \approx \frac{81\, d^2 \ln(1/q)}{s^2\tau^2\, h(\eta)} \approx \frac{16200\, d^2 \ln(1/q)}{s^2\tau^4}. \tag{56}$$
This expression highlights that the Spike-Triggered Estimator is particularly sensitive to the normalized synaptic jump $\tau$. However, because the reset mechanism eliminates the history-induced variance (the "$\alpha$-noise" discussed in Section 5.3), the constant prefactor is significantly lower than that of the fixed-grid scheme of [1], making identification feasible even with moderate recording lengths.

7 Numerical Validation of the Spike-Triggered Estimator

In this section, we provide a numerical validation of the inference framework. The objective is to verify that the Spike-Triggered Estimator $\widehat{G}^{j\to i}_{m_0,m_1}$ converges correctly and that the decision thresholds $\zeta = \pm 1/2$ provide a robust separation of the connectivity types under the predicted sample complexity.

7.1 Simulation Setup

We adopt a global activation function $\varphi(u)$ for all neurons $i \in I$, defined as the following piecewise linear function:
$$\varphi(u) = \begin{cases} \alpha & \text{if } u \le u_{\text{low}},\\ \beta & \text{if } u \ge u_{\text{high}},\\ \alpha + (u - u_{\text{low}})\dfrac{\beta - \alpha}{u_{\text{high}} - u_{\text{low}}} & \text{if } u_{\text{low}} < u < u_{\text{high}}. \end{cases} \tag{57}$$
For these simulations, we set $\alpha = 1$, $\beta = 5$, $u_{\text{low}} = -2.0$, and $u_{\text{high}} = 2.0$. This configuration implies $\varphi(0) = 3$, the firing intensity at the reset potential.
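As a quick check of the simulation setup, the following minimal sketch evaluates the activation (57) with the parameters of Section 7.1 and confirms $\varphi(0) = 3$. It is an illustration only, not the author's simulation code; the function name `phi` is hypothetical, and NumPy's `clip` is used as a compact way to express the two saturated branches of (57).

```python
import numpy as np

def phi(u, alpha=1.0, beta=5.0, u_low=-2.0, u_high=2.0):
    """Piecewise-linear activation (57).

    The linear branch alpha + (u - u_low) * (beta - alpha) / (u_high - u_low)
    is clipped to [alpha, beta], which reproduces the constant branches
    phi = alpha for u <= u_low and phi = beta for u >= u_high.
    """
    u = np.asarray(u, dtype=float)
    slope = (beta - alpha) / (u_high - u_low)
    return np.clip(alpha + (u - u_low) * slope, alpha, beta)

# Intensity at the reset potential u = 0 with the Section 7.1 parameters.
print(float(phi(0.0)))  # -> 3.0
print(phi([-3.0, -2.0, 0.0, 1.0, 2.0, 3.0]))
```

With these parameters the slope of the linear branch is $(\beta - \alpha)/(u_{\text{high}} - u_{\text{low}}) = 1$, so $\varphi(w) - \varphi(0) = w$ for $|w| \le 2$.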
To validate the classification framework, we select synaptic weights $w_{j\to i} \in \{1, -1, 0\}$ for excitatory, inhibitory, and null connections, respectively. The system is composed of $N$ nodes: Node 0 is the target (post-synaptic) neuron, while the remaining nodes act as independent "driver" nodes (Poisson processes with intensity 3) that influence only Node 0.

7.2 Results and Discussion

The numerical results, summarized in Table 2, confirm that the Spike-Triggered Estimator acts as a reliable topological classifier. In our simulations, we maintained sparse connectivity with a local in-degree $d = 2$ or $d = 4$. The results support a key theoretical intuition: the systematic error (bias) of the estimator depends primarily on the local neighborhood $V_i$ and the window $\Delta$, rather than on the global size $N$. As long as $d$ remains small, the quadratic interference term $d\beta^2\Delta^2$ remains controlled, and the separation gap between the different connection types remains sharp.

For the $N = 10$ case, the target count was raised to $N_D = 300$ to ensure robust statistical convergence. The estimates for null links remain consistently near zero ($|\widehat{G}| < 0.05$ in Table 2), indicating that the estimator correctly ignores pairs without a direct synaptic link.

While we verified that for $d \le 5$ the estimator correctly classifies links using window sizes $\Delta \approx \frac{\delta}{\beta^2 d}$, adhering strictly to the ultra-fine resolution $\Delta_A$ suggested by the conservative bounds of Corollary 3 poses significant practical limitations. For instance, with $d = 9$, $\Delta_A \approx 0.00049$ would require computationally prohibitive simulation timescales.

For this reason, the single-scale Spike-Triggered approach serves as the foundation for the Macro-Micro methods, which overcome these limitations by investigating the asymptotic behavior of $\widehat{G}^{j\to i}(\Delta)$ as $\Delta \to 0^+$, allowing robust inference even with broader, more practical windows.
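The single-scale decision rule can be summarized in a few lines. The sketch below assumes that an estimate $\widehat{G}^{j\to i}(\Delta_A)$ is already available and only illustrates the arithmetic: it computes the window $\Delta_A = \delta/(9d\beta^2)$ of Corollary 3, the margins between the Corollary 3 bounds and the thresholds $\pm 1/2$ in (52), the order of magnitude of the trigger budget from the second form of (56), and applies the classifier (51) to the estimates reported in Table 2. All function names are hypothetical, and the values $q = 0.05$ and $s = \tau = 0.2$ (that is, $\alpha = \delta = 1$, $\beta = 5$) are illustrative choices.

```python
import math

def window_A(delta, d, beta):
    """Observation window of Corollary 3: Delta_A = delta / (9 d beta^2)."""
    return delta / (9 * d * beta**2)

def margins(d):
    """Gaps between the Corollary 3 bounds and the thresholds +-1/2, cf. (52)."""
    null_gap = 0.5 - (1 / 3 + 1 / (18 * d))
    excitatory_gap = 2 / 3 - 0.5
    inhibitory_gap = (2 / 3 + 1 / (6 * d)) - 0.5
    return null_gap, excitatory_gap, inhibitory_gap

def trigger_budget(d, s, tau, q):
    """Order-of-magnitude trigger count from the second form of (56)."""
    return 16200 * d**2 * math.log(1 / q) / (s**2 * tau**4)

def classify_single_scale(g_hat, zeta=0.5):
    """Statistical classifier (51): +1 excitatory, -1 inhibitory, 0 null."""
    if g_hat > zeta:
        return 1
    if g_hat < -zeta:
        return -1
    return 0

# (true class, reported estimate) pairs taken from Table 2.
TABLE_2 = [(1, 0.8493), (0, 0.0472), (1, 0.9285), (1, 1.1173), (-1, -0.8316), (0, -0.0276)]

print(f"Delta_A(d=9) = {window_A(1.0, 9, 5.0):.5f}")
print(f"margins(d=9) = {[round(m, 3) for m in margins(9)]}")
print(f"m1* (d=9, q=0.05) ~ {trigger_budget(9, 0.2, 0.2, 0.05):.1e}")
print([classify_single_scale(g) == truth for truth, g in TABLE_2])
```

Values exactly at $\pm 1/2$ are assigned to the null class, matching the non-strict inequality in (51). The budget printed for $d = 9$ is of order $10^{10}$ triggers, which illustrates why strict adherence to $\Delta_A$ is impractical.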
Table 2: Classification results for the Spike-Triggered Estimator across different network sizes and window widths.

| $N$ | $N_D$ | Link type | $\Delta$ | $\widehat{G}$ | Threshold $\zeta$ | Outcome |
|---|---|---|---|---|---|---|
| 4 | 100 | Excitatory (+1) | 0.055 | 0.8493 | ±0.5 | ✓ Correct |
| 4 | 100 | Null (0.0) | 0.055 | 0.0472 | ±0.5 | ✓ Correct |
| 4 | 100 | Excitatory (+1) | 0.009 | 0.9285 | ±0.5 | ✓ Correct |
| 10 | 300 | Excitatory (+1) | 0.009 | 1.1173 | ±0.5 | ✓ Correct |
| 10 | 300 | Inhibitory (−1) | 0.009 | −0.8316 | ±0.5 | ✓ Correct |
| 10 | 300 | Null (0.0) | 0.009 | −0.0276 | ±0.5 | ✓ Correct |

8 The Macro-Micro Strategy

The proposed inference framework aims to reconstruct microscopic synaptic connections from observations at macroscopic time scales. This is achieved by evaluating the Spike-Triggered Estimator $\widehat{G}^{j\to i}$ over a multi-scale temporal grid $\{\Delta_k\}_{k=1}^{5}$ and employing an adaptive extrapolation-averaging logic to recover the synaptic signal.

8.1 Multi-Scale Calibration

The temporal scales are chosen in a geometric progression, $\Delta_k = (\sqrt{2})^{k-1}\Delta_1$. The initial window $\Delta_1$ is determined analytically to ensure that the algorithm operates in a statistically significant yet linear regime:
$$\Delta_1 = \underbrace{\frac{1}{\beta d}}_{\text{noise scale}} \cdot \underbrace{\frac{\delta}{\beta}}_{\text{sensitivity}} \cdot \underbrace{\frac{\beta - \alpha}{2\delta}}_{\text{survival distance}} = \frac{\beta - \alpha}{2d\beta^2}. \tag{58}$$
By exploiting knowledge of the intensity function $\varphi(u)$, this calibration ensures that $\Delta_1$ is large enough to provide signal but small enough to avoid the saturation boundaries of the membrane potential.

8.2 The Extrapolation Models

To decouple the microscopic signal from macroscopic interference, we consider two complementary approaches for analyzing the evolution of $G(\Delta)$.

1. Local Quadratic Approximation

We assume that for small $\Delta$ the gain function follows a second-order expansion:
$$G^{j\to i}(\Delta) \approx a\Delta^2 + b\Delta + c, \tag{59}$$
where $c$ represents the direct synaptic effect, $b\Delta$ accounts for first-order interference from other presynaptic neurons, and $a\Delta^2$ aggregates global network noise and non-linearities.

2. Pyramid Extrapolation ($\mathcal{P}$)

To avoid the fragility and overfitting risks of direct parabolic fitting, we employ a recursive geometric construction designed for robust intercept recovery. This method, termed Pyramid Extrapolation, operates in two phases:

• Contraction via barycentric iteration: starting from the multi-scale dataset $\{(\Delta_k, \widehat{G}(\Delta_k))\}_{k=1}^{5}$, the algorithm computes successive midpoint iterations. This process condenses the five initial samples into two robust "meta-points", denoted $A$ and $B$.
• Linear projection and intercept recovery: the final estimate $\mathcal{P}$ is defined as the $y$-intercept of the line passing through the meta-points $A$ and $B$.

The hierarchical structure of the averages captures the implicit curvature of the underlying gain function. This provides an intrinsic regularization of the signal, acting as a discrete low-pass filter. It allows the model to track the fundamental trend required for synaptic classification while remaining resilient to the high-frequency stochastic fluctuations (outliers) that affect measurements at extremely small scales.

8.3 Hybrid Decision Logic: Pyramid vs. Mean

The core of our strategy lies in the adaptive selection between the Pyramid Extrapolation ($\mathcal{P}$) and the Sample Mean ($\mathcal{M}$) of the gains. Let $S = \{+1, 0, -1\}$ be the set of theoretical synaptic states. The final index $I$ is chosen via a distance-minimization rule:
$$I = \arg\min_{X \in \{\mathcal{P}, \mathcal{M}\}} \operatorname{dist}(X, S). \tag{60}$$
This hybrid approach balances stability and sensitivity according to the network's regime:

• The Mean ($\mathcal{M}$) is superior in balanced noise regimes, where stochastic fluctuations tend to cancel out. It provides a grounded, stable estimate, especially for null ($w = 0$) links.
• The Pyramid ($\mathcal{P}$) is essential in unbalanced regimes (e.g., predominantly excitatory drive).
  The background activity biases the gains; the pyramid effectively "strips away" this background slope, allowing the true micro-scale intercept to emerge.

8.4 Classification

To prioritize the suppression of false positives, we adopt a conservative thresholding logic with an explicit safety buffer:
$$\widehat{\Sigma}_{ij} = \begin{cases} 1 & \text{if } I > 5/8,\\ -1 & \text{if } I < -5/8,\\ 0 & \text{if } |I| \le 5/8. \end{cases} \tag{61}$$
While a symmetric threshold at $\pm 1/2$ would be theoretically sufficient, expanding the null region to $\pm 5/8$ ensures that residual stochastic fluctuations in larger networks do not trigger misclassifications.

9 Numerical Validation for Macro-Micro Extrapolation

To validate the robustness of our framework under extreme conditions, we consider a target neuron ($i = 0$) influenced by three presynaptic units. Neuron 1 maintains a constant intensity $\frac{\alpha + \beta}{2}$, while Neurons 2 and 3 aggregate the activity of excitatory ($L^+$) and inhibitory ($L^-$) populations, respectively. This simplified architecture, illustrated in Fig. 2, provides a controlled environment to stress the framework under conditions of extremely low signal-to-noise ratio (SNR). By setting $L^+, L^- \gg \beta$, we simulate a dominant stochastic background drive that would typically obscure synaptic signals in standard observation windows.

[Figure 2: diagram of the minimal network. Target neuron 0 receives input from neuron 1 (intensity $\frac{\alpha+\beta}{2}$, weight $w$), neuron 2 (rate $L^+$, weight $+1$), and neuron 3 (rate $L^-$, weight $-1$).]

Figure 2: Minimal network architecture for validation.

Computational Advantages of the Minimal Network Architecture

The selection of this topology offers significant advantages for event-driven simulations using Lewis' thinning algorithm:

1. Reduced state-update overhead: since the intensity functions follow predictable trajectories between spikes, the calculation of $\lambda_{\max}$ becomes computationally trivial, minimizing the overhead of variable tracking.
2. Efficiency in event attribution: in this 4-neuron configuration, the search for the triggered unit among candidate spikes requires evaluating only four probabilities, so the computational cost per event remains extremely low.
3. Immediate realization of the reset state: by assuming an initial state $u = 0$ for the target neuron, we bypass the initial transient phases. Since the presynaptic inputs are independent Poisson processes, the system is natively in statistical equilibrium, providing immediate access to post-reset dynamics.

Experimental Framework and Results

We conducted a systematic campaign of 810 independent simulations across 27 operational scenarios, defined by the interplay of $\alpha$, $\beta$, the load configurations $L^\pm$, and the weight $w$ of Neuron 1. The empirical results highlight the practical effectiveness of the Pyramid Extrapolation: in all 810 trials, the model achieved correct classification. Even in the most extreme regime ($\beta = 7$, aggregate noise of 400 Hz), the iterative averaging isolates the synaptic sign. As illustrated in Figure 3, the stability of the recovered intercepts (0.964 for excitatory, −1.045 for inhibitory) suggests that this heuristic extrapolation effectively absorbs the bias induced by finite observation windows. These findings validate the method as a robust and efficient tool for reconstructing connectivity using only the fundamental parameters of the system: the intensity bounds $\alpha, \beta$, the synaptic impulse $\delta$, and the local sparsity $d$.

[Figure 3: three panels (Excitatory, $W = 1.0$; Null, $W = 0.0$; Inhibitory, $W = -1.0$) plotting $G_{\text{net}}(\Delta)$ against $\Delta$ (ms), with extrapolated intercepts 0.964, −0.003, and −1.045, respectively.]

Figure 3: Synaptic extrapolation results. (Top left) Excitatory, (top right) Null, (bottom) Inhibitory. Blue marks: raw gains; open red circles: pyramid meta-points; dashed line: extrapolation to $\Delta \to 0$. Red dotted lines indicate the classification thresholds.

Table 3: Simulation parameters and performance results.

| Parameter | Values / Conditions |
|---|---|
| Total simulations | 810 |
| Scenarios | 27 (3 $\beta$ levels × 3 noise types × 3 $w$ weights) |
| Noise types | Excitatory-only, Balanced, Inhibitory-only |
| Weights ($w$) | $\{+1, 0, -1\}$ |
| Success rate | 100% |

10 Numerical Validation in Interactive Networks

To evaluate the effectiveness of the proposed Macro-Micro strategy, we move from the minimal architecture to a fully interactive network of $N = 20$ neurons. In this setting, the background noise is no longer an external Poisson process but an emergent property of the network dynamics, governed by the endogenous activity of the intensity functions $\varphi(u)$ defined in (57).

10.1 Simulation Setup and Statistical Protocol

The network was configured with $\alpha = 1.0$, $\beta = 5.0$, and $N = 20$ ($d = 19$). Synaptic weights were assigned stochastically with probabilities $P(w = +1) = 0.25$, $P(w = 0) = 0.5$, and $P(w = -1) = 0.25$, giving $\delta = 1$ in this first simulation. In a second simulation, we use heterogeneous weights, which give $\delta = 0.5$.

Fixed-Event Sampling Strategy: To ensure uniform statistical power across all estimated links and network scenarios, the simulation is not bound to a fixed time window. Instead, data accumulation for each pair $(j, i)$ proceeds until a target count of events is reached for both the conditional and the baseline observations. Specifically, we monitor the numerators of our differential estimator:
$$\sum_{k=1}^{m_1} \mathbf{1}\{D^{j\to i}_k(\Delta)\} = N_D \quad\text{and}\quad \sum_{k=1}^{m_0} \mathbf{1}\{B^{j\to i}_k(\Delta)\} = N_B, \tag{62}$$
where we set $N_D = 2000$ and $N_B = 40000$.
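The fixed-event protocol (62) is a form of inverse (negative binomial) sampling: the number of successes is fixed in advance and the number of trials is random. The toy sketch below illustrates why this yields uniform statistical power. It assumes, purely for illustration, that trigger outcomes are independent Bernoulli($p$) trials (in the spirit of the reset-decoupling argument of Section 6.1) and ignores the network simulation entirely; the helper name `fixed_event_estimate` is hypothetical. The relative error of the estimate $N/n$ is roughly $\sqrt{(1-p)/N}$ whatever the value of $p$, so rare and frequent events are estimated with comparable relative precision.

```python
import numpy as np

def fixed_event_estimate(p, target, rng):
    """Sample Bernoulli(p) trials until `target` successes occur.

    The number of trials needed for each success is geometric on {1, 2, ...},
    so the total number of trials is a sum of `target` geometric draws.
    Returns the estimate target / trials and the number of trials used.
    """
    trials = int(rng.geometric(p, size=target).sum())
    return target / trials, trials

rng = np.random.default_rng(0)
for p in (0.01, 0.3):
    p_hat, n = fixed_event_estimate(p, target=2000, rng=rng)
    rel_err = abs(p_hat - p) / p
    print(f"p={p}: trials={n}, p_hat={p_hat:.4f}, relative error={rel_err:.3f}")
```

With a target of 2000 events the typical relative error is about 2% for both values of $p$, while the number of trials differs by more than an order of magnitude. For a finite target $N$, $(N-1)/(n-1)$ is the unbiased version of this estimator; the difference is negligible at these counts.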
While $N_D$ is chosen to provide a robust yet computationally efficient sample for the conditional firing probability, we use a much larger $N_B$ for the baseline. This asymmetry is beneficial: since baseline events are intrinsically more abundant and easier to sample, the high value of $N_B$ suppresses the statistical noise of the subtraction term, so that the final functional index $I$ is dominated by the specific interaction signal rather than by background fluctuations. Because the counts in the numerators are held constant, the denominators, and with them the observation time, scale adaptively, so that the confidence intervals for the Pyramid extrapolation remain comparable across the entire spectrum of synaptic weights, regardless of the local network regime.

For these experiments, the initial sampling window $\Delta_1$ was set following the analytical derivation in (58):
$$\Delta_1 = 2 \cdot \frac{\beta - \alpha}{2d\beta^2} \approx 0.0084. \tag{63}$$
A scaling factor of 2 was applied to slightly broaden the observation window, accelerating the accumulation of the required $N_D$ coincidences without compromising microscopic locality.

10.2 Performance and Hybrid Logic Selection

We conducted 30 independent trials (10 per synaptic class). The objective was to recover the class of a specific synapse using the Hybrid Decision Logic (Pyramid vs. Mean). The results, summarized in Table 4, show 100% accuracy.

Table 4: Classification performance and index recovery ($N = 20$).

| Ground truth ($w$) | Mean recovered index | Std. dev. | Accuracy |
|---|---|---|---|
| +1.0 (Excitatory) | 0.9893 | 0.048 | 100% |
| 0.0 (Null) | 0.0049 | 0.045 | 100% |
| −1.0 (Inhibitory) | −0.9598 | 0.031 | 100% |

10.3 Discussion of Trial Dynamics

A key finding is the adaptive behavior of the classifier according to the local network regime. For excitatory connections ($w = +1$), the system most often selected the Sample Mean (60% of trials).
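The Pyramid-versus-Mean selection discussed here can be made concrete with a short sketch of Sections 8.1 to 8.4. The text does not spell out the midpoint scheme in full, so the code assumes one natural reading: the five points $(\Delta_k, \widehat{G}(\Delta_k))$ are repeatedly replaced by the midpoints of consecutive pairs ($5 \to 4 \to 3 \to 2$), which makes $A$ and $B$ binomially weighted averages with weights $(1, 3, 3, 1)/8$. All function names are hypothetical, the gains in the example are synthetic, and this is not the author's implementation.

```python
import math

STATES = (1.0, 0.0, -1.0)  # theoretical synaptic states S in (60)

def delta_grid(delta_1, n=5):
    """Geometric grid Delta_k = sqrt(2)**(k - 1) * Delta_1 for k = 1..n (Section 8.1)."""
    return [math.sqrt(2) ** k * delta_1 for k in range(n)]

def pyramid_intercept(deltas, gains):
    """Assumed reading of the Pyramid Extrapolation (Section 8.2).

    Consecutive points are replaced by their midpoints until two meta-points
    A and B remain; the estimate is the value at Delta = 0 of the line AB.
    """
    pts = list(zip(deltas, gains))
    while len(pts) > 2:
        pts = [((x0 + x1) / 2, (y0 + y1) / 2) for (x0, y0), (x1, y1) in zip(pts, pts[1:])]
    (xa, ya), (xb, yb) = pts
    slope = (yb - ya) / (xb - xa)
    return ya - slope * xa

def dist_to_states(x):
    """dist(X, S) in (60): distance to the nearest theoretical state."""
    return min(abs(x - s) for s in STATES)

def hybrid_index(deltas, gains):
    """Rule (60): keep whichever of Pyramid and Mean lies closer to S."""
    pyramid = pyramid_intercept(deltas, gains)
    mean = sum(gains) / len(gains)
    return min((pyramid, mean), key=dist_to_states)

def classify(index, threshold=5 / 8):
    """Conservative classifier (61) with the widened null region."""
    if index > threshold:
        return 1
    if index < -threshold:
        return -1
    return 0

# Synthetic example: a gain that decays linearly from the true value 1.0.
deltas = delta_grid(0.0042)
gains = [1.0 - 20.0 * d for d in deltas]
index = hybrid_index(deltas, gains)
print(f"mean={sum(gains) / len(gains):.3f}, pyramid={pyramid_intercept(deltas, gains):.3f}, "
      f"index={index:.3f}, class={classify(index)}")
```

Because midpoints of points on a line stay on that line, this construction returns the exact intercept whenever the gains are linear in $\Delta$, as in the synthetic example; the Mean is pulled down by the slope and is rejected by (60). With noisy gains scattered around zero, the same rule can instead select the Mean.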
The Mean performs well in this case because the interaction maintains a stable positive pressure: as the window $\Delta$ expands, the signal remains coherent, allowing the mean to reduce the variance effectively. Conversely, the Pyramid Extrapolation becomes essential in "disruptive" or slope-heavy regimes. This is evident in inhibitory feedback, where the Pyramid "peels off" the dominant excitatory background to recover the weaker inhibitory signal. For null connections, the selection was split: the Mean was preferred in balanced regimes, while the Pyramid was invoked whenever local fluctuations created a transient slope.

10.4 Stress Testing: Layered Architecture and Feedback Loops ($N = 60$)

To validate scalability, we simulated $N = 60$ neurons organized into the layered architecture shown in Fig. 4 (top). This configuration imposes a directional flow and long-range inhibitory feedback, creating a high-interference regime.

Numerical Bias vs. Functional Measure: A crucial observation emerges in the heterogeneous-weight scenario: the arithmetic mean often yields an index closer to $\pm 1$ than the Pyramid result, but this is a numerical artifact of underestimation bias. As $\Delta$ increases, network-induced noise dilutes the signal. In contrast, the Pyramid method recovers the instantaneous jump in firing probability, $G^{j\to i}(0) \approx \frac{\varphi(w_{i,j}) - \varphi(0)}{\delta}$. Since $\varphi$ in (57) has unit slope on $(u_{\text{low}}, u_{\text{high}})$, $\varphi(w_{i,j}) - \varphi(0) = w_{i,j}$ for the weights used here, and with $\delta = 0.5$ this results in a theoretical gain factor of 2. For instance, link $(24, 11)$ with $w = 0.96$ yields an index of 1.91 (theoretical value 1.92), while link $(29, 45)$ with $w = -0.74$ yields $-1.37$ (theoretical value $-1.48$), consistent with this functional interpretation of the index.

Heterogeneous Weights and Functional Gain: We introduced weights sampled from uniform distributions ($w_{\text{exc}} \in [0.5, 1.0]$, $w_{\text{inh}} \in [-1.0, -0.5]$). The results are summarized in Fig. 4 (bottom).
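The gain factor of 2 stated in this section follows directly from (57). The sketch below evaluates $G^{j\to i}(0) \approx (\varphi(w) - \varphi(0))/\delta$ with $\delta = 0.5$ for the two links reported in this section. It assumes that the $N = 60$ network uses the same activation parameters as Section 7.1 ($\alpha = 1$, $\beta = 5$, $u_{\text{low}} = -2$, $u_{\text{high}} = 2$), which is consistent with the factor 2 given in the text; the function names are hypothetical.

```python
def phi(u, alpha=1.0, beta=5.0, u_low=-2.0, u_high=2.0):
    """Piecewise-linear activation (57), written with min/max clipping."""
    linear = alpha + (u - u_low) * (beta - alpha) / (u_high - u_low)
    return min(beta, max(alpha, linear))

def theoretical_gain(w, delta=0.5):
    """Instantaneous jump G(0) ~ (phi(w) - phi(0)) / delta from Section 10.4."""
    return (phi(w) - phi(0.0)) / delta

# (link, weight w, Pyramid index reported in Section 10.4)
REPORTED = [((24, 11), 0.96, 1.91), ((29, 45), -0.74, -1.37)]
for link, w, index in REPORTED:
    print(f"link {link}: w={w:+.2f}, theory={theoretical_gain(w):+.2f}, reported={index:+.2f}")
```

For both links the theoretical value equals $2w$, as in (64), because the linear branch of $\varphi$ has unit slope for $|w| \le 2$.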
As shown in the scatterplot, the Pyramid method (red circles) consistently follows the theoretical gain function:
$$G^{j\to i}(0) \approx \frac{\varphi(w_{i,j}) - \varphi(0)}{\delta} = 2w_{i,j}. \tag{64}$$
This high-fidelity recovery across the entire spectrum, including null cases ($w = 0$), confirms the robustness of the estimator in dense topologies ($N = 60$).

10.5 Stress-Test Analysis: Pyramid Performance under 8× Macroscopic Windowing

To test the asymptotic resilience of the Pyramid estimator, we subjected the network to an extreme windowing regime ($8\times$ the theoretical $\Delta_1$). As shown in Figure 5, the raw gain $G^{j\to i}(\Delta)$ for excitatory links suffers a 43% decay due to macroscopic interference. Nevertheless, the Pyramid method de-biases the estimate, projecting a microscopic value of 1.53 from a degraded baseline of 0.70. Most notably, for inhibitory links the estimator achieves near-perfect recovery ($-1.72$ vs. a target of $-1.80$), confirming that the geometric logic of the pyramid filters out the non-linear curvature induced by large observation scales.

Future developments will investigate an adaptive selection of the observation window $\Delta$ based on the estimated noise polarization. Our empirical results suggest that balanced network noise allows significantly larger integration scales, offering a path toward even faster inference algorithms.

[Figure 4, top panel: "Proximity Connectivity Matrix (Layer 0 Dictators & 3->1 Feedback)", post-synaptic index $i$ against pre-synaptic index $j$, color scale: synaptic weight $w$ from −1 to 1. Bottom panel: "Stress Test: Interaction Index $I$ vs True Synaptic Weight $w$", functional interaction index $I$ against ground-truth weight $w$; legend: Pyramid extrapolated $I = G^{j\to i}(0)$, Sample Mean (macro-scale), Theoretical expectation ($2w$).]

Figure 4: Network architecture and metrological performance ($N = 60$). Top: proximity matrix showing layered connectivity with a dictatorial pacemaker ($L_0$), feed-forward blocks, and inhibitory feedback ($L_3 \to L_1$). Bottom: scatterplot comparing the recovered functional index $I$ against the ground truth $w$. The Pyramid method (red circles) follows the theoretical gain $I = 2w_{i,j}$ with high linearity, neutralizing the bias of the sample mean (blue crosses). Data points were collected with the $N_D = 2000$ fixed-event protocol.

[Figure 5: "Extreme Curvature Analysis (8× Scale): Pyramid Projection to Microscopic Limit ($\Delta \to 0$)", measured gain $G(\Delta)$ against observation window $\Delta$ (ms); legend: Excitatory ($w = 0.95$), Inhibitory ($w = -0.9$), Null ($w = 0.0$), each with its extrapolated counterpart.]

Figure 5: Asymptotic resilience stress test under extreme sampling regimes ($8\times\Delta_1$). The plot illustrates the de-biasing performance of the Pyramid estimator when the observation window is deliberately expanded up to eight times the theoretical limit. Colored circles: measured indices $G(\Delta)$, exhibiting significant decay (up to 43% for excitatory links) due to network-induced macroscopic interference. Dashed lines: geometric extrapolation derived from the Pyramid iterative logic. Solid points at $\Delta = 0$: recovered microscopic estimates. Despite the severe damping of the raw signal, the method reconstructs the instantaneous gain with high fidelity (e.g., recovering an index of 1.53 from a degraded 0.70 baseline for $w = 0.95$), confirming that the geometric projection compensates for the non-linear curvature induced by macroscopic observation scales.

11 Conclusions

In this work, we have introduced a multi-layered inference framework for the robust reconstruction of synaptic connectivity from spike train data. The perfect classification accuracy (100%) observed across all simulated scenarios, from minimal motifs to complex $N = 60$ layered networks, results from three synergistic innovations:

1. The Spike-Triggered Estimator: by abandoning fixed-grid sampling in favor of an event-driven approach, we leveraged the native reset property of the Galves-Löcherbach model. This eliminated the history-dependent noise in the intensity function, allowing a cleaner analytical formulation in which the error bounds no longer depend on the lower-bound intensity $\alpha$.
2. The Macro-Micro Strategy: this framework bridges the gap between computationally feasible observation windows and the microscopic limit. By investigating the asymptotic behavior of the gain function, we decoupled the direct synaptic signal from the cumulative "sea of noise" generated by the rest of the network.
3. Hybrid Pyramid-Mean Logic: the Pyramid Extrapolation, acting as an intrinsic discrete low-pass filter, provided the regularization needed to handle unbalanced regimes and feedback loops. The adaptive selection between the stability of the Mean and the sensitivity of the Pyramid ensured that even weak inhibitory signals could be recovered from dominant excitatory backgrounds.

Our results indicate that synaptic identification is fundamentally a local problem: the size of the global network ($N$) does not degrade the precision of the estimator, provided the local neighborhood is sparsely connected.
The resilience of the method to heterogeneous weights and structured topological motifs confirms its potential as a general-purpose tool for neural circuit mapping.

Future developments will focus on extending this adaptive logic to even more realistic neuroscientific scenarios, incorporating axonal conduction delays and membrane potential leakage (leaky-GL models). These additions will further test the flexibility of our extrapolation framework in the presence of temporal decays and non-instantaneous interactions, moving closer to the challenges posed by in vivo multi-electrode recordings.

Acknowledgements

The author acknowledges the assistance of Gemini (Google) in the optimization of the simulation framework and for technical support in the formal refinement of the manuscript.

Declarations

Conflict of Interest: The author declares that he has no conflict of interest.

Data Availability: The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

[1] Emilio De Santis, Antonio Galves, Giovanna Nappo, and Mauro Piccioni. Estimating the interaction graph of stochastic neuronal dynamics by observing only pairs of neurons. Stochastic Process. Appl., 149:224–247, 2022.
[2] Emilio De Santis, Giovanna Nappo, Mauro Piccioni, and Christophe Pouzat. Estimating the interaction graph of a stochastic neuronal dynamics with leakage and delay by observing only pairs of neurons. In preparation, 2026.
[3] Aline Duarte, Ricardo Fraiman, Antonio Galves, Guilherme Ost, and Claudia Vargas. Non-parametric estimation of the interaction graph of a system of spiking neurons. Electron. J. Stat., 10(1):1538–1580, 2016.
[4] Aline Duarte, Antonio Galves, Eva Löcherbach, and Guilherme Ost. Estimating the interaction graph of stochastic neural dynamics. Bernoulli, 25(1):771–792, 2019.
[5] Aline Duarte, Guilherme Ost, and Gilda Rodriguez. Estimation of the interaction graph of a system of spiking neurons. J. Stat. Phys., 177(6):1050–1073, 2019.
[6] Antonio Galves and Kádmo Laxa. Fast consensus and metastability in a highly polarized social network. Stochastic Process. Appl., 177:104445, 2024.
[7] Antonio Galves and Eva Löcherbach. Infinite systems of interacting chains with memory of variable length—a stochastic model for biological neural nets. J. Stat. Phys., 151(5):896–939, 2013.
[8] Felipe Gerhard, Gordon Pipa, Bruss Lima, Sergio Neuenschwander, and Wolf Singer. Extraction of network topology from multi-electrode recordings: overcoming the effect of non-stationarity. Front. Comput. Neurosci., 5:Art. 4, 18, 2011.
[9] Niels Richard Hansen, Patricia Reynaud-Bouret, and Vincent Rivoirard. Lasso and probabilistic classifier for multivariate Hawkes processes. Ann. Appl. Probab., 25(4):2261–2298, 2015.
[10] Pierre Hodara and Eva Löcherbach. Hawkes processes with variable length memory and an infinite number of components. Adv. in Appl. Probab., 49(1):84–107, 2017.
[11] Eva Löcherbach and Kádmo Laxa. Propagation of chaos and phase transition in a stochastic model for a social network. J. Stat. Phys., 191(12):Paper No. 155, 34, 2024.
[12] L. F. Richardson. The approximate arithmetical solution by finite differences of physical problems involving differential equations, with an application to the stresses in a masonry dam. Philos. Trans. Roy. Soc. London Ser. A, 210(459-470):307–357, 1911.
[13] Stefano Spaziani, Gabrielle Girardeau, Ingrid Bethus, and Patricia Reynaud-Bouret. Heterogeneous Multiscale Multivariate Autoregressive Model: existence, sparse estimation and application to functional connectivity in neuroscience. J. Math. Biol., 90(62):31, 2025.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

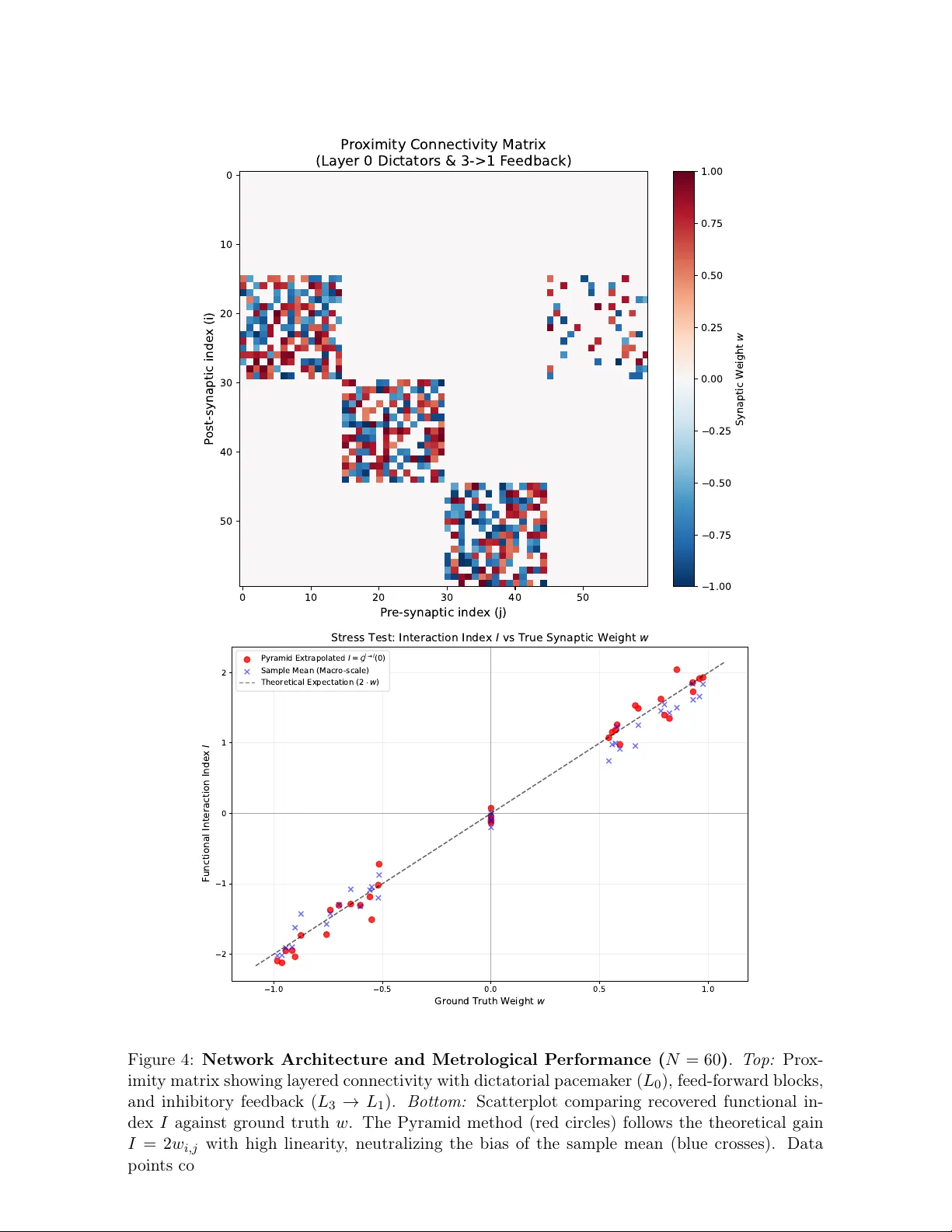

Leave a Comment