Neural-Symbolic Logic Query Answering in Non-Euclidean Space

Answering complex first-order logic (FOL) queries on knowledge graphs is essential for reasoning. Symbolic methods offer interpretability but struggle with incomplete graphs, while neural approaches generalize better but lack transparency. Neural-sym…

Authors: Lihui Liu

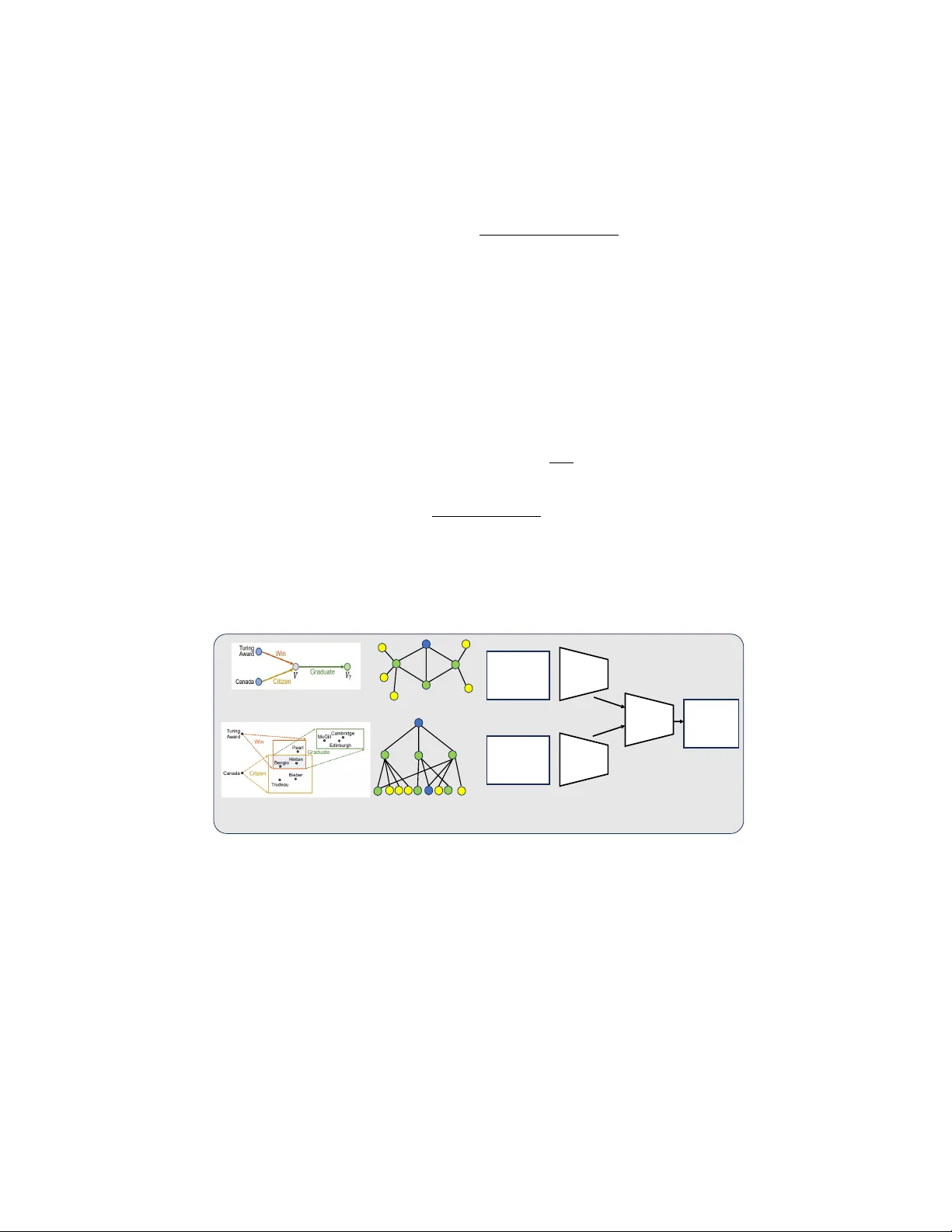

Lihui Liu, Wayne State University, Detroit, Michigan, USA, {hw6926}@wayne.edu

Abstract. Answering complex first-order logic (FOL) queries on knowledge graphs is essential for reasoning. Symbolic methods offer interpretability but struggle with incomplete graphs, while neural approaches generalize better but lack transparency. Neural-symbolic models aim to integrate both strengths but often fail to capture the hierarchical structure of logical queries, limiting their effectiveness. We propose HyQNet, a neural-symbolic model for logic query reasoning that fully leverages hyperbolic space. HyQNet decomposes FOL queries into relation projections and logical operations over fuzzy sets, enhancing interpretability. To address missing links, it employs a hyperbolic GNN-based approach for knowledge graph completion in hyperbolic space, effectively embedding the recursive query tree while preserving structural dependencies. By utilizing hyperbolic representations, HyQNet captures the hierarchical nature of logical projection reasoning more effectively than Euclidean-based approaches. Experiments on three benchmark datasets demonstrate that HyQNet achieves strong performance, highlighting the advantages of reasoning in hyperbolic space.

1 Introduction

A knowledge graph organizes entities and their relationships, making it a valuable tool for applications like question answering [41], recommendation systems [6], and computer vision [8]. Reasoning over knowledge graphs helps infer new knowledge and answer queries based on existing data, with answering complex First-Order Logic (FOL) queries being a key challenge. FOL queries involve logical operations such as existential quantifiers ($\exists$), conjunction ($\land$), disjunction ($\lor$), and negation ($\lnot$). For example, the question “Which universities do Turing Award winners in deep learning work at?”
can be formulated as an FOL query, as shown in Figure 1.

Most existing methods rely on neural networks to model logical operations and learn query embeddings to find answers. Approaches like [7,42,43,5] represent queries using boxes, beta distributions, or points, but their reasoning process is often opaque since neural networks function as black boxes. On the other hand, symbolic methods provide clear interpretability by explicitly deriving answers from stored facts or using subgraph matching. However, they struggle with incomplete knowledge graphs, which limits their applicability in real-world scenarios.

Recent research has explored combining neural reasoning with symbolic techniques to achieve both strong generalization and interpretability. For example, GNN-QE [50] employs a graph neural network (GNN) for projection operations and integrates symbolic graph matching to enhance robustness and interpretability. However, GNN-QE encodes local subtrees in Euclidean space, which is suboptimal for capturing hierarchical relationships. Hyperbolic embeddings provide a more natural representation for such structures.

In this paper, we propose a hyperbolic GNN for answering complex First-Order Logic (FOL) queries on knowledge graphs. Unlike Euclidean-based approaches, our model leverages hyperbolic embeddings with learned curvature at each layer, enabling it to more effectively capture hierarchical query structures. Building upon prior work [50], we decompose FOL queries into expressions over fuzzy sets, where relation projections are modeled using the hyperbolic GNN with learned curvature. This design improves reasoning accuracy. By operating in hyperbolic space, our model naturally preserves the tree-like structure of logical projections, facilitating more effective representation learning.
Additionally, the learned curvature allows the GNN to adapt to the geometry of the knowledge graph, improving entity projections while maintaining interpretability through fuzzy set-based logic operations.

We evaluate our method on three standard datasets for FOL queries. Our experiments demonstrate that our approach outperforms existing baselines on most query types and achieves the best average MRR on all three datasets.

In summary, we make the following contributions:

– We propose a novel method that leverages hyperbolic embeddings with learned curvature to better capture hierarchical structures in logical projections. By learning curvature at each layer, our model gains increased flexibility, leading to improved reasoning performance.
– We demonstrate that our method achieves state-of-the-art performance on three standard FOL query datasets.

2 Problem Definition

In this section, we introduce the background knowledge of FOL queries on knowledge graphs and fuzzy sets.

2.1 First-Order Logic Queries on Knowledge Graphs

Given a set of entities $V$ and a set of relations $R$, a knowledge graph $G = (V, E, R)$ is a collection of triplets $E = \{(h_i, r_i, t_i)\} \subseteq V \times R \times V$, where each triplet is a fact from head entity $h_i$ to tail entity $t_i$ with the relation type $r_i$. A FOL query on a knowledge graph is a formula composed of constants (denoted with English terms), variables (denoted with $a$, $b$, $c$), relation symbols (denoted with $R(a, b)$), and logic symbols ($\exists$, $\land$, $\lor$, $\lnot$). In the context of knowledge graphs, each constant or variable is an entity in $V$. A variable is bound if it is quantified in the expression, and free otherwise. Each relation symbol $R(a, b)$ is a binary function that indicates whether relation $R$ holds between a pair of constants or variables. For logic symbols, we consider queries that contain conjunction ($\land$), disjunction ($\lor$), negation ($\lnot$), and existential quantification ($\exists$)¹.
¹ For simplicity, we focus on this subset of FOL operations in this work.

Figure 1 illustrates the FOL query for the natural language question “Where did Canadian citizens with Turing Award graduate?”. Given a FOL query, the goal is to find answers to the free variables, such that the formula is true.

Fig. 1: An example of logical query.

2.2 Fuzzy Logic Operations

Fuzzy sets [10] are a continuous relaxation of sets whose elements have degrees of membership. A fuzzy set $A = (U, x)$ contains a universal set $U$ and a membership function $x : U \to [0, 1]$. For each $u \in U$, the value of $x(u)$ defines the degree of membership (i.e., probability) for $u$ in $A$. Similar to Boolean logic, fuzzy logic defines three logic operations, AND, OR, and NOT, over the real-valued degree of membership. There are several alternative definitions for these operations, such as product fuzzy logic, Gödel fuzzy logic, and Łukasiewicz fuzzy logic.

In this paper, fuzzy sets are used to represent the assignments of variables in FOL queries, where the universe $U$ is always the set of entities $V$ in the knowledge graph. Since the universe is a finite set, we represent the membership function $x$ as a vector $\mathbf{x}$. We use $x_u$ to denote the degree of membership for element $u$. For simplicity, we abbreviate a fuzzy set $A = (U, x)$ as $\mathbf{x}$ throughout the paper.

2.3 Hyperbolic Space and Its Embedding

Hyperbolic space is a geometric setting where the parallel postulate of Euclidean geometry does not hold. Instead of parallel lines remaining equidistant, they diverge exponentially. This space, often denoted as $\mathbb{H}^n$, exhibits a constant negative curvature, leading to unique geometric properties such as rapid expansion of volume with distance and altered notions of angles and distances.
These properties make hyperbolic space particularly useful for representing hierarchical structures and relational data, where the number of entities grows exponentially as one moves outward.

Mathematical Representation via the Poincaré Model. One of the most practical ways to work with hyperbolic space is through the Poincaré ball model, which maps it onto the interior of the unit sphere in $\mathbb{R}^n$. Given a point $x$ within this unit ball, distances are not measured using standard Euclidean norms but rather through a modified metric that accounts for hyperbolic curvature. Specifically, the hyperbolic distance $d(x, y)$ between two points $x, y$ is computed as:

$$d(x, y) = \operatorname{arccosh}\left(1 + \frac{2\,\|x - y\|^2}{(1 - \|x\|^2)(1 - \|y\|^2)}\right).$$

This metric ensures that distances grow exponentially as points move outward, mirroring the behavior of many complex networks and hierarchical systems.

Tangent Space and Exponential Map. Although hyperbolic space is fundamentally different from Euclidean space, computations can be simplified by leveraging the tangent space at a given point. The tangent space at a point $x \in \mathbb{D}^n$ is a local Euclidean approximation of hyperbolic space, allowing for operations such as optimization and vector transformations. The exponential map $\exp_x : T_x\mathbb{H}^n \to \mathbb{H}^n$ projects vectors from the tangent space back onto the manifold, while its inverse, the logarithmic map $\log_x : \mathbb{H}^n \to T_x\mathbb{H}^n$, maps points from the manifold to the tangent space:

$$\exp_x(v) = x + \tanh(\|v\|)\,\frac{v}{\|v\|}, \qquad \log_x(y) = \frac{\operatorname{arctanh}(\|y - x\|)}{\|y - x\|}\,(y - x).$$

3 Methodology

Fig. 2: Overview of HyQNet. Panels: (a) Logical query, (b) Reasoning in Embedding Space, (c) Graph, (d) Embedding Propagation Tree, (e) Learning Process of HyQNet.

Here we present our model HyQNet.
The high-level idea of HyQNet is to first decompose a FOL query into an expression of four basic operations (relation projection, conjunction, disjunction, and negation) over fuzzy sets, then parameterize the relation projection with a hyperbolic GNN with learned curvature adapted from KG completion, and instantiate the logic operations with product fuzzy logic operations.

Given a FOL query, the first step is to transform it into a structured computation process, where the query is broken down into a sequence of fundamental operations. We represent the query as a directed computation graph, starting from anchor entities and progressively applying transformations until the final answer set is obtained.

The decomposition follows a structured process. First, relation projections identify the entities that satisfy specific relational constraints. Given an initial fuzzy set of head entities, the projection operation maps them to potential tail entities through the corresponding relation. Once relation projections establish the core structure, logical operations refine the intermediate results. Conjunction enforces conditions that must be met simultaneously, effectively taking the intersection of fuzzy sets. Disjunction broadens the set of possible answers by considering alternative paths, while negation eliminates entities that contradict the query constraints. By iteratively applying these operations, we propagate information through the computation graph, ultimately producing a fuzzy set representing the final answer. This structured execution ensures interpretability at each step while maintaining the flexibility needed for reasoning in knowledge graphs. We introduce the details of each logical operation below.
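As a minimal sketch of this decomposition, the Python snippet below evaluates a query of the kind shown in Figure 2 (Turing Award winners who are Canadian citizens, followed by where they graduated) as two projections, one conjunction, and a final projection over fuzzy sets. It is an illustration under stated assumptions, not the HyQNet implementation: the toy graph, the placeholder entities (`p1`, `u1`, ...), and all function names are hypothetical, and `project` is a purely symbolic traversal of observed edges standing in for the learned hyperbolic GNN projection.

```python
import numpy as np

# Hypothetical toy knowledge graph; entity names are placeholders, not real facts.
entities = ["TuringAward", "Canada", "p1", "p2", "p3", "u1", "u2", "u3"]
index = {name: i for i, name in enumerate(entities)}
triples = [
    ("TuringAward", "win", "p1"),
    ("TuringAward", "win", "p2"),
    ("TuringAward", "win", "p3"),
    ("Canada", "citizen", "p1"),
    ("Canada", "citizen", "p2"),
    ("p1", "graduate", "u1"),
    ("p2", "graduate", "u2"),
    ("p3", "graduate", "u3"),
]


def anchor(name):
    """Fuzzy set with membership 1 for a single anchor entity."""
    x = np.zeros(len(entities))
    x[index[name]] = 1.0
    return x


# Product fuzzy logic operations over membership vectors.
def conjunction(x, y):
    return x * y


def disjunction(x, y):
    return x + y - x * y


def negation(x):
    return 1.0 - x


def project(x, relation):
    """Symbolic stand-in for relation projection (assumption: observed edges only).

    Each tail entity accumulates the memberships of its head entities with a
    product fuzzy OR, so the output stays in [0, 1].
    """
    y = np.zeros_like(x)
    for head, rel, tail in triples:
        if rel == relation:
            t = index[tail]
            y[t] = y[t] + x[index[head]] - y[t] * x[index[head]]
    return y


# Computation graph: two projections from the anchors, a conjunction, a final projection.
winners = project(anchor("TuringAward"), "win")
citizens = project(anchor("Canada"), "citizen")
answers = project(conjunction(winners, citizens), "graduate")

for name, score in zip(entities, answers):
    if score > 0:
        print(name, score)
```

Every intermediate vector (`winners`, `citizens`, their conjunction) is itself a fuzzy set over the entities, which is what makes each step of the execution inspectable. On an incomplete graph the symbolic `project` would miss answers that depend on unobserved edges, which is the gap a learned projection is meant to close.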
Hyperbolic Neural Relation Projection. To solve complex queries on incomplete knowledge graphs, we train a neural model to perform relation projection, defined as $\mathbf{y} = P_q(\mathbf{x})$, where $\mathbf{x}$ is the input fuzzy set, $q$ is the given relation, and $\mathbf{y}$ is the output fuzzy set. The goal of relation projection is to generate the potential answer set $\mathbf{y}$, consisting of entities that could be connected to $\mathbf{x}$ via relation $q$. This task aligns with the knowledge graph completion problem, where missing links must be inferred. Specifically, the neural relation projection model aims to predict the fuzzy set of tail entities $\mathbf{y}$ given the fuzzy set of head entities $\mathbf{x}$ and the relation $q$, even in the presence of incomplete knowledge.

Many methods have been proposed to model projection operations, including [7,42,43]. Recently, [50] introduced a GNN-based framework for knowledge graph completion. When applying GNN models, it has been shown that their underlying mechanism is equivalent to learning node embeddings based on a recursive tree structure. An example is illustrated in Figure 2 (d), where the goal is to predict a suitable answer for the query (Blue node, $r$, ?), where $r$ represents an arbitrary relation. However, the corresponding recursive tree structure grows exponentially with the number of propagation steps. Although naively applying GNNs as in [50] is feasible, it is suboptimal because standard Euclidean GNNs struggle to effectively capture the exponential growth of node neighborhoods. In contrast, hyperbolic embeddings are better suited for encoding hierarchical structures, as they naturally capture this exponential growth. To address this, we propose leveraging hyperbolic embeddings with learned curvature for relation projection. Inspired by this idea, we develop a scalable hyperbolic GNN framework to enhance the effectiveness of relation projection in knowledge graph completion.

Hyperbolic Relation Projection.
Our goal is to design a hyperbolic GNN model that predicts a fuzzy set of tail entities given a fuzzy set of head entities and a relation. Given a head entity $u$ and a projection relation $q$, we use the following iteration to compute a representation $h_v$ for each entity $v \in V$ with respect to the source entity $u$ according to the recursive tree structure. Given the source fuzzy set $\mathbf{x}$, we initialize the embedding of each entity $v$ by $h_v^{(0)} \leftarrow x_v q$, where $x_v$ is the probability of entity $v$ in $\mathbf{x}$. For each layer in the tree, the learning process can be denoted as

$$h_v^{(t)} \leftarrow \mathrm{AGGREGATE}\left(\left\{\mathrm{MESSAGE}\left(h_z^{(t-1)}, (z, r, v)\right) \,\middle|\, (z, r, v) \in E(v)\right\}\right).$$

When utilizing hyperbolic embeddings, we iteratively update node embeddings in the knowledge graph. The update process is given by:

$$h_v^{(t+1)} = \sigma\left(\exp_0^c\left(\tilde{A}\, W_t \log_0^c\left(h_z^{(t)}\right)\right)\right), \quad (z, r, v) \in E(v).$$

Here, the exponential map $\exp_x^c$ maps a tangent vector $v \in T_x\mathbb{D}_c^n$ to the hyperbolic manifold:

$$\exp_x^c(v) = x \oplus_c \left(\tanh\left(\sqrt{c}\,\|v\|\right)\frac{v}{\sqrt{c}\,\|v\|}\right),$$

where $\oplus_c$ denotes Möbius addition. Conversely, the logarithmic map $\log_x^c$ transforms a point on the hyperbolic manifold back to the tangent space:

$$\log_x^c(y) = \frac{2}{\sqrt{c}}\,\frac{\operatorname{arctanh}\left(\sqrt{c}\,\|{-x} \oplus_c y\|\right)}{\|{-x} \oplus_c y\|}\,\left({-x} \oplus_c y\right).$$

In practice, $\exp_0^c$ and $\log_0^c$ are used to efficiently convert between Euclidean vectors and their hyperbolic representations in the Poincaré ball model.

To apply the hyperbolic GNN framework for relation projection, we propagate the representations for $T$ layers. Then, we take the representations in the last layer and pass them into a multi-layer perceptron (MLP) $f$ followed by a sigmoid function $\sigma$ to predict the fuzzy set of tail entities:

$$P_q(\mathbf{x}) = \sigma\left(f\left(h^{(T)}\right)\right).$$

3.1 Fuzzy Logic Operations

In knowledge graph reasoning, operations like $C(\mathbf{x}, \mathbf{y})$, $D(\mathbf{x}, \mathbf{y})$, and $N(\mathbf{x})$ play an essential role in combining the results of multiple relation projections, thus forming the input fuzzy set for the next projection.
These operations must ideally satisfy foundational logical principles, such as commutativity, associativity, and non-contradiction. While many prior approaches [7,42,43] have introduced geometric operations to model these logic operations within the embedding space, such neural operators are not always reliable in adhering to logical laws. As a result, chaining these operators can introduce errors. Building on the work of [50], we employ product fuzzy logic operations to model conjunction, disjunction, and negation. Specifically, given two fuzzy sets $\mathbf{x}, \mathbf{y} \in [0, 1]^V$, we define the operations as:

$$C(\mathbf{x}, \mathbf{y}) = \mathbf{x} \odot \mathbf{y} \quad \text{(Conjunction)}$$
$$D(\mathbf{x}, \mathbf{y}) = \mathbf{x} + \mathbf{y} - \mathbf{x} \odot \mathbf{y} \quad \text{(Disjunction)}$$
$$N(\mathbf{x}) = \mathbf{1} - \mathbf{x} \quad \text{(Negation)}$$

In these equations, $\odot$ denotes element-wise multiplication, and $\mathbf{1}$ is a vector of ones (representing the universe).

3.2 Model Training

In terms of model training, we follow the approach used in previous studies [42,43], aiming to minimize the binary cross-entropy loss function, defined as:

$$L = -\frac{1}{|A_Q|}\sum_{a \in A_Q} \log p(a \mid Q) \;-\; \frac{1}{|V \setminus A_Q|}\sum_{a' \in V \setminus A_Q} \log\left(1 - p(a' \mid Q)\right)$$

Here, $A_Q$ refers to the set of correct answers for query $Q$, while $V \setminus A_Q$ represents the set of entities in the knowledge graph that are not part of $A_Q$. The terms $p(a \mid Q)$ and $p(a' \mid Q)$ indicate the predicted probabilities for $a$ and $a'$ being correct answers, respectively.

Theorem 1. The proposed HyQNet consistently outperforms, or performs on par with, existing GNN-based logical query reasoning models that rely on Euclidean embeddings.

Proof. Euclidean embeddings correspond to a special case of HyQNet where the learned curvature approaches zero. As HyQNet generalizes Euclidean embeddings by allowing variable curvature, it is capable of capturing a broader range of geometric structures.
Consequently, HyQNet consistently matches or surpasses the performance of models using Euclidean embeddings.

4 Experiment

We evaluate HyQNet on three widely used knowledge graph datasets: FB15k, FB15k-237, and NELL995 [50]. To ensure consistency with prior studies, we adopt the benchmark FOL queries provided by BetaE [43], which include 9 EPFO query structures and 5 additional queries incorporating negation. Our model is trained on 10 query types (1p, 2p, 3p, 2i, 3i, 2in, 3in, inp, pni, pin), following the setup used in previous research [42,43,50]. For evaluation, we test HyQNet on both the 10 training query types and 4 unseen query types (ip, pi, 2u, up) to assess its generalization ability.

Evaluation Protocol. We follow the evaluation protocol of [42], where the answers to each query are divided into two categories: easy answers and hard answers. For test (or validation) queries, easy answers are entities that can be directly reached in the validation (or train) graph using symbolic relation traversal. In contrast, hard answers require reasoning over predicted links, meaning the model must infer them rather than retrieve them directly. To evaluate performance, we rank each hard answer against all non-answer entities and use mean reciprocal rank (MRR) and HITS@K (H@K) as evaluation metrics. Specifically, we use MRR and HITS@1, as done in GNN-QE.

Baselines. We compare HyQNet against both embedding methods and neural-symbolic methods. The embedding methods include GQE [7], Q2B [42], BetaE [43] and FuzzQE [5]. The neural-symbolic methods include CQD-CO, CQD-Beam [1] and GNN-QE [50].

Table 1: Test MRR results (%) on answering FOL queries.
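| Dataset | Model | avg_p | avg_n | 1p | 2p | 3p | 2i | 3i | pi | ip | 2u | up | 2in | 3in | inp | pin | pni |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| FB15k | GQE | 28.0 | - | 54.6 | 15.3 | 10.8 | 39.7 | 51.4 | 27.6 | 19.1 | 22.1 | 11.6 | - | - | - | - | - |
| FB15k | Q2B | 38.0 | - | 68.0 | 21.0 | 14.2 | 55.1 | 66.5 | 39.4 | 26.1 | 35.1 | 16.7 | - | - | - | - | - |
| FB15k | BetaE | 41.6 | 11.8 | 65.1 | 25.7 | 24.7 | 55.8 | 66.5 | 43.9 | 28.1 | 40.1 | 25.2 | 14.3 | 14.7 | 11.5 | 6.5 | 12.4 |
| FB15k | CQD-CO | 46.9 | - | 89.2 | 25.3 | 13.4 | 74.4 | 78.3 | 44.1 | 33.2 | 41.8 | 21.9 | - | - | - | - | - |
| FB15k | CQD-Beam | 58.2 | - | 89.2 | 54.3 | 28.6 | 74.4 | 78.3 | 58.2 | 67.7 | 42.4 | 30.9 | - | - | - | - | - |
| FB15k | GNN-QE | 73.7 | 38.3 | 87.7 | 68.8 | 58.7 | 79.7 | 83.5 | 68.9 | 68.1 | 74.4 | 60.2 | 44.5 | 41.7 | 41.7 | 29.4 | 34.3 |
| FB15k | HyQNet | 74.2 | 38.6 | 87.8 | 69.2 | 59.4 | 81.0 | 84.8 | 71.1 | 65.8 | 74.9 | 60.9 | 44.3 | 44.4 | 42.1 | 29.8 | 34.4 |
| FB15k-237 | GQE | 16.3 | - | 35.0 | 7.2 | 5.3 | 23.3 | 34.6 | 16.5 | 10.7 | 8.2 | 5.7 | - | - | - | - | - |
| FB15k-237 | Q2B | 20.1 | - | 40.6 | 9.4 | 6.8 | 29.5 | 42.3 | 21.2 | 12.6 | 11.3 | 7.6 | - | - | - | - | - |
| FB15k-237 | BetaE | 20.9 | 5.5 | 39.0 | 10.9 | 10.0 | 28.8 | 42.5 | 22.4 | 12.6 | 12.4 | 9.7 | 3.5 | 3.4 | 5.1 | 7.9 | 7.4 |
| FB15k-237 | CQD-CO | 21.8 | - | 46.7 | 9.5 | 6.3 | 31.2 | 40.6 | 23.6 | 16.0 | 14.5 | 8.2 | - | - | - | - | - |
| FB15k-237 | CQD-Beam | 22.3 | - | 46.7 | 11.6 | 8.0 | 31.2 | 40.6 | 21.2 | 18.7 | 14.6 | 8.4 | - | - | - | - | - |
| FB15k-237 | FuzzQE | 24.0 | 7.8 | 42.8 | 12.9 | 10.3 | 33.3 | 46.9 | 26.9 | 17.8 | 14.6 | 10.3 | 8.5 | 11.6 | 7.8 | 5.2 | 5.8 |
| FB15k-237 | GNN-QE | 26.1 | 8.4 | 40.1 | 12.1 | 10.2 | 35.5 | 51.6 | 29.2 | 16.9 | 13.4 | 11.2 | 7.7 | 14.4 | 8.3 | 5.7 | 5.9 |
| FB15k-237 | HyQNet | 26.5 | 8.9 | 40.5 | 11.9 | 10.3 | 36.3 | 52.1 | 28.8 | 17.9 | 14.3 | 11.2 | 7.6 | 15.8 | 8.9 | 6.3 | 5.9 |
| NELL995 | GQE | 18.6 | - | 32.8 | 11.9 | 9.6 | 27.5 | 35.2 | 18.4 | 14.4 | 8.5 | 8.8 | - | - | - | - | - |
| NELL995 | Q2B | 22.9 | - | 42.2 | 14.0 | 11.2 | 33.3 | 44.5 | 22.4 | 16.8 | 11.3 | 10.3 | - | - | - | - | - |
| NELL995 | BetaE | 24.6 | 5.9 | 53.0 | 13.0 | 11.4 | 37.6 | 47.5 | 24.1 | 14.3 | 12.2 | 8.5 | 5.1 | 7.8 | 10.0 | 3.1 | 3.5 |
| NELL995 | CQD-CO | 28.8 | - | 60.4 | 17.8 | 12.7 | 39.3 | 46.6 | 30.1 | 22.0 | 17.3 | 13.2 | - | - | - | - | - |
| NELL995 | CQD-Beam | 28.6 | - | 60.4 | 20.6 | 11.6 | 39.3 | 46.6 | 25.4 | 23.9 | 17.5 | 12.2 | - | - | - | - | - |
| NELL995 | FuzzQE | 27.0 | 7.8 | 47.4 | 17.2 | 14.6 | 39.5 | 49.2 | 26.2 | 20.6 | 15.3 | 12.6 | 7.8 | 9.8 | 11.1 | 4.9 | 5.5 |
| NELL995 | GNN-QE | 28.7 | 8.6 | 51.3 | 17.0 | 12.9 | 39.1 | 49.3 | 28.1 | 17.4 | 14.2 | 9.0 | 8.8 | 12.7 | 10.9 | 5.0 | 5.5 |
| NELL995 | HyQNet | 28.9 | 8.9 | 50.3 | 18.0 | 14.1 | 39.7 | 49.9 | 27.4 | 17.5 | 14.5 | 10.8 | 8.5 | 13.5 | 11.4 | 5.8 | 5.5 |

Table 2: Test H@1 results (%) on answering FOL queries.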
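| Dataset | Model | avg_p | avg_n | 1p | 2p | 3p | 2i | 3i | pi | ip | 2u | up | 2in | 3in | inp | pin | pni |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| FB15k | GQE | 16.6 | - | 34.2 | 8.3 | 5.0 | 23.8 | 34.9 | 15.5 | 11.2 | 11.5 | 5.6 | - | - | - | - | - |
| FB15k | Q2B | 26.8 | - | 52.0 | 12.7 | 7.8 | 40.5 | 53.4 | 26.7 | 16.7 | 22.0 | 9.4 | - | - | - | - | - |
| FB15k | BetaE | 31.3 | 5.2 | 52.0 | 17.0 | 16.9 | 43.5 | 55.3 | 32.3 | 19.3 | 28.1 | 16.9 | 6.4 | 6.7 | 5.5 | 2.0 | 5.3 |
| FB15k | CQD-CO | 39.7 | - | 85.8 | 17.8 | 9.0 | 67.6 | 71.7 | 34.5 | 24.5 | 30.9 | 15.5 | - | - | - | - | - |
| FB15k | CQD-Beam | 51.9 | - | 85.8 | 48.6 | 22.5 | 67.6 | 71.7 | 51.7 | 62.3 | 31.7 | 25.0 | - | - | - | - | - |
| FB15k | GNN-QE | 68.6 | 21.7 | 85.4 | 63.5 | 52.5 | 74.8 | 79.9 | 63.2 | 62.5 | 67.1 | 53.0 | 32.3 | 30.9 | 32.7 | 17.8 | 21.8 |
| FB15k | HyQNet | 69.5 | 21.9 | 85.4 | 64.6 | 53.8 | 76.8 | 80.4 | 63.2 | 62.0 | 70.5 | 54.3 | 32.4 | 30.9 | 32.7 | 18.2 | 22.2 |
| FB15k-237 | GQE | 8.8 | - | 22.4 | 2.8 | 2.1 | 11.7 | 20.9 | 8.4 | 5.7 | 3.3 | 2.1 | - | - | - | - | - |
| FB15k-237 | Q2B | 12.3 | - | 28.3 | 4.1 | 3.0 | 17.5 | 29.5 | 12.3 | 7.1 | 5.2 | 3.3 | - | - | - | - | - |
| FB15k-237 | BetaE | 13.4 | 2.8 | 28.9 | 5.5 | 4.9 | 18.3 | 31.7 | 14.0 | 6.7 | 6.3 | 4.6 | 1.5 | 7.7 | 3.0 | 0.9 | 0.9 |
| FB15k-237 | CQD-CO | 14.7 | - | 36.6 | 4.7 | 3.0 | 20.7 | 29.6 | 15.5 | 9.9 | 8.6 | 4.0 | - | - | - | - | - |
| FB15k-237 | CQD-Beam | 15.1 | - | 36.6 | 6.3 | 4.3 | 20.7 | 29.6 | 13.5 | 12.1 | 8.7 | 4.3 | - | - | - | - | - |
| FB15k-237 | GNN-QE | 18.4 | 3.2 | 29.9 | 6.4 | 5.5 | 24.6 | 41.5 | 20.7 | 11.2 | 7.8 | 6.2 | 2.7 | 6.1 | 3.6 | 1.9 | 1.9 |
| FB15k-237 | HyQNet | 18.9 | 3.8 | 30.3 | 6.7 | 5.9 | 25.6 | 42.2 | 20.5 | 12.2 | 8.0 | 6.4 | 3.1 | 7.7 | 4.1 | 2.5 | 2.1 |
| NELL995 | GQE | 9.9 | - | 15.4 | 6.7 | 5.0 | 14.3 | 20.4 | 10.6 | 9.0 | 2.9 | 5.0 | - | - | - | - | - |
| NELL995 | Q2B | 14.1 | - | 23.8 | 8.7 | 6.9 | 20.3 | 31.5 | 14.3 | 10.7 | 5.0 | 6.0 | - | - | - | - | - |
| NELL995 | BetaE | 17.8 | 2.1 | 43.5 | 8.1 | 7.0 | 27.2 | 36.5 | 17.4 | 9.3 | 6.9 | 4.7 | 1.6 | 2.2 | 4.8 | 0.7 | 1.2 |
| NELL995 | CQD-CO | 21.3 | - | 51.2 | 11.8 | 9.0 | 28.4 | 36.3 | 22.4 | 15.5 | 9.9 | 7.6 | - | - | - | - | - |
| NELL995 | CQD-Beam | 21.0 | - | 51.2 | 14.3 | 6.3 | 28.4 | 36.3 | 18.1 | 17.4 | 10.2 | 7.2 | - | - | - | - | - |
| NELL995 | GNN-QE | 21.5 | 3.3 | 41.0 | 12.4 | 9.3 | 29.2 | 40.0 | 20.2 | 11.7 | 8.2 | 6.4 | 2.5 | 5.3 | 5.5 | 1.5 | 1.7 |
| NELL995 | HyQNet | 21.5 | 3.7 | 42.3 | 12.0 | 8.4 | 28.6 | 39.4 | 21.1 | 12.1 | 7.7 | 5.4 | 3.1 | 4.6 | 8.1 | 0.9 | 1.9 |

4.1 Complex Query Answering

Table 3: Spearman’s rank correlation between the model prediction and the number of ground truth answers on FB15k. avg is the average correlation on all 12 query types in the table.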
| Model | avg | 1p | 2p | 3p | 2i | 3i | pi | ip | 2in | 3in | inp | pin | pni |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Q2B | - | 0.301 | 0.219 | 0.262 | 0.331 | 0.270 | 0.297 | 0.139 | - | - | - | - | - |
| BetaE | 0.494 | 0.373 | 0.478 | 0.472 | 0.572 | 0.397 | 0.519 | 0.421 | 0.622 | 0.548 | 0.459 | 0.465 | 0.608 |
| GNN-QE | 0.952 | 0.971 | 0.967 | 0.926 | 0.987 | 0.936 | 0.937 | 0.923 | 0.992 | 0.985 | 0.880 | 0.940 | 0.991 |
| HyQNet | 0.958 | 0.971 | 0.970 | 0.941 | 0.988 | 0.938 | 0.939 | 0.934 | 0.990 | 0.984 | 0.908 | 0.948 | 0.991 |

Table 1 shows the MRR results of different models for answering FOL queries. GQE, Q2B, CQD-CO, and CQD-Beam do not support queries with negation, so the corresponding entries are empty. We observe that HyQNet achieves the best result most of the time for both EPFO queries and queries with negation on all 3 datasets. Notably, HyQNet achieves the best average MRR on every dataset, which shows the effectiveness of the proposed hyperbolic embedding model. Hyperbolic embeddings can better capture the recursive learning tree structure; thus, the learned embedding is more expressive than its Euclidean counterpart. The gains are clearest on intersection queries (2i, 3i) and on several negation-based queries (e.g., 3in, inp), which suggests that the model effectively captures relational structures and logical patterns. CQD-Beam also performs well, especially on FB15k, benefiting from beam search strategies that enhance multi-hop reasoning. However, these gains are less pronounced in FB15k-237 and NELL995, likely due to the increased sparsity and relational diversity in these datasets. Overall, the results indicate that neural-symbolic models (the CQD variants, GNN-QE, and HyQNet) generalize across different logical structures more effectively than earlier embedding-based methods like GQE and Q2B.
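For readers who want to reproduce the metrics discussed here, the following Python sketch shows one way to compute MRR and Hits@K under the filtered ranking described in the evaluation protocol, where each hard answer is ranked only against non-answer entities. The function names, the example scores, and the optimistic handling of ties are assumptions made for illustration; the benchmark code used for the reported numbers may differ in such details.

```python
import numpy as np


def hard_answer_ranks(scores, easy, hard):
    """Rank each hard answer against non-answer entities only.

    scores: predicted membership per entity (higher means more likely an answer).
    easy, hard: entity indices of easy and hard answers for one query.
    Ties are resolved optimistically (an assumption of this sketch).
    """
    scores = np.asarray(scores, dtype=float)
    answers = set(easy) | set(hard)
    non_answers = [i for i in range(len(scores)) if i not in answers]
    ranks = []
    for a in hard:
        better = sum(1 for j in non_answers if scores[j] > scores[a])
        ranks.append(1 + better)
    return ranks


def mrr(ranks):
    """Mean reciprocal rank over the given ranks."""
    return float(np.mean([1.0 / r for r in ranks]))


def hits_at_k(ranks, k):
    """Fraction of ranks that fall within the top k."""
    return float(np.mean([1.0 if r <= k else 0.0 for r in ranks]))


# Hypothetical query over five entities: entity 0 is an easy answer,
# entities 2 and 3 are hard answers, entities 1 and 4 are non-answers.
scores = [0.9, 0.7, 0.8, 0.6, 0.1]
ranks = hard_answer_ranks(scores, easy=[0], hard=[2, 3])
print(ranks, mrr(ranks), hits_at_k(ranks, 1))
```

Because other answers are filtered out of the candidate list, a high-scoring easy answer (entity 0 above) does not penalize the rank of a hard answer.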
Table 2 shows the Hits@1 (H@1) results of different methods on FOL queries, which follow the same pattern of model performance across the three datasets (FB15k, FB15k-237, and NELL995). HyQNet achieves the highest or tied-highest average Hits@1 scores on all datasets. CQD-Beam also performs well, particularly on the FB15k dataset, benefiting from beam search strategies that enhance multi-hop reasoning. In contrast, models such as GQE and Q2B exhibit relatively lower performance, especially on more complex queries and on FB15k-237 and NELL995. Compared to GNN-QE, HyQNet matches or exceeds the average H@1 on every dataset. Overall, the results suggest that the hyperbolic embedding model is better at capturing the recursive tree structure in embeddings.

4.2 Answer Set Cardinality Prediction

Similar to GNN-QE, HyQNet can predict the cardinality of the answer set (i.e., the number of answers) without explicit supervision. The cardinality of a fuzzy set is computed as the sum of entity probabilities exceeding a predefined threshold. We use 0.5 as the threshold, as it naturally aligns with our binary classification loss. Previous studies [42,43] have observed that the uncertainty in Q2B and BetaE is positively correlated with the number of answers. Following their approach, we report Spearman’s rank correlation between our model’s predictions and the ground truth. As shown in Table 3, HyQNet achieves the highest average correlation, far above Q2B and BetaE and slightly above GNN-QE.

5 Related Work

Knowledge Graph Reasoning. Knowledge graph reasoning has been studied for a long time [19,18,17,20,22,37,15,25,33,23,32,21,35,34,36,14,13,16,12,24,31,26,27,28,30,29]. Embedding-based approaches [3,44,46] map entities and relations into low-dimensional vectors to capture graph structure.
Reinforcement learning methods [47,49,11] use agents to explore paths and predict missing links, while rule-based techniques [9,48,40] generate logical rules for link prediction.

Graph Neural Networks for Relational Learning. Graph neural networks (GNNs) [45,50] have gained popularity in learning entity representations for knowledge graph completion. In contrast, our approach adapts hyperbolic GNNs for relation projection and extends the task to complex logical query answering, which introduces more challenges than traditional graph completion.

Hyperbolic Geometry in Knowledge Graphs. Hyperbolic space is particularly effective for modeling hierarchical structures due to its exponential growth properties. Early works such as Poincaré embeddings [39] demonstrated the benefits of hyperbolic geometry, which were later expanded in Hyperbolic Graph Neural Networks (HGNNs) [38] for better relational reasoning. Models like MuRP [2] and AttH [4] have shown improved performance in hierarchical link prediction tasks, making hyperbolic embeddings valuable for relational reasoning in large-scale knowledge graphs. Our work builds on these models to optimize hyperbolic representations for logical reasoning and query answering.

Handling Complex Logical Queries. Traditional knowledge graph completion techniques focus on predicting missing links, but more complex tasks involve answering logical queries that combine operations like conjunction, disjunction, and negation. Methods such as GQE [7] introduced compositional training to extend embedding-based approaches for path queries. GQE applies a geometric intersection operator for conjunctive queries ($\land$), while Query2Box [42] and BetaE [43] generalize this approach to handle more complex logical operators like $\lor$ and $\lnot$. FuzzQE [5] incorporates fuzzy logic to align embeddings with classical logic, providing a more nuanced representation.
However, these approaches often prioritize computational efficiency, using nearest neighbor search or dot product for query decoding, which sacrifices interpretability and makes intermediate reasoning opaque.

Combining Symbolic and Neural Approaches. To address the limitations of pure embedding methods, some approaches combine neural networks with symbolic reasoning. CQD [1] extends pretrained embeddings to answer complex queries, with CQD-CO employing continuous optimization and CQD-Beam utilizing beam search. While our method shares similarities with CQD-Beam in combining symbolic reasoning with graph completion, it stands apart by enabling direct training on complex queries. Unlike CQD-Beam, our method, like GNN-QE, bypasses the need for exhaustive search or pretrained embeddings, offering a more efficient and interpretable solution for answering complex logical queries.

6 Conclusion

We proposed a hyperbolic graph neural network (GNN) for answering complex First-Order Logic (FOL) queries on knowledge graphs. By leveraging hyperbolic embeddings with learned curvature, our model effectively captures hierarchical query structures, improving reasoning accuracy and interpretability. Experiments on three benchmark datasets show that our method outperforms existing baselines, demonstrating the benefits of hyperbolic space for logical reasoning. Future work includes extending our approach to more expressive logical operations and exploring adaptive curvature learning for greater flexibility.

References

1. Arakelyan, E., Daza, D., Minervini, P., Cochez, M.: Complex query answering with neural link predictors. arXiv preprint arXiv:2011.03459 (2020)
2. Balažević, I., Allen, C., Hospedales, T.: Multi-relational poincaré graph embeddings. Curran Associates Inc., Red Hook, NY, USA (2019)
3. Bordes, A., Usunier, N., Garcia-Duran, A., Weston, J., Yakhnenko, O.: Translating embeddings for modeling multi-relational data. Advances in neural information processing systems 26 (2013)
4. Chami, I., Wolf, A., Juan, D.C., Sala, F., Ravi, S., Ré, C.: Low-dimensional hyperbolic knowledge graph embeddings. In: Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics. Association for Computational Linguistics, Online (Jul 2020)
5. Chen, X., Hu, Z., Sun, Y.: Fuzzy logic based logical query answering on knowledge graphs. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 36, pp. 3939–3948 (2022)
6. Guo, Q., Zhuang, F., Qin, C., Zhu, H., Xie, X., Xiong, H., He, Q.: A survey on knowledge graph-based recommender systems (2020)
7. Hamilton, W., Bajaj, P., Zitnik, M., Jurafsky, D., Leskovec, J.: Embedding logical queries on knowledge graphs. Advances in neural information processing systems 31 (2018)
8. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition (2015)
9. Ho, V.T., Stepanova, D., Gad-Elrab, M.H., Kharlamov, E., Weikum, G.: Rule learning from knowledge graphs guided by embedding models. In: The Semantic Web–ISWC 2018: 17th International Semantic Web Conference. pp. 72–90. Springer (2018)
10. Klir, G.J., Yuan, B.: Fuzzy sets and fuzzy logic: theory and applications. Prentice-Hall, Inc., USA (1994)
11. Lin, X., Socher, R.: Multi-hop knowledge graph reasoning with reward shaping. In: EMNLP 2018
12. Liu, L.: Knowledge graph reasoning and its applications: A pathway towards neural symbolic AI. Ph.D. thesis, University of Illinois at Urbana-Champaign (2024)
13. Liu, L.: Hyperkgr: Knowledge graph reasoning in hyperbolic space with graph neural network encoding symbolic path. In: Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing. pp. 25188–25199 (2025)
14. Liu, L.: Monte carlo tree search for graph reasoning in large language model agents. In: Proceedings of the 34th ACM International Conference on Information and Knowledge Management. pp. 4966–4970 (2025)
15. Liu, L., Chen, Y., Das, M., Yang, H., Tong, H.: Knowledge graph question answering with ambiguous query. In: Proceedings of the ACM Web Conference 2023. pp. 2477–2486 (2023)
16. Liu, L., Ding, J., Mukherjee, S., Yang, C.J.: Mixrag: Mixture-of-experts retrieval-augmented generation for textual graph understanding and question answering. arXiv preprint arXiv:2509.21391 (2025)
17. Liu, L., Du, B., Fung, Y.R., Ji, H., Xu, J., Tong, H.: Kompare: A knowledge graph comparative reasoning system. In: Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining. pp. 3308–3318 (2021)
18. Liu, L., Du, B., Ji, H., Zhai, C., Tong, H.: Neural-answering logical queries on knowledge graphs. In: Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining. pp. 1087–1097 (2021)
19. Liu, L., Du, B., Tong, H., et al.: G-finder: Approximate attributed subgraph matching. In: 2019 IEEE International Conference on Big Data (Big Data). pp. 513–522. IEEE (2019)
20. Liu, L., Du, B., Xu, J., Xia, Y., Tong, H.: Joint knowledge graph completion and question answering. In: Proceedings of the 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining. pp. 1098–1108 (2022)
21. Liu, L., Hill, B., Du, B., Wang, F., Tong, H.: Conversational question answering with language models generated reformulations over knowledge graph. In: Findings of the Association for Computational Linguistics ACL 2024. pp. 839–850 (2024)
22. Liu, L., Ji, H., Xu, J., Tong, H.: Comparative reasoning for knowledge graph fact checking. In: 2022 IEEE International Conference on Big Data (Big Data). pp. 2309–2312. IEEE (2022)
23. Liu, L., Kim, J., Bansal, V.: Can contrastive learning refine embeddings. arXiv preprint arXiv:2404.08701 (2024)
24. Liu, L., Shu, K.: Unifying knowledge in agentic llms: Concepts, methods, and recent advancements. Authorea Preprints (2025)
25. Liu, L., Song, J., Wang, H., Lv, P.: Brps: A big data placement strategy for data intensive applications. In: 2016 IEEE 16th International Conference on Data Mining Workshops (ICDMW). pp. 813–820. IEEE (2016)
26. Liu, L., Tong, H.: Neural symbolic knowledge graph reasoning
27. Liu, L., Tong, H.: Accurate query answering with llms over incomplete kg. In: Neural Symbolic Knowledge Graph Reasoning: A Pathway Towards Neural Symbolic AI, pp. 73–87. Springer (2026)
28. Liu, L., Tong, H.: Accurate query answering with neural symbolic reasoning over incomplete kg. In: Neural Symbolic Knowledge Graph Reasoning: A Pathway Towards Neural Symbolic AI, pp. 55–72. Springer (2026)
29. Liu, L., Tong, H.: Ambiguous entity matching with neural symbolic reasoning over incomplete kg. In: Neural Symbolic Knowledge Graph Reasoning: A Pathway Towards Neural Symbolic AI, pp. 107–119. Springer (2026)
30. Liu, L., Tong, H.: Ambiguous query answering with neural symbolic reasoning over incomplete kg. In: Neural Symbolic Knowledge Graph Reasoning: A Pathway Towards Neural Symbolic AI, pp. 89–106. Springer (2026)
31. Liu, L., Tong, H.: Neural Symbolic Knowledge Graph Reasoning: A Pathway Towards Neural Symbolic AI. Springer Nature (2026)
32. Liu, L., Wang, Z., Bai, J., Song, Y., Tong, H.: New frontiers of knowledge graph reasoning: Recent advances and future trends. In: Companion Proceedings of the ACM Web Conference 2024. pp. 1294–1297 (2024)
33. Liu, L., Wang, Z., Qiu, R., Ban, Y., Chan, E., Song, Y., He, J., Tong, H.: Logic query of thoughts: Guiding large language models to answer complex logic queries with knowledge graphs. arXiv preprint arXiv:2404.04264 (2024)
34.
Liu, L., W ang, Z., T ong, H.: Neural-symbolic reasoning over knowledge graphs: A survey from a query perspectiv e. A CM SIGKDD Explorations Newsletter 27 (1), 124–136 (2025) 35. Liu, L., W ang, Z., Zhou, D., W ang, R., Y an, Y ., Xiong, B., He, S., Shu, K., T ong, H.: T ransnet: T ransfer knowledge for few-shot knowledge graph completion. arXiv preprint arXiv:2504.03720 (2025) 36. Liu, L., W ang, Z., Zhou, D., W ang, R., Y an, Y ., Xiong, B., He, S., T ong, H.: Few-shot knowl- edge graph completion via transfer knowledge from similar tasks. In: Proceedings of the 34th A CM International Conference on Information and Kno wledge Management. pp. 4960–4965 (2025) 37. Liu, L., Zhao, R., Du, B., Fung, Y .R., Ji, H., Xu, J., T ong, H.: Kno wledge graph comparati ve reasoning for f act checking: Problem definition and algorithms. IEEE Data Eng. Bull. 45 (4), 19–38 (2022) 38. Liu, Q., Nickel, M., Kiela, D.: Hyperbolic graph neural networks (2019), https:// 39. Nickel, M., Kiela, D.: Poincaré embeddings for learning hierarchical representations. In: Guyon, I., Luxburg, U.V ., Bengio, S., W allach, H., Fergus, R., V ishwanathan, S., Gar- nett, R. (eds.) Advances in Neural Information Processing Systems. v ol. 30. Curran Associates, Inc. (2017), https://proceedings.neurips.cc/paper_files/ paper/2017/file/59dfa2df42d9e3d41f5b02bfc32229dd- Paper.pdf 40. Qu, M., Chen, J., Xhonneux, L.P ., Bengio, Y ., T ang, J.: Rnnlogic: Learning logic rules for reasoning on knowledge graphs. arXi v preprint arXi v:2010.04029 (2020) 41. Radford, A., W u, J., Child, R.: Language models are unsupervised multitask learners (2018) 42. Ren, H., Hu, W ., Leskovec, J.: Query2box: Reasoning o ver knowledge graphs in vector space using box embeddings. arXiv preprint arXi v:2002.05969 (2020) 43. Ren, H., Leskovec, J.: Beta embeddings for multi-hop logical reasoning in kno wledge graphs. Advances in Neural Information Processing Systems 33 , 19716–19726 (2020) 44. 
Sun, Z., Deng, Z.H., Nie, J.Y ., T ang, J.: Rotate: Knowledge graph embedding by relational rotation in complex space. arXi v preprint arXiv:1902.10197 (2019) 45. T eru, K., Denis, E., Hamilton, W .: Inductive relation prediction by subgraph reasoning. In: International Conference on Machine Learning. pp. 9448–9457. PMLR (2020) 46. T rouillon, T ., W elbl, J., Riedel, S., Gaussier , É., Bouchard, G.: Complex embeddings for simple link prediction. In: International conference on machine learning. pp. 2071–2080. PMLR (2016) 47. Xiong, W ., Hoang, T ., W ang, W .Y .: Deeppath: A reinforcement learning method for knowl- edge graph reasoning. arXiv preprint arXi v:1707.06690 (2017) 48. Y ang, F ., Y ang, Z., Cohen, W .W .: Differentiable learning of logical rules for knowledge base completion. CoRR, abs/1702.08367 (2017) 49. Zhang, Q., W eng, X., Zhou, G., Zhang, Y ., Huang, J.X.: Arl: An adapti ve rein- forcement learning framew ork for complex question answering over kno wledge base. Information Processing and Management 59 (3), 102933 (2022). https: //doi.org/https://doi.org/10.1016/j.ipm.2022.102933 , https: //www.sciencedirect.com/science/article/pii/S0306457322000565 50. Zhu, Z., Galkin, M., Zhang, Z., T ang, J.: Neural-symbolic models for logical queries on knowledge graphs. In: International conference on machine learning. pp. 27454–27478. PMLR (2022)
