Probing the Geometry of Diffusion Models with the String Method


Authors: Elio Moreau, Florentin Coeurdoux, Grégoire Ferre

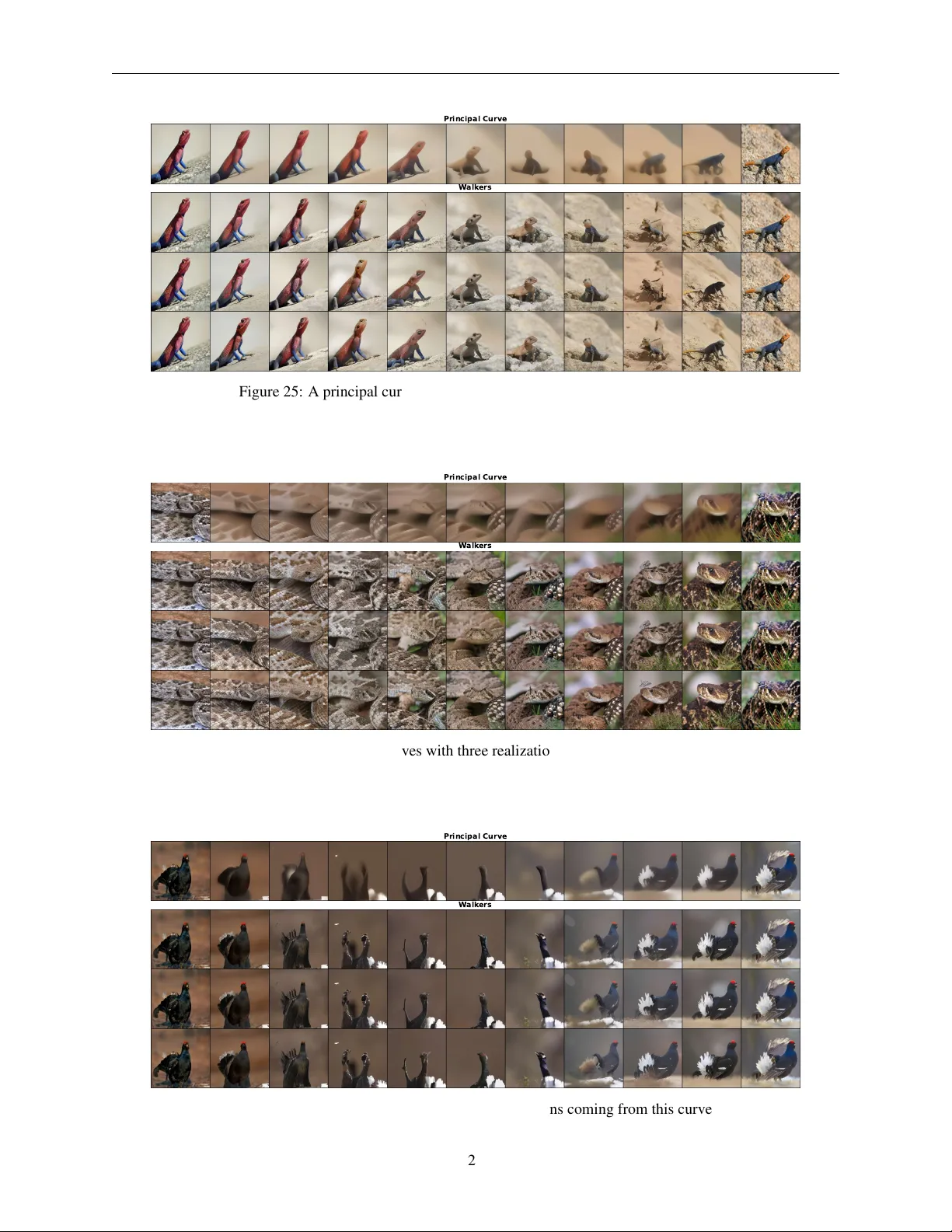

Elio Moreau∗
Capital Fund Management, 23 Rue de l'Université, 75007 Paris
elio.moreau@ens.psl.eu

Florentin Coeurdoux
Capital Fund Management, 23 Rue de l'Université, 75007 Paris
florentin.coeurdoux@cfm.com

Grégoire Ferre
Capital Fund Management, 23 Rue de l'Université, 75007 Paris
gregoire.ferre@cfm.com

Eric Vanden-Eijnden
ML Lab, Capital Fund Management, 23 Rue de l'Université, 75007 Paris
Courant Institute of Mathematical Sciences, New York University, New York, NY 10012, USA
eve2@cims.nyu.edu

Abstract

Understanding the geometry of learned distributions is fundamental to improving and interpreting diffusion models, yet systematic tools for exploring their landscape remain limited. Standard latent-space interpolations fail to respect the structure of the learned distribution, often traversing low-density regions. We introduce a framework based on the string method that computes continuous paths between samples by evolving curves under the learned score function. Operating on pretrained models without retraining, our approach interpolates between three regimes: pure generative transport, which yields continuous sample paths; gradient-dominated dynamics, which recover minimum energy paths (MEPs); and finite-temperature string dynamics, which compute principal curves, i.e., self-consistent paths that balance energy and entropy. We demonstrate that the choice of regime matters in practice. For image diffusion models, MEPs contain high-likelihood but unrealistic "cartoon" images, confirming prior observations that likelihood maxima appear unrealistic; principal curves instead yield realistic morphing sequences despite lower likelihood. For protein structure prediction, our method computes transition pathways between metastable conformers directly from models trained on static structures, yielding paths with physically plausible intermediates.
Together, these results establish the string method as a principled tool for probing the modal structure of diffusion models: identifying modes, characterizing barriers, and mapping connectivity in complex learned distributions.

1 Introduction

Generative models based on diffusion and flow matching have achieved remarkable success in learning complex data distributions. These models transport individual samples from noise to data, implicitly encoding an energy landscape through learned score functions. Yet this point-wise perspective obscures the global geometry of the learned distribution: its modal structure, the barriers between modes, and the connectivity of the data manifold.

Understanding pathways between samples has broad applications: morphing between configurations, identifying transition states, and revealing mechanisms underlying rare events. In molecular systems, such pathways explain conformational changes and folding; in other domains, they can illuminate how the learned distribution connects distinct modes. However, current generators provide only samples, not the pathways between them. Exploiting the geometric information encoded in the learned landscape requires a principled definition of transition pathways, along with a computational procedure to find them.

[Figure 1 legend: Data Points, Principal Curve, MEP; numbered locations 1–4]
Figure 1: The likelihood-realism paradox. Top: schematic showing the MEP (green) passing through the high-likelihood region (yellow), while the principal curve (red) stays within the typical set where data concentrates (blue points). Dashed lines indicate Voronoi cells, i.e., regions of points closest to each image along the string. Bottom: actual images at numbered locations. Endpoints (1, 2) are identical for both paths; the principal curve intermediate (3) is realistic; the MEP intermediate (4) is cartoonish. Full pathways computed by our method are shown in Figures 4 and 5.

∗ Elio Moreau is the corresponding author. Email: elio.moreau@ens.psl.eu.

We introduce a framework that evolves entire curves of samples, called strings, rather than individual points. By controlling how these strings interact with the learned score function, we can probe different aspects of the distribution geometry. Figure 1 previews our main finding: the choice of dynamics reveals a fundamental tension between likelihood and realism. Paths that maximize likelihood traverse "cartoon" configurations: simplified, stylized images that the model assigns high probability but that lie outside the typical set. Paths that account for entropy, in contrast, remain within the typical set and produce perceptually natural transitions.

This paper makes three main contributions:

1. We adapt the string method from computational chemistry to work with learned score functions, enabling pathway computation from pretrained generative models without explicit energy functions.
2. We show how three choices of dynamics (pure transport, gradient-dominated, and finite-temperature) reveal complementary aspects of the learned landscape.
3. We demonstrate that accounting for entropy is essential for realistic pathways in high dimensions (principal curves traverse the typical set while MEPs do not), and illustrate this on image morphing and protein conformational transitions.

Importantly, our method operates directly on pretrained models without any retraining or fine-tuning. Given only access to the learned velocity field $b_t$ and score $s_t$, we can compute strings in any of the three regimes. This makes the approach immediately applicable to existing models.

1.1 Related Work

Transition path methods. Computing pathways between metastable states has a long history in computational chemistry.
The nudged elastic band method [1] and string methods [2, 3, 4] evolve chains of configurations to find minimum (free) energy paths. Extensions like the finite-temperature string method [5] incorporate entropic effects by computing principal curves [6], building on concepts from transition path theory [7, 8]. These methods traditionally require explicit, time-independent energy functions or force fields. Our key contribution is adapting them to time-dependent energies defined implicitly through learned score functions, enabling pathway computation directly from pretrained generative models.

Generative modeling. Diffusion models and flows [9, 10] learn to reverse a noising process. Stochastic interpolants [11, 12], flow matching [13], and rectified flows [14] provide unified frameworks connecting these approaches. Our method builds on this foundation, using the learned velocity and score fields to define string dynamics. Recent work observed that likelihood-maximizing points in diffusion models appear "cartoonish", i.e., perceptually unrealistic despite high probability [15, 16]. We attribute this paradox to concentration of measure in high dimensions and resolve it via finite-temperature string dynamics that account for entropy.

Image morphing. Interpolating between images in generative models is typically done via linear paths in latent space, but such paths often traverse low-density regions, producing unrealistic intermediates. DiffMorpher [17] addresses this through attention interpolation and self-attention guidance; other methods optimize latent trajectories [18]. Our framework differs by grounding interpolation in the geometry of the learned distribution: we show that naive approaches fail by ignoring entropy, and that accounting for it yields realistic paths without task-specific modifications.

Protein conformations.
Recent generative models learn conformational distributions from structural databases: AlphaFlow [19] fine-tunes AlphaFold with flow matching, while DiG [20], EigenFold [21], ConfDiff [22], and FoldingDiff [23] train diffusion models on conformational ensembles. These methods sample individual conformations but do not provide transition pathways between them, yet such pathways are essential for understanding protein function, since biological activity often involves conformational changes [24, 25]. Our string method complements these approaches by computing pathways directly from the learned score, potentially revealing folding mechanisms and conformational change dynamics from models trained only on static structures.

2 Methodology

2.1 Generative Models as Score-Based Dynamics

Diffusion and flow-matching models learn to reverse a noising process that transforms data into noise. At the heart of these methods is a time-dependent density $\rho_t(x)$ interpolating between a simple density $\rho_0(x)$ (typically Gaussian) and the data density $\rho_1(x)$. The model learns either a velocity field $b_t(x)$ or a score function $s_t(x) = \nabla \log \rho_t(x)$, which are related through the structure of the interpolation. Sampling proceeds by integrating forward-time dynamics, which in their most general form are given by the SDE

$$dx_t = \underbrace{b_t(x_t)}_{\text{transport}}\,dt + \underbrace{\gamma_t^2\, s_t(x_t)}_{\text{score correction}}\,dt + \underbrace{\sqrt{2}\,\gamma_t\, dW_t}_{\text{noise}}, \tag{1}$$

where the volatility $\gamma_t \ge 0$ can be tuned. This freedom exists because the score-correction and noise terms cancel in the Fokker–Planck equation (since $\rho_t s_t = \nabla \rho_t$), so every choice of $\gamma_t$ yields the same marginals $\rho_t$. Setting $\gamma_t = 0$ recovers the deterministic probability flow ODE; positive $\gamma_t$ yields stochastic samplers. A key observation is that $s_t(x) = -\nabla V_t(x)$, where $V_t(x) = -\log \rho_t(x)$ is an implicit energy landscape. While we cannot evaluate $V_t$ directly, the learned score provides access to its gradient, which is exactly what the string method requires.
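To make Eq. (1) concrete, here is a minimal Euler–Maruyama sketch. Since no trained network is at hand, it assumes a hypothetical Gaussian toy model: the linear interpolant $x_t = (1-t)z + t x_1$ with $z \sim \mathcal N(0, I)$ and $x_1 \sim \mathcal N(m, \sigma^2 I)$, for which $\rho_t = \mathcal N(tm, v_t I)$ with $v_t = (1-t)^2 + t^2\sigma^2$, so both $b_t$ and $s_t$ are available in closed form. The helper names `make_gaussian_interpolant` and `sample_sde` are illustrative and do not come from the paper.

```python
import numpy as np


def make_gaussian_interpolant(m, sigma):
    """Closed-form b_t and s_t for a hypothetical toy model.

    Interpolant x_t = (1 - t) z + t x1 with z ~ N(0, I), x1 ~ N(m, sigma^2 I),
    so rho_t = N(t m, v_t I) with v_t = (1 - t)^2 + t^2 sigma^2.
    """
    m = np.asarray(m, dtype=float)

    def var(t):
        return (1.0 - t) ** 2 + (t * sigma) ** 2

    def score(t, x):
        # s_t(x) = grad log rho_t(x) = -(x - t m) / v_t
        return -(x - t * m) / var(t)

    def velocity(t, x):
        # b_t(x) = E[x1 - z | x_t = x] = m + (dv_t/dt) / (2 v_t) * (x - t m)
        dvar = -2.0 * (1.0 - t) + 2.0 * t * sigma ** 2
        return m + dvar / (2.0 * var(t)) * (x - t * m)

    return velocity, score


def sample_sde(velocity, score, x0, gamma, n_steps=1000, rng=None):
    """Euler-Maruyama for Eq. (1): dx = [b_t + gamma_t^2 s_t] dt + sqrt(2) gamma_t dW."""
    rng = np.random.default_rng() if rng is None else rng
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt
        g = gamma(t)
        drift = velocity(t, x) + g ** 2 * score(t, x)
        x = x + dt * drift + np.sqrt(2.0 * dt) * g * rng.standard_normal(x.shape)
    return x


# Usage: the ODE (gamma = 0) and an SDE (gamma = 1) should reach the same marginal at t = 1.
m, sigma = np.array([3.0, -1.0]), 0.5
b, s = make_gaussian_interpolant(m, sigma)
rng = np.random.default_rng(0)
z = rng.standard_normal((5000, 2))
x_ode = sample_sde(b, s, z, gamma=lambda t: 0.0, rng=rng)
x_sde = sample_sde(b, s, z, gamma=lambda t: 1.0, rng=rng)
print(x_ode.mean(axis=0), x_ode.std(axis=0))  # close to m and sigma
print(x_sde.mean(axis=0), x_sde.std(axis=0))  # also close to m and sigma
```

In this toy case the probability flow maps each $z$ to $m + \sigma z$ deterministically, whereas the stochastic sampler only matches the distribution, which illustrates why $\gamma_t$ is a free parameter.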
Crucially, the score is estimated via Stein's identity (Gaussian integration by parts), which requires adding noise to the signal. As $t \to 1$, the noise vanishes and this estimator degrades: the learned score becomes unreliable precisely at the data distribution. This is why generative models sample via nonequilibrium transport (pushing noise toward data) rather than via Langevin dynamics on $s_1$. For the same reason, we cannot simply evolve strings under $s_1$; instead, we must work dynamically across the full time interval, leveraging reliable scores at intermediate times. More theoretical details on diffusion models can be found in Appendix A.

2.2 The String Method: General Formulation

The classical string method [2, 3] evolves curves under a time-independent potential $V(x)$. Here we generalize to time-dependent velocities $v_t(x)$ arising from diffusion models. For $s \in (0, 1)$, the string evolves according to

$$\dot\phi_t(s) = v_t(\phi_t(s)) + \lambda_t(s)\, \partial_s \phi_t(s), \tag{2}$$

where $\lambda_t(s)$ is a Lagrange multiplier enforcing the constraint $|\partial_s \phi_t(s)| = \text{const}$ in $s$. This constraint prevents bunching or spreading of points; without it, points would cluster at attractors of $v_t$ rather than tracing a path between them. The endpoints $\phi_t(0)$ and $\phi_t(1)$ follow the probability flow ODE $\dot\phi_t = b_t(\phi_t)$, which serves as the boundary condition for the string evolution.

Discrete algorithm. We discretize the string as $N+1$ images $\{\phi_t^{(i)}\}_{i=0}^{N}$. The endpoints $\phi_t^{(0)}$ and $\phi_t^{(N)}$ follow the probability flow; the interior images $i = 1, \dots, N-1$ evolve via alternating steps (Figure 2):

Step 1: Evolution. Move each interior image using $v_t$:

$$\phi_{t+\Delta t}^{(i)} = \phi_t^{(i)} + \Delta t\, v_t(\phi_t^{(i)}), \qquad i = 1, \dots, N-1. \tag{3}$$

Step 2: Reparametrization. Redistribute images to restore equal arc-length spacing via linear or cubic spline interpolation (for details see Appendix B.2).
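Steps 1 and 2 can be sketched in a few lines of NumPy. The sketch below uses linear interpolation for the reparametrization and, as an assumption for illustration, a time-independent toy velocity $v = -\nabla V$ with the 2D double well $V(x, y) = (x^2 - 1)^2 + 2y^2$ in place of a learned field; in this setting the loop reduces to the classical string method, and the endpoints are simply held fixed at the two minima. The helper `perp_residual` measures the component of $\nabla V$ normal to the discrete string, which vanishes on a minimum energy path in the sense of Definition 2.1. All function names are hypothetical.

```python
import numpy as np


def grad_V(p):
    """Gradient of the toy double well V(x, y) = (x^2 - 1)^2 + 2 y^2."""
    x, y = p[:, 0], p[:, 1]
    return np.stack([4.0 * x * (x ** 2 - 1.0), 4.0 * y], axis=1)


def reparametrize(phi):
    """Step 2: move images to equal arc length along the piecewise-linear string."""
    seg = np.linalg.norm(np.diff(phi, axis=0), axis=1)
    c = np.concatenate([[0.0], np.cumsum(seg)])
    s = c / c[-1]                                   # normalized arc length, s[-1] == 1
    s_new = np.linspace(0.0, 1.0, len(phi))
    return np.stack([np.interp(s_new, s, phi[:, k]) for k in range(phi.shape[1])], axis=1)


def string_method(phi, velocity, dt=1e-2, n_iter=2000):
    """Alternate Step 1 (Euler update of interior images) and Step 2 (reparametrization)."""
    phi = reparametrize(np.array(phi, dtype=float))
    for _ in range(n_iter):
        # Step 1, Eq. (3): forward Euler on interior images; endpoints held fixed
        phi[1:-1] += dt * velocity(phi[1:-1])
        # Step 2: restore equal arc-length spacing (plays the role of lambda_t)
        phi = reparametrize(phi)
    return phi


def perp_residual(phi):
    """Largest component of grad V normal to the string (zero on an MEP)."""
    tau = np.gradient(phi, axis=0)
    tau /= np.linalg.norm(tau, axis=1, keepdims=True)
    g = grad_V(phi)
    g_perp = g - (g * tau).sum(axis=1, keepdims=True) * tau
    return np.linalg.norm(g_perp, axis=1).max()


# Usage: start from a curved string between the minima (-1, 0) and (1, 0).
s = np.linspace(0.0, 1.0, 21)
phi0 = np.stack([-np.cos(np.pi * s), 0.8 * np.sin(np.pi * s)], axis=1)
phi = string_method(phi0, velocity=lambda p: -grad_V(p))
print(perp_residual(phi0), perp_residual(phi))  # large before relaxation, near zero after
```

Here the string relaxes onto the segment $y = 0$ through the saddle at the origin, with images equally spaced in $x$. Cubic splines would give a smoother reparametrization at the cost of a few more lines.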
The reparametrization implicitly enforces the constraint associated with $\lambda_t$. To fully specify the dynamics, we must choose both the velocity field $v_t$ for interior images and an initial string $\{\phi_0^{(i)}\}_{i=0}^N$; both choices are discussed in Section 2.3. For more details on the string method, we refer the reader to Appendix B.

2.3 From Transport to Energy to Entropy

What should $v_t$ be? We consider three choices, each motivated by limitations of the previous one.

2.3.1 Pure Transport ($\gamma_t = 0$)

[Figure 2 diagram: strings $\phi_t$, $\phi_{t+1}$, $\phi_{t+2}$; arrows labeled $v_t$ (evolution) and Rep (reparametrization)]
Figure 2: The string method. Grey dashed arrows show Step 1 (evolution): each image moves according to $v_t$, landing at positions marked with ×. Blue dotted arrows show Step 2 (reparametrization): images are redistributed to restore equal arc-length spacing along the string.

The simplest choice sets $v_t = b_t$, the learned velocity field:

$$\dot\phi_t(s) = b_t(\phi_t(s)) + \lambda_t(s)\, \partial_s \phi_t(s). \tag{4}$$

Starting from a curve of noise samples at $t = 0$, this produces at $t = 1$ a continuous path morphing between generated images. For variance-preserving schedules where $\rho_0 = \mathcal N(0, I)$, typical noise samples have norm approximately $\sqrt d$. Given two such samples $z_0$ and $z_1$, a natural initialization is

$$\phi_0(s) = z_0 \cos(\pi s/2) + z_1 \sin(\pi s/2), \tag{5}$$

which traces an approximate geodesic on the sphere of radius $\sqrt d$: independent high-dimensional Gaussian samples are nearly orthogonal and have nearly equal norms, so every point of (5) also has norm close to $\sqrt d$. A reparametrization step may be applied to enforce $|\partial_s \phi_0(s)| = \text{const}$ exactly. Alternatively, to morph between two specific data samples $x_A$ and $x_B$, we can integrate the probability flow backward from $t = 1$ to $t = 0$ to obtain $z_0$ and $z_1$ and use these as endpoints; this is the approach taken in our experiments.

Pure transport produces visually appealing morphs, but the resulting path is simply the image of the initial string under the flow. While individual images remain typical samples of $\rho_t$, the path as a curve is not intrinsically defined: it depends entirely on the initialization.
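The effect of the spherical initialization (5) is easy to check numerically. The sketch below is a toy check that assumes $d = 4 \times 32 \times 32$ (the latent size of the ImageNet experiments in Section 3.1) and uses $s = i/N$ as in Algorithm 1. The normalized norms $\|\phi_0(s)\|/\sqrt d$ stay close to 1 along the whole curve, whereas the midpoint of the straight line between $z_0$ and $z_1$ has norm close to $\sqrt{d/2}$, well inside the sphere where noise samples concentrate. The helper `spherical_init` is a hypothetical name.

```python
import numpy as np


def spherical_init(z0, z1, n_images):
    """Eq. (5) at s = i / N: phi_0(s) = z0 cos(pi s / 2) + z1 sin(pi s / 2)."""
    s = np.linspace(0.0, 1.0, n_images + 1)[:, None]
    return np.cos(np.pi * s / 2.0) * z0 + np.sin(np.pi * s / 2.0) * z1


rng = np.random.default_rng(0)
d = 4 * 32 * 32                      # latent dimension assumed for illustration
z0, z1 = rng.standard_normal(d), rng.standard_normal(d)

phi0 = spherical_init(z0, z1, n_images=70)                        # 71 images
sphere_ratio = np.linalg.norm(phi0, axis=1) / np.sqrt(d)          # stays near 1
cosine = z0 @ z1 / (np.linalg.norm(z0) * np.linalg.norm(z1))      # near 0: nearly orthogonal
linear_mid_ratio = np.linalg.norm(0.5 * (z0 + z1)) / np.sqrt(d)   # near 1 / sqrt(2)

print(sphere_ratio.min(), sphere_ratio.max(), cosine, linear_mid_ratio)
```

The straight-line midpoint falls about 30% short of the typical radius, which is one concrete way in which linear latent interpolation leaves the region where noise samples live.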
To obtain paths with a principled geometric meaning, we must incorporate the score.

2.3.2 Minimum Energy Paths ($\gamma_t \gg 1$, $T = 0$)

Adding the score term to the velocity connects the string to the energy landscape $V_t = -\log \rho_t$:

$$v_t = b_t + \gamma_t^2 s_t = b_t - \gamma_t^2 \nabla V_t. \tag{6}$$

In the limit $\gamma_t \to \infty$, the score term dominates and the string relaxes rapidly toward high-density regions of $\rho_t$. Since this relaxation is much faster than the evolution of $V_t$ itself, the string effectively sees a quasi-static energy landscape at each time, recovering the classical string method and computing minimum energy paths (MEPs).

Definition 2.1 (Minimum Energy Path). A curve $\phi^*: [0,1] \to \mathbb R^d$ is an MEP if the energy gradient (and hence the score) is everywhere tangent to the path:

$$[\nabla V(\phi^*(s))]^\perp = -[s(\phi^*(s))]^\perp = 0, \qquad \forall s \in [0,1],$$

where $[\cdot]^\perp$ denotes the component perpendicular to the tangent $\partial_s \phi^*$.

Since in our setting $V_t = -\log \rho_t$, minimizing energy is equivalent to maximizing likelihood: MEPs are maximum likelihood paths connecting two endpoints. MEPs are geometrically well-defined: they pass through saddle points, identify transition states, and characterize barrier heights. However, in high dimensions, a fundamental problem emerges.

The likelihood-realism paradox. MEPs maximize likelihood along the path, but in high dimensions, high likelihood does not imply high probability mass. Probability mass depends on both the density $\rho(x)$ and the volume of nearby configuration space; the latter is an entropic contribution that dominates in high dimensions. Regions where the product of density and volume is maximized form the typical set, where samples actually concentrate.² As a result, MEPs traverse modes, which lie outside the typical set. This explains the "cartoon" phenomenon [15, 16]: likelihood-maximizing images appear simplified and unrealistic.
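The Gaussian example in footnote 2 can be made quantitative with a few lines. The sketch below is a toy check with a standard Gaussian standing in for $\rho$, not a model from the paper. As $d$ grows, samples concentrate at radius $\approx \sqrt d$ with relative spread $\approx 1/\sqrt{2d}$, while the log-density at the mode exceeds that of a typical sample by about $d/2$ nats, a gap that grows linearly with dimension. This is why maximizing likelihood pulls a path away from where samples actually live.

```python
import numpy as np


def std_gaussian_logpdf(x):
    """log N(x; 0, I_d), evaluated row-wise over the last axis."""
    d = x.shape[-1]
    return -0.5 * np.sum(x ** 2, axis=-1) - 0.5 * d * np.log(2.0 * np.pi)


rng = np.random.default_rng(0)
stats = {}
for d in (2, 100, 10_000):
    x = rng.standard_normal((500, d))
    radius = np.linalg.norm(x, axis=1) / np.sqrt(d)
    # log-likelihood gap between the mode (origin) and typical samples, per dimension
    gap = std_gaussian_logpdf(np.zeros(d)) - std_gaussian_logpdf(x).mean()
    stats[d] = (radius.mean(), radius.std(), gap / d)
    print(f"d={d:>6}: mean r/sqrt(d)={stats[d][0]:.3f}, "
          f"std={stats[d][1]:.3f}, gap/d={stats[d][2]:.3f}")
```

The per-dimension gap stays near 1/2 for every $d$, so the total gap between the mode and the typical set grows linearly with dimension, while the shell of typical samples becomes ever thinner.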
Our experiments confirm the paradox: as $\gamma$ increases, MEPs traverse higher-likelihood regions with increasingly cartoonish intermediates (Figure 4). To find paths connecting samples that remain within the typical set, we must account for entropy. Principal curves, discussed next, achieve this.

2.3.3 Principal Curves ($\gamma_t \gg 1$, $T > 0$)

Principal curves were introduced in [6] to find structure in unstructured data.

Definition 2.2 (Principal Curve). A curve $\phi^*: [0,1] \to \mathbb R^d$ is a principal curve for a distribution $\rho$ if it is self-consistent, i.e., each point equals the conditional expectation of the points that project onto it:

$$\phi^*(s) = \mathbb E_\rho[X \mid s^*(X) = s], \qquad \forall s \in [0,1],$$

where $s^*(X) = \arg\min_{s'} \|X - \phi^*(s')\|$ is the projection of $X$ onto the curve.

[Figure 3 plot: x-axis $t$ from 0 to 1; y-axis $\mathbb E_{\text{model}}[\|s_t - \hat s_t\| / \|s_t\|]$ on a log scale; curves for 2, 8, and 20 dimensions]
Figure 3: Relative score estimation error $\mathbb E_{\text{model}}[\|s_t - \hat s_t\| / \|s_t\|]$ as a function of $t$ for a mixture of Gaussians in various dimensions. The error increases sharply near $t = 1$, motivating the quenching of $\gamma_t$ as $t \to 1$. For details see Appendix C.

In our setting, we take $\rho = \rho_T \propto \rho_1^{1/T}$, where $\rho_1$ is the data distribution and $T \in [0, 1]$ controls the energy-entropy balance, and we fix the endpoints $\phi^*(0) = x_A$ and $\phi^*(1) = x_B$. At $T = 1$, we obtain principal curves for $\rho_1$ connecting the two samples through the typical set; as $T \to 0$, $\rho_T$ concentrates near modes and the principal curve converges to an MEP. Our experiments confirm that increasing $T$ yields increasingly realistic intermediates (Figure 5).

To compute principal curves, we discretize them into $N+1$ images $\{\phi_t^{(i)}\}_{i=0}^N$, where the projection regions become Voronoi cells

$$V_i = \{x : \|x - \phi^{(i)}\| < \|x - \phi^{(j)}\| \text{ for all } j \neq i\}.$$
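The Voronoi construction and a discrete form of the self-consistency condition in Definition 2.2 can be sketched as follows, assuming the points are given as an array (in the method itself they come from walkers rather than from a stored dataset). `voronoi_index` assigns each point to its nearest image, `in_own_cell` is the acceptance test that keeps a walker inside $V_i$, and `self_consistency_residual` compares each image with the mean of the points in its cell; for a principal curve these residuals vanish up to sampling error. All three names are hypothetical.

```python
import numpy as np


def voronoi_index(x, phi):
    """Index i of the cell V_i (nearest string image) for each row of x."""
    d2 = ((x[:, None, :] - phi[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)


def in_own_cell(x_new, phi, i):
    """Voronoi restriction: a proposed walker position is kept only if it stays in V_i."""
    return voronoi_index(np.atleast_2d(x_new), phi)[0] == i


def self_consistency_residual(x, phi):
    """||phi_i - mean of points in V_i|| for each image (NaN for empty cells)."""
    idx = voronoi_index(x, phi)
    res = np.full(len(phi), np.nan)
    for i in range(len(phi)):
        cell = x[idx == i]
        if len(cell) > 0:
            res[i] = np.linalg.norm(cell.mean(axis=0) - phi[i])
    return res


# Usage: points placed symmetrically around three images make the curve self-consistent.
phi = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
offsets = np.array([[0.2, 0.0], [-0.2, 0.0], [0.0, 0.2], [0.0, -0.2]])
x = (phi[:, None, :] + offsets[None, :, :]).reshape(-1, 2)
print(voronoi_index(x, phi))                           # [0 0 0 0 1 1 1 1 2 2 2 2]
print(self_consistency_residual(x, phi))               # numerically zero for every image
print(self_consistency_residual(x, phi + [0.0, 0.1]))  # shifted curve: about 0.1 per image
```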
We associate to each image a walker $x_t^{(i)}$ that samples its Voronoi cell via the full SDE

$$dx_t^{(i)} = b_t(x_t^{(i)})\,dt + \gamma_t^2\, s_t(x_t^{(i)})\,dt + \sqrt{2T}\,\gamma_t\, dW_t, \tag{7}$$

where integration uses timesteps $\Delta t = O(\gamma_t^{-2})$, and we let each walker drag its string image toward the running average of its position. This effectively defines the velocity $v_t$ of the string. Walkers must also remain closer to their associated string image than to any other; moves violating this constraint are rejected, enforcing the Voronoi restriction. Since $b_t$ and $s_t$ are time-dependent, we impose a separation of timescales via $\gamma_t \gg 1$: the walkers equilibrate within their Voronoi cells much faster than the landscape $V_t$ evolves, so the string tracks an approximate principal curve at each instant.

Table 1: Three regimes of string dynamics.

| | Transport | MEP | Principal curve |
|---|---|---|---|
| Parameters | $\gamma_t = 0$ | $\gamma_t \gg 1$, $T = 0$ | $\gamma_t \gg 1$, $T > 0$ |
| Geometric | No | Yes | Yes |
| Entropic | No | No | Yes |
| Realistic | Yes | No | Yes |

The complete finite-temperature string method iterates the following steps for $t \in [0, 1]$:

Step 1: Walker evolution. Evolve each walker $x_t^{(i)}$ according to (7), rejecting moves that violate the minimum-distance criterion.

Step 2: String update. Update each string image via an exponential moving average (EMA): $\phi_{t+\Delta t}^{(i)} = (1 - \eta)\,\phi_t^{(i)} + \eta\, x_{t+\Delta t}^{(i)}$, where $\eta \in (0, 1]$ controls the averaging timescale.

Step 3: Reparametrization. Redistribute string images to equal arc-length spacing.

² For example, the density of a $d$-dimensional Gaussian $\mathcal N(0, I)$ is maximal at the origin, but typical samples lie on a sphere of radius $r \approx \sqrt d$.

Remark 2.3. One might ask why not compute principal curves directly on a pregenerated dataset or the original training data. While this could work for curves that remain within high-density regions, we are primarily interested in principal curves connecting metastable states, such as distinct protein conformations or image modes. These transition regions are precisely where data points are scarce. The string method addresses this by using the learned score to sample locally along the curve, even in low-density regions. This also highlights the importance of the boundary conditions in our setting: we seek principal curves connecting two specified endpoints, which differs from Hastie's original formulation, where the curve is unconstrained.

2.4 Summary and Implementation

Table 1 compares the three regimes in which our framework can operate: pure transport gives appealing but geometrically unmotivated morphs; MEPs provide geometric grounding but fail in high dimensions; principal curves combine geometric meaning with realistic outputs. Algorithm 1 provides the complete procedure for computing strings in any of these regimes.

Algorithm 1: Diffusion String Method
Require: data samples $x_A$, $x_B$; velocity $b_t$; score $s_t$
Require: parameters $\gamma_t$, $T \in [0, 1]$; initial time $t_0$; number of images $N$; timestep $\Delta t = O(\gamma_t^{-2})$
    $z_0, z_1 \leftarrow$ integrate $\dot x_t = b_t(x_t)$ from $t = 1$ to $t = 0$, starting at $x_A$, $x_B$
    Initialize $\phi_{t_0}^{(i)} = z_0 \cos(\pi i / 2N) + z_1 \sin(\pi i / 2N)$ for $i = 0, \dots, N$
    Reparametrize $\{\phi_{t_0}^{(i)}\}$ to equal arc-length spacing
    while $t < 1$ do
        for $i = 0$ to $N$ do
            Evolve $\phi_t^{(i)}$ (and walker $x_t^{(i)}$ if $T > 0$) according to the chosen regime
        end for
        Reparametrize to equal arc-length spacing
    end while
Output: string $\{\phi_1^{(i)}\}_{i=0}^N$ connecting $x_A$ to $x_B$

Quenching near $t = 1$. When using $\gamma_t > 0$ (for example, to compute MEPs or principal curves), we quench $\gamma_t \to 0$ as $t \to 1$ to avoid amplifying errors in the estimated score near the data distribution (Figure 3). This ensures that interior images arrive accurately at $t = 1$.
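The finite-temperature regime (Steps 1 to 3 of Section 2.3.3, as called inside the loop of Algorithm 1) can be sketched on a toy problem. This is an illustration under strong simplifying assumptions, not the paper's implementation. The landscape is a fixed 2D double well $V(x, y) = (x^2 - 1)^2 + 2y^2$, so $b_t = 0$, $s = -\nabla V$, and no quenching is needed. Each interior image has a single walker, and the endpoints are held at the minima. As an additional assumption, a walker that ends up outside its own cell after reparametrization is reset to its image. The function names are hypothetical.

```python
import numpy as np


def grad_V(p):
    """Gradient of the toy double well V(x, y) = (x^2 - 1)^2 + 2 y^2."""
    return np.stack([4.0 * p[:, 0] * (p[:, 0] ** 2 - 1.0), 4.0 * p[:, 1]], axis=1)


def reparametrize(phi):
    """Equal arc-length redistribution by linear interpolation (endpoints kept)."""
    seg = np.linalg.norm(np.diff(phi, axis=0), axis=1)
    c = np.concatenate([[0.0], np.cumsum(seg)])
    s, s_new = c / c[-1], np.linspace(0.0, 1.0, len(phi))
    return np.stack([np.interp(s_new, s, phi[:, k]) for k in range(phi.shape[1])], axis=1)


def nearest(x, phi):
    """Voronoi cell index of each row of x."""
    return ((x[:, None, :] - phi[None, :, :]) ** 2).sum(axis=-1).argmin(axis=1)


def finite_temperature_string(phi, T=0.3, gamma=1.0, eta=0.1, n_iter=3000, rng=None):
    rng = np.random.default_rng() if rng is None else rng
    phi = reparametrize(np.array(phi, dtype=float))
    own = np.arange(1, len(phi) - 1)     # interior images; endpoints stay fixed
    walkers = phi[own].copy()            # one walker per interior image
    dt = 0.1 / gamma ** 2                # Delta t = O(gamma^-2)
    for _ in range(n_iter):
        # Step 1: Eq. (7) with b_t = 0 and s = -grad V, i.e. Langevin at temperature T
        noise = rng.standard_normal(walkers.shape)
        prop = walkers - gamma ** 2 * grad_V(walkers) * dt + np.sqrt(2.0 * T * dt) * gamma * noise
        ok = nearest(prop, phi) == own   # reject moves that leave the walker's Voronoi cell
        walkers[ok] = prop[ok]
        # Step 2: EMA update of each interior image toward its walker
        phi[own] = (1.0 - eta) * phi[own] + eta * walkers
        # Step 3: reparametrize, then reset walkers stranded outside their new cells
        phi = reparametrize(phi)
        stray = nearest(walkers, phi) != own
        walkers[stray] = phi[own][stray]
    return phi


# Usage: start from a curved string between the minima (-1, 0) and (1, 0).
s = np.linspace(0.0, 1.0, 11)
phi0 = np.stack([-np.cos(np.pi * s), 0.8 * np.sin(np.pi * s)], axis=1)
phi_T = finite_temperature_string(phi0, T=0.3, rng=np.random.default_rng(0))
print(np.round(phi_T, 2))
```

Because each image is an EMA of a noisy walker, the final string fluctuates around the symmetric path $y = 0$ rather than sitting exactly on it. A smaller $\eta$ or several walkers per image would reduce this jitter.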
The endpoints always evolve with pure transport ($\gamma_t = 0$), so they return exactly to $x_A$ and $x_B$.

Computational cost. The three regimes differ in expense. Pure transport ($\gamma_t = 0$) requires only forward integration of $b_t$ with moderate timesteps. MEPs and principal curves ($\gamma_t \gg 1$) require smaller timesteps $\Delta t = O(\gamma_t^{-2})$ for stability, which dominates the computational cost. In practice, $N = 50$–$70$ images and, for principal curves, $\eta = 0.1$–$0.5$ provide a good balance between path resolution and cost. Our focus is on interpretability rather than speed: the method provides a tool for analyzing the geometry of pretrained diffusion models, requiring only access to $b_t$ and $s_t$ without any retraining. Our experiments demonstrate that the approach is practical for realistic applications.

[Figure 4 plots: left, string images; right, log-likelihood (dB/dim) versus image index $i$, overlaid on a heatmap of $\log_{10}$ density of images]
Figure 4: Effect of score weight $\gamma$. Left: string realizations for $\gamma$ ranging from 15 (top) to 2 (middle) to $10^{-2}$ (bottom) in logarithmic steps (factor of $\sqrt{10}$). Higher $\gamma$ drives paths through abstract, high-likelihood modes; lower $\gamma$ preserves realism. Right: log-likelihood of images along each string (colored curves), overlaid on the likelihood distribution of ImageNet validation images (heatmap; see Figure 6 for details). The intermediate images along the MEP reach likelihoods far exceeding those of typical images.

[Figure 5 plots: left, string images; right, log-likelihood (dB/dim) versus image index $i$, overlaid on a heatmap of $\log_{10}$ density of images]
Figure 5: Effect of temperature $T$. Left: string realizations for $T$ ranging from 0.1 (top) to 0.5 (middle) to 0.9 (bottom). Lower $T$ drives paths through cartoon-like, high-likelihood regions; higher $T$ produces realistic samples.
Right: log-likelihood of images along each string (colored curves), overlaid on the likelihood distribution of ImageNet validation images (heatmap; see Figure 6). As $T$ increases, the likelihood of the intermediate images decreases toward typical values, and the images become more realistic.

3 Experiments

We demonstrate the string method on two domains: ImageNet for the likelihood-realism paradox, and proteins for conformational transitions.

3.1 Images: The Three Regimes on ImageNet

We apply the string method to ImageNet ($256 \times 256$) using the SiT-XL-2-256 model [26]. Images are encoded to a $4 \times 32 \times 32$ latent space via a VAE. Details of the model architecture are given in Appendix D. Additional image pathways are shown in Appendix H.

Setup. Given two images, we (1) encode them into the Gaussian latent space by integrating the probability flow ODE backward to $t = 0$, (2) initialize a discrete string of $N = 71$ points along a spherical geodesic (Eq. (5)), and (3) evolve the string according to Eq. (7) with score weight $\gamma_t = \gamma$ for $0.1 \le t \le 0.95$ and $\gamma_t = 0$ otherwise, where $\gamma$ is a tunable parameter described below. This schedule prevents the string from drifting away from the typical set (i.e., the sphere in Gaussian latent space) near $t = 0$, while avoiding regions where the score approximation degrades as $t \to 1$ (Figure 3).

Effect of score weight $\gamma$ at $T = 0$. Figure 4 visualizes strings interpolating between two beaver images for varying $\gamma$ and $T = 0$. Full strings contain 71 images, but we show only a subsample of 11 (one image every 6). With a large score weight ($\gamma = 15$, top row), the string is driven toward high-likelihood regions, yielding intermediates that lie close to the MEP. Notably, the maximum-likelihood intermediate is a highly abstract, nearly single-color image, confirming that the MEP passes through likelihood maxima that lie outside the typical set and, as a result, are perceptually unrealistic.
With a moderate score weight ($\gamma = 2$, middle row), intermediates become cartoon-like, consistent with prior observations in diffusion-based interpolation [15, 16]. With small score guidance ($\gamma = 10^{-2}$, bottom row), the string produces visually plausible intermediates throughout. The right panel of Figure 4 reports the log-likelihood along each string: for large $\gamma$, the trajectory passes through a pronounced likelihood peak. The gap between typical-image likelihoods and this maximum provides a rough estimate of the data-manifold diameter in likelihood space.

Effect of temperature $T$. Figure 5 illustrates the finite-temperature method for varying $T$ with $\gamma = 7$. At low temperature ($T = 0.1$, first row), results closely resemble those of the zero-temperature MEP string method, recovering cartoon-like images. At moderate temperature ($T = 0.5$, second row), intermediates exhibit slightly more detail but remain cartoon-like. At high temperature ($T = 0.9$, final row), the principal curve passes through realistic-looking intermediates that balance energy and entropy. Additional examples illustrating the effect of temperature can be found in Appendix H.

[Figure 6 plot: density of images versus log-likelihood (dB/dim); legend: ImageNet Validation Set, MEPs, T = 0.1, T = 0.5, T = 0.9; inset on log scale]
Figure 6: Likelihood distributions. Histogram of log-likelihoods for ImageNet $256 \times 256$ validation images (blue), compared with images along MEPs (orange) and finite-temperature strings at $T = 0.1$ (green), $T = 0.5$ (red), and $T = 0.9$ (purple), aggregated across multiple strings. MEP intermediates have significantly higher likelihood than real images, confirming they lie outside the typical set. As temperature increases, string images approach the likelihood distribution of real data. Inset: same data on log scale.
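The downward shift of likelihood with temperature seen in Figures 5 and 6 has a simple closed form in a toy case. Assuming, for illustration only, that $\rho_1 = \mathcal N(0, I_d)$, the tempered target is $\rho_T \propto \rho_1^{1/T} = \mathcal N(0, T I_d)$, and the expected log-likelihood under $\rho_1$ of a sample from $\rho_T$ is $-\tfrac d2 \log(2\pi) - \tfrac d2 T$ nats, which decreases linearly in $T$. The sketch checks this by Monte Carlo; its per-dimension values are in nats and are not comparable to the dB/dim scale of the figures.

```python
import numpy as np


def log_rho1(x):
    """log-density of rho_1 = N(0, I_d), a stand-in for the data distribution."""
    d = x.shape[-1]
    return -0.5 * np.sum(x ** 2, axis=-1) - 0.5 * d * np.log(2.0 * np.pi)


rng = np.random.default_rng(0)
d, n = 1000, 2000
mc, exact = {}, {}
for T in (0.1, 0.5, 0.9, 1.0):
    x = np.sqrt(T) * rng.standard_normal((n, d))      # samples from rho_T = N(0, T I)
    mc[T] = log_rho1(x).mean() / d                    # Monte Carlo, nats per dimension
    exact[T] = -0.5 * np.log(2.0 * np.pi) - 0.5 * T   # closed form, nats per dimension
    print(f"T={T}: Monte Carlo {mc[T]:.4f}, exact {exact[T]:.4f}")
```

At $T = 1$ the samples sit exactly in the typical set of $\rho_1$, and lowering $T$ moves them toward the mode, where the likelihood is higher.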
Principal curves. Figure 7 shows a principal curve between two images from the goose class, computed using the finite-temperature method with $T = 0.9$ and $\gamma = 7$. The first row displays the principal curve itself: each image is obtained as the EMA of its associated walker. The remaining three rows show the walkers at $t = 1$ that were used to compute the EMA. Upon close inspection, one can observe subtle differences between walkers (an effect of entropy), which average out to produce the smoother images along the principal curve.

Likelihood distributions. In Figure 6, we present the histogram of log-likelihood values for the ImageNet validation set, alongside the log-likelihoods of images obtained from a large collection of strings generated using the algorithms described above. The distribution corresponding to the MEP exhibits pronounced peaks at substantially higher likelihoods than those observed for the validation set. Moreover, the finite-temperature strings display peaks whose locations shift systematically with temperature: as the temperature increases, the peak likelihood decreases. This negative correlation between temperature and likelihood is consistent with theoretical expectations, since $\rho_T \propto \rho_1^{1/T}$ concentrates near high-likelihood modes for small $T$ and spreads toward the typical set of $\rho_1$ as $T \to 1$.

3.2 Proteins: Conformational Transitions

We apply the string method to predict transition pathways between protein conformations using two diffusion models. The first, DiG [20], operates in SE(3) and was trained on experimental structures up to December 2020. The second, ScoreMD [27], operates in $\mathbb R^3$ and includes a Fokker–Planck regularization term during training to improve score estimation near $t = 1$.

Motivation. Proteins fluctuate between metastable conformations, but experiments typically capture only static snapshots. Transition pathways, i.e., the sequences of intermediate structures connecting conformers, are crucial for understanding function but are rarely observed directly.
Computational methods such as molecular dynamics can in principle reveal these pathways, but the timescales involved often exceed what is computationally accessible [28]. We demonstrate that our framework, applied to a pretrained generative model, can predict plausible transition pathways by computing principal curves with the finite-temperature string method. These pathways connect endpoint conformations while remaining within the typical set of the learned distribution, balancing likelihood and entropy.

Method. Given two conformations of the same protein, we apply the string method in the SE(3)-equivariant space of the model. The score function is derived from the model's denoising objective. Details are given in Appendices E and F.

[Figure 7 rows: Principal curve (top); Walkers (bottom three)]
Figure 7: Principal curve for images from the goose class. Top row: images along the principal curve, computed as the EMA of associated walkers ($T = 0.9$, $\gamma = 7$). Bottom three rows: individual walkers at $t = 1$. The walkers exhibit subtle variations due to entropic effects (best seen when zoomed in); averaging produces the smoother images in the principal curve.

Figure 8: Adenylate Kinase transition pathway. Pathway between the open (4AKE) and closed (1AKE) conformations computed using DiG. Intermediate structures maintain physical plausibility (secondary structure preservation, no steric clashes).

Adenylate Kinase. Adenylate kinase (AdK) is a phosphotransferase enzyme that undergoes a large-scale conformational change between open and closed states during catalysis [29]. This transition has been extensively studied as a model system for understanding protein dynamics [30, 31]. Using DiG, we computed the pathway between the open (PDB: 4AKE) and closed (PDB: 1AKE) conformations (Figure 8).
The intermediates preserve secondary structure elements and avoid steric clashes, suggesting physically plausible transitions despite the model being trained only on static structures.

BBA and Chignolin. BBA and Chignolin are small protein domains widely used as model systems for protein folding due to their rapid folding kinetics and simple topologies [32]. Using ScoreMD [27], we computed minimum energy paths (MEPs) connecting extended and folded conformations for both proteins. For ScoreMD, the score function is accurately learned near $t = 1$, allowing us to apply the classical string method at a fixed time to compute MEPs. However, the high dimensionality and rough landscape make optimization sensitive to initialization. We therefore initialize with a string obtained by pure transport ($\gamma = 0$).

Figures 9 and 10 show the computed pathways projected onto the first two time-lagged independent components (TICs) [33]. Left panels display the MEP (yellow) and initial string (purple) projected onto TIC coordinates and overlaid on a free-energy landscape, where free energy is estimated as $-\log \rho$ from a histogram of i.i.d. samples generated by ScoreMD; darker regions indicate lower free energy. The MEP is driven toward lower free-energy regions compared to the initial string. Some path segments may appear to overlap due to projection from high dimensions; asterisks mark equal arc-length intervals to clarify the geometry. Right panels show the free energy profile along each pathway, relative to the starting conformation. The initial string has significantly higher free energy than the converged MEP, demonstrating that the string relaxation is essential. Because the dimensionality is substantially lower than in the image setting, the MEPs remain physically realistic rather than cartoonish. Above the two panels for each protein, we include a three-dimensional rendering of its folding pathway; the protein is colored consistently with the corresponding pathway to facilitate visual correspondence.

[Figure 9 plots: left, TIC 1 versus TIC 2 with Initial String and Converged String; right, Energy ($k_B T$) versus length along path for Initial String and Converged String]
Figure 9: BBA folding pathway. Top: structures along the initial string obtained by pure transport ($\gamma = 0$). Bottom: structures along the converged MEP. Both show progressive formation of secondary structure. Left panel: initial string (purple) and MEP (green) projected onto the first two TICs, overlaid on a free-energy landscape estimated from ScoreMD samples; darker regions indicate lower free energy. Asterisks mark equal arc-length intervals. Right panel: energy profile along each pathway. The MEP achieves significantly lower energy than the initial string.

4 Conclusion

We adapted the string method to probe the geometry of learned distributions using score functions from pretrained generative models. By varying the dynamics (pure transport, gradient-dominated, or finite-temperature), we reveal complementary aspects of the distribution landscape.

Our key finding is that accounting for entropy is essential in high dimensions. Minimum energy paths traverse high-likelihood but low-probability regions, producing cartoonish artifacts. Principal curves, which balance energy against entropy, yield realistic transitions with stronger theoretical grounding. These results confirm and explain prior observations that high-likelihood samples from diffusion models appear unrealistic [15, 16]: the phenomenon is not a model defect but a consequence of concentration of measure, and it can be resolved by accounting for entropy. This establishes the string method as a tool for analyzing generative models beyond sampling: identifying modes, characterizing barriers, and mapping connectivity in complex learned distributions.
Importantly, the method requires no retraining: it needs only access to the learned velocity and score fields.

[Figure 10 plot: left panel, TIC 1 vs. TIC 2 with the initial and converged strings; right panel, energy (k_B T) vs. length along path for the initial and converged strings.]
Figure 10: Chignolin folding pathway. Top: structures along the initial string obtained by pure transport (γ = 0). Bottom: structures along the converged MEP. Both show progressive formation of secondary structure. Left panel: initial string (purple) and MEP (green) projected onto the first two TIC components, overlaid on a free-energy landscape estimated from ScoreMD samples; darker regions indicate lower free energy. Asterisks mark equal arc-length intervals. Right panel: energy profile along each pathway. The MEP achieves significantly lower energy than the initial string.

Limitations. The computed pathways are only as good as the underlying generative model. If the model assigns low probability to physically relevant transition states, the string method cannot recover them. Similarly, errors in score estimation, particularly near the data distribution, propagate to the computed paths, which motivates our quenching strategy.

Future directions. Several extensions are natural. Scaling to larger models (e.g., text-to-image diffusion) would test whether the likelihood-realism tradeoff persists across architectures. Theoretical analysis of convergence rates and approximation error would strengthen the foundations. Beyond images and proteins, the method applies wherever transition pathways matter: molecular design, robotics, and latent-space exploration in multimodal models. Finally, combining string methods with conditional generation could enable targeted pathway computation, for instance finding transitions that pass through specified intermediate states.
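To make the gradient-dominated regime concrete, here is a minimal toy sketch (not the authors' implementation) of a fixed-time string iteration: every image moves along a score field, and the images are then redistributed to equal arc length as in Appendix B.2. The potential V, the functions score, reparametrize and string_method, and all parameter values are hypothetical. In a real application, score would be replaced by a pretrained model's score at a fixed time, and the sketch uses piecewise-linear interpolation in place of the cubic spline of Appendix B.2.

```python
import numpy as np


def score(phi):
    """Score of a hypothetical toy density log rho(x, y) = -V(x, y) + const,
    with V(x, y) = (x**2 - 1)**2 + 2 * y**2, so the score is -grad V.
    Stands in for a learned score s_t evaluated at a fixed time t."""
    x, y = phi[:, 0], phi[:, 1]
    return np.stack([-4.0 * x * (x**2 - 1.0), -4.0 * y], axis=1)


def reparametrize(phi):
    """Redistribute images to equal arc-length spacing (cf. Appendix B.2),
    using piecewise-linear interpolation instead of a cubic spline."""
    seg = np.linalg.norm(np.diff(phi, axis=0), axis=1)
    arc = np.concatenate([[0.0], np.cumsum(seg)])  # cumulative arc lengths L_i
    alpha = arc / arc[-1]                          # normalized alpha_i in [0, 1]
    targets = np.linspace(0.0, 1.0, len(phi))      # uniform positions i / N
    return np.stack(
        [np.interp(targets, alpha, phi[:, k]) for k in range(phi.shape[1])], axis=1
    )


def string_method(n_images=21, dt=0.01, n_iter=1000):
    """Zero-temperature string: move every image along the score, then reparametrize."""
    s = np.linspace(0.0, 1.0, n_images)
    # Curved initial string between the two minima (-1, 0) and (1, 0).
    phi = np.stack([-1.0 + 2.0 * s, 0.8 * np.sin(np.pi * s)], axis=1)
    for _ in range(n_iter):
        phi = phi + dt * score(phi)  # gradient-dominated step (endpoints included)
        phi = reparametrize(phi)     # enforce equal arc-length spacing
    return phi


phi = string_method()
print(np.abs(phi[:, 1]).max())  # transverse deviation from the MEP y = 0
```

For this toy density the minimum energy path is the segment y = 0 between the two minima, so the curved initial string should relax onto that segment with equally spaced images. The endpoints sit at minima where the score vanishes, so they stay fixed even though they are evolved like every other image.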
Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none of which we feel must be specifically highlighted here.

References

[1] Graeme Henkelman, Blas P. Uberuaga, and Hannes Jónsson. A climbing image nudged elastic band method for finding saddle points and minimum energy paths. The Journal of Chemical Physics, 113(22):9901–9904, 2000.
[2] Weinan E, Weiqing Ren, and Eric Vanden-Eijnden. String method for the study of rare events. Physical Review B, 66(5):052301, 2002.
[3] Weiqing Ren, Eric Vanden-Eijnden, et al. Simplified and improved string method for computing the minimum energy paths in barrier-crossing events. The Journal of Chemical Physics, 126(16), 2007.
[4] Luca Maragliano, Alexander Fischer, Eric Vanden-Eijnden, and Giovanni Ciccotti. String method in collective variables: minimum free energy paths and isocommittor surfaces. The Journal of Chemical Physics, 125(2), 2006.
[5] Weinan E, Weiqing Ren, and Eric Vanden-Eijnden. Finite temperature string method for the study of rare events. The Journal of Physical Chemistry B, 109(14):6688–6693, 2005.
[6] Trevor Hastie and Werner Stuetzle. Principal curves. Journal of the American Statistical Association, 84(406):502–516, 1989.
[7] Eric Vanden-Eijnden et al. Towards a theory of transition paths. Journal of Statistical Physics, 123(3):503–523, 2006.
[8] Weinan E and Eric Vanden-Eijnden. Transition path theory and path-finding algorithms for the study of rare events. Annual Review of Physical Chemistry, 61:391–420, 2010.
[9] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in Neural Information Processing Systems, 33:6840–6851, 2020.
[10] Yang Song, Jascha Sohl-Dickstein, Diederik P. Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. Score-based generative modeling through stochastic differential equations. In International Conference on Learning Representations, 2021.
[11] Michael S. Albergo and Eric Vanden-Eijnden. Building normalizing flows with stochastic interpolants. In International Conference on Learning Representations, 2023.
[12] Michael S. Albergo, Nicholas M. Boffi, and Eric Vanden-Eijnden. Stochastic interpolants: A unifying framework for flows and diffusions, 2023.
[13] Yaron Lipman, Ricky T. Q. Chen, Heli Ben-Hamu, Maximilian Nickel, and Matthew Le. Flow matching for generative modeling. In The Eleventh International Conference on Learning Representations, 2023.
[14] Xingchao Liu, Chengyue Gong, and Qiang Liu. Flow straight and fast: Learning to generate and transfer data with rectified flow. In The Eleventh International Conference on Learning Representations, 2023.
[15] Florentin Guth, Zahra Kadkhodaie, and Eero P. Simoncelli. Learning normalized image densities via dual score matching. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.
[16] Rafal Karczewski, Markus Heinonen, and Vikas Garg. Diffusion models as cartoonists: The curious case of high density regions. In The Thirteenth International Conference on Learning Representations, 2025.
[17] Kaiwen Zhang, Yifan Zhou, Xudong Xu, Bo Dai, and Xingang Pan. DiffMorpher: Unleashing the capability of diffusion models for image morphing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 7912–7921, 2024.
[18] Clinton Wang and Polina Golland. Interpolating between images with diffusion models. In Proceedings of the ICML 2023 Workshop on Challenges of Deploying Generative AI, 2023.
[19] Bowen Jing, Bonnie Berger, and Tommi Jaakkola. AlphaFold meets flow matching for generating protein ensembles, 2024.
[20] Shuxin Zheng, Jiyan He, Chang Liu, Yu Shi, Ziheng Lu, Weitao Feng, Fusong Ju, Jiaxi Wang, Jianwei Zhu, Yaosen Min, He Zhang, Shidi Tang, Hongxia Hao, Peiran Jin, Chi Chen, Frank Noé, Haiguang Liu, and Tie-Yan Liu. Towards predicting equilibrium distributions for molecular systems with deep learning, 2023.
[21] Bowen Jing, Bonnie Berger, and Tommi Jaakkola. EigenFold: Generative protein structure prediction with diffusion models, 2023.
[22] Yan Wang, Lihao Wang, Yuning Shen, Yiqun Wang, Huizhuo Yuan, Yue Wu, and Quanquan Gu. Protein conformation generation via force-guided SE(3) diffusion models, 2024.
[23] Kevin E. Wu, Kevin K. Yang, Rianne van den Berg, Sarah Alamdari, James Y. Hooper, Emine Kucukbenli, Lucy Colwell, et al. Protein structure generation via folding diffusion, 2024.
[24] Hans Frauenfelder, Stephen G. Sligar, and Peter G. Wolynes. The energy landscapes and motions of proteins. Science, 254(5038):1598–1603, 1991.
[25] Katherine Henzler-Wildman and Dorothee Kern. Dynamic personalities of proteins. Nature, 450(7172):964–972, 2007.
[26] Nanye Ma, Mark Goldstein, Michael S. Albergo, Nicholas M. Boffi, Eric Vanden-Eijnden, and Saining Xie. SiT: Exploring flow and diffusion-based generative models with scalable interpolant transformers, 2024.
[27] Michael Plainer, Hao Wu, Leon Klein, Stephan Günnemann, and Frank Noé. Consistent sampling and simulation: Molecular dynamics with energy-based diffusion models, 2026.
[28] David E. Shaw, Paul Maragakis, Kresten Lindorff-Larsen, Stefano Piana, Ron O. Dror, Michael P. Eastwood, Joseph A. Bank, John M. Jumper, John K. Salmon, Yibing Shan, et al. Atomic-level characterization of the structural dynamics of proteins. Science, 330(6002):341–346, 2010.
[29] Christoph W. Müller, Gerwald J. Schlauderer, Jochen Reinstein, and Georg E. Schulz. Adenylate kinase motions during catalysis: an energetic counterweight balancing substrate binding. Structure, 4(2):147–156, 1996.
[30] Karunesh Arora and Charles L. Brooks III. Large-scale allosteric conformational transitions of adenylate kinase appear to involve a population-shift mechanism. Proceedings of the National Academy of Sciences, 104(47):18496–18501, 2007.
[31] Oliver Beckstein, Elizabeth J. Denning, Juan R. Perilla, and Thomas B. Woolf. Zipping and unzipping of adenylate kinase: atomistic insights into the ensemble of open–closed transitions. Journal of Molecular Biology, 394(1):160–176, 2009.
[32] Kresten Lindorff-Larsen, Stefano Piana, Ron O. Dror, and David E. Shaw. How fast-folding proteins fold. Science, 334(6055):517–520, 2011.
[33] Feng Liu, Deguo Du, Amelia A. Fuller, Amar Bhakta, Vijay Bhakta, Kelly A. Dunn, Mohit Bhakta, and Martin Gruebele. Desolvation is a likely origin of robust enthalpic barriers to protein folding. Journal of Molecular Biology, 393(1):227–236, 2008.
[34] Martina Jäger, Hoang Nguyen, Jonathan C. Crane, Jeffery W. Kelly, and Martin Gruebele. Structure-function-folding relationship in a WW domain. Proceedings of the National Academy of Sciences, 103(28):10648–10653, 2006.

A Background on Diffusion Models

A.1 Velocity and Score: Definitions and Estimators

In the stochastic interpolant framework, we construct a time-dependent density $\rho_t$ via the interpolant $I_t = \alpha_t x_0 + \beta_t x_1$ with $x_0 \sim \mathcal{N}(0, I)$ and $x_1 \sim \rho_1$. Common choices include:
• Linear: $\alpha_t = 1 - t$, $\beta_t = t$.
• Trigonometric: $\alpha_t = \cos(\pi t / 2)$, $\beta_t = \sin(\pi t / 2)$ (variance-preserving).
• $\alpha_t = \sqrt{1 - t^2}$, $\beta_t = t$ (variance-preserving; time-rescaled Ornstein–Uhlenbeck).

The velocity field and score are defined as
$$b_t(x) = \mathbb{E}[\dot I_t \mid I_t = x], \tag{8}$$
$$s_t(x) = \nabla \log \rho_t(x). \tag{9}$$
Both can be estimated by regression: $b_t$ by minimizing $\mathbb{E}[|\dot I_t - b_t(I_t)|^2]$, and $s_t$ via denoising score matching using the conditional Gaussian structure $I_t \mid x_1 \sim \mathcal{N}(\beta_t x_1, \alpha_t^2 I)$. Since $s_t(x) = -\mathbb{E}[x_0 \mid I_t = x] / \alpha_t$, the two are related by
$$s_t(x) = \frac{\dot\beta_t x - \beta_t b_t(x)}{\alpha_t(\dot\alpha_t \beta_t - \alpha_t \dot\beta_t)}. \tag{10}$$

A.2 The General SDE and Fokker–Planck Equation

The general SDE used in our framework is
$$dx_t = b_t(x_t)\,dt + \gamma_t^2 s_t(x_t)\,dt + \sqrt{2}\,\gamma_t\,dW_t. \tag{11}$$
The corresponding Fokker–Planck equation for the density is
$$\partial_t \rho_t + \nabla \cdot \big((b_t + \gamma_t^2 s_t)\rho_t\big) = \gamma_t^2 \Delta \rho_t. \tag{12}$$
Substituting $s_t = \nabla \log \rho_t$, one can verify that $\rho_t$ is preserved for any $\gamma_t \ge 0$: the score correction $\gamma_t^2 s_t \rho_t = \gamma_t^2 \nabla \rho_t$ and the diffusion term $\gamma_t^2 \Delta \rho_t$ cancel in the flux, leaving the evolution determined by $b_t$ alone. This is a local detailed balance condition at each time $t$; since $b_t$ and $s_t$ are time-dependent, the overall dynamics describe nonequilibrium sampling from $\rho_0$ to $\rho_1$.

A.3 Likelihood Computation via the Probability Flow ODE

Setting $\gamma_t = 0$ in the general SDE gives the probability flow ODE
$$\dot x_t = b_t(x_t). \tag{13}$$
The corresponding transport equation for the density is
$$\partial_t \rho_t + \nabla \cdot (b_t \rho_t) = 0. \tag{14}$$
This can be solved by the method of characteristics. Let $x_t$ be a solution of the ODE starting from $x_0$. Differentiating $\rho_t(x_t)$ along the flow, we deduce
$$\frac{d}{dt}\rho_t(x_t) = \partial_t \rho_t(x_t) + \nabla \rho_t(x_t) \cdot \dot x_t = \partial_t \rho_t(x_t) + \nabla \rho_t(x_t) \cdot b_t(x_t). \tag{15}$$
Using the transport equation $\partial_t \rho_t = -\nabla \cdot (b_t \rho_t) = -b_t \cdot \nabla \rho_t - \rho_t \nabla \cdot b_t$, we obtain
$$\frac{d}{dt}\rho_t(x_t) = -\rho_t(x_t)\, \nabla \cdot b_t(x_t). \tag{16}$$
This is a linear ODE in $\rho_t(x_t)$, with solution
$$\rho_t(x_t) = \rho_0(x_0) \exp\Big(-\int_0^t \nabla \cdot b_\tau(x_\tau)\, d\tau\Big). \tag{17}$$
Taking logarithms gives the log-likelihood
$$\log \rho_t(x_t) = \log \rho_0(x_0) - \int_0^t \nabla \cdot b_\tau(x_\tau)\, d\tau. \tag{18}$$
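Equations (17) and (18) can be checked numerically on a case where everything is known in closed form. The sketch below assumes the linear interpolant and a hypothetical Gaussian target $\rho_1 = \mathcal{N}(0, \sigma^2 I)$, for which $\rho_t = \mathcal{N}(0, ((1-t)^2 + t^2\sigma^2) I)$ and $b_t(x)$ is linear in $x$ with an analytic divergence. It integrates the probability flow ODE backward from $t = 1$ to $t = 0$ with RK4, accumulates $\int_0^1 \nabla \cdot b_t\,dt$, and adds the standard Gaussian base log-density. The function names and parameter values are illustrative only; for a trained network the divergence would have to come from automatic differentiation or a stochastic trace estimator, which this sketch does not attempt.

```python
import numpy as np


def velocity(t, x, sigma):
    """Exact b_t(x) = E[dI_t/dt | I_t = x] for I_t = (1 - t) x0 + t x1 with
    x0 ~ N(0, I) and a hypothetical target x1 ~ N(0, sigma^2 I)."""
    var_t = (1.0 - t) ** 2 + (t * sigma) ** 2
    return (t * sigma**2 - (1.0 - t)) / var_t * x


def divergence(t, x, sigma):
    """Exact divergence of the linear field above: dimension times its coefficient."""
    var_t = (1.0 - t) ** 2 + (t * sigma) ** 2
    return x.size * (t * sigma**2 - (1.0 - t)) / var_t


def log_likelihood(x1, sigma, n_steps=200):
    """Estimate log rho_1(x1) via (18): integrate dx/dt = b_t(x) backward from
    t = 1 to t = 0 with RK4 while accumulating the integral of div b_t."""
    x = np.asarray(x1, dtype=float).copy()
    h = 1.0 / n_steps
    t = 1.0
    int_div = 0.0
    for _ in range(n_steps):
        # RK4 stages for the augmented system (x, integral of div b), stepping t -> t - h.
        k1 = velocity(t, x, sigma)
        k2 = velocity(t - h / 2, x - h / 2 * k1, sigma)
        k3 = velocity(t - h / 2, x - h / 2 * k2, sigma)
        k4 = velocity(t - h, x - h * k3, sigma)
        d1 = divergence(t, x, sigma)
        d2 = divergence(t - h / 2, x - h / 2 * k1, sigma)
        d3 = divergence(t - h / 2, x - h / 2 * k2, sigma)
        d4 = divergence(t - h, x - h * k3, sigma)
        x = x - h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        int_div += h / 6 * (d1 + 2 * d2 + 2 * d3 + d4)
        t -= h
    d = x.size
    log_rho0 = -0.5 * np.dot(x, x) - 0.5 * d * np.log(2 * np.pi)  # log N(x0; 0, I)
    return log_rho0 - int_div


x1 = np.array([1.0, -0.5, 2.0])
sigma = 2.0
# Closed-form log N(x1; 0, sigma^2 I) for comparison.
exact = -0.5 * np.dot(x1, x1) / sigma**2 - 0.5 * x1.size * np.log(2 * np.pi * sigma**2)
print(log_likelihood(x1, sigma), exact)
```

Because the toy velocity field is linear, the backward flow simply rescales $x_1$ to $x_1/\sigma$ and the divergence integral equals $d \log \sigma$, so the two printed numbers should agree up to integration error.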
In practice, given a data sample $x_1 \sim \rho_1$, we integrate the ODE backward from $t = 1$ to $t = 0$ to obtain $x_0$, and accumulate the divergence $\nabla \cdot b_t$ along the trajectory. Since $\rho_0 = \mathcal{N}(0, I)$, the term $\log \rho_0(x_0)$ is simply $-\frac{1}{2}\|x_0\|^2 - \frac{d}{2}\log(2\pi)$.

B String Method: Derivation and Implementation

B.1 Continuous Evolution

We derive the continuous string evolution equation from the constraint that the arc-length parametrization is preserved.

Proposition B.1. Let $\phi_t : [0, 1] \to \mathbb{R}^d$ be a curve evolving under a velocity field $v_t$. Assume that the endpoints evolve as independent points:
$$\dot\phi_t(0) = v_t(\phi_t(0)), \qquad \dot\phi_t(1) = v_t(\phi_t(1)). \tag{19}$$
Then, for $s \in (0, 1)$, the evolution
$$\dot\phi_t(s) = v_t(\phi_t(s)) + \lambda_t(s)\, \partial_s \phi_t(s) \tag{20}$$
preserves the arc-length parametrization $|\partial_s \phi_t(s)| = L(t)$ (constant in $s$) for an appropriate choice of $\lambda_t(s)$ with $\lambda_t(0) = \lambda_t(1) = 0$.

Proof. The arc-length parametrization requires $|\partial_s \phi_t|^2$ to be constant in $s$, i.e., $\langle \partial_s \phi_t, \partial_s^2 \phi_t \rangle = 0$ for all $s$ and $t$. Taking the time derivative:
$$\partial_t \langle \partial_s \phi_t, \partial_s^2 \phi_t \rangle = \langle \partial_s \dot\phi_t, \partial_s^2 \phi_t \rangle + \langle \partial_s \phi_t, \partial_s^2 \dot\phi_t \rangle = 0. \tag{21}$$
Substituting $\dot\phi_t = v_t(\phi_t) + \lambda_t \partial_s \phi_t$ and requiring this to hold for all $s$ determines $\lambda_t(s)$. The boundary conditions $\lambda_t(0) = \lambda_t(1) = 0$ are consistent with the endpoints evolving according to $v_t$.

In practice, we enforce the constraint via discrete reparametrization rather than computing $\lambda_t$ explicitly. The derivation above applies to $\mathbb{R}^d$ with the Euclidean metric; for Riemannian manifolds such as $SO(3)$, we work directly with the discrete algorithm.

B.2 Reparametrization on $\mathbb{R}^d$

The discrete reparametrization step redistributes the points $\{\phi^{(i)}\}_{i=0}^{N}$ to equal arc-length spacing:
1. Compute cumulative arc-lengths: $L_0 = 0$, $L_i = L_{i-1} + |\phi^{(i)} - \phi^{(i-1)}|$.
2. Normalize: $\alpha_i = L_i / L_N \in [0, 1]$.
3. Fit a cubic spline through $(\alpha_i, \phi^{(i)})$.
4. Evaluate at uniform positions: $\phi^{(i)}_{\mathrm{new}} = \mathrm{spline}(i/N)$.

B.3 Reparametrization on $SO(3)$

On $SO(3)$, reparametrization requires converting between the rotation-matrix representation $\{\phi^{(i)}_M\}_{i=0}^{N}$ and the axis-angle vector representation $\{\phi^{(i)}_V\}_{i=0}^{N}$. To reparametrize into $K + 1$ equally spaced points $\{\psi^{(j)}\}_{j=0}^{K}$:
1. Compute the incremental rotations: $s^{(i)}_M = \phi^{(i)}_M (\phi^{(i-1)}_M)^{-1}$ for $1 \le i \le N$.
2. Compute cumulative arc-lengths in the axis-angle representation: $L_0 = 0$, $L_i = L_{i-1} + |s^{(i)}_V|$.
3. Normalize: $\alpha_i = L_i / L_N \in [0, 1]$.
4. For each $0 \le j \le K$, find the preceding index $p(j) = \max\{i : \alpha_i \le j/K\}$.
5. Compute interpolated displacements: $d^{(j)}_V = s^{(p(j)+1)}_V \cdot \frac{j/K - \alpha_{p(j)}}{\alpha_{p(j)+1} - \alpha_{p(j)}}$.
6. Set $\psi^{(0)}_M = \phi^{(0)}_M$, $\psi^{(K)}_M = \phi^{(N)}_M$, and for $1 \le j \le K - 1$: $\psi^{(j)}_M = d^{(j)}_M \phi^{(p(j))}_M$, where $d^{(j)}_M$ is the rotation matrix with axis-angle vector $d^{(j)}_V$.

B.4 Reparametrization on $SE(3)$

The group $SE(3)$ is the semidirect product of $\mathbb{R}^3$ and $SO(3)$; hence every element $\phi \in SE(3)$ can be written as $\phi = (t, R)$ with $t \in \mathbb{R}^3$ and $R \in SO(3)$. There is no canonical choice of norm on $SE(3)$; in this paper we use $|\phi| = \sqrt{|t|^2 + |R_V|^2}$, where $|R_V|$ is the norm of the axis-angle vector of $R$. With this norm, we reparametrize as follows:
1. Compute the incremental rotations: $s^{(i)}_M = R^{(i)}_M (R^{(i-1)}_M)^{-1}$ for $1 \le i \le N$.
2. Compute the incremental translations: $q^{(i)} = t^{(i)} - t^{(i-1)}$ for $1 \le i \le N$.
3. Compute cumulative arc-lengths with this norm: $L_0 = 0$, $L_i = L_{i-1} + \sqrt{|q^{(i)}|^2 + |s^{(i)}_V|^2}$.
4. Normalize: $\alpha_i = L_i / L_N \in [0, 1]$.
5. For each $0 \le j \le K$, find the preceding index $p(j) = \max\{i : \alpha_i \le j/K\}$.
6. Compute interpolated rotation displacements: $d^{(j)}_V = s^{(p(j)+1)}_V \cdot \frac{j/K - \alpha_{p(j)}}{\alpha_{p(j)+1} - \alpha_{p(j)}}$.
7. Compute interpolated translation displacements: $y^{(j)} = q^{(p(j)+1)} \cdot \frac{j/K - \alpha_{p(j)}}{\alpha_{p(j)+1} - \alpha_{p(j)}}$.
8. Set $\psi^{(0)} = \phi^{(0)}$, $\psi^{(K)} = \phi^{(N)}$, and for $1 \le j \le K - 1$: $\psi^{(j)} = \big(t^{(p(j))} + y^{(j)},\ d^{(j)}_M R^{(p(j))}_M\big)$.

C Testing the Score Reliability on Gaussian Mixtures

To examine how the score estimation error varies along the generative process (i.e., as $t$ increases from 0 to 1), we trained MLPs to learn a stochastic interpolant transporting a Gaussian distribution to a mixture of two Gaussians in $d$ dimensions, for different values of $d$. The two modes of the mixture have means $(\pm 3.0, 0, \ldots, 0) \in \mathbb{R}^d$ and covariance matrices
$$\begin{pmatrix} \frac{7.9}{4} & \pm\frac{6.7\sqrt{3}}{4} \\ \pm\frac{6.7\sqrt{3}}{4} & \frac{7.9}{4} \end{pmatrix} \oplus I_{d-2},$$
where the sign corresponds to the sign of the mean. We parametrized the score function using an MLP with 3 hidden layers of sizes 512, 1024, and 512. Each model was trained with batch size 1000 for $1500 \times d$ iterations. For inference, we used batch size $5000 \times d$ and 200 timesteps. For each value of $d$, we trained 10 models; Figure 3 shows the average relative score error $\mathbb{E}[|s_t - \hat s_t| / |s_t|]$ as a function of $t$.

D Hyperparameters for SiT

Table 2: Hyperparameter choices for the SiT model.

Hyperparameter (neural network) | Value
Depth | 28
Hidden size | 1152
Patch size | 2
Number of attention heads | 16
MLP ratio | 4.0
Class dropout probability | 0.1
Input size | 32
Input channels | 4

E Hyperparameters for DiG

Table 3: Hyperparameters of the Distributional Graphormer protein model.
Hyperparameter | Value
Initialization | PIDP data training
Model depth | 12
Hidden dim (single) | 768
Hidden dim (pair) | 256
Hidden dim (feed-forward) | 1024
Number of heads | 32

F Hyperparameters for ScoreMD

Table 4: Graph transformer model definitions extracted from the configuration file.

Model name | hidden_nf | feature_embedding_dim | n_layers | potential | dropout
transformer_large_score | 128 | 16 | 3 | false | 0.0
transformer_large_potential | 128 | 16 | 3 | true | 0.0

Table 5: Ranged model configuration extracted from the model's configuration file. Note that for this model, t = 0 corresponds to interpolant time t = 1, and vice versa.

Entry | Model reference | Range
1 | transformer_large_score | [1.0, 0.6]
2 | transformer_large_score | [0.6, 0.1]
3 | transformer_large_potential | [0.1, 0.0]

G Another Model: ConfDiff

We also applied our method to another model, ConfDiff [22], which operates on SO(3) and was trained on the Protein Data Bank up to December 2021.

WW Domain. The WW domain is a small protein module that has served as a model system for studying protein folding due to its fast folding kinetics and simple topology [34]. Using ConfDiff, we computed the folding pathway from an extended to a folded conformation (Figure 11). The pathway reveals progressive formation of secondary structure, consistent with experimental observations of WW domain folding [33].

Figure 11: Pathway from an extended to a folded conformation computed using ConfDiff. Intermediates show progressive formation of secondary structure.

G.1 Hyperparameters of the Model

Table 6: Hyperparameter choices for the ConfDiff model.

Hyperparameter (neural network) | Value
Number of IPA blocks | 4
Dimension of single repr. | 256
Dimension of pairwise repr. | 128
Dimension of hidden | 256
Number of IPA attention heads | 4
Number of IPA query points | 8
Number of IPA value points | 12
Number of transformer attention heads | 4
Number of transformer layers | 2

H Additional Experiments

H.1 Effect of the Temperature in the Finite-Temperature String Method

[Figures 12–21: each plots the log-likelihood (dB/dims) of the images against the image index i along the principal curve, with a color scale giving the log10 density of images.]
Figure 12: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 13: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 14: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 15: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 16: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 17: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 18: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 19: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 20: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
Figure 21: Effect of temperature T on the image principal curve. Lower T drives paths through cartoonish high-likelihood modes (top); higher T preserves realism (bottom).
H.2 Multiple Realizations of a Principal Curve

[Figures 22–31: each shows a principal curve (top row, "Principal Curve") above its walkers (bottom rows, "Walkers").]
Figure 22: A principal curve with three realizations (walkers) coming from this curve.
Figure 23: A principal curve with three realizations (walkers) coming from this curve.
Figure 24: A principal curve with three realizations (walkers) coming from this curve.
Figure 25: A principal curve with three realizations (walkers) coming from this curve.
Figure 26: A principal curve with three realizations (walkers) coming from this curve.
Figure 27: A principal curve with three realizations (walkers) coming from this curve.
Figure 28: A principal curve with three realizations (walkers) coming from this curve.
Figure 29: A principal curve with three realizations (walkers) coming from this curve.
Figure 30: A principal curve with three realizations (walkers) coming from this curve.
Figure 31: A principal curve with three realizations (walkers) coming from this curve.
