Transmission Delay Minimization for NOMA-Based F-RANs

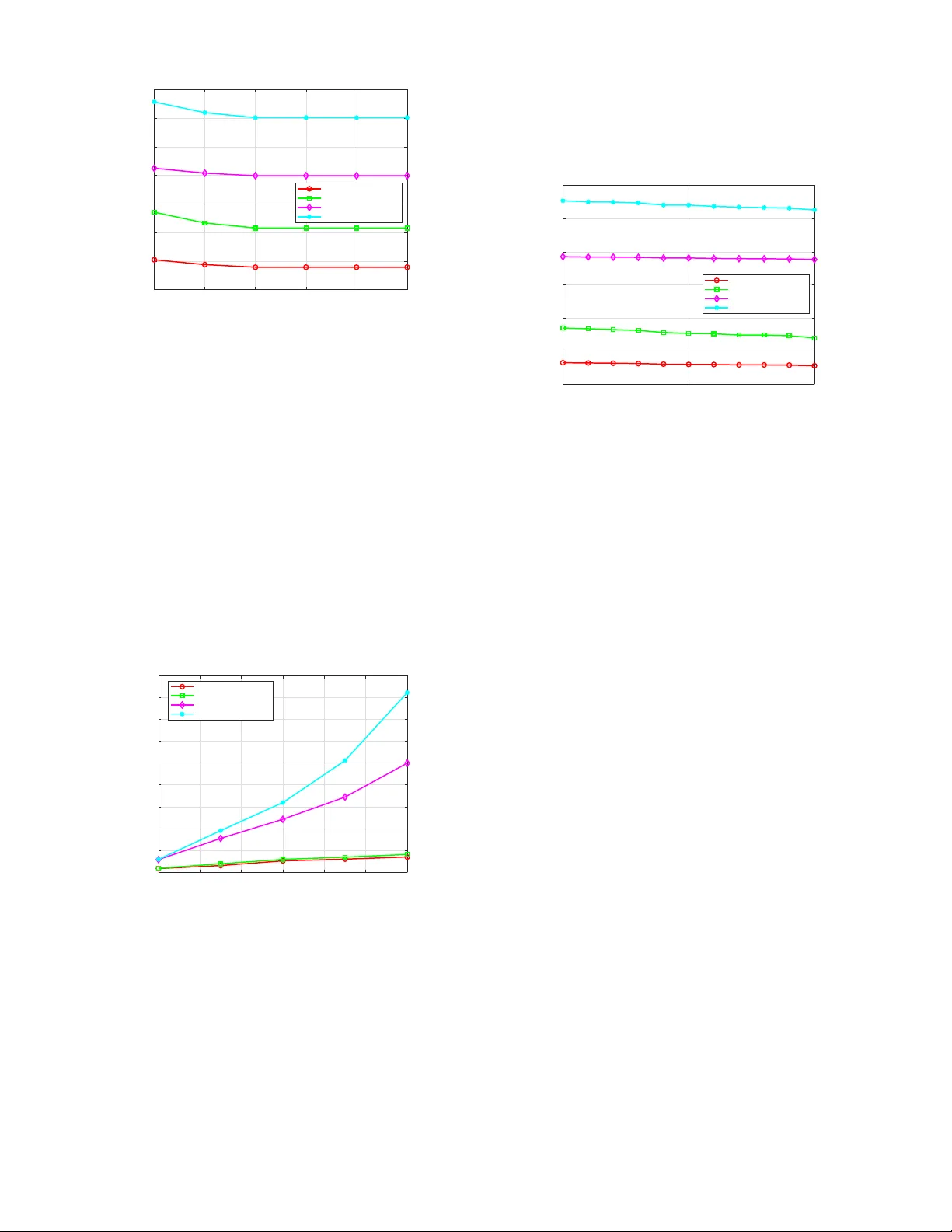

A novel low-delay service framework based on non-orthogonal multiple access (NOMA) is proposed for fog radio access networks (F-RANs). Fog access points (FAPs) leverage NOMA for local delivery of cached content, while the cloud access point employs NOMA…

Authors: Yuan Ai, Xidong Mu, Pengbo Si