Language Models Exhibit Inconsistent Biases Towards Algorithmic Agents and Human Experts

Large language models are increasingly used in decision-making tasks that require them to process information from a variety of sources, including both human experts and other algorithmic agents. How do LLMs weigh the information provided by these di…

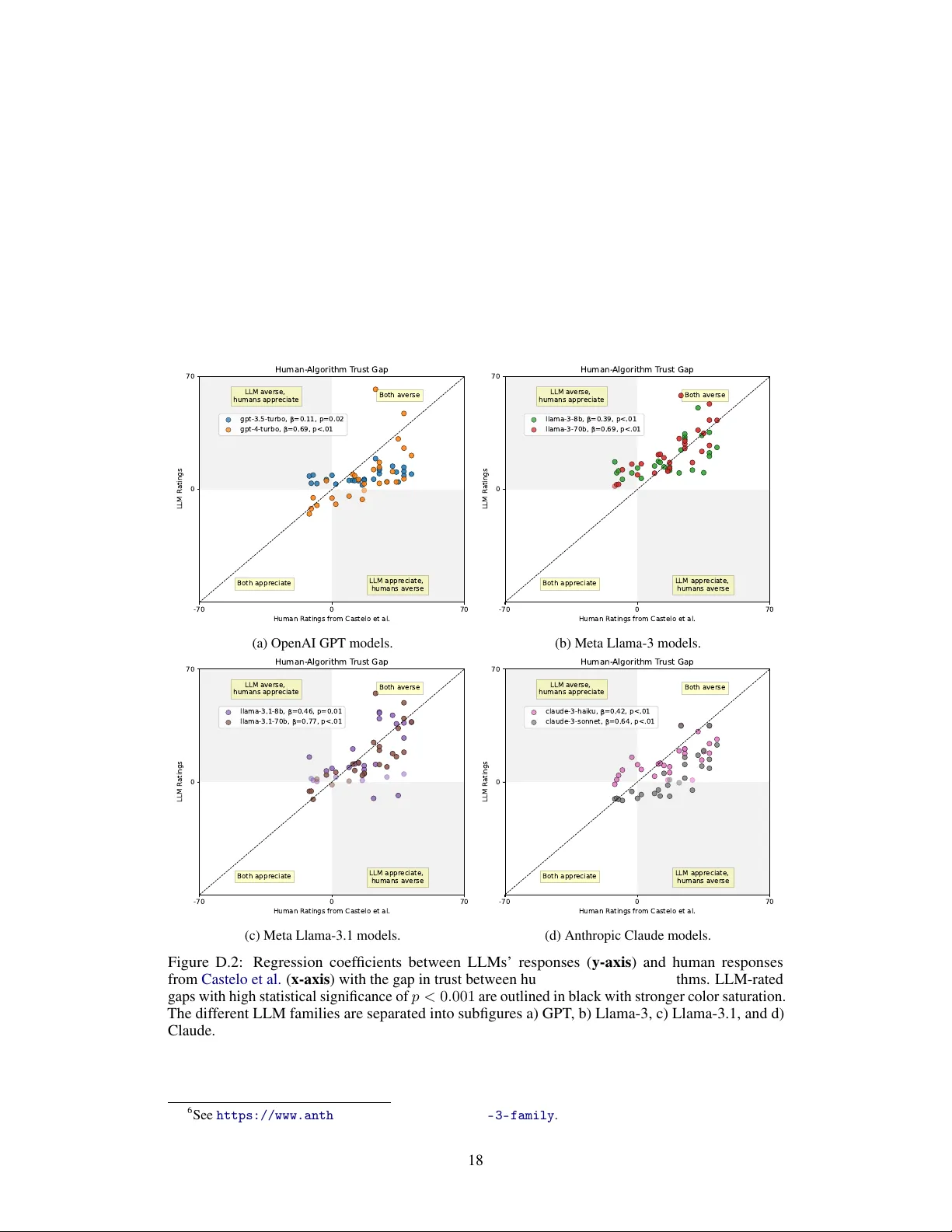

Authors: Jessica Y. Bo, Lillio Mok, Ashton Anderson