TG-ASR: Translation-Guided Learning with Parallel Gated Cross Attention for Low-Resource Automatic Speech Recognition

Low-resource automatic speech recognition (ASR) continues to pose significant challenges, primarily due to the limited availability of transcribed data for numerous languages. While a wealth of spoken content is accessible in television dramas and on…

Authors: Cheng-Yeh Yang, Chien-Chun Wang, Li-Wei Chen

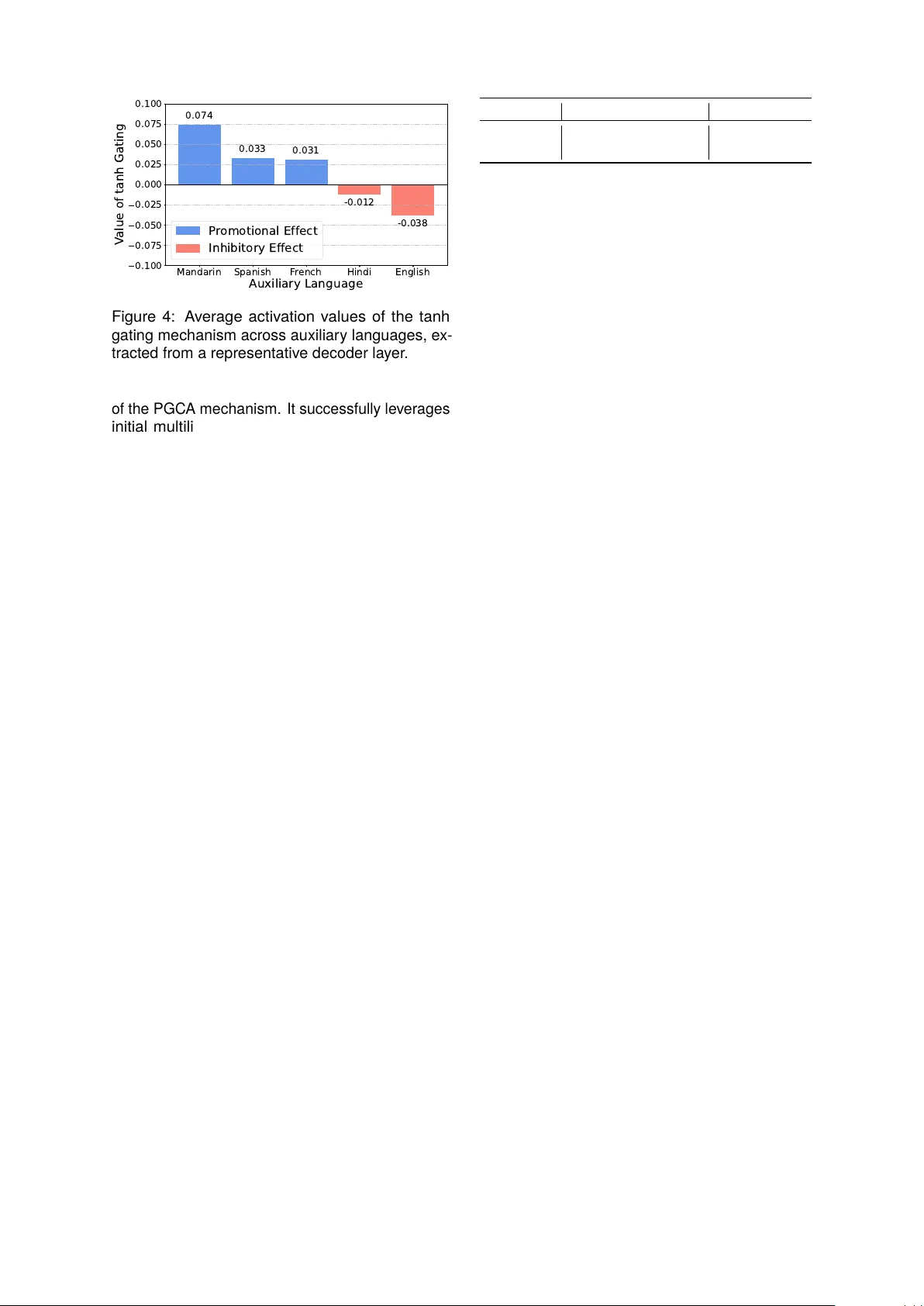

Cheng-Yeh Yang¹, Chien-Chun Wang¹, Li-Wei Chen³, Hung-Shin Lee³, Hsin-Min Wang², and Berlin Chen¹

¹ Dept. Computer Science and Information Engineering, National Taiwan Normal University, Taiwan
² Institute of Computer Science, Academia Sinica, Taiwan
³ United Link Co., Ltd., Taiwan

Abstract

Low-resource automatic speech recognition (ASR) continues to pose significant challenges, primarily due to the limited availability of transcribed data for numerous languages. While a wealth of spoken content is accessible in television dramas and online videos, Taiwanese Hokkien exemplifies this issue, with transcriptions often being scarce and the majority of available subtitles provided only in Mandarin. To address this deficiency, we introduce TG-ASR for Taiwanese Hokkien drama speech recognition, a translation-guided ASR framework that utilizes multilingual translation embeddings to enhance recognition performance in low-resource environments. The framework is centered around the parallel gated cross-attention (PGCA) mechanism, which adaptively integrates embeddings from various auxiliary languages into the ASR decoder. This mechanism facilitates robust cross-linguistic semantic guidance while ensuring stable optimization and minimizing interference between languages. To support ongoing research initiatives, we present YT-THDC, a 30-hour corpus of Taiwanese Hokkien drama speech with aligned Mandarin subtitles and manually verified Taiwanese Hokkien transcriptions. Comprehensive experiments and analyses identify the auxiliary languages that most effectively enhance ASR performance, achieving a 14.77% relative reduction in character error rate and demonstrating the efficacy of translation-guided learning for underrepresented languages in practical applications.
Keywords: Low-resource automatic speech recognition, Taiwanese Hokkien, translation-guided learning, parallel gated cross attention, multilingual auxiliary language integration

1. Introduction

While multilingual corpora and large-scale speech datasets have significantly advanced automatic speech recognition (ASR) (Ardila et al., 2020; Pratap et al., 2020; Wang et al., 2021), many regional and endangered languages remain critically underrepresented (Besacier et al., 2014; Yeroyan and Karpov, 2024; Bartelds et al., 2023). The scarcity of transcribed speech, linguistic resources, and standardized evaluation benchmarks continues to hamper the development of ASR for low-resource languages. ASR systems trained on high-resource languages, such as Mandarin or English, often exhibit difficulties in generalizing to typologically distinct and data-sparse languages, leading to unstable convergence and diminished transcription accuracy.

In Taiwan, Taiwanese Hokkien exemplifies a significant challenge in the realm of speech processing. Despite the availability of numerous Taiwanese Hokkien TV dramas and online videos, most subtitles are written in Mandarin, which leaves the Taiwanese Hokkien speech largely untranscribed. This discrepancy not only restricts the accessibility of Taiwanese Hokkien-language media but also threatens the preservation of cultural heritage. To address this issue, we propose the development of an ASR system capable of generating Taiwanese Hokkien transcriptions that are aligned with the existing Mandarin subtitles. The primary objectives of our system are to enhance bilingual accessibility, facilitate subtitle generation, and contribute to the preservation of the language.
Figure 1 illustrates this context, where (a) depicts a typical scene from a Taiwanese Hokkien drama featuring only Mandarin subtitles, while (b) showcases our envisioned outcome, which includes subtitles in Taiwanese Hokkien. To support this initiative, we have constructed and released a novel corpus, YT-THDC (YouTube-sourced Taiwanese Hokkien Drama Corpus), comprising approximately 30 hours of Taiwanese Hokkien drama speech that is meticulously aligned with Mandarin subtitles and manually verified Taiwanese Hokkien text transcriptions. This corpus serves as a valuable benchmark for low-resource ASR research and facilitates a systematic investigation of translation-guided approaches within real-world media contexts.

Figure 1: Illustration of the Taiwanese Hokkien drama subtitles. (a) A scene with spoken Taiwanese Hokkien and existing Mandarin subtitles enclosed in a blue box¹. (b) The outcome of our framework, showing automatically generated Taiwanese Hokkien subtitles, highlighted with a red box, aligned with the Mandarin subtitles. (Subtitle text recovered from the figure: 伊哪有可能去惹這號代誌啦)

Traditional approaches to low-resource ASR have explored data augmentation (Park et al., 2019; Ko et al., 2015), multilingual joint training (Conneau et al., 2021; Kannan et al., 2019), and transfer learning from resource-rich languages (Watanabe et al., 2017; Bapna et al., 2022). However, these methods exhibit limited efficacy when speech data is exceedingly scarce and linguistically divergent from pre-trained languages. Specific to Taiwanese Hokkien, researchers have also attempted to automatically generate Taigi transcriptions from dramas by aligning the speech with existing Mandarin subtitles using an initial ASR system (Chen et al., 2020).
Recent research indicates that integrating auxiliary information, such as translated text, can provide additional semantic supervision, thereby enhancing ASR performance (Soky et al., 2022; Taniguchi et al., 2023; Rouditchenko et al., 2024). Motivated by this observation, we investigate how translation embeddings from different auxiliary languages can assist ASR in a specific target language, Taiwanese Hokkien.

In this study, we present TG-ASR, an innovative Translation-Guided Automatic Speech Recognition framework designed for Taiwanese Hokkien drama speech recognition in low-resource scenarios. Specifically, our framework introduces the Parallel Gated Cross-Attention (PGCA) mechanism, which integrates multilingual translation embeddings extracted from pre-trained language models into the ASR decoder. PGCA adaptively regulates the contribution of each auxiliary language, facilitating effective cross-lingual semantic guidance while maintaining stable optimization and minimizing language interference. Through extensive experiments, we analyze the auxiliary languages that most significantly enhance Taiwanese Hokkien ASR performance and demonstrate the practical utility of translation-guided learning in real-world applications.

¹ The meaning of the subtitle is “How could he possibly get involved in such a thing?” in English. Images were adapted from publicly available content by Formosa Television Co., Ltd. for educational use.

| Split | Duration (hours) | # Utterances |
|-------|------------------|--------------|
| Train | 27.51 | 50,984 |
| Test | 2.79 | 4,859 |
| Total | 30.30 | 55,843 |

Table 1: Statistics of YT-THDC, including total duration and number of utterances in each split.

The main contributions of this study are summarized as follows:

1. An Innovative Translation-Guided ASR Framework: We propose TG-ASR, a novel framework designed for low-resource Taiwanese Hokkien drama speech recognition.
This framework introduces the PGCA mechanism to flexibly and adaptively integrate multilingual translation embeddings, enhancing recognition performance and training stability.
2. A New Corpus for Low-Resource Research: The YT-THDC corpus, a 30-hour collection of Taiwanese Hokkien dramas with corresponding transcriptions in both Taiwanese Hokkien and Mandarin, is released to facilitate future research in low-resource ASR and bilingual subtitle generation.

2. Corpus: YT-THDC

The YouTube-sourced Taiwanese Hokkien Drama Corpus (YT-THDC) is a newly constructed dataset specifically designed for the training and evaluation of ASR models in Taiwanese Hokkien. The corpus comprises numerous drama series sourced from publicly available YouTube videos, encompassing a diverse range of speakers, scenes, and recording environments. This diversity offers rich linguistic and acoustic variability, closely reflecting authentic usage conditions. Each video features spoken Taiwanese Hokkien paired with Mandarin subtitles, which serve as loosely aligned references rather than exact transcriptions of the spoken content. To obtain precise speech-text pairs, the Taiwanese Hokkien speech was initially transcribed using a pre-trained ASR model, with the outputs subsequently manually verified and refined by linguistic experts, ensuring high transcription fidelity suitable for supervised ASR training. The final corpus consists of approximately 30 hours of speech and over 50,000 utterances, as summarized in Table 1. All recordings naturally include multiple speakers, background music, and ambient noise, characteristics that render the corpus particularly valuable for the development of noise-robust and context-aware ASR systems.
YT-THDC is available for research use only and serves as a foundational resource for studying low-resource Taiwanese Hokkien ASR as well as multilingual transfer learning with cross-lingual text supervision.

Figure 2: The architecture of the proposed TG-ASR, which leverages our novel parallel gated cross-attention (PGCA) mechanism to integrate multilingual translated transcription inputs for improved knowledge transfer in ASR. (Diagram summary: the Whisper encoder maps the log-Mel spectrogram to speech embeddings, and BERT maps the translated transcriptions to translation embeddings. Each Whisper decoder block consists of self-attention, PGCA, cross-attention, and an MLP. Inside PGCA, the decoder input acts as the query of one cross-attention branch per language, with the translation embeddings of languages 1 to L as keys and values; each branch and the feedforward layer is followed by tanh gating. Legend: 🔥 trainable, ❄ frozen.)

3. Methodology: TG-ASR

3.1. Framework Overview

Figure 2 illustrates the architecture of our translation-guided framework, TG-ASR, which is trained in two stages. In the first stage, the entire Whisper model is fine-tuned, with both the encoder and decoder updated simultaneously. The Whisper encoder converts input speech $X$ into acoustic embeddings $H$, which are subsequently processed by the decoder for transcription and language modeling. In the second stage, we extend the decoder obtained from the first stage with our proposed parallel gated cross-attention layers and perform fine-tuning. During this stage, the Whisper encoder and the decoder parameters from the first stage remain frozen, so that only the PGCA layers are updated. Auxiliary language transcriptions generated by SeamlessM4T (Communication et al., 2023) are encoded using a pre-trained Multilingual BERT (Devlin et al., 2019) to produce multilingual translation embeddings $E$. These embeddings are integrated into the decoder via the PGCA layers, where a learnable gating mechanism adaptively regulates the contribution from each auxiliary language. The resulting weighted embeddings are aggregated and added to the decoder input $Y$ before being processed through a feedforward neural network (FNN). This design enables the model to incorporate multilingual semantic guidance while preserving the core decoding process of Whisper and limiting parameter updates exclusively to the PGCA layers.

3.2. Multilingual Embedding Extraction

To integrate multilingual semantic knowledge, we leverage multilingual BERT (mBERT) (Devlin et al., 2019), a Transformer-based model that generates contextualized word embeddings. BERT is pre-trained using masked language modeling and next sentence prediction, enabling it to effectively capture linguistic dependencies across multiple languages. In our framework, we employ mBERT-Base with 12 layers, 768 hidden units, and 12 attention heads. It extracts multilingual embeddings $E_l \in \mathbb{R}^{T_l \times d}$ from machine-translated auxiliary language transcriptions generated by SeamlessM4T (Communication et al., 2023), where $T_l$ and $d$ denote the number of tokens in auxiliary language $l$ and the embedding dimension, respectively. These embeddings, which encode cross-lingual semantic cues, are integrated into the Whisper decoder via the PGCA mechanism, thereby enhancing recognition robustness in multilingual ASR scenarios. Notably, mBERT remains frozen during training, ensuring that multilingual information is consistently leveraged without additional fine-tuning.

3.3. Acoustic Embedding Extraction

The Whisper encoder (Radford et al., 2023) transforms raw speech into high-level representations that are conducive to decoding.
It processes log-mel spectrograms $X \in \mathbb{R}^{T_s \times F}$ through a convolutional front-end, followed by Transformer encoder blocks, wherein self-attention mechanisms capture both local and global temporal dependencies (Vaswani et al., 2017). Here, $T_s$ denotes the number of time frames, while $F$ indicates the number of mel-frequency bins. The resultant sequence of contextualized speech embeddings $H \in \mathbb{R}^{T_s \times d}$ yields a robust representation of the input signal, facilitating accurate and cross-lingual ASR transcription within our framework. Similar to mBERT, the Whisper encoder remains frozen during the PGCA training stage, leveraging the adapted representations acquired from the initial fine-tuning phase.

3.4. Multilingual Fusion

To enhance target language speech recognition, we extend the Whisper decoder (Radford et al., 2023) with gated cross-attention (Alayrac et al., 2022) that incorporates translated transcriptions, as illustrated in Figure 2. Our framework integrates auxiliary translation information while preserving the core architecture and functionality of the Whisper decoder blocks. The original Whisper decoder block consists of self-attention, cross-attention (attending to audio features), and a multi-layer perceptron (MLP). Inspired by Flamingo (Alayrac et al., 2022), we introduce a parallel gated cross-attention mechanism to fuse multilingual embeddings derived from translated transcriptions. Unlike Whisper-Flamingo (Rouditchenko et al., 2024), which employs a single cross-attention module for visual input, our framework introduces multiple parallel cross-attention modules. Each module independently attends to the embeddings $E_l$ of one of the $L$ translated transcriptions, where $L$ denotes the total number of auxiliary languages considered. Formally, given decoder input $Y \in \mathbb{R}^{T_y \times d}$ and multilingual embeddings $\{E_1, \ldots, E_L\}$, where $T_y$ denotes the number of tokens in the decoder input, the PGCA mechanism operates as follows:

$$Y' = Y + \sum_{l=1}^{L} \tanh\big(\alpha_{\text{attn}}^{(l)}\big) \times \text{attn}(Y, E_l, E_l), \qquad (1)$$

$$Z = Y' + \tanh(\alpha_{\text{FNN}}) \times \text{FNN}(Y'), \qquad (2)$$

where $\alpha_{\text{attn}}^{(l)}$ and $\alpha_{\text{FNN}}$ are learnable gating parameters that dynamically regulate the influence of each auxiliary language and of the feedforward transformation, respectively. In this context, $\tanh$ denotes a gating function that constrains the contribution range, $\text{attn}$ represents the cross-attention mechanism between decoder states (queries) and translation embeddings (keys and values), and FNN refers to the feedforward neural network that refines the integrated representation. All gating parameters ($\alpha_{\text{attn}}^{(1)}, \ldots, \alpha_{\text{attn}}^{(L)}$, and $\alpha_{\text{FNN}}$) are initialized to zero to ensure training stability: since $\tanh(0) = 0$, each PGCA module initially acts as the identity mapping ($Z = Y$) and leaves the fine-tuned decoder unchanged at the start of training. The PGCA modules are placed at the start of each Whisper decoder block to inject multilingual semantic context early in the decoding process, thereby enhancing accuracy through adaptive cross-lingual guidance.

4. Experimental Setup

4.1. Backbone Model

Our framework is built upon Whisper (Radford et al., 2023), an open-source speech recognition model developed by OpenAI. Whisper has been trained on 680,000 hours of multilingual and multitask supervised data, enabling robust transcription across diverse accents, noise conditions, and domains. The model is available in various sizes, from “tiny” to “large,” offering flexibility to balance computational efficiency and transcription accuracy. For our experiments, we utilized the Whisper Small model, which provides an advantageous trade-off between recognition performance and resource requirements. This makes it well suited for multilingual speech processing tasks in both research and applied settings.

4.2. Model Configuration

Model training was conducted in two stages to ensure stable convergence and effective adaptation to the target language.
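Looking back at Section 3.4, the gated update in Eqs. (1) and (2) can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: it uses single-head attention without learned query/key/value projections, a ReLU as a stand-in for the FNN, and hypothetical helper names (`cross_attention`, `ffn`, `pgca`).

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(y, e):
    """Scaled dot-product attention: queries from y (T_y x d), keys/values from e (T_l x d).

    Assumption: no learned projections and a single head, for brevity.
    """
    d = len(y[0])
    e_t = [list(col) for col in zip(*e)]           # d x T_l
    scores = matmul(y, e_t)                        # T_y x T_l
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, e)                      # T_y x d


def ffn(y):
    """Hypothetical stand-in for the feedforward network: element-wise ReLU."""
    return [[max(0.0, v) for v in row] for row in y]


def pgca(y, embeddings, alpha_attn, alpha_ffn):
    """Apply Eq. (1) then Eq. (2) to decoder input y.

    embeddings: list of L matrices E_l (each T_l x d)
    alpha_attn: list of L scalar gate parameters, one per auxiliary language
    alpha_ffn:  scalar gate parameter for the feedforward branch
    """
    # Eq. (1): Y' = Y + sum_l tanh(alpha_attn[l]) * attn(Y, E_l, E_l)
    y_prime = [row[:] for row in y]
    for e_l, a_l in zip(embeddings, alpha_attn):
        gate = math.tanh(a_l)
        attended = cross_attention(y, e_l)
        for i, row in enumerate(attended):
            for j, v in enumerate(row):
                y_prime[i][j] += gate * v
    # Eq. (2): Z = Y' + tanh(alpha_ffn) * FNN(Y')
    gate_ffn = math.tanh(alpha_ffn)
    refined = ffn(y_prime)
    return [[yp + gate_ffn * fv for yp, fv in zip(rp, rf)]
            for rp, rf in zip(y_prime, refined)]


if __name__ == "__main__":
    y = [[1.0, 0.0], [0.0, 1.0]]                      # two decoder positions, d = 2
    e_a = [[0.2, 0.1], [0.4, -0.3], [0.0, 0.5]]       # auxiliary language A, 3 tokens
    e_b = [[0.5, -0.5]]                               # auxiliary language B, 1 token
    print(pgca(y, [e_a, e_b], [0.0, 0.0], 0.0))       # zero gates: input passes through
    print(pgca(y, [e_a, e_b], [0.3, -0.1], 0.2))      # non-zero gates mix in translations
```

With every gate parameter at zero the function returns its input unchanged, which is the property that zero initialization of the gates relies on; each language's branch has its own gate, so its contribution can grow or shrink independently.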
In the first stage, the Whisper Small model was fine-tuned on Taiwanese Hokkien speech with a batch size of 4 and a learning rate of $1.25 \times 10^{-5}$ for 80,000 steps, including a warm-up phase of 8,000 steps. The second stage resumed from the best checkpoint obtained in the first stage and continued training for 180,000 steps with a larger batch size of 8 and a learning rate of $5.0 \times 10^{-5}$, using 30,000 warm-up steps. Both stages utilized the AdamW optimizer (Loshchilov and Hutter, 2019) with a weight decay of 0.01 and employed mixed precision (FP16) to enhance training efficiency. The audio input duration was constrained to 10 seconds, corresponding to a maximum of 160,000 samples, while the acoustic representation employed 80 mel-frequency bins. For multilingual supervision, the number of auxiliary languages $L$ was set to 5, comprising Mandarin, English, Hindi, Spanish, and French, selected as the five most widely spoken languages globally to maximize linguistic diversity. Both the auxiliary language embeddings and the acoustic representations were encoded with an embedding dimension of 768.

| ID | Aux. Lang. | CER % | Rel. % |
|----|------------|-------|--------|
| A0 | - | 13.40 | - |
| A1 | Mandarin (GT) | 11.87 | 11.42 |
| A2 | Hindi | 13.17 | 1.72 |
| A3 | English | 13.10 | 2.24 |
| A4 | French | 12.98 | 3.13 |
| A5 | Spanish | 12.84 | 4.18 |
| A6 | Mandarin (GT) + Spanish | 11.42 | 14.77 |

Table 2: CERs and relative reductions (Rel.) on YT-THDC using different auxiliary languages (Aux. Lang.), where Mandarin serves as the ground-truth (GT) subtitle reference provided in the corpus.

4.3. Evaluation Metric

Character error rate (CER) serves as the primary metric for evaluating the transcription accuracy of the proposed TG-ASR framework. CER quantifies the proportion of incorrectly recognized characters by comparing the predicted transcription against the reference transcription. This metric accounts for character substitutions, deletions, and insertions, offering a fine-grained measure of ASR performance.
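The metric described above can be sketched as a character-level edit distance divided by the reference length. The snippet below is an illustrative sketch, not the evaluation script used in the paper: the function names are hypothetical, whitespace is ignored as an assumption, and `relative_reduction` simply restates the usual definition of relative improvement over a baseline.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences, using a rolling row."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,           # deletion of a reference character
                            curr[j - 1] + 1,       # insertion of a hypothesis character
                            prev[j - 1] + cost))   # substitution or exact match
        prev = curr
    return prev[-1]


def cer(reference, hypothesis):
    """Character error rate in percent; whitespace is ignored (an assumption)."""
    ref_chars = [c for c in reference if not c.isspace()]
    hyp_chars = [c for c in hypothesis if not c.isspace()]
    if not ref_chars:
        raise ValueError("reference must contain at least one character")
    return 100.0 * edit_distance(ref_chars, hyp_chars) / len(ref_chars)


def relative_reduction(baseline_cer, system_cer):
    """Relative CER reduction over a baseline, in percent."""
    return 100.0 * (baseline_cer - system_cer) / baseline_cer


if __name__ == "__main__":
    print(cer("今仔日規工", "今仔日整工"))          # one substituted character out of five
    print(relative_reduction(13.40, 11.87))      # A1 vs. A0 in Table 2
```

Applied to the baseline and Mandarin-guided CERs in Table 2 (13.40 and 11.87), `relative_reduction` gives roughly 11.42, matching the reported relative reduction for A1.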
CER is particularly appropriate for low-resource languages, such as Taiwanese Hokkien, where word boundaries can be ambiguous and tokenization standards may differ. A lower CER signifies greater transcription accuracy, making it a reliable metric for comparing models and assessing advancements in speech recognition performance. Note that the CERs reported in our experiments are computed under a teacher-forcing decoding setting to specifically evaluate the optimal integration of translation guidance.

5. Results and Discussion

5.1. Main Results on YT-THDC

Table 2 presents the key findings from our experiments, which show the efficacy of the proposed translation-guided framework in improving ASR performance for Taiwanese Hokkien. The baseline model (A0), trained exclusively on Taiwanese Hokkien transcripts without auxiliary supervision, serves as a reference point for performance evaluation. Upon the introduction of auxiliary textual signals, consistent improvements are observed across all configurations, supporting the advantages of utilizing multilingual textual guidance.

Among single-language supervisors, the ground-truth Mandarin reference (A1) achieves the largest reduction in CER (from 13.40% to 11.87%). This finding aligns with our hypothesis that a semantically close and high-quality auxiliary language offers the most effective guidance signal for model optimization. The machine-translated auxiliary languages (A2 to A5) further demonstrate the robustness and generalizability of our framework. Despite the presence of translation noise, all four languages reduce CER compared to the baseline (by 1.72% to 4.18% relative), indicating that semantic cues from cross-lingual text provide valuable supervision.
Interestingly, Spanish (A5) results in the largest improvement among the translated texts, surpassing typologically more distant languages such as Hindi (A2). These results suggest that even approximate semantic alignment, as captured through machine translation, can provide benefits when utilized as auxiliary supervision.

The most significant outcome arises from the multilingual combination (A6), which attains the lowest CER and the highest relative improvement throughout the entire study, even surpassing the strong Mandarin-only condition (A1). This finding indicates that the model leverages complementary information across languages, likely benefiting from diverse linguistic viewpoints that strengthen shared semantics and mitigate overfitting to any individual translation source. Collectively, these results support our hypothesis that the integration of multilingual textual information constitutes an effective and scalable approach for enhancing ASR systems in low-resource settings.

5.2. Analytical Evaluation of PGCA

| ID | Configuration | CER % |
|----|---------------|-------|
| A6 | Full PGCA | 11.42 |
| A7 | w/o tanh Gating | 11.46 |
| A8 | Sequential Attention | 11.60 |
| A9 | Shared Attention | 12.00 |
| A10 | Addition | 27.68 |
| A11 | Concatenation | 24.09 |

Table 3: Ablation study on the PGCA mechanism.

To validate the effectiveness and architectural design of the proposed PGCA module, we performed a comprehensive ablation study. The results, summarized in Table 3, systematically deconstruct the PGCA module to evaluate the contribution of each fundamental component. Our complete model (A6), which incorporates all components, serves as the baseline for comparison. We first analyzed the structural elements within the PGCA. When the tanh gating mechanism was omitted (A7), performance degraded slightly (11.46% vs. 11.42%), suggesting that the gating function helps regulate the flow of multilingual information.
Furthermore, substituting the original five-way parallel attention with a sequential configuration (A8) resulted in a higher CER, reinforcing the significance of enabling the decoder to attend to all auxiliary languages concurrently rather than sequentially. Additionally, transforming the five independent attention branches into a shared-weight configuration (A9) further diminished accuracy, indicating that preserving independent attention parameters for each language facilitates more precise and language-specific interactions.

We further compared our proposed PGCA framework against two widely utilized fusion strategies. Substituting the entire PGCA module with straightforward element-wise addition (A10) or concatenation (A11) led to significant performance degradation. These results demonstrate that our PGCA module offers a more effective approach for integrating multilingual representations through its combined use of parallel attention, independent weighting, and gated control.

5.3. Contribution of Auxiliary Languages

| ID | Aux. Lang. | CER % | Proximity (Tai.) | Proximity (Man.) |
|----|------------|-------|------------------|------------------|
| A1 | Mandarin (GT) | 11.87 | 0.905 | - |
| A2 | Hindi | 13.17 | 0.854 | 0.879 |
| A3 | English | 13.10 | 0.552 | 0.590 |
| A4 | French | 12.98 | 0.821 | 0.847 |
| A5 | Spanish | 12.84 | 0.843 | 0.873 |

Table 4: CERs and language proximity between each auxiliary language translation and the Taiwanese Hokkien transcriptions (Tai.) or the Mandarin subtitles (Man.) on YT-THDC.

To investigate why certain auxiliary languages yield greater improvements, we examined the relationship between ASR performance and language proximity. We define language proximity in this context as the similarity between sentence representations derived from a pre-trained mBERT model, hypothesizing that this measure captures relevant linguistic and semantic factors beyond the pure meaning alignment offered by models like LaBSE (Feng et al., 2022).
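As a concrete reading of this definition, the sketch below computes a proximity score as the mean cosine similarity over paired sentence vectors. It is an illustration under assumptions rather than the paper's code: the sentence vectors are taken as already computed by some sentence encoder (for example mBERT), and the function names `cosine_similarity` and `language_proximity` are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


def language_proximity(target_vectors, auxiliary_vectors):
    """Average pairwise cosine similarity over aligned sentence pairs.

    target_vectors[i] and auxiliary_vectors[i] are assumed to encode the same
    utterance in the target language and in one auxiliary language.
    """
    if not target_vectors or len(target_vectors) != len(auxiliary_vectors):
        raise ValueError("expected two non-empty lists of equal length")
    sims = [cosine_similarity(t, a) for t, a in zip(target_vectors, auxiliary_vectors)]
    return sum(sims) / len(sims)


if __name__ == "__main__":
    # Toy 3-dimensional vectors standing in for real sentence embeddings.
    target = [[0.9, 0.1, 0.0], [0.2, 0.8, 0.1]]
    auxiliary = [[0.8, 0.2, 0.1], [0.1, 0.9, 0.0]]
    print(language_proximity(target, auxiliary))
```

Averaging per-sentence similarities yields one scalar per auxiliary language, which is the form of the proximity columns reported in Table 4.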
Specifically, we measured this proximity using embeddings derived from the [CLS] token of the pre-trained mBERT model. For each sentence pair, we encoded the ground-truth Taiwanese Hokkien transcript and its machine-translated counterpart using mBERT, extracted their respective [CLS] embeddings, and computed the cosine similarity. These scores were averaged across the dataset to yield a single proximity value between each auxiliary language and Taiwanese Hokkien (Tai.). We also computed proximity relative to the Mandarin subtitles (Man.) present in our corpus.

Table 4 presents the CERs alongside both proximity measures. A discernible trend emerges when examining proximity to Taiwanese Hokkien: languages with higher proximity generally correlate with better ASR performance; however, the relationship is not strictly monotonic. Mandarin serves as the most prominent example, demonstrating the highest proximity and achieving the lowest CER. In contrast, English exhibits the lowest proximity and is associated with a comparatively high CER. Other languages exhibit less regular patterns; for instance, Hindi, despite its high proximity, results in the worst CER, whereas Spanish outperforms French, even though both languages display similar proximity scores.

Figure 3: CERs on YT-THDC as the number of auxiliary languages increases. (x-axis: number of auxiliary languages, 1 to 5; y-axis: CER (%), 11.4 to 11.9.)

Notably, proximity scores computed in relation to the Mandarin subtitles exhibit a weaker correlation with the final performance of the Taiwanese Hokkien ASR.
This observation indicates that the direct proximity to the target language (Taiwanese Hokkien), as quantified by mBERT [CLS] similarity, serves as a more pertinent, albeit imperfect, predictor of an auxiliary language’s potential contribution than does its proximity to the intermediate Mandarin text. Moreover, other factors beyond this specific proximity measure are likely to impact the overall performance.

5.4. Analysis of Language Quantity

To evaluate scalability and the impact of multilingual data quantity, we conducted an incremental experiment, cumulatively adding auxiliary languages based on their individual effectiveness (best first, see Table 2), up to all five languages. Figure 3 illustrates the outcomes, revealing a non-monotonic trend.

Performance significantly improves when adding the second-best language to the single best one, achieving the lowest CER. This suggests a strong complementary effect between the top two auxiliary languages (Mandarin and Spanish). However, progressively including more languages leads to a gradual CER increase, although performance remains substantially better than using only the single best language. This indicates that adding languages with lower effectiveness or proximity may introduce some noise or interference that slightly diminishes the peak performance achieved with two languages.

Figure 4: Average activation values of the tanh gating mechanism across auxiliary languages, extracted from a representative decoder layer. (Bar values, in plotted order: Mandarin 0.074, Spanish 0.033, French 0.031, Hindi -0.012, English -0.038; positive values are labeled “Promotional Effect” and negative values “Inhibitory Effect”.)

Despite this slight upward trend after the second language, the results demonstrate the robustness of the PGCA mechanism.
It successfully leverages initial multilingual data for substantial gains and maintains strong performance even with less optimal languages, preventing significant degradation. The learnable gating function likely plays a key role by adaptively weighting contributions, even if interference from the later-added languages is not perfectly suppressed. This experiment highlights that while our framework benefits from multilingualism, carefully selecting the optimal number of auxiliary languages might yield the best results.

5.5. Dynamic Behavior of tanh Gating

To deepen our understanding of how the proposed PGCA mechanism regulates multilingual information flow, we examined the functionality of its learnable tanh gating component. The underlying hypothesis is that these gates are essential for dynamically balancing the contributions of various auxiliary languages, strengthening informative signals while attenuating potentially noisy or less relevant inputs. To test this hypothesis, we extracted the activation values of the tanh gates from a representative decoder layer of the trained model, where intricate semantic interactions are prominently established.

Figure 4 presents the averaged gating weights for each auxiliary language. Positive activations correspond to languages that the model promotes during decoding, indicating a strong semantic alignment with the target speech. Conversely, negative activations signify languages that the model suppresses, suggesting limited utility or potential interference in cross-lingual feature integration. Mandarin, which has the highest semantic alignment with Taiwanese Hokkien, exhibits the strongest positive activation, corroborating that the gating mechanism adaptively prioritizes it as the most informative supervisory signal. This behavior aligns with our main quantitative findings (see Table 2), where Mandarin supervision achieves the lowest single-language CER.
In contrast, English and Hindi, which yield smaller performance gains in previous experiments, demonstrate negative gating activations, indicating that the model actively filters out less beneficial signals rather than passively ignoring them.

These observations underscore the adaptive and discriminative characteristics of the PGCA gating mechanism. By learning to modulate the contributions of each auxiliary language, the model maintains stability and robustness as multilingual inputs increase. This dynamic control mechanism offers an internal rationale for the sustained gains over the single-language setting noted in our scalability analysis (see Figure 3), demonstrating that the model not only capitalizes on multilingual diversity but also learns to manage it.

5.6. Impact of Auxiliary Source

To determine the optimal source of multilingual guidance for our TG-ASR framework, we conducted a comparative study between two leading multilingual translation models: SeamlessM4T (Communication et al., 2023) and NLLB (Team et al., 2022). The goal was to assess which model’s generated translations provide more effective auxiliary supervision for Taiwanese Hokkien ASR. For a fair evaluation, we employed the optimal two-language combination identified in our scalability analysis (Mandarin + Spanish) as the auxiliary input, with translations generated by each respective translation model.

| ID | Model | CER % |
|----|-------|-------|
| A6 | SeamlessM4T | 11.42 |
| A12 | NLLB | 11.52 |

Table 5: Comparison of ASR performance using different translation models.

The results are presented in Table 5. The comparison indicates that both models function as potent sources for translation-guided learning; however, the incorporation of auxiliary texts generated by SeamlessM4T results in a lower CER on our principal ASR task (11.42% vs. 11.52%).
This performance advantage in speech recognition aligns with the reported intrinsic translation quality of SeamlessM4T, which demonstrated competitive overall text-to-text translation performance (chrF++) compared to NLLB on the standard multilingual FLORES (Goyal et al., 2022) benchmark.

This finding indicates that the quality of the auxiliary translations influences the overall performance of the ASR system; a more accurate or semantically aligned translation is likely to offer a more robust guidance signal for the PGCA mechanism. These results support our choice of SeamlessM4T as the primary translation source for the main experiments conducted in this study.

5.7. Cross-Lingual Attention

To offer a qualitative and fine-grained analysis of our PGCA mechanism, we visualized the cross-attention weights to understand how the model aligns the source Mandarin translation with the generated Taiwanese Hokkien transcription at the token level. We hypothesize that an effective translation-guided model should learn explicit correspondences between semantically related tokens across the two languages. To test this, we extracted the attention matrix from a representative PGCA layer for a sample utterance from the test set.

Figure 5: Visualization of the cross-lingual attention weights from a representative PGCA layer, mapping source Mandarin (Man.) tokens to target Taiwanese Hokkien (Tai.) tokens.

Figure 5 presents the resulting heatmap, which provides visual evidence in support of our hypothesis. A strong diagonal pattern emerges, indicating that the model successfully learns the token-level alignment between the two languages. More impressively, the model demonstrates an understanding of semantic paraphrases beyond direct character matches.
It correctly aligns the Taiwanese Hokkien phrase “今仔日” (kin-á-jit, today) with the Mandarin “今天” (jīntiān), and maps the Taiwanese Hokkien “規工” (kui-kang, whole day) to the Mandarin “整天” (zhěng tiān). These highly focused attention weights confirm that the PGCA mechanism is not merely treating the auxiliary translation as a sentence-level feature vector or a “bag of words.” Instead, it is actively learning granular, cross-lingual semantic relationships. This precise, token-level guidance provides a clean and unambiguous supervisory signal to the decoder, offering a clear explanation for the performance gains observed in our quantitative experiments.

5.8. Analysis of Auxiliary Language Selection Strategies

To determine the most effective method for selecting auxiliary languages under a constrained subset, we compared three data-driven strategies based on metrics derived from our prior analyses: individual language CER, language proximity, and learned gating values. For each strategy, we identified the top-k languages (where k ranges from 1 to 5) based on ranking languages according to the respective metric (lower CER is better; higher proximity/gating value is better). The resulting ASR performance for each strategy and value of k is presented in Table 6.

At k = 1, all three strategies identified Mandarin as the single best auxiliary language. Consequently, they share the same CER result, establishing a common starting point. However, divergences appear as more languages are added. At k = 2, selecting languages based on their individual CERs or their learned gating values yields the best performance, outperforming the strategy based purely on language proximity. This suggests that while proximity is important, the model's learned gating weights or the actual downstream task performance might be slightly better indicators for selecting the most complementary pair of languages.

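
The ranking procedure described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `top_k_languages` is hypothetical, the language labels other than Mandarin are anonymous placeholders, and every score is a made-up value chosen only to show how the three metrics can agree at k = 1 and diverge at k = 2.

```python
def top_k_languages(scores, k, higher_is_better):
    """Rank languages by a per-language metric and keep the best k."""
    ranked = sorted(scores, key=scores.get, reverse=higher_is_better)
    return ranked[:k]


# Placeholder scores (NOT taken from the paper's tables or figures).
individual_cer = {"mandarin": 1.0, "aux_b": 2.0, "aux_c": 3.0, "aux_d": 4.0}
proximity = {"mandarin": 0.9, "aux_b": 0.4, "aux_c": 0.5, "aux_d": 0.3}
gating_value = {"mandarin": 0.3, "aux_b": 0.1, "aux_c": -0.1, "aux_d": -0.2}

for k in range(1, 5):
    by_cer = top_k_languages(individual_cer, k, higher_is_better=False)
    by_proximity = top_k_languages(proximity, k, higher_is_better=True)
    by_gate = top_k_languages(gating_value, k, higher_is_better=True)
    print(k, by_cer, by_proximity, by_gate)
```

Each selected subset would then be used to train and evaluate a separate model, which is what the CER values in Table 6 report; once k equals the number of available languages, every strategy returns the same set.
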
Interestingly, at k = 3, the ranking shifts, with the proximity-based selection yielding slightly better performance (11.49%) than the CER-based and gating-value-based selections (both 11.50%). At k = 4, the trend shifts again, with selections based on proximity or gating value (both 11.44%) outperforming the CER-based selection (11.53%). Finally, at k = 5, all strategies naturally include all available languages, resulting in an identical language set and the same final CER.

Overall, these results indicate that while all three metrics provide reasonable heuristics for language selection, no single strategy consistently outperforms the others across all values of k. Prioritizing based on CER or gating value is effective for smaller subsets (k = 2), while proximity matches or exceeds the other strategies at intermediate subset sizes (k = 3, 4). The analysis highlights that the optimal strategy might shift depending on the number of languages being combined. Nevertheless, the differences between strategies remain relatively small, underscoring the effectiveness of the PGCA mechanism in managing diverse multilingual inputs selected through various data-driven approaches.

| Strategy | k = 1 | k = 2 | k = 3 | k = 4 | k = 5 |
|---|---|---|---|---|---|
| CER | 11.87 | 11.42 | 11.50 | 11.53 | 11.59 |
| Proximity | 11.87 | 11.56 | 11.49 | 11.44 | 11.59 |
| Gating Value | 11.87 | 11.42 | 11.50 | 11.44 | 11.59 |

Table 6: Comparison of ASR performance (CER %) using different strategies for selecting the top-k auxiliary languages, where k is the number of auxiliary languages.

6. Conclusion and Future Work

This study presents TG-ASR, a novel translation-guided framework tailored for Taiwanese Hokkien ASR, which effectively harnesses multilingual textual information to improve performance in scenarios with limited transcribed data.
Our key contributions encompass the development of the framework, which incorporates a novel parallel gated cross-attention mechanism, the release of the 30-hour YT-THDC corpus, and comprehensive experiments that reveal a substantial 14.77% relative reduction in CER. Analyses confirm that the PGCA module adaptively integrates diverse signals, effectively promoting beneficial languages while suppressing less informative ones. These findings substantiate translation-guided learning as a powerful approach for improving ASR in practical, resource-constrained contexts.

For future work, we aim to address existing limitations and explore promising research directions. A significant limitation is our reliance on auxiliary texts during inference; we are actively investigating knowledge distillation methodologies to develop a model that operates exclusively on speech input. Additionally, we will examine the effects of translation quality and explore methodologies for enhancing robustness against noise present in machine-generated texts. Lastly, applying TG-ASR to other underrepresented languages is essential for evaluating cross-lingual transferability and validating its effectiveness as a generalizable solution.

7. Limitations

First, YT-THDC is limited in size and domain, consisting of approximately 30 hours of Taiwanese Hokkien drama speech. While it provides diverse speakers and recording conditions, the corpus does not cover other genres such as conversational speech, radio broadcasts, or spontaneous dialogues, which may limit the generalizability of TG-ASR to broader real-world scenarios. Second, the TG-ASR framework relies on auxiliary translations generated by pre-trained multilingual models. Translation errors, misalignments, or semantic distortions may introduce noise into the cross-lingual supervision, potentially affecting model performance.
Although the PGCA mechanism mitigates some interference, extremely low-quality translations or languages with low language proximity may provide limited benefits. Third, TG-ASR is evaluated exclusively on Taiwanese Hokkien as the target language, and the observed improvements may vary for other low-resource languages with different phonological, syntactic, or morphological characteristics. Future work is needed to assess cross-lingual transferability, domain adaptation, and scalability to larger and more diverse datasets.

8. Ethical Considerations

The YT-THDC corpus is constructed from publicly available Taiwanese Hokkien drama videos on YouTube, sourced from official broadcasting channels. The corpus is intended solely for non-commercial research purposes, and no personally identifiable information beyond what is publicly visible has been collected. All manual transcriptions were performed by trained linguistic experts under privacy-respecting procedures. The use of machine-translated auxiliary language data may introduce biases, including translation errors or semantic misalignments, which could affect model behavior. Outputs from the TG-ASR framework should be treated as assistive rather than authoritative, especially in contexts requiring high transcription accuracy. YT-THDC is released under terms restricting its use to non-commercial research and educational purposes. Potential societal impacts, including benefits for low-resource language research and risks of propagating errors, are carefully considered to encourage responsible use and development.

9. Bibliographical References

Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, Arthur Mensch, Katie Millican, Malcolm Reynolds, Roman Ring, Eliza Rutherford, Serkan Cabi, Tengda Han, Zhitao Gong, Sina Samangooei, Marianne Monteiro, Jacob Menick, Sebastian Borgeaud, Andrew Brock, Aida Nematzadeh, Sahand Sharifzadeh, Mikolaj Binkowski, Ricardo Barreira, Oriol Vinyals, Andrew Zisserman, and Karen Simonyan. 2022. Flamingo: A visual language model for few-shot learning. In Proc. NeurIPS.

Rosana Ardila, Megan Branson, Kelly Davis, Michael Henretty, Michael Kohler, Josh Meyer, Reuben Morais, Lindsay Saunders, Francis M. Tyers, and Gregor Weber. 2020. Common voice: A massively-multilingual speech corpus. In Proc. LREC.

Ankur Bapna, Colin Cherry, Yu Zhang, Ye Jia, Melvin Johnson, Yong Cheng, Simran Khanuja, Jason Riesa, and Alexis Conneau. 2022. mSLAM: massively multilingual joint pre-training for speech and text. arXiv preprint arXiv:2202.01374.

Martijn Bartelds, Nay San, Bradley McDonnell, Dan Jurafsky, and Martijn Wieling. 2023. Making more of little data: improving low-resource automatic speech recognition using data augmentation. In Proc. ACL.

Laurent Besacier, Etienne Barnard, Alexey Karpov, and Tanja Schultz. 2014. Automatic speech recognition for under-resourced languages: A survey. Speech Communication, 56:85–100.

Pin-Yuan Chen, Chia-Hua Wu, Hung-Shin Lee, Shao-Kang Tsao, Ming-Tat Ko, and Hsin-Min Wang. 2020. Using taigi dramas with mandarin chinese subtitles to improve taigi speech recognition. In Proc. O-COCOSDA.

Seamless Communication, Loïc Barrault, Yu-An Chung, Mariano Cora Meglioli, David Dale, Ning Dong, Paul-Ambroise Duquenne, Hady Elsahar, Hongyu Gong, Kevin Heffernan, John Hoffman, Christopher Klaiber, Pengwei Li, Daniel Licht, Jean Maillard, Alice Rakotoarison, Kaushik Ram Sadagopan, Guillaume Wenzek, Ethan Ye, Bapi Akula, Peng-Jen Chen, Naji El Hachem, Brian Ellis, Gabriel Mejia Gonzalez, Justin Haaheim, Prangthip Hansanti, Russ Howes, Bernie Huang, Min-Jae Hwang, Hirofumi Inaguma, Somya Jain, Elahe Kalbassi, Amanda Kallet, Ilia Kulikov, Janice Lam, Daniel Li, Xutai Ma, Ruslan Mavlyutov, Benjamin Peloquin, Mohamed Ramadan, Abinesh Ramakrishnan, Anna Sun, Kevin Tran, Tuan Tran, Igor Tufanov, Vish Vogeti, Carleigh Wood, Yilin Yang, Bokai Yu, Pierre Andrews, Can Balioglu, Marta R. Costa-jussà, Onur Celebi, Maha Elbayad, Cynthia Gao, Francisco Guzmán, Justine Kao, Ann Lee, Alexandre Mourachko, Juan Pino, Sravya Popuri, Christophe Ropers, Safiyyah Saleem, Holger Schwenk, Paden Tomasello, Changhan Wang, Jeff Wang, and Skyler Wang. 2023. SeamlessM4T: Massively multilingual & multimodal machine translation. arXiv preprint arXiv:2308.11596.

Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, and Michael Auli. 2021. Unsupervised cross-lingual representation learning for speech recognition. In Proc. Interspeech.

Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint.

Fangxiaoyu Feng, Yinfei Yang, Daniel Cer, Naveen Arivazhagan, and Wei Wang. 2022. Language-agnostic BERT sentence embedding. In Proc. ACL.

Naman Goyal, Cynthia Gao, Vishrav Chaudhary, Peng-Jen Chen, Guillaume Wenzek, Da Ju, Sanjana Krishnan, Marc'Aurelio Ranzato, Francisco Guzmán, and Angela Fan. 2022. The flores-101 evaluation benchmark for low-resource and multilingual machine translation. Transactions of the Association for Computational Linguistics, 10:522–538.

Anjuli Kannan, Arindrima Datta, Tara N. Sainath, Eugene Weinstein, Bhuvana Ramabhadran, Yonghui Wu, Ankur Bapna, Zhifeng Chen, and Seungji Lee. 2019. Large-scale multilingual speech recognition with a streaming end-to-end model. In Proc. Interspeech.

Tom Ko, Vijayaditya Peddinti, Daniel Povey, and Sanjeev Khudanpur. 2015. Audio augmentation for speech recognition.

Ilya Loshchilov and Frank Hutter. 2019. Decoupled weight decay regularization. In Proc. ICLR.

Daniel S. Park, William Chan, Yu Zhang, Chung-Cheng Chiu, Barret Zoph, Ekin D. Cubuk, and Quoc V. Le. 2019. SpecAugment: A simple data augmentation method for automatic speech recognition. In Proc. Interspeech.

Vineel Pratap, Qiantong Xu, Anuroop Sriram, Gabriel Synnaeve, and Ronan Collobert. 2020. MLS: A large-scale multilingual dataset for speech research. In Proc. Interspeech.

Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, and Ilya Sutskever. 2023. Robust speech recognition via large-scale weak supervision. In Proc. ICML.

Andrew Rouditchenko, Yuan Gong, Samuel Thomas, Leonid Karlinsky, Hilde Kuehne, Rogerio Feris, and James Glass. 2024. Whisper-flamingo: Integrating visual features into whisper for audio-visual speech recognition and translation. In Proc. Interspeech.

Kak Soky, Sheng Li, Masato Mimura, Chenhui Chu, and Tatsuya Kawahara. 2022. Leveraging simultaneous translation for enhancing transcription of low-resource language via cross attention mechanism. In Proc. Interspeech.

Shuta Taniguchi, Tsuneo Kato, Akihiro Tamura, and Keiji Yasuda. 2023. Transformer-based automatic speech recognition of simultaneous interpretation with auxiliary input of source language text. In Proc. APSIPA.

NLLB Team, Marta R. Costa-jussà, James Cross, Onur Çelebi, Maha Elbayad, Kenneth Heafield, Kevin Heffernan, Elahe Kalbassi, Janice Lam, Daniel Licht, Jean Maillard, Anna Sun, Skyler Wang, Guillaume Wenzek, Al Youngblood, Bapi Akula, Loic Barrault, Gabriel Mejia Gonzalez, Prangthip Hansanti, John Hoffman, Semarley Jarrett, Kaushik Ram Sadagopan, Dirk Rowe, Shannon Spruit, Chau Tran, Pierre Andrews, Necip Fazil Ayan, Shruti Bhosale, Sergey Edunov, Angela Fan, Cynthia Gao, Vedanuj Goswami, Francisco Guzmán, Philipp Koehn, Alexandre Mourachko, Christophe Ropers, Safiyyah Saleem, Holger Schwenk, and Jeff Wang. 2022. No language left behind: scaling human-centered machine translation. arXiv preprint arXiv:2207.04672v3.

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Proc. NeurIPS.

Changhan Wang, Morgane Rivière, Ann Lee, Anne Wu, Chaitanya Talnikar, Daniel Haziza, Mary Williamson, Juan Pino, and Emmanuel Dupoux. 2021. VoxPopuli: a large-scale multilingual speech corpus for representation learning, semi-supervised learning and interpretation. In Proc. ACL.

Shinji Watanabe, Takaaki Hori, Suyoun Kim, John R. Hershey, and Tomoki Hayashi. 2017. Hybrid CTC/attention architecture for end-to-end speech recognition. IEEE Journal of Selected Topics in Signal Processing, 11(8):1240–1253.

Ara Yeroyan and Nikolay Karpov. 2024. Enabling ASR for low-resource languages: a comprehensive dataset creation approach. arXiv preprint arXiv:2406.01446.
