RGB-Event HyperGraph Prompt for Kilometer Marker Recognition based on Pre-trained Foundation Models

Metro trains often operate in highly complex environments, characterized by illumination variations, high-speed motion, and adverse weather conditions. These factors pose significant challenges for visual perception systems, especially those relying …

Authors: Xiaoyu Xian, Shiao Wang, Xiao Wang

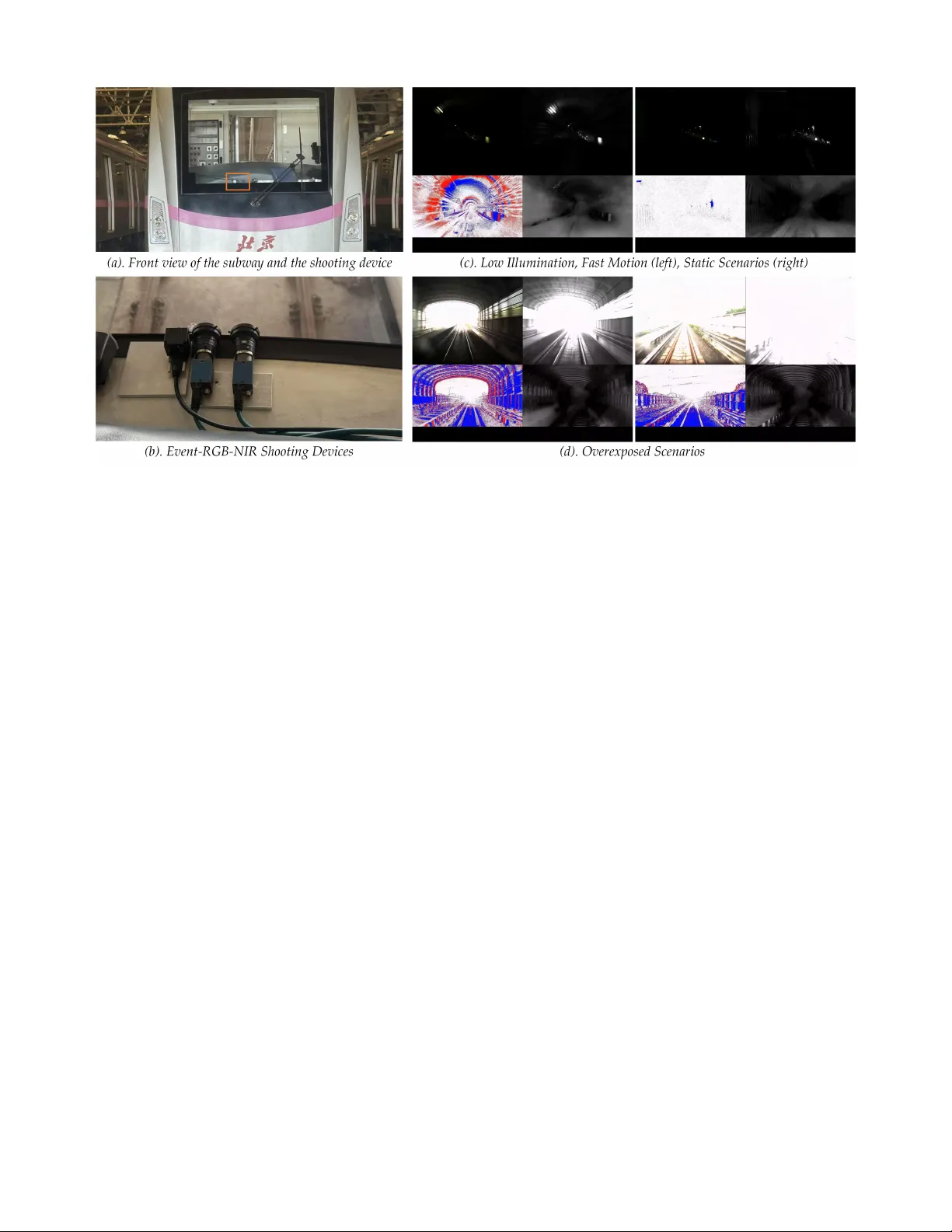

IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 1 RGB-Ev ent HyperGraph Prompt for Kilometer Marker Recognition based on Pre-trained F oundation Models Xiaoyu Xian, Shiao W ang, Xiao W ang, Member , IEEE , Daxin T ian*, Y an Tian Abstract —Metro trains often operate in highly complex envi- ronments, characterized by illumination variations, high-speed motion, and adverse weather conditions. These factors pose significant challenges for visual perception systems, especially those relying solely on conventional RGB cameras. T o tackle these difficulties, we explore the integration of event cameras into the perception system, leveraging their advantages in low-light conditions, high-speed scenarios, and low power consumption. Specifically , we focus on Kilometer Mark er Recognition (KMR), a critical task for autonomous metro localization under GNSS- denied conditions. In this context, we propose a r obust baseline method based on a pre-trained RGB OCR foundation model, enhanced through multi-modal adaptation. Furthermore, we construct the first large-scale RGB-Event dataset, EvMetro5K, containing 5,599 pairs of synchronized RGB-Event samples, split into 4,479 training and 1,120 testing samples. Extensive experiments on EvMetro5K and other widely used benchmarks demonstrate the effectiveness of our approach for KMR. Both the dataset and source code will be released on https://github. com/Event- AHU/EvMetro5K_benchmark Index T erms —RGB-Ev ent Fusion; Pre-trained F oundation Model; Kilometer Marker Recognition; Hypergraph I . I N T R O D U C T I O N M ETR O systems play a crucial role in urban trans- portation, serving as the backbone of smart cities and intelligent transportation infrastructures. Their operation faces numerous challenges, particularly in achieving accurate train positioning and maintaining precise speed control, both of which are critical for ensuring safety , punctuality , and ov erall operational efficienc y . These challenges are further compounded by dynamic and often harsh en vironmental con- ditions, such as dim lighting in under ground tunnels, e xcessi ve sunlight exposure in above-ground sections, and interference from adverse weather . Under such circumstances, con ventional RGB cameras frequently struggle to provide reliable percep- tion, especially during high-speed train motion and in lo w- illumination scenarios, highlighting the need for more robust • Xiaoyu Xian is with School of Transportation Science and Engineer- ing, Beihang Univ ersity , Beijing 100191, China; CRRC Academy Co., Ltd, Beijing, 100070, China (email: xxy@crrc.tech) • Shiao W ang and Xiao W ang are with the School of Computer Sci- ence and T echnology , Anhui University , Hefei 230601, China. (email: {xi- aow ang}@ahu.edu.cn, wsa1943230570@126.com) • Daxin Tian is with School of Transportation Science and Engineering, Beihang Uni versity , Beijing 100191, China (email: dtian@buaa.edu.cn) • Y an Tian is with CRRC Academy Co., Ltd, Beijing, 100070, China (email: ty@crrc.tech) * Corresponding Author: Daxin T ian sensing solutions capable of handling these e xtreme operating conditions. T o address the aforementioned issues, existing works [ 1 ], [ 2 ] hav e explored pre-training on large-scale RGB datasets to achie ve satisfactory performance. F or instance, the masked autoencoder (MAE) strategy [ 1 ], b uilt upon the V ision Trans- former (V iT) [ 3 ], enhances the model’ s visual perception ability by masking and reconstructing partial image patches. Meanwhile, CLIP [ 2 ] learns semantic alignment between images and texts, thereby enabling strong cross-modal un- derstanding and matching capabilities. Howe ver , due to the inherent limitations of RGB data under extreme conditions, it remains challenging to capture sufficient target details for effecti ve perception. Recently , event cameras have drawn more and more at- tention due to their advantages in high dynamic range, low energy consumption, and high temporal resolution [ 4 ]. The imaging principle of ev ent cameras dif fers from that of con- ventional visible light cameras. Event cameras operate by measuring the brightness variations of pixels in a scene and emit asynchronous event signals when the change exceeds a specific threshold, outputting event points with polarity (+1, -1). These cameras ha ve a higher dynamic range of approximately 120 dB, far surpassing the 60 dB range of the widely used RGB cameras, making them perform significantly better in overexposure and low-light scenarios. Additionally , due to their sparsity in the spatial and density in the temporal, ev ent cameras excel at capturing fast-moving objects. Combining RGB cameras with event cameras to achiev e robust and reliable visual perception has gradually become a hot research trend, for example, RGB-Event based visual tracking [ 5 ]–[ 7 ], object detection [ 8 ], [ 9 ], pattern recogni- tion [ 10 ], [ 11 ], sign language translation [ 12 ]. It is also widely used in many low-le vel image/video processing tasks, such as denoising [ 13 ], super resolution [ 14 ]. Specifically , Huang et al. [ 7 ] achieve effecti ve visual object tracking by integrating ev ent cameras with RGB cameras using a linear-complexity Mamba network. W ang et al. [ 11 ] propose a pedestrian at- tribute recognition task based on RGB-ev ent modalities, and introduce the first large-scale multi-modal pedestrian attrib ute recognition dataset EventP AR, as well as an inno v ati ve R WKV fusion framew ork. The visual localization method based on recognizing metro kilometer markers is an ef fecti ve approach to achiev e precise positioning of metros under GNSS-denied conditions. Ho w- ev er , its performance often limited by the complex operating IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 2 Fig. 1. The multi-modal imaging device proposed in this paper and typical metro perception scenarios. Specifically , sub-figures (c) and (d) show the imaging results of the metro under lo w-illumination conditions in high-speed motion/static scenes and overexposed scenes, respectiv ely . In each sub-figure (c, d), the four images arranged clockwise are: the RGB image, the NIR image, the stacked e vent stream rendered as a red-blue map, and the reconstructed grayscale image from the ev ent stream. en vironment of metros, such as high-speed motion, dim tunnel lighting, and extreme weather , which sev erely degrades the recognition accuracy of RGB single-modality vision. In this work, we resort to the RGB-Event multi-modal fusion for the Kilometer Marker Recognition (KMR), which fully le verages the advantages of ev ent-based sensors in low-light and high- speed motion scenarios. T o this end, we propose a robust baseline approach for KMR, referred to as HGP-KMR. Gi ven the RGB and Event streams, we first reconstruct the grayscale image from the ev ent stream using an ev ents-to-grayscale image reconstruction algorithm [ 15 ]. Then, we embed the RGB frames into the vision tokens and extract their features using the vision T rans- former layer . F or the grayscale image, we first concatenate the RGB and ev ent tokens into unified feature representations and construct a multi-modal hypergraph. T w o hypergraph con v olu- tional layers are adopted to encode the hyper graph and add the enhanced features into the RGB branch for multi-modal fusion. W e integrate our modules into the P ARseq [ 16 ] for accurate RGB-Event Kilometer Marker Recognition. An ov ervie w of our proposed framework can be found in Fig. 2 . Different from existing hyper graph-based fusion methods [ 17 ], [ 18 ], our work employs a hypergraph network to capture high-order in- teractions between RGB and ev ent modalities, enabling richer cross-modal relationship learning. The hypergraph prompting strategy , in which multimodal hypergraph features guide and modulate the RGB branch, enhances robustness under noisy or degraded conditions. Beyond this, this paper formally proposes, for the first time, the use of RGB-Event cameras to recognize metro mileage. Specifically , we first set up a multi-modal imaging system, consisting of an RGB camera (MER2-134-90GC-P), a near- infrared camera (MER2-134-90GM-P), and a high-definition ev ent camera (Prophesee EVK4), as shown in Fig. 1 . Based on this multi-modal equipment, we collected more than 20 hours of multi-modal videos in the metro scenarios, capturing div erse conditions including different weathers (sunn y , cloudy , rainy), times of day , and lighting conditions (daytime and nighttime scenarios), and train speeds. From these videos, we extracted 5599 effecti v e mileage samples, which were man- ually annotated and verified, resulting in the construction of the EvMetro5K dataset. Using this dataset, we extended con- ventional unimodal character recognition algorithms to multi- modal versions through feature-level fusion, and retrained and ev aluated these models to establish a comprehensi ve benchmark. This dataset and benchmark lay a solid foundation for future research on Kilometer Marker Recognition. T o sum up, the main contributions of this paper can be summarized as the following three aspects: 1). W e ha ve dev eloped the first RGB-Ev ent perception imag- ing system in the railw ay transportation domain and acquired a large-scale multi-modal dataset, termed EvMetro5K. It can effecti vely support vision-based perception tasks, such as Kilo- meter Marker Recognition and dynamic scene reconstruction. 2). W e propose a nov el RGB-Event HyperGraph Prompt for Kilometer Marker Recognition based on pre-trained founda- tion models, termed HGP-KMR. This method fully associates high-order information from both modalities through a multi- modal hypergraph prompt, achieving more rob ust and accurate recognition. 3). Extensiv e e xperiments on our newly proposed EvMetro5K dataset, W ordArt*, and IC15* datasets fully demonstrate the effecti veness of our proposed framew ork. IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 3 The following of this paper is organized as follows: In section II , we giv e an introduction to the related works, including scene te xt recognition, RGB-Event fusion, and large foundation models. After that, we formally propose our frame- work in section III and the newly built benchmark dataset in section IV . The experiments are conducted in section V . Finally , we conclude this paper in section VI . I I . R E L AT E D W O R K S A. Scene T e xt Recognition Scene text recognition (STR) [ 19 ]–[ 22 ] inherently combines vision and language understanding. Early research mainly focused on either visual feature extraction or linguistic mod- eling, whereas recent approaches emphasize their integration for rob ustness under di verse conditions. E2STR [ 23 ] enhances adaptability by introducing context-rich text sequences and a context training strategy . CCD [ 24 ] lev erages a self-supervised segmentation module and character-to-character distillation, while SIGA [ 25 ] further refines se gmentation through implicit attention alignment. CDistNet [ 26 ] incorporates visual and semantic positional embeddings into its transformer -based design to handle irregular text layouts. In parallel, iterativ e error correction strategies hav e been introduced via language models. V OL TER [ 27 ], BUSNet [ 28 ], MA TRNet [ 29 ], Lev- OCR [ 30 ], and ABINet [ 31 ] ex emplify this trend by refining recognition through feedback loops. Recently , large language model (LLM)-based STR has emerged, exploiting genera- tiv e and contextual reasoning to unify visual-linguistic un- derstanding. Representati ve works include T extMonke y [ 32 ], DocPedia [ 33 ], V ary [ 34 ], mPLUG-DocOwl 1.5 [ 35 ], and OCR2.0 [ 36 ]. Despite their progress, these LLM-based models remain vulnerable under extreme conditions such as lo w illumination, blur , and noise. T o o vercome the limitations of RGB-only recognition, recent research explores e v ent camera data for STR. EventSTR [ 37 ] pioneers this direction, leveraging the high dynamic range and low-latenc y properties of event streams to improve robustness in adverse environments. Furthermore, ESTR-CoT [ 38 ] introduces chain-of-thought style reasoning with e vent representations, enhancing recognition accuracy through structured linguistic refinement. Generally speaking, existing works primarily focus on using RGB or e vent cameras to address text recognition tasks, b ut fe w explore the inte gra- tion of multi-modal data for recognition, which limits their perceptual capability in complex scenarios. B. RGB-Event Fusion Event cameras possess high temporal resolution and inher- ent robustness to motion blur, making them valuable com- plements to RGB data in v arious vision tasks to enhance ov erall performance. Existing RGB-Ev ent fusion methods can be broadly categorized into implicit modeling and explicit modeling approaches. For instance, Zhang et al. [ 39 ] implicitly associate event streams with intensity frames under different baseline conditions, whereas Chen et al. [ 40 ] achiev e cross- modal fusion by explicitly constructing nov el relationships between ev ent streams and LDR images. Lev eraging the unique adv antages of event cameras, RGB-Event fusion has demonstrated significant ef fecti veness across multiple appli- cations. Specifically , their high dynamic range enables ac- curate HDR reconstruction [ 40 ], [ 41 ] and robust low-light enhancement [ 42 ], [ 43 ]. Moreov er , thanks to their superior temporal resolution, e vent cameras facilitate precise dynamic scene reconstruction [ 44 ], [ 45 ] and ef fecti ve video super- resolution [ 46 ]. In addition, in image deblurring, Color4E [ 47 ] and CFFNet [ 48 ] integrate ev ent information with RGB im- ages to recover sharp details under motion blur or low- light conditions. For multi-modal perception, UTNet [ 49 ] fuses ev ents and RGB frames, ef fectively improving detection performance of transparent underwater organisms and enabling more accurate and efficient scene understanding. In 3D recon- struction and neural rendering, E2NeRF [ 50 ] leverages event data to compensate for motion blur and generate high-quality volumetric representations. In object detection, SFDNet [ 51 ] combines RGB and ev ent data to improv e robustness in dy- namic scenes. Inspired by these works, this paper attempts to lev erage RGB-Ev ent data and achie ve high-performance Kilo- meter Marker Recognition in challenging scenarios through hypergraph prompt learning. C. Lar ge F oundation Models Large foundation models emerge as a transformativ e paradigm in computer vision and multi-modal learning, as they acquire univ ersal representations from large-scale pretraining and adapt effecti vely to div erse downstream tasks. V iT [ 3 ] and Swin T ransformer [ 52 ], as among the earliest vision founda- tion models to explore self-attention-based architectures, hav e achiev ed remarkable success. CLIP [ 2 ] represents a pioneering vision-language model, which trains with contrasti ve learning on image-text pairs to align modalities and achieves strong zero-shot recognition and retriev al performance. Building upon this frame work, SigLIP [ 53 ] introduces a sigmoid-based matching loss in place of the con ventional softmax contrasti ve loss. BLIP-2 [ 54 ] further advances vision-language modeling by adopting a lightweight query transformer that bridges frozen pretrained vision encoders and large language models, thereby reducing training costs while enhancing performance in both image understanding and text generation tasks. Beyond contrastiv e and bridging architectures, generative foundation models also demonstrate remarkable capabilities. Stable Dif fusion [ 55 ] e xemplifies this trend through a la- tent diffusion framework that efficiently synthesizes high- fidelity images from te xtual descriptions and drives substantial progress in text-to-image generation. More recently , vision- language large models such as QwenVL-2.5 [ 56 ] extend the scope of foundation models by unifying multi-modal pretrain- ing across large-scale image-text data. Although these large RGB-based models hav e achie ved significant success, they are still limited by the imaging performance of RGB cameras in challenging scenarios. The proposed multi-modal hyper graph fusion approach ef fecti vely le verages the capabilities of large foundation models while incorporating ev ent streams to miti- gate the adverse ef fects of such challenges. IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 4 Fig. 2. An overvie w of our proposed RGB-Event based Hypergraph Prompt for Kilometer Marker Recognition based on foundation models. I I I . M E T H O D O L O G Y A. Overview In this section, we will give an overvie w of the proposed HGP-KMR frame work, which is designed to ef fecti vely lever - age RGB frames and event streams for accurate recognition of metro mileage. As illustrated in Fig. 2 , we first reconstruct the event stream into grayscale images and perform pre- cropping together with the RGB frames to obtain local image regions containing mileage characters. T w o embedding layers are employed to project the RGB frames and grayscale images into token sequences, which are then augmented with posi- tional encodings and jointly fed into the backbone network. Specifically , in the ev ent branch, the features extracted by the e vent encoder , together with the token embeddings from the RGB modality , are fed into a hypergraph-based prompt module for cross-modal interaction. The resulting multi-modal high-order representations are subsequently integrated into the RGB backbone in a layer-wise manner , enabling a hypergraph prompt based multi-modal fusion. Finally , the encoded fea- tures are fed into a visio-lingual decoder for decoding, and the final output is obtained through a linear projection. B. Input Representation Our HGP-KMR framew ork takes an RGB frame and the corresponding asynchronous e vent stream from the event cam- era as input. Follo wing [ 15 ], to effecti v ely leverage existing deep neural networks for visual information modeling, we first reconstruct the corresponding event stream into an grayscale image, denoted as E ∈ R C × H × W , and represent the cor- responding RGB frame as R ∈ R C × H ′ × W ′ . Subsequently , to mitigate background interference, we perform pre-cropping on both the RGB frame and the grayscale image. The cropped images are then resized to a fixed resolution (e.g., 32 × 128 ) and jointly fed into the deep network for representation learning. C. Network Arc hitectur e Backbone Network Gi ven the preprocessed RGB and grayscale images, denoted as R i ∈ R 3 × h × w and E i ∈ R 3 × h × w , respectively , we first partition the images into patches of equal size according to a predefined patch size (e.g., 4 × 8 ). These patches are then projected into discrete token sequences via embedding layers and augmented with positional encodings to preserve the location information of patches, denoted as T r ∈ R N × C ′ and T e ∈ R N × C ′ . This facilitates their input into the backbone network for cross- patch feature learning and interaction. Subsequently , the em- bedded features of the ev ent modality are fed into the event encoder , which employs L stacked V iT blocks as its backbone, to extract the initial event representations F e ∈ R N × C ′ . The formula of V iT is as follows (Layer-Normalization operation is omitted in the equation): X ′ l = MHA( X l − 1 ) + X l − 1 , ( l = 1 , 2 , ..., L ) X l = FFN( X ′ l ) + X ′ l = FFN(MHA( X l − 1 ) + X l − 1 ) + MHA( X l − 1 ) + X l − 1 , (1) where MHA and FFN denote the attention and feed-forward networks, respectively . L is the total number of V iT blocks, X l denote the output from l -th Transformer block. T o enable effecti ve interaction between the RGB and ev ent modalities, we le verage a hypergraph network to fuse the embedded RGB features with the encoded event represen- tations, allo wing the model to capture complex cross-modal relationships. For the RGB branch, we employ a shared backbone consisting of L stacked V iT blocks to extract RGB features. Meanwhile, the multi-modal information from the hypergraph network is progressi vely inte grated into each V iT block via the hypergraph prompt strategy , ensuring that the RGB feature representations are continuously enriched with ev ent-deri ved cues throughout the hypergraph network. This design allows for both modality-specific feature extraction and IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 5 effecti ve cross-modal fusion, thereby enhancing the represen- tation capacity of the multi-modal recognition framew ork. Hypergraph Prompt The advantage of e vent cameras in ex- treme scenarios can significantly improve the accuracy of char - acter recognition. Therefore, we propose a novel hyper graph- based fusion prompt module, which not only enables ef fecti ve integration of multi-modal information but also enhances the feature representation capability of the RGB backbone. T o obtain a unified multi-modal representation, we concatenate the RGB embedding features with the grayscale image features generated by the ev ent encoder along the channel dimension and obtain T re . Follo wing [ 57 ], we also adopt a hypergraph construction method based on Euclidean distance K-nearest neighbors (K-NN, where the maximum number of neighboring nodes is set to 10 in our work) to generate the hypergraph structure G . The formula can be shown as: H = { V , E , H } , H j i = ( 1 , if v j ∈ e i , 0 , otherwise , G = D − 1 2 v H D − 1 e H ⊤ D − 1 2 v , (2) where the H refer to the hyper graph, V and E denote the vertex and hyperedge set. H is the node–hyperedge incidence matrix, D v and D e are the node degree matrix and h yperedge degree matrix, respecti vely . G denotes the normalized hyper graph propagation matrix. Subsequently , we feed G into a two-layer hypergraph con- volutional neural network [ 57 ] (HGCN) to aggregate features, which can be expressed by the following formula: X ′ = G ( XW + b ) , (3) where the X and X ′ denote the input and output node feature vector , i.e., T re and T ′ re , respectiv ely . W is the learnable weight matrix and b is the bias. G is the normalized hy- pergraph propagation matrix. After processing through two layers of HGCNs, we obtain the aggregated multi-modal graph features, denoted as T ′ re . T o further enhance the learning of the RGB modality , these features are injected into the V iT backbone of the RGB branch. This inte gration allo ws the backbone to lev erage the rich multi- modal context, ef fectively guiding the RGB feature e xtraction process. Specifically , we adopt a layer-wise residual addition strategy . In this approach, for each V iT block, the RGB features produced by the block are treated as the baseline, to which the hypergraph-aggre gated multi-modal features are added. The resulting fused features are then propagated to the subsequent blocks. By applying this procedure iteratively across all layers, the RGB features are progressively informed by the multi-modal context, from the shallow to the deeper layers. This hierarchical, layer -wise fusion not only encourages the backbone to capture complementary information from different modalities but also significantly enhances the final feature representation capability , leading to more discrimina- tiv e embeddings for downstream tasks. V ision-Lingual Decoder After obtaining the multi-modal image features, following [ 16 ], we similarly employ a pre- LayerNorm T ransformer decoder [ 58 ], [ 59 ] network with twice the number of attention heads, i.e., nhead = d model / 32 . The image features, position queries, and input context (i.e., 1 5 6) are fed simultaneously into the decoder . Follo wing [ 16 ], different permutations are employed as an extension of autoregressi ve (AR) language modeling. By ap- plying attention masks corresponding to v arious permutations of the context sequence, the Transformer can learn multiple sequence factorizations during training. Unlike standard AR models, which generate sequences in a fixed order, this ap- proach trains the model on a subset of randomly sampled permutations, enabling each position to conditionally depend on different conte xts during prediction. This not only enhances the model’ s understanding of sequence structures and gener- alization ability b ut also, through the masking mechanism, allows for efficient parallel computation. Position queries encode the specific tar get positions to be predicted, with each token directly corresponding to a particular output position, thereby enabling the model to fully exploit the benefits of different permutations. As shown in the upper part of Fig. 2 , let T be the length of the conte xt, the permuted context, and the position queries are jointly fed into the first attention module. The formula can be written as: h c = p + MHA( p, c, c, m ) ∈ R ( T +1) × d model . (4) The positional queries are denoted by p ∈ R ( T +1) × d model , while c ∈ R ( T +1) × d model represents the conte xt embeddings that include positional information. An optional attention mask is giv en by m ∈ R ( T +1) × ( T +1) . Adding special delimiter tokens, such as [ B ] or [ E ] , increases the total sequence length to T + 1 . The second attention module is used to aggregate the features of the positional queries and the image tokens: h i = h c + MHA( h c , z , z ) ∈ R ( T +1) × d model , (5) where z denotes the image tok ens. h i is the last decoder hidden state, and is then further fed into an MLP : h dec = h i + MLP( h i ) ∈ R ( T +1) × d model . (6) Finally , the h dec through a Linear layer to generate the output logits: y = Linear( h dec ) ∈ R ( T +1) × ( C +1) , (7) where C is the size of the charset. Loss Function The Cross-Entropy loss is employed to con- strain the predictions to align with the ground truth as closely as possible, which is formulated as: L CE = − N X i =1 C X c =1 y i,c log ˆ y i,c , (8) where N is the number of samples, C is the size of the charset, y i,c is the one-hot ground truth label, and ˆ y i,c is the predicted probability for class c . IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 6 Fig. 3. Example samples from the EvMetro5K dataset. Each pair shows the RGB image (left) and the corresponding e vent-reconstructed grayscale image (right). While the RGB modality often suffers from low light, motion blur, and overexposure conditions, the ev ent modality provides clearer structural information for milestone recognition. I V . B E N C H M A R K D A TA S E T A. Data Collection and Annotation The EvMetro5K dataset is collected in real metro oper- ation scenarios using both a standard RGB camera and an ev ent-based camera mounted in parallel. A total of 67,602 frames are recorded. The e vent streams are first reconstructed into grayscale images, after which all frames are manually annotated to identify visible milestones. The samples without milestone information are removed. T o further emphasize the regions of interest, the remaining frames are cropped around the annotated milestones. After this processing pipeline, the dataset contains 5,599 paired RGB and event-reconstructed grayscale images. This final dataset forms the basis for the subsequent training and ev aluation of milestone recognition models. B. Statistical Analysis The 5,599 samples in EvMetro5K are split into training and testing subsets at an 8:2 ratio, resulting in 4,479 train- ing samples and 1,120 testing samples. The dataset cov ers div erse metro en vironments with v ariations in illumination, motion speed, and background complexity , reflecting real- world challenges for milestone recognition. Fig. 3 presents representativ e RGB-Event pairs from the dataset. While RGB images are generally clear under normal daylight, they of- ten suffer from motion blur during high-speed operation or visibility degradation in low-light conditions. In contrast, ev ent-reconstructed images preserve sharper structural details, providing complementary information that supports robust milestone recognition. C. Benchmark Baselines T o provide a comprehensiv e ev aluation on the EvMetro5K dataset, we compare our approach with a set of representati ve state-of-the-art (SO T A) scene text recognition models. These baselines are selected to cover div erse architectural paradigms, ranging from CNN-based models to transformer - and V iT - based architectures, ensuring a fair and balanced comparison. Specifically , we include CCD [ 24 ], SIGA [ 25 ], CDistNet [ 26 ], DiG [ 63 ], P ARSeq [ 16 ], and MGP-STR [ 64 ]. These methods represent different design choices, such as self-supervised segmentation (CCD, SIGA), character-le vel positional model- ing (CDistNet), sequence modeling via V iT and transformers (DiG, P ARSeq, MGP-STR). For a fair comparison in the ev ent-dri ven metro scenario, we extend all baseline models into a unified dual-modality framew ork that integrates both RGB and ev ent modalities. In this way , each baseline benefits from complementary visual cues while preserving its original architectural characteristics. W e report accuracy on EvMetro5K along with backbone archi- tecture, parameter size, and code av ailability , as summarized in T able I . This benchmark pro vides a clear view of the IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 7 T ABLE I T H E AC C U R AC Y C O M PA R I S ON S W I T H S OTA M E T H O D S O N T H E E V M E T RO 5 K DAT A S E T . Algorithm Publish Backbone Accuracy Params Code IP AD [ 60 ] IJCV2025 V iT 69.5 14.1M URL Qwen3VL [ 61 ] arXiv2025 V iT 39.1 235.0B URL CAM [ 62 ] PR2024 ResNet+V iT 81.3 23.3M URL CCD [ 24 ] ICCV 2023 V iT 86.0 32.0M URL SIGA [ 25 ] CVPR 2023 ResNet 81.3 40.4M URL CDistNet [ 26 ] IJCV 2023 ResNet+V iT 91.9 65.5M URL DiG [ 63 ] ACM MM 2022 V iT 84.0 52.0M URL MGP-STR [ 64 ] ECCV 2022 V iT 92.3 148.0M URL P ARSeq [ 16 ] ECCV 2022 V iT 91.7 23.4M URL Ours - V iT 95.1 24.2M - T ABLE II T H E AC C U R AC Y C O M PA R I S ON S W I T H S OTA M E T H O D S O N W O R D A RT * A N D I C 1 5 * . Algorithm Publish Backbone Accuracy Params(M) Code W ordArt* IC15* CCD [ 24 ] ICCV 2023 V iT 62.1 91.6 52.0 URL SIGA [ 25 ] CVPR 2023 ResNet 70.9 73.7 40.4 URL DiG [ 63 ] ACM MM 2022 V iT 74.1 78.2 52.0 URL MGP-STR [ 64 ] ECCV 2022 V iT 80.5 84.9 148.0 URL BLIV A [ 65 ] AAAI 2024 V iT 56.7 51.3 7531.3 URL SimC-ESTR [ 37 ] arXiv 2025 V iT 65.1 56.8 7531.3 URL ESTR-CoT [ 38 ] arXiv 2025 V iT 65.6 57.1 7531.3 URL P ARSeq [ 16 ] ECCV 2022 V iT 74.8 81.2 23.4 URL Ours (P ARSeq) - V iT 77.8 84.8 24.2 - CDistNet [ 26 ] IJCV 2023 ResNet+V iT 90.8 91.2 65.5 URL Ours (CDistNet) - ResNet+V iT 91.5 92.9 66.2 - performance-efficienc y trade-of fs among existing STR models under dual-modality settings and highlights the rob ustness challenges posed by e vent-dri ven metro scene te xt recognition. V . E X P E R I M E N T S A. Datasets and Evaluation Metric In this paper , our experiments are conducted on the W or - dArt* [ 66 ], IC15* [ 67 ], and our newly proposed EvMetro5K dataset. W ordArt* is con verted from the original W ordArt [ 68 ] dataset using the ev ent simulator ESIM [ 69 ]. This dataset includes artistic text samples from posters, greeting cards, cov ers, and handwritten notes. It contains 4,805 training images and 1,511 validation images. IC15* is deri v ed from the ICD AR2015 [ 67 ] dataset and con verted to ev ent-based images. It includes 4,468 training samples and 2,077 test samples from natural scenes. The accuracy is used for ev aluating our pro- posed model and other state-of-the-art recognition algorithms. B. Implementation Details Our framew ork is built upon the P ARseq architecture [ 16 ]. Based on this, we de veloped a novel multi-modal RGB-Ev ent Kilometer Marker Recognition frame work, which significantly improv es the accuracy of mileage recognition. Our code is im- plemented using Python based on the PyT orch [ 70 ] framework. Adam [ 71 ] optimizer is used together with the 1cycle [ 72 ] learning rate scheduler , and the learning rate is set to 7 e − 4 . The input images are uniformly resized to 32 × 128 and di vided into patches of size 4 × 8 . The number of permutations is set to 6 in this work. The e xperiments are conducted on a server with a CPU Intel(R) Xeon(R) Gold 5318Y CPU @2.10GHz and GPU R TX3090s. C. Comparison on Public Benchmarks Results on our pr oposed EvMetro5K dataset. As shown in T ab . I , we also report the recognition results of our HGP- KMR method with other SO T A recognition algorithms. It is evident that our method outperforms all compared algorithms, achieving an accuracy of 95.1%. Compared to the baseline method, ParSeq [ 16 ], our approach improves accuracy by +3.4%. These results demonstrate that in challenging scenarios such as low-light conditions and f ast motion, where RGB cameras typically struggle, our method effecti vely integrates ev ent information, leading to a significant improvement for Kilometer Marker Recognition. Results on W ordArt* dataset. This dataset is designed for artistic character recognition, with images collected from a variety of scenes, including posters, greeting cards, cov ers, and handwritten text. The di verse and challenging nature of these scenes adds to the difficulty of the dataset. As shown in the T ab . II , CDistNet [ 26 ] equipped with our hypergraph prompt strategy outperforms other methods on this dataset, achieving an accuracy of 91.5%. Compared to other character recog- nition methods, such as MGP-STR and SIGA, our approach exceeds their accuracy by +11% and +20.6%, respectiv ely . In addition, b uilding upon P arSeq [ 16 ], incorporating our prompt strategy also resulted in a significant improvement. These results demonstrate the effecti veness of our method in tackling the challenging task of artistic character recognition. IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 8 T ABLE III A B L A T I O N S T U D I E S O F D I FF E R E N T M O D A L I T I ES . # Modalities Accuracy 1. RGB only 84.2 2. Event only 84.6 3. RGB & Event image 86.7 4. RGB & Grayscale image 95.1 T ABLE IV A B L A T I O N S T U D I E S O F D I FF E R E N T G N N S . Fusion Layers Accuracy 1. GraphSA GE 93.2 2. GA TCon v 93.7 3. HGCN 95.1 T ABLE V A B L A T I O N S T U D I E S O F D I FF E R E N T F U S I ON M E T H O D S . # Fusion Methods Accuracy FPS 1. Addition 91.7 352 2. Concatenate 93.5 234 3. HyperGraph Fusion 93.3 97 4. HyperGraph Prompt 95.1 89 Fig. 4. Ablation studies of the Fusion layers and Permutations. Results on IC15* dataset. This dataset is a widely used benchmark for text detection and recognition tasks. It con- tains a large number of image samples with text information from various complex scenes, making it suitable for training and ev aluating text detection and recognition algorithms. As shown in the T ab . II , on this dataset, CDistNet [ 26 ] equipped with our hypergraph prompt strategy significantly outperforms other state-of-the-art text recognition methods, achieving an accuracy of 92.9%, which demonstrates the rob ustness of our approach across diverse scenes. Furthermore, building upon ParSeq [ 16 ], the incorporation of our fusion strategy yields an additional +3.6% improvement. D. Ablation Study Analysis of different modalities. As sho wn in T ab . III , we compared the model’ s performance across different input modalities. Here, RGB only and Event only indicate that only a single modality is input, following the training procedure of P ARseq [ 16 ]. Their accuracies reached 84.2% and 84.6%, respectiv ely . RGB & Event image denote that we input the RGB image along with the e vent image, which is stacked according to the event stream. In our frame work, the RGB frame and the reconstructed grayscale image from the event stream are fed into the network together, providing richer and more comprehensive information for mileage recognition. As a result, the recognition accuracy is significantly improved, reaching 95.1%. Analysis of different GNNs. In this work, we employed a hypergraph to facilitate multi-modal information interaction and lev eraged graph neural networks (GNNs) for learning. Different GNN architectures yield varying benefits. Specifi- cally , we compared two common GNNs, GraphSA GE [ 73 ] and GA TCon v [ 74 ], and the experiments show that both achie ved strong performance, with accuracies of 93.2% and 93.7%, respectiv ely . T o enable more effecti ve hypergraph modeling, we ultimately adopted HGCN [ 75 ] as the graph learning network, which demonstrated the best performance. Analysis of fusion methods. W e also analyzed dif ferent fusion strategies in an effort to identify the most effecti ve method for multi-modal information interaction. First, we experimented with common approaches such as directly adding or concate- nating the two modality features after extraction, which did not yield satisfactory results. W e then explored concatenating the features along the channel dimension after both modalities passed through their respective encoders, followed by hyper- graph neural network–based relational modeling. Finally , in our proposed framework, we performed hypergraph modeling on the RGB embeddings and the ev ent features obtained from the encoder , and progressively integrated them into the RGB backbone branch via a prompt mechanism. As reported in the T ab . V , our proposed hyper graph prompt strategy achie ved the best performance. In addition, we compare the inference speed of the model under different fusion strategies. The results show that, compared to simple addition or concatenation, the proposed multi-modal fusion method introduces a modest computational o verhead, resulting in an ov erall inference speed of 89 FPS. Analysis of fusion layers. W e further observ ed that, within the IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 9 Fig. 5. V isualization of attention maps of our method on the EvMetro5K dataset. hypergraph prompt strategy , integrating features into different V iT layers of the RGB branch encoder leads to varying ef fects. As shown in the left part of Fig. 4 , integrating features in the early layers (e.g., from the 1st to the 6th layer) generally results in suboptimal performance. In contrast, integrating the prompt at the final layer (the 12th layer) yields a clear performance impro vement. Ultimately , the results demonstrate that integrating multi-modal hyper graph features at each layer of the RGB backbone can achiev e the best performance. Analysis of the number of permutations. In the decoder part, we adopted the permutation strategy similar to P ARseq [ 16 ]. W e illustrated in the right part of Fig. 4 ho w different values of K , the number of permutations, affect the final experimental results in our work. It can be observed that the best perfor- mance is achiev ed when K is set to 6. W e argue that a smaller K reflects fe wer possible context orderings, which may lead to underfitting, while a larger K may cause the model to o verfit, resulting in suboptimal performance. E. P arameter Analysis Our method also exhibits a clear adv antage in terms of parameter ef ficiency . As reported in T ab . I , our proposed ap- proach contains 24.2 M parameters. Building upon the ParSeq algorithm, the introduction of our hypergraph prompt strategy increases the parameter count by only 0.8 M, yet yields a substantial improv ement (+3.4%) in recognition accuracy . Furthermore, compared with other recognition methods such as MGP-STR and CDistNet, our approach uses merely 16% and 37% of their parameters, respectiv ely . Collectively , these analyses indicate that the proposed framework attains supe- rior performance under a compact parameter budget, thereby substantiating its effecti veness and ef ficiency . F . V isualization In addition to the aforementioned analytical e xperiments, we also conducted visualization e xperiments to help readers better understand our task. As shown in the Fig. 5 , we visualized the attention acti vation maps of the RGB encoder and ov erlaid them on the grayscale images. The red-highlighted regions denote the areas of focus attended to by the model. From the visualization, it can be seen that our method is able to attend to the target metro mileage character regions in various scenarios, demonstrating the robustness of our approach. G. Limitation Analysis Although our method has demonstrated excellent perfor- mance across v arious datasets, there is still room for im- prov ement. On the one hand, the proposed method does not incorporate scenario-specific adapti v e adjustments for dif ferent conditions. For example, under ov erexposure scenarios, it does not e xplicitly employ adaptiv e event filtering or intensity- aware modulation mechanisms to mitigate the loss of critical information. On the other hand, we hav e not le veraged the powerful capabilities of large multi-modal models for joint modeling of visual and textual modalities. In future work, we plan to make our model adapti ve to different challenging scenarios and inte grate large models to achiev e more accurate Kilometer Marker Recognition. IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 10 V I . C O N C L U S I O N In this work, we hav e made a comprehensi ve contribu- tion to the RGB-Event based Kilometer Marker Recognition (KMR) task. W e introduce EvMetro5K, the first multi-modal RGB–Event mileage recognition dataset comprising 5,599 synchronized RGB–Grayscale image pairs, establishing a reli- able benchmark for future research. Building upon this dataset, we propose the HGP-KMR method, a new multi-modal fusion strategy that performs hyper graph modeling on RGB and e vent modalities and integrates the resulting graph-structured fea- tures into the RGB backbone for layer-wise fusion. Extensi ve experiments across multiple datasets demonstrate the superior performance of our approach, of fering a solid foundation for future dev elopments in multi-modal mileage recognition. A C K N O W L E D G M E N T This work was supported by the National Ke y R&D Pro- gram of China (Grant No. 2022YFC3803700), the National Natural Science Foundation of China (Grant No. 62432002), and Anhui Provincial Natural Science Foundation - Outstand- ing Y outh Project (2408085Y032). The authors acknowledge the High-performance Computing Platform of Anhui Univer - sity for providing computing resources. R E F E R E N C E S [1] K. He, X. Chen, S. Xie, Y . Li, P . Dollár, and R. Girshick, “Masked au- toencoders are scalable vision learners, ” in Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern r ecognition , 2022, pp. 16 000–16 009. [2] A. Radford, J. W . Kim, C. Hallac y , A. Ramesh, G. Goh, S. Agarwal, G. Sastry , A. Askell, P . Mishkin, J. Clark et al. , “Learning transferable visual models from natural language supervision, ” in International confer ence on machine learning . PmLR, 2021, pp. 8748–8763. [3] A. Dosovitskiy , “ An image is worth 16x16 words: Transformers for image recognition at scale, ” arXiv pr eprint arXiv:2010.11929 , 2020. [4] G. Galle go, T . Delbrück, G. Orchard, C. Bartolozzi, B. T aba, A. Censi, S. Leutenegger , A. J. Davison, J. Conradt, K. Daniilidis et al. , “Event- based vision: A survey , ” IEEE transactions on pattern analysis and machine intelligence , vol. 44, no. 1, pp. 154–180, 2020. [5] C. T ang, X. W ang, J. Huang, B. Jiang, L. Zhu, J. Zhang, Y . W ang, and Y . Tian, “Revisiting color-e vent based tracking: A unified network, dataset, and metric, ” arXiv preprint , 2022. [6] X. W ang, S. W ang, C. T ang, L. Zhu, B. Jiang, Y . T ian, and J. T ang, “Event stream-based visual object tracking: A high-resolution bench- mark dataset and a novel baseline, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024, pp. 19 248–19 257. [7] J. Huang, S. W ang, S. W ang, Z. W u, X. W ang, and B. Jiang, “Mamba- fetrack: Frame-event tracking via state space model, ” in Chinese Confer- ence on P attern Recognition and Computer V ision (PRCV) . Springer, 2024, pp. 3–18. [8] A. T omy , A. Paigwar , K. S. Mann, A. Renzaglia, and C. Laugier, “Fusing event-based and rgb camera for robust object detection in adverse conditions, ” in 2022 International Conference on Robotics and Automation (ICRA) . IEEE, 2022, pp. 933–939. [9] Z. Zhou, Z. W u, R. Boutteau, F . Y ang, C. Demonceaux, and D. Ginhac, “Rgb-ev ent fusion for moving object detection in autonomous driving, ” arXiv preprint arXiv:2209.08323 , 2022. [10] X. W ang, Z. W u, B. Jiang, Z. Bao, L. Zhu, G. Li, Y . W ang, and Y . T ian, “Hardvs: Revisiting human acti vity recognition with dynamic vision sensors, ” in Pr oceedings of the AAAI Conference on Artificial Intelligence , vol. 38, no. 6, 2024, pp. 5615–5623. [11] X. W ang, H. W ang, S. W ang, Q. Chen, J. Jin, H. Song, B. Jiang, and C. Li, “Rgb-event based pedestrian attribute recognition: A benchmark dataset and an asymmetric rwkv fusion framework, ” arXiv pr eprint arXiv:2504.10018 , 2025. [12] X. W ang, Y . Li, F . W ang, B. Jiang, Y . W ang, Y . Tian, J. T ang, and B. Luo, “Sign language translation using frame and event stream: Benchmark dataset and algorithms, ” arXiv preprint , 2025. [13] S. Ding, J. Chen, Y . W ang, Y . Kang, W . Song, J. Cheng, and Y . Cao, “E-mlb: Multilevel benchmark for event-based camera denoising, ” IEEE T ransactions on Multimedia , vol. 26, pp. 65–76, 2023. [14] L. W ang, T .-K. Kim, and K.-J. Y oon, “Eventsr: From asynchronous ev ents to image reconstruction, restoration, and super-resolution via end- to-end adversarial learning, ” in Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern recognition , 2020, pp. 8315–8325. [15] H. Rebecq, R. Ranftl, V . Koltun, and D. Scaramuzza, “High speed and high dynamic range video with an event camera, ” IEEE transactions on pattern analysis and machine intelligence , v ol. 43, no. 6, pp. 1964–1980, 2019. [16] D. Bautista and R. Atienza, “Scene text recognition with permuted autoregressi ve sequence models, ” in European confer ence on computer vision . Springer , 2022, pp. 178–196. [17] F . W ang, F . Zhang, X. W ang, M. W ang, D. Huang, and J. T ang, “Evraindrop: Hypergraph-guided completion for effecti ve frame and ev ent stream aggregation, ” arXiv pr eprint arXiv:2511.21439 , 2025. [18] Y . Gao, J. Lu, S. Li, Y . Li, and S. Du, “Hyper graph-based multi-view action recognition using event cameras, ” IEEE T ransactions on P attern Analysis and Mac hine Intelligence , vol. 46, no. 10, pp. 6610–6622, 2024. [19] K. W ang, B. Babenko, and S. Belongie, “End-to-end scene text recog- nition, ” in 2011 International conference on computer vision . IEEE, 2011, pp. 1457–1464. [20] B. Shi, X. Bai, and C. Y ao, “ An end-to-end trainable neural network for image-based sequence recognition and its application to scene text recognition, ” IEEE transactions on pattern analysis and machine intelligence , vol. 39, no. 11, pp. 2298–2304, 2016. [21] M. Liao, J. Zhang, Z. W an, F . Xie, J. Liang, P . L yu, C. Y ao, and X. Bai, “Scene text recognition from two-dimensional perspective, ” in Pr oceedings of the AAAI confer ence on artificial intelligence , vol. 33, no. 01, 2019, pp. 8714–8721. [22] X. Han, J. Gao, C. Y ang, Y . Y uan, and Q. W ang, “Spotlight text detector: Spotlight on candidate regions like a camera, ” IEEE T ransactions on Multimedia , 2024. [23] Z. Zhao, J. T ang, C. Lin, B. W u, C. Huang, H. Liu, X. T an, Z. Zhang, and Y . Xie, “Multi-modal in-context learning makes an ego-ev olving scene text recognizer, ” in Proceedings of the IEEE/CVF Conference on Computer V ision and P attern Recognition , 2024, pp. 15 567–15 576. [24] T . Guan, W . Shen, X. Y ang, Q. Feng, Z. Jiang, and X. Y ang, “Self- supervised character-to-character distillation for text recognition, ” in 2023 IEEE/CVF International Conference on Computer V ision (ICCV) , 2023, pp. 19 416–19 427. [25] T . Guan, C. Gu, J. Tu, X. Y ang, Q. Feng, Y . Zhao, and W . Shen, “Self- supervised implicit glyph attention for text recognition, ” in Proceedings of the IEEE/CVF Conference on Computer V ision and P attern Recogni- tion , 2023, pp. 15 285–15 294. [26] T . Zheng, Z. Chen, S. Fang, H. Xie, and Y .-G. Jiang, “Cdistnet: Perceiving multi-domain character distance for robust text recognition, ” International Journal of Computer V ision , v ol. 132, no. 2, pp. 300–318, 2024. [27] J.-N. Li, X.-Q. Liu, X. Luo, and X.-S. Xu, “V olter: V isual collaboration and dual-stream fusion for scene text recognition, ” IEEE T ransactions on Multimedia , 2024. [28] J. W ei, H. Zhan, Y . Lu, X. T u, B. Y in, C. Liu, and U. Pal, “Image as a language: Revisiting scene text recognition via balanced, unified and synchronized vision-language reasoning network, ” in Proceedings of the AAAI Conference on Artificial Intelligence , vol. 38, no. 6, 2024, pp. 5885–5893. [29] B. Na, Y . Kim, and S. Park, “Multi-modal text recognition networks: Interactiv e enhancements between visual and semantic features, ” in Eur opean Conference on Computer V ision . Springer, 2022, pp. 446– 463. [30] C. Da, P . W ang, and C. Y ao, “Levenshtein ocr, ” in European Conference on Computer V ision . Springer , 2022, pp. 322–338. [31] S. Fang, H. Xie, Y . W ang, Z. Mao, and Y . Zhang, “Read like humans: Autonomous, bidirectional and iterativ e language modeling for scene text recognition, ” in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , 2021, pp. 7098–7107. [32] Y . Liu, B. Y ang, Q. Liu, Z. Li, Z. Ma, S. Zhang, and X. Bai, “T extmonkey: An ocr-free large multimodal model for understanding document, ” arXiv pr eprint arXiv:2403.04473 , 2024. [33] H. Feng, Q. Liu, H. Liu, W . Zhou, H. Li, and C. Huang, “Docpedia: Un- leashing the power of large multimodal model in the frequency domain IEEE TRANSA CTIONS ON COGNITIVE AND DEVELOPMENT AL SYSTEMS (IEEE TCDS) , 2026 11 for versatile document understanding, ” arXiv pr eprint arXiv:2311.11810 , 2023. [34] H. W ei, L. Kong, J. Chen, L. Zhao, Z. Ge, J. Y ang, J. Sun, C. Han, and X. Zhang, “V ary: Scaling up the vision vocabulary for large vision-language model, ” in Eur opean Conference on Computer V ision . Springer , 2025, pp. 408–424. [35] A. Hu, H. Xu, J. Y e, M. Y an, L. Zhang, B. Zhang, C. Li, J. Zhang, Q. Jin, F . Huang et al. , “mplug-docowl 1.5: Unified structure learning for ocr-free document understanding, ” arXiv pr eprint arXiv:2403.12895 , 2024. [36] H. W ei, C. Liu, J. Chen, J. W ang, L. Kong, Y . Xu, Z. Ge, L. Zhao, J. Sun, Y . Peng et al. , “General ocr theory: T owards ocr-2.0 via a unified end-to-end model, ” arXiv preprint , 2024. [37] X. W ang, J. Jiang, D. Li, F . W ang, L. Zhu, Y . W ang, Y . Tian, and J. T ang, “Eventstr: A benchmark dataset and baselines for ev ent stream based scene text recognition, ” arXiv preprint , 2025. [38] X. W ang, J. Jiang, Q. Chen, L. Chen, L. Zhu, Y . W ang, Y . Tian, and J. T ang, “Estr-cot: T ow ards explainable and accurate event stream based scene text recognition with chain-of-thought reasoning, ” arXiv preprint arXiv:2507.02200 , 2025. [39] D. Zhang, Q. Ding, P . Duan, C. Zhou, and B. Shi, “Data association between event streams and intensity frames under div erse baselines, ” in Eur opean Confer ence on Computer V ision . Springer, 2022, pp. 72–90. [40] Z. Chen, Z. Liao, D. Ma, H. T ang, Q. Zheng, and G. Pan, “Evhdr- nerf: Building high dynamic range radiance fields with single exposure images and ev ents, ” in Pr oceedings of the AAAI Conference on Artificial Intelligence , vol. 39, no. 3, 2025, pp. 2376–2384. [41] Y . Y ang, J. Han, J. Liang, I. Sato, and B. Shi, “Learning e vent guided high dynamic range video reconstruction, ” in Pr oceedings of the IEEE/CVF Conference on Computer V ision and P attern Recognition , 2023, pp. 13 924–13 934. [42] Z. Chen, Z. Lu, D. Ma, H. T ang, X. Jiang, Q. Zheng, and G. Pan, “Event- id: Intrinsic decomposition using an e vent camera, ” in Pr oceedings of the 32nd ACM International Conference on Multimedia , 2024, pp. 10 095– 10 104. [43] G. Liang, K. Chen, H. Li, Y . Lu, and L. W ang, “T owards robust event- guided low-light image enhancement: a large-scale real-world event- image dataset and novel approach, ” in Proceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024, pp. 23– 33. [44] M. Lu, Z. Chen, Y . Liu, D. Ma, H. T ang, Q. Zheng, and G. Pan, “Edygs: Event enhanced dynamic 3d radiance fields from blurry monocular video, ” in Proceedings of the Thirty-F ourth International Joint Con- fer ence on Artificial Intelligence , 2025, pp. 1684–1692. [45] Y . Liu, Z. Chen, H. Y an, D. Ma, H. T ang, Q. Zheng, and G. Pan, “E- nemf: Event-based neural motion field for novel space-time vie w synthe- sis of dynamic scenes, ” in Proceedings of the IEEE/CVF International Confer ence on Computer V ision , 2025, pp. 10 854–10 864. [46] H. Y an, Z. Lu, Z. Chen, D. Ma, H. T ang, Q. Zheng, and G. Pan, “Evstvsr: Event guided space-time video super-resolution, ” in Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 39, no. 9, 2025, pp. 9085–9093. [47] Y . Ma, P . Duan, Y . Hong, C. Zhou, Y . Zhang, J. Ren, and B. Shi, “Color4e: Event demosaicing for full-color ev ent guided image deblur- ring, ” in Pr oceedings of the 32nd A CM International Conference on Multimedia , 2024, pp. 661–670. [48] H. Li, H. Shi, and X. Gao, “ A coarse-to-fine fusion network for event- based image deblurring, ” in Pr oceedings of the International Joint Confer ence on Artificial Intelligence , 2024, pp. 974–982. [49] F . Guo, P . Ren, and C. Luo, “Utnet: ev ent-rgb multimodal fusion model for underwater transparent org anism detection, ” Intelligent Marine T ec h- nology and Systems , vol. 3, no. 1, p. 18, 2025. [50] Y . Qi, L. Zhu, Y . Zhang, and J. Li, “E2nerf: Event enhanced neural radiance fields from blurry images, ” in Pr oceedings of the IEEE/CVF International Conference on Computer V ision , 2023, pp. 13 254–13 264. [51] L. F an, J. Y ang, L. W ang, J. Zhang, X. Lian, and H. Shen, “Efficient spiking neural network for rgb–ev ent fusion-based object detection, ” Electr onics , vol. 14, no. 6, p. 1105, 2025. [52] Z. Liu, Y . Lin, Y . Cao, H. Hu, Y . W ei, Z. Zhang, S. Lin, and B. Guo, “Swin transformer: Hierarchical vision transformer using shifted windows, ” in Pr oceedings of the IEEE/CVF international confer ence on computer vision , 2021, pp. 10 012–10 022. [53] X. Zhai, B. Mustafa, A. Kolesniko v , and L. Beyer , “Sigmoid loss for language image pre-training, ” in Pr oceedings of the IEEE/CVF international conference on computer vision , 2023, pp. 11 975–11 986. [54] J. Li, D. Li, S. Sav arese, and S. Hoi, “Blip-2: Bootstrapping language- image pre-training with frozen image encoders and large language models, ” in International confer ence on machine learning . PMLR, 2023, pp. 19 730–19 742. [55] R. Rombach, A. Blattmann, D. Lorenz, P . Esser, and B. Ommer, “High- resolution image synthesis with latent diffusion models, ” in Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern reco gnition , 2022, pp. 10 684–10 695. [56] P . W ang, S. Bai, S. T an, S. W ang, Z. Fan, J. Bai, K. Chen, X. Liu, J. W ang, W . Ge et al. , “Qwen2-vl: Enhancing vision-language model’ s perception of the world at any resolution, ” arXiv pr eprint arXiv:2409.12191 , 2024. [57] I. Chami, R. Y ing, C. Ré, and J. Leskovec, “Hyperbolic graph con vo- lutional neural networks, ” Advances in neural information processing systems , vol. 32, p. 4869, 2019. [58] A. Baevski and M. Auli, “ Adaptive input representations for neural language modeling, ” arXiv preprint , 2018. [59] Q. W ang, B. Li, T . Xiao, J. Zhu, C. Li, D. F . W ong, and L. S. Chao, “Learning deep transformer models for machine translation, ” in Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics , 2019, pp. 1810–1822. [60] X. Y ang, Z. Qiao, and Y . Zhou, “Ipad: Iterative, parallel, and diffusion- based network for scene text recognition, ” International Journal of Computer V ision , 2025. [61] S. Bai, K. Chen, X. Liu, J. W ang, W . Ge, S. Song, K. Dang, P . W ang, S. W ang, J. T ang, H. Zhong, Y . Zhu, M. Y ang, Z. Li, J. W an, P . W ang, W . Ding, Z. Fu, Y . Xu, J. Y e, X. Zhang, T . Xie, Z. Cheng, H. Zhang, Z. Y ang, H. Xu, and J. Lin, “Qwen2.5-vl technical report, ” arXiv preprint arXiv:2502.13923 , 2025. [62] M. Y ang, B. Y ang, M. Liao, Y . Zhu, and X. Bai, “Class-aware mask- guided feature refinement for scene text recognition, ” P attern Recogni- tion , vol. 149, p. 110244, 2024. [63] M. Y ang, M. Liao, P . Lu, J. W ang, S. Zhu, H. Luo, Q. Tian, and X. Bai, “Reading and writing: Discriminativ e and generative modeling for self-supervised text recognition, ” in Pr oceedings of the 30th ACM International Conference on Multimedia , 2022, pp. 4214–4223. [64] P . W ang, C. Da, and C. Y ao, “Multi-granularity prediction for scene text recognition, ” in Eur opean Conference on Computer V ision . Springer , 2022, pp. 339–355. [65] W . Hu, Y . Xu, Y . Li, W . Li, Z. Chen, and Z. Tu, “Bliv a: A simple multimodal llm for better handling of text-rich visual questions, ” in Pr oceedings of the AAAI Conference on Artificial Intelligence , vol. 38, no. 3, 2024, pp. 2256–2264. [66] X. Xie, L. Fu, Z. Zhang, Z. W ang, and X. Bai, “T ow ard understand- ing w ordart: Corner -guided transformer for scene text recognition, ” in Eur opean conference on computer vision . Springer , 2022, pp. 303–321. [67] D. Karatzas, L. Gomez-Bigorda, A. Nicolaou, S. Ghosh, A. Bagdanov , M. Iwamura, J. Matas, L. Neumann, V . R. Chandrasekhar , S. Lu et al. , “Icdar 2015 competition on robust reading, ” in 2015 13th international confer ence on document analysis and recognition (ICDAR) . IEEE, 2015, pp. 1156–1160. [68] X. Xie, L. Fu, Z. Zhang, Z. W ang, and X. Bai, “T ow ard understand- ing w ordart: Corner -guided transformer for scene text recognition, ” in Eur opean conference on computer vision . Springer , 2022, pp. 303–321. [69] H. Rebecq, D. Gehrig, and D. Scaramuzza, “Esim: an open event camera simulator , ” in Confer ence on robot learning . PMLR, 2018, pp. 969– 982. [70] A. Paszke, S. Gross, F . Massa, A. Lerer, J. Bradbury , G. Chanan, T . Killeen, Z. Lin, N. Gimelshein, L. Antiga et al. , “Pytorch: An imperativ e style, high-performance deep learning library , ” Advances in neural information pr ocessing systems , vol. 32, pp. 8026–8037, 2019. [71] D. P . Kingma and J. Ba, “ Adam: A method for stochastic optimization, ” arXiv preprint arXiv:1412.6980 , 2014. [72] L. N. Smith and N. T opin, “Super-conv ergence: V ery fast training of neural networks using large learning rates, ” in Artificial intelligence and machine learning for multi-domain operations applications , vol. 11006. SPIE, 2019, pp. 369–386. [73] W . Hamilton, Z. Y ing, and J. Leskovec, “Inductive representation learning on large graphs, ” Advances in neural information pr ocessing systems , vol. 30, 2017. [74] P . V elicko vic, G. Cucurull, A. Casanova, A. Romero, P . Lio, Y . Bengio et al. , “Graph attention networks, ” stat , vol. 1050, no. 20, pp. 10–48 550, 2017. [75] Y . Feng, H. Y ou, Z. Zhang, R. Ji, and Y . Gao, “Hypergraph neural net- works, ” in Pr oceedings of the AAAI confer ence on artificial intelligence , vol. 33, no. 01, 2019, pp. 3558–3565.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment