Budgeted Active Experimentation for Treatment Effect Estimation from Observational and Randomized Data

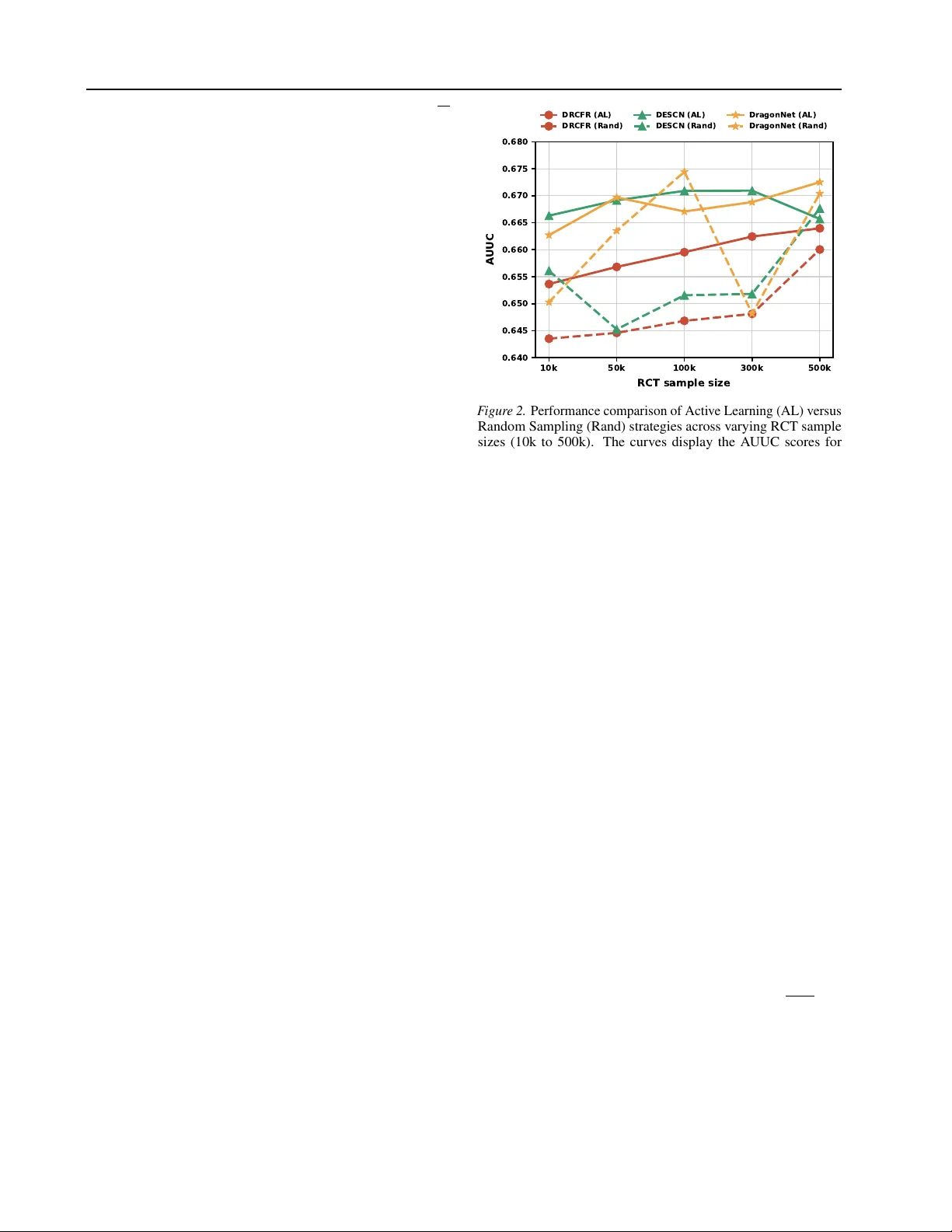

Estimating heterogeneous treatment effects is central to data-driven decision-making, yet industrial applications often face a fundamental tension between limited randomized controlled trial (RCT) budgets and abundant but biased observational data co…
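The tension between a small unbiased trial and a large biased observational sample can be seen in a toy simulation. This is a minimal sketch under stated assumptions, not the authors' budgeted active experimentation method. The data-generating process, the helper names `tau`, `simulate`, and `diff_in_means`, and all sample sizes and coefficients are hypothetical. A binary confounder `x` raises both the chance of treatment and the outcome in the observational sample, while the randomized sample assigns treatment by a fair coin. Under this setup the true average effect is 2.0. The naive observational difference in means has expectation 4.4, so it stays biased no matter how much data is collected. The small randomized estimate is centred on 2.0 but is noisier.

```python
import random
import statistics


def tau(x: int) -> float:
    """Hypothetical heterogeneous effect: 1.0 when x == 0, 3.0 when x == 1."""
    return 1.0 + 2.0 * x


def simulate(n: int, randomized: bool, rng: random.Random) -> list[tuple[int, float]]:
    """Draw (treatment, outcome) pairs from an assumed toy model."""
    data = []
    for _ in range(n):
        x = 1 if rng.random() < 0.5 else 0  # binary confounder, P(x=1) = 0.5
        if randomized:
            p_treat = 0.5  # RCT: assignment independent of x
        else:
            p_treat = 0.8 if x == 1 else 0.2  # observational: x drives treatment
        t = 1 if rng.random() < p_treat else 0
        # x also shifts the outcome directly, which is what creates confounding
        y = tau(x) * t + 3.0 * x + rng.gauss(0.0, 1.0)
        data.append((t, y))
    return data


def diff_in_means(data: list[tuple[int, float]]) -> float:
    """Naive estimate: mean outcome of treated minus mean outcome of controls."""
    treated = [y for t, y in data if t == 1]
    control = [y for t, y in data if t == 0]
    return statistics.mean(treated) - statistics.mean(control)


TRUE_ATE = 0.5 * tau(0) + 0.5 * tau(1)  # average of the two stratum effects

rng = random.Random(0)
obs_est = diff_in_means(simulate(20_000, randomized=False, rng=rng))  # large, confounded
rct_est = diff_in_means(simulate(400, randomized=True, rng=rng))      # small, randomized
print(f"true ATE={TRUE_ATE:.2f}  observational={obs_est:.2f}  RCT={rct_est:.2f}")
```

Increasing the observational sample only shrinks the variance around the wrong value, while enlarging the RCT moves the estimate toward the truth at the cost of more trial budget. This trade-off is the kind of tension the abstract describes.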

Authors: Jiacan Gao, Xinyan Su, Mingyuan Ma