Enhancing LLM-Based Test Generation by Eliminating Covered Code

Automated test generation is essential for software quality assurance, with coverage rate serving as a key metric to ensure thorough testing. Recent advancements in Large Language Models (LLMs) have shown promise in improving test generation, particu…

Authors: WeiZhe Xu, Mengyu Liu, Fanxin Kong

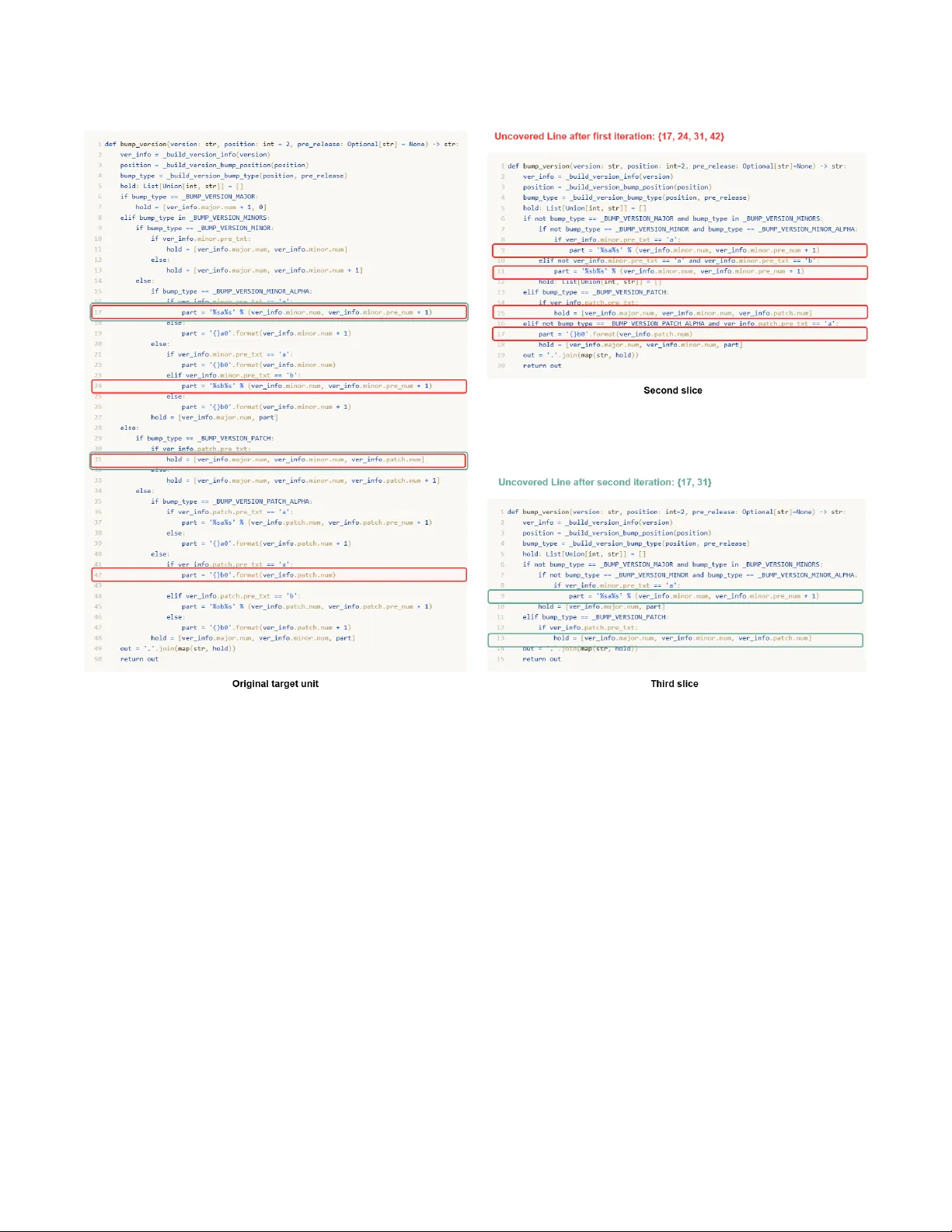

Weizhe Xu, University of Notre Dame, Notre Dame, IN, USA, wxu3@nd.edu

Mengyu Liu, Washington State University, Richland, WA, USA, mengyu.liu@wsu.edu

Fanxin Kong, University of Notre Dame, Notre Dame, IN, USA, fkong@nd.edu

Abstract

Automated test generation is essential for software quality assurance, with coverage rate serving as a key metric to ensure thorough testing. Recent advancements in Large Language Models (LLMs) have shown promise in improving test generation, particularly in achieving higher coverage. However, while existing LLM-based test generation solutions perform well on small, isolated code snippets, they struggle when applied to complex methods under test. To address these issues, we propose a scalable LLM-based unit test generation method. Our approach consists of two key steps. The first step is context information retrieval, which uses both LLMs and static analysis to gather relevant contextual information associated with the complex methods under test. The second step, iterative test generation with code elimination, repeatedly generates unit tests for the code slice, tracks the achieved coverage, and selectively removes code segments that have already been covered. This process simplifies the testing task and mitigates issues arising from token limits or reduced reasoning effectiveness associated with excessively long contexts. Through comprehensive evaluations on open-source projects, our approach outperforms state-of-the-art LLM-based and search-based methods, demonstrating its effectiveness in achieving high coverage on complex methods.

Keywords

Unit Test Generation, Large Language Models, Coverage

1 Introduction

Achieving high code coverage in automated test generation is a critical yet challenging problem in software quality assurance, as insufficient coverage often leaves bugs undetected.
Traditional approaches, such as search-based [5, 12], constraint-based [2, 7], and random-based [13, 18] methods, frequently fall short when facing complex methods under test, as their heuristic explorations cannot efficiently handle the vast and intricate execution spaces.

Recently, large language models (LLMs) have presented new opportunities for improving the coverage rate of unit test generation. Studies such as ChatTester [29] and ChatUniTest [4] have evaluated LLMs, such as GPT-3.5, demonstrating their ability to achieve a higher coverage rate compared to conventional techniques like Evosuite [5]. However, existing LLM-based test generation methods work well on simple code snippets but struggle with complex functions (cyclomatic complexity [14] > 10), which pose moderate or higher risk [21, 27]. Leveraging LLMs to generate high-coverage test code for complex methods is crucial for moving LLM-driven testing from toy examples to practical, production-level applications.

Conference’XX, XXX, XXX 2026. ACM ISBN 978-x-xxxx-xxxx-x/YYYY/MM https://doi.org/10.1145/nnnnnnn.nnnnnnn

This is a non-trivial task due to two challenging issues. First, current LLMs have limited reasoning capabilities. Their performance declines on complex methods involving deeply nested conditions and multiple execution paths, often resulting in low coverage. Second, prompt efficiency is a major concern. LLMs face strict token limits (e.g., 16K tokens in GPT-4o), making it difficult to include all relevant context for complex methods. Consequently, enhancing prompt efficiency is essential to avoid failures due to token overflow. It is also important for improving the effectiveness of the LLM. While some approaches [4, 29] try to pack more context into prompts, excessive or irrelevant information can introduce noise and hinder reasoning [10, 22, 30].
Multi-turn prompting may improve reasoning, but it increases redundancy and token usage. Efficient prompting is thus critical to reduce noise, stay within limits, and improve output quality.

To address these challenges, we propose an LLM-based unit test generation framework for Python that incrementally eliminates already-covered code using a divide-and-conquer strategy. Our method consists of two key steps. (1) Context information retrieval: we collect and summarize all relevant code dependencies for the target unit, including external packages, helper functions, and method definitions. (2) Iterative test generation with code elimination: the LLM repeatedly generates and refines test cases while the framework systematically removes code segments that have already been covered, ensuring that such removals do not alter the execution paths of any uncovered lines. After each iteration, this pruning produces a simplified program slice that serves as the input for the next round of test generation. By progressively reducing redundant code, our method keeps the LLM's attention focused on the remaining uncovered parts, narrows the reasoning scope, simplifies the test generation task, and mitigates performance degradation caused by irrelevant or redundant context.

The main contributions of our study are as follows.

- To improve coverage performance on complex methods, we propose a fully automated LLM-based unit test generation approach tailored for Python.
- The approach generates unit tests iteratively and improves both efficiency and effectiveness by systematically eliminating already covered code.
- We conduct a comprehensive evaluation across multiple open-source projects, comparing against three state-of-the-art LLM-based unit test generation methods and one classical search-based method, and demonstrate the effectiveness of our method.
2 Related Works

In this section, we provide a brief overview of unit test generation methods. A "unit" typically refers to a function, method, or procedure within the code. Unit test generation aims to automatically create test cases that validate the correctness of software by executing different code paths. Developers hope the generated unit tests can cover as many branches and lines as possible.

2.1 Conventional Unit Test Generation Methods

Researchers have explored various approaches to achieve high coverage, including search-based software testing (SBST) [5, 12], symbolic execution [3, 8], and deep-learning-based test generation [24]. SBST methods employ strategies like evolutionary algorithms in Evosuite [5] and coverage-directed random testing in Randoop [18]. However, their effectiveness is limited in complex software due to the vast search space [15]. Symbolic execution tools, such as DART [8], systematically explore feasible execution paths but suffer from scalability issues due to path explosion [26]. Deep learning models generate test cases directly from code and offer scalability by leveraging human-written tests across diverse datasets [24]. While they produce varied inputs beyond SBST's capabilities, they often generate code that is non-compliant or fails to execute [28].

2.2 LLM-based Unit Test Generation Methods

In recent years, LLMs have demonstrated their potential to effectively handle code-related tasks such as code generation and code repair. Several works have already leveraged LLMs for unit test generation. ChatTester [29] and ChatUniTest [4] show that LLMs can outperform SBST methods. CODAMOSA [11] integrates LLMs with SBST methods, leveraging LLMs to assist when SBST methods can no longer effectively explore further.
TELPA [27] utilizes carefully designed prompts to assist LLMs in generating unit test cases that cover hard-to-cover branches. SymPrompt [20], drawing inspiration from symbolic execution, leverages execution paths as structural guides for the LLM.

Although recent methods [4, 11, 19, 29] improve LLM-based test generation by providing more context, they overlook the scalability challenge for complex methods. Scaling to complex methods enlarges the analysis scope, introduces noise, and strains LLMs' limited generation capacity, while also risking token overflow in multi-turn interactions. Consequently, existing approaches still struggle to achieve satisfactory coverage for complex real-world methods. HITS [25] attempts to address this issue by splitting the method under test. However, this approach relies on the LLM to perform the slicing. Due to hallucination, it may miss important cross-slice information or introduce irrelevant or even new code generated by the LLM itself. In addition, when dealing with larger code under test, a single round of splitting may not be sufficient.

In contrast, our approach fundamentally addresses this issue by eliminating already covered non-essential code. Our method is based on static analysis and removes only the lines that are irrelevant to the execution of the uncovered lines, thereby avoiding information loss during cutting. Moreover, our approach performs multiple rounds of elimination for each code slice. After each elimination process, the LLM's conversation history is cleared to minimize the impact of multi-turn interactions.

3 Methodology

In this section, we present an overview of our proposed framework, as illustrated in Fig. 1. The framework aims to generate high-coverage unit test cases for Python projects through two main steps: context information retrieval and iterative test generation with covered code elimination.
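As a rough orientation for the second step, the loop that alternates elimination and generation might be sketched as follows. This is only an illustration under stated assumptions: `iterate_with_elimination`, `eliminate`, and `generate` are hypothetical names, the slice from the first step is assumed to exist already, and both callables are stubbed rather than backed by static analysis or an LLM.

```python
from typing import Callable, Iterable, List, Set, Tuple


def iterate_with_elimination(
    target_lines: Iterable[int],
    eliminate: Callable[[Set[int]], str],
    generate: Callable[[str, Set[int]], Tuple[List[str], Set[int]]],
) -> Tuple[List[str], Set[int]]:
    """Alternate elimination and generation until no further progress is made."""
    uncov = set(target_lines)  # initially nothing is covered
    tests: List[str] = []
    while uncov:
        # Hypothetical: the slice is always derived from the original unit.
        temp_slice = eliminate(uncov)
        new_tests, still_uncov = generate(temp_slice, uncov)
        if len(still_uncov) >= len(uncov):
            break  # assumption: tests from a round without progress are dropped
        tests.extend(new_tests)
        uncov = still_uncov
    return tests, uncov


# Stubs standing in for static analysis and the LLM (illustration only).
def fake_eliminate(uncov: Set[int]) -> str:
    return "lines kept: " + ",".join(str(n) for n in sorted(uncov))


def fake_generate(temp_slice: str, uncov: Set[int]) -> Tuple[List[str], Set[int]]:
    coverable = sorted(n for n in uncov if n != 9)  # pretend line 9 is unreachable
    if not coverable:
        return [], set(uncov)
    hit = coverable[0]
    return [f"test_line_{hit}"], uncov - {hit}


tests, remaining = iterate_with_elimination({1, 2, 9}, fake_eliminate, fake_generate)
print(tests, remaining)
```

With these stubs the loop covers one line per round and stops once the only remaining line cannot be covered.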
3.1 Step I: Context Information Retrieval

The context information retrieval step extracts relevant dependencies and summarizes them to support unit test generation for a complex target method under test, as shown in the green dashed box in Fig. 1. Given a target method, we first identify its internal dependencies, whose definitions are located in the same source file. This is done by analyzing intra-file function calls and internal variable references through static analysis over the Abstract Syntax Tree (AST) [23]. The definitions of these internal dependencies, together with the code of the target method itself, collectively form a basic code slice. Next, we identify information about external dependencies by analyzing cross-module function calls and external variable references. For dependencies defined within the project, we collect their corresponding definition code to form a dependency code file, similar to [4, 25, 27].

However, this dependency code file is not suitable for direct input to the LLM, as it often contains excessive irrelevant information and is typically much larger than the code slice itself. Including such content in the prompt as auxiliary information for unit test generation may consume a significant number of tokens and introduce substantial noise. To ensure prompt efficiency and reduce noise, our method utilizes LLMs to summarize the dependency code file. Our method applies a one-shot prompting strategy to summarize the definition of each function collected in the dependency code file. Using a manually constructed example, we guide the LLM to summarize each function into its signature and a high-level description of its underlying logic.

The summarized dependencies, combined with the code slice, form the prefix for subsequent unit test generation.
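The identification of internal dependencies described above can be illustrated with Python's standard `ast` module. The sketch below is a simplification under explicit assumptions: it follows only plain intra-file calls such as `helper(x)`, resolves them transitively (our assumption), and ignores variable references, methods, and attribute calls. The names `internal_dependencies`, `basic_code_slice`, and `EXAMPLE` are hypothetical and not taken from the framework.

```python
import ast

EXAMPLE = '''\
import os

def normalize(path):
    return os.path.normpath(path)

def join(a, b):
    return normalize(a + "/" + b)

def unused():
    return 0

def target(a, b):
    return len(join(a, b))
'''

FUNC_TYPES = (ast.FunctionDef, ast.AsyncFunctionDef)


def internal_dependencies(source, target):
    """Names of same-file functions that `target` calls, directly or indirectly."""
    tree = ast.parse(source)
    functions = {n.name: n for n in tree.body if isinstance(n, FUNC_TYPES)}
    found = set()
    worklist = [target]
    while worklist:
        current = worklist.pop()
        for node in ast.walk(functions[current]):
            # Only plain-name calls are followed; attribute calls are skipped.
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                callee = node.func.id
                if callee in functions and callee != target and callee not in found:
                    found.add(callee)
                    worklist.append(callee)
    return found


def basic_code_slice(source, target):
    """Concatenate the target and its internal dependencies in file order."""
    tree = ast.parse(source)
    keep = internal_dependencies(source, target) | {target}
    parts = [
        ast.get_source_segment(source, n)
        for n in tree.body
        if isinstance(n, FUNC_TYPES) and n.name in keep
    ]
    return "\n\n".join(parts)


deps = internal_dependencies(EXAMPLE, "target")
code_slice = basic_code_slice(EXAMPLE, "target")
print(sorted(deps))
```

In this toy file, `unused` is left out of the slice because nothing reachable from `target` calls it, while `len` and `os.path.normpath` are ignored because they are not defined in the file.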
Summarizing project-local dependencies is essential, as the content of local modules is typically project-specific and not encountered during LLM training, making it difficult for the model to infer behavior based solely on module names. Third-party libraries such as numpy or pandas, which LLMs have generally seen during training, are excluded from summarization. By focusing only on strongly relevant and compressed contextual information, this step helps reduce token overhead, minimize noise, and improve the accuracy and quality of the generated unit tests.

3.2 Step II: Iterative Test Generation with Covered Code Elimination

This is the core step, which takes the slice & dependencies file obtained from the previous context information retrieval step as input and leverages the LLM to generate unit tests with a high coverage rate. This step can be divided into two components. One is LLM-based test generation for a code slice without elimination, and the other is code elimination.

Figure 1: The Overview of Our Framework. Our approach consists of two steps: context information retrieval and iterative test generation with covered code elimination, which are illustrated by the green and orange dashed boxes in the figure, respectively.

As shown in the yellow dashed box in Fig. 1, the slice & dependencies file is first sent to the code elimination component. At this time, the coverage report contains no covered lines within the target unit. Therefore, the code elimination component does not perform any elimination, and the temporary slice & dependencies file remains identical to the original slice & dependencies file. This file is then passed to the LLM-based test generation without elimination component to generate unit tests. If all lines in the target unit are covered, the resulting tests are added to the final test suite.
If there are still uncovered lines, a coverage report is produced and forwarded to the code elimination component. This component eliminates portions of the code slice that are irrelevant to the uncovered lines, while aiming to minimize the impact on the execution of the uncovered lines. Then a new temporary slice & dependencies file is created. This process repeats until either all lines in the target method are covered or the LLM-based test generation without elimination component can no longer generate tests that cover any of the remaining uncovered lines.

Figure 2: Internal Structure of the LLM-based Test Generation without Elimination Component.

3.2.1 LLM-based Test Generation without Elimination. This component receives the temporary slice & dependencies file from the code elimination component and outputs the generated test cases along with the corresponding coverage report. The detailed internal structure is illustrated in Fig. 2.

In the beginning, the temporary slice & dependencies file is used to construct a prompt. The format of the prompt is shown in Fig. 3. In this format, the sections enclosed in {{·}} are placeholders that are dynamically filled based on the code under test when generating each prompt. For example, {{code_under_test}} will be replaced with the specific code slice.

Figure 3: The Format of the Prompt. {{·}} serves as a placeholder, which will be replaced by variables during actual use.

We use this prompt to guide the LLM in generating unit tests, which are then evaluated by a test validator for coverage. All previously generated tests are executed, and coverage is measured against the original Python file. If full coverage is achieved, the validator outputs the complete test set; otherwise, it checks for newly covered lines.
If coverage improves, the updated report is forwarded to the code elimination component; if not, refinement prompts are generated to guide further test improvement. Runtime errors from failed tests are also collected and incorporated into refinement prompts. This process repeats until reaching a predefined iteration limit.

The overall process of LLM-based test generation without elimination is given in Algorithm 1. The return values 1, 0, and -1 indicate, respectively, that the target slice has been fully covered and the process terminates; that new lines have been covered, which triggers another round of code elimination; and that the iteration limit has been exceeded, leading to termination. In Lines 1 and 2, the algorithm constructs a new prompt and starts a new dialogue with the LLM. Starting from Line 4, the algorithm repeatedly prompts the LLM to generate tests, validates them, and takes different actions based on the validation results.
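The control flow described above, with its three return flags and the refinement fallback, can be sketched in Python. This is a minimal sketch, not the authors' implementation: the LLM dialogue and the validator are passed in as plain callables, the prompt strings are placeholders invented for illustration, and the names `generate_without_elimination`, `fake_llm`, and `fake_validator` are hypothetical.

```python
from typing import Callable, List, Set, Tuple

FULLY_COVERED, PROGRESS, LIMIT_REACHED = 1, 0, -1


def generate_without_elimination(
    slice_text: str,
    limit: int,
    tests: List[str],
    uncov: Set[int],
    llm: Callable[[str], str],
    validator: Callable[[List[str]], Set[int]],
) -> Tuple[List[str], Set[int], int]:
    # Placeholder prompt; the real prompt format is not reproduced here.
    prompt = f"Write unit tests covering lines {sorted(uncov)} of:\n{slice_text}"
    iteration = 0
    while iteration <= limit:  # mirrors the pseudocode: at most limit + 1 rounds
        tests.append(llm(prompt))
        new_uncov = validator(tests)  # lines still uncovered by all tests so far
        if not new_uncov:
            return tests, new_uncov, FULLY_COVERED
        if len(new_uncov) < len(uncov):
            return tests, new_uncov, PROGRESS  # hand over to code elimination
        # No progress: ask for a refinement instead of starting over.
        prompt = f"The tests so far still miss lines {sorted(new_uncov)}; refine them."
        uncov = new_uncov
        iteration += 1
    return tests, uncov, LIMIT_REACHED


# Stubs for illustration: the second generated test newly covers line 3.
def fake_llm(prompt: str) -> str:
    return "def test_placeholder(): pass"


def fake_validator(all_tests: List[str]) -> Set[int]:
    return {3, 7} if len(all_tests) < 2 else {7}


tests, uncov, flag = generate_without_elimination(
    "code", 5, [], {3, 7}, fake_llm, fake_validator
)
print(len(tests), uncov, flag)
```

A fresh callable per invocation plays the role of the new dialogue; in a real setting the callable would carry the conversation history for the refinement turns.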
Algorithm 1 LLM-based Test Generation without Elimination

    Require: slice, limit, tests, uncov
    Ensure: tests, uncov, flag
     1: prompt ← prompt_constructor(slice, uncov)
     2: llm ← new LLM_dialogue()
     3: iteration ← 0
     4: while iteration ≤ limit do
     5:     test ← llm(prompt)
     6:     tests.add(test)
     7:     new_uncov ← validator(tests)
     8:     if new_uncov = [ ] then
     9:         return tests, new_uncov, 1
    10:     else if len(new_uncov) < len(uncov) then
    11:         return tests, new_uncov, 0
    12:     else
    13:         prompt ← refining(new_uncov)
    14:     end if
    15:     uncov ← new_uncov
    16:     iteration ← iteration + 1
    17: end while
    18: return tests, uncov, −1

Through this iterative LLM invocation process, we make the most of the LLM's limited code-related capabilities to generate test cases for a slice & dependencies file. There are various ways to construct such prompts [20, 27], but prompt engineering is not our focus, and existing methods can be readily integrated into our framework.

3.2.2 Code Elimination. This component takes the coverage analysis report, along with the original code slice & dependencies file, as input and generates a new temporary slice & dependencies file. It systematically removes as many already covered lines as possible while preserving the original execution behavior, essentially decomposing a complex problem into simpler subproblems.

Specifically, all the uncovered lines need to be preserved. Then, we construct a fine-grained control flow graph (CFG) of the original target method based on the AST. A CFG is a directed graph that represents all possible statement execution paths through a program, where nodes correspond to statements and edges indicate control flow between them.
From the perspective of the set of execution paths, our goal is to eliminate all execution paths that do not contain any uncovered line. For each uncovered line, we perform a bidirectional breadth-first search (BFS) on the fine-grained CFG. All nodes reached during these traversals correspond to statements that must be preserved, as they are the statements that can appear on execution paths that include the uncovered line. Finally, it is also necessary to refine some retained statements. For example, in an if ... else ... structure, if the if branch becomes empty after pruning, the statements in the else branch should be transformed into an if not condition to ensure syntactic correctness and preserve program logic.

Algorithm 2 Code Elimination

    Require: uncov, target
    Ensure: new_target
    1: preserve ← uncov
    2: cfg ← construct_CFG(target)
    3: for each line in uncov do
    4:     necessities ← bidirect_BFS(line, cfg)
    5:     preserve.add(necessities)
    6: end for
    7: new_target ← fix(preserve, target)
    8: return new_target

The overall process of code elimination is given in Algorithm 2. The algorithm takes as input the set of uncovered lines (uncov) and the original target unit (target), and returns a refined target unit new_target with irrelevant code eliminated. In Line 1, all uncovered lines are initially added to the set preserve, which indicates the lines that must be preserved. In Line 2, the algorithm uses the function construct_CFG(·) to construct a fine-grained CFG of the original target method. Lines 3-6 iterate over each uncovered line. For each such line, bidirectional BFS is performed on the CFG to identify all the lines that are required for its execution. These necessary lines are then added to the preserve set.
In Line 7, the function fix(·) is invoked to reconstruct the new target unit by retaining only the lines in the preserve set.

Proposition 3.1 (Behavior Preservation). Let P be the original target unit, U the set of uncovered lines, and P′ the program produced by Algorithm 2. Assume that (1) the fine-grained CFG soundly captures all execution paths of P; (2) all data dependencies of lines in U lie on execution paths leading to them; and (3) the reconstruction step performs only semantics-preserving local rewrites. Then, for any input on which P reaches a line l ∈ U, the transformed program P′ reaches the corresponding line and observes the same values for all variables used at l as in P.

Proof. In Algorithm 2, bidirectional BFS on the fine-grained CFG collects all necessary statements that may occur on any execution path including each l ∈ U. By Assumption (2), all statements that define or influence variables used at l appear on such paths and are therefore preserved. Statements not in the preserved set never occur on paths including l, so removing them cannot affect variable values at l. The reconstruction step applies only semantics-preserving structural rewrites, ensuring that preserved statements execute in the same order and with the same effects. Thus, for any execution of P that includes an uncovered line l, P′ executes the same sequence of preserved statements in the same order, resulting in identical valuations of all variables used in l. □

In practice, code elimination may not perfectly maintain syntactic correctness or program logic. Nevertheless, the essential information for test generation remains preserved in the new slice & dependencies file. Furthermore, all LLM-generated tests are evaluated on the original source file, and each elimination round is applied to the original target unit, preventing error accumulation.
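The preservation step of Algorithm 2 can be illustrated on a toy CFG. The sketch below reads "bidirectional BFS" as one forward and one backward breadth-first traversal from each uncovered line, which is our interpretation of the description rather than a confirmed detail. The CFG is given directly as an adjacency dictionary keyed by line number, so no CFG construction or `fix` step is shown, and the names `reachable`, `lines_to_preserve`, and `CFG` are hypothetical.

```python
from collections import deque

# Toy CFG for a function of the following shape (line numbers are node ids):
#   1: x = parse(s)
#   2: if x > 0:
#   3:     y = fast(x)
#   4:     log(y)
#      else:
#   6:     y = slow(x)
#   7: return y
CFG = {1: [2], 2: [3, 6], 3: [4], 4: [7], 6: [7], 7: []}


def reachable(start, edges):
    """Breadth-first search: all nodes reachable from `start` along `edges`."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def lines_to_preserve(cfg, uncov):
    """Keep every statement lying on some path through an uncovered line."""
    reverse = {}
    for src, dsts in cfg.items():
        for dst in dsts:
            reverse.setdefault(dst, []).append(src)
    preserve = set(uncov)
    for line in uncov:
        preserve |= reachable(line, cfg)      # statements that may run after it
        preserve |= reachable(line, reverse)  # statements that may run before it
    return preserve


kept = lines_to_preserve(CFG, {6})
print(sorted(kept))
```

With line 6 uncovered, lines 3 and 4 are dropped because no path through line 6 visits them. The `if` branch is then empty, which is the situation where the `if not` rewrite described above would apply.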
After completing the process of code elimination, we obtain a smaller temporary slice & dependencies file and pass it to the LLM-based test generation without elimination component. This cycle repeats until the iteration limit in the LLM-based test generation without elimination component is reached while the coverage rate remains unchanged. During this iterative process, each execution of the code elimination not only reduces the token count of the code under test, but also enhances prompt efficiency by narrowing the analysis scope for the LLM. This focused context allows the LLM to concentrate on the relevant logic of the uncovered lines, thereby improving its overall performance.

4 Experiments

In this section, we first present the setup of the experiment and then detail the results and analysis of the experiment.

4.1 Experiment Setup

4.1.1 Benchmarks. We construct our dataset following established practices in prior influential work, including both search-based methods [12] and LLM-based approaches [11, 27]. Our dataset is built under three criteria. First, we include projects used in Pynguin [12] and TELPA [27], as they serve as part of our baselines. Second, we select projects from diverse domains with high GitHub star counts to ensure practical relevance. Third, each project must contain 1–40 complex methods, defined as methods with cyclomatic complexity above 10 and more than 50 lines of code; these methods constitute our target units. These criteria ensure that our dataset is both high-quality and representative, while maintaining a balance between diversity and computational budget.

The corresponding statistics are presented in Table 1. Each row is an open-source project. The "Revision" column indicates the specific version of the project used in our evaluation. The "Domain" column represents the application area of the open-source project. "#MUTs" denotes the number of methods under test in each project.

4.1.2 Baselines.
We select three state-of-the-art LLM-based unit test generation methods and one SBST approach as our baselines. The details are as follows:

- ChatUniTest (CUT) [4]: This is a widely cited LLM-based automated unit test generation framework that employs a generation-validation-repair mechanism to mitigate errors produced by the LLM.
- TELPA [27]: It is a novel LLM-based test generation method that tackles hard-to-cover branches. It refines ineffective tests as counter-examples and integrates program analysis results into the prompt to guide LLMs in generating more effective tests.
- HITS [25]: It is a method that enhances LLM-based unit test generation by slicing complex focal methods into smaller parts, allowing the LLM to generate tests incrementally.
- Pynguin [12]: It is a widely used search-based software testing tool for Python that leverages evolutionary algorithms to automatically generate unit tests aiming to maximize code coverage.

For TELPA, as the original paper does not release the source code, we reimplement the approach based on the descriptions provided. For ChatUniTest and HITS, which are originally designed for generating unit tests in Java, we develop an adapted version tailored for Python.

4.1.3 Experimental Parameters. We use OpenAI's GPT-4o [16] as the LLM in this work since this model strikes a good balance between price and performance on code-related tasks. For the GPT-4o API, we use the default values for the temperature and token length limit parameters. Specifically, the temperature is set to 1.0, and the token length limit is 8096. For each LLM-based method, the number of interaction rounds with the LLM in a dialogue is limited to 5. For Pynguin, we set the max iteration number to 100.

4.2 Coverage Rate Comparison

We present and analyze the line coverage achieved when testing complex Python methods, which serves as the core evaluation of our approach.
Due to space limitations, we report only line coverage, since prior studies [11, 25, 27] have shown that line and branch coverage are strongly correlated across unit test generation methods. Coverage is computed using the widely adopted tool coverage.py [1]. Table 2 summarizes the results, where each row corresponds to a project and each column to a test generation method. Bold values denote the best performance for each project. As shown in the table, our method achieves the highest average line coverage among all methods, and attains the best result in 7 out of the 9 projects, demonstrating its effectiveness on complex target methods.

Overall, existing LLM-based methods struggle with complex functions. For instance, TELPA achieves only 20.51% coverage on the complex target functions in docstring parser, despite reporting 92.20% coverage for the full project in its original paper. This significant drop highlights the scalability limitations of current LLM-based approaches when dealing with highly complex functions. Moreover, TELPA and HITS do not consistently outperform each other, but both perform better than the basic ChatUniTest. This suggests that the context enrichment used in TELPA and the lightweight LLM-guided decomposition adopted by HITS can be effective to some extent, but their overall impact remains limited compared to our method.

The SBST method, Pynguin, also performs poorly on complex functions, yielding 0.00% coverage on five projects. This occurs because its evolutionary search often cannot produce valid inputs that satisfy the strict preconditions, deep control flows, or large input domains of these complex methods. As a result, the generated tests fail to reach the entry paths of the target functions, leading to no statements being covered. This reflects the advantage of LLM-based test generation methods on such functions.
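To make the metric concrete, the sketch below shows how a line-coverage percentage of the kind reported here can be obtained for a single function. It is a standard-library stand-in built on `sys.settrace`, not coverage.py itself and not the authors' validator. The function `classify`, the helper names, and the hand-listed set of executable lines are all hypothetical.

```python
import sys


def covered_lines(func, *args):
    """Line offsets (relative to the `def` line) executed while calling func."""
    code = func.__code__
    hits = set()

    def tracer(frame, event, arg):
        # Record 'line' events that belong to the function under test only.
        if frame.f_code is code and event == "line":
            hits.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # always restore whatever tracer was active
    return hits


def line_coverage(hits, executable):
    """Percentage of executable lines that were hit at least once."""
    return 100.0 * len(hits & executable) / len(executable)


def classify(n):
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


EXECUTABLE = {1, 2, 3, 4, 5}  # body lines of classify, listed by hand

one_test = covered_lines(classify, 5)
two_tests = one_test | covered_lines(classify, -1)
print(line_coverage(one_test, EXECUTABLE), line_coverage(two_tests, EXECUTABLE))
```

Taking the union of the lines hit by all tests mirrors the idea that every previously generated test contributes to the reported coverage.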
| Project | Revision | Domain | #MUTs | # Lines (min) | # Lines (mean) | # Lines (max) |
|---|---|---|---|---|---|---|
| dataclasses-json | dc63902 | Serialization | 6 | 53 | 60 | 85 |
| docstring parser | 4951137 | Text Processing | 36 | 51 | 93 | 260 |
| flutes | 3b7c518 | Utility | 4 | 50 | 70 | 104 |
| flutils | df0f84e | Utility | 18 | 50 | 87 | 180 |
| mimesis | b981966 | Data Processing | 6 | 53 | 64 | 60 |
| sanic | a64d7fe | Microservices | 20 | 52 | 189 | 89 |
| pytutils | d7a37c0 | Utility | 3 | 51 | 67 | 80 |
| thonny | e019c1e | IDE | 20 | 50 | 144 | 78 |
| cookiecutter | b445123 | Utility | 10 | 52 | 172 | 101 |

Table 1: Characteristics of the benchmarks used in the evaluation.

| Project | Our | CUT | TELPA | HITS | Pynguin |
|---|---|---|---|---|---|
| dataclasses-json | 24.27% | 25.12% | 16.50% | 15.53% | 16.57% |
| docstring parser | 40.71% | 22.64% | 20.51% | 33.80% | 16.49% |
| flutes | 49.34% | 45.80% | 49.34% | 48.68% | 0.00% |
| flutils | 41.04% | 16.22% | 35.07% | 20.90% | 15.15% |
| mimesis | 78.82% | 0.00% | 55.29% | 64.71% | 0.00% |
| sanic | 38.95% | 38.32% | 31.70% | 27.76% | 0.00% |
| pytutils | 34.87% | 25.89% | 41.28% | 31.19% | 0.00% |
| thonny | 42.62% | 25.79% | 19.57% | 28.15% | 3.62% |
| cookiecutter | 47.28% | 25.00% | 25.25% | 14.36% | 0.00% |
| Avg. | 42.21% | 24.98% | 32.72% | 31.67% | 5.76% |

Table 2: Line Coverage Scores on Complex Methods.

4.3 Execution Correctness Check

We compare the execution correctness among the five unit test generation methods. LLM-based unit test generation methods rely on the reasoning capabilities of LLMs to understand code and generate test cases. Compared with traditional search-based approaches, LLMs, with their probabilistic nature, are more likely to generate incorrect test cases. We measure correctness by the pass rate, where the number of passed test cases is calculated as the total number of test cases minus those that resulted in failures, errors, or were skipped.

The pass rates are shown in Table 3. According to Table 3, the pass rate of our approach is not significantly higher than that of other methods. We also observe that a higher pass rate does not necessarily indicate a higher coverage rate.
This may be because, during the iterative generation process, the LLM tends to produce many similar unit test cases targeting the same code lines, resulting in limited additional coverage despite a high pass rate. For example, the pass rate of our method on the project flutils is 63.83%, much higher than on the project mimesis, where it is 24.45%, while the line coverage rates show the opposite ordering.

We also observe that when Pynguin successfully generates executable test cases, its pass rate is generally higher than that of LLM-based test generation methods. This highlights a limitation of LLM-based approaches in this aspect and indicates an important direction for future improvement.

| Project | Our | CUT | TELPA | HITS | Pynguin |
|---|---|---|---|---|---|
| dataclasses-json | 63.31% | 64.28% | 63.83% | 46.00% | 77.78% |
| docstring parser | 29.06% | 40.00% | 20.63% | 34.57% | 83.33% |
| flutes | 38.75% | 21.15% | 27.91% | 24.44% | N/A |
| flutils | 63.83% | 38.72% | 63.32% | 46.00% | 50.00% |
| mimesis | 24.45% | 0.00% | 42.85% | 37.33% | N/A |
| sanic | 10.86% | 35.58% | 15.15% | 20.42% | N/A |
| pytutils | 47.36% | 25.32% | 51.85% | 28.57% | N/A |
| thonny | 32.60% | 19.27% | 11.94% | 16.35% | 50.00% |
| cookiecutter | 29.00% | 23.07% | 13.07% | 17.12% | N/A |
| Avg. | 37.69% | 29.71% | 34.50% | 30.08% | 65.29% |

Table 3: Pass Rate Comparison on Complex Methods.

4.4 Ablation Study

We analyze each component's contribution to improving coverage scores through an ablation study. Compared to directly using LLMs to generate unit test cases for the software under test, our approach incorporates several key techniques: (1) context information retrieval, which extracts project dependencies and summarizes them using the LLM; (2) code elimination, which removes code that has already been covered; and (3) iterative generation without elimination, in which the LLM is called multiple times to generate unit tests for a code slice, refining them based on coverage reports until an iteration limit is reached. We perform ablation studies to investigate the contribution of each component within our method. The results are shown in Table 4.
| Project | Our | w/o E | w/o I | w/o D |
|---|---|---|---|---|
| dataclasses-json | 24.27% | 14.56% | 16.99% | 20.54% |
| docstring_parser | 40.71% | 25.33% | 16.54% | 36.54% |
| flutils | 41.04% | 29.14% | 11.42% | 38.05% |
| mimesis | 78.82% | 54.55% | 0.00% | 75.29% |
| cookiecutter | 42.62% | 28.44% | 36.13% | 37.62% |
| Avg. | 45.49% | 30.40% | 16.22% | 41.61% |

Table 4: Ablation Study. w/o E indicates without code elimination. w/o I means without iterative interaction with the LLM. w/o D represents without dependency information.

We compare three variants of our method: (1) "w/o Elimination", which removes the elimination module from the system; (2) "w/o Iteration", which sets the iteration limit of the LLM-based test generation without elimination module to 1; and (3) "w/o Dependencies", which directly inputs the code slices without dependencies. As shown in the table, the decrease in coverage score caused by removing the context information retrieval step (w/o Dependencies) is the smallest: the average drops from 45.49% to 41.61%. This may be because related code within the same file as the target unit is still included in the code slice, whereas the influence of external packages is relatively limited. Since these projects are high-quality open-source software, the LLM can often infer the usage of referenced functions and classes from detailed comments and naming conventions.

Both code elimination and the number of iterations have a significant impact on the system's performance. Removing elimination lowers the average coverage to 30.40%, and removing iteration leads to an even larger decrease, to 16.22%. This is because the output of LLMs is highly unstable; without iteration, if the LLM fails to generate test cases that cover new lines, the method with code elimination terminates immediately, rendering the elimination module ineffective. All steps and components in our method contribute to the improvement in the average coverage scores.
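The interaction between iteration and elimination described above can be illustrated with a toy model. This is a minimal sketch under strong assumptions, not the authors' implementation: lines are modelled as integers, "elimination" simply drops covered line numbers (the real step also preserves predecessor lines and repairs branch structure), and the names `Attempt`, `scripted_attempt`, `generate_until_progress`, and `run_with_elimination` are hypothetical.

```python
from typing import Callable, Iterable, Set

# Hypothetical interface: one generation attempt receives the still-uncovered
# lines and returns the lines that its generated tests actually cover.
Attempt = Callable[[Set[int]], Set[int]]


def scripted_attempt(outcomes: Iterable[Set[int]]) -> Attempt:
    """Stand-in for an unstable LLM: replays a fixed sequence of outcomes."""
    remaining = iter(outcomes)

    def attempt(_uncovered: Set[int]) -> Set[int]:
        return set(next(remaining, set()))

    return attempt


def generate_until_progress(uncovered: Set[int], attempt: Attempt,
                            iteration_limit: int) -> Set[int]:
    """Inner step: retry up to iteration_limit times, stop at first new coverage."""
    for _ in range(iteration_limit):
        newly_covered = attempt(uncovered) & uncovered
        if newly_covered:
            return newly_covered
    return set()


def run_with_elimination(target: Iterable[int], attempt: Attempt,
                         iteration_limit: int) -> Set[int]:
    """Outer loop: shrink the slice after every round that makes progress."""
    uncovered = set(target)
    covered: Set[int] = set()
    while uncovered:
        newly_covered = generate_until_progress(uncovered, attempt, iteration_limit)
        if not newly_covered:
            break  # no progress within the limit: the whole process stops
        covered |= newly_covered
        uncovered -= newly_covered  # toy stand-in for code elimination
    return covered


# The scripted "LLM" fails on its first and third calls.
script = [set(), {1, 2}, set(), {3}]
no_iteration = run_with_elimination({1, 2, 3}, scripted_attempt(script), iteration_limit=1)
with_iteration = run_with_elimination({1, 2, 3}, scripted_attempt(script), iteration_limit=2)
```

With an iteration limit of 1, the single failed attempt ends the process before any elimination can happen, which mirrors the explanation given for the "w/o Iteration" variant; with a limit of 2, each failed attempt is retried and the slice keeps shrinking.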
5 Conclusion

We highlight the limitations of LLM-based unit test generation methods in achieving high coverage when applied to complex methods under test. We follow the divide-and-conquer paradigm and propose a method to enhance LLM-based unit test generation by iteratively eliminating covered code through static analysis. We conducted a comprehensive evaluation of our method against state-of-the-art LLM-based and search-based methods, demonstrating the effectiveness of our approach.

References

[1] Ned Batchelder. [n. d.]. coverage.py. https://coverage.readthedocs.io/. Accessed: 2025-11-18.

[2] Cristian Cadar, Daniel Dunbar, Dawson R Engler, et al. 2008. Klee: unassisted and automatic generation of high-coverage tests for complex systems programs. In OSDI, Vol. 8. 209–224.

[3] Ting Chen, Xiao-song Zhang, Shi-ze Guo, Hong-yuan Li, and Yue Wu. 2013. State of the art: Dynamic symbolic execution for automated test generation. Future Generation Computer Systems 29, 7 (2013), 1758–1773.

[4] Yinghao Chen, Zehao Hu, Chen Zhi, Junxiao Han, Shuiguang Deng, and Jianwei Yin. 2024. Chatunitest: A framework for llm-based test generation. In Companion Proceedings of the 32nd ACM International Conference on the Foundations of Software Engineering. 572–576.

[5] Gordon Fraser and Andrea Arcuri. 2011. Evosuite: automatic test suite generation for object-oriented software. In Proceedings of the 19th ACM SIGSOFT symposium and the 13th European conference on Foundations of software engineering. 416–419.

[6] Yunfan Gao, Yun Xiong, Xinyu Gao, Kangxiang Jia, Jinliu Pan, Yuxi Bi, Yixin Dai, Jiawei Sun, Haofen Wang, and Haofen Wang. 2023. Retrieval-augmented generation for large language models: A survey. arXiv preprint 2, 1 (2023).

[7] Patrice Godefroid, Nils Klarlund, and Koushik Sen. 2005. DART: Directed automated random testing. In Proceedings of the 2005 ACM SIGPLAN conference on Programming language design and implementation. 213–223.

[8] Patrice Godefroid, Nils Klarlund, and Koushik Sen. 2005. DART: directed automated random testing. SIGPLAN Not. 40, 6 (June 2005), 213–223. doi:10.1145/1064978.1065036

[9] Daya Guo, Qihao Zhu, Dejian Yang, Zhenda Xie, Kai Dong, Wentao Zhang, Guanting Chen, Xiao Bi, Yifan Wu, YK Li, et al. 2024. DeepSeek-Coder: when the large language model meets programming–the rise of code intelligence. arXiv preprint arXiv:2401.14196 (2024).

[10] Hongye Jin, Pei Chen, Jingfeng Yang, Zhengyang Wang, Meng Jiang, Yifan Gao, Binxuan Huang, Xinyang Zhang, Zheng Li, Tianyi Liu, et al. 2025. END: Early Noise Dropping for Efficient and Effective Context Denoising. arXiv preprint arXiv:2502.18915 (2025).

[11] Caroline Lemieux, Jeevana Priya Inala, Shuvendu K Lahiri, and Siddhartha Sen. 2023. Codamosa: Escaping coverage plateaus in test generation with pre-trained large language models. In 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE). IEEE, 919–931.

[12] Stephan Lukasczyk and Gordon Fraser. 2022. Pynguin: Automated unit test generation for python. In Proceedings of the ACM/IEEE 44th International Conference on Software Engineering: Companion Proceedings. 168–172.

[13] David R MacIver, Zac Hatfield-Dodds, et al. 2019. Hypothesis: A new approach to property-based testing. Journal of Open Source Software 4, 43 (2019), 1891.

[14] Thomas J McCabe. 1976. A complexity measure. IEEE Transactions on software Engineering 4 (1976), 308–320.

[15] Phil McMinn. 2011. Search-based software testing: Past, present and future. In 2011 IEEE Fourth International Conference on Software Testing, Verification and Validation Workshops. IEEE, 153–163.

[16] OpenAI. 2024. GPT-4o Technical Report. https://openai.com/index/gpt-4o. Accessed: 2025-05-30.

[17] OpenAI. 2024. GPT-o3 Technical Report. https://openai.com/index/gpt-o3. Accessed: 2025-05-30.

[18] Carlos Pacheco and Michael D Ernst. 2007. Randoop: feedback-directed random testing for Java. In Companion to the 22nd ACM SIGPLAN conference on Object-oriented programming systems and applications companion. 815–816.

[19] Juan Altmayer Pizzorno and Emery D Berger. 2024. Coverup: Coverage-guided llm-based test generation. arXiv preprint arXiv:2403.16218 (2024).

[20] Gabriel Ryan, Siddhartha Jain, Mingyue Shang, Shiqi Wang, Xiaofei Ma, Murali Krishna Ramanathan, and Baishakhi Ray. 2024. Code-aware prompting: A study of coverage-guided test generation in regression setting using llm. Proceedings of the ACM on Software Engineering 1, FSE (2024), 951–971.

[21] BA Sanusi, SO Olabiyisi, AO Afolabi, AO Olowoye, et al. 2020. Development of an Enhanced Automated Software Complexity Measurement System. Journal of Advances in Computational Intelligence Theory 1, 3 (2020), 1–11.

[22] Freda Shi, Xinyun Chen, Kanishka Misra, Nathan Scales, David Dohan, Ed H Chi, Nathanael Schärli, and Denny Zhou. 2023. Large language models can be easily distracted by irrelevant context. In International Conference on Machine Learning. PMLR, 31210–31227.

[23] Weisong Sun, Chunrong Fang, Yun Miao, Yudu You, Mengzhe Yuan, Yuchen Chen, Quanjun Zhang, An Guo, Xiang Chen, Yang Liu, et al. 2023. Abstract syntax tree for programming language understanding and representation: How far are we? arXiv preprint arXiv:2312.00413 (2023).

[24] Michele Tufano, Dawn Drain, Alexey Svyatkovskiy, Shao Kun Deng, and Neel Sundaresan. 2020. Unit test case generation with transformers and focal context. arXiv preprint arXiv:2009.05617 (2020).

[25] Zejun Wang, Kaibo Liu, Ge Li, and Zhi Jin. 2024. HITS: High-coverage LLM-based Unit Test Generation via Method Slicing. In Proceedings of the 39th IEEE/ACM International Conference on Automated Software Engineering. 1258–1268.

[26] Tao Xie, Nikolai Tillmann, Jonathan De Halleux, and Wolfram Schulte. 2009. Fitness-guided path exploration in dynamic symbolic execution. In 2009 IEEE/IFIP International Conference on Dependable Systems & Networks. IEEE, 359–368.

[27] Chen Yang, Junjie Chen, Bin Lin, Jianyi Zhou, and Ziqi Wang. 2024. Enhancing llm-based test generation for hard-to-cover branches via program analysis. arXiv preprint arXiv:2404.04966 (2024).

[28] Yanming Yang, Xin Xia, David Lo, and John Grundy. 2022. A survey on deep learning for software engineering. ACM Computing Surveys (CSUR) 54, 10s (2022), 1–73.

[29] Zhiqiang Yuan, Yiling Lou, Mingwei Liu, Shiji Ding, Kaixin Wang, Yixuan Chen, and Xin Peng. 2023. No more manual tests? evaluating and improving chatgpt for unit test generation. arXiv preprint arXiv:2305.04207 (2023).

[30] Zhanke Zhou, Rong Tao, Jianing Zhu, Yiwen Luo, Zengmao Wang, and Bo Han. 2024. Can language models perform robust reasoning in chain-of-thought prompting with noisy rationales? Advances in Neural Information Processing Systems 37 (2024), 123846–123910.

A An Illustrative Example

Here we provide an illustrative example of the code elimination process, as shown in Fig. 4.

On the left is the original target unit, which is a complex function bump_version(·) from the open-source Python project flutils. As can be seen, this target unit contains 50 lines of code and includes multiple layers of nested conditional statements, making it challenging for the LLM to achieve full coverage in a single attempt. The code lines highlighted with red boxes, which are Lines 17, 24, 31, and 42, represent the uncovered lines identified after the unit test generation without elimination. Based on these uncovered lines, we apply Algorithm 2 to the original target code to perform code elimination. This process yields the refined second code slice shown in the upper-right corner. As shown, we preserve all predecessor code lines of the uncovered lines, for example, Lines 2, 3, 4, and 5.
This ensures the execution logic for the uncovered line remains unchanged. Additionally, we address structural issues that may arise when branches under an if statement are eliminated, which could otherwise invalidate the corresponding else or elif blocks. For instance, in the second slice, Lines 6 and 7 are changed to maintain syntactic and semantic correctness. At this point, the code has been reduced to 20 lines, with all unnecessary statements removed.

After unit test generation without elimination for the second slice, a new set of uncovered lines is identified. Specifically, they are Lines 17 and 31 in the original target unit. Our method then applies the code elimination process to the target unit again based on these newly uncovered lines. This results in a more compact third slice, consisting of 15 lines, as shown in the lower-right corner.

This example demonstrates that our approach effectively decomposes the problem of generating unit test cases for a complex target function into generating tests for a series of smaller code slices. By doing so, it addresses the scalability issue faced by LLM-based unit test generation methods in a principled and efficient manner.

B Experiment Details

B.1 Baseline Implementation

For TELPA [27], we re-implement the approach based on the description provided, as the original paper does not release the source code. This baseline adopts an architecture similar to the LLM-based test generation without elimination in our method. The key difference lies in its iterative process: rather than terminating upon detecting newly covered lines after test validation, it continues the generation process until either full coverage is achieved or a predefined iteration limit is reached. The iteration limit for refinement is set to 5. For prompts used in refinement, we construct them using the backwards analysis approach proposed in the original work.
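The difference in termination policy described in this paragraph can be sketched as two small loops. This is an illustrative sketch only, not TELPA's code nor the authors' re-implementation: the function names and the `attempt` callback (which returns the set of line numbers covered by one round of generated tests) are hypothetical.

```python
from typing import Callable, Iterable, Set

# Hypothetical callback: one LLM round, returning the line numbers it covers.
Attempt = Callable[[Set[int]], Set[int]]


def stop_on_first_progress(target: Iterable[int], attempt: Attempt,
                           iteration_limit: int = 5) -> Set[int]:
    """Policy of the generation step without elimination: return as soon as
    any new line is covered, so the caller can shrink the code slice."""
    uncovered = set(target)
    for _ in range(iteration_limit):
        newly_covered = attempt(uncovered) & uncovered
        if newly_covered:
            return newly_covered
    return set()


def continue_until_full_coverage(target: Iterable[int], attempt: Attempt,
                                 iteration_limit: int = 5) -> Set[int]:
    """Policy of the re-implemented baseline: keep refining on the same code
    until everything is covered or the iteration limit is reached."""
    uncovered = set(target)
    covered: Set[int] = set()
    for _ in range(iteration_limit):
        if not uncovered:
            break
        newly_covered = attempt(uncovered) & uncovered
        covered |= newly_covered
        uncovered -= newly_covered
    return covered


def replay(outcomes):
    """Deterministic stand-in for the LLM, replaying scripted outcomes."""
    remaining = iter(outcomes)
    return lambda _uncovered: set(next(remaining, set()))


first_progress = stop_on_first_progress({1, 2, 3}, replay([{1}, {2}, {3}]))
full_run = continue_until_full_coverage({1, 2, 3}, replay([{1}, {2}, {3}]))
```

Under the same scripted outcomes, the first policy hands control back after one productive round, while the second spends its iteration budget on the unchanged target.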
For ChatUniTest [4], the original code is designed for Java, and we implement our own version. We first prompt the LLM to generate unit tests using the same initial prompt as in our method, and then iteratively invoke the LLM to perform bug fixes on the generated tests. The maximum number of bug-fixing iterations is set to five.

For HITS [25], the original code is also designed for Java, and we implement our own version. We first use the LLM to split the target unit into several segments and summarize the functionality of each segment. Then, the LLM is prompted to generate unit tests for each segment individually. Finally, the LLM is used to perform bug fixing similar to ChatUniTest, with the number of iterations limited to five.

B.2 Experiment Setup

We use OpenAI's GPT-4o [16] as the large language model in this work since this model strikes a good balance between price and performance in code-related tasks. For the GPT-4o API, we use the default values for the temperature and token length limit parameters. Specifically, the temperature is set to 1.0, and the token length limit is 8096. For each LLM-based method, the number of interaction rounds with the LLM in a dialogue is limited to 5. It is worth noting that if the accumulated multi-turn dialogue context causes the prompt to exceed the token limit during an LLM invocation, we treat this invocation as a failure and terminate the loop. This applies to both our method and TELPA's multi-turn refinement of generated unit tests, as well as ChatUniTest and HITS's multi-turn bug-fixing processes.

During the experiments, we select target methods or functions from nine open-source projects based on the criterion of having more than 50 lines of code. We also collect dependency information for each target unit. All relevant information for each target unit is stored in a corresponding JSON file. The datasets will be released upon publication.
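The selection criterion above can be approximated with Python's standard `ast` module. This is a minimal sketch, assuming that length is counted as the physical line span from the `def` line to the last line of the body; the paper does not state its exact counting rule, and `find_long_functions` and `min_lines` are hypothetical names.

```python
import ast
from typing import List, Tuple


def find_long_functions(source: str, min_lines: int = 50) -> List[Tuple[str, int]]:
    """Return (name, line_span) for every function or method whose span
    exceeds min_lines. Assumption: blank lines and comments inside the
    body count toward the span; decorators do not."""
    tree = ast.parse(source)
    results = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # end_lineno is available on AST nodes since Python 3.8.
            span = node.end_lineno - node.lineno + 1
            if span > min_lines:
                results.append((node.name, span))
    return sorted(results)


# Synthetic module: one 56-line function and one 2-line function.
example_source = (
    "def long_fn():\n" + "    x = 1\n" * 55
    + "def short_fn():\n    return 0\n"
)
selected = find_long_functions(example_source)
```

Because `ast.walk` visits nested nodes, methods inside classes are picked up as well; a real pipeline would probably also record the enclosing class and file path for each target unit.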
During evaluation, each method is tested using its JSON file directory as input to generate and assess the corresponding unit test. It is important to note that, since different baselines originally adopt their own strategies for incorporating dependency information into the initial prompt, we standardize this step by using the dependency collection method proposed in this paper.

C Discussion

C.1 Future Improvement

The most straightforward enhancement is to use a more powerful LLM. In our experiments, we selected the widely used GPT-4o for its balance between performance and cost-effectiveness. However, it is easy to substitute this model with more advanced models that are specifically optimized for code-related tasks, such as DeepSeek-Coder [9] and GPT-o3 [17]. These models can be seamlessly integrated into our framework to potentially further improve unit test generation quality.

In our implementation, we deliberately avoided extensive prompt engineering. The initial prompt consists of the code and its dependency file, accompanied by a few simple examples as guidance. During the iterative refinement process, we simply use uncovered lines or run-time errors as prompts to instruct the LLM to further improve the generated test cases. In fact, users can easily incorporate their own prompt engineering strategies into our method. For example, prompts constructed through static analysis as used in TELPA can be integrated to further guide the LLM in addressing uncovered paths. Meanwhile, other techniques such as Retrieval-Augmented Generation (RAG) [6] can also be integrated into the system's iterative test generation module (specifically the version without code elimination) to further enhance its effectiveness.

Figure 4: An Example of Eliminating Covered Code.
C.2 Threats to Validity

The main threats to the validity of our method lie in the limited dataset and its generalizability to other LLMs. Due to budget constraints, our experiments were conducted on complex methods from only nine projects. Moreover, the inherent variability in LLM performance may affect the magnitude of the differences in coverage rates among the unit tests generated by different methods in our experiments. Nevertheless, the results demonstrate the advantage of our approach in addressing scalability challenges, although real-world deployment would require larger-scale testing to uncover potential issues within our codebase. In our experiments, we only select the widely used GPT-4o model, which offers a favorable trade-off between generation capability and API cost. Expanding the dataset and evaluating additional LLMs remain important directions for future work.

Meanwhile, there are still areas for improvement in our implementation. For instance, although we explicitly instruct the LLM via prompts to place executable unit test code within the ... structure, the inherent instability of LLM outputs often leads to unexpected formatting issues, such as the inclusion of illegal string patterns like triple backticks, which can disrupt code execution. Additionally, various path dependency issues may cause the generated tests to fail at run-time. These factors can affect the stability of the resulting coverage rates. In future work, we plan to further refine the process of extracting test cases from LLM outputs to improve robustness and reliability.
