Bayesian Generative Adversarial Networks via Gaussian Approximation for Tabular Data Synthesis

Generative Adversarial Networks (GAN) have been used in many studies to synthesise mixed tabular data. Conditional tabular GAN (CTGAN) have been the most popular variant but struggle to effectively navigate the risk-utility trade-off. Bayesian GAN ha…

Authors: Bahrul Ilmi Nasution, Mark Elliot, Richard Allmendinger

TRANSACTIONS ON DATA PRIVACY (2026) 1–28

Bahrul Ilmi Nasution 1,*, Mark Elliot 1, Richard Allmendinger 2
1 Department of Social Statistics, The University of Manchester, Oxford Rd, Manchester, M13 9PL, UK.
2 Alliance Manchester Business School, The University of Manchester, Booth Street West, Manchester, M15 6PB, UK.
* Corresponding author. E-mail: firstname.lastname@manchester.ac.uk

Received 17 July 2025; received in revised form 12 January 2026; accepted 24 February 2026

Abstract. Generative Adversarial Networks (GAN) have been used in many studies to synthesise mixed tabular data. Conditional tabular GAN (CTGAN) has been the most popular variant but struggles to navigate the risk-utility trade-off effectively. Bayesian GAN have received less attention for tabular data, but have been explored with unstructured data such as images and text. The most widely used technique in Bayesian GAN is Markov Chain Monte Carlo (MCMC), but it is computationally intensive, particularly in terms of weight storage. In this paper, we introduce Gaussian Approximation of CTGAN (GACTGAN), an integration of the Bayesian posterior approximation technique Stochastic Weight Averaging-Gaussian (SWAG) within the CTGAN generator to synthesise tabular data, reducing computational overhead after the training phase. We demonstrate that GACTGAN yields better synthetic data than CTGAN, achieving better preservation of tabular structure and inferential statistics with less privacy risk. These results highlight GACTGAN as a simpler, effective implementation of Bayesian tabular synthesis.

Keywords. deep generative models, generative adversarial networks, stochastic weight averaging-Gaussian, GACTGAN, synthetic tabular data

1 Introduction

Synthetic data has become an increasingly valuable asset across multiple domains, offering practical solutions to privacy-preserving data sharing. It facilitates the release of data by mitigating disclosure risks (Raab 2024), and supports pedagogical use cases by enabling students to work with realistic yet non-disclosive datasets (Elliot et al. 2024; Little et al. 2025). However, high-dimensional tabular data, such as that from censuses and social surveys, poses significant challenges due to the presence of sensitive attributes (e.g., demographic, health, financial). As a result, data controllers often impose strict access restrictions, limiting broader reuse, including in educational settings. To address this, researchers, particularly within national statistical offices (NSOs), have developed synthetic data generation methods aimed at preserving key statistical properties while reducing disclosure risk (Nowok et al. 2016; Little et al. 2023b).

Synthetic data generation is commonly pursued through two broad methodological paradigms: statistical and deep learning-based approaches. Statistical methods assume that the underlying data distribution can be effectively captured by a single model, with examples including Classification and Regression Trees (CART) (Nowok et al. 2016) and Bayesian Networks (BN) (Zhang et al. 2017). In contrast, deep learning approaches, often referred to collectively as deep generative models (DGMs), leverage neural networks to approximate complex, high-dimensional data distributions. Building on the universal approximation theorem, which states that feedforward neural networks with sufficiently many hidden units can approximate any continuous function on a compact domain to arbitrary accuracy (Hornik et al. 1989), DGMs have become a vibrant area of research (Goodfellow et al. 2014; Ma et al. 2020; Xu et al. 2019).

Among these, generative adversarial networks (GAN) (Goodfellow et al. 2014) are among the most prominent, producing high-quality samples via adversarial training between generator and discriminator networks. However, standard GAN are susceptible to mode collapse, where the generator fails to capture the full diversity of the data distribution (Saatci and Wilson 2017). This challenge is particularly acute for tabular data, which often comprises heterogeneous feature types (e.g. numerical and categorical variables), introducing complex optimisation landscapes (Ma et al. 2020; Nasution et al. 2022). Conditional tabular GAN (CTGAN) (Xu et al. 2019) addresses some of these challenges by modelling conditional distributions and using training-by-sampling techniques, making it a promising candidate for synthesising structured data. Nonetheless, concerns persist around the trade-off between utility and disclosure risk in synthetic outputs (Ran et al. 2024).

Bayesian neural networks (BNN) offer a potentially compelling alternative by estimating posterior distributions over model parameters, rather than relying on fixed point estimates as in classical neural networks. In the context of DGMs, this Bayesian treatment enables exploration of multiple modes in the data distribution, potentially improving sample diversity (Saatci and Wilson 2017). Bayesian DGMs typically approximate the intractable posterior via methods such as Markov Chain Monte Carlo (MCMC) (Saatci and Wilson 2017; Gong et al. 2019; Turner et al. 2019; Salimans et al. 2015) or variational approximation (Glazunov and Zarras 2022; Tran et al. 2017). While MCMC approaches provide strong asymptotic guarantees, they are computationally intensive and memory-demanding, as they require storing multiple samples of the model weights across iterations.

A more tractable approach to posterior approximation is to model the network weights with a multivariate normal distribution defined by a mean vector and a covariance matrix. This naturally raises the question: how should the mean and covariance be specified? The Laplace approximation (MacKay 1992), which estimates the covariance using second-order derivatives of the loss function, is a well-established method. However, it is computationally expensive, with a cost that scales quadratically with the number of parameters (Daxberger et al. 2021). Simplified diagonal approximations, such as those based on the inverse Fisher information, are more efficient but can produce unstable estimates, including exploding variances, especially in settings where vanishing gradients are common, such as GAN training (Gulrajani et al. 2017).

To address these limitations, stochastic weight averaging-Gaussian (SWAG) (Maddox et al. 2019) has been proposed as a scalable and efficient alternative. SWAG approximates the posterior by capturing the trajectory of weights over the course of training to estimate both the mean and covariance, offering a lightweight yet effective method for posterior inference. When applied to GAN, SWAG enables a Bayesian treatment of the generator with minimal additional computational burden compared to traditional methods such as gradient averaging or second-order techniques (Murad et al. 2021).

While GAN, including their Bayesian variants (Saatci and Wilson 2017; Mbacke et al. 2023), have been used primarily in image and text applications, their implementation for tabular data synthesis, particularly using SWAG, remains largely unexplored. Existing studies have focused on applying weight averaging techniques in GAN for tasks such as image segmentation (Durall et al. 2019) but have not fully integrated SWAG into GAN frameworks for tabular data. However, SWAG has been successfully applied in a variety of other real-world domains, such as field temperature prediction (Morimoto et al. 2022), air quality monitoring (Murad et al. 2021), and earthquake fault detection (Mosser and Naeini 2022). Therefore, this study helps connect Bayesian modelling with tabular data synthesis.

Our contributions are threefold:

1. GACTGAN: Bayesian posterior over the generator. We introduce Gaussian Approximation of CTGAN (GACTGAN), which integrates SWAG with CTGAN to approximate a posterior distribution for the generator parameters. (Section 3)

2. Practical implementation. We provide an implementable version of GACTGAN based on different configurations of SWAG. (Section 4.2.2)

3. Improved utility with lower risk. We show that GACTGAN strengthens CTGAN, producing synthetic data that remains similarly useful while reducing risk relative to standard CTGAN. (Section 5)

We view this work as a step toward making Bayesian methods more actionable for tabular data synthesis, and we hope it will enable further research as well as practical deployments.

This paper is structured as follows. Section 2 provides background information on CTGAN and SWAG. Section 3 formulates our proposed method, GACTGAN. Section 4 describes the methodology, including the datasets, the evaluation, and the experimental setup. Section 5 presents the results of our experiments. Finally, Section 7 discusses limitations, future work, and conclusions.

2 Background

This section provides an initial overview before diving into our proposed methodology. GACTGAN is composed of two main elements: CTGAN and the posterior approximation facilitated by SWAG. In Subsection 2.1, we give a concise introduction to CTGAN. Next, we explore weight averaging methods in Subsection 2.2, followed by a posterior formulation using SWAG in Subsection 2.3.

2.1 CTGAN

Generative Adversarial Networks (GAN) are DGMs that capture the data distribution via an adversarial game between two neural networks (Goodfellow et al. 2014). The generator network, denoted as $G$ and parameterised by $\theta_G$, aims to generate realistic data from random noise $z$ to deceive its adversary. The discriminator, denoted as $D$ with parameters $\theta_D$, strives to distinguish real from fake data. Both networks engage in a minimax game to reach equilibrium, creating high-quality synthetic data. The original GAN developed by Goodfellow et al. (2014), also known as vanilla GAN, uses the binary cross-entropy loss. However, vanilla GAN suffers from unstable training and mode collapse, where the generator produces similar outputs, leading to low-diversity data. Arjovsky et al. (2017) and Gulrajani et al. (2017) introduced the Wasserstein loss to address these issues, enhancing model stability and reducing mode collapse. For batch data $x$ sampled from the original dataset $\mathcal{D}$, the discriminator and generator weights are updated using Equation (1) for vanilla GAN and Equation (2) for Wasserstein GAN.

$$(\hat{\theta}_G, \hat{\theta}_D) = \arg\min_{\theta_G} \max_{\theta_D} \; \mathbb{E}_x[\log D(x)] + \mathbb{E}_z[\log(1 - D(G(z)))] \tag{1}$$

$$(\hat{\theta}_G, \hat{\theta}_D) = \arg\min_{\theta_G} \max_{\theta_D} \; \mathbb{E}_x[D(x)] - \mathbb{E}_z[D(G(z))] \tag{2}$$

Xu et al. (2019) developed conditional tabular GAN (CTGAN) for tabular data with mixed data types. CTGAN tackles the challenges of mixed data types using a conditional generator that synthesises data based on categorical column values, enabling the model to learn variable dependencies. CTGAN synthesises tabular data efficiently and has been applied to data simulation and privacy preservation (Athey et al. 2024; Little et al. 2023a).
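
As an illustration of Equations (1) and (2), the two objectives can be estimated on a mini-batch from the discriminator outputs alone. The following is a minimal pure-Python sketch, not the CTGAN implementation: the function names and the example scores are hypothetical, and it assumes that the vanilla discriminator returns probabilities in (0, 1) while the Wasserstein discriminator returns unbounded real-valued scores.

```python
import math
from typing import Sequence


def vanilla_value(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """Mini-batch estimate of E_x[log D(x)] + E_z[log(1 - D(G(z)))], Equation (1).

    d_real and d_fake are assumed to be discriminator probabilities in (0, 1).
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def wasserstein_value(c_real: Sequence[float], c_fake: Sequence[float]) -> float:
    """Mini-batch estimate of E_x[D(x)] - E_z[D(G(z))], Equation (2).

    c_real and c_fake are assumed to be unbounded discriminator scores.
    """
    return sum(c_real) / len(c_real) - sum(c_fake) / len(c_fake)


if __name__ == "__main__":
    # Hypothetical discriminator outputs for two real and two synthetic rows.
    print(vanilla_value([0.9, 0.8], [0.2, 0.1]))
    print(wasserstein_value([1.5, 0.5], [-0.5, -1.5]))
```

In both cases the discriminator is updated to increase the value and the generator to decrease it, which is the minimax structure shared by the two equations.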

2.2 Stochastic Weight Averaging

The concept of averaging weights in neural networks, referred to as Stochastic Weight Averaging (SWA) (Izmailov et al. 2018), has gained traction, offering a novel perspective for identifying optimal solutions. In short, SWA leverages the historical trajectory of weights optimised using standard algorithms, such as Stochastic Gradient Descent (SGD) and Adam, combined with a scheduled learning rate. Standard optimisation methods often converge to a "sharp minimum", a narrow solution where small changes in the data or weights can cause a significant drop in performance. SWA addresses this by computing the arithmetic mean of the weights accumulated along the optimisation path (Hwang et al. 2021). Intuitively, while standard SGD traverses the edges of a low-loss region, averaging these points identifies the centroid of that region. This allows the model to settle into a "flat minimum", a wider, more stable region in the loss landscape. Solutions found in flat minima tend to generalise better to unseen data because they are less sensitive to minor shifts in the underlying distribution (Izmailov et al. 2018).

Typically, the SWA process starts after several iterations of standard training. For simplicity, the average weights $\bar{w}$ can be updated at regular intervals $c$. The choice of $c$ offers flexibility and can be tailored to the specific requirements of the user. Denoting $n_{\text{mod}}$ as the total number of models that have been averaged so far, the update in iteration $t$ is performed when $t \bmod c = 0$ using Equation (3).

$$\bar{w} = \frac{\bar{w} \times n_{\text{mod}} + w^{(t)}}{n_{\text{mod}} + 1} \tag{3}$$

Because SWA is straightforward to implement, many studies have used it to improve the performance of their neural networks, such as in adversarial learning (Kim et al. 2023) and medical imaging (Pham et al. 2021; Yang et al. 2023).
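
The running-mean update in Equation (3) can be sketched in a few lines. This is a minimal illustration under stated assumptions rather than a reference implementation: weights are represented as flat Python lists, and the names `swa_update`, `run_swa`, and `start` (the first iteration at which averaging is allowed) are hypothetical.

```python
from typing import List, Sequence


def swa_update(w_bar: Sequence[float], w_t: Sequence[float], n_mod: int) -> List[float]:
    """Apply Equation (3): fold the current weights w_t into the running mean w_bar."""
    return [(m * n_mod + w) / (n_mod + 1) for m, w in zip(w_bar, w_t)]


def run_swa(trajectory: Sequence[Sequence[float]], start: int, c: int) -> List[float]:
    """Average the weights visited at iterations t >= start with t mod c == 0."""
    w_bar = [0.0] * len(trajectory[0])
    n_mod = 0  # number of models averaged so far
    for t, w_t in enumerate(trajectory, start=1):
        if t >= start and t % c == 0:
            w_bar = swa_update(w_bar, w_t, n_mod)
            n_mod += 1
    return w_bar


if __name__ == "__main__":
    # Hypothetical one-parameter trajectory; iterations 2 and 4 are averaged.
    print(run_swa([[1.0], [2.0], [3.0], [4.0]], start=1, c=2))
```

Because only the running mean and the counter are kept, the memory cost does not grow with the number of averaged models.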

2.3 Being Bayesian: SWA and Gaussian approximation

SWA-Gaussian (SWAG) is an extension of SWA with a Bayesian perspective that captures uncertainty in the weight trajectory (Maddox et al. 2019). SWAG approximates the posterior distribution of the weights using a multivariate normal distribution $\mathcal{N}(\mu, \Sigma)$, where $\mu$ and $\Sigma$ denote the SWA mean and the approximate covariance matrix, respectively (Glazunov and Zarras 2022). The covariance matrix is composed of both diagonal and non-diagonal elements. The diagonal covariance $\Sigma_{\text{diag}}$ is calculated using the mean and the second sample moment, denoted $\overline{w^2}$. The details of the calculation can be seen in Equations (4) and (5) (Onal et al. 2024).

$$\Sigma_{\text{diag}} = \operatorname{diag}\left(\overline{w^2} - \bar{w}^2\right) \tag{4}$$

$$\overline{w^2} = \frac{\overline{w^2} \times n_{\text{mod}} + (w^{(t)})^2}{n_{\text{mod}} + 1} \tag{5}$$

Diagonal covariance matrices are common in neural networks, particularly in variational approximation (Blundell et al. 2015). However, a diagonal covariance is often too restrictive, as it ignores the relationships among random variables that are characterised by the off-diagonal entries of the covariance matrix. One way to obtain the full covariance matrix is to use the outer products of the deviations, as illustrated in Equation (6).

$$\Sigma \approx \frac{1}{n_{\text{mod}} - 1} \sum_{i=1}^{n_{\text{mod}}} (w_i - \bar{w})(w_i - \bar{w})^{\top} = \frac{1}{n_{\text{mod}} - 1} \widehat{D} \widehat{D}^{\top} \tag{6}$$

$\widehat{D}$ represents the deviation matrix, with one deviation vector per column. Unfortunately, storing a full-rank $\Sigma$ is prohibitively expensive (Glazunov and Zarras 2022). A viable alternative is to use the low-rank covariance $\Sigma_{\text{l-rank}}$, which keeps only the $K$ most recent columns of the deviation matrix. The low-rank approximation has proved practically advantageous in enhancing the performance of the SWAG model (Maddox et al. 2019). $\Sigma_{\text{l-rank}}$ can be calculated using Equation (7).

$$\Sigma_{\text{l-rank}} = \frac{1}{K - 1} \widehat{D} \widehat{D}^{\top} \tag{7}$$

The approximate posterior distribution of the model weights is represented by a Gaussian distribution with the SWA mean and its approximate covariance, expressed as $\mathcal{N}(\bar{w}, \alpha_G(\Sigma_{\text{diag}} + \Sigma_{\text{l-rank}}))$. By default, the scaling factor for the covariance, denoted as $\alpha_G$, is set to 0.5. However, users have the flexibility to choose alternative scaling factors, with Maddox et al. (2019) recommending values $\alpha_G \leq 1$. Although implementation may appear straightforward within the Bayesian context, practical applications of SWAG remain niche, being particularly pertinent in specific areas such as out-of-distribution detection (Glazunov and Zarras 2022) and the development of large language models (LLMs) (Onal et al. 2024).

3 GACTGAN

3.1 Model Formulation

Figure 1 provides an overview of one training iteration of the GACTGAN model, and Algorithm 1 gives the details of the training process in the form of pseudocode. In this study, we adopted the CTGAN methodology of Xu et al. (2019), with additional SWAG steps in each epoch (Maddox et al. 2019). As illustrated in the figure, during the training phase, a noise vector $z$ is sampled from $\mathcal{N}(0, I)$. This noise is then fed into $G$, resulting in the creation of synthetic data, denoted $x'$. The role of $D$ is to determine whether the data is real or fake by comparing it with a batch from the original dataset, selected according to the given batch size and labelled $x$. Consequently, Step 7 of Algorithm 1 updates the discriminator and generator using gradients derived from the selected objective function: the vanilla loss (Equation (1)) or the Wasserstein loss (Equation (2)), as defined by the user.
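
The SWAG quantities of Section 2.3 (Equations (3) to (7)) and a draw from the resulting Gaussian posterior can be sketched as follows. This is a simplified pure-Python illustration, not the authors' implementation: the class name `SwagPosterior` and its methods are hypothetical, the generator weights are assumed to be flattened into a list of floats, deviations are taken relative to the updated running mean, and the low-rank term is normalised by the number of stored columns minus one.

```python
import math
import random
from typing import List, Optional, Sequence


class SwagPosterior:
    """Running SWAG moments for a flattened weight vector (illustrative only)."""

    def __init__(self, dim: int, max_rank: int) -> None:
        self.mean = [0.0] * dim        # running mean, Equation (3)
        self.sq_mean = [0.0] * dim     # running second moment, Equation (5)
        self.deviations: List[List[float]] = []  # columns of the deviation matrix
        self.max_rank = max_rank       # K; 0 gives the diagonal-only variant
        self.n_mod = 0

    def collect(self, w_t: Sequence[float]) -> None:
        n = self.n_mod
        self.mean = [(m * n + w) / (n + 1) for m, w in zip(self.mean, w_t)]
        self.sq_mean = [(s * n + w * w) / (n + 1) for s, w in zip(self.sq_mean, w_t)]
        self.n_mod = n + 1
        if self.max_rank > 0:
            # Append the newest deviation, then drop the oldest if more than K are stored.
            self.deviations.append([w - m for w, m in zip(w_t, self.mean)])
            if len(self.deviations) > self.max_rank:
                self.deviations.pop(0)

    def diag_variance(self) -> List[float]:
        """Diagonal of Equation (4), clipped at zero against rounding error."""
        return [max(s - m * m, 0.0) for s, m in zip(self.sq_mean, self.mean)]

    def sample(self, scale: float = 0.5, rng: Optional[random.Random] = None) -> List[float]:
        """Draw weights from N(mean, scale * (Sigma_diag + Sigma_l-rank))."""
        rng = rng or random.Random()
        a = math.sqrt(scale)
        theta = [m + a * math.sqrt(v) * rng.gauss(0.0, 1.0)
                 for m, v in zip(self.mean, self.diag_variance())]
        k = len(self.deviations)
        if k > 1:
            for column in self.deviations:
                coeff = a * rng.gauss(0.0, 1.0) / math.sqrt(k - 1)
                theta = [th + coeff * d for th, d in zip(theta, column)]
        return theta


if __name__ == "__main__":
    posterior = SwagPosterior(dim=2, max_rank=2)
    for weights in ([1.0, 0.0], [3.0, 0.0], [5.0, 0.0]):  # hypothetical snapshots
        posterior.collect(weights)
    print(posterior.mean, posterior.diag_variance())
    print(posterior.sample(scale=0.5, rng=random.Random(0)))
```

Setting `scale` to zero returns the running mean itself, which corresponds to plain weight averaging without posterior sampling.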

Figure 1: An iteration process of GACTGAN. Note that the generator and discriminator are trained as usual. However, after backpropagation in the generator, the weights are stored to update the mean and the mean of squared weights. The mean and the new weights are used to build the deviation matrix, while the diagonal covariance is constructed from the mean and the mean of squared weights.

During the SWAG phase, the updated $\theta_G$ is stored to iteratively update the first and second sample moments at every interval $c$. At the same time, the differences between the updated $\theta_G$ and the mean value $\bar{\theta}_G$ are used to estimate the deviation matrix. Because the low-rank approximation has a maximum rank, once this maximum is surpassed the oldest column is replaced by the newest addition. The covariance matrix is calculated using Equations (4) and (7) once training is complete, allowing the approximate posterior distribution of the generator to be derived as a normal distribution.

Algorithm 1 GACTGAN
Input: $\mathcal{D}$, $T$, epoch to start weight mean and covariance collection $t_{\text{collect}}$, $K$, generator layer $G$, discriminator layer $D$, batch size $M$
 1: Obtain $x_{\text{num}}$ and $x_{\text{dc}}$ by transforming the numerical dataset using standard scaling based on Gaussian modes
 2: Obtain $x_{\text{ohe}}$ by transforming the categorical dataset using one-hot encoding
 3: $x = x_{\text{num}} \oplus x_{\text{dc}} \oplus x_{\text{ohe}}$
 4: Sample data into $M$ mini-batches
 5: for $t = 1 \to T$ do
 6:   for $m = 1 \to M$ do
 7:     Update $\theta_D$ and $\theta_G$ using the regular CTGAN procedure (Xu et al. 2019)
 8:   end for
 9:   if $t > t_{\text{collect}}$ then
10:     Update $\bar{\theta}_G$ using Equation (3) and $\overline{\theta_G^2}$ using Equation (5) by setting $w^{(t)} = \theta_G^{(t)}$, $\bar{w} = \bar{\theta}_G$, and $\overline{w^2} = \overline{\theta_G^2}$
11:     Calculate $\widehat{D}_t = \theta_G^{(t)} - \bar{\theta}_G$
12:     Append column $\widehat{D}_t$ to $\widehat{D}$
13:     if num_cols($\widehat{D}$) > $K$ then
14:       Remove the first column of $\widehat{D}$
15:     end if
16:   end if
17: end for
18: Calculate $\Sigma_{\text{diag}}$ using Equation (4)
19: Calculate $\Sigma_{\text{l-rank}}$ using Equation (7)
Output: Approximate posterior $\mathcal{N}(\bar{\theta}_G, \alpha_G(\Sigma_{\text{diag}} + \Sigma_{\text{l-rank}}))$

The approximate posterior distribution of $\theta_G$ can now be applied for data synthesis. Algorithm 2 outlines the data synthesis procedure using the GACTGAN model. We first define the number of synthetic rows and the batch size, which together determine the number of posterior sampling iterations. For instance, a dataset of 3000 rows with a batch size of 500 requires six iterations. In each iteration, random noise is generated for the predefined batch size, followed by posterior sampling of the weights. The noise is then passed through the generator with the sampled weights to synthesise data. The process is repeated until all iterations are completed. Sampling with different weights effectively explores different modes of the distribution, thereby enhancing the diversity of the generated data (Saatci and Wilson 2017).

3.2 Complexity Analysis

The complexity analysis for CTGAN, BayesCTGAN, and GACTGAN reveals a clear trade-off between computational cost and the benefits of being Bayesian. Note that the analysis is performed with similar generator and discriminator networks for each algorithm.

Training complexity.
Let $M$ be the number of mini-batch parameter updates per epoch, let $n_{\text{epochs}}$ be the total number of training epochs, and let $t_{\text{collect}}$ be the epoch at which the SWAG process begins. GACTGAN remains efficient during training, incurring only a small constant overhead per epoch ($O(1)$) to update its moment matrices for $n_{\text{epochs}} - t_{\text{collect}}$ iterations. Adding this overhead to the baseline cost gives $n_{\text{epochs}} \times M + (n_{\text{epochs}} - t_{\text{collect}}) = n_{\text{epochs}} \times (M + 1) - t_{\text{collect}}$. Thus, the overall training complexity of $O(n_{\text{epochs}} \times (M + 1) - t_{\text{collect}})$ remains linear and closely aligned with the baseline CTGAN complexity of $O(n_{\text{epochs}} \times M)$. This makes the training process highly scalable despite the added Bayesian machinery.

Algorithm 2 GACTGAN data synthesis
Input: $n_{\text{sample}}$, generator approximate posterior $\mathcal{N}(\bar{\theta}_G, \alpha_G(\Sigma_{\text{diag}} + \Sigma_{\text{l-rank}}))$, batch size $M$, number of MC samples $S$
 1: $T_s = \lceil n_{\text{sample}} / M \rceil$ (number of iterations for sampling from the posterior)
 2: Initiate $\tilde{X}$ as the synthetic data storage
 3: for $t = 1 \to T_s$ do
 4:   Sample $z \sim \mathcal{N}(0, I)$ with size $M$ and set $\tilde{x} = 0$
 5:   for $s = 1 \to S$ do
 6:     Sample posterior $\tilde{\theta}_G \sim \mathcal{N}(\bar{\theta}_G, \alpha_G(\Sigma_{\text{diag}} + \Sigma_{\text{l-rank}}))$
 7:     Update batch norm statistics
 8:     $\tilde{x} \mathrel{+}= \frac{1}{S} x^{*}$, where $x^{*}$ is obtained by feeding $z$ to $\tilde{\theta}_G$
 9:   end for
10:   Append rows $\tilde{x}$ to $\tilde{X}$
11: end for
12: Transform $\tilde{X}$ to make $\tilde{\mathcal{D}}$
Output: Synthetic dataset $\tilde{\mathcal{D}}_{1:n_{\text{sample}}}$

Parameter complexity. Let $P_G$ denote the number of generator network weights stored. Unlike MCMC-based methods such as BayesCTGAN, which must store a large number $S$ of full parameter sets ($O(S \times P_G)$), GACTGAN employs a more efficient approach by storing only three core components: a mean vector $\bar{\theta}_G$, a diagonal covariance $\Sigma_{\text{diag}}$, and a low-rank approximation matrix $\Sigma_{\text{l-rank}}$, resulting in a space complexity of $O(3 \times P_G)$. This represents substantial memory savings, as 3 is much smaller than the number of samples $S$ required for good MCMC posterior samples.

Sampling complexity.
Finally, the complexity analysis of the sampling shows that the cost of generating the data is application-dependent. For a single sample ($S = 1$), the cost of GACTGAN, $O(T_s)$, is equivalent to that of CTGAN. However, when generating from multiple posterior samples to enable model averaging, the complexity scales linearly to $O(T_s \times S)$. This cost is inherent to any Bayesian method that uses an ensemble of models but is justified by the ability to produce diverse outputs and quantify predictive uncertainty, features absent in CTGAN.

In conclusion, GACTGAN offers a computationally efficient pathway to Bayesian deep learning for generative models. It achieves a favourable balance, introducing only minimal overhead during training and requiring a fixed, low-memory footprint for storing the posterior approximation, all while providing the benefits of a Bayesian approach.

4 Methodology

We introduced GACTGAN in Section 3 as our first contribution. This section describes the methodology used to support our remaining contributions. We first describe the datasets in Section 4.1. Section 4.2 then details the experimental framework, including the baseline comparisons and model configurations in Section 4.2.1. Section 4.2.2 presents the implementation of the proposed GACTGAN variants, addressing our second contribution. Finally, Section 4.3 outlines the evaluation metrics used to assess performance and to examine our third contribution.

4.1 Dataset

In this study, we used seven datasets, of which five are census datasets, as shown in Table 1. The census datasets are from different countries (UK, Indonesia, Fiji, Canada, and Rwanda) and were obtained from IPUMS International (Minnesota Population Center 2023).
Census datasets are valuable for benchmarking tabular data synthesis because they capture a range of community characteristics, such as demographics and education. Moreover, these datasets are collected by national statistical offices, which are legally required to ensure that the released data accurately represent the population while also protecting individual privacy (Nowok et al. 2016; Ran et al. 2024). Therefore, census datasets are highly relevant as benchmarks for evaluating synthetic data methods. However, one drawback is that census data often lack numerical variables. To address this limitation, we added two social datasets from the UCI repository, which have also been used in previous research on tabular deep generative models (Xu et al. 2019; Ma et al. 2020; Kotelnikov et al. 2023). The column details of the datasets can be seen in Table 4 in Appendix A.

Acronym  Dataset Name      Year  #Observations  #Numerical Variables  #Categorical Variables
UK       UK Census         1991  104267         0                     15
ID       Indonesia Census  2010  177429         0                     13
CA       Canada Census     2011  32149          3                     22
FI       Fiji Census       2007  84323          0                     19
RW       Rwanda Census     2012  31455          0                     13
AD       Adult             1994  48842          5                     10
CH       Churn Modelling   N/A   10000          4                     7

Table 1: Datasets used in this study and their key characteristics.

4.2 Experimental Setup

4.2.1 Baseline Methods and Configurations

We compared our proposed methods with several baselines. To answer our main question, we compared GACTGAN with the regular CTGAN as a baseline, which is available as a Python library [1]. The default loss function in the script is Wasserstein; therefore, we added the vanilla loss to align with our study. Furthermore, we compared our proposed algorithm with BayesCTGAN, an integration of the Bayesian GAN developed by Saatci and Wilson (2017) with CTGAN [2]. However, instead of using stochastic gradient Hamiltonian Monte Carlo (SGHMC), we sampled the parameters using preconditioned stochastic gradient Langevin dynamics (PSGLD) (Li et al. 2016) [3].

[1] https://github.com/sdv-dev/CTGAN/blob/main/ctgan
[2] We adopted the original implementation and changed the optimiser to PSGLD. The original PyTorch code is available at https://github.com/vasiloglou/mltrain-nips-2017/tree/master/ben_athiwaratkun/pytorch-bayesgan
[3] The original code is available at https://github.com/automl/pybnn/blob/master/pybnn/sampler/preconditioned_sgld.py

Model       Parameter                  Setting
CTGAN       Layer size                 (256, 256)
            Noise dimensions           128
            Dropout (for $D$)          0.5
            Number of epochs           200
            Batch size                 500
            Pac                        10
Optimiser   Algorithm                  Adam
            Learning rate              2 × 10^-4
            Weight decay               1 × 10^-6
            Coefficients               (0.5, 0.9)
BayesCTGAN  Preconditioning decay      0.99
            $\sigma^2_{\text{prior}}$  0.01, 1, 10
            #MCMC samples              20
GACTGAN     $\sigma^2_{\text{prior}}$  1
            $K$                        0 (Diagonal), 30, 100, 150
            $\alpha_G$                 0 (ACTGAN), 0.25, 0.5, 1.0

Table 2: Algorithm parameters and their settings as used in this study.

Table 2 shows the algorithm parameters and their settings as used in the study. For a fair comparison, we used the same basic configuration for all datasets without fine-tuning, mainly adopted from Xu et al. (2019). We used two hidden layers of size 256, one of the hidden-layer combinations recommended in previous studies (Kotelnikov et al. 2023; Gorishniy et al. 2021). We also adopted the PacGAN framework (Lin et al. 2018), in which the discriminator judges several samples jointly to improve sample quality. We used Adam as the optimiser (Kingma and Ba 2015), except for BayesCTGAN, which uses PSGLD with different prior variances ($\sigma^2_{\text{prior}}$). We swept only $\sigma^2_{\text{prior}}$ to ensure that any difference comes solely from the Bayesian setting.

4.2.2 GACTGAN variants implementation

We used different variants of GACTGAN as our proposed method. The first variant uses SWA without any posterior approximation, which we denote as the averaging CTGAN or ACTGAN.
In GACTGAN, we investigated performance in diagonal and low-rank covariance settings to address our second contribution. Further, we tested different covariance rank settings, including diagonal (D), 30, 100, and 150. For clarity, we label each version according to its covariance rank, for example, GACTGAN (D), GACTGAN (30), and so forth. The fourth part of Table 2 summarises the configurations for GACTGAN.

We acknowledge that GACTGAN requires tuning, but this can be done during the synthesis phase without modifying the training parameters, which is an advantage over CTGAN and BayesCTGAN. In the synthesis phase, we used different covariance scales $\alpha_G$, following the recommendation of Maddox et al. (2019) to use a scale of at most 1.

4.3 Evaluation Method

4.3.1 Qualitative and Quantitative Evaluations

We evaluated the performance of the synthetic data produced using the different approaches. First, we evaluated the synthetic data visually to assess how the data generated by the models behave compared to the real data. For continuous variables, we used the absolute difference in correlation to investigate the relationships between variables and histograms to investigate the distributions. For categorical variables, we used bar charts based on cross-tabulation results.

We also performed quantitative evaluations based on utility and disclosure risk values. We focus on narrow utility measures (Taub et al. 2019), which reflect the user's perspective on what they want to use the data for. Narrow measures can be calculated using two approaches: ratio of counts (ROC) and confidence interval overlap (CIO).
ROC compares cell values within frequency tables and cross-tabulations between synthetic and original data by calculating, for each pair of cell counts, the ratio of the smaller count to the larger (Little et al. 2021). CIO measures the performance of synthetic data in statistical inference using a statistical model, such as the coefficients of a logistic regression model (Ran et al. 2024).

Disclosure risk values, on the other hand, can be found using the marginal target correct attribution probability (TCAP), as seen in Equation (8). These show how well an adversary who knows some of the population data can determine sensitive variables from the synthetic data (Little et al. 2021). Considering the baseline, we truncated the rescaled TCAP values at zero, under the assumption that TCAP values below zero already indicate low risk. Truncating the values also makes interpretation and comparison easier. The details of the measurements can be seen in Taub et al. (2019), Little et al. (2021), and Ran et al. (2024).

$$R = \max\left\{0, \frac{TCAP - WEAP}{1 - WEAP}\right\} \tag{8}$$

Since many combinations of models are to be investigated, we use an aggregate score that accounts for both utility and risk to select the best model for the research questions we want to answer. The selection score (SS) is calculated using Equation (9).

$$SS = (\phi \times U) + [(1 - \phi) \times (1 - R)] \tag{9}$$

$\phi$ is the weight of the utility and lies in $[0, 1]$. Increasing $\phi$ focuses the model selection on utility, and decreasing it focuses the selection on risk. In this study, we used $\phi = 0.75$ as we are looking for a model with balanced utility and risk. For each simulation, we generated five different datasets from different seeds using the trained models. We then calculated utility and risk for each, followed by the mean across the generated datasets.
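
To make Equations (8) and (9) concrete, the sketch below computes the truncated, rescaled risk and the selection score, and then averages over several seeds as described above. It is an illustrative example only: the function names are hypothetical, the per-seed utility, TCAP, and WEAP values are made up, and WEAP is simply treated as the baseline value appearing in Equation (8).

```python
from statistics import mean


def rescaled_risk(tcap: float, weap: float) -> float:
    """Equation (8): rescale TCAP against the baseline and truncate at zero."""
    if weap >= 1.0:
        raise ValueError("the baseline must be below 1 for the rescaling to be defined")
    return max(0.0, (tcap - weap) / (1.0 - weap))


def selection_score(utility: float, risk: float, phi: float = 0.75) -> float:
    """Equation (9): weighted combination of utility and (1 - risk)."""
    if not 0.0 <= phi <= 1.0:
        raise ValueError("phi must lie in [0, 1]")
    return phi * utility + (1.0 - phi) * (1.0 - risk)


if __name__ == "__main__":
    # Hypothetical per-seed results for one model: (utility, TCAP, WEAP).
    runs = [(0.80, 0.60, 0.50), (0.78, 0.45, 0.50), (0.82, 0.55, 0.50)]
    mean_utility = mean(u for u, _, _ in runs)
    mean_risk = mean(rescaled_risk(t, w) for _, t, w in runs)
    print(selection_score(mean_utility, mean_risk))
```

With the default weight of 0.75, a change in utility moves the score three times as much as an equal change in risk.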
4.3.2 Pareto Front

In multi-objective optimisation, the Pareto front provides a principled way to analyse trade-offs among competing objectives and to identify solutions that best align with a decision maker's preferences (Mirjalili and Dong 2020). The concept is grounded in Pareto dominance (Censor 1977), which formalises when one solution is considered superior to another. Specifically, a solution s_1 is said to dominate another solution s_2 if and only if:

1. s_1 is at least as good as s_2 in all objectives, and
2. s_1 is better than s_2 in at least one objective.

A solution is considered Pareto optimal if it is not dominated by any other feasible solution. Unlike single-objective optimisation problems, which typically admit a single best solution, multi-objective problems generally yield a set of Pareto-optimal solutions (Allmendinger et al. 2016). This arises because improvements in one objective often lead to degradation in another, resulting in inherent trade-offs rather than a single optimal outcome. The collection of Pareto-optimal solutions constitutes the Pareto front, which serves as a pool of candidate solutions from which decision makers can select according to their preferences. In this work, we use the Pareto front to analyse the trade-off between utility, which is to be maximised, and risk, which is to be minimised. Visualising these objectives on a two-dimensional plane provides an intuitive and effective means of identifying suitable solutions (Little et al. 2025).

5 Results

This section presents the results obtained from our experiments. In Section 5.1, we provide descriptive statistics for both the synthetic and the original data. In Section 5.2, we present the primary quantitative findings and discuss them in depth.
We also analyse different GACTGAN parameters in Section 5.3. Lastly, Section 5.4 provides insight into how our proposed model performs in different scenarios.

5.1 Descriptive Statistics Comparison

Before turning to the quantitative evaluation, we visually compared the synthetic data with their original counterparts. We analysed continuous variables using correlation matrices and histograms, and categorical variables using cross-tabulations between two variables. Given the page limitation, we only provide a few examples from different datasets, as seen in Figures 2 and 3. In each subfigure, the top row corresponds to the Wasserstein loss, while the bottom row represents the vanilla loss.

Figure 2 presents the correlation differences between synthetic and real data in the CA dataset. The difference is computed by subtracting the real data correlation from the synthetic data correlation, with brighter colours (lighter purple) indicating better preservation of the correlation between numerical variables and darker colours (blue) indicating the opposite. In the figure, each method is represented by a 3 × 3 heatmap corresponding to the pairwise correlations of the three continuous variables in the dataset. The results show that GACTGAN consistently outperforms CTGAN and BayesCTGAN. The GACTGAN configurations achieve the lowest correlation differences, indicating correlations closer to those of the real data. In contrast, CTGAN, BayesCTGAN, and ACTGAN exhibit higher deviations, with darker colours indicating their inability to preserve correlations. Therefore, it can be concluded that GACTGAN performed better in preserving correlations.

Figure 3 reveals the superior performance of GACTGAN in capturing the distributions of categorical and continuous variables. In Figure 3a, GACTGAN accurately captures the frequencies of the house ownership categories among men in the UK, as indicated by the close match between the blue and red bars for GACTGAN.
In contrast, CTGAN, BayesCTGAN, and ACTGAN over- and underestimate some categories, especially minor ones, failing to replicate the patterns of the real data effectively. In Figure 3b, GACTGAN continues to excel in learning the distribution of the credit score in the CH dataset, achieving high fidelity and accurately replicating the original data distribution. However, lower covariance ranks, such as diagonal, show reduced performance, underscoring the importance of the rank. In comparison, CTGAN, BayesCTGAN, and ACTGAN fail to capture the finer details of the distribution, particularly in the peak and tails. In general, GACTGAN demonstrates its robustness and adaptability across various variables and datasets, significantly outperforming other methods in preserving categorical and continuous data distributions.

Figure 2: Heatmap visualisation of the correlation difference for the CA dataset across different generative models (columns: CTGAN, BayesCTGAN, ACTGAN, GACTGAN (D), GACTGAN (30), GACTGAN (100), GACTGAN (150)) and loss functions (rows: Wasserstein, vanilla); the colour scale runs from 0.0 to 0.3. The difference is computed by subtracting the real data correlation from the synthetic data correlation. Each inner 3 × 3 grid displays the pairwise correlation differences between synthetic and real data for the dataset's continuous variables. Lighter tiles indicate minimal deviation from the real data (better preservation of multivariate dependencies), while darker blue tiles indicate the opposite. GACTGAN variants (right columns) predominantly exhibit lighter tones, demonstrating superior preservation of correlation structures compared to baselines such as CTGAN and BayesCTGAN.
These results highlight the importance of leveraging SWAG's posterior approximation in the CTGAN generator and of carefully tuning the covariance rank for optimal synthetic data generation.

In summary, GACTGAN with higher covariance ranks (100 and 150) provides the best overall performance, preserving the original data characteristics across all variables and datasets. Other methods show less consistent results, making GACTGAN the most reliable choice for generating high-quality synthetic data. We also provide additional descriptive statistics in Appendix B. The next subsection presents our evaluation results from a quantitative perspective, which completes our findings.

5.2 Quantitative Results

After observing that our proposed method performed better in descriptive statistics, this subsection addresses our main goal: a quantitative investigation of whether GACTGAN improves on CTGAN in terms of utility and risk. We evaluated the simulation results using the evaluation measures described in Section 4. We ran the models with the considered hyperparameters and then selected the configuration with the maximum selection score.
As an initial ablation study, we also included SWA (denoted as ACTGAN) and SWAG-diagonal (denoted as GACTGAN (D)) as special cases of SWAG. We also compared different covariance ranks of GACTGAN, denoted in parentheses.

Figure 3: Descriptive statistics of synthetic and original data: (a) and (b) represent cross-tabulation of house ownership among different sex in the UK, and (c) and (d) represent the distribution of estimated salary and credit score in CH, respectively. Each subplot compares the synthetic data generated by different methods (CTGAN, BayesCTGAN, ACTGAN, GACTGAN with varying parameters) to the original data, highlighting the alignment between the synthetic and original distributions across different demographics and variables. The top and bottom parts of each subfigure represent the Wasserstein and vanilla loss, respectively. [Panels shown: (a) UK, house ownership (TENURE) among females, frequency by category; (b) CH, credit score. Legend: original and synthetic data.]

Figure 4: R-U map of CTGAN, Bayesian GAN, and GACTGAN (panels: UK, Indonesia, Fiji, Canada, Rwanda, Adult, Churn; rows: Wasserstein, vanilla; axes: utility and risk). The purple lines indicate the solution candidates based on the Pareto front.

Figure 4 shows the simulation results of CTGAN, BayesCTGAN, and GACTGAN, divided by loss function and visualised on the R-U map together with the obtained Pareto front, which is used to select the best solution candidates. We only include algorithms with a utility larger than 0.4 as candidates, because a dataset with utility lower than 0.4 cannot be properly used. For example, although BayesCTGAN in RW could be a solution candidate based on the Pareto front, it is excluded because its utility is less than 0.4.

In general, Figure 4 shows that BayesCTGAN and SWA-CTGAN performed worse than the other algorithms. SWA-CTGAN has the lowest utility in most datasets under both the vanilla and Wasserstein losses, although they are occasionally selected as solutions in some datasets. Moreover, CTGAN performed better than both algorithms, except in CH, where it performed worse. Therefore, we do not suggest using weight averaging in the generator for tabular data synthesis, except possibly for small datasets.
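The candidate selection described above (Pareto dominance on utility and risk, followed by the 0.4 utility cut-off) can be sketched as follows. This is an illustrative sketch rather than the authors' code; the function names, model labels, and utility and risk values are hypothetical.

```python
def dominates(a, b):
    """True if point a dominates b; points are (utility, risk) pairs.

    Utility is maximised and risk is minimised.
    """
    no_worse = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return no_worse and strictly_better


def pareto_front(points):
    """Keep the entries of {name: (utility, risk)} that no other entry dominates."""
    return {
        name: p
        for name, p in points.items()
        if not any(dominates(q, p) for other, q in points.items() if other != name)
    }


def solution_candidates(points, min_utility=0.4):
    """Pareto-optimal entries whose utility exceeds the cut-off."""
    return {n: p for n, p in pareto_front(points).items() if p[0] > min_utility}


# Hypothetical (utility, risk) values, for illustration only.
results = {
    "model_a": (0.60, 0.30),
    "model_b": (0.50, 0.20),
    "model_c": (0.45, 0.35),  # dominated by model_a
    "model_d": (0.30, 0.05),  # Pareto-optimal but below the utility cut-off
}
front = pareto_front(results)
candidates = solution_candidates(results)
```

Applying the cut-off after computing the front mirrors the BayesCTGAN example in RW, where a method lies on the Pareto front but is still excluded because of its low utility.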
On the other hand, the Pareto front, shown as the purple line, indicates that GACTGAN is the primary candidate solution for tabular data synthesis in all datasets, joined by CTGAN in some datasets.

In detail, the top row of Figure 4 shows that, with the Wasserstein loss, GACTGAN improves upon CTGAN. This can be seen from the improvement in utility in most datasets, such as FI and AD, by GACTGAN with rank 150. Moreover, an interesting finding is that in UK, CA, RW, and CH, the synthetic data generated by GACTGAN appear to have higher utility and lower disclosure risk, which is the ideal outcome for tabular data synthesis. However, in the ID dataset, the utility of the GACTGAN data is slightly lower than that of CTGAN while the risk is significantly lower; these variants are not considered solutions because of the utility cut-off. The same pattern occurred for GACTGAN in AD, except for rank 150, although there the variants remain preferred solutions.

In the bottom row of Figure 4, where the models use the vanilla loss, the pattern is similar to that of the top row. In UK and FI, GACTGAN generally has a larger utility, with only a slight difference in risk from CTGAN. In CA, AD, and CH, the ideal scenario of higher utility with lower risk occurred. In RW, both utility and risk increase compared to CTGAN, which commonly occurs in tabular data synthesis. In the ID dataset, GACTGAN has a lower risk than CTGAN at the same level of utility.

In summary, GACTGAN improves on CTGAN by producing more useful synthetic data with lower disclosure risk. Within GACTGAN, different covariance ranks give quite similar results across the datasets, whereas the low-rank covariance approximation shows improvements over the diagonal.
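To make the difference between the diagonal and low-rank settings concrete, the sketch below collects SWAG moments from a sequence of weight snapshots and draws one weight vector, in the spirit of Maddox et al. (2019). It is a pure-Python illustration under stated assumptions, not the GACTGAN implementation: weights are flattened into plain lists, the function names are hypothetical, and the covariance scale is assumed to multiply the whole SWAG covariance, so that a scale of 0.5 reproduces the 1/2 factors in the low-rank sampling formula of Maddox et al. (2019).

```python
import math
import random


def collect_swag_moments(snapshots, rank):
    """Running mean, diagonal variance, and the last `rank` deviation vectors."""
    dim = len(snapshots[0])
    mean = [0.0] * dim
    sq_mean = [0.0] * dim
    deviations = []  # columns of the low-rank deviation matrix
    for n, theta in enumerate(snapshots):
        mean = [(m * n + t) / (n + 1) for m, t in zip(mean, theta)]
        sq_mean = [(s * n + t * t) / (n + 1) for s, t in zip(sq_mean, theta)]
        if rank > 0:
            deviations.append([t - m for t, m in zip(theta, mean)])
            deviations = deviations[-rank:]  # keep only the most recent ones
    var = [max(s - m * m, 0.0) for s, m in zip(sq_mean, mean)]
    return mean, var, deviations


def sample_swag(mean, var, deviations, scale=0.5, rng=random):
    """Draw one weight vector; an empty `deviations` list is the diagonal case."""
    root = math.sqrt(scale)
    sample = [m + root * math.sqrt(v) * rng.gauss(0.0, 1.0)
              for m, v in zip(mean, var)]
    k = len(deviations)
    if k > 0:
        norm = math.sqrt(max(k - 1, 1))  # guard against division by zero
        z = [rng.gauss(0.0, 1.0) for _ in range(k)]
        for i in range(len(sample)):
            low_rank = sum(z[j] * deviations[j][i] for j in range(k))
            sample[i] += root * low_rank / norm
    return sample


# Two hypothetical snapshots of a two-parameter "generator".
snapshots = [[1.0, 2.0], [3.0, 4.0]]
mean, var, dev = collect_swag_moments(snapshots, rank=2)
theta = sample_swag(mean, var, dev, scale=0.5, rng=random.Random(0))
```

Because the scale enters only at sampling time, it can be changed after training without recomputing the moments. The deviation list grows with the rank, so storage for the low-rank term scales with the rank times the number of generator parameters.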
5.3 Utility, Loss, and Rank Consideration in GACTGAN

Table 3 presents the utility and risk gains (%) for different covariance ranks, utility weights (ϕ), and loss functions when combining SWAG with CTGAN. Here, ϕ = 1 represents full utility weight, while ϕ = 0.75 indicates partial utility weight. The results highlight how different configurations affect the balance between utility and risk, offering insights for optimising performance.

For ϕ = 1, the vanilla loss function achieves its highest utility gain of 6.46% at a covariance rank of 150, with a moderate risk of 4.08%. Lower ranks, such as the diagonal, show reduced utility (4.63%) but maintain a much lower risk (1.30%). The Wasserstein loss function also performs well with ϕ = 1, achieving its highest utility of 6.95% at rank 150, but at a slightly higher risk of 4.29%. However, at lower ranks, such as the diagonal, the Wasserstein loss sacrifices utility (3.63%) to achieve its lowest risk of 5.15%.

When using ϕ = 0.75, the trends change slightly. The vanilla loss function still favours higher covariance ranks, with the highest utility gain of 6.18% achieved at rank 150, while the risk remains relatively low at 1.29%. The Wasserstein loss function demonstrates a strong balance of utility and risk at rank 100, where utility reaches 6.08% and risk is reduced to 2.69%. Lower covariance ranks, such as the diagonal, again show reduced utility but maintain better risk levels for certain configurations.

Based on these observations, full utility weight is recommended for tasks that prioritise utility, as it consistently yields higher utility gains across different configurations. In contrast, a partial utility weight may be more suitable for risk-sensitive applications due to its better control over risk reduction.
The Wasserstein loss function outperforms the vanilla loss function in many cases, particularly when prioritising a balance between utility and risk. As for the covariance rank, higher ranks (for example, 150) generally perform better when maximising utility, while moderate ranks (for example, 100) strike a balance between utility and risk, making them a robust choice for most applications. However, the computational cost becomes prohibitive if the rank is too large, which should also be taken into consideration when building GACTGAN.

| Covariance Rank | ϕ = 0.75, Vanilla: Utility (↑) | ϕ = 0.75, Vanilla: Risk (↓) | ϕ = 0.75, Wasserstein: Utility (↑) | ϕ = 0.75, Wasserstein: Risk (↓) | ϕ = 1, Vanilla: Utility (↑) | ϕ = 1, Vanilla: Risk (↓) | ϕ = 1, Wasserstein: Utility (↑) | ϕ = 1, Wasserstein: Risk (↓) |
|---|---|---|---|---|---|---|---|---|
| Diagonal | 4.21 | 1.30 | 3.24 | 0.78 | 4.63 | 4.29 | 3.63 | 5.15 |
| 30 | 4.86 | -4.12 | 3.57 | -4.89 | 6.18 | 1.29 | 5.73 | 4.59 |
| 100 | 5.93 | -1.57 | 4.74 | -2.22 | 6.53 | 1.57 | 6.08 | 2.69 |
| 150 | 5.89 | -0.41 | 6.03 | -1.02 | 6.46 | 4.08 | 6.95 | 4.29 |

Table 3: Average utility and risk gain (in %) of GACTGAN with different covariance ranks using partial utility weight (ϕ = 0.75) and full utility weight (ϕ = 1) in the model selection. The gain is calculated as the difference between the average utility and risk of GACTGAN and those of CTGAN.

Figure 5: Utility-Risk map of GACTGAN based on the number of posterior samples (panels: UK, Indonesia, Fiji, Canada, Rwanda, Adult, Churn; axes: utility and risk; legend: 1 to 10 posterior samples). The blue line shows the trend across the numbers of samples.

5.4 Ablation Study

The beginning of our quantitative results can be regarded as an ablation study, because it compares different model variants, namely ACTGAN and GACTGAN (D). This subsection provides an additional ablation study on the number of posterior samples used for BMA on different datasets.
Figure 5 shows the R-U map of the GACTGAN synthesis results using different numbers of posterior samples. The figure shows an inverse diagonal trend as the number of posterior samples increases. This finding indicates that using a large number of posterior samples for BMA in CTGAN-based tabular data synthesis is not recommended, because it tends to generate less useful data with higher disclosure risk, which should be avoided. On the other hand, in some datasets, such as RW and AD, using two samples can produce data with higher utility, but at increased risk. According to Saatci and Wilson (2017), the weights sampled for the generator G are used to explore different modes of the distribution rather than for model averaging. Thus, we recommend using one or two posterior samples when performing data synthesis.

6 Discussion

Our comprehensive evaluation across seven diverse tabular datasets demonstrates that GACTGAN consistently outperforms existing benchmarks, including standard CTGAN, its Bayesian variant (BayesCTGAN), and a generator using Stochastic Weight Averaging (SWA), across both descriptive and quantitative metrics. This section interprets these findings, situates them within the broader literature on synthetic data and Bayesian deep learning, and offers a critical analysis of the implications, strengths, and limitations of our approach.

6.1 Interpretation of Key Findings

The superior performance of GACTGAN can be attributed to its enhanced ability to capture the complex, multi-modal distributions typical of tabular data. The SWAG posterior provides a more nuanced and robust approximation of the generator's parameter distribution compared to point estimates (CTGAN), mean-field variational inference (BayesCTGAN), or a simple average of weights (SWA).
This allows GACTGAN to generate a richer variety of plausible data points, which directly translates to higher utility, as evidenced by its improved correlation preservation (Figure 2) and distributional fidelity (Figure 3).

A crucial finding is the critical role of the covariance rank within the SWAG approximation. Our results (Table 3) indicate that a low-rank approximation (e.g., rank 30) often underperforms, while a diagonal approximation, although computationally cheaper, fails to capture parameter correlations, leading to suboptimal results. The highest utility gains were consistently achieved with higher ranks (100 to 150), which effectively model dependencies between parameters in the generator network. This aligns with the understanding that capturing these correlations is essential to generate coherent and realistic data records (Maddox et al. 2019). However, this comes with a non-trivial computational cost, presenting a practical trade-off for practitioners.

Furthermore, the ablation study on the number of posterior samples for Bayesian Model Averaging (BMA) (Figure 5) yielded a counter-intuitive yet insightful result: using more than one or two samples often degraded performance. This suggests that for the task of data synthesis, where the goal is to produce a single high-quality dataset, extensive sampling from the posterior may lead to "averaging out" the distinct high-quality modes that a single good sample can capture. This finding nuances the typical BMA paradigm and suggests that in generative tasks, exploring a few high-probability modes might be more effective than attempting to average over the entire posterior.

6.2 Comparison with Prior Work

Our findings both confirm and challenge existing narratives in the literature. The consistent underperformance of BayesCTGAN and SWA-CTGAN (ACTGAN) reinforces the conclusions of Xu et al.
(2019) and others that naively applying Bayesian methods to GAN is fraught with difficulty. The training instability and mode collapse issues inherent in GAN are often exacerbated by simplistic variational approximations. GACTGAN addresses this by employing SWAG, a method lauded for its stability and accuracy in capturing posterior distributions in deep networks (Maddox et al. 2019), and successfully adapts it to the adversarial training setting of a GAN.

The result that the Wasserstein loss generally outperformed the vanilla loss in terms of utility (Table 3) is consistent with the established benefits of the Wasserstein distance for GAN training, such as improved stability and more meaningful loss gradients (Arjovsky et al. 2017). However, our work adds a new layer to this understanding: the choice of loss function also interacts significantly with the Bayesian approximation method. The smoother landscape of the Wasserstein loss likely provides a more stable base over which SWAG can construct an accurate posterior, leading to more reliable improvements.

Our work also contributes to the ongoing discussion on the dilemma between data utility and disclosure risk (Taub et al. 2019; Little et al. 2025). While GACTGAN primarily optimises utility, the synthetic data was produced without a proportional increase in disclosure risk, and in some cases with reduced risk (e.g., in the CA dataset with Wasserstein loss). This suggests that by producing a more accurate and less "memorised" representation of the underlying data distribution, a better Bayesian generator can inherently mitigate some privacy risks.

6.3 Limitations

Despite its promising results, this study has several limitations that should be acknowledged. First, the computational overhead of GACTGAN is significant.
Training SWAG requires maintaining a running estimate of the first and second moments of the weights over multiple epochs, and generating samples involves forward passes through multiple networks drawn from the posterior. This cost may be prohibitive for very large datasets or models, and future work should focus on developing more computationally efficient approximations tailored for generative models. Moreover, future research may also explore other posterior approximations (e.g., Variational Inference (Blundell et al. 2015; Zhang et al. 2018), Monte Carlo Dropout (Gal and Ghahramani 2016)) and their integration within different generative architectures.

Second, while our evaluation is comprehensive, it is constrained to the CTGAN architecture and specific datasets. The generalisability of SWAG's benefits to other state-of-the-art tabular generators (e.g., TabDDPM (Kotelnikov et al. 2023), TVAE (Xu et al. 2019), and flow matching (Guzmán-Cordero et al. 2025; Nasution et al. 2026)), as well as integration with other generator architectures (e.g., Transformer-based models), remains an open question. It is plausible that the advantages of a Bayesian generator would be even more pronounced in a more powerful base model.

Finally, the optimal configuration of GACTGAN (rank, number of samples, ϕ) appears to be dataset-dependent. Although we provide general guidelines, the need for per-dataset tuning somewhat diminishes the "out-of-the-box" advantage. Developing methods for automated configuration or demonstrating more robust default settings would enhance the practical adoption of this technique.

7 Concluding Remarks

This study introduced GACTGAN, a novel Bayesian extension of the CTGAN framework that leverages Stochastic Weight Averaging-Gaussian (SWAG) for posterior approximation in the generator. We evaluated our proposed algorithm against CTGAN, BayesCTGAN, and ACTGAN across multiple datasets using utility and disclosure risk metrics.
Across datasets, GACTGAN produced synthetic samples that more closely matched the empirical properties of the real data, with improvements observed for both categorical and continuous features. These findings highlight SWAG-style posterior approximations as a practical mechanism for improving tabular data synthesis. A consistent empirical finding is that richer covariance structure in the SWAG posterior matters. Higher covariance ranks, particularly around 100, tended to provide the most reliable gains, primarily when combined with the Wasserstein objective. In addition, the results suggest that sampling overhead can often be kept modest, since a small number of posterior samples may already deliver most of the utility benefits. Overall, these results support the use of scalable posterior approximations as a viable route to improving the performance of tabular generative models, especially CTGAN.

Data and Code Availability

The implementation code of this paper is available at https://github.com/rulnasution/ctgan-bayes/.

Acknowledgements

B.I. Nasution is supported by the Indonesia Endowment Fund for Education Agency (LPDP) scholarship.

Declarations

The authors declare no conflict of interest.

References

Allmendinger, R., M. Ehrgott, X. Gandibleux, M.J. Geiger, K. Klamroth, and M. Luque. 2016. Navigation in multiobjective optimization methods. Journal of Multi-Criteria Decision Analysis 24 (1–2): 57–70.

Arjovsky, M., S. Chintala, and L. Bottou 2017. Wasserstein generative adversarial networks. In Proceedings of the 34th International Conference on Machine Learning, Volume 70, pp. 214–223.

Athey, S., G.W. Imbens, J. Metzger, and E. Munro. 2024. Using Wasserstein generative adversarial networks for the design of Monte Carlo simulations. Journal of Econometrics 240 (2): 105076.

Blundell, C., J. Cornebise, K.
Kavukcuoglu, and D. Wierstra 2015. Weight uncertainty in neural networks. In Proceedings of the 32nd International Conference on Machine Learning, Volume 37, pp. 1613–1622.

Censor, Y. 1977. Pareto optimality in multiobjective problems. Applied Mathematics and Optimization 4 (1): 41–59.

Daxberger, E., A. Kristiadi, A. Immer, R. Eschenhagen, M. Bauer, and P. Hennig 2021. Laplace Redux - Effortless Bayesian Deep Learning. In Proceedings of the 35th International Conference on Neural Information Processing Systems, pp. 20089–20103.

Durall, R., F.J. Pfreundt, U. Köthe, and J. Keuper 2019. Object segmentation using pixel-wise adversarial loss. In DAGM GCPR 2019: Pattern Recognition, pp. 303–316.

Elliot, M., C. Little, and R. Allmendinger 2024. The production of bespoke synthetic teaching datasets without access to the original data. In International Conference on Privacy in Statistical Databases, pp. 144–157.

Gal, Y. and Z. Ghahramani 2016. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In Proceedings of The 33rd International Conference on Machine Learning, Volume 48, pp. 1050–1059.

Glazunov, M. and A. Zarras 2022. Do Bayesian variational autoencoders know what they don't know? In Proceedings of the 38th Conference on Uncertainty in Artificial Intelligence, pp. 718–727.

Gong, W., S. Tschiatschek, S. Nowozin, R.E. Turner, J.M. Hernández-Lobato, and C. Zhang 2019. Icebreaker: Element-wise efficient information acquisition with a Bayesian deep latent Gaussian model. In Advances in Neural Information Processing Systems, Volume 32.

Goodfellow, I.J., J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio 2014. Generative adversarial nets. In Advances in Neural Information Processing Systems, Volume 27.

Gorishniy, Y., I.
Rubachev, V. Khrulkov, and A. Babenko 2021. Revisiting deep learning models for tabular data. In Advances in Neural Information Processing Systems, Volume 34.

Gulrajani, I., F. Ahmed, M. Arjovsky, V. Dumoulin, and A. Courville 2017. Improved training of Wasserstein GANs. In Advances in Neural Information Processing Systems, Volume 30.

Guzmán-Cordero, A., F. Eijkelboom, and J.W. van de Meent 2025. Exponential family variational flow matching for tabular data generation. In Forty-second International Conference on Machine Learning.

Hornik, K., M. Stinchcombe, and H. White. 1989. Multilayer feedforward networks are universal approximators. Neural Networks 2 (5): 359–366.

Hwang, J.w., Y. Lee, S. Oh, and Y. Bae 2021. Adversarial training with stochastic weight average. In 2021 IEEE International Conference on Image Processing (ICIP), pp. 814–818.

Izmailov, P., D. Podoprikhin, T. Garipov, D.P. Vetrov, and A.G. Wilson 2018. Averaging weights leads to wider optima and better generalization. In Proceedings of the 34th Conference on Uncertainty in Artificial Intelligence, pp. 876–885.

Kim, H., W. Lee, S. Lee, and J. Lee. 2023. Bridged adversarial training. Neural Networks 167: 266–282.

Kingma, D.P. and J. Ba 2015. Adam: A method for stochastic optimization. In 3rd International Conference on Learning Representations, ICLR 2015.

Kotelnikov, A., D. Baranchuk, I. Rubachev, and A. Babenko 2023. TabDDPM: Modelling tabular data with diffusion models. In Proceedings of the 40th International Conference on Machine Learning, Volume 202, pp. 17564–17579.

Li, C., C. Chen, D. Carlson, and L. Carin 2016. Preconditioned stochastic gradient Langevin dynamics for deep neural networks. In Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence, Volume 30, pp. 1788–1794.

Lin, Z., A. Khetan, G. Fanti, and S. Oh 2018. PacGAN: The power of two samples in generative adversarial networks.
In Advances in Neural Information Processing Systems, Volume 31.

Little, C., R. Allmendinger, and M. Elliot. 2025. Synthetic census microdata generation: A comparative study of synthesis methods examining the trade-off between disclosure risk and utility. Journal of Official Statistics 41 (1): 255–308.

Little, C., M. Elliot, and R. Allmendinger 2023a. Do samples taken from a synthetic microdata population replicate the relationship between samples taken from an original population? In 2023 Expert Meeting on Statistical Data Confidentiality, Conference of European Statisticians.

Little, C., M. Elliot, and R. Allmendinger. 2023b. Federated learning for generating synthetic data: a scoping review. International Journal of Population Data Science 8 (1): 2158.

Little, C., M. Elliot, and R. Allmendinger 2025. Producing synthetic teaching datasets using evolutionary algorithms. In Expert Meeting on Statistical Data Confidentiality, Conference of European Statisticians.

Little, C., M. Elliot, R. Allmendinger, and S.S. Samani. 2021. Generative adversarial networks for synthetic data generation: A comparative study. Conference of European Statisticians: Expert Meeting on Statistical Data Confidentiality: 1–16.

Ma, C., S. Tschiatschek, R. Turner, J.M. Hernández-Lobato, and C. Zhang 2020. VAEM: A deep generative model for heterogeneous mixed type data. In Proceedings of the 34th International Conference on Neural Information Processing Systems.

MacKay, D.J.C. 1992. A practical Bayesian framework for backpropagation networks. Neural Computation 4 (3): 448–472.

Maddox, W.J., P. Izmailov, T. Garipov, D.P. Vetrov, and A.G. Wilson 2019. A simple baseline for Bayesian uncertainty in deep learning. In H. Wallach, H. Larochelle, A. Beygelzimer, F. d'Alché-Buc, E. Fox, and R.
Garnett (Eds.), Advances in Neural Information Processing Systems , V olume 32. Mbacke, S.D., F . Clerc, and P . Germain 2023. P AC-Bayesian generalization bounds for ad- versarial generative models. In Proceedings of the 40th International Conference on Machine Learning . Minnesota Population Center. 2023. Integrated public use microdata series, international: V ersion 7.4. Mirjalili, S. and J.S. Dong. 2020. Multi-Objective Optimization using Artificial Intelligence T ech- niques . Springer International Publishing. Morimoto, M., K. Fukami, R. Maulik, R. V inuesa, and K. Fukagata. 2022. Assessments of epistemic uncertainty using Gaussian stochastic weight averaging for fluid-flow regr es- sion. Physica D: Nonlinear Phenomena 440: 133454 . Mosser , L. and E.Z. Naeini. 2022. A comprehensive study of calibration and uncertainty quantification for Bayesian convolutional neural networks — an application to seismic data. GEOPHYSICS 87 (4): IM157–IM176 . Murad, A., F .A. Kraemer , K. Bach, and G. T aylor . 2021. Probabilistic deep learning to quan- tify uncertainty in air quality forecasting. Sensors 21 (23): 8009 . Nasution, B.I., I.D. Bhaswara, Y . Nugraha, and J.I. Kanggrawan 2022. Data analysis and synthesis of COVID-19 patients using deep generative models: A case study of jakarta, indonesia. In 2022 IEEE International Smart Cities Conference (ISC2) , pp. 1–10. Nasution, B.I., F . Eijkelboom, M. Elliot, R. Allmendinger , and C.A. Naesseth. 2026. Flow matching for tabular data synthesis. Nowok, B., G.M. Raab, and C. Dibben. 2016. synthpop: Bespoke Creation of Synthetic Data in R. Journal of Statistical Software 74 (11 SE - Articles): 1–26 . Onal, E., K. Fl ¨ oge, E. Caldwell, A. Shever din, and V . Fortuin 2024. Gaussian stochastic weight averaging for Bayesian low-rank adaptation of lar ge language models. In Sixth Symposium on Advances in Approximate Bayesian Infer ence - Non Archival T rack . 
Pham, V.T., T.T. Tran, P.C. Wang, P.Y. Chen, and M.T. Lo. 2021. EAR-UNet: A deep learning-based approach for segmentation of tympanic membranes from otoscopic images. Artificial Intelligence in Medicine 115: 102065.

Raab, G.M. 2024. Privacy risk from synthetic data: Practical proposals. In Privacy in Statistical Databases, pp. 254–273. Springer Nature Switzerland.

Ran, N., B. Nasution, C. Little, R. Allmendinger, and M. Elliot 2024. Multi-objective evolutionary GAN for tabular data synthesis. In Proceedings of the Genetic and Evolutionary Computation Conference, pp. 394–402.

Saatci, Y. and A.G. Wilson 2017. Bayesian GAN. In Advances in Neural Information Processing Systems, Volume 30.

Salimans, T., D. Kingma, and M. Welling 2015. Markov Chain Monte Carlo and variational inference: Bridging the gap. In Proceedings of the 32nd International Conference on Machine Learning, pp. 1218–1226.

Taub, J., M. Elliot, G. Raab, A.S. Charest, C. Chen, C.M. O’Keefe, M.P. Nixon, J. Snoke, and A. Slavkovic 2019. The synthetic data challenge. In Joint UNECE/Eurostat Work Session on Statistical Data Confidentiality.

Tran, D., R. Ranganath, and D.M. Blei 2017. Hierarchical implicit models and likelihood-free variational inference. In Proceedings of the 31st International Conference on Neural Information Processing Systems.

Turner, R., J. Hung, E. Frank, Y. Saatchi, and J. Yosinski 2019. Metropolis-Hastings generative adversarial networks. In Proceedings of the 36th International Conference on Machine Learning, pp. 6345–6353.

Xu, L., M. Skoularidou, A. Cuesta-Infante, and K. Veeramachaneni 2019. Modeling tabular data using conditional GAN. In Advances in Neural Information Processing Systems, Volume 32.

Yang, L., Y. Wang, X. Yang, and C. Zheng. 2023. Stochastic weight averaging enhanced temporal convolution network for EEG-based emotion recognition. Biomedical Signal Processing and Control 83: 104661.

Zhang, G., S. Sun, D. Duvenaud, and R. Grosse 2018. Noisy natural gradient as variational inference. In Proceedings of the 35th International Conference on Machine Learning, pp. 5852–5861.

Zhang, J., G. Cormode, C.M. Procopiuc, D. Srivastava, and X. Xiao. 2017. Privbayes: Private data release via Bayesian networks. ACM Transactions on Database Systems 42 (4): 1–41.

A Dataset Details

Table 4 lists the columns used from each dataset. We treat age differently in the census and non-census datasets. In the census datasets, we treated age as a categorical variable, following previous studies (Ran et al. 2024). In the AD and CH datasets, by contrast, we treated age as a numerical variable. For the UK dataset, we restricted the region to the West Midlands. Similarly, for the ID dataset we used only the Deli Serdang municipality (code 1212 or 012012 in IPUMS). The variables used to calculate the evaluation measures are specified in the code.

Table 4: Details of columns used in this study.
| Dataset | Year | Continuous columns | Categorical columns |
|---|---|---|---|
| UK | 1991 | - | AREAP, AGE, COBIRTH, ECONPRIM, ETHGROUP, FAMTYPE, HOURS, LTILL, MSTATUS, QUALNUM, RELAT, SEX, SOCLASS, TRANWORK, TENURE |
| ID | 2010 | - | OWNERSHIP, LANDOWN, AGE, RELATE, SEX, MARST, HOMEFEM, HOMEMALE, RELIGION, SCHOOL, LIT, EDATTAIND, DISABLED |
| FI | 2007 | - | PROV, TENURE, RELATE, SEX, AGE, ETHNIC, MARST, RELIGION, BPLPROV, RESPROV, RESSTAT, SCHOOL, EDATTAIN, TRAVEL, WORKTYPE, OCC1, IND2, CLASSWKR, MIG5YR |
| RW | 2012 | - | AGE, STATUS, SEX, URBAN, REGBTH, WKSECTOR, MARST, NSPOUSE, CLASSWK, OWNERSH, DISAB2, DISAB1, EDCERT, RELATE, RELIG, OCC, HINS, NATION, LIT, IND1, BPL |
| AD | - | age, fnlwgt, capital-gain, capital-loss, hours-per-week | workclass, education, education-num, marital-status, occupation, relationship, race, sex, native-country, income |
| CH | - | CreditScore, Age, Balance, EstimatedSalary | Geography, Gender, Tenure, NumOfProducts, HasCrCard, IsActiveMember, Exited |

B Additional Descriptive Statistics

Figure 6 shows further cross-tabulations for the RW and CH datasets. GACTGAN captures the structure of these cross-tabulations closely, including employment-sector patterns and customer exits by gender, whereas CTGAN and BayesCTGAN perform inconsistently. These findings suggest that, although GACTGAN is generally preferable for precise synthetic data generation, CTGAN can also yield good results, even on smaller datasets.

Figure 7 presents additional histograms of continuous variables in AD and CH. GACTGAN frequently aligns more closely with the original data than the other methods, indicating that it can learn data distributions across different features. For some variables, notably fnlwgt and Balance, CTGAN is occasionally competitive with GACTGAN in replicating the original distributions. Overall, GACTGAN appears better able to reflect the complexity of the data, at least in certain contexts or for specific data types.

Figure 8 presents additional correlation differences for the AD and CH datasets. GACTGAN frequently achieves a lower correlation difference in CH, whereas in AD its correlation difference is close to that of CTGAN. These findings imply that GACTGAN generally preserves correlations well.

[Figure 6 panels: (a) RW, working sector (WKSECTOR) in status=1; (b) RW, working sector (WKSECTOR) in status=2; (c) CH, customer exit (Exited) among males; (d) CH, customer exit (Exited) among females. Each panel plots frequency for original and synthetic data, faceted by method (CTGAN, BayesCTGAN, ACTGAN, GACTGAN (D), GACTGAN (30), GACTGAN (100), GACTGAN (150)) and by Wasserstein and Vanilla.]

Figure 6: Additional cross-tabulation between synthetic data and original datasets.
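The conditional cross-tabulations in Figure 6 (for example, customer exit among males in CH) amount to counting the categories of one column within a subgroup defined by another column, once for the original data and once for the synthetic data. The following is a minimal sketch in plain Python, not the authors' code. The column names Gender and Exited come from Table 4; the category labels, the toy records, and the helper names conditional_counts and count_gap are assumptions made for illustration.

```python
from collections import Counter


def conditional_counts(rows, filter_col, filter_value, target_col):
    """Count target_col categories among rows where filter_col == filter_value."""
    return Counter(
        row[target_col] for row in rows if row[filter_col] == filter_value
    )


def count_gap(original, synthetic):
    """Per-category count difference (synthetic minus original).

    Categories missing from one side are treated as having count 0.
    """
    categories = sorted(set(original) | set(synthetic))
    return {c: synthetic.get(c, 0) - original.get(c, 0) for c in categories}


# Toy records (hypothetical values, not the CH dataset).
original_rows = [
    {"Gender": "Male", "Exited": 0},
    {"Gender": "Male", "Exited": 0},
    {"Gender": "Male", "Exited": 1},
    {"Gender": "Female", "Exited": 1},
]
synthetic_rows = [
    {"Gender": "Male", "Exited": 0},
    {"Gender": "Male", "Exited": 1},
    {"Gender": "Male", "Exited": 1},
    {"Gender": "Female", "Exited": 0},
]

orig_male = conditional_counts(original_rows, "Gender", "Male", "Exited")
synth_male = conditional_counts(synthetic_rows, "Gender", "Male", "Exited")
gap = count_gap(orig_male, synth_male)
print(gap)
```

A gap of zero in every category would mean the synthetic data reproduces the subgroup frequencies exactly; the bar charts in Figure 6 show the same comparison visually.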
[Figure 7 panels: (a) AD, Age; (b) AD, fnlwgt; (c) CH, Age; (d) CH, Balance. Each panel overlays histograms of original and synthetic data, faceted by method (CTGAN, BayesCTGAN, ACTGAN, GACTGAN (D), GACTGAN (30), GACTGAN (100), GACTGAN (150)) and by Wasserstein and Vanilla.]

Figure 7: Additional histogram between synthetic data and original datasets.

[Figure 8 panels: (a) AD; (b) CH. Each panel shows the correlation difference value (axis range 0.0 to 0.3) by method (CTGAN, BayesCTGAN, ACTGAN, GACTGAN (D), GACTGAN (30), GACTGAN (100), GACTGAN (150)) and by Wasserstein and Vanilla.]

Figure 8: Additional correlation difference between synthetic and original dataset.
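One plausible reading of the correlation difference in Figure 8 is the mean absolute gap between the pairwise Pearson correlations of the original data and those of the synthetic data. The sketch below assumes that definition; the measure actually used in the study is defined in the main text and the accompanying code and may differ. The function names and the toy columns are hypothetical, and only the column names Age and Balance are taken from Table 4.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences.

    Assumes neither sequence is constant (otherwise the denominator is zero).
    """
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def mean_abs_correlation_difference(original, synthetic):
    """Average |corr_original - corr_synthetic| over all unordered column pairs.

    Both arguments map column names to lists of numeric values and must
    share the same column names.
    """
    names = list(original)
    pairs = [(a, b) for i, a in enumerate(names) for b in names[i + 1:]]
    diffs = [
        abs(pearson(original[a], original[b]) - pearson(synthetic[a], synthetic[b]))
        for a, b in pairs
    ]
    return sum(diffs) / len(diffs)


# Toy columns (hypothetical values, not the CH dataset).
original = {"Age": [20, 30, 40, 50], "Balance": [1.0, 2.0, 3.0, 4.0]}
synthetic = {"Age": [20, 30, 40, 50], "Balance": [4.0, 3.0, 2.0, 1.0]}

same = mean_abs_correlation_difference(original, original)
flipped = mean_abs_correlation_difference(original, synthetic)
print(same, flipped)
```

Under this assumed definition, a value near zero means the synthetic data reproduces the pairwise linear relationships of the original data, and the maximum possible value is 2, reached when every correlation flips from +1 to -1 as in the toy example.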

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

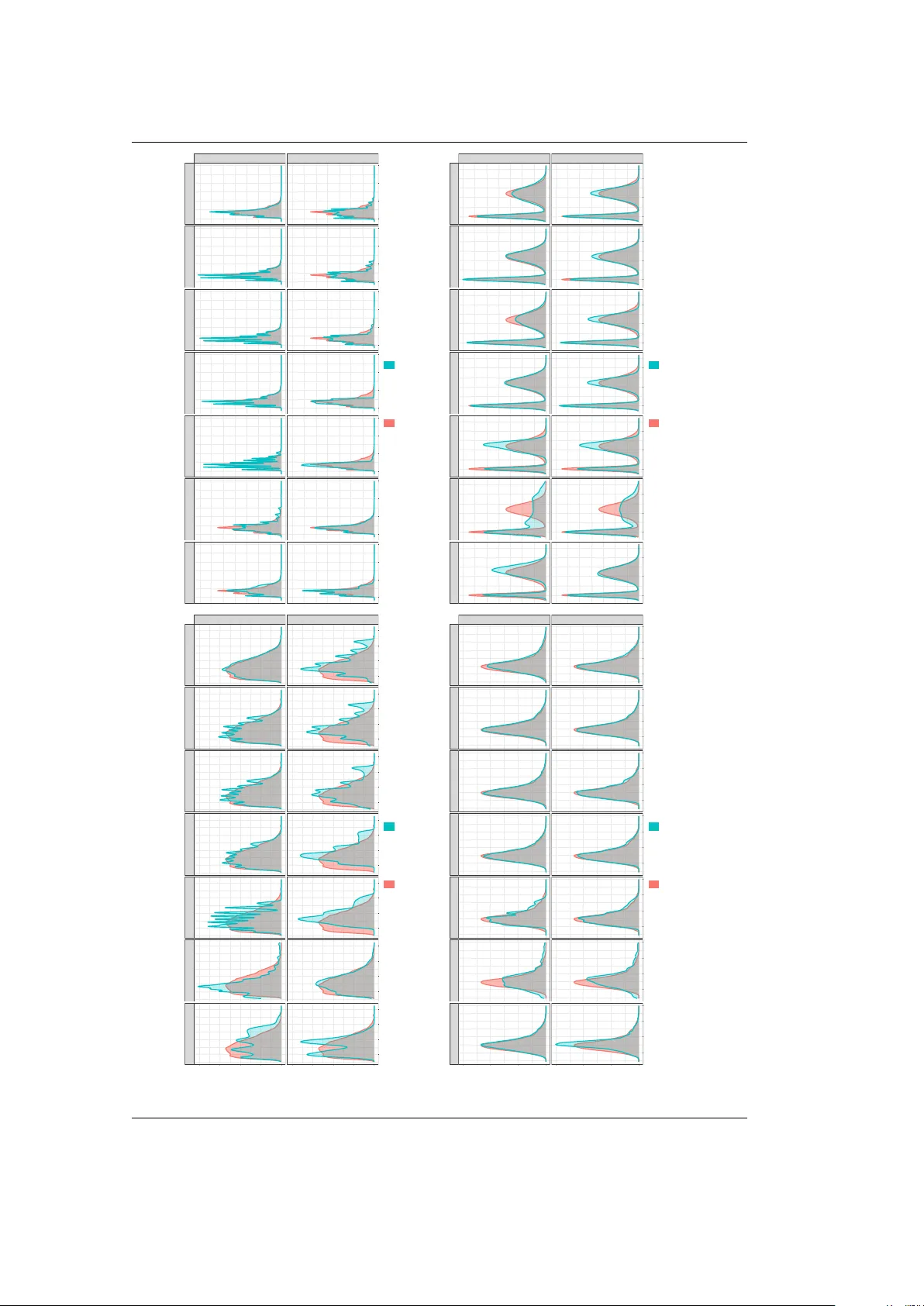

Leave a Comment