Scalable Kernel-Based Distances for Statistical Inference and Integration

Representing, comparing, and measuring the distance between probability distributions is a key task in computational statistics and machine learning. The choice of representation and the associated distance determine properties of the methods in whic…

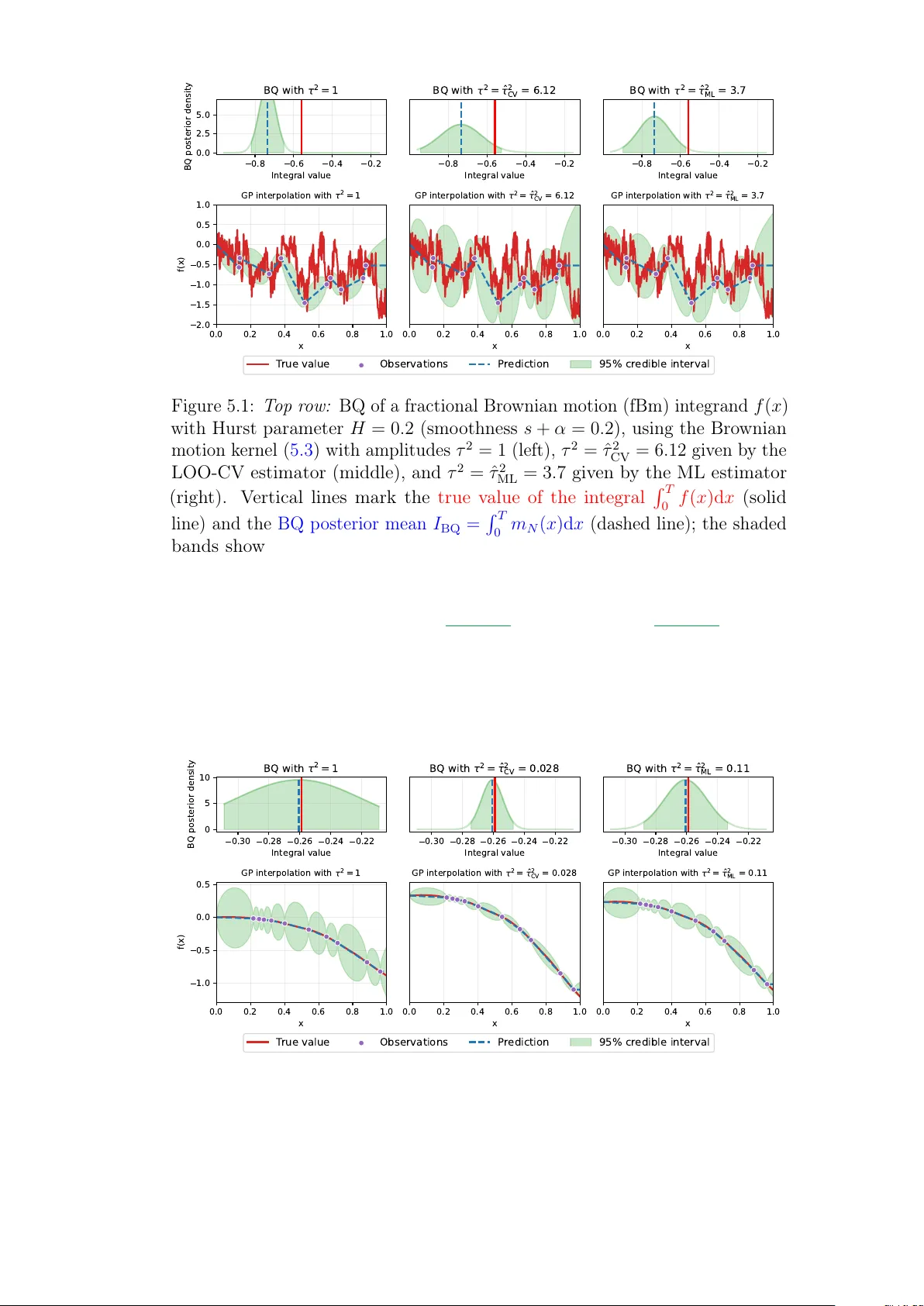

Authors: Masha Naslidnyk