Towards Object Segmentation Mask Selection Using Specular Reflections

Specular reflections pose a significant challenge for object segmentation, as their sharp intensity transitions often mislead both conventional algorithms and deep learning based methods. However, as the specular reflection must lie on the surface of…

Authors: Katja Kossira, Yunxuan Zhu, Jürgen Seiler, André Kaup

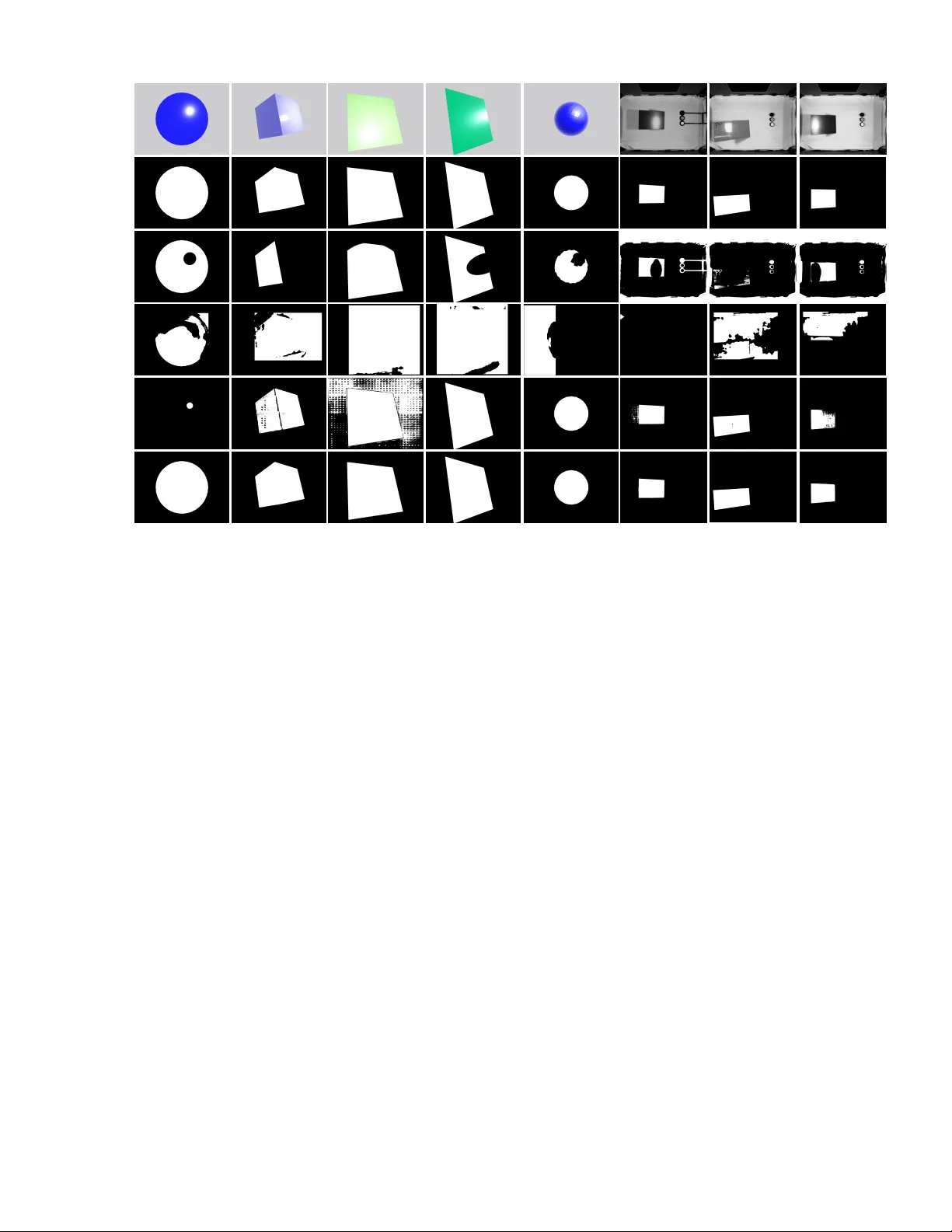

©2025 IEEE. Published in 2025 International Conference on Visual Communications and Image Processing (VCIP), scheduled for 01-04 December 2025 in Klagenfurt, Austria. Personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution to servers or lists, or to reuse any copyrighted component of this work in other works, must be obtained from the IEEE. DOI: 10.1109/VCIP67698.2025.11396903

Katja Kossira, Yunxuan Zhu, Jürgen Seiler, and André Kaup
Multimedia Communications and Signal Processing, Friedrich-Alexander University Erlangen-Nürnberg (FAU), Cauerstr. 7, 91058 Erlangen, Germany
{katja.kossira, yunxuan.zhu, juergen.seiler, andre.kaup}@fau.de

Abstract: Specular reflections pose a significant challenge for object segmentation, as their sharp intensity transitions often mislead both conventional algorithms and deep-learning-based methods. However, the specular reflection must lie on the surface of the object, and this fact can be exploited to improve the segmentation masks. By identifying the largest region containing the reflection as the object, we derive a more accurate object mask without requiring specialized training data or model adaptation. We evaluate our method on both synthetic and real-world images and compare it against established and state-of-the-art techniques including Otsu thresholding, YOLO, and SAM2. Compared to the best performing baseline SAM2, our approach achieves up to 26.7% improvement in IoU, 22.3% in DSC, and 9.7% in pixel accuracy. Qualitative evaluations on real-world images further confirm the robustness and generalizability of the proposed approach.

Index Terms: Specular Reflections, Object Detection, Object Segmentation, Masking.

The authors gratefully acknowledge that this work has been supported by the Bayerische Forschungsstiftung (BFS, Bavarian Research Foundation) under project number AZ-1547-22.

I. INTRODUCTION

Accurate object segmentation is a fundamental task in computer vision, enabling the precise identification of object boundaries within digital images. This capability is essential for a wide range of applications, including industrial automation [1], robotic manipulation [2], medical diagnostics [3], and environmental monitoring [4]. In such contexts, the ability to isolate specific objects such as plastic parts on an assembly line is a prerequisite for reliable downstream processing, whether it involves classification, measurement, or interaction [5].

Fig. 1. Original image and the object masks generated using Otsu [6], Mask R-CNN [7], YOLO [10], SAM2 [12] as well as our proposed method. The white regions represent the calculated masks. (Panels: Original, Otsu [6], Mask R-CNN [7], YOLO [10], SAM2 [12], Ours.)

Over the years, a diverse set of object segmentation methods has been developed. Classical image processing techniques remain relevant in controlled environments. For example, Otsu's thresholding method [6] automatically determines an optimal grayscale threshold to separate foreground from background. This method is fast and interpretable, but it often fails in complex scenes where lighting, texture, or occlusion interfere with signal quality. To address such challenges, the field has in recent years shifted towards deep-learning-based segmentation, which enables more robust and generalizable solutions. A widely adopted model is Mask R-CNN [7], which extends object detection models to pixel-level instance segmentation by adding a dedicated mask prediction branch.
Other architectures like DeepLabv3+ [8] incorporate dilated convolutions to capture multi-scale contextual information, while HRNet [9] maintains high-resolution representations throughout the network, producing particularly accurate and sharp segmentation masks. Among the most influential models is YOLO (You Only Look Once) [10], which was originally designed for real-time object detection. Recent iterations extend this functionality to instance segmentation, offering fast and reasonably accurate segmentation capabilities. Due to their speed and lightweight architecture, YOLO-based models are widely deployed in industrial environments where real-time processing is critical.

More recently, foundation models have emerged in computer vision. A leading example is the Segment Anything Model (SAM) [11] and its successor SAM2 [12]. These models enable zero-shot segmentation, allowing them to predict object masks in previously unseen domains without task-specific training. SAM2 improves upon SAM by offering enhanced prompt handling, refined multi-mask generation, greater generalization capabilities, and a significantly faster runtime. Crucially, SAM2 can produce multiple candidate masks for a given object, reflecting ambiguity or uncertainty in its boundary, particularly in complex scenes.

Fig. 1 shows the segmentation results for a flat, textureless object with a specular reflection using Otsu [6], Mask R-CNN [7], YOLO [10], SAM2 [12] and our proposed solution. Classical methods like Otsu misinterpret the reflections as additional objects, leading to distorted masks. Even the more modern deep learning methods like Mask R-CNN or YOLO produce blurry mask boundaries and often show inconsistencies within the predicted masks. In contrast, foundation models like SAM2 show improved segmentation by leveraging large-scale training data and richer contextual reasoning. While reflective regions are included in the object masks, shadows are occasionally partially segmented, resulting in incomplete or fragmented mask artifacts. Furthermore, with multiple masks being available, the best mask still has to be determined.

Fig. 2. Overview of the proposed pipeline for object mask selection using specular reflections. Starting from the input image $I$, specular reflection detection [13]–[15] identifies candidate regions, which are then processed by SAM2 [12] to generate multiple segmentation masks $M_1$, $M_2$, $M_3$. The mask selector evaluates each candidate using the white-pixel ratio $R_i$ and discards masks above a predefined threshold $R_{\max}$. Among the remaining candidates, the mask with the highest $R_i$ is selected as $M_{\text{selected}}$. Finally, the post-processing step refines this mask through connected component analysis (CCA) and mask inversion to obtain the final segmentation result $M$.

In robotic assembly, a misaligned grasp point caused by an inaccurate segmentation can result in mechanical errors or system shutdowns [5]. In automated recycling, over- or undersegmentation of reflective packaging can lead to misclassification and reduced material purity [16]. In industrial inspection systems, reflections misidentified as surface defects may cause false alarms and unnecessary rejection of products [17]. Therefore, this work focuses on developing and evaluating an improved strategy for automatically selecting the most accurate mask from a set of candidates, with attention to the exclusion of specular reflections in the segmentation of reflective objects. Importantly, our approach does not rely on models that have been specifically trained to handle specular reflections.
Instead, it identifies the largest connected region containing the reflection, thereby increasing the robustness of generic segmentation pipelines. By addressing this gap, we aim to improve the reliability and accuracy of vision-based automation systems in demanding environments.

II. PROPOSED METHOD

Our novel method, Reflection-aware Postprocessed Segmentation (RePoSeg), chains four stages: specular reflection detection, SAM2 mask generation, mask selection, and post-processing. The proposed pipeline is shown in Fig. 2.

Specular reflections manifest as intense highlights on surfaces, resulting from the coherent reflection of incident light. They occur predominantly on smooth surfaces like glass, plastics, or polished materials. As they hide the actual surface information of the object, the first step is to detect their location in the image $I$. For this, already established methods such as Y-channel histogram exploration [13], adaptive thresholding histogram exploration [14], or neural-network-based approaches like [15] can be employed. Fig. 3 shows some results of the specular reflection detection based on [13].

Fig. 3. Examples of the specular reflection detection based on [13]. The upper row shows the original image, while the bottom row displays the corresponding mask $\Omega$, which captures only the core specular highlights and ignores the surrounding intensity falloff.

The white area of each specular mask is denoted as $\Omega$. Its center of mass $C = [c_x, c_y]$ for positions $[x, y] \in \Omega$ is generally computed as

$$c_x = \frac{1}{|\Omega|} \sum_{(x,y) \in \Omega} x, \qquad c_y = \frac{1}{|\Omega|} \sum_{(x,y) \in \Omega} y. \tag{1}$$

After the specular reflection center of mass $C$ has been identified, its coordinates $[c_x, c_y]$ are passed into SAM2. The model is configured in multimask mode, allowing it to generate up to three potential segmentation results $M_1$, $M_2$ and $M_3$ for the object to which the reflection center $C$ most likely belongs.
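
Eq. (1) can be illustrated with a minimal sketch. It assumes the specular mask $\Omega$ is available as a 2-D boolean NumPy array; the function name `reflection_center` and the toy mask are hypothetical and not taken from the paper.

```python
import numpy as np
from typing import Tuple


def reflection_center(omega: np.ndarray) -> Tuple[float, float]:
    """Center of mass [c_x, c_y] of the white pixels of a binary specular mask (Eq. 1)."""
    ys, xs = np.nonzero(omega)  # row indices are y, column indices are x
    if xs.size == 0:
        raise ValueError("specular mask is empty: no reflection detected")
    return float(xs.mean()), float(ys.mean())


# Toy 5x5 mask with a 2x2 highlight covering x in {2, 3} and y in {1, 2}.
omega = np.zeros((5, 5), dtype=bool)
omega[1:3, 2:4] = True
c_x, c_y = reflection_center(omega)
print(c_x, c_y)  # 2.5 1.5
```

The resulting point would then serve as the single positive point prompt for the segmentation model with multi-mask output enabled. The exact predictor call depends on the SAM2 release in use and is deliberately left out here.
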
This setup enables SAM2 to reflect inherent ambiguity in boundary interpretation, especially in scenes with complex reflections. Thus, these masks show different plausible interpretations of the object surrounding the given point. For example, one mask may represent only the specular reflection, another may include the surface containing the reflection, and the third may capture a broader area, sometimes including background elements. Examples for $M_1$, $M_2$ and $M_3$ are shown in the middle of Fig. 4.

As the segmentation output is ambiguous by design, a subsequent mask selector is applied to determine the mask most likely to represent the reflective surface. This selection process is based on two complementary principles. First, the pixel-ratio-based selection prioritizes the mask with the highest proportion of white (foreground) pixels, under the assumption that the reflective surface covers a larger area than the specular highlight. This can be expressed as

$$M_{\text{pre-selected}} = \arg\max_{M_i} R_i, \tag{2}$$

where $R_i$ is the white pixel ratio of mask $M_i$. Second, to avoid selecting masks that include unrelated background regions, especially in complex scenes, a maximum area threshold $R_{\max}$ is introduced. Any mask exceeding this upper bound is excluded from the selection:

$$\mathcal{M}_{\text{valid}} = \{ M_i \mid R_i \le R_{\max} \}. \tag{3}$$

Typical values for $R_{\max}$ range between 0.3 and 0.6, depending on the scene complexity. The final selected mask is determined by

$$M_{\text{selected}} = \arg\max_{M_i \in \mathcal{M}_{\text{valid}}} R_i. \tag{4}$$

This two-stage selection ensures that the mask corresponds to the relevant surface, excluding smaller specular highlights and overly large background regions.

After selecting the most suitable mask, a post-processing step is applied to clean the binary output and remove small noise artifacts such as isolated white or black dots. These can result from lighting effects, reflections, or shadows. The goal is to produce a coherent mask that accurately captures the reflective surface.
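
The mask selection of Eqs. (3) and (4) can be sketched as follows. This is a minimal illustration, assuming each candidate is a 2-D boolean NumPy array and that $R_i$ is the fraction of white pixels in the mask; the names `white_pixel_ratio` and `select_mask`, the toy masks, and the default `r_max=0.5` (picked from the stated 0.3 to 0.6 range) are hypothetical.

```python
import numpy as np
from typing import Optional, Sequence


def white_pixel_ratio(mask: np.ndarray) -> float:
    """R_i: fraction of foreground (white) pixels in a binary mask."""
    return float(np.count_nonzero(mask)) / mask.size


def select_mask(masks: Sequence[np.ndarray], r_max: float = 0.5) -> Optional[int]:
    """Index of the valid mask with the largest R_i, or None if every mask is discarded."""
    ratios = [white_pixel_ratio(m) for m in masks]
    valid = [i for i, r in enumerate(ratios) if r <= r_max]  # Eq. (3)
    if not valid:
        return None
    return max(valid, key=lambda i: ratios[i])  # Eq. (4)


# Three toy candidates on a 10x10 image.
m1 = np.zeros((10, 10), dtype=bool)
m1[4:6, 4:6] = True                      # highlight only, R = 0.04
m2 = np.zeros((10, 10), dtype=bool)
m2[2:8, 2:8] = True                      # reflective surface, R = 0.36
m3 = np.ones((10, 10), dtype=bool)       # surface plus background, R = 1.0
print(select_mask([m1, m2, m3], r_max=0.5))  # 1
```

Returning `None` when no candidate passes the threshold is a design choice of this sketch; the paper does not state how that case is handled.
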
In the first step, foreground noise outside the main object is removed. This is achieved using connected component analysis (CCA) [18], which groups all connected foreground pixels in $M_{\text{selected}}$ into disjoint sets $A_k$, also called components. Each component $A_k$ contains a set of connected pixel positions $p = (x, y)$, and no pixel belongs to more than one component:

$$\bigcup_{k=1}^{K} A_k = \{ p \in \Omega \mid M_{\text{selected}}(p) = 1 \}, \qquad A_i \cap A_j = \emptyset \ \text{for } i \neq j. \tag{5}$$

Here, $K$ is the number of connected components, and $i, j$ are indices over these components. Then, from all detected regions, only the largest component $A_{\max}$ is retained. Thus, isolated white specks that may lie outside the main reflective surface are removed.

However, the selected mask may still contain small black areas within the main white region, which are not removed in the first step. These appear as background within the object and reduce the mask's completeness. An inversion of the mask transforms black holes within the object into small white regions on a black background. Another CCA is subsequently performed to remove these small regions. Finally, the result is inverted back to restore the correct polarity. The resulting mask represents a clean, hole-free segmentation of the reflective surface, preserving only the largest continuous region and eliminating both white speckles and internal black voids:

$$M(p) = 1 - \begin{cases} 1 & \text{if } p \in A_{\max} \\ 0 & \text{otherwise,} \end{cases} \tag{6}$$

where $A_{\max}$ now denotes the largest component found by the second CCA on the inverted mask. Examples of $M$ after post-processing are illustrated on the right of Fig. 4.

Fig. 4. Original image (left), masks $M_1$ to $M_3$ generated by SAM2 for a given input specular reflection center $[c_x, c_y]$ (middle) and the final mask $M$ after post-processing (right). The best candidate mask of $M_1$ to $M_3$ is selected automatically by the mask selector.

TABLE I. Quantitative evaluation of Otsu [6], YOLO [10], SAM2 [12] and our proposed method RePoSeg on synthetic and real-world data. All values in %. The best results are marked in bold.

| Dataset | Metric | Otsu [6] | YOLO [10] | SAM2 [12] | RePoSeg |
|---|---|---|---|---|---|
| Synthetic | IoU | 69.98 | 36.96 | 78.03 | **98.86** |
| Synthetic | DSC | 79.92 | 48.40 | 81.29 | **99.43** |
| Synthetic | Pixel Acc. | 98.77 | 67.69 | 90.87 | **99.68** |
| Real-world | IoU | 9.45 | 7.78 | 88.76 | **94.22** |
| Real-world | DSC | 16.83 | 13.30 | 93.93 | **97.02** |
| Real-world | Pixel Acc. | 68.22 | 77.46 | 99.10 | **99.52** |

III. EXPERIMENTAL RESULTS

RePoSeg is evaluated quantitatively and qualitatively on synthetic images and on real-world data captured with a camera array [19]. The synthetic images were rendered in Blender [20], allowing the generation of accurate ground truth masks for objective evaluation. The masks for the real-world images were manually annotated. Representative examples for both datasets are shown in Fig. 5.

Fig. 5. Qualitative evaluation of the segmentation masks on synthetic and real-world images using Otsu [6], YOLO [10], SAM2 [12] and our method RePoSeg. (Panels: Original, Ground truth, Otsu [6], YOLO [10], SAM2 [12], Ours.) The examples shown here are representative; the complete evaluation was conducted on a substantially larger dataset.

To quantify segmentation performance, we use three common metrics. Intersection over Union (IoU) [21] measures the overlap between prediction and ground truth, the Dice Similarity Coefficient (DSC) [22] emphasizes correctly predicted foreground pixels, and pixel accuracy reflects the proportion of correctly classified pixels in the entire image, including both foreground and background. Quantitative results for both datasets are shown in Table I.
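
To make the evaluation concrete, the sketch below applies the post-processing of Section II (CCA, inversion, CCA, inversion) to a noisy toy mask and scores it against a ground truth with the three metrics. It assumes 2-D boolean NumPy masks and 4-connectivity, which the paper does not specify; the breadth-first flood fill stands in for whichever CCA implementation was actually used, and all function names are hypothetical.

```python
import numpy as np
from collections import deque


def largest_component(mask: np.ndarray) -> np.ndarray:
    """Keep only the largest 4-connected foreground component of a binary mask."""
    mask = mask.astype(bool)
    h, w = mask.shape
    seen = np.zeros((h, w), dtype=bool)
    best = []
    for sy, sx in zip(*np.nonzero(mask)):
        if seen[sy, sx]:
            continue
        seen[sy, sx] = True
        queue, comp = deque([(sy, sx)]), []
        while queue:  # breadth-first flood fill of one component
            y, x = queue.popleft()
            comp.append((y, x))
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not seen[ny, nx]:
                    seen[ny, nx] = True
                    queue.append((ny, nx))
        if len(comp) > len(best):
            best = comp
    out = np.zeros((h, w), dtype=bool)
    if best:
        ys, xs = zip(*best)
        out[list(ys), list(xs)] = True
    return out


def postprocess(selected: np.ndarray) -> np.ndarray:
    """Drop stray white specks, then fill interior black holes."""
    main = largest_component(selected)      # first CCA: keep the main white region
    background = largest_component(~main)   # second CCA on the inverted mask
    return ~background                      # invert back, cf. Eq. (6)


def scores(pred: np.ndarray, gt: np.ndarray) -> dict:
    """IoU, DSC and pixel accuracy for two binary masks of equal shape."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    inter = np.count_nonzero(pred & gt)
    union = np.count_nonzero(pred | gt)
    total = np.count_nonzero(pred) + np.count_nonzero(gt)
    return {
        "iou": inter / union if union else 1.0,
        "dsc": 2 * inter / total if total else 1.0,
        "pixel_acc": float(np.mean(pred == gt)),
    }


gt = np.zeros((8, 8), dtype=bool)
gt[2:6, 2:6] = True
noisy = gt.copy()
noisy[3, 3] = False   # black hole inside the object
noisy[0, 7] = True    # isolated white speck outside the object
print(scores(noisy, gt))
print(scores(postprocess(noisy), gt))  # the cleaned toy mask equals gt
```

In this toy case the hole and the speck each cost the raw mask some overlap, while the cleaned mask matches the ground truth exactly. Real masks will rarely be recovered perfectly.
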
RePoSeg is evaluated alongside three established baselines: Otsu [6], the YOLO model [10], and SAM2 [12]. Our approach achieves superior performance across all metrics. Specifically, for the synthetic images we obtain an IoU of 98.86%, a DSC of 99.43%, and a pixel accuracy of 99.68%. Compared to the strongest baseline SAM2, RePoSeg improves the IoU by 26.7%, the DSC by 22.3% and pixel accuracy by 9.7% in relative terms (for example, the IoU rises from 78.03% to 98.86%). Against the classical Otsu method, improvements are 41.3% in IoU, 24.4% in DSC and 0.9% in pixel accuracy. In comparison to the masks obtained with YOLO, even larger relative gains of 176.5% in IoU, 105.4% in DSC and 47.2% in pixel accuracy are achieved. Similarly, on the real-world data, we observe consistently strong results with an IoU of 94.22%, a DSC of 97.02% and a pixel accuracy of 99.52%.

These results indicate that the proposed method remains highly effective even when applied to a segmentation model that was not specifically trained to handle specular reflections. The approach yields high robustness and precision, particularly in capturing specular regions that often challenge conventional and learning-based segmentation methods.

Fig. 5 presents qualitative examples of segmentation outputs for both synthetic and real images. For synthetic cases, the ground truth mask is shown alongside the outputs of Otsu [6], YOLO [10], SAM2 [12] and RePoSeg. The visual results confirm that our method produces cleaner, more precise boundaries and preserves object regions better than the reference approaches, even in the presence of complex highlights and specular reflections. Importantly, it performs consistently well on both grayscale and color images, showing no noticeable limitations with respect to input modality.

IV. CONCLUSION

In this paper, a novel approach for obtaining object segmentation masks in the presence of specular reflections was proposed.
RePoSeg leverages the physical constraint that specular highlights must lie on the object surface. By identifying the largest connected region containing such highlights, the object can be segmented reliably, even in cases where conventional methods or deep neural networks struggle due to the misleading visual cues introduced by reflections. Our strategy enables the use of generic segmentation networks that were not explicitly trained to handle specular reflections, improving their applicability to a wider range of real-world scenarios. The effectiveness of the proposed method is supported by strong quantitative results on synthetic data as well as consistent qualitative performance on synthetic and real-world imagery, demonstrating both robustness and generalizability independent of the input modality.

REFERENCES

[1] Zihao Xiong, Fei Zhou, Fengyi Wu, Shuai Yuan, Maixia Fu, Zhenming Peng, Jian Yang, and Yimian Dai, “Drpca-net: Make robust pca great again for infrared small target detection,” IEEE Transactions on Geoscience and Remote Sensing, pp. 1–17, 2025.
[2] Songsong Zhang, Zhenggong Han, Guirong Guo, and Zhaonan Mu, “A 6d pose optimization grasping method for robots in unstructured scenes,” in 2025 5th International Conference on Neural Networks, Information and Communication Engineering (NNICE), 2025, pp. 1282–1286.
[3] Lei Zhao, Deyu Li, Jianhui Chen, and Tao Wan, “Automated coronary tree segmentation for x-ray angiography sequences using fully-convolutional neural networks,” in 2018 IEEE Visual Communications and Image Processing (VCIP). IEEE, 2018, pp. 1–4.
[4] Olcay Guldogan, Jani Rotola-Pukkila, Uvaraj Balasundaram, Thanh-Hai Le, Kamal Mannar, Taufan Mega Chrisna, and Moncef Gabbouj, “Automated tree detection and density calculation using unmanned aerial vehicles,” in 2016 Visual Communications and Image Processing (VCIP). IEEE, 2016, pp. 1–4.
[5] Guohao Sun and Haibin Yan, “Ultra-high resolution image segmentation with efficient multi-scale collective fusion,” in 2022 IEEE International Conference on Visual Communications and Image Processing (VCIP). IEEE, 2022, pp. 1–5.
[6] Nobuyuki Otsu et al., “A threshold selection method from gray-level histograms,” Automatica, vol. 11, no. 285-296, pp. 23–27, 1975.
[7] Kaiming He, Georgia Gkioxari, Piotr Dollár, and Ross Girshick, “Mask r-cnn,” in Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2961–2969.
[8] Liang-Chieh Chen, Yukun Zhu, George Papandreou, Florian Schroff, and Hartwig Adam, “Encoder-decoder with atrous separable convolution for semantic image segmentation,” in Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 801–818.
[9] Ke Sun, Yang Zhao, Borui Jiang, Tianheng Cheng, Bin Xiao, Dong Liu, Yadong Mu, Xinggang Wang, Wenyu Liu, and Jingdong Wang, “High-resolution representations for labeling pixels and regions,” arXiv preprint arXiv:1904.04514, 2019.
[10] Glenn Jocher, Jing Qiu, and A Chaurasia, “Ultralytics yolo11,” GitHub repository, 2024.
[11] Alexander Kirillov, Eric Mintun, Nikhila Ravi, Hanzi Mao, Chloe Rolland, Laura Gustafson, Tete Xiao, Spencer Whitehead, Alexander C. Berg, Wan-Yen Lo, Piotr Dollár, and Ross Girshick, “Segment anything,” arXiv:2304.02643, 2023.
[12] Nikhila Ravi, Valentin Gabeur, Yuan-Ting Hu, Ronghang Hu, Chaitanya Ryali, Tengyu Ma, Haitham Khedr, Roman Rädle, Chloe Rolland, Laura Gustafson, Eric Mintun, Junting Pan, Kalyan Vasudev Alwala, Nicolas Carion, Chao-Yuan Wu, Ross Girshick, Piotr Dollár, and Christoph Feichtenhofer, “Sam 2: Segment anything in images and videos,” arXiv preprint arXiv:2408.00714, 2024.
[13] Thomas H Stehle, “Specular reflection removal in endoscopic images,” in Proceedings of the 10th International Student Conference on Electrical Engineering. Citeseer, 2006.
[14] Mirko Arnold, Anarta Ghosh, Stefan Ameling, and Gerard Lacey, “Automatic segmentation and inpainting of specular highlights for endoscopic imaging,” EURASIP Journal on Image and Video Processing, vol. 2010, pp. 1–12, 2010.
[15] Gang Fu, Qing Zhang, Qifeng Lin, Lei Zhu, and Chunxia Xiao, “Learning to detect specular highlights from real-world images,” in ACM Multimedia, 2020, pp. 1873–1881.
[16] Li Shen and Ernst Worrell, “Plastic recycling,” in Handbook of recycling, pp. 497–510. Elsevier, 2024.
[17] Qingdong Cai and Charith Abhayaratne, “Ssdb-net: a single-step dual branch network for weakly supervised semantic segmentation of food images,” in 2023 IEEE 25th International Workshop on Multimedia Signal Processing (MMSP). IEEE, 2023, pp. 1–6.
[18] Azriel Rosenfeld and John L Pfaltz, “Sequential operations in digital picture processing,” Journal of the ACM (JACM), vol. 13, no. 4, pp. 471–494, 1966.
[19] N. Genser, J. Seiler, and A. Kaup, “Camera array for multi-spectral imaging,” IEEE Transactions on Image Processing, vol. 29, pp. 9234–9249, 2020.
[20] Blender Online Community, Blender - a 3D modelling and rendering package, Blender Foundation, Blender Institute, Amsterdam, 2021.
[21] Paul Jaccard, “Étude comparative de la distribution florale dans une portion des alpes et des jura,” Bull Soc Vaudoise Sci Nat, vol. 37, pp. 547–579, 1901.
[22] Lee R Dice, “Measures of the amount of ecologic association between species,” Ecology, vol. 26, no. 3, pp. 297–302, 1945.
