Two-Stage Active Distribution Network Voltage Control via LLM-RL Collaboration: A Hybrid Knowledge-Data-Driven Approach

The growing integration of distributed photovoltaics (PVs) into active distribution networks (ADNs) has exacerbated operational challenges, making it imperative to coordinate diverse equipment to mitigate voltage violations and enhance power quality.…

Authors: Xu Yang, Chenhui Lin, Xiang Ma

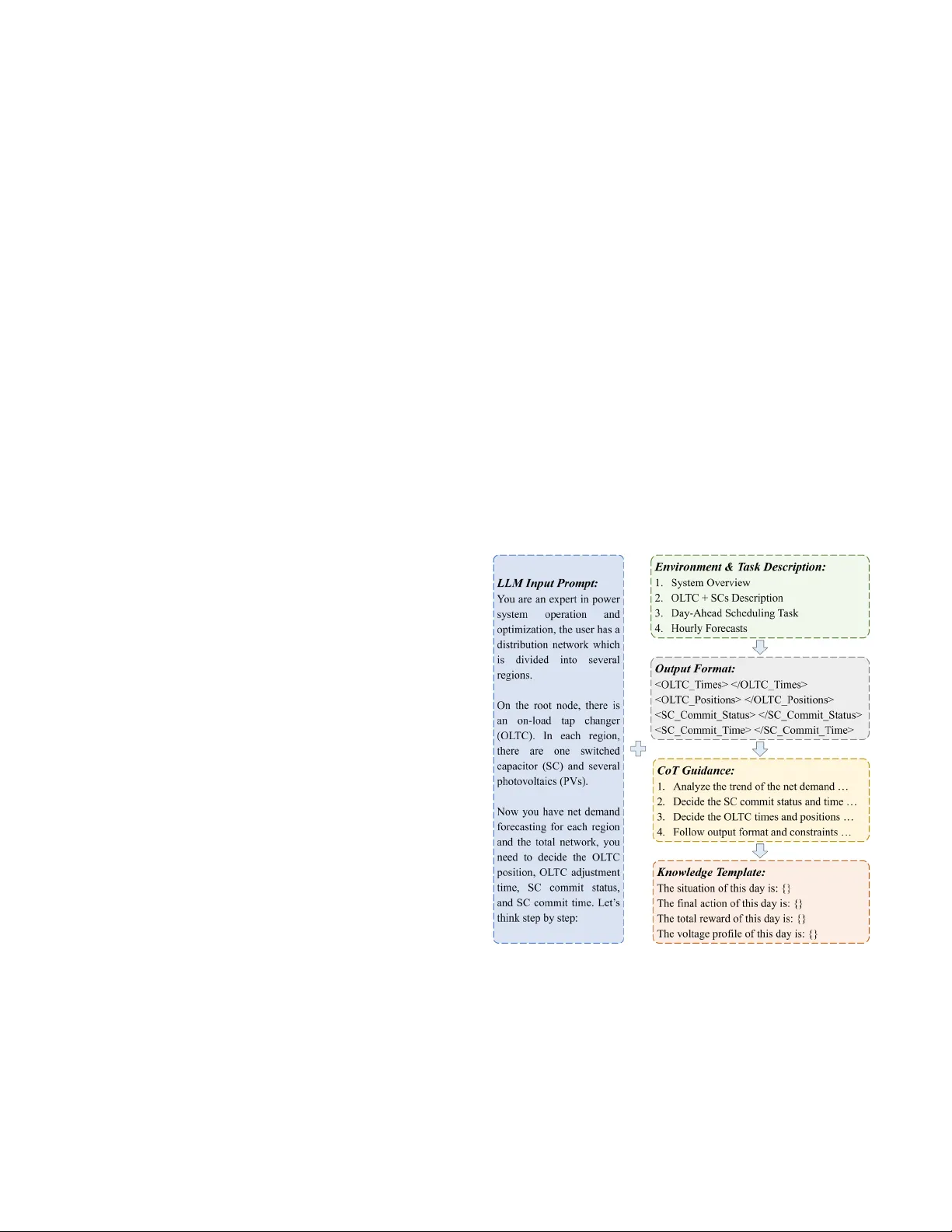

1 Tw o - Stage Activ e Distribution Network V oltage Control v ia LLM - RL C o llaboration: A Hybrid K nowledge - Data - Driven Approach Xu Yang , Graduate Student Member, I EEE , Chenhui Lin, Senio r Member, IEEE Xiang Ma , Dong Liu, R an Zheng, Haotian Liu , Member, IE EE , and Wenchuan Wu , Fell ow , IEEE Abstr act — The gr owing inte grati on of distr ibuted ph otovolta ics (PVs) into active distribu tion networks (ADNs) ha s exacerb ated opera tion al ch a llenges, making it imper ative to coordinat e di v erse equipme nt to mi tigate voltage violati ons and enhance power quality. Although existing data - drive n approache s have demo nstrated ef fectiven ess in the voltage con trol probl em, the y often req uire extens ive trial - and - error ex plorat ion and strugg le to incorp orate hetero geneo us informa tion, such as day - ah ead fore casts and se mantic - based grid codes. Consi deri ng th e opera tional scena rios an d requiremen ts in rea l - world ADNs , in this pap er, we propose a hybrid kn o wledge - data - driv en appro ach that le verages d ynamic col la boration between a large language mod el (LLM) agent and a reinfo rcement l earning (RL) agent to achiev e two - st age v oltag e control . In the da y - ahead stag e, the LLM agent receives coa rse region - level forecast s and generates schedul ing st rategies fo r on - load tap changer (OLTC) and shunt capa citors (S Cs) to reg ulate th e overall voltage p rofile. Th en in the intra - day sta ge, based on accurate node - level m easurem ents, the RL agen t refines terminal voltages b y derivi ng reactiv e power genera tion stra tegies fo r PV i nverters. On top of the LLM - RL colla boration framework , we further p ropo se a self - evoluti on mecha nism for t he LLM agent and a pretra in - fin etune pipeline for the RL a gent, effecti vely enh ancing and coordi nating the polici es for both agents . The prop osed appr oach not onl y aligns more closely with p ractical o peratio nal cha racteristi cs but a lso effectiv ely uti lizes the inheren t knowled ge and reason ing capabilities of th e LLM agent , signif icantly improvi ng traini ng efficien cy and voltag e control p erforman ce. Co mp rehensive compari sons an d ablation stu dies demo nstrat e the effectiven ess of the pro posed met hod. Index Ter ms — Active distr ibution netw ork, two - stage vo ltage contr ol, large lan guage model, rei nforcem ent learning , knowledge - d a ta - dri ven. I. I NTRODUCTION SPONDING to climate emerg encies, po llution f rom fossil fuels, and car bon neutrali ty commitme nts, renewabl e energy re sources, pa rticularly distributed photovoltaic s (PVs), are being increasingl y integrat ed into distri bution netwo rks, transfor ming them into ac tive dist ribution networ ks (ADNs) [1] , [2] . While th is int egration subs tantially reduces ca rbon emissio ns at the distributio n level , the growing pene tration of This wor k was sup ported i n part b y the Stat e Grid Zhe jiang El ect ric Po wer Compa ny Lim ited Sc ience a nd Tech nolo gy Proje ct “Res earch on K ey Techn ologi es for Coo perati ve Regu lation of Fl exible R esource s in Ac tive Distr ibutio n Netw orks B ased o n Highl y S afe and S calable Artific ial Intell igence” under Gran t 5211 JH250006 (Correspond ing a uthor: Wenchuan Wu). PV has introduced s ignificant operational challenges , notably voltage violations at the network extr emities. Also, th e inherent intermi ttency and st ochastic nat ure of PV generat ion furthe r exacerbate voltage fluctuat ions, pow er imbalance s, and ove rall power qua lity degradat ion, posi ng serious th reats to ADN stability and security [3] , [4] . In this c ontext, tra ditional pa ssive volt age control met hods are no longer s ufficient t o meet the dema nds of AD N opera tion . The ADN ope rators must adopt proact ive voltage c ontrol approac hes that jointly utilize mechanical as sets such as on - load tap c hanger (OLTC ) and shunt capacitors (SCs) , as well as flexible resources like PV inverters cap able of dynamic reactive support. Due t o tem poral coupling c onstraints and the requireme nt s for manual on - site inter vention, O LTC and SCs usuall y necessitate day - ahea d scheduling ba sed on th e forecasted information or histor ical records . In cont rast, during intra - day operatio n, the fas t reactive power regulation capabil ity of PV inverte rs can be leve raged to ena ble rapid control bas ed on real - time measurements . A variety of dat a - driven appro aches, primar ily bas ed on deep reinforcem ent learnin g (RL), have be en developed t o enable effici ent resolution of the a forementioned vol tage cont rol problem [5] - [14] . The se methods funda mentally oper ate by allowing an intelligent RL agent to ite ratively inter act with the external environment and enhanc e its poli cy based on th e received feedback. For example, researchers in [5] - [7] propos ed RL - based voltage control methods for ADNs, optim izing voltage pr ofiles by utilizing internal di stributed e nergy resources. Researchers in [8] - [11] extended t his paradigm i nto multi - agent setti ngs, where different system regions or resource clusters are assigne d to i ndividual R L agents that c o llaborate to achieve overall voltage optimization. Additionally, researchers in [12] - [14] addressed the c oordinat ion of differe nt equipment operating o n two times cales, enabling hierarchical voltage regulati on across va rious temporal horizons. Although t he above RL - bas ed data - driven methods ha ve demonstrated effective and promisi ng performance, their practical application to real - world A DN voltage cont rol, especially in the day - ah ead stage, stil l faces four ke y Xu Yang, C henhu i Lin, H aoti an Liu, and W en chu an Wu a re with t he Sich uan Ene rgy I nterne t Resear ch I nstitut e, Ts inghua U nivers ity, C hengd u 610213 , Ch ina . Xiang Ma , Dong Liu, and Ran Z heng are wit h the Sta te Grid Zhejiang Electri c Power Co. , Ltd., Ji nhua Powe r Supply Co mpany, Jinhua 321017 , China . R 2 challenges : 1) I ncom plete and heter ogeneous inf ormation. The effectiveness of data - driven methods ty pically hinges on the availability of complete and hi gh - qu ality data , which correspon ds to accurate no de - level meas urements i n the c ontext of voltage c ontrol probl em . Ho wever, limited by the r esolution of current meteorological forecasts and the accuracy of PV/load predicti ons, only coars e - grained infor mation, i.e., hourly region - level fore casts are available i n the day - ahead st age. Moreover, some unst ructured and het erogeneous informati on such as historical r ecords and vol tage reports , may also be provided in the day - ahead s tage. Proces sing and integrat ing such inco mplete a nd heterogeneous data pos e significant challenges for existing RL a lgorithm s . 2) Semantic s - based operat ional constr aints. The scheduling of ADN mechanical ass ets usually i nvolves numer ous semantic - based grid c odes , such as: “ The number of dail y adjust ment s of an OLTC is su bject to an upp er limit, ” or “ SCs can only be comm itt ed during a specific time window each day due to the re quirement fo r manual swi tching.” These grid codes are ess entially opera tional constr aints on the agent ’ s action space. However, incorporating such co nstraints into an RL policy typi cally requi res elabora te technique s, such as pen alty mechanism [14] or action ma sking [15] , whi ch reduces the training efficiency of the RL agent. 3) Instr uctions adapted to l ong - tail requirement s. S in ce mechanical assets may encounter unforeseen events such as faults or maintenance, ADN operators may occasionally i ssue operationa l instructions des cribed in natura l language. Therefo re, the age nt’s policy must be capabl e of adapt ing to such ins tructions. However, R L agents are i nherently u nable to process emergen t natural - langua ge instructio ns and exhi bit poor genera lization to unseen sc enarios, which ca n result i n performa nce degradatio n or even infeasible contro l actions. 4) Ambiguous policy im provement di rection. Finally, the RL agent rel ies solel y on a scalar r eward signal to guide its training process . However, such a scalar reward carries very limited informati on and fail s to provi de a clea r direction for policy evolution. For example , whe n the overal l voltage is low, the optimal action, such as raising the OLT C tap positi on, is intuit ively straight forward. Y et, the RL agent must under go extensive explor ation to discove r this simple r ule, resu lting in low er train ing efficien cy or worse perfo rmance . The rapid ad vancement of l arge language models (LLMs) in recent years has in itiated widespread application s across various do mains [16] - [18 ] a nd offered innovative solutions to the above c hallenges. On the one ha nd, LLMs possess i nherent natural - language process ing and strong i nformation integration abilities, enabling the m to receive, analyze, and process incomplet e and heterogene ous informati on. On the ot her hand, LLMs embed a vast amount of prior knowledge a nd exhibit powerful re asoning capabi lities, al lowing them to compr ehend semantic - based operational constraint s and instruct ions and generate valid responses accordingl y . Building on t hese strengt hs, some pionee ring studi es have begun ap plying LLM s to the power syst em dispatch domain [19] - [ 21] , while others have investigated how LLMs can as sist the training proces s of RL age nts [22] - [24] . In these studies, LLMs are generally limit ed to auxiliary subtasks s uch as code pro gramming or function s ynthesis and are primarily t reated as a nat ural - language i nterface, rat her than being us ed for polic y formulati on. Furtherm ore, researc h on how to improve LL M policies rem ains comparatively scarce . Fig. 1. Ove rall scheme of the prop osed app roach. Consi dering practical requi rements and operational scenarios in ADN vol tage control , we propos e a h ybrid k nowledge - d at a - d riven a pproach that combines respective advantages of LLM and RL. A s shown in Fig. 1, i n the day - ahead stage, the ADN operator receives hourly re gio n - level forecasts and u tilizes an LLM agent to generate s cheduling strategies for the OLTC and SCs . Then in the intra - day stage, the ADN op erator receives accurate node - level measure ments and utilizes an RL agent to generate real - time reactive power generation strategies for PV inverter s . On the one hand, this setup aligns well with t he two - stage na ture of volt age control problem: in t he day - ahead stage, coarse information is used to dispatch mechanical assets for regulatin g the over all voltage pro file, while in the intra - day stage, fin e - gr ained information enables PV inverters to r efine terminal voltages. O n the oth er hand, the pro posed appr oach synerg istically leverages the complementar y advantages of LLM and RL, which not onl y exploit the LLM ’ s i nformati on process ing capabilit ies and embedde d knowledge t o enhance policy l earning effici ency but als o RL ’ s p recise computation and rapi d response t o optimize cont rol performa nce . In essence, different fro m the existing LLM - assisted RL paradigm, the propos ed approach a ddresses a compl ex mu lti - stage con strained o ptimizatio n pro blem throu gh LLM - RL collabora tion using different aspects of i nformation. From the LLM ’ s perspective, the RL agent serves as a callable specia lized tool ca pable of performing in verter reactive c ontrol tasks , thereby exten ding the LLM ’ s capabil ity boundari es. Convers ely, from the RL ’ s perspective, the LLM agent constitutes an inte gral part o f the external e nvironment that process es heterogene ous or semant ic informati on , effectively reducing problem compl exity and shri nking the acti on space that needs to be expl ored , thus accelerating RL policy 3 convergence. Buildi ng upon the prop osed LLM - RL collabora tion framework , the key chal lenge li es in how to imp rove and coordinate the poli cies of the LLM agent a nd the RL age nt. To this e nd, we propose a R eflexion [ 25] - based self - evoluti on mechanis m for the LLM polic y, which continu ously enhance s its decision - making th rough enviro nmental fee dback and knowledge base up d ates. Meanwhile, we als o propose a pretrain - finet un e pipeline for the RL agent t o quickl y conform to the LL M polic y and ther eby strengt hen the co ordination between two agents. The mai n contributi ons of thi s paper can be summarized as follows: 1) Comprehe nsive proble m form ulation. We present a more compr ehensive formulati on of the tw o - stage volta ge control p roblem in ADNs . C ompared to exis ting studies , we explici tly account for the difference s in availabl e information between the day - ahead and intra - day s tages: in t he day - ahead stage, only hourly re gion - level forecasts are access ible, wh ereas accurate node - level measurements become available only during t he intra - day stage. This setup better reflects t h e operationa l conditions of real - world ADNs. 2) LLM - RL collab oration framework. To optimize the formulate d problem, we propose an LLM - RL c ollaborati on framework that assi gns day - ahead OLTC and SCs scheduling to the LLM agent and intra - day P V inverter reacti v e control t o the RL a gent. The proposed f ramework is ess entially a hybrid knowledge - d ata - driven a pproach that ef fectively c ombines the strengt hs of LLM and RL , not only exploi ting the LL M’s informati on process ing capabil ities and embe dded domai n knowledge, but also leve raging RL ’s precise comput ation and rapid res ponse . 3) Polic y improvem ent mechanism for LLM and RL. To enable pol icy improvement a nd coordinatio n within the LLM - RL coll aboration frame work, we also propos e dedicate d enhancement me thod s, incl uding a Refl exion - based self - evolution m echanism for t he LLM policy and a pretrain - finetune pi peline for the R L policy , enabl ing effici ent adaptat ion and joint c onvergence toward optima l performan ce. Comprehens ive comparis ons and ablation s tudies vali date the effecti veness and supe riority of t he proposed me thod. II. P RE LIM INA R IES A. Two - Stage Voltage Control Pr oblem Formulat ion In this paper, we consider a n ADN whose s et of no des is denoted by . Based on the spatial bounda ries define d by weather f orecasts or PV/load pred ictions, this AD N can be partition ed into r egi on s . The ob jective of the two - sta ge voltage c ontrol pr oblem is t o minimize vol tage deviatio ns across the system and eliminate violations as much as possi ble: min , (1) where , is the volta ge magnitud e at node , time ; is the voltage reference value; and is the leng th of th e voltage control p rocess, whic h is set to one day in thi s st udy. In order to achieve this obje ctive, the AD N operat or can dispat ch the follow ing cont rollable e qui pmen t: an OLTC installed at th e root node ; a set of SCs distributed ac ross the network, w hose set of nodes is denot ed as ; and several PV inverter s install ed at terminal nodes of the ne twork, whos e set of nodes is denoted as . We also assume that SCs are not deploye d at PV user nodes , i.e ., = . The OLTC serv es to ra ise or lower the overall system v oltage through adj ustment of i ts tap position: , = + (2) where , is the root nod e voltage at time ; is the voltage difference between two adjace nt tap positions ; and is an integer ind icating the tap position of th e OLTC at time . For example, for an OLTC wi th 11 tap posi tions, { 5, 4, … , 1,0,1, … ,4, 5 } . The SCs serve to provide reactive power compensat ion through com mitment durin g periods of pea k load, ther eby raisi ng the voltage i n correspo nding local regi on s: , = , , , (3) where , is the reactive injection at node , time ; is the SC capacity; , is the reactive load at node , time ; an d , { 0,1 } is the com mitment statu s of the SC at node , time . The PV inverters leverage the ir spare capacity to generate reactive power, thereby refining terminal voltages : , = , , , (4) , ( ) , , (5) where , is the r eactive gene ration of the PV i nverter at node , tim e ; is the PV installed capacity; , is the active generati on of the PV inve rter at node , time ; and [ 0,1 ] is the reactive capacity factor o f the inverte r, which is join tly determined by the inverter configurati on s and ancillary services provided b y the PV user . In addit ion to t he above eq uipment cons traints, t he ADN voltage c ontrol pro blem must al so satis fy power flow constrain ts (6) - (9) and voltag e limits (10): , = , , , , , \ (6) , = , , \ (7) , = , , cos , + sin , , (8) , = , , sin , cos , , (9) , , ( 10 ) where , is the active injection at nod e , time ; , is the active load at node , time ; and are the real and imagina ry parts of th e admittance element between no des and , respectiv ely; , is the voltage phase diff erence between nodes and ; and and are the low er limit and upper limit of th e voltage magn itude, respectiv ely. Eq s . (1) - (10) represen t the mathematical mo deling of the two - stage volt age control p roblem adopt ed by most ex isting studi es. However, in real - worl d ADN operati ons, particula rly in scheduling mechanical assets, a series o f grid codes must be followed, as the cont rol of th ese devices often invo lves 4 temporal c oupling constr aints and require ments for manual on - site interve ntion. In this paper, we consider two c ommon grid code requirements for OLTC and SCs, i.e., “ The numbe r of daily OLT C adjust ment s is ca pped by an uppe r limit, ” and “ SCs can only be committed during a specific time wind ow each day. ” As can be seen, these grid code requirements can be easily express ed in natural language, yet effective ly embeddin g them into a da ta - driven R L policy requires complicated design. T his is one of the main motivati ons for intr oducing LLMs, as LLMs can rea dily compre hend such o perational constraints and generate compliant scheduling strategies . The solut ion to the above c onstrai ned optimizati on problem is usually car ried out in two stages, i. e., the day - ahead stage and the intra - day st age. In the da y - ahead stage, the ADN operator obtains hourly region - level f orecasts and determines scheduling strategies fo r OLTC and SCs to proactiv ely regulate the overall voltage pro file for the fol lowing day, p reventing se vere voltage violation s. Then i n the intra - day stage, real - time accurate node - level measurements become acces sible, and the ADN operator leverages the fast response ca pability of PV inverters to further refine termin al voltag es. B. L LM as D ecision - Making Model s In recent years, the rapid development of LLMs has b ecome one of the m ost transforma tive and si gnificant develo pments in artifi cial intell igence, bringi ng profound i mpacts to nume rous specialized domains . Buil t upon the t ransforme r architecture [26] and traine d on vast amo unts of pret raining data, exist ing LLMs have acquired powerful in formation processing capabil ities and embedde d domain knowl edge , m aking it possi ble to employ them as decision - makin g models. Some advanced research in artificial intelligence has begun to apply LLMs as decision - making mo dels in autonom ous driving [27] , [28] and roboti c control [29] , [30] . These models take te x t - based or mul timodal envi ronmental des criptions as i nput and generate corresponding control strategies in an en d - to - end manner, offering a novel pathway for solving compl ex decision - mak ing problems. In power s ystems, dis patch probl ems, such a s ADN voltage contro l, are quinte ssential decision - making probl ems. However , existing app lications of LL Ms in th e power domain have lar gely focuse d on tas ks like simulation setup [31] , [32] , document analysis [ 33] , and scenario generation [ 34] . Th e few studie s related to di spatching t ypically ass ign LLMs subtas ks such as code programming an d function synthesis, which remain fundamentally natural - language p rocessing pro blems and do n ot fully exploi t the capabilities of existin g LLMs. Therefore, in this paper, we i nnovatively i ntroduce t he LLMs as decision - making model s and appl y them to the AD N voltage control problem. Thr ough the p roposed in put and out put formulation, LLM - R L collaborat ion framework, an d policy improveme nt mechanis m s , the LLM agent succe ssfully addresses the challenge of coordinating mechanical assets under gri d code constrai nts and enhance s voltage cont rol performance in ADNs. C. MDP and R L The intr a - day strate gies ar e provided by t he RL agent. In R L, the seque ntial decisio n - makin g process is usually modeled as a Markov decision process (MDP) [35] , which can b e descri b ed by a tupl e ( , , , , ) , where is the state s p ace of the environm ent; is the action space of the RL agent ; is the state transition functio n; is the rew ard function; and [ 0,1 ) is the discount factor for futur e rewards. At time , the RL agent receives the state observa tion of the current envi ronment and decid es it s control action based on its polic y : × [ 0, ) , i.e., ~ ( | ) . Then the en vironment tra nsfers to t he next state based on : × × [ 0, ) and gives the RL agent a rewar d ( , ) as feedback. Using the feedback info rmation fro m the environm ent, the ob jective of the RL ag ent is to op timize its policy so th at the expected cumulative rewards ( ) can be maximized , i.e., max ( ) = , [ ] ( 11 ) Detailed MDP se ttings of the voltage cont rol problem and RL traini ng mechanism wi ll be desc ribed in the next sec tion. III. M ETHODS A. LLM - RL Collabor ation Fra mework Fig. 2. Illustrative p rompts for LLM strategies gen eration . As show n in Fig. 1, the pro posed LLM - RL collabo ration framework employs a n LLM agent and an RL a gent to handle day - ahead and int ra - day decisi on - making, respectively, achievi ng voltage opt imizati on through t heir collab orative interactio n. In the day - ahea d stage, the input to the LLM agent consis ts of hourly region - level forecasts, specifical ly th e n et load forecasts for the regions a nd the overall ADN sy stem. These inputs are characterized by coarse gr anularity and may 5 exhibit c ertain deviation s due to the limited accuracy of current PV / load predi ctions . Based on thi s informati on, the LLM ag ent outputs s cheduling s trategies for t he OLTC and S Cs that comply w ith grid co de require ments. Then in the intra - day stage, the input to t he RL agent c onsists of accurate node - level measurements , specifically the real - time loads and PV generati on. These inputs are cha racterized by high resolut ion and relat ively high acc uracy. Ba sed on this inform ation, the RL agent outputs reactive control st rategies for the PV inverters that com ply with reactiv e capacity co nstraints. The key innovatio n of this c ollaborat ion framework lies in t he introduct ion of the LLM ag ent , which enab les the exploitatio n of its advantages in informa tion process ing and knowled ge integratio n. To adapt an e xisting general - purpose LLM i nto the required domain - specific LLM agent, that is, one cap able of accurat ely understa nding the volt age control pro blem and genera ting day - ahead OLTC and SCs schedu ling strategies that satisfy operational constrai nts, w e propose the tailored prompt s . A s illustrated in Fig. 2 , the prompts consis t of the follow ing component s: 1) Envir onment and task des cription: This compone nt provides a detailed desc ription of the e nvironment and ta sk, including t he system over view, des criptions of the OLTC and SCs, and a clear specification of t he day - ahead s cheduling ta sk, which requires the LLM agent to generate day - ahead strategies based on the hourly for ecasts for the following day. 2) Output for mat: A standardized outp u t format can effecti vely reduce t he occurre nce of halluci nations or i nternal errors. I n this comp onent, we s pecify the required out put format for the LLM agent, namely, the t iming and corresp onding t ap positi ons for OLTC a djustment s, a s well as the comm itment status es and time window s for SCs. Once the LLM agent generate s the content, we apply reg ular express ions to ext ract struct ured scheduli ng strategie s from the re sponse and validate their compliance with grid co de r equirements. If the strategies satisf y these ope rational constraints, th e policy is deem ed v alid; otherwis e, the LLM agent is prompte d to regenerate the day - ahead schedulin g strate gies in another dial ogue. 3) Chai n - of - Thou ght (CoT) guidance: CoT reasoni ng refers to decom posing a comple x problem int o a sequence of simple, executable inference steps [36] . For domain - speci fic proble ms, CoT can effec tively assist in generatin g reliable outputs. Specifi cally, in the context of volta ge control, the LLM age nt is first instr ucted to analyze the trend a nd magnitude of net lo ad forecasts; based on which it determ ines the commitm ent actions for SCs in each region a nd the tap posi tions for the OLTC ; an d finally, it or ganize s thes e decis ions into a forma l final answer which ret urns to the AD N operator. 4) Few - shot example s as knowle dge embedding : The above approac h only ensures that the generated strategies are feasible, but it does not enabl e improveme nt of the LLM policy. To realize policy impro vement, we further construct a knowle dge base that store s inform ation from several historical d ays . For a sp ecific histor ical day, we store its day - ahead forecast ing data, g enerated OLTC and S C s acti ons, and the resulting total reward and voltage profi les. Whe n making decisions , the LLM agent re trieves the histor ical day m ost similar to th e current scenari o from the kn owledge base and e mbeds it as a few - shot example i n the prom pt s for ref erence durin g reasoning. This prevents the LLM agent ’s decisi o n - making fro m being arbi trary and enabl es continuous policy opti mization t hrough iterat ive updates of the knowle dge base. Complet e prompts for the LLM agent are provided i n an onli ne suppleme ntary file [37] . An d t he details o f LLM polic y improvement , historic al knowledge retrieval, and knowledge base update will be elaborated i n the followi ng subsections . On the othe r hand, the rol e of the RL age nt is to adapt th e OLTC a nd SCs sett ing s prescribed by t he LLM agent i n the day - ahead st age and furt her optimi ze the termi nal volta ges during the i ntra - day stage by adjusting t he reactive po wer generation o f PV inverters . Although the RL pol icy can be readily impr oved us ing off - the - shelf RL algorithms, r apidly adapting i t to the LLM policy wi thin the pro posed LLM - RL collabora tion framewor k poses a s ignificant cha llenge. Because when the statuses of the OLT C and SCs cha nge, the R L agent actually faces a different environment. Therefore, the tr aining mechanism of the RL agent should be c arefully designed to enhance its learn ing capab ility and adaptatio n rate. As show n in Fig. 3, to addres s this is sue, we furthe r propose a pretrain - finetune pipeline fo r the RL agent. S pecificall y, the RL agent first undergoe s extensi ve ex ploration in a pretra ining environm ent to learn a general c ontrol policy for PV i nverters . Subseque ntly, the pret rained RL age nt is transfe rred to a finetuni ng environment, where it ada pts to the current LLM policy. N otably, in the pretraining envi ronment, t he actions of OLTC and SCs are rando mly assigned, whereas in the finetuni ng environment, these actions are provided by the we ll - improve d LLM agent . This desi gn enables the R L agent to acquire versatile control strategies across diverse s ettin gs during pre training, thereby fa cilitati ng rapid a daptation t o the LLM polic y during finet uning. Fig. 3. Pretrain - fin etune pipeline for RL agent . B. In tra - D ay P V Reactive Contr ol Policy Impr ovement Within the p retrain - finetune pipeline, i n order to leverage RL algorith ms to improve the intra - d ay PV reactive control p olicy, the intra - day volt age control pr oblem should first be formulated as an MDP. C orre sponding definitions are desi gned as fol lows: 1) State s pace: Th e state of the M DP consist s of two 6 components, i.e., the real - time node - level me asu rem en ts ( , , , , ) from the ADN monito ring system and OLTC an d SCs actio ns ( , ) previous ly decided by the LLM age nt, where , , are the vectors of the ADN ’ s active loads, reactive loads, and voltage magnitudes ; , are the vectors of the PVs ’ active and reactive generation; and is the vector of the SCs ’ commitm ent status es . Spec ifically , OLTC a nd S Cs actions ( , ) are embedded into the state space using one - hot encodin g. Incorporat ing t he LLM agent ’s actio n s into the state space enhances th e RL agent ’s environmental awareness and improves i ts adaptati on to the LLM policy. 2) Action space: The action of the MDP is constru cted with the reactiv e generation of all controllab le PV inverte rs in the ADN . Specifically, we con strain the action of the RL agent in [ , ] so that the reactive capacity constra int eq. (5 ) i s always satisfied. 3) Reward funct ion: Th e reward funct ion of the MDP aligns with the o bjective of th e voltage control pr oblem: = , | | (12) The RL algorithm utiliz ed in this pap er is p rox imal policy optimiza tion ( PPO ) proposed in [38] . I n PPO , the RL a gent ’ s policy i s approximated by a neural network with parameters . After completing one episode of iteration between t he old poli cy and the externa l environme nt, the objectiv e functi on for can be expressed as : ( ) = ( , ) ~ ( ) , ( 13 ) where ( , ) ~ is the batch of iteratio n data generated f rom the old poli cy ; ( ) = ( | ) / ( | ) is the impor tance samp ling ratio; and , is an estim ator of the advantage funct ion using generalized advant age estim ator (GAE) technique . In or der t o constra in the updat ed polic y within a tr ust regio n and ensure more stable training, a common practice in PPO is to use gra dient clipping , i.e., ( ) = ( , ) ~ min ( ) , , ( ( ) , 1 , 1 + ) , (14) where the clip functi on confines ( ) to the interval [ 1 , 1 + ] so that t he updated p olicy does not deviat e excess ively from the old policy. Then based o n the calculated ( ) , the poli cy network is o ptimized times by updating its p arameters. C. Day - Ahead OLTC and SCs Schedul ing P olicy Improvement After ens uring that the LLM agent can c omprehend th e problem an d gen erate day - ahead strategies that comply wi th operationa l constrai nts, the next c onsidera tion is how t o improve the LLM pol icy ac cordingly . I n this pa per, we pro pose a Reflexion - based self - evolution mechanism for the LLM agent. Simil ar to RL algorit hms, Refl exion enhances the agent ’ s policy th rough interaction w ith an environment and feedba ck from it. Spec ifically, Refle xion aims to impr ove the model ’ s reasoni ng and decis ion - making c apabili ties on compl ex tasks via a self - reflection proces s that encompasses execution, evaluat ion, reflect ion, memory , and iterative ref inement. For the vol tage control pr oblem discu ssed in this pape r, a knowledge base is firstly main tained for the LLM agen t, storing data fr om several his torical days. When a decisi o n i s required for a new day , the LLM agent queries the knowledge base and ret rieve s the historical scen ario most similar to cu rrent situ ation (with th e highe st similarity ) , wh ich is then embedded as a fe w- shot example into the prom pts provided to the LLM agen t. Specifically, for the new day and a historica l day , their simila rity is calculated as follows: = × (15) where captures the tem poral similarity betw een and ; and captures the magnitu de similarity between and . and are designed as follows: = , × , , × , (16) = , , , , , , (17) where , ( [ 0, 23 ]) is the hourly net loa d forecast fo r the ove rall ADN system in . After the OL TC and SCs action s are executed in the training environm ent , the LLM agen t then receives the corres ponding result s and feedback, incl uding the total reward of t he day and hourly volta ge profiles , i.e., average voltage for each region a nd the ove rall ADN system . Based on the fe edback and fol lowing the Reflexio n par adigm, we instruct th e LLM ag ent to ref ine its original actions. For example, if the overall vol tage is too l ow during a specific time period, the LLM agent will increase the OLTC t ap position for th e correspondi ng period. Afte r performi ng rounds of R eflexion, w e obta in + 1 candidate reward s for t he new day , and we denot e the highes t one as . Fig. 4. Knowl edge base updat ing proces s for LLM agent . After R eflexion conclu des, the next s tep is to update the knowledge base , where we set a n u pdating thre shold 7 . Then t he update process sho uld be handl ed in tw o cases: If < , it in dicates that the new day is a nove l situation which have not been enc ountered bef ore, then the new day , the reward , and its correspondi ng OLTC and SCs actions are directl y appended i nto . If , it indicate s that has been e ncountered be fore a nd can be parti ally repres ented by a historica l day . We then compare the total rewards and to examine their performance. If > , it means th e LLM agent has discovered a better s et of actions for this si tuation, and we replace in with . Convers ely, if , it implie s that the p reviously stored day correspon d s to a superior strategy , thus is retain ed in and is discarded . This updating proc ess is a lso illustrated in Fig. 4. Drawing an intuitive ana logy bet ween the proposed Reflexion - based self - evolution method a nd RL algorit hms, the knowledge base and the retrieved histo rical day serv es as the policy parameters , ins tructing the age nt to genera t e correspon ding actions ; t he Re flexion proces s is analogous to the optim ization pro cess of ( ) , where the agent identifies directi ons for policy i mprovement s; is like , which constra ins d ifferences between th e old policy and th e updated one ; and is like , which de notes the n umber of ti mes an epis ode of data can be reused . Through the iterative Refle xion process and updates of t he knowledge base, the LLM policy can be pr ogressivel y refined. I n addition , it s hould be noted that Reflex ion is essentially a “ tra ining ” process bas ed on iterative experi ences, and t hus it occurs only in an of fline manner. During onli ne executi on , the know ledge base i s f ro zen and the LLM direc tly retrieves the most similar h istorical scenario from the knowledge base for in feren ce, as it is infeasible and impractical f or online Ref lexion . IV. N UMERICAL S T UD IES A. Cases and Method s Set up In this section, numeric al simulations are conducted on IEEE 33 - bus [39] and 141 - bus [40] dis tribu tion sy stem s. OLTC a t root node has a total of 11 tap positions, each corresponding to a volta ge regulation step of 0.6% . Voltage limitations are set a t [0.95, 1.05] p.u. The 33 - bus system is partitione d into three regions, and the 141 - bus system is partitioned into five regions, in wh ich ea ch region is equipped with one SC and two PV inverters. We use on e year of PV and load da ta to simulate power flow in the distribution syste ms. In th e d ay - ahead s tage, the LL M agent rece ives hourly region - l eve l for ecas ts, to whic h we add 5% white noise to emu late the prediction err or s in current PV and load predictions. F or the RL agent, i ntra - day control interval is 15 minutes and the length of the control process is 96 steps. Corres pond ing configurations are listed in Table I, and m ore deta iled specif ica tions and topologies are provided in the online supplementary file [37] . As for the LLM and RL used in this paper, c onsidering the randomness in LLM responses and the PPO algorithm, we use 3 independent random seeds for each experiment . All expe rime nts are run on a computer with a 2.2GHz Int e l Core i 9 - 14900HX CPU and a 32G B RAM. Hyp erpara meters for the LLM ag ent and the RL agent ar e pre sented in Tab le II . TABLE I C ONFIGURAT IONS OF THE T EST S YSTEMS P arame ter V alue No. Re gion 3, 5 No . SC 3, 5 No. PV 6, 10 No. OLTC Position 11 OLTC A djustme n t Lim its 4 OLTC Step 0.6% SC Cap acity 0.15 MVA R, 0. 4 MV AR 0.3, 0. 15 For com parison and ablati on studies, in order to demonstrate the effectiveness of the proposed approach , in th e off line t rain ing phase, we set the proposed m ethod against three baselines: 1) Pure - RL, in w hich the day - a he ad and intra - da y pol icies are optimized by two separa te RL agen ts, is design ed to va lidate the effe ctiven ess of the LLM - RL collaboration framewo rk. To ensure compli ance with grid code requi re ments, we further incor por ate a s oft constraint and a hard constraint into the day - ahead schedulin g strateg ie s of Pure - RL , following established practices in the litera ture. The sof t con str aint refers to th e penalty mechanism : when the RL agent proposes an action that violates grid cod e limits, it receives a corresponding negative penalty; while the hard cons traint r efer s to actio n ma skin g, wh ich enfor ces th at the st ate s of the OLTC and SC s remain unchanged once their maximum allowable sw itching count s are reached. It shoul d be noted that although P ure - RL receives a penalty term as feedback during training, this pena l ty term is excluded from the reward metrics presented in the following results to ensure a fair comparison. 2) No - PT, in wh ich th e RL a gen t is not p retra ined and i s direc tly finetuned in the environment defined by the LLM policy, is designed to validate the necessity of the proposed pretrain - finetu ne pipeline . 3) No - Reflexion: in which t h e Reflexion process is omitte d, i s des igned to d em onstr ate th e e ffec tiven ess o f the proposed Reflexi on - based mechanism in complex tasks. TABLE I I H YPERPAR AMETERS OF THE LL M A GENT AND THE RL A GENT Age nt Parame ter V alue LLM Mod el Q wen - Plu s Versi on “ 2025 - 12 - 01 ” Temperatu re (Training) 0.7 Top - P (Tr aining ) 0.8 Temperatu re (Test) 0.2 Top - P ( Te st) 0.6 0.7 3 RL Mini B atch Siz e 32 Hidden Layers 2 Hid den U nits 256 0.9 Learni ng Rate (Pr etrain) 1e - 4 → 1e - 5 (Li near Decay) Learni ng Rate (Fi netune) 1e -5 0.3 4 Then, in the online execution phase, in addition to the aforementioned methods, we a l so design another three base lines : 1) Orig ina l, in which the OLT C is fix ed at th e ref eren ce vo lt age position, SC commitment is disabled, and PV inverters are 8 prohibited from participating reacti ve control, and this baseline is used to demonstrate the necessity of proactive voltage control mea sure s in AD Ns . 2) No - LLM, in whi ch the m echani cal as sets OLTC and SCs are di sabled, is des igned to val idate their cap ability of regulating the overall voltage prof ile . 3 ) No - R L, in w hich th e PV reactive generation is disabled, is designed to validate it s capabi lity o f r efinin g ter min al v oltag es . B. Training Pe rformance of P roposed and B aseline Met hods To compar e the perf ormance of P roposed, P ure - RL , No - PT , and No - R eflexion duri ng the offl ine traini ng phase, w e plot their train ing curve s in Fig s . 5 - 6. Solid lines in the figures represent the mean values across mu ltiple random seeds, while the shade d areas indicat e the corres ponding error bounds. Als o, consider ing that vol tage cont rol performa nce may vary signifi cantly over the co urse of a yea r, we apply a movi ng average wi th a window l ength of 25 e pisodes to s mooth t hese curves. Fig. 5. Traini ng performance in IEEE 33 - bus syst em. Fig. 6. Traini ng performance in IEEE 141 - bus sys tem. As can be seen , the trainin g phase begi ns w ith the LLM improveme nt; once the LLM policy st abilizes, we freeze its knowledge base and int roduce a pretrained R L agent into the environm ent for RL finetunin g . In the 33 - bus syst em, th e LLM agent un dergoes impro vement over 200 episodes, w hile the R L agent is pretrained for 1500 episodes and finet uned for 1 50 episodes . In the 141 - bus s ystem, the LLM age nt ’ s improvem ent spans 250 episodes, a nd the R L agent is pret rained for 2500 episodes and finetuned f or 200 epis o d es. In addi tion, f o r better visualizatio n and compa rison, we present the comp l ete training curves of Proposed an d Pure - R L in the figures , but only s how the firs t half of the tra ining curve for No - Reflexion an d the second hal f for No - PT. How ever, it shoul d be noted t hat all these f our methods un dergo the complete LLM i mproveme nt and RL finetuning procedures, a nd their c onverged performa nce will be co mpared in t he next subse ction. Firs t, examining the t raining cur ve of Propose d reveals t hat, during t he first half, the L LM policy progressi vely improve s and conver ges steadil y through interac tion with t he environm ent and continuo us updates of the knowledge ba se, demonstra ting the effecti veness of the proposed Ref lexion - based self - evolutio n mechanis m. In the secon d half of t he curve, after t he RL agent is introduc ed, the re ward furthe r improve s using the PV reactive generation ca pacity . Moreover, since t h e RL agent has been well - pretrain ed beforehand, it can quic kly adapt to the day - ahe ad strate gies provide d by the LLM agent , leading to stable convergence and enabli n g efficient coordinati on between day - ahe ad and intra - day ope rations. Second, we compare the performa nce of Pure - R L. It can be observed that, even without iteration and improveme nt, the initial LLM p olicy already outperforms the RL pol icy on the day - ahead voltage scheduling task. This is because the L LM posse sses ex tensi ve embedded knowledge a nd, guided by the propose d prompts, can generate compl iant a n d reasonable strategies. Fo r example , it lowe rs the OLTC tap position when PV genera tion is high and dis patches SCs during pe ak load periods. S uch intuitive operati onal knowled ge is largely absent in the RL ag ent, resulting in an in itial policy that is r elatively random and i neffective . An d even af ter interac ting with the environm ent, Pure - RL s hows no obvious performance improveme nt. On the one hand, t he RL agent m ust handle operationa l constrai nts and a large acti on space, re quiring subst antially more ex ploration t han the LLM age nt to devel op an effect ive unders tanding of t he environment . On the oth er hand, th e feedback received by the RL agent is a simple scalar reward, whereas the L LM agent can process heterogene ous informati on, such as vol tage profiles a nd system sta tes, which provides much richer con textual cues. Compared to a scalar reward, this divers e information offe rs the LLM agent clear directi ons for policy i mprovement, w hile the RL pol icy r emain s largely sto chastic. In summary , on the day - ahe ad volt age scheduli ng task, the LLM demonstrates at least thr ee advantages over RL, i.e., embedde d knowledge driven strategy gener ation, semantic comprehe nsion enhanced c onstrai nt handling, and re asoning backed pol icy improveme nt. These advantages unde rscore bot h the super iority and nec essity of a pplying LLMs t o speciali zed dispatch tasks, as well as th e effectiveness of the proposed LLM - RL collabora tion framework. Third, we observe the trainin g curve of No - PT. Since the only di fference betw een No - PT and P roposed is whether t he introduce d RL agent has underg one pretraini ng , and bo th 9 methods share the same LLM agent, their curves are identical in th e first half and di verge only in t he second ha lf. It can be found that , due to the absenc e of pretraini ng, the RL age nt in No - PT learns and converge s more slowly t han that in Pr oposed in the 33 - b us sy stem. And in the 141 - bus system, it e ven converge s to a suboptima l pol icy. This demo nstrates t he effectiveness of the proposed pretrai n- finet une pipeline. The pretrai ning process not only provides t he RL agent wit h a high - quality w arm start polic y but also enhances its ability t o adap t to divers e OLTC and S Cs settings . Therefore, p retrain ing th e RL agent is essentia l wi thin the pr oposed LLM - RL collabora tion framework. Final ly, an analys is of No - Ref lexion revea ls that, although it is also capable of self - evoluti on through intera ction with the enviro nment, its imp rovem ent rate is significa ntly lower than that of P roposed. Thi s is because No - Refl exion does not perform in - dept h reasoning an d re flection o n environment al feedback and instead re lies on trial - and - error learnin g. We still use RL algorithms by anal ogy, which in fac t indicates t hat the interactio n samp les of No - Reflexi on are not fu lly utilized, thereby reduc ing “ sample efficiency ” and degradi ng tra in in g performance. C. Executi on Performance of Proposed an d Baseline Met hods After the LLM agent and the RL agent are full y trained an d converge d , the syst em enters t he online exec ution phas e. During thi s phase, the LLM agent ’ s knowledge bas e and the RL agent ’ s policy para meters are no longer updated , and Reflex ion is disabled . I nstead, day - ahead a nd intra - day strategies are generated directly. In real - world ADNs, the latest kn owledge base and p olicy parameters are store d and maintaine d by the ADN opera tor. In the day - ahead stage, the LLM agent determine s the sche duling of OLTC and SCs based on foreca st inform ation, who se strategies are genera ted within m inutes. Then duri ng the i ntra - day ope ration, the RL agent determines the reactive power genera tion based on r eal - time measurements, with strateg y generatio n occurring in milliseconds, whic h is totally sufficient to meet the requirements of real - time control. To evaluate the execution performance of the proposed an d baseli ne methods, w e select 25 additional episodes f or each rando m seed to test their converg ed policies, r esulting in a total of 75 tes t episodes pe r metho d. We present the tested average nodal volta ge deviation s and the nodal voltage violatio n rate s in Tables I II and IV , in which the best performance is marked in bold. TABLE I II E XECUTION P ER FORMANCE OF IEEE 33 - BUS S YSTEM Method Volt age Dev iation (p.u.) Violat ion Rate (%) Mea n Std. Mea n Std. Origi nal 2.50 e - 02 4.95 e - 03 10.7 6.06 No - LLM 1.14 e - 02 2.48 e - 03 3.65 e - 02 1.62 e - 01 No - RL 1.69 e - 02 3.18 e - 03 1.21 2.79 Prop osed 8.22 e - 03 2.08 e - 03 2.60 e - 03 1.38 e - 02 Pure - RL 2.06 e - 02 7.09 e - 03 3.24 5.31 No - PT 1.02 e - 02 2.28 e - 03 1.26 1.53 No - Refle xion 8.60 e - 03 1.97 e - 03 6.08 e - 03 2.42 e - 02 * Mean and S td. denote mea n values an d standard deviati ons across 75 episodes. TABLE I V E XECUTION P ER FORMANCE OF IEEE 141 - BUS S YST EM Method Volt age Dev iation (p.u.) Violat ion Rate (%) Mea n Std. Mea n Std. Origi nal 2.16 e - 02 3.35 e - 03 3.74 4.82 No - LLM 1.21 e - 02 3.23 e - 03 1.46 2.87 No - RL 1.21 e - 02 3.26 e - 03 1.35 e - 01 3.64 e - 01 Prop osed 8.37 e - 03 2.21 e - 03 1.58 e - 02 8.48 e - 02 Pure - RL 2.30 e - 02 6.30 e - 03 2.89 3.46 No - PT 1.11 e - 02 2.89 e - 03 7.67 e - 01 1.59 No - Refle xion 1.00 e - 02 2.33 e - 03 4.70 e - 02 3.20 e -01 * Mean and S td. denote mea n values an d standard deviati ons across 75 episodes. As show n in Table s III a nd IV, Original e xhibits the wor st performa nce on the vol tage control p roblem, res ulting in la rge voltage de viations a nd frequent voltage viol ations, w hich demonstra te the necess ity of proact ive control in A DNs. Although No - LLM and No - RL show si gnificant imp rovements over Or iginal, th eir perfor mance still fall s shor t of that o f Proposed. This not only conf irms the effect iveness of the propose d method but also unders cores the i mportance of coordinating mechanical a ssets with flex ible resou rces. Especi ally in the conte xt of widespr ead inte gration of distri buted energy res ources into A DNs, coordinat ed control of multipl e equipment has becom e a critic al approac h for address ing voltage cha llenges . The test resu lts of Pure - RL show that, even at the final stage of train ing, it still fails to disc over a high - quality cont rol poli cy and perfor ms worse than Ori ginal in ce rtain s cenarios. T his again val idates the effective ness of inc orporating the LLM agen t, which can significantly imp rove both optim ization effici ency and solutio n quality on s pecific proble ms. As for No - PT and N o - Ref lexion, their perf ormance is consisten t with their traini ng behavior, i .e., the a bsence of pretraining and Refle xion pro cess l eads to performance degradation compared to Proposed. These com parisons also demonstra te the effecti veness of the proposed pre train - finetune pipeline and the Reflexion - based self - evolut ion mechanism. V. C ONCLUSION To address voltage chal lenges in A DNs caused by hi gh PV penetrat ion and the i nability o f existing da ta - driven met hods to effectively handle heterogeneous in fo rmation, semantic constra ints, and dynami c instruc tions, we propose a hybrid LLM - RL collabo ration framework for two - stage voltage control: the LLM agent s chedules O LTCs and SCs us ing day - ahead forecasts and histori cal recor ds , wh ile the RL agent adjusts PV inverter reactive power based on real - time measurem ents. Buildi ng upon this frame work, we further introduce a Reflexion - based self - evolution m echanism ta ilored for the LLM agent and a pr etr ain - finetune pipeline de signed f or the RL a gent, enabling effe ctive polic y enhancement a nd effici ent coordinati on between the se two agents. Experimental result s show that our a pproach si gnificantly outperforms baseli ne methods i n both volt age regulat ion perf ormance a nd training efficiency, demonstrating the effectiveness an d necessity of synergi zing knowle dge - driven reasoni ng with data - driven contr ol. 10 Drawing fr om our rese arch and operatio n al experience, we found tha t LLMs excel at knowledge - base d reasonin g and generation but are less capable in precise numerical computati on. This is also one of the key motivati ons for proposi ng the LLM - RL collaborat ion framew ork. In fut ure work, how to fully l everage t he strengths of L LMs and achie v e deeper int egration wi th various domai n - specific models represe nts an importa nt and promis ing research direction. R EFERENCES [1] X. Su, L. Fang, J. Yang , F. Shahnia, Y. Fu and Z. Y. Dong, “ Spat ial - Temp oral C oordina ted V olt/VA R Contr ol for Active Distr ibutio n Sys tems, ” IEEE Trans . Power Systems , vol. 39 , n o. 6, pp. 70 77 - 7088, Nov. 2 024 . [2] S. S . Al Kaab i , H. H . Zeinel d in an d V. Khadki kar, “ Planning Ac tive Distr ibutio n Netw orks C onsider ing M ulti - DG Config urations , ” IEEE Trans . Power System s , vol. 2 9, no. 2, pp . 785 - 793, Ma r . 2014 . [3] X. X u, Y. Li, Z. Yan , H. Ma a nd M. S hahidehpour , “ Hierarc hical Centr al - Local I nverter - Base d Voltag e Control i n Distrib ution Ne tworks Consider ing Stoch astic PV Pow er Admissibl e Range, ” IEEE T rans . Smar t Grid , vol. 14 , n o. 3, pp. 18 68 - 1879, May 2 023 . [4] R. Ya n, Q. Xin g and Y. X u, “ Multi - Age nt Safe Graph Reinfor cement Lear ning for PV Inv erters - Ba sed Real - Time De centralize d Volt/Var Contr ol in Zon ed Distri bution Ne tworks, ” I EEE Trans . Smart Gr id , vo l. 15 , no. 1, p p. 299 - 31 1, Jan. 2024 . [5] L. Ge, J. Li , L. Hou and J. Lai , “ Autonomou s Voltage Regula tion for Sma rt Distri bution N etwork With Hi gh - Prop ortion P Vs: A G raph M eta - Reinf o rcem ent Learning A p proa ch, ” IEEE T rans . S ustain able E nergy , vol. 16, n o. 4, pp. 27 68 - 2781, Oct. 2025 . [6] H. L iu and W. Wu, “ Two - Stage Dee p Reinforcem ent Learni ng for Invert er - Base d Volt - V AR Contro l in Active D istributi on Networ ks, ” IEEE T rans . Smart Gr id , vol . 12, no. 3 , pp. 2037 - 2047 , May 2021 . [7] R. Wa ng, X. Bi and S. Bu, “ Real - Time Coordi nation of Dynam ic Netw ork Reco nfigura tion a nd Volt - V AR Contr ol in Acti ve Distr ibution Ne twork: A Graph - Awa re Dee p Reinfor ceme nt Lear ning Appr oach, ” IEEE Tra n s . Smart Gr id , vol . 15, no. 3 , pp. 3288 - 3302 , May 2024 . [8] X. Y ang, H. Liu and W. Wu, “ At tent ion - Enha nced M ulti - Agent Reinf orcem ent Lear ning A gainst O bser vation P erturba tions f or Distr ibute d Volt - VAR Control , ” IEEE Trans . Sm art Gr id , vo l. 15, no. 6, pp. 5761 - 5772, Nov. 2 024 . [9] H. Liu and W. Wu, “ On line Multi - Agent Reinfor ceme nt Lear ning f or Decentra lized Inv erter - Based Volt - VAR Control, ” IEEE Tr ans . Smart Grid , vol. 12 , n o. 4, pp. 29 80 - 299 0, Jul . 2021 . [10] X. Zh eng, S. Y u, H. Cao , T. Shi , S. Xue an d T. Ding, “ Sensit ivity - Based Heter o geneo us Ordered M ulti - Age nt Reinf orceme nt Lear ning for Distri buted V olt - Var C ontrol in A ctive Dis tributi on Netw ork, ” IEEE Trans . Smart Gr id , vol . 16, no. 3 , pp. 2115 - 2126 , May 2025 . [11] C. Mu, Z. Liu, J. Y an, H. Ji a and X. Z hang, “ Graph Mu lti - Agent Reinf o rcem ent Learn i ng f o r I nverter - Based Active Vol tage Contr ol, ” I EEE Trans . Sma rt Grid , vol . 15, no. 2 , pp . 1399 - 1409 , Mar . 2024 . [12] H. Liu, W. W u and Y. Wan g, “ Bi - Level Off - Polic y Rei nforcem ent Lear ning for Two - Tim escale V olt/VA R Control i n Active D istribut ion Netwo rks, ” IEEE Trans . Power Syste ms , vol. 38, no. 1, pp. 3 8 5 - 395, Jan . 2023 . [13] T. Zha n g, L . Yu, D. Y ue, C. Do u, X. Xie a nd G. P . Hancke, “ Two - Time scale Coord inated Volta ge Regul ation for High Renewable - Pene trated A ctiv e Distr ibutio n Netw orks C onsider ing Hy brid De vices , ” IEEE Trans . Indu strial Informat ics , v ol. 20 , no. 3, pp . 34 56 - 3467, Mar . 2024 . [14] D. Cao et al. , “ Deep Rei nfo rce ment Lea rni ng Enab led P hysi cal - Mod el - Free Tw o - Timesc ale Volt age Contr ol Metho d for Ac tive Distr ibution Systems, ” IEEE Tra n s . Smart G rid , vol . 13, no. 1, pp. 149 - 165, Jan. 2 022 . [15] J. - Y. Oh, S. Oh, G. - S. Lee , Y. T . Yoo n and S . Jo, “ Seque ntial Co ntrol of Indiv idual S witches for Re al - Time D istri bution N etwor k Reconf igurat ion Usin g Deep Reinf orceme nt Lear ning, ” IEEE T ran s . Sm art Grid , vol. 16 , no. 5 , pp. 3666 - 3683, Sep. 202 5 . [16] Y. Nie, Y. Kong, X. Dong et a l. , “A S urvey of Larg e Lang uage Models for Financia l Applica tions: Progr ess, Prosp ects and C h allen ges,” ar Xiv : 2406.1 1903 , Jun. 2 024. [17] J. Qi u, K. L am, G. Li et al. , “LL M - based ag entic sys tems in medici ne and health care,” Natu re Machi ne Int elligen ce , vol . 6, no . 12, pp . 1418 - 1420, 2024. [18] S. Yue , W. Ch en, S. W ang et al . , “DISC - LawLLM: Fine - tuning L arge Langua ge Models f o r I nt ellige nt Legal Ser vices,” arX iv: 230 9.11325 , Sep. 2023. [19] X. Yang et a l. , “ L arge Lan guage Mod el Powere d Automa ted Mod eling an d Opti mizati on of Ac tive D istr ibuti on Networ k Dispa tch Pro blems , ” IE EE Trans . Sma rt Grid , ea rly ac cess . [20] A. Jena, F . Ding, J. W ang, Y. Ya o and L. Xie , “ LLM - Ba sed Ad aptiv e Dist ribution Voltage Regulati on Under Frequen t Topol ogy Chang es: An In - Context MPC Fra mewor k, ” IEEE Trans . Smart Gri d , vo l. 16, no. 5, pp. 4297 - 43 00, Sep . 2025 . [21] F. Bernier , J. Cao, M. Cordy and S. Gham izi, “ PowerGrap h - LLM: Novel Power Grid Graph Embe dding and Optimiza tion With L arge Langua ge Models , ” IEEE Trans . P owe r Systems , vol. 40, no . 6, pp. 548 3 - 5486, Nov. 2025 . [22] X. Yang, C. Lin, H . Liu and W. Wu, “ RL 2: Rein for ce Lar ge Lang uage Mode l to As sist Saf e Rei nforceme nt Lea rning for Ener gy Ma nageme nt of Active Distrib ution Ne tworks, ” IEEE Tr a ns . S ma rt G rid , vol. 16, no. 4, pp. 3419 - 34 31, Jul . 202 5 . [23] Z. Y an and Y. X u, “ Rea l - Time Optimal P ower F low Wit h Lingu istic Sti pulations : Inte grating GPT - Agent and Deep Rei n for cemen t Learni ng, ” IEEE Trans . Power Sys tems , vol. 39, n o. 2, pp. 47 47 - 4750, Mar . 202 4 . [24] Y. Cao e t al. , “ S urve y on Large La nguage Model - E n hance d Reinforcem ent Lear ning: Co ncept, Tax onomy, and Me thods, ” IEEE Tra n s . Neu ral Netwo rks and Lear ning Syste ms , vo l. 36, no. 6 , pp. 9737 - 975 7, Jun . 2025 . [25] N. Shin n, F. Cassano, A . Gopina th, K. Narasim han, and S. Y ao, “Re flexion: Lang uage a gents w ith v erbal r einfor ceme nt lear ning, ” A dvances in Neur al Infor mation Pro cessin g Systems , vol. 3 6, 2024 . [26] A. Vasw ani, N. Shaze er, N. Parm ar et al. , “Atte ntion is all you ne ed,” Adva nces in neural inform ation proces sing sy stems , 2017, no. 30. [27] Z. Xu et al. , “ DriveG PT4: I n terpret able End - to - End Autono mous Drivi ng Via Lar ge Langua ge Model, ” IE EE Robot ics an d Autom ation L etters , v ol. 9, no . 10, pp. 81 86 - 8193, Oct. 2024 . [28] L. Wen, D . Fu, X. Li e t al. , “ Di Lu: A Kno wledge - Drive n Approac h to Autono mous Dri ving wit h Large Language Models , ” a rXi v: 2309. 16292 , Feb. 202 4. [29] A. Broha n, Y. Chebo t ar, C. F inn et al. , “ Do as I ca n, not as I say: Groundi ng lang uage i n robot ic af forda nces,” in Co nferenc e on r obot lear ning , 2023, pp. 2 87 - 318. [30] W. Huan g, F. Xia, T . Xiao et al. , “Inner Monol ogue: E mbodied Re asoning throu gh Pla nning with Langua ge Mode ls,” in Confe rence on Robot Lear ning , 2023, pp. 17 69 - 1782. [31] M. Jia, Z . Cui and G. Hug, “ E nhancin g LLMs for Pow er Syst em Simu lati o ns: A Feedba ck - Driv en Mult i - Agent Framew ork, ” IEEE Tra ns . Smart Gr id , vol . 16, no. 6 , pp. 5556 - 5572 , Nov. 2025 . [32] R. S. B onadia, F. C . L. Trin dade, W. Freitas an d B. V enkatesh, “ On th e Poten tial of ChatGPT to Ge nerate Distribu tion Sys tems f or Load Flow Studi es Usi ng OpenD SS, ” IEEE Trans . Power Sys tems , vol. 38, no. 6, pp. 5965 - 59 68, Nov. 202 3 . [33] S. Maj u mder, L . Dong, F. D oudi et al. , “Expl oring t he capa biliti es and limita tions of large languag e mode ls in t he electr ic ener gy sec tor,” Joule , vol. 8, no . 6, p p. 1544 - 1549, Jun. 2 024. [34] K. Deng , Y. Zh ou, H. Ze ng, Z. Wang and Q. G uo, “ Pow er Grid Model Gene ration Based on the Too l - Augmented Large L anguage Mo del, ” IEEE Trans . Power System s , v ol. 40 , no. 6, pp. 5487 - 54 90, Nov. 202 5 . [35] R. S. Sut ton and A. G. Barto, Rei nforc ement L earnin g: An I ntrodu ction , vol. 1. Cam bridge, MA, USA : MIT Press, 1998. [36] J. W ei, X. Wan g, D. Schuur mans et al., “Chai n - of - Thought Prompt ing Elicits Reas o ning i n Large Language M odels,” Adv ances in ne ural inform atio n proc essin g sys tems , vol. 35, p p. 2482 4 - 24837, 2022. [37] X. Yang et a l. , “ Su pplementar y files for Two - Sta ge Activ e Distr ibution Netw ork Vol tage Control via LL M - RL C ollabora tion: A Hy brid Know ledge - Data - Driven App roach ,” Feb. 2026, [Onl ine]. Avail able: https ://git hub.c om/YangX uStev e/ Knowled ge - Da ta - Driv en . [38] J. Schu lman, F. W o lski , P. Dhariwal , A. Radfor d and O. Klim ov, “Proximal pol icy opti mizat ion alg orith ms,” a rXiv:17 07.06347 , Aug. 2017 . [39] M. E. Baran and F. F. Wu, “Network r econ figuration in distribut ion syst ems for loss reduct ion a nd loa d bala ncing,” IEEE Trans . Powe r Delivery , vol. 4, no. 2, pp . 1401 – 1407, Ap r. 1989. [40] H. M. K h odr, F . G. Olsina, P . M. De O liveira - De Jes us, and J. M . Yusta, “ Max imum sa vings a pproa ch for locati on and sizin g of ca pacitor s in distr ibution sys tems,” Electric Power S ystems Researc h , vol. 78, n o. 7, pp. 1192 - 12 03, Jul. 200 8.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment