From Specialist to Large Models: A Paradigm Evolution Towards Semantic-Aware MIMO


Authors: Keke Ying, Zhen Gao, Tingting Yang

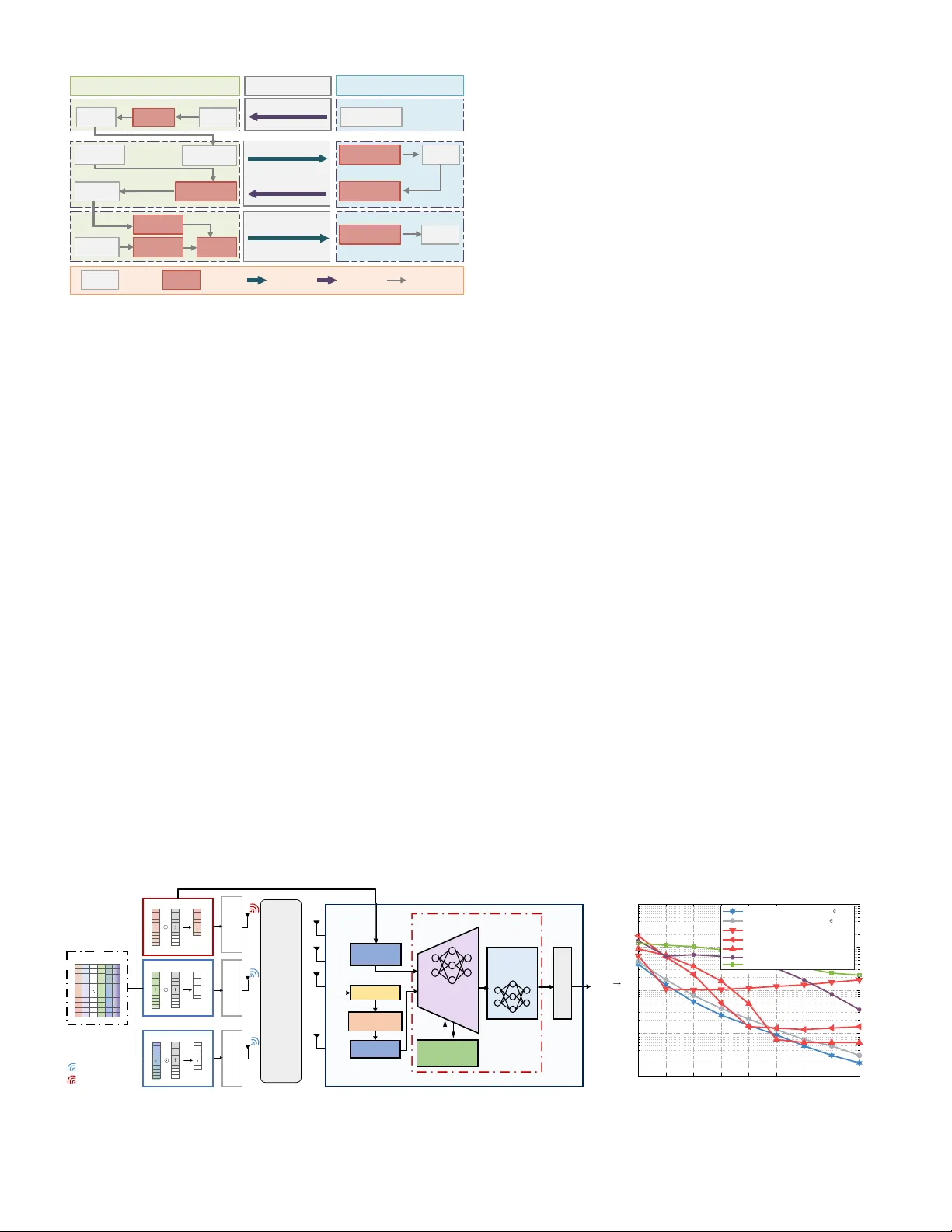

Keke Ying, Zhen Gao, Tingting Yang, Jianhua Zhang, Xiang Cheng, Tony Q.S. Quek, and H. Vincent Poor

Abstract: The sixth generation (6G) network is expected to deploy larger multiple-input multiple-output (MIMO) arrays to support massive connectivity, which will increase overhead and latency at the physical layer. Meanwhile, emerging 6G demands such as immersive communications and environmental sensing pose challenges to traditional signal processing. To address these issues, we propose the “semantic-aware MIMO” paradigm, which leverages specialist models and large models to perceive, utilize, and fuse the inherent semantics of channels and sources for improved performance. Moreover, for representative MIMO physical-layer tasks, e.g., random access activity detection, channel feedback, and precoding, we design specialist models that exploit channel and source semantics for better performance. Additionally, in view of the more diversified functions of 6G MIMO, we further explore large models as a scalable solution for multi-task semantic-aware MIMO and review recent advances along with their advantages and limitations. Finally, we discuss the challenges, insights, and prospects of the evolution of semantic-aware MIMO paradigms empowered by specialist models and large models.

I. INTRODUCTION

Massive multiple-input multiple-output (MIMO) is expected to be a pivotal technology for sixth generation (6G) wireless systems. On the base station (BS) side, supporting immersive communication scenarios such as mixed reality requires larger antenna arrays to achieve improved spatial diversity and multiplexing capabilities. On the user equipment (UE) side, the dramatic growth of device density in the Internet of Things calls for more advanced multi-user access schemes to handle massive connectivity.
Besides, although MIMO channels inherently contain rich information about the wireless propagation environment [1], efficiently exploiting this structure for communication or sensing remains challenging. Standard MIMO theory is strained by the vast MIMO dimensions and the diversity of signal modalities. For instance, conventional methods for MIMO channel state information (CSI) acquisition rely on signal detection and estimation theory, aiming to acquire complete CSI or parameterized information such as multipath delays, angles, and Doppler shifts. As the number of BS antennas or the complexity of the transmission environment increases, these methods demand excessive pilot overhead. Regarding source transmission, traditional schemes design source and channel codecs that approach channel capacity within the syntactic communication framework of information theory [1]. For data-intensive sources such as images and videos, this requires significant transmission overhead, and a cliff effect occurs: performance degrades severely once the channel quality falls below a certain threshold.

Keke Ying and Zhen Gao (corresponding author) are with Beijing Institute of Technology, China; Tingting Yang is with Peng Cheng Laboratory, China; Jianhua Zhang is with Beijing University of Posts and Telecommunications, China; Xiang Cheng is with Peking University, China; Tony Q. S. Quek is with Singapore University of Technology and Design, Singapore; H. Vincent Poor is with Princeton University, USA.

Recently, semantic communication has gained attention [1] because it focuses on efficiently and accurately transmitting the meaning of source information, unlike traditional syntactic communication, which aims to accurately recover the transmitted symbols. By exploiting intrinsic meanings or task-related semantic information, transmission resource requirements can be greatly reduced.
This motivates the design of MIMO systems that are aware of source and channel semantic features when solving physical-layer tasks, potentially reducing transmission overhead or improving performance metrics under adverse transmission conditions. Achieving this involves leveraging the semantic encoding capabilities of deep neural networks (DNNs), which can be learned from large amounts of data. In MIMO systems, channel and source semantics can be transformed into feature representations using DNNs. For example, the Transformer framework [2] converts source and channel modalities into feature sequences, from which it can extract task-specific semantics for various tasks. More recently, inspired by the success of large language models (LLMs) in natural language processing, researchers have been exploring how to integrate specialized models into a universal communication large model (LM) framework. This approach seeks to align diverse physical-layer transmission tasks in a unified token space, allowing a single LM to manage multiple tasks efficiently. For example, the mixture of experts (MoE) method offers an efficient solution by activating only a subset of expert subnetworks within the LM during inference. This enables scalable and adaptive task management without significantly increasing computational cost, showing potential for handling various tasks.

In the remaining sections of this article, we first discuss several semantic-aware MIMO communication cases based on specialist models, including user activity detection (UAD), CSI feedback, and multi-user precoding, to reveal how they use deep learning to perceive, utilize, and fuse semantic information in MIMO systems. Next, we investigate and compare existing research on MIMO physical-layer tasks based on general LMs. Following that, we discuss challenges and future directions. The final section summarizes the article.

II. SPECIALIST MODELS FOR SEMANTIC-AWARE MIMO

In this section, we examine semantic-aware specialist models for physical-layer MIMO tasks, including uplink random access, CSI feedback, and precoding. These tasks highlight uplink UE-side semantics, propagation-environment semantics, and downlink BS-side semantics, providing a comprehensive view of the wireless communication pipeline.

Fig. 1. System architecture of a semantic communication framework incorporating key functionalities including activity detection, CSI feedback, and downlink precoding. The communication process involves three semantic stages: (i) semantic perception, which extracts local features such as user activity and channel characteristics during activity detection and semantic CSI compression; (ii) semantic utilization, where side information is leveraged to enhance CSI reconstruction quality; and (iii) semantic fusion, involving the fusion of semantic data and downlink CSI for precoding design. (Diagram stages between the UE side and the BS side: ① random access, ② pilot transmission, ③ CSI feedback, ④ data transmission.)

As illustrated in Fig. 1, the process starts when users request an initial connection with the BS via random access. The BS must then identify the active users from the superimposed preamble sequences. Subsequently, before downlink transmission, high-dimensional CSI must be obtained through the feedback link for precoding, especially in systems without channel reciprocity.
Finally, the BS performs precoding for high-quality multi-user transmission. Focusing on these tasks, we review existing research and present semantic-aware designs that enhance performance. We select the Transformer architecture as the backbone of our semantic-aware MIMO framework. Its self-attention mechanism effectively captures global dependencies, while cross-attention flexibly models correlations across heterogeneous domains or data sources. Additionally, the Transformer architecture provides efficient parallel computation, scalability in model size, and seamless integration with the large-model paradigm.

A. UAD for Random Access

1) Related Work: With the growing density of cellular network devices, identifying the active user set (AUS) among a massive number of UEs is a crucial first step in establishing efficient communication links. Grant-free non-orthogonal access effectively reduces the delay of massive random access. In this scheme, shown in the upper part of Fig. 1, each user is assigned a specific non-orthogonal preamble, and the BS must identify the AUS from the superimposed preambles received from all potential users. This extraction of activity information can be viewed as a form of semantic perception. Existing methods such as compressed sensing (CS) [3] and covariance-based maximum likelihood detection [4] address this problem by exploiting sparsity or building likelihood models. CS focuses on reconstructing the sparse channel matrix rather than directly detecting active users, which increases preamble overhead. The covariance-based approach improves detection performance at the cost of higher complexity. [4] further introduces a heterogeneous Transformer to learn the correlations between the received signal covariance and the preambles, boosting performance while lowering complexity. Yet, data-driven methods struggle to generalize across different preamble lengths within a single model, which prevents dynamic adaptation to traffic and wastes resources.
This calls for adaptable detection networks that can efficiently capture user activity semantics across variable preamble lengths.

2) Semantic-Aware UAD with Variable Preamble Length: We propose a semantic-aware UAD network with variable preamble length (SA-UADNet-VPL) that directly perceives user activity semantics instead of reconstructing the sparse channel matrix, enabling a single model to handle different preamble lengths. As shown in Fig. 2(a), in an uplink access system, each of the $K$ potential users is pre-assigned a unique preamble sequence $\mathbf{p}_k$ of length $L_{\max}$. When some users become active, they transmit their respective preamble sequences, and the BS receives the superimposed preambles and determines the AUS. Extending [4] to variable preamble lengths, we leverage a Transformer encoder to extract correlations. Specifically, we project the user preambles $\{\mathbf{p}_k\}_{k=1}^{K}$ and the autocorrelation of the zero-padded received signal $\mathbf{Y}_p$ into features of the same dimension using two linear layers. Given their distinct semantics, we use a heterogeneous Transformer with separate weights for $\mathbf{Y}_p$ and $\{\mathbf{p}_k\}_{k=1}^{K}$ in the multi-head attention and feed-forward layers. In the final output layer, the activity decoder computes the correlation between the feature sequences corresponding to $\mathbf{Y}_p$ and to the multi-user preambles $\{\mathbf{p}_k\}_{k=1}^{K}$ to estimate the user activity $\boldsymbol{\lambda}$. The proposed scheme uses the categorical cross-entropy of user activity as its objective function, allowing the receiver to capture activity semantics directly from the received preamble. To improve scalability, we introduce a preamble-length adaptive mechanism.
The transmitter sets a maximum preamble length $L_{\max}$; when the actual length $L < L_{\max}$, the extra part is masked and not transmitted. At the BS, the received preamble is zero-padded to $L_{\max}$. Because the zero segments make feature importance unequal, we propose a preamble length adaptive module (PLAM). Inspired by [5], PLAM concatenates the preamble length $L$ with the average-pooled input features and then uses a two-layer fully connected network to produce scaling factors. These factors reweight the Transformer input features, enabling adaptive attention that copes with variable preamble lengths.

Fig. 2. (a) System diagram of semantic-aware massive random access with variable preamble length transmission; and (b) performance of $P_e$ versus the testing preamble length $L_{\text{test}}$ for various UAD schemes. (Panel (a): preamble pool and pre-allocation, masking and power normalization at each of the $K$ users, the uplink massive random access channel, and the SA-UADNet-VPL receiver with autocorrelation, linear embeddings, a heterogeneous Transformer network, the PLAM, an activity decoder, and a binary threshold detector. Panel (b): $P_e$ on a log scale from $10^{-4}$ to $10^{0}$ versus $L_{\text{test}}$ from 8 to 24, for SA-UADNet-VPL with PLAM ($L_{\text{train}} \in [8, 24]$), SA-UADNet-VPL without PLAM ($L_{\text{train}} \in [8, 24]$ and $L_{\text{train}} = 10, 15, 18$), AMP, and OMP.) Notes: During simulation, we randomly generated QPSK preambles of maximum length $L_{\max} = 28$, with $K = 128$ potential UEs and a BS equipped with 64 antennas. The activity of each UE follows a Bernoulli distribution with an active probability of 0.1, and the channels between the UEs and the BS follow independent and identically distributed Rayleigh fading.

Fig. 3. (a) The SA-RCA-MUNet-based multi-user semantic-aware CSI feedback architecture; and (b) NMSE performance comparison of different CSI feedback schemes as a function of compression ratio [10]. (Panel (a): per-UE semantic CSI encoders with parameter sharing, followed by real-to-complex conversion, IQ mapping, power normalization, and OFDM modulation at the UEs; OFDM demodulation, IQ demapping, complex-to-real conversion, and per-UE semantic CSI decoders built from Transformer layers and RCA-Blocks with information sharing at the BS. Panel (b): NMSE (dB), from -24 to -10, versus compression ratio $r \in \{1/32, 1/16, 1/8, 1/4\}$ for the proposed SA-RCA-MUNet, SA-CA-MUNet, the vanilla Transformer, ADJSCC-CSINet+, and Distributed-DeepCMC.) Notes: For performance evaluation, simulations used a dataset generated by QuaDRiGa under the 3GPP TR 38.901 UMi scenario. The BS has a 32-antenna linear array, while each UE has a single antenna. The downlink channel operates at 6.7 GHz with 30 kHz subcarrier spacing across 1024 subcarriers. After the angle-delay transformation, only the first $N_c = 32$ delay-domain rows, where multipath energy concentrates, are fed back. The feedback uses $k$ subcarriers, yielding a compression ratio $r = k/(32 \times 32) = k/1024$.
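The masking, zero-padding, and PLAM steps described above can be made concrete with the following NumPy sketch, which uses the Fig. 2 simulation settings ($K = 128$, 64 BS antennas, QPSK preambles with $L_{\max} = 28$, activity probability 0.1, i.i.d. Rayleigh fading). Everything else is an assumption for illustration only: the SNR, the row-wise embedding of the autocorrelation (a random projection standing in for the trained linear layer), the ReLU/sigmoid activations and random weights inside PLAM, and, in place of the trained heterogeneous Transformer and activity decoder, a plain correlation score $\mathbf{p}_k^H \mathbf{R}\,\mathbf{p}_k / L^2$ with a heuristic threshold. The resulting error rate therefore says nothing about the performance of SA-UADNet-VPL itself.

```python
import numpy as np

rng = np.random.default_rng(1)
K, M, L_MAX, L = 128, 64, 28, 20   # UEs, BS antennas, max / actual preamble length
P_ACTIVE, SNR_DB = 0.1, 10.0       # activity prob. (Fig. 2 notes); SNR is assumed

# Signal model: QPSK preamble pool, column k is p_k of length L_MAX
P = np.exp(1j * (np.pi / 4 + np.pi / 2 * rng.integers(0, 4, size=(L_MAX, K))))
active = rng.random(K) < P_ACTIVE                      # Bernoulli user activity
H = (rng.standard_normal((K, M)) + 1j * rng.standard_normal((K, M))) / np.sqrt(2)
sigma2 = 10 ** (-SNR_DB / 10)
noise = np.sqrt(sigma2 / 2) * (rng.standard_normal((L, M)) + 1j * rng.standard_normal((L, M)))
Y = P[:L, active] @ H[active] + noise                  # only the first L symbols are sent
Y_p = np.vstack([Y, np.zeros((L_MAX - L, M))])         # zero-padded to L_MAX at the BS
R = Y_p @ Y_p.conj().T / M                             # (L_MAX, L_MAX) autocorrelation

def plam(X, L, L_max, W1, b1, W2, b2):
    """Rescale token features X (T, d) with factors conditioned on the preamble length."""
    z = np.concatenate([X.mean(axis=0), [L / L_max]])  # avg-pooled features + length
    h = np.maximum(0.0, W1 @ z + b1)                   # FC layer 1 + ReLU (assumed)
    s = 1.0 / (1.0 + np.exp(-(W2 @ h + b2)))           # FC layer 2 + sigmoid (assumed)
    return X * s[None, :], s                           # channel-wise reweighting

# Hypothetical embedding: each row of R (real/imag stacked) -> d features
d, hidden = 32, 16
W_emb = rng.standard_normal((2 * L_MAX, d)) / np.sqrt(2 * L_MAX)
X = np.concatenate([R.real, R.imag], axis=1) @ W_emb   # (L_MAX, d) tokens
W1 = rng.standard_normal((hidden, d + 1)) / np.sqrt(d + 1)
b1 = 0.1 * rng.standard_normal(hidden)
W2 = rng.standard_normal((d, hidden)) / np.sqrt(hidden)
b2 = np.zeros(d)
X_scaled, s = plam(X, L, L_MAX, W1, b1, W2, b2)

# Non-learned stand-in for the activity decoder: normalized p_k^H R p_k, then threshold
scores = np.real(np.einsum("lk,lm,mk->k", P.conj(), R, P)) / L**2
active_hat = scores > np.median(scores) + 0.5          # heuristic threshold (assumption)
p_e = np.mean(active_hat != active)                    # fraction of misclassified UEs
print(f"{active.sum()} active UEs, P_e = {p_e:.3f}")
```

In the actual scheme, the rescaled tokens `X_scaled` (together with the embedded preamble tokens) would feed the heterogeneous Transformer, and the learned activity decoder would take the place of `scores` and the fixed threshold rule.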
SA-UADNet-VPL captures task-oriented semantics by projecting pilots into a unified feature space and using self-attention together with PLAM to emphasize activity-related structures rather than full channel details, allowing consistent extraction of activity semantics across different pilot-length configurations.

To evaluate the proposed scheme, we use the probability of error in detecting user activity, $P_e$, as the performance metric. Fig. 2(b) presents $P_e$ as a function of the preamble sequence length $L_{\text{test}}$. The results indicate that SA-UADNet-VPL achieves lower detection error with shorter pilots than orthogonal matching pursuit (OMP) and approximate message passing (AMP) [3], thereby effectively reducing access delay. Additionally, compared with models trained with fixed preamble lengths ($L_{\text{train}} = 10, 15, 18$), the scheme trained with variable-length preambles ($L_{\text{train}} \in [8, 24]$) generalizes better. Furthermore, comparing SA-UADNet-VPL with and without PLAM, both trained with $L_{\text{train}} \in [8, 24]$, shows that including the PLAM further improves detection performance, confirming the effectiveness of the proposed scheme in extracting user activity semantics from the received signal.

B. CSI Feedback

1) Related Work: Traditional codebook-based or CS-based feedback incurs high overhead, whereas deep learning reduces overhead and improves reconstruction. Most deep learning-based CSI feedback schemes use separate source-channel coding (SSCC), where encoder outputs are quantized into bits before channel coding. In SSCC frameworks, CSI reconstruction performance degrades notably when the signal-to-noise ratio (SNR) of the feedback link drops below a certain threshold, a phenomenon known as the cliff effect [6].
To address this, [7] proposed a convolutional neural network (CNN)-based deep joint source-channel coding (DJSCC) technique, using end-to-end optimized CNN encoders/decoders to overcome the cliff effect. [8] further proposed an attention-based JSCC (ADJSCC) scheme that integrates SNR information into the intermediate layers of the encoder/decoder via an attention feature module [5], enabling adaptation to varying SNRs. Considering the multi-user scenario, [9] proposed a distributed deep learning-based channel matrix compression (DeepCMC) scheme, which uses summation-based fusion in joint decoders to exploit side information from geographically correlated UEs. DeepCMC adopts a CNN-based structure, which leaves room for better capturing the semantic correlations of multi-user CSI.

2) Semantic-Aware CSI Feedback: We propose a semantic-aware residual cross-attention multi-user network (SA-RCA-MUNet) for CSI feedback, employing a Transformer-based backbone to exploit CSI semantics from geographically correlated UEs [10]. As illustrated in the middle part of Fig. 1, each UE perceives and compresses its downlink CSI into analog constellation symbols for feedback; the BS then enhances semantic CSI reconstruction using side information from other geographically correlated UEs. In the multi-user CSI feedback system shown in Fig. 3(a), semantic features of each UE's delay-angle domain CSI are independently extracted and compressed by a Transformer-based semantic CSI encoder. After real-to-complex conversion, the outputs are mapped to analog constellation symbols and transmitted after in-phase/quadrature (IQ) mapping, power normalization, and orthogonal frequency division multiplexing (OFDM) modulation. At the BS, the received signal undergoes OFDM demodulation, IQ demapping, and complex-to-real conversion, and the CSI is then reconstructed by the semantic CSI decoder. Specifically, the Transformer acts as the backbone of both the encoder and the decoder.
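The CSI input format and the analog feedback chain described above can be sketched in NumPy as follows. The sketch follows the Fig. 3 notes ($N_t = 32$ antennas, 1024 subcarriers, the first $N_c = 32$ delay-domain rows retained, $k$ feedback symbols so that $r = k/1024$) and the 10 dB received SNR used for Fig. 3(b). The DFT sign conventions, the I/Q split of the $2k$ encoder outputs, the subcarrier mapping, and the AWGN-only feedback link are assumptions; the learned encoder and decoder are omitted, and a random vector stands in for the encoder output.

```python
import numpy as np

N_T, N_F, N_C = 32, 1024, 32            # BS antennas, subcarriers, retained delay rows

def to_angle_delay(H_sf, n_c=N_C):
    """(N_F, N_T) space-frequency CSI -> (N_T, 2*n_c) real sequences, one per angle bin."""
    H_delay = np.fft.ifft(H_sf, axis=0, norm="ortho")    # subcarrier -> delay
    H_ad = np.fft.fft(H_delay, axis=1, norm="ortho")     # antenna -> angle
    H_trunc = H_ad[:n_c].T                               # keep first n_c delay rows
    return np.concatenate([H_trunc.real, H_trunc.imag], axis=1)

def analog_feedback(z, snr_db=10.0, n_fft=N_F, rng=None):
    """Send 2k real encoder outputs as k analog symbols over an AWGN OFDM link."""
    rng = np.random.default_rng() if rng is None else rng
    k = z.size // 2
    s = z[:k] + 1j * z[k:]                               # real -> complex (assumed I/Q split)
    s = s * np.sqrt(k) / np.linalg.norm(s)               # unit average symbol power
    X = np.zeros(n_fft, dtype=complex)
    X[:k] = s                                            # occupy k subcarriers (assumed)
    x = np.fft.ifft(X, norm="ortho")                     # OFDM modulation
    sigma2 = 10 ** (-snr_db / 10)
    w = rng.standard_normal(n_fft) + 1j * rng.standard_normal(n_fft)
    y = x + np.sqrt(sigma2 / 2) * w                      # AWGN feedback link (assumed)
    s_hat = np.fft.fft(y, norm="ortho")[:k]              # OFDM demodulation
    return np.concatenate([s_hat.real, s_hat.imag])      # complex -> real for the decoder

rng = np.random.default_rng(0)
# Toy channel whose delay taps all fall inside the first N_C rows
h_delay = np.zeros((N_F, N_T), dtype=complex)
h_delay[:8] = rng.standard_normal((8, N_T)) + 1j * rng.standard_normal((8, N_T))
H_sf = np.fft.fft(h_delay, axis=0, norm="ortho")
seq = to_angle_delay(H_sf)                               # encoder input, (N_T, 2*N_C)

k = 64                                                   # r = 64 / 1024 = 1/16
z = rng.standard_normal(2 * k)                           # stand-in for the encoder output
z_hat = analog_feedback(z, snr_db=10.0, rng=rng)
print(seq.shape, k / (N_T * N_C), z_hat.shape)
```

Because the unnormalized analog symbols are sent without quantization, the received values degrade gradually with SNR instead of failing abruptly, which is the property the JSCC-style design relies on to avoid the cliff effect.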
In the encoder, after complex-to-real conversion, the angle-delay domain CSI is represented by $N_t$ sequences of length $2N_c$, where $N_t$ is the number of BS antennas and $N_c$ is the number of retained delay-domain rows. These sequences are embedded into $N_t$ feature sequences of length $d$, processed by an $L_1$-layer Transformer encoder for feature extraction, and finally compressed into a low-dimensional semantic sequence of dimension $2k$ via a linear layer for transmission. Conversely, the decoder reconstructs the CSI by first upscaling the dimension via linear embedding, followed by a backbone with $L_2$ Transformer layers and $L_3$ residual cross-attention blocks (RCA-Blocks). The CSI of each UE is first reconstructed by parameter-sharing Transformer layers and then refined jointly by the RCA-Blocks. As detailed in [10], for each UE, the RCA-Block uses query (Q) information from other UEs and key (K)/value (V) information from the UE itself to compute attention scores and extract common information among different UEs. A residual cut is further introduced to provide complementary information across UEs. This leverages semantic channel information from geographically similar UEs and enhances CSI recovery. In SA-RCA-MUNet, channel semantics are extracted by Transformer encoders and further refined through residual cross-attention, which selectively exchanges environment information among UEs. This injects complementary propagation semantics and improves CSI reconstruction. In addition, the normalized mean square error (NMSE) is used as the loss function for end-to-end optimization.

In Fig. 3(b), we compare the performance of various schemes at a received SNR of 10 dB in a two-UE scenario. Among these, SA-CA-MUNet replaces our residual cross-attention with standard cross-attention, while the vanilla Transformer simplifies SA-RCA-MUNet to a single-user network by substituting the RCA-Blocks with conventional Transformer layers while keeping the same number of network layers. ADJSCC-CSINet+ [8] is a CNN-based structure designed for single-user CSI feedback, and Distributed-DeepCMC extends the vanilla Transformer scheme to the multi-user case by incorporating the joint reconstruction method from [9]. These results demonstrate that significant gains in CSI reconstruction performance can be achieved across compression ratios by perceiving and utilizing side information (i.e., channel semantics from nearby UEs) with the proposed architecture.

Fig. 4. (a) Source-channel semantic-aware precoding for a multi-user image transmission network; and (b) LPIPS versus SNR for different transmission schemes. (Panel (a): at the BS, Swin Transformer-based image semantic extraction networks and a Transformer-based CSI semantic extraction network feed a semantic fusion network, followed by data-driven and knowledge-driven semantic-aware precoding combined by a weighted sum; at the UEs, Swin Transformer-based image semantic decoding networks. Panel (b): LPIPS, from 0.1 to 0.8, versus SNR from -10 to 20 dB for the proposed SCSAP, ADJSCC + RZF, BPG + LDPC + QAM + RZF (best), BPG + 1/2 LDPC + 16QAM + RZF, and BPG + LDPC + E2E.) Notes: A BS equipped with 64 antennas is assumed to simultaneously transmit different image data to 4 UEs over a broadband multipath channel. Due to space limitations, only the key results are included here; more complete analyses and full experimental settings can be found in [12].

C. Multi-user Downlink Hybrid Precoding

1) Related Work: Multi-user hybrid precoding is essential for data transmission in massive MIMO systems.
By accurately controlling signal directionality and mitigating inter-user interference, precoding can substantially improve the system's spectral efficiency. Deep learning-based hybrid precoding methods have achieved lower complexity and better performance than traditional approaches. However, these methods mainly optimize spectral or energy efficiency under the assumption of ideal Gaussian-distributed sources, thus overlooking the semantic information of the source during precoding design. To account for both source semantics and CSI in MIMO transmission, [11] proposed integrating CSI into the joint encoder-decoder framework. This integration of CSI and image semantic features enables adaptive power allocation according to feature importance, thereby improving the robustness of MIMO-based semantic transmission.

2) Source-Channel Semantic-Aware Precoding (SCSAP): Despite these advances, existing research predominantly focuses on single-UE transmission in small-scale MIMO systems. In contrast, we propose a joint design approach for massive MIMO systems that fuses CSI and source semantics into the multi-user precoding design [12]. As illustrated at the bottom of Fig. 1, the BS employs semantic CSI and data encoders to extract and fuse semantics for precoding, while each UE decodes its source information using a semantic data decoder. More specifically, as illustrated in Fig. 4(a), a Swin Transformer-based semantic extraction network processes high-dimensional image sources, leveraging window partitioning and shifting to reduce self-attention complexity while capturing multi-scale features. For the lower-dimensional CSI, a Transformer-based network extracts multi-user channel semantics. The semantic features of the CSI and the images are concatenated along the feature dimension, and a Transformer-based network then integrates these semantic representations.
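The complexity saving from window partitioning and shifting can be illustrated with a short NumPy sketch of the two data-movement steps used in Swin Transformer: splitting an $H \times W$ feature map into non-overlapping $w \times w$ windows, and cyclically shifting the map by $w/2$ before partitioning so that the next layer's windows straddle the previous window boundaries. The map size, window size, and channel count are hypothetical, and the attention mask that Swin applies to shifted windows is omitted.

```python
import numpy as np

def window_partition(x, w):
    """(H, W, C) feature map -> (num_windows, w*w, C) groups of tokens."""
    H, W, C = x.shape
    x = x.reshape(H // w, w, W // w, w, C).transpose(0, 2, 1, 3, 4)
    return x.reshape(-1, w * w, C)

def shifted_window_partition(x, w):
    """Cyclically shift by w//2 along both spatial axes, then partition."""
    s = w // 2
    return window_partition(np.roll(x, shift=(-s, -s), axis=(0, 1)), w)

H, W, C, w = 32, 32, 16, 8              # hypothetical feature-map and window sizes
x = np.random.default_rng(0).standard_normal((H, W, C))
win = window_partition(x, w)
win_shift = shifted_window_partition(x, w)

n_tokens = H * W
global_pairs = n_tokens ** 2            # attention scores for global self-attention
window_pairs = n_tokens * w * w         # attention scores when attending within windows
print(win.shape, global_pairs // window_pairs)   # (16, 64, 16) 16
```

Self-attention is then computed independently inside each of the 16 windows, so the number of attention scores grows linearly rather than quadratically with the number of image tokens.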
Fusing the CSI and image semantics enables the subsequent precoder design to recognize both source and channel semantics, rather than relying solely on CSI as in conventional precoding designs. In addition, by embedding channel information directly into the source-semantic encoding process, the transmitted information can adapt to the underlying propagation conditions, thereby improving robustness in complex environments.

To enable efficient transmission of the fused semantic features, we propose a hybrid data-knowledge driven semantic-aware precoding scheme [12]. The data-driven semantic-aware precoding module feeds the fused semantics into a Transformer, which outputs precoded signals matching the BS antenna dimension. To improve robustness and interpretability, expert knowledge is integrated through a knowledge-driven semantic-aware precoding module, in which a Transformer generates learnable parameters that replace their traditional counterparts in the weighted minimum mean square error (WMMSE) algorithm. The precoding matrix obtained from the learned WMMSE is then multiplied by the fused semantic features to generate the knowledge-driven precoded signals. The outputs of the data-driven and knowledge-driven precoding modules are then combined by a learnable weighted sum to produce the final precoded transmit signals. This design leverages the complementary strengths of expert knowledge and deep learning to optimize performance. Finally, each UE applies a Swin Transformer-based semantic decoder to reconstruct images from the received feature maps. Unlike traditional precoding schemes that focus only on spectral efficiency, SCSAP uses a semantic loss as its training criterion, aiming for high-quality image reconstruction. Moreover, SCSAP exploits image semantics by fusing image-derived source features with CSI-based propagation features, enabling a knowledge-driven precoder refined through a learnable semantic-correction branch.
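For reference, the classical WMMSE iteration that the knowledge-driven branch builds on, and the final weighted sum of the two branches, can be sketched in NumPy for a downlink with single-antenna UEs. This is the textbook, untrained WMMSE, not the Transformer-parameterized version in [12]; it also replaces the usual bisection over the Lagrange multiplier with the closed-form regularizer $\mu = (\sigma^2/P)\sum_k w_k |u_k|^2$ followed by power scaling, a common simplification. The 64-antenna, 4-UE setting follows the Fig. 4 notes; the flat-fading channel, noise level, fused semantic symbols, data-driven branch output, and the two combination weights are random or fixed stand-ins for learned quantities.

```python
import numpy as np

def sum_rate(H, V, sigma2):
    """Sum rate (bit/s/Hz); row k of H is h_k^H and column k of V is v_k."""
    G = np.abs(H @ V) ** 2
    sig = np.diag(G)
    interf = G.sum(axis=1) - sig
    return float(np.sum(np.log2(1.0 + sig / (interf + sigma2))))

def wmmse_precoder(H, P=1.0, sigma2=0.1, iters=30):
    """Simplified WMMSE for MU-MISO: returns V (Nt, K) with ||V||_F^2 = P."""
    K, Nt = H.shape
    V = H.conj().T * np.sqrt(P) / np.linalg.norm(H)          # MRT initialization
    for _ in range(iters):
        G = H @ V                                             # G[k, j] = h_k^H v_j
        u = np.diag(G) / (np.sum(np.abs(G) ** 2, axis=1) + sigma2)   # MMSE receivers
        w = 1.0 / (1.0 - np.real(np.conj(u) * np.diag(G)))            # inverse-MSE weights
        c = w * np.abs(u) ** 2
        A = (H.conj().T * c) @ H                              # sum_k c_k h_k h_k^H
        mu = sigma2 / P * np.sum(c)                           # closed-form regularizer
        V = np.linalg.solve(A + mu * np.eye(Nt), H.conj().T * (w * u))
        V *= np.sqrt(P) / np.linalg.norm(V)                   # meet the sum-power budget
    return V

rng = np.random.default_rng(0)
K, Nt, T, P, sigma2 = 4, 64, 16, 1.0, 0.1
H = (rng.standard_normal((K, Nt)) + 1j * rng.standard_normal((K, Nt))) / np.sqrt(2)
V = wmmse_precoder(H, P, sigma2)
V_mrt = H.conj().T * np.sqrt(P) / np.linalg.norm(H)

# Stand-ins: fused semantic symbols (K, T) and a data-driven branch output (Nt, T)
S = (rng.standard_normal((K, T)) + 1j * rng.standard_normal((K, T))) / np.sqrt(2)
x_data = (rng.standard_normal((Nt, T)) + 1j * rng.standard_normal((Nt, T))) / np.sqrt(2 * Nt)
x_knowledge = V @ S                                           # knowledge-driven branch
alpha = np.array([0.3, 0.7])                                  # learnable in [12]; fixed here
x = alpha[0] * x_data + alpha[1] * x_knowledge
x *= np.sqrt(P * T) / np.linalg.norm(x)                       # average power P per time slot
print(sum_rate(H, V_mrt, sigma2), sum_rate(H, V, sigma2))
```

With 64 antennas and 4 UEs, the WMMSE precoder behaves much like regularized zero forcing and suppresses the inter-user interference that matched filtering (MRT) leaves in place; in [12], the Transformer instead supplies the parameters that this sketch computes in closed form.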
The hybrid SCSAP design embeds source-distribution priors into the transmitter, allowing the precoder to better preserve perceptual semantics and to surpass purely channel-driven precoding methods.

To evaluate the proposed SCSAP scheme, the learned perceptual image patch similarity (LPIPS) is adopted as the semantic performance metric, and the following transmission schemes are compared:
i) ADJSCC + RZF: Uses the CNN-based architecture from [8] for joint source-channel coding. To cope with inter-user interference, regularized zero forcing (RZF) is used for multi-user precoding.
ii) BPG + LDPC + QAM + RZF (best): Through an exhaustive search over better portable graphics (BPG) source coding rates, low-density parity check (LDPC) channel coding rates, and quadrature amplitude modulation (QAM) orders, the best-performing combination is selected.
iii) BPG + 1/2 LDPC + 16QAM + RZF: Uses fixed-rate source and channel coding with 16QAM modulation.
iv) BPG + LDPC + E2E: Uses BPG for source coding and LDPC for channel coding, with the single-user autoencoder-based end-to-end (E2E) transmission framework [13] extended to the multi-user scenario.

In Fig. 4(b), we compare the performance of the above schemes at different received SNRs. The traditional SSCC methods suffer significant performance degradation at low SNR, whereas schemes based on joint source-channel coding, such as ‘ADJSCC + RZF’ and the proposed ‘SCSAP’, perform better. Furthermore, ‘SCSAP’, which leverages fused source-channel semantic information in the precoding design, achieves consistently better LPIPS than ‘ADJSCC + RZF’, demonstrating the effectiveness of semantic-aware precoding.

III. LARGE MODEL-ENABLED 6G COMMUNICATIONS

The previous section discussed specialist models for semantic-aware MIMO physical-layer communications. Recently, the general problem-solving capabilities demonstrated by LMs in fields such as natural language processing have led academia to explore their application in 6G. Beyond chat-based LMs for telecom domain knowledge, employing LMs for physical-layer tasks is also a noteworthy direction.

LMs typically undergo two important stages before practical application: pre-training and fine-tuning. Pre-training trains the model parameters on large-scale data that is not specific to any task, in order to find a favorable initialization. Notably, the “masked token prediction” paradigm used by the bidirectional encoder representations from transformers (BERT) series and the “next token prediction” paradigm widely adopted by the generative pre-trained Transformer (GPT) and large language model Meta AI (LLAMA) series are regarded as effective methodologies. Fine-tuning uses task-specific data to adjust the pre-trained model parameters, enhancing performance on specific tasks, and generally requires much smaller datasets than pre-training. Unlike full-parameter fine-tuning, parameter-efficient techniques such as low-rank adaptation (LoRA), adapter tuning, and prefix tuning achieve comparable results by adjusting only a subset of the model's parameters, which also reduces training cost.

In Fig. 5, we compare LM-based wireless physical-layer studies in terms of model architecture, training method, pre-training datasets, fine-tuning method, and the downstream tasks addressed during fine-tuning.

A. Pre-training

Existing research on pre-trained models can be classified into two primary approaches. The first approach employs text-based pre-trained models, such as GPT-2 and LLAMA2, as key components for addressing communication tasks.
This approach is often complemented by fine-tuning the backbone model and the input-output modules to adapt to downstream tasks. The second approach constructs a new foundation model pre-trained on channel datasets, i.e., CSI-based pre-trained models. Similar fine-tuning techniques are then applied to tackle downstream tasks.

1) Model Backbones: In Fig. 5, studies [R1]-[R7] examine text-based pre-trained models, such as GPT-2 and LLAMA, which use Transformer decoder blocks and next-token prediction for pre-training. These LLMs have parameters ranging from millions (M) to billions (B). Conversely, studies [R9]-[R13] use Transformer encoder blocks or masked autoencoder architectures for pre-training on CSI-type datasets, employing self-supervised learning methods such as masked CSI token prediction. The parameter scale of CSI-based models varies significantly, from 300K to 800M, depending on the number of network layers and the hidden dimension. Unlike the single-modal LMs discussed above, [R8] explores a multimodal LM based on DeepSeek Janus-Pro, pre-trained on image and text data to process both input types simultaneously.

2) Datasets: Text-based models are mainly pre-trained on text datasets, such as Common Crawl, WebText, Wikipedia, books, and GitHub code. In contrast, CSI-based models rely on simulated or measured channel datasets, such as 3GPP channel models, DeepMIMO, and practical WiFi datasets. Some studies use diverse channel data types, such as RF spectrograms, WiFi CSI, and 5G CSI [R13], or datasets from various geographical scenarios [R12, R14], to make pre-training more comprehensive. Overall, dataset sizes for CSI-based models range from about 5K to 1M samples. Compared with LLMs such as LLAMA, which have billions of parameters trained on trillions of tokens, CSI-based models are limited by the lack of large, publicly recognized datasets, which is a bottleneck in exploring larger communication models.
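The masked-token objective used by the CSI-based models above, and the LoRA fine-tuning step mentioned earlier in this section, can both be sketched in a few lines of NumPy. For pre-training, a fraction of CSI tokens is replaced by a mask embedding and the loss is computed only at the masked positions; here the tokens are 32 rows of a hypothetical real-valued $32 \times 64$ CSI array, the mask embedding is a zero vector, the mask ratio is 0.25, and a trivial predictor that fills masked tokens with the mean of the visible ones stands in for the Transformer. For LoRA, a frozen weight $\mathbf{W}_0$ is augmented with a trainable low-rank update $(\alpha/r)\mathbf{B}\mathbf{A}$ whose $\mathbf{B}$ starts at zero; the layer width, rank, and $\alpha$ are hypothetical.

```python
import numpy as np

def mask_tokens(tokens, mask_ratio, rng):
    """Replace a random subset of tokens with a zero 'mask' embedding (assumed)."""
    n = tokens.shape[0]
    mask = np.zeros(n, dtype=bool)
    mask[rng.permutation(n)[: int(round(mask_ratio * n))]] = True
    corrupted = tokens.copy()
    corrupted[mask] = 0.0
    return corrupted, mask

def masked_mse(pred, target, mask):
    """Reconstruction loss evaluated only at the masked positions."""
    return float(np.mean((pred[mask] - target[mask]) ** 2))

def mean_fill_predictor(corrupted, mask):
    """Trivial stand-in for the Transformer: fill masked tokens with the visible mean."""
    pred = corrupted.copy()
    pred[mask] = corrupted[~mask].mean(axis=0)
    return pred

def lora_forward(x, W0, A, B, alpha):
    """y = x W0^T + (alpha / r) x A^T B^T; W0 is frozen, only A and B are trained."""
    r = A.shape[0]
    return x @ W0.T + (alpha / r) * (x @ A.T) @ B.T

rng = np.random.default_rng(0)
# Masked CSI token prediction (pre-training objective)
tokens = rng.standard_normal((32, 64))          # 32 CSI tokens of dimension 64
corrupted, mask = mask_tokens(tokens, mask_ratio=0.25, rng=rng)
pred = mean_fill_predictor(corrupted, mask)
loss = masked_mse(pred, tokens, mask)

# LoRA on one linear layer (parameter-efficient fine-tuning)
d_in, d_out, r = 768, 768, 8
W0 = rng.standard_normal((d_out, d_in)) / np.sqrt(d_in)   # frozen pre-trained weight
A = 0.01 * rng.standard_normal((r, d_in))                 # trainable down-projection
B = np.zeros((d_out, r))                                  # trainable up-projection, zero init
x = rng.standard_normal((4, d_in))
y = lora_forward(x, W0, A, B, alpha=16)
full_params, lora_params = d_out * d_in, r * (d_in + d_out)
print(mask.sum(), round(loss, 3), lora_params / full_params)
```

Because $\mathbf{B}$ is initialized to zero, the adapted layer initially reproduces the pre-trained one exactly, and only about 2% of this layer's parameters ($r(d_{\text{in}} + d_{\text{out}})$ versus $d_{\text{in}} d_{\text{out}}$) are updated during fine-tuning.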
| Reference | Name | Backbone | Pre-training datasets | Dataset size | Parameters | Pre-training method | Fine-tuning method | Downstream tasks |
|---|---|---|---|---|---|---|---|---|
| [R1] | ChannelGPT | GPT2 | WebText | 40GB | 124M | Next token prediction | Layer normalization and full tuning | Channel prediction and pathloss prediction |
| [R2] | LLM4CP | GPT2 | WebText | 40GB | 82.87M | Next token prediction | Layer normalization tuning | Channel prediction |
| [R3] | LLM4WM | GPT2 | WebText | 40GB | 88.71M | Next token prediction | Mixture of experts with LoRA | Channel reconstruction, beam management, and radio environment mining |
| [R4] | N/A¹ | GPT2 | WebText | 40GB | N/A | Next token prediction | No fine-tuning² | Beam prediction |
| [R5] | N/A | GPT2 | WebText | 40GB | N/A | Next token prediction | Fine-tuning over layer normalization and positional embedding layers | Communication CSI prediction with assistance from sensing CSI |
| [R6] | N/A | LLAMA2 | Common Crawl, Wikipedia, books, and code, etc. | 2T tokens | N/A | Next token prediction | LoRA | Multi-user precoding, signal detection, and channel prediction |
| [R7] | FAS-LLM | LLAMA3 | Common Crawl, Wikipedia, books, and code, etc. | 15T tokens | 1B | Next token prediction | LoRA | Channel prediction |
| [R8] | MLM-BP | DeepSeek Janus-Pro | Image-text pairs | 234M samples | 1.5B | Next token prediction | Layer normalization tuning and LoRA | Beam prediction |
| [R9] | CSI-BERT2 | BERT | WiFi CSI datasets | N/A | 5.45M | Masked token prediction | Freeze the bottom layers and fine-tune the top layers | CSI prediction and CSI classification |
| [R10] | BERT4MIMO | BERT (12 Transformer encoder layers) | Generated CSI according to 3GPP-TDL channel models | 6K samples | N/A | Masked token prediction | No fine-tuning | Missing/noisy CSI reconstruction |
| [R11] | WiFo | ViT-based MAE³ | Generated 3GPP CSI using QuaDRiGa | 160K samples | 0.3M-86.1M | Masked token prediction | No fine-tuning | Channel prediction |
| [R12] | LWM | 12 Transformer encoder layers | DeepMIMO dataset | 820K samples | 600K | Masked token prediction | Fine-tune embeddings and the last layers | Sub-6 to mmWave beam prediction and LoS/NLoS classification |
| [R13] | WavesFM | 12 ViT encoder blocks + 8 ViT decoder blocks | RF spectrograms, WiFi CSI, 5G CSI | ~5K samples | 45M | Masked token prediction | LoRA | Human activity sensing, RF signal identification, 5G NR positioning, and channel estimation |
| [R14] | WiMAE/ContraWiMAE | Transformer-based MAE | DeepMIMO dataset | 1.14M samples | 570K-600K | Masked token prediction | N/A | Cross-frequency beam selection and LoS detection |
| [R15] | WirelessGPT | N/A | Publicly available channel dataset | 300GB | ~80M-800M | Masked token prediction | N/A | Channel estimation, channel prediction, human activity recognition, and wireless reconstruction |

Notes:
¹ N/A indicates information not explicitly provided, such as model and dataset sizes. While certain sizes could be estimated from the backbone or the specific dataset, N/A is used to maintain rigor.
² Although the LM backbone was not fine-tuned, the input/output modules connected to the LM were trained on specific downstream tasks.
³ ViT and MAE stand for Vision Transformer and Masked Autoencoder, respectively. The authors trained a series of backbone models with varying numbers of encoder and decoder layers.

References:
[R1] L. Yu et al., “ChannelGPT: A large model towards real-world channel foundation model for 6G environment intelligence communication,” IEEE Commun. Mag., vol. 63, no. 10, pp. 68–74, Oct. 2025.
[R2] B. Liu, X. Liu, S. Gao, X. Cheng, and L. Yang, “LLM4CP: Adapting large language models for channel prediction,” J. Commun. Inf. Netw., vol. 9, no. 2, pp. 113–125, June 2024.
[R3] X. Liu, S. Gao, B. Liu, X. Cheng, and L. Yang, “LLM4WM: Adapting LLM for Wireless Multi-Tasking,” IEEE Trans. Mach. Learn. Commun. Netw., vol. 3, pp. 835–847, Jul. 2025.
[R4] Y. Sheng, K. Huang, L. Liang, P. Liu, S. Jin, and G. Ye Li, “Beam prediction based on large language models,” IEEE Wireless Commun. Lett., vol. 14, no. 5, pp. 1406–1410, May 2025.
[R5] J. He et al., “Sensing-assisted channel prediction in complex wireless environments: An LLM-based approach,” IEEE Wireless Commun. Lett., vol. 14, no. 12, Dec. 2025.
[R6] T. Zheng and L. Dai, “Large language model enabled multi-task physical layer network,” IEEE Trans. Commun., vol. 74, pp. 307–321, Oct. 2025.
[R7] H. Yang et al., “FAS-LLM: Large language model-based channel prediction for OTFS-enabled satellite-FAS links,” IEEE J. Sel. Areas Commun., early access, Dec. 2025.
[R8] Y. Zhao, L. Yu, L. Shi, J. Zhang, and G. Liu, “Multi-modal large models based beam prediction: An example empowered by DeepSeek,” arXiv:2506.05921, June 2025.
[R9] Z. Zhao et al., “Mining limited data sufficiently: A BERT-inspired approach for CSI time series application in wireless communication and sensing,” arXiv:2412.06861, Dec. 2024.
[R10] F. O. Catak et al., “BERT4MIMO: A foundation model using BERT architecture for massive MIMO channel state information prediction,” arXiv:2501.01802, Jan. 2025.
[R11] B. Liu, S. Gao, X. Liu, X. Cheng, and L. Yang, “WiFo: Wireless foundation model for channel prediction,” Sci. China Inf. Sci., vol. 68, May 2025.
[R12] S. Alikhani, G. Charan, and A. Alkhateeb, “Large wireless model (LWM): A foundation model for wireless channels,” arXiv:2411.08872, Nov. 2024.
[R13] A. Aboulfotouh, E. Mohammed, and H. A.-Zeid, “6G WavesFM: A foundation model for sensing, communication, and localization,” IEEE Open J. Commun. Soc., vol. 6, Aug. 2025.
[R14] B. Guler et al., “A Multi-task foundation model for wireless channel representation using contrastive and masked autoencoder learning,” arXiv:2505.09160, May 2025.
[R15] T. Yang et al., “WirelessGPT: A generative pre-trained multi-task learning framework for wireless communication,” IEEE Network, vol. 39, no. 5, pp. 58–65, Sept. 2025.

Fig. 5. A comparison of various LM applications in communication physical-layer tasks.

3) Comparison Between Text/CSI Pretrained Models: These two primary pre-training paradigms, text-pretrained LMs [R1]-[R8] and CSI-pretrained LMs [R9]-[R15], exhibit complementary strengths and inherent limitations. Text-based LMs, though trained on linguistic data, use modality-independent Transformers capable of modeling long-range dependencies in arbitrary sequences. With appropriate tokenizers or projection heads, wireless inputs (pilots, CSI tensors, image semantic features) can be embedded into token spaces for Transformer processing, similar to how multimodal LLMs (e.g., GPT-4V, LLaVA) use vision encoders for image patches. Additionally, parameter-efficient tuning (LoRA, adapters, mixture of experts (MoE)) further enables injecting communication-domain priors without modifying the full backbone. However, linguistic pre-training provides no inductive bias for physical-layer structures: standard tokenization ignores OFDM time-frequency correlation, antenna geometry, and channel statistics, and generic LLMs also lack noise and interference awareness, risking misinterpretation of noise or reduced robustness under distribution shifts. Thus, while feasible, text-based LMs require domain adaptation for physical-layer tasks.
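As an illustration of the tokenizer/projection-head idea, the following sketch splits a complex CSI matrix (antennas × subcarriers) into patches of adjacent subcarriers, stacks the real and imaginary parts of each patch, and linearly projects every patch into a d_model-dimensional token space that a Transformer could consume. The patch size, d_model, and the randomly initialized projection (which would be trained in practice) are hypothetical. This simple patching keeps local frequency correlation inside each patch but still ignores antenna geometry and channel statistics.

```python
import random


def csi_to_tokens(csi, patch_size):
    """Split each antenna's subcarrier row into patches; each patch -> [Re(h)..., Im(h)...]."""
    tokens = []
    for row in csi:
        if len(row) % patch_size:
            raise ValueError("number of subcarriers must be divisible by patch_size")
        for start in range(0, len(row), patch_size):
            patch = row[start:start + patch_size]
            tokens.append([h.real for h in patch] + [h.imag for h in patch])
    return tokens


def make_projection(in_dim, d_model, seed=0):
    """Hypothetical linear projection head, randomly initialized (it would be trained in practice)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, in_dim ** -0.5) for _ in range(in_dim)] for _ in range(d_model)]


def project(tokens, weight):
    """Map every real-valued patch vector into the d_model-dimensional token space."""
    return [[sum(w * t for w, t in zip(w_row, tok)) for w_row in weight] for tok in tokens]


# Toy CSI: 2 antennas x 8 subcarriers (hypothetical random values).
rng = random.Random(42)
csi = [[complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(8)] for _ in range(2)]
tokens = csi_to_tokens(csi, patch_size=4)  # 2 antennas * (8 / 4) patches = 4 tokens of length 8
embeddings = project(tokens, make_projection(in_dim=8, d_model=16))
print(len(tokens), len(tokens[0]), len(embeddings), len(embeddings[0]))
```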
In contrast, CSI-pretrained models directly learn environment-related semantics but face data scarcity. Measured real-world CSI datasets offer high fidelity but limited environmental and configuration diversity, while synthetic CSI from 3GPP, ray-tracing (e.g., DeepMIMO), or geometry-based models scales well yet exhibits a simulation-to-reality gap. These issues can be addressed via generative modeling (GANs, diffusion models) to generate diverse but physically consistent CSI, domain adaptation to align synthetic and real data, and wireless task-specific data augmentation (e.g., noise perturbation, dimension permutation). In the long term, the release of extensive multi-environment CSI datasets by operators and research infrastructures is an impactful route to effective CSI-based pre-training.

B. Fine-tuning

Current fine-tuning methods typically fall into two main categories: 1) adding task-specific input-output preprocessing modules without fine-tuning the backbone model parameters. For instance, in CSI prediction tasks, [R11] directly uses pre-trained channel models, and [R4] uses additional prompts with a trained input-output network for beam prediction; 2) fine-tuning selected backbone parameters along with the input-output modules. Studies such as [R3, R6, R7, R8, R13] apply LoRA by integrating low-rank matrices into the query and value matrices of the Transformer's attention module or into its feed-forward network. In contrast, [R1, R2, R5] adjust layer normalization parameters while freezing the others, and [R9, R12] explore fine-tuning the final layers of the backbone. Additionally, [R3] combines LoRA with MoE, using a gating network to select and combine experts for different tasks. This allows the network to leverage knowledge shared across tasks while retaining task-specific features in the experts.
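The LoRA and LoRA-MoE fine-tuning described above can be sketched as follows. This is a minimal pure-Python sketch assuming a single frozen linear layer (e.g., a query or value projection) with weight W, LoRA experts of rank r using the common zero initialization of B, and a linear gate with top-k routing; it is not the exact architecture of [R3], and all sizes are hypothetical. Each expert adds (alpha/r)·B_i A_i x to the frozen output W x, and only the top-k experts chosen by the gate are evaluated.

```python
import math
import random


def matvec(m, v):
    """Dense matrix-vector product for lists of lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


class LoRAAdapter:
    """Trainable low-rank update (alpha / r) * B @ A added to a frozen weight."""

    def __init__(self, d_in, d_out, rank, alpha, rng):
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]  # r x d_in, random init
        self.B = [[0.0] * rank for _ in range(d_out)]  # d_out x r, zero init: the update starts at 0
        self.scale = alpha / rank

    def delta(self, x):
        return [self.scale * u for u in matvec(self.B, matvec(self.A, x))]

    def n_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


def moe_lora_forward(x, frozen_w, adapters, gate_w, k=1):
    """Frozen projection W x plus a gate-weighted sum of the top-k LoRA experts' updates."""
    out = matvec(frozen_w, x)             # shared frozen path, computed once
    probs = softmax(matvec(gate_w, x))    # gating network scores each expert
    top = sorted(range(len(adapters)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    for i in top:                         # sparse computation: only k experts are evaluated
        d = adapters[i].delta(x)
        out = [o + probs[i] / norm * v for o, v in zip(out, d)]
    return out, top


rng = random.Random(0)
d = 64
frozen_w = [[rng.gauss(0.0, 0.1) for _ in range(d)] for _ in range(d)]
adapters = [LoRAAdapter(d, d, rank=4, alpha=8.0, rng=rng) for _ in range(3)]
gate_w = [[rng.gauss(0.0, 0.1) for _ in range(d)] for _ in range(3)]
x = [rng.gauss(0.0, 1.0) for _ in range(d)]
y, chosen = moe_lora_forward(x, frozen_w, adapters, gate_w, k=2)
print(len(y), chosen)
print("full fine-tuning params:", d * d, "| LoRA params per expert:", adapters[0].n_params())
```

With d = 64 and r = 4, each expert trains r(d_in + d_out) = 512 parameters instead of the 4,096 entries of W, and the zero-initialized B makes fine-tuning start exactly from the pre-trained behavior.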
MoE also relies on sparse computation: only a subset of experts is activated for each input, so the model can be extended to more tasks without a proportional increase in inference cost.

In downstream communication tasks, most research focuses on CSI-related tasks, including CSI prediction, CSI estimation, and classification tasks using CSI data, such as line-of-sight (LoS)/non-line-of-sight (NLoS) classification and human activity recognition. A small number of studies address tasks such as signal detection [R6], multi-user precoding [R6], and 5G new radio (NR) positioning [R13]. Additionally, some studies explore specific problem scenarios; for example, [R1] and [R5] examine the enhancement of CSI prediction by incorporating environment images or sensing CSI.

C. Evolution From Specialist Models to Large Models

Overall, the use of LMs in wireless communications remains at an early stage, and the existing models in Section II mainly perform task-specific optimization. Although these specialists achieve strong performance, they depend on separate data pipelines and isolated representation spaces, which limits the reuse of common wireless priors such as uplink/downlink propagation consistency, spatial correlation, and noise/SNR statistics. This results in redundant parameters, duplicated training, and weak cross-task generalization. A unified LM can overcome these issues by employing a shared Transformer backbone with lightweight projection heads and task decoders [R3, R6, R15]. Heterogeneous inputs (e.g., received pilots, compressed CSI, image semantics) can be mapped into a unified token space, enabling scalable multi-task processing and cross-task knowledge transfer. In particular, recent CSI-based pre-trained backbones [R15] provide a promising direction: by learning latent environment structure, they extract reusable channel semantics for multiple tasks.
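A unified multi-task model of this kind can be sketched as one shared backbone plus per-task input projections and output heads. In the sketch below, the backbone and heads are toy callables, the task names are illustrative, and the parameter counts (a 100M-parameter backbone, 1M-parameter adapters) are hypothetical placeholders; the point is only that adding a task adds a lightweight adapter rather than another full model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Vector = List[float]


@dataclass
class TaskAdapter:
    project_in: Callable[[Vector], Vector]  # task-specific input -> shared token space
    head: Callable[[Vector], Vector]        # shared features -> task output
    n_params: int                           # parameters of this projection + head


@dataclass
class UnifiedModel:
    backbone: Callable[[Vector], Vector]    # shared backbone (a Transformer in practice)
    backbone_params: int
    tasks: Dict[str, TaskAdapter] = field(default_factory=dict)

    def add_task(self, name: str, adapter: TaskAdapter) -> None:
        self.tasks[name] = adapter

    def __call__(self, task: str, x: Vector) -> Vector:
        adapter = self.tasks[task]
        return adapter.head(self.backbone(adapter.project_in(x)))

    def param_count(self) -> int:
        return self.backbone_params + sum(a.n_params for a in self.tasks.values())


def specialist_param_count(backbone_params: int, head_params: List[int]) -> int:
    """Separate specialists: every task carries its own full-size model."""
    return sum(backbone_params + h for h in head_params)


# Toy usage with hypothetical sizes and stand-in callables.
model = UnifiedModel(backbone=lambda v: [2.0 * t for t in v], backbone_params=100_000_000)
model.add_task("channel_prediction", TaskAdapter(lambda x: x, lambda h: [sum(h)], 1_000_000))
model.add_task("beam_prediction", TaskAdapter(lambda x: x[::-1], lambda h: [max(h)], 1_000_000))
model.add_task("csi_feedback", TaskAdapter(lambda x: x[:2], lambda h: h, 1_000_000))
print(model("beam_prediction", [1.0, 3.0]))
print(model.param_count(), specialist_param_count(100_000_000, [1_000_000] * 3))
```

Under these placeholder sizes, the shared design holds about one third of the parameters of three separate specialists, and the gap widens with every additional task.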
Such unified representations highlight the potential of LMs to support higher-level reasoning over physical-layer signal structures.

Considering practical computational constraints, the evolution from specialist models to LMs can be conceptualized in three levels: (i) Level 1 is a BS-only LM that conducts multi-task inference using a shared backbone, facilitated by parameter-efficient methods such as MoE for multiple tasks; (ii) Level 2 is a hybrid design in which UE-side specialist encoders generate semantic features that are aligned to the BS token space through projection heads; and (iii) Level 3 is a complete BS-UE dual-side LM, in which a compact UE backbone is derived from the BS-side LM through compression or distillation. The accompanying training strategy progresses naturally through these levels: Level 1 involves pre-training the BS backbone; Level 2 includes joint optimization of the UE specialists with the BS-side projection and task heads; and Level 3 entails end-to-end uplink/downlink (UL/DL) fine-tuning for semantic alignment across the communication link. This provides a coherent and scalable path for evolving isolated specialist models into a unified foundation-model framework for wireless systems.

IV. CHALLENGES AND OPEN ISSUES

In this section, we discuss potential future research directions in semantic-aware communication and LMs.

Fundamental Theory of Communication LMs: LLMs follow an empirical scaling law indicating that test performance improves with increasing model size, data, and compute. However, classical signal detection and estimation tasks are constrained by fundamental limits such as the Cramér-Rao lower bound and the Shannon-Hartley theorem, so simply enlarging models or datasets may not yield uniform gains. Balancing model and data size is therefore essential for managing the complexity-performance trade-off.
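To illustrate why scaling alone cannot beat these classical limits, the sketch below evaluates two textbook bounds: the Shannon-Hartley capacity C = B log2(1 + SNR) of an AWGN channel, and the Cramér-Rao lower bound σ²/N for estimating a constant level A from N samples x[n] = A + w[n] in white Gaussian noise of variance σ². A Monte Carlo check with hypothetical parameters shows that the plain sample mean already attains the bound in this simple case, so no unbiased estimator, however large the model behind it, can achieve a lower MSE.

```python
import math
import random


def shannon_capacity(bandwidth_hz, snr_db):
    """Shannon-Hartley capacity C = B * log2(1 + SNR) of an AWGN channel, in bit/s."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)


def crb_dc_level(noise_var, num_samples):
    """CRB for A in x[n] = A + w[n], w[n] ~ N(0, noise_var) i.i.d.: var(A_hat) >= noise_var / N."""
    return noise_var / num_samples


def sample_mean_mse(amplitude, noise_var, num_samples, trials=2000, seed=0):
    """Monte Carlo MSE of the sample-mean estimator of A."""
    rng = random.Random(seed)
    sigma = math.sqrt(noise_var)
    total = 0.0
    for _ in range(trials):
        estimate = sum(amplitude + rng.gauss(0.0, sigma) for _ in range(num_samples)) / num_samples
        total += (estimate - amplitude) ** 2
    return total / trials


print(shannon_capacity(20e6, 10.0) / 1e6, "Mbit/s")  # capacity ceiling for 20 MHz at 10 dB SNR
print(crb_dc_level(1.0, 10), sample_mean_mse(1.0, 1.0, 10))
```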
In higher-level semantic or pragmatic tasks, performance may exhibit patterns similar to LLM scaling behavior. Moreover, large-model inference remains challenging for symbol-level physical-layer operations due to strict latency demands, although quantization, structured sparsification, and hardware-aware acceleration can meet frame-level latency requirements for quasi-static tasks such as UAD or CSI reconstruction. Developing latency-efficient communication LMs is therefore crucial for improving real-time applicability.

Multimodal Channel/Environment Information Driven LMs: Existing CSI-based LMs are mostly pre-trained on 3D CSI tied to specific frequency bands and scenarios; they rely on a single modality and make insufficient use of cross-band, cross-scenario, and cross-modal data such as radio maps, visual inputs, and radar signals. Consequently, leveraging multimodal channel/environment information to train LMs that capture richer features for tasks such as beam prediction, radio map reconstruction, and sensing remains a major challenge for 6G semantic-aware MIMO. Notably, [14] studied the multimodal LM DeepSeek Janus-Pro-1B, using its cross-modal alignment to fuse image data with UE positions for improved beam prediction, showing strong potential for communication applications. Further exploration is also needed on pre-training and fine-tuning strategies for such multimodal LMs.

Generative Models-Driven Semantic-Aware Communications: Semantic communication targets transmitting and interpreting diverse sources such as text, speech, images, and CSI. Generative models can create new samples following desired conditional distributions by learning from large datasets. Thus, by sending only essential semantic information and allowing a generative model (e.g., a diffusion model or an LM) to reconstruct the content at the receiver using source-distribution priors, communication overhead can be greatly reduced.
For example, in image transmission, [15] proposes sending only a “textual prompt + edge map” as the semantic representation, enabling a conditional diffusion model at the receiver to reconstruct a high-fidelity image with substantially lower cost and latency.

V. CONCLUSIONS

This article has presented the paradigm evolution of semantic-aware MIMO communication. Through the examination of tasks such as user activity detection, CSI feedback, and precoding, we have demonstrated that specialist models can effectively perceive, utilize, and fuse the semantic features of sources and channels, thereby enhancing MIMO communication performance. Additionally, we have explored the role of large models in various physical-layer tasks, revealing their potential for enabling intelligent networks. Finally, we have identified unresolved challenges for large communication models and discussed future research directions toward 6G semantic-aware MIMO communications.

REFERENCES

[1] Z. Qin, F. Gao, B. Lin, X. Tao, G. Liu, and C. Pan, “A generalized semantic communication system: From sources to channels,” IEEE Wireless Commun., vol. 30, no. 3, pp. 18–26, June 2023.
[2] Y. Wang, Z. Gao, D. Zheng, S. Chen, D. Gündüz, and H. V. Poor, “Transformer-empowered 6G intelligent networks: From massive MIMO processing to semantic communication,” IEEE Wireless Commun., vol. 30, no. 6, pp. 127–135, Dec. 2023.
[3] M. Ke, Z. Gao, Y. Wu, X. Gao, and R. Schober, “Compressive sensing-based adaptive active user detection and channel estimation: Massive access meets massive MIMO,” IEEE Trans. Signal Process., vol. 68, pp. 764–779, Jan. 2020.
[4] Y. Li, Z. Chen, Y. Wang, C. Yang, B. Ai, and Y.-C. Wu, “Heterogeneous transformer: A scale adaptable neural network architecture for device activity detection,” IEEE Trans. Wireless Commun., vol. 22, no. 5, pp. 3432–3446, May 2023.
[5] J. Xu, B. Ai, W. Chen, A. Yang, P. Sun, and M. Rodrigues, “Wireless image transmission using deep source channel coding with attention modules,” IEEE Trans. Circuits Syst. Video Technol., vol. 32, no. 4, pp. 2315–2328, Apr. 2022.
[6] E. Bourtsoulatze, D. Burth Kurka, and D. Gündüz, “Deep joint source-channel coding for wireless image transmission,” IEEE Trans. Cogn. Commun. Netw., vol. 5, no. 3, pp. 567–579, Sept. 2019.
[7] M. B. Mashhadi, Q. Yang, and D. Gündüz, “CNN-based analog CSI feedback in FDD MIMO-OFDM systems,” in Proc. IEEE Int. Conf. Acoust., Speech Signal Process. (ICASSP), May 2020, pp. 8579–8583.
[8] J. Xu, B. Ai, N. Wang, and W. Chen, “Deep joint source-channel coding for CSI feedback: An end-to-end approach,” IEEE J. Sel. Areas Commun., vol. 41, no. 1, pp. 260–273, Jan. 2023.
[9] M. B. Mashhadi, Q. Yang, and D. Gündüz, “Distributed deep convolutional compression for massive MIMO CSI feedback,” IEEE Trans. Wireless Commun., vol. 20, no. 4, pp. 2621–2633, Dec. 2020.
[10] H. Zhang, M. Wu, L. Qiao, L. Liu, Z. Han, and Z. Gao, “Residual cross-attention transformer-based multi-user CSI feedback with deep joint source-channel coding,” IEEE Wireless Commun. Lett., vol. 14, no. 8, pp. 2481–2485, Aug. 2025.
[11] B. Xie, Y. Wu, Y. Shi, W. Zhang, S. Cui, and M. Debbah, “Robust image semantic coding with learnable CSI fusion masking over MIMO fading channels,” IEEE Trans. Wireless Commun., vol. 23, no. 10, pp. 14155–14170, Oct. 2024.
[12] M. Wu, Z. Gao, Z. Wang, D. Niyato, G. K. Karagiannidis, and S. Chen, “Deep joint semantic coding and beamforming for near-space airship-borne massive MIMO network,” IEEE J. Sel. Areas Commun., early access, pp. 1–16, Sept. 2024.
[13] A. Felix, S. Cammerer, S. Dörner, J. Hoydis, and S. ten Brink, “OFDM-autoencoder for end-to-end learning of communications systems,” in Proc. IEEE Int. Workshop Signal Process. Adv. Wireless Commun. (SPAWC), June 2018, pp. 1–5.
[14] Y. Zhao, L. Yu, L. Shi, J. Zhang, and G. Liu, “Multi-modal large models based beam prediction: An example empowered by DeepSeek,” June 2025. [Online]. Available: https://arxiv.org/abs/2506.05921
[15] L. Qiao, M. B. Mashhadi, Z. Gao, C. H. Foh, P. Xiao, and M. Bennis, “Latency-aware generative semantic communications with pre-trained diffusion models,” IEEE Wireless Commun. Lett., vol. 13, no. 10, pp. 2652–2656, Oct. 2024.
