Fair Model-based Clustering

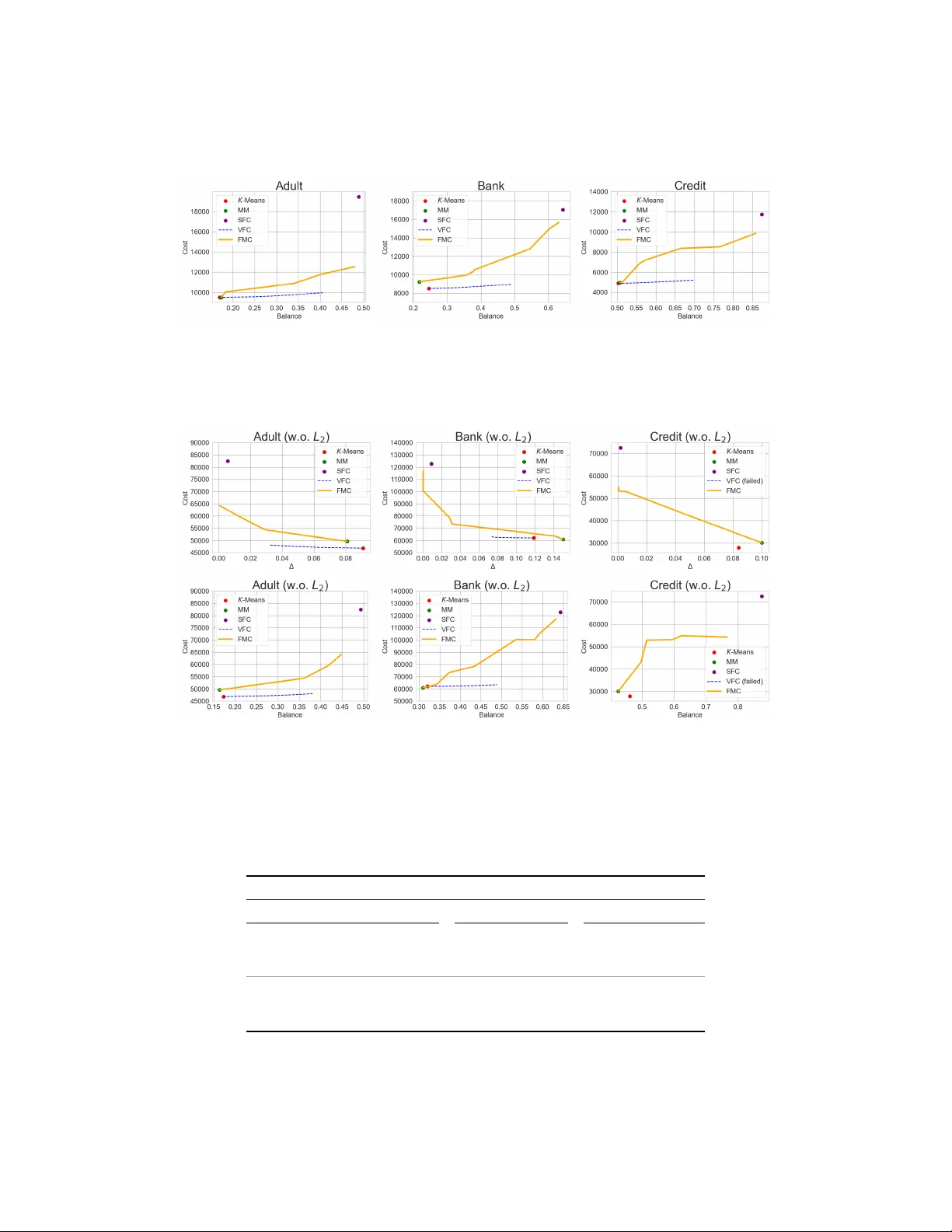

The goal of fair clustering is to find clusters such that the proportion of sensitive attributes (e.g., gender, race, etc.) in each cluster is similar to that of the entire dataset. Various fair clustering algorithms have been proposed that modify st…

Authors: Jinwon Park, Kunwoong Kim, Jihu Lee