A Knowledge-Driven Approach to Music Segmentation, Music Source Separation and Cinematic Audio Source Separation

We propose a knowledge-driven, model-based approach to segmenting audio into single-category and mixed-category chunks with applications to source separation. "Knowledge" here denotes information associated with the data, such as music scores. "Model…

Authors: Chun-wei Ho, Sabato Marco Siniscalchi, Kai Li

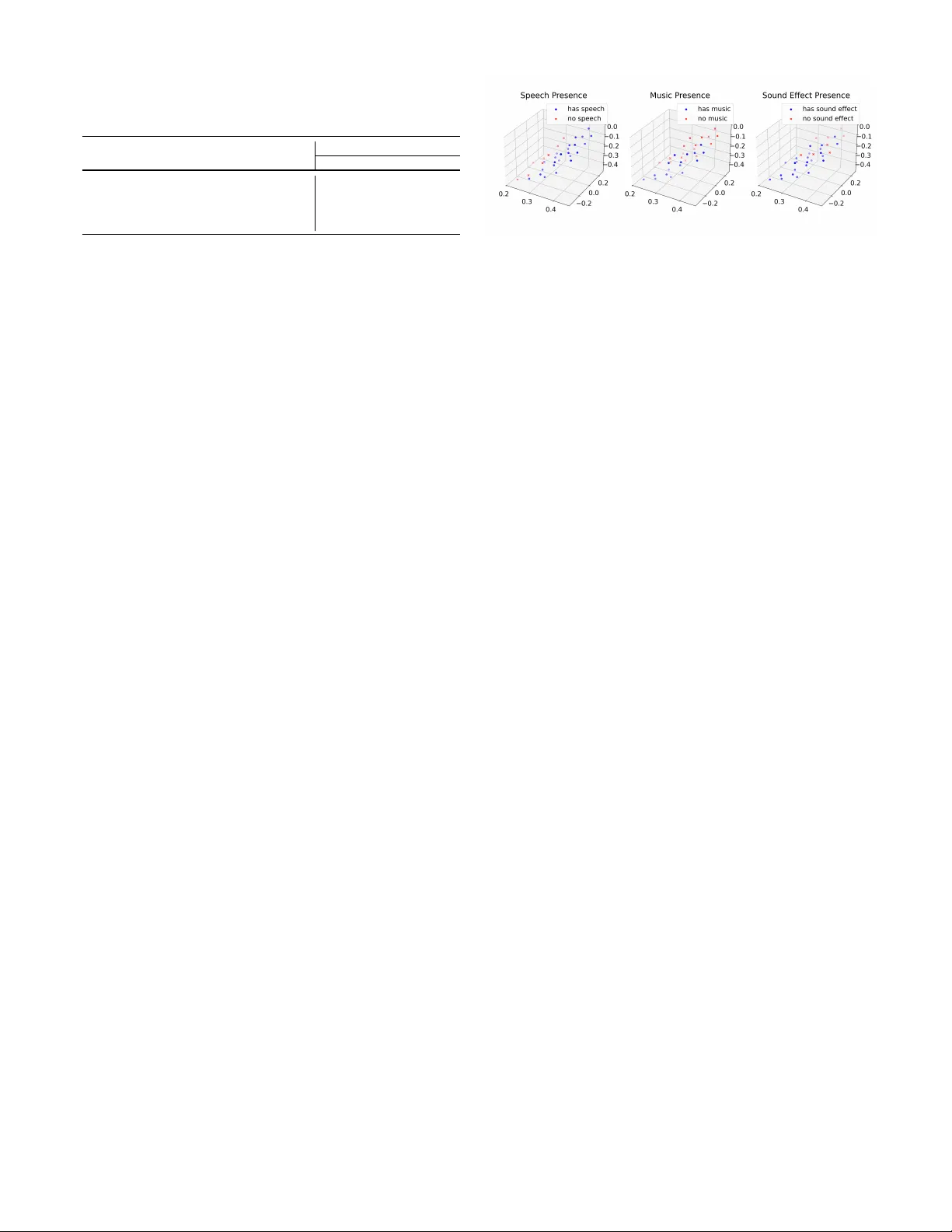

A Kno wledge-Dri v en Approach to Music Se gmentation, Music Source Separation and Cinematic Audio Source Separation Chun-wei Ho 1 , Sabato Marco Siniscalchi 2 , Kai Li 3 , and Chin-Hui Lee 1 1 Georgia Institute of T echnology , USA 2 Uni versity of P alermo, Italy 3 Dolby Laboratories, China Abstract —W e propose a kno wledge-driven, model-based ap- proach to segmenting audio into single-category and mixed- category chunks with applications to source separation. ”Knowl- edge” her e denotes inf ormation associated with the data, such as music scores. ”Model” here r efers to tool that can be used for audio segmentation and recognition, such as hidden Markov models. In contrast to con ventional learning that often relies on annotated data with given segment categories and their corresponding boundaries to guide the learning process, the proposed framework does not depend on any pr e-segmented training data and learns directly from the input audio and its related knowledge sources to build all necessary models autonomously . Evaluation on simulation data shows that score- guided learning achiev es very good music segmentation and separation results. T ested on movie track data for cinematic audio source separation also shows that utilizing sound category knowledge achie ves better separation results than those obtained with data-driven techniques without using such information. Index T erms —Music Segmentation, Music Source Separation, Cinematic A udio Source Separation, Sound Demixing, HMM I . I N T R O D U C T I O N Music segmentation is a task of change point detection consisting of finding temporal boundaries of meaningful ev ents, such as instrumentation, tempo, harmony , dynamics and other musical characteristics, in continuous audio streams. This problem plays a crucial role in various downstream applications, including music source separation [1], music transcription [2], remixing [3], and enhancement [4], etc. Segmentation is usually accomplished by signal-based tech- niques. For example, an energy-based data filtering procedure was proposed in [5] using a pre-trained source separation model to generate a preliminary separation output of single- and mixed-instrument segments. Next a signal-to-noise ratio (SNR) between the mixture and the preliminary separation output is calculated. Finally , a chosen target threshold and a selected perturbation threshold are used to categorize all output segments into three classes: pseudo-target, pseudo- perturbation, and pseudo-mixture. It’ s worth mentioning that this method was used when training a widely used separation model, namely Band-split RNN (BSRNN) [5], on unlabeled data. In [6], the authors instead proposed an approach based on nov elty detection, marking the transition between two subsequent structural parts exhibiting different properties that can be measured by self-similarity matrices (SSM) [6]. A similar problem to music segmentation is to segment continuous speech into phonemes to build models for auto- matic speech recognition (ASR) [7]. Hidden Markov model (HMM) [8] is a widely-used mechanism because of its capability to simultaneously capture spectral and temporal variations in speech. Giv en a set of utterances and their corresponding unit transcriptions, initial models can first be established. These models can then be used to iterativ ely segment continuous speech into modeling units according to the given transcriptions. This is often accomplished with V iterbi decoding [8], and this process is kno wn as ”forced alignment” by forcing the labels of the se gment sequence to follow the giv en sequence of the units, e.g., words or phonemes. The gi ven sequence of units can also be defined as a sort of “kno wledge”, which helps the segmentation process. In music, we do not have transcriptions as in speech, b ut music scores can serve as an equiv alent source of ”knowl- edge” [9], [10]. Therefore, in this paper , we propose a knowledge-dri ven frame work for music segmentation. In tradi- tional supervised learning, models are trained using annotated data sets, where the desired outputs, e.g., segment boundaries for meaningful ev ents such as instrumentation and tempo in music, are explicitly provided by human experts. This anno- tated data serves as ground truth to guide the learning process. In contrast, the proposed knowledge-dri ven approach does not rely on any externally pre-segmented training data to function. This allo ws models to train on a more realistic scenario when segment boundaries are not av ailable. W e test our proposed knowledge-dri ven approach mainly on simulation data due to an av ailability of built-in information about segment labels and their boundaries for detailed ev aluations. First we ev aluate mu- sic ev ent classification after segmentation. Next we check the effecti veness of score-guided HMM-based alignment to e xtract single-instrument segments from continuous audio streams. After performing knowledge-dri ven segmentation, we in ves- tigate two potential applications: (i) Music source separation (MSS) using the simulated dataset described abov e, (ii) Cine- matic source separation (CASS). Both tasks can be viewed as knowledge-dri ven separation methods. Compared to the aforementioned scenario using simulated segmentation music data, cinematic audio processing (including segmentation [11] and separation [12]) deals with more realistic and di verse audio source, including speech, music, environmental sounds, and sound ef fects. These data typically originate from TV shows or movie soundtracks and have important applications in the film and television industry . For e xample, frame level deep- learning-based methods, such as TVSM [11], have recently been proposed for speech and music segmentation in TV shows. Moreov er , the 2023 Sound Demixing Challenge [12] (SDX23) - also known as Divide and Remaster , DnR-v2, focused on separating speech, music, and sound effects. DnR- v3 [13] and DnR-nonv erbal [12] later extended DnR-v2. W e compare music separation performances with widely used music separation tool packages, e.g., Demucs [14] and BSRNN [5]. Finally , we ev aluate CASS performances on DNR-v2 and DNR-non verbal datasets using SepRe- former [15]. Our preliminary MSS results show the method’ s effecti veness on simulated data, and the CASS results achie ve state-of-the-art performance on the DNR-nonv erbal dataset. I I . R E L A T E D W O R K In the past decade, music segmentation research has shifted from feature-engineered [16] and probabilistic approaches [17] tow ard deep, data-driven models capable of capturing both local musical cues and long-range structure. Early deep learning efforts emplo yed con v olutional [18] and recurrent neural networks [19] to learn timbral and temporal patterns directly from spectrograms, improving boundary detection ov er traditional self-similarity [20] and nov elty-curve [21] methods. Subsequent work incorporated attention mechanisms and transformer architectures [22], which model global mu- sical form and repetition more effecti vely than RNN-based systems. Parallel advances in representation learning such as self-supervised learning [23], [24] and contrasti ve learn- ing [25] ha ve further strengthened segmentation performance: Representations deri ved from pretrained audio models robust high-lev el features that generalize across genres and record- ing conditions with minimal task-specific supervision. These systems combine the pretrained embeddings with downstream classifiers to jointly capture harmonic changes, timbral shifts, and long-range repetitions in various music recordings. Segmentation can assist in separation. One approach is to jointly perform segmentation and separation [26], allo wing models to exploit temporal structural information and improve the consistency of separated sources ov er time. Alternativ ely , segmentation results can be used for data selection: for in- stance, in BSRNN [5], unlabelled audio was first se gmented into single-instrument regions, which were then used for semi-supervised training, effecti vely increasing the quantity of usable training data while maintaining instrument-specific purity . While segmentation boundaries are often a vailable in these tasks, weakly supervised methods [27] hav e also been proposed to handle cases where segment labels exist but temporal information is not provided. A dif ferent approach in volves incorporating prior kno wl- edge, such as musical scores, instrument types, or even infor - mation about the performing artists, into building separation models. For e xample, score-informed methods [28] using information from musical scores, e.g., type of instrument [29] and pitch [30], can help associate each separated source with its corresponding instrument. This form of kno wledge-dri ven learning will be address later in the paper . Cinematic Audio Source Separation (CASS) aims to decom- pose mixed cinematic audio into canonical stems, including dialogue (speech), music, and sound effects. While CASS differs from MSS in sev eral aspects, certain MSS techniques can be directly applied to CASS. For instance, Solovyev et al. [31] experimented Demucs4 HT , an architecture which was pre-trained on MSS and fine-tuned on CASS. Another example is BandIt [32], a BSRNN-inspired architecture that achiev es the state-of-the-art on the DnR-v2 dataset. Other data- driv en MSS methods, such as CrossNetunmix (XUMX) [33] architecture, are also proven to be effecti ve [34] on CASS. One key dif ference between cinematic audio and music data is that the former typically lacks well-structured scores, such as MIDI files. Fortunately , in the recent Sound Demixing Challenge [12], the dataset includes se gmentation boundaries. Recently , Hung et al. [11] proposed a speech and music segmentation model that can be used to pseudo-label cine- matic audio. These segmentation boundaries can still serve as valuable kno wledge to assist downstream tasks, such as source separation, despite being less informative than music scores. I I I . P R O P O S E D K N O W L E D G E - D R I V E N F R A M E W O R K T raining a set of segmentation models typically requires a collection of single-instrument data. This can be accomplished by detecting these segments from continuous audio. T o this end, an HMM-based framework is proposed. A. Model-Based Se gment Detection and Selection As in HMM-based recognition, not only the boundaries will vary , but also the recognized sequence will contain insertion, deletion, and substitution errors [7], [8]. In contrast to ASR modeling, in which an abundance of transcribed utterances is a vailable for building high-performance models, a major difficulty in music segmentation is a lack of single-instrument segments to train high-accurac y music classification models. Pre-trained instrument HMMs need to be initialized and em- ployed to identify the sequence of instrument se gments. They can also be re-aligned and optimized as in the ASR modeling- building process. Most recordings come with music scores that can be processed with a similar procedure - using HMMs for forced alignment. In order to detect and extract as man y single- instrument segments as possible for model training with real- world audio, the knowledge source, such as instruments used in the music audio, are employed to improve segmentation quality with forced alignments. Both forced alignment and recognition pro vide information about the active instrument segments and their corresponding boundaries, which serve as a valuable guidance for mixture- music simulation and separation model training. Other recog- nition models, such as DNN-HMMs [35], [36] and connec- tionist temporal classification (CTC) [37] can also be adopted to produce alignments during music segmentation. pro jected knowledge, dim=3 . . . frame-level mixture feature dim=256 . . . frame-level one-hot voice activities dim= N =num source . . . . . . . . . . . . . . . . . . · · · concat concat Separator h 0 h 1 h 2 h 3 h 4 x 0 y 0 x 1 y 1 x 2 y 2 x 3 y 3 x 4 y 4 separated source 1 separated source 2 . . . separated source N Fig. 1. A knowledge-dri ven cinematic audio source separation system. The separator can be an RNN, a Transformer , or any separation model. It recei ves the mixture TF spectrogram concatenated with the projected kno wledge and produces the separated spectrograms. B. Music Sour ce Separation T rained with Se gmented Data In real-world music recordings, separated tracks for in- dividual instruments are often unav ailable due to recording constraints and copyright restrictions. W e address this by introducing a knowledge-dri ven learning framework. In this framew ork, all training data are constructed from identified single-instrument segments in the mixture recording. F or each instrument, we first extract its corresponding single instrument segments using forced alignment. These segments are then ran- domly scaled, cropped, and mixed to form “pseudo mixtures”. The separation model is trained on the pseudo mixtures and predicts the original clean segments. W e ev aluate the proposed knowledge-dri ven separation ap- proach in two settings: training from scratch, and fine-tuning. In former setting, the method eliminates the need for any separated tracks. In the latter , we expect the kno wledge-dri ven strategy to provide additional performance gains for the model. C. Se gment-informed CASS An alternative use of segmentation knowledge is to incorpo- rate the segment information as an auxiliary input. A similar approach has been applied to singing voice separation [29]. W e adopt the same idea for CASS to inv estigate whether the additional knowledge can further improve performance. As depicted in Figure 1, our proposed CASS system takes as input a frame-lev el feature (e.g., a T -F spectrogram) and a set of frame-le vel voice activity indicators representing which sound sources are acti ve. Then, the v oice activity indicators are then projected into a lo w-dimensional embedding. Its dimension k is typically the number of sound categories to be separated (dim= k =3 in this case). It is then concatenated with the feature vector (dim=256 here) at each frame to form the separator input. The separator can be an RNN or a T ransformer . It processes the concatenated vectors and finally outputs the separated spectrograms corresponding to each target source category . I V . E X P E R I M E N T A L S E T U P F O R S E G M E N T A I T O N , S E PAR AT I O N & C A S S A. Data Sets and Pr eprocessing 1) Music Se gmentation and Separation: W e conduct all music segmentation and separation experiments using Slakh2100 [38], which consists of 2100 mixed tracks. T o simplify the experiments, only piano and bass tracks are used. The data set is divided into three parts here: (i) Pre-train set A: a 2-instrument v ersion of the original Slakh2100 [38] train set, containing 89 hours of data; (ii) T rain set B (with scores): a subset (9 hours) of the 2 instrument version of the original validation set (45 hours) but annotated with music scores and segment boundaries; and (iii) T est set: a 2-instrument version of the original test set containing 11 hours of data. T rain set B is created in order to ev aluate the correctness of the segmentation. In this set, the audio range from 12 to 15 seconds, consisting of solo piano, solo bass, their mixtures, and silence, with 3 to 5 seconds each. The music scores are provided as the “knowledge”. The segmentation boundary are giv en as the ev aluation target. This set also serves as a train set for the knowledge-dri ven MSS. 2) Cinematic A udio Source Separation: For cinematic au- dio source separation (CASS), we ha ve tested our proposed framew ork on more realistic audio and obtained very encour- aging results. In some cases, it is required to separate mixed- audio into separated sound se gments, each containing a single sound source. Since category information is usually a vailable, our proposed knowledge-dri ven framework, as shown in Fig- ure 1, can be utilized to improve performances obtained with con ventional purely data-driv en techniques. For our experiments, we use DNR-v2, a dataset employed in the 2023 Cinematic Sound Demixing Challenge [12], for ev aluation. Additionally , DNR-nonv erbal [39], an extension to DNR-v2 [12], are used for training and ev aluation. DNR-v2 was created by randomly cropping (guided by V AD results) and scaling audio from LibriSpeech [40], FSD50K [41], and FMA [42], and mixing them at random start times. Each clip is labeled with voice activities (i.e., start/end times for each category), speech transcription, instrument type, and sound effect (SFX). DNR-nonv erbal extends DNR-v2 by incorporating non verbal v ocalizations from FSD50K (e xcluded in the original DNR-v2 set), including screaming, shouting, whispering, crying, sobbing and sighing, into distinct isolated categories to form sound mixtures. B. HMM-based Music Alignment Results For the proposed HMM-based detection and se gmentation framew ork, we use 39-dim MFCC features [8], including the first- and second-order deriv atives extracted with a 25 ms window and a 10 ms shift. The music score is provided in a MIDI format. W e parse each MIDI file and classify segments into one of four units: piano, bass, mixture, or silence. W e then utilize Hidden Markov Model T oolkit (HTK) [43] to build and HMM for each unit. T o a void resulting in short segments, we use 300-state HMMs, where each state is characterized via a Gaussian mixture model (GMM) [8], [44]. T ABLE I M E AN A B SO L U T E E R RO R ( M AE ) W IT H S C OR E - I NF O R ME D H M M- B AS E D F O RC E D A L I GN M E N T . T H E OV E R AL L M A E I S 9 . 03 F R AM E S ( W I T H 1 0 M S F O R E AC H F R A ME ) . D E T EC T I N G B OU N DA R I E S B ET W E E N M I XT U R E A N D E I TH E R P I A NO O R B AS S S E GM E N TS I S Q U I TE C H AL L E N GI N G . Forced alignment segment boundary MAE (nframe) Previous segment type Silence Piano Mixture Bass Next segment type Silence - 2.0 0.7 2.4 Piano 0.9 - 24.6 7.2 Mixture 2.4 29.3 - 14.8 Bass 2.5 6.4 17.1 - T ABLE II T H E C O NF U S IO N M A T R I X F O R H M M B A SE D R E C O GN I T I ON I N T H E A B SE N C E O F M U S IC S C OR E S . T H E M O D EL T E ND S TO C O N FU S E P I A NO A N D M I XT U R E S E GM E N T S M O RE O F TE N T H AN OT H E R C L AS S E S . Confusion Matrix of the HMM segment recognition Reference segment type Silence Piano Mixture Bass Recognized segment type Silence 2000 3 1 16 Piano 0 1742 86 14 Mixture 0 247 1834 23 Bass 0 1 79 1947 All the training and ev aluation are performed on train set B. Our HMM-based segmentation achieves a good result, with an av erage MAE of 9.03 frames when compared to the reference boundaries. As summarized in T able I, we categorize bound- aries into 12 types based on the instrument transitions. Among these types, boundaries between silence and any other segment type are the easiest for the HMMs to identify . In contrast, boundaries between mixture segments and either piano or bass segments are more challenging. Our training scheme does not require any hand-craft segmentation boundary . In fact, it only requires music scores, which are usually av ailable. In the absence of music scores, HMM-based recognition can be used to identify instrument segments, and the resulting confusion matrix is sho wn in T able II, with an overall accurac y of 94.0%. As indicated, identifying silence and bass segments is easy . Howe ver , it tends to confuse piano with mixture seg- ments more frequently than in other cases. W ith the absence of music scores, this types of segment confusions will provide false information for the next stage separation, which wouldn’ t happen when music scores are provided. C. Music Sour ce Separation Results BSRNN [5] and HTDemucs [45], are adopted as our bench- mark separation models. At a sampling rate of 44,100 Hz, STFT spectrograms, computed with a Hamming window size of 4096 samples, and a hop size of 1024 samples. T o prepare the “pseudo mixtures” mentioned in section III-B, we first remove all detected single-instrument segments shorter than 3 seconds. All remaining segments are next randomly cropped to a fixed duration of 3 seconds. The piano solo (target) and bass solo (perturbation) segments are then randomly mixed to create training examples. W e apply data augmentation techniques following the approach described T ABLE III T H E E V A L UA T I O N R E SU LT S O F M S S . K N OW L E DG E - D RI V E N I N D IC A T E S T H E U SA G E O F A M U S I C S C OR E T O R UN T H E F O RC E D A L I G NM E N T O N T R AI N S ET B , A N D U S E T H E F O RC E D A L I G NM E N T R E SU LT S T O S EL E C T DAT A T O T R AI N / FI NE - T U NE T H E S E P A R A T IO N M O DE L . Scenario Model SDR (dB) Pre-train (PT) BSRNN 15.06 Knowledge-dri ven from-scratch (KD-FS) BSRNN 17.89 Knowledge-dri ven fine-tune (KD-FT) BSRNN 18.52 KD-FT with reference alignments BSRNN 22.30 Pre-train (PT) HTDemucs 14.34 Knowledge-dri ven from-scratch (KD-FS) HTDemucs 17.84 Knowledge-dri ven fine-tune (KD-FT) HTDemucs 17.90 KD-FT with reference alignments HTDemucs 18.36 in [46], including random swapping of left and right channels for each instrument, random scaling of amplitudes within a range of ± 10 dB, and a random dropping rate of 10% of either tar get or perturbation segments to simulate cases where only one source is present. W e ev aluate SDR [47] results, in dB, in three training scenarios, namely: (1) pre-train (PT) on train set A; (2) Knowledge driv en training from scratch (KD-FS) on the train set B; (3) Knowledge-dri ven fine-tuning (KD-FT) from the PT model using train set B. Results are giv en in T able III. T able III is organized into two sections, each correspond- ing to a widely used MSS model: BSRNN [5] and HTDe- mucs [45]. In both sections, KD-FS outperforms PT even with substantially less training data. This indicates that carefully selecting training data based on “kno wledge” can enhance performance, and a large dataset is not necessary if the segmentation model (HMM in our case) is appropriate. Fur- thermore, in scenarios where a large amount of pretraining data is av ailable, the KD-FT setup can be applied. Here, knowledge-based data selection can further boost the PT model’ s performance, achieving better results than KD-FS due to training on a larger dataset. This trend is consistent for both BSRNN and HTDemucs, suggesting that the performance gains stem primarily from the selected data rather than the model architecture. The last row of T able IV presents the scenario in which the reference segmentation is used, representing the upper bound of our knowledge-dri ven approach under perfect boundary detection. This indicates that the method’ s performance could improv e further if the segmentation quality itself is enhanced D. Cinematic AudioSour ce Separation Results SepReformer [15] is adopted as separator . It is a deep spectrum regressor [48] with a separation encoder and a reconstruction decoder arranged in a temporally multi-scale T ransformer U-Net. Separation is performed at the U-Net’ s bottleneck. The decoder is shared across all separated sources. T able IV summarizes the results on DNR-non verbal. W e compared performances with and without the inclusion of voice activity information. The model with voice activity information (4th row) significantly outperforms the v ersion without it (3rd row) in all categories. Moreover , our model T ABLE IV S D R E V A L UATI O N R E S U L T S O N D N R- N O N VE R BA L W I T H A N D W I TH O U T VO I C E AC T I VI T Y I N F O RM A T I ON . Method SDR (dB) Speech Music SFX BSRNN trained on DNR-v2 [39] 5.62 4.33 2.54 BSRNN trained on DNR-nonv erbal [39] 9.30 4.79 5.23 SepReformer trained w/o voice acti vity 7.68 2.94 2.41 SepReformer trained w/ voice acti vity 11.03 5.12 6.67 surpasses the current state-of-the-art on DNR-non verbal (2nd row), demonstrating the strong potential of our approach. The key comparison lies between the third and fourth ro ws. This suggests that, for cinematic mixtures with diverse and ov erlapping acoustic ev ents, conv entional separation models may have difficulties to correctly associate spectral energy to appropriate source without additional context. In contrast, when we integrate the projected voice acti vity representation (fourth row), the model achieves lar ge and consistent im- prov ements across all three categories. The gains are most pronounced for speech and sound effects, where we observe improv ements of 3.35 and 4.26 dBs, respectiv ely , ov er the no- activity variant. These improv ements also surpass the state-of- the-art BSRNN baseline [39], demonstrating the importance of explicitly conditioning the separator on high-level knowledge at each frame. This in turn suggests that the proposed source- related knowledge-dri ven framework helps the model better allocate acoustic energy to the correct outputs, particularly in complex cinematic scenes where multiple nonv erbal sound elements co-occur . Next, we examine the ef fectiv eness of utilizing category information through visualization with knowledge projection, referring to the bottom left portion of Figure 1. In the DNR- non verbal data set, the cate gory for each frame is labeled as speech, music, or sound ef fect. Speech is further di vided into dialog and non verbal and sound effects are split into foreground and background. Thus, each frame can contain dialog, non verbal, music, foreground SFX, background SFX, or any arbitrary mixture of them, resulting in 2 5 = 32 possible combinations. W e iterate ov er all 32 possible combinations to construct 32 seven-dimensional binary voice activity vectors. Each dimension corresponds to one of the following cate- gories: speech, dialogue, non-verbal, music, sound effect (sfx), foreground sfx, and background sfx, where 0 indicates the category is inactive and 1 indicates it is active. W e then feed all 32 voice activity vectors through the knowledge projector mentioned in Figure 1, and visualize the resulting projected 3-D representations and colored according to their category attributes. As shown in the left part of Figure 2, the resulting 3-D feature projection successfully groups those frames contain- ing speech from those without speech. A similar pattern is observed for music and sound effects shown in the middle and right parts of Figure 2, respecti vely . Moreover , the clusters associated with speech dif fer noticeably from those associated Fig. 2. Grouping of the 3-D knowledge projection for all possible voice activities in the DNR-nonv erbal set for (1) left: speech vs. non-speech; (2) middle: with vs. w/o music; and (3) right: with vs. w/o sound effect. with music and sound effects, whereas the clustering patterns for music and sound ef fects are somewhat similar . This sug- gests that music and sound ef fects are less distinguishable from each other when compared to the strong contrast between speech and the other two categories. In addition, to illustrate the separation performance, we in- clude a demo slide as supplemental material in the submission. In this slide, we select one example from the DNR-nonv erbal set, apply our trained kno wledge-driv en model, and present all three separated components, speech (dialog and non verbal), sound effects (foreground and background), and music. V . S U M M A RY A N D F U T U R E W O R K So far , we hav e e v aluated our proposed frame work on both MSS and CASS. Our preliminary results are quite encouraging for the proposed kno wledge-driven learning paradigm. In fact, we hav e verified that the use of music scores helps detecting single-instrument segments. The detected single instrument segments can further facilitate the training process, thereby enhancing separation performance. In addition to training data selection, we found that pro- viding segmentation boundaries as part of the model input significantly improv es performance. Moreover , the learned knowledge projection re veals clear phonetic similarities among different sound categories. In future work, we intend to experiment on real-world continuous audio recordings. W e need to improve HMM- based detection of single-instrument segments with DNN- HMMs [35]. When the a vailable audio duration is v ery limited, e.g., in a single song of less than 5 minutes in length, pre- trained models are required. W e will inv estigate ways to collect music materials with instrument data close to the target audio to learn appropriate pre-trained models. Once pre- trained models are used, knowledge transfer to target audio will become a key challenge. Recent adaptation techniques, applied to attention mechanisms [49] and latent v ariables [50], can also be studied. R E F E R E N C E S [1] E. Manilow , P . Seetharaman, and J. Salamon, “Open source tools & data for music source separation, ” source-separation.github.io/tutorial, 2020. [2] M. D. Plumbley , S. A. Abdallah, J. P . Bello, M. E. Davies, G. Monti, and M. B. Sandler, “ Automatic music transcription and audio source separation, ” Cybern. Syst. , vol. 33, no. 6, pp. 603–627, 2002. [3] J. Pons, J. Janer, T . Rode, and W . Nogueira, “Remixing music using source separation algorithms to improve the musical experience of cochlear implant users, ” J. Acoust. Soc. Am. , vol. 140, no. 6, pp. 4338– 4349, 2016. [4] Noah Schaffer , Boaz Cogan, Ethan Manilow , Max Morrison, Prem Seetharaman, and Bryan Pardo, “Music separation enhancement with generativ e modeling, ” in ISMIR , 2022, pp. 4–8. [5] Y . Luo and J. Y u, “Music source separation with band-split RNN, ” IEEE/ACM Tr ans. Audio, Speech, Lang. Proc. , vol. 31, pp. 1893–1901, 2023. [6] J. Foote, “ Automatic audio segmentation using a measure of audio novelty , ” in Proc. IEEE Int. Conf. Multimedia Expo (ICME) , 2000, pp. 452–455. [7] L. Rabiner and B.-H. Juang, Fundamentals of speech reco gnition , Prentice-Hall, Inc., USA, 1993. [8] L. R. Rabiner , “ A tutorial on hidden markov models and selected applications in speech recognition, ” in Pr oc. IEEE , 1989, vol. 77, pp. 257–286. [9] M. Edwards, J. Kitchen, N. Moran, Z. Moir, and R. W orth, Fundamen- tals of Music Theory , The Uni versity of Edinburgh, UK, 2021. [10] B. Boone, Music Theory 101: Fr om Ke ys and Scales to Rhythm and Melody , an Essential Primer of the Basics of Music Theory , Adam Media, USA, 2017. [11] Y .-N. Hung, C.-W . W u, I. Orife, A. Hipple, W . W olcott, and A. Lerch, “ A large tv dataset for speech and music activity detection, ” EURASIP J. Audio, Speech, Music Pr oc. , vol. 2022, no. 1, pp. 21, 2022. [12] Minseok Kim, Jun Hyung Lee, and Soonyoung Jung, “Sound demixing challenge 2023 music demixing track technical report: Tfc-tdf-unet v3, ” arXiv pr eprint:2306.09382 , 2023. [13] K. N. W atcharasupat, C.-W . Wu, and I. Orife, “Remastering divide and remaster: A cinematic audio source separation dataset with multilingual support, ” in 2024 IEEE 5th Int. Symp. Internet of Sounds (IS2) . IEEE, 2024, pp. 1–10. [14] A. D ´ efossez, N. Usunier , L. Bottou, and F . Bach, “Demucs: Deep extractor for music sources with extra unlabeled data remixed, ” arXiv pr eprint:1909.01174 , 2019. [15] U.-H. Shin, S. Lee, T . Kim, and H.-M. Park, “Separate and reconstruct: Asymmetric encoder-decoder for speech separation, ” Adv . Neural Inf. Pr oc. Syst. , vol. 37, pp. 52215–52240, 2024. [16] O. Nieto and J. P . Bello, “Systematic exploration of computational music structure research, ” in ISMIR , 2016, pp. 547–553. [17] C. E. C. Chac ´ on, “Probabilistic segmentation of musical sequences, ” in Math. Comput. Music, 5th Int. Conf., MCM 2015, London, UK, June 22-25, 2015, Proc. Springer, 2015, vol. 9110, p. 323. [18] Y . Guan, J. Zhao, Y . Qiu, Z. Zhang, and G. Xia, “Melodic phrase segmentation by deep neural networks, ” arXiv pr eprint:1811.05688 , 2018. [19] T . O’Brien and I. Rom ´ an, “ A recurrent neural network for musical structure processing and expectation, ” 2016. [20] M. Goto, “ A chorus-section detecting method for musical audio signals, ” in IEEEICASSP 2003 . IEEE, 2003, vol. 5, pp. V –437. [21] J. Foote, “ Automatic audio segmentation using a measure of audio novelty , ” in IEEE ICMEn2000 . IEEE, 2000, vol. 1, pp. 452–455. [22] G. W u, S. Liu, and X. Fan, “The power of fragmentation: a hierar- chical transformer model for structural segmentation in symbolic music generation, ” IEEE/ACM T rans. Audio, Speech, Lang. Pr oc. , vol. 31, pp. 1409–1420, 2023. [23] C. Hao, R. Y uan, J. Y ao, Q. Deng, X. Bai, W . Xue, and L. Xie, “Songformer: Scaling music structure analysis with heterogeneous su- pervision, ” arXiv preprint:2510.02797 , 2025. [24] M. Buisson, B. Mcfee, S. Essid, and H.-C. Crayencour, “Learning multi- lev el representations for hierarchical music structure analysis, ” in ISMIR , 2022. [25] M. Buisson, B. Mcfee, S. Essid, and H.-C. Crayencour, “ A repetition- based triplet mining approach for music segmentation, ” in ISMIR , 2023. [26] P . Seetharaman and B. Pardo, “Simultaneous separation and segmenta- tion in layered music, ” in ISMIR , 2016, pp. 495–501. [27] Q. K ong, Y . W ang, X. Song, Y . Cao, W . W ang, and M. D. Plumbley , “Source separation with weakly labelled data: An approach to compu- tational auditory scene analysis, ” in IEEE ICASSP 2020 . IEEE, 2020, pp. 101–105. [28] S. Ewert, B. Pardo, M. Muller , and M. D. Plumbley , “Score-informed source separation for musical audio recordings: An ov erview , ” IEEE Signal Pr ocessing Magazine , vol. 31, no. 3, pp. 116–124, 2014. [29] R. V . Swaminathan and A. Lerch, “Improving singing voice separation using attribute-a ware deep network, ” in 2019 Int. W orkshop Multilayer Music Repr esentation and Processing (MMRP) , 2019, pp. 60–65. [30] Y . Shi, “Correlations of pitches in music, ” F ractals , vol. 4, no. 4, pp. 547–553, 1996. [31] R. Solovyev , A. Stempkovskiy , and T . Habrusev a, “Benchmarks and leaderboards for sound demixing tasks, ” arXiv preprint:2305.07489 , 2023. [32] K. N. W atcharasupat, C.-W . W u, Y . Ding, I. Orife, A. J. Hipple, P . A. W illiams, S. Kramer , A. Lerch, and W . W olcott, “ A generalized bandsplit neural network for cinematic audio source separation, ” IEEE Open Journal of Signal Pr ocessing , vol. 5, pp. 73–81, 2024. [33] R. Sawata, S. Uhlich, S. T akahashi, and Y . Mitsufuji, “ All for one and one for all: Improving music separation by bridging networks, ” in IEEE ICASSP 2021 , 2021, pp. 51–55. [34] D. Petermann, G. W ichern, Z.-Q. W ang, and J. L. Roux, “The cocktail fork problem: Three-stem audio separation for real-world soundtracks, ” in IEEE ICASSP , 2022, pp. 526–530. [35] H. A. Bourlard and N. Morgan, Connectionist Speech Recognition: A Hybrid Appr oach , Kluwer Academic Publishers, USA, 1993. [36] G. E. Dahl, D. Y u, L. Deng, and A. Acero, “Context-dependent pre- trained deep neural networks for large-vocab ulary speech recognition, ” IEEE/ACM Tr ans. Audio, Speech, Lang. Pr oc. , vol. 20, no. 1, pp. 30–42, 2012. [37] A. Graves, S. Fern ´ andez, F . Gomez, and J. Schmidhuber , “Connection- ist temporal classification: labelling unsegmented sequence data with recurrent neural networks, ” in ICML , 2006, pp. 369–376. [38] E. Manilow , G. Wichern, P . Seetharaman, and J. Le Roux, “Cutting music source separation some slakh: A dataset to study the impact of training data quality and quantity , ” in W ASP AA , 2019, pp. 45–49. [39] T . Hasumi and Y . Fujita, “Dnr-non verbal: Cinematic audio source separation dataset containing non-verbal sounds, ” arXiv pr eprint:2506.02499 , 2025. [40] V . Panayotov , G. Chen, D. Povey , and S. Khudanpur , “Librispeech: An asr corpus based on public domain audio books, ” in ICASSP . IEEE, 2015, pp. 5206–5210. [41] E. Fonseca, X. Favory , J. Pons, F . Font, and X. Serra, “Fsd50k: An open dataset of human-labeled sound events, ” IEEE/ACM T rans. Audio, Speech, Lang. Pr oc. , vol. 30, pp. 829–852, 2021. [42] M. Def ferrard, K. Benzi, P . V andergheynst, and X. Bresson, “Fma: A dataset for music analysis, ” arXiv pr eprint:1612.01840 , 2016. [43] S. Y oung, G. Evermann, M. Gales, T . Hain, D. Kershaw , X. Liu, G. Moore, J. Odell, D. Ollason, D. Povey , V . V altchev , and P . W oodland, “The htk book, ” 1999. [44] P . Sprechmann, P . Cancela, and G. Sapiro, “Gaussian mixture models for score-informed instrument separation, ” in ICASSP , 2012, pp. 49–52. [45] S. Rouard, F . Massa, and A. D ´ efossez, “Hybrid transformers for music source separation, ” in ICASSP , 2023. [46] S. Uhlich, M. Porcu, F . Giron, M. Enenkl, T . Kemp, N. T akahashi, and Y . Mitsufuji, “Improving music source separation based on deep neural networks through data augmentation and network blending, ” in ICASSP , 2017, pp. 261–265. [47] E. V incent, R. Gribon val, and C. Fevotte, “Performance measurement in blind audio source separation, ” IEEE T rans. Audio, Speech, Lang. Pr oc. , vol. 14, no. 4, pp. 1462–1469, 2006. [48] J. Du, Y . Tu, L.-R. Dai, and C.-H. Lee, “ A regression approach to single- channel speech separation via high-resolution deep neural networks, ” IEEE/ACM T rans. Audio, Speech, Lang. Pr oc. , vol. 24, no. 8, pp. 1424– 1437, 2016. [49] C. Subakan, M. Rav anelli, S. Cornell, M. Bronzi, and J. Zhong, “ Attention is all you need in speech separation, ” in ICASSP , 2021, pp. 261–265. [50] H. Hu, M. Siniscalchi, C.-H. Y ang, and C.-H. Lee, “V ariational bayesian learning for deep latent variables for acoustic knowledge transfer, ” IEEE/ACM T rans. Audio, Speech, Lang. Proc. , vol. 33, no. 1, pp. 719– 730, 2025.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment