Automated Disentangling Analysis of Skin Colour for Lesion Images

Machine-learning models applied to skin images often have degraded performance when the skin colour captured in images (SCCI) differs between training and deployment. These discrepancies arise from a combination of entangled environmental factors (e.…

Authors: Wenbo Yang, Eman Rezk, Walaa M. Moursi, Zhou Wang

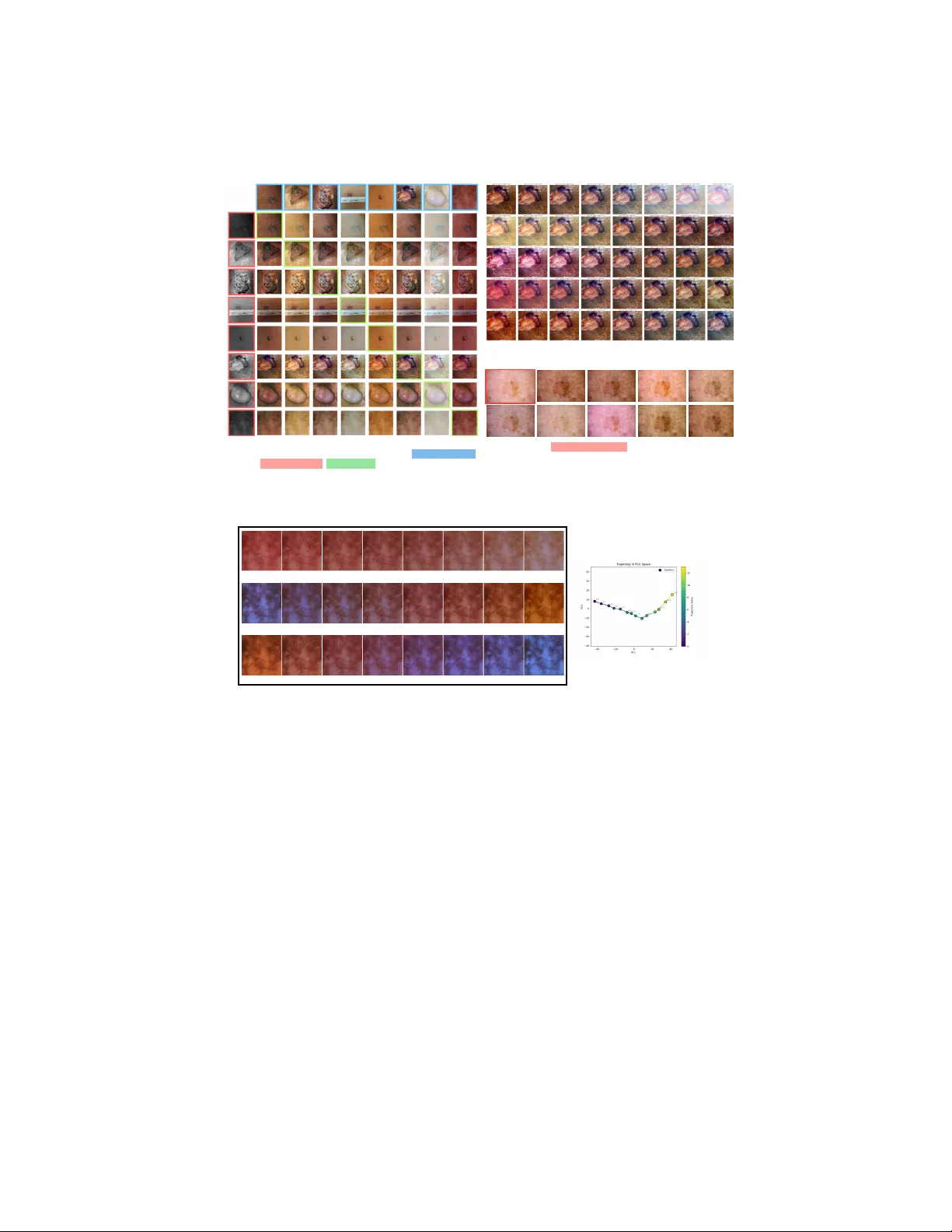

Wenbo Yang [0009-0000-7528-1925], Eman Rezk [0000-0002-2531-0799], Walaa M. Moursi [0000-0002-0113-9309], and Zhou Wang [0000-0003-4413-4441]

{w243yang,e2rezk,walaa.moursi,z70wang}@uwaterloo.ca

University of Waterloo, Waterloo, ON, Canada, N2L 3G1

Abstract. Machine-learning models applied to skin images often have degraded performance when the skin colour captured in images (SCCI) differs between training and deployment. These discrepancies arise from a combination of entangled environmental factors (e.g., illumination, camera settings) and intrinsic factors (e.g., skin tone) that cannot be accurately described by a single “skin tone” scalar, a simplification commonly adopted by prior work. To mitigate such colour mismatches, we propose a skin-colour disentangling framework that adapts disentanglement-by-compression to learn a structured, manipulable latent space for SCCI from unlabelled dermatology images. To prevent information leakage that hinders proper learning of dark colour features, we introduce a randomized, mostly monotonic decolourization mapping. To suppress unintended colour shifts of localized patterns (e.g., ink marks, scars) during colour manipulation, we further propose a geometry-aligned post-processing step. Together, these components enable faithful counterfactual editing that answers an essential question, “What would this skin condition look like under a different SCCI?”, as well as direct colour transfer between images and controlled traversal along physically meaningful directions (e.g., blood perfusion, camera white balance), which supports educational visualization of skin conditions under varying SCCI. We demonstrate that dataset-level augmentation and colour normalization based on our framework achieve competitive lesion classification performance.
Ultimately, our work promotes equitable diagnosis through creating diverse training datasets that include different skin tones and image-capturing conditions.

Keywords: Skin Colour Disentanglement · Counterfactual Image Synthesis · Data Augmentation

1 Introduction

Computer-aided dermatology diagnosis systems have improved significantly in recent years. However, similar to human experts, their performance degrades when training and deployment data exhibit mismatched skin colours captured in images (SCCI). Benmalek et al. [3] report accuracy gaps reaching 10%–30% between light and dark skin presentations. The mismatch of SCCI is caused by multiple entangled factors, including intrinsic subject properties (e.g., skin tone) and environmental factors (e.g., illumination, camera white balance, and other device settings) [10, 23].

Fig. 1. Our model is capable of changing the captured skin colour after training. (a) Results of transferring skin colour. Each non-diagonal cell shows the result of transferring the skin colour captured in the source image (top) to the target image (left). Diagonal cells equal the original image. (b) Results of varying individual latent dimensions. Each row corresponds to a different latent dimension. (c) Results of the target image (top-left) augmented with colour latent vectors randomly sampled from the latent space.

Fig. 2. Trajectories with physical meanings can be found in the latent space. (a) Results of shifting the colour latent of a test image through independently obtained trajectories corresponding to physical properties (panels: Skin Blood Perfusion, Camera White Balance, Colour Temperature of Illumination). (b) Trajectory of the colour embeddings for camera white balance (first 2 principal components).

Existing mitigation strategies for colour mismatch fall into two categories.
First, most recent works in dermatology focus on augmenting the training set with augmented images [1] or with partially or fully synthetic images [16, 8]. While these methods show promising performance improvements, they focus only on intrinsic skin-tone change and usually treat skin colour on individual images without a structured colour model, which offers limited control over the result and over distribution matching between datasets. The single-value scalar or categorical skin-tone descriptors (such as FST [7] and MST [15]) used by these methods have been shown to correlate very poorly with the skin colour shown in photos [10, 23], because they do not reflect other factors (including blood perfusion and environmental factors). Second, colour normalization aims to mitigate the mismatch by converting each image to a “standard” colour. While such methods have been widely used in histology [4, 24, 22], only a few have been proposed for skin images [12, 20, 19], possibly due to the more complex colour formation process. Currently, these methods focus on environmental factors without explicitly considering the intrinsic factors that affect the SCCI. Moreover, educators and future physicians need a tool for visualizing the same skin condition under different SCCI, which requires a representation that can be traversed in a controlled and interpretable way.

In this work, we propose an unsupervised skin-colour disentangling framework that treats SCCI as the outcome of multiple entangled factors. Enabled by recent applications of the information bottleneck principle [21] to low-level vision feature disentanglement [13, 25], our method adapts the disentanglement-by-compression framework of [25] and compresses skin colour into an organized latent space whose entries are approximately independent and can be individually adjusted.
Faithful disentanglement of SCCI poses unique challenges compared to [25]. First, to avoid shortcuts that leak skin-darkness and shading information and obstruct representation learning, we introduce a randomized, mostly monotonic decolourization mapping that generates the colourless input required by the framework. Second, to suppress the unintended colour shift of localized patterns (e.g., ink marks, scars), we propose a geometry-aligned post-processing step that selectively rejects unreliable changes.

Faithful, controlled manipulation in a latent space makes it possible to visualize a lesion under a different SCCI. After training, SCCI can be transferred directly from source images to targets (fig. 1(a)), and individual latent entries can be adjusted independently (fig. 1(b)). The proposed method supports health equity by allowing the training set to be adjusted for different populations. Dataset-level augmentation can be performed by sampling from a target distribution (fig. 1(c)), and data normalization can be performed by setting the colour embedding to a standard value. Physically meaningful trajectories can be identified and traversed in the latent space (fig. 2) for educational visualization.

In summary, the main contributions of this work are:

- An unsupervised model that answers the counterfactual question “What would the skin condition look like if the skin colour captured in the image were different?” via latent-space manipulation and transfer, enabled by the randomized decolourization module we propose.
- A geometry-aligned post-processing step that corrects unintended colour shifts, improving the faithfulness of the intended appearance transformation.
- Augmentation and normalization strategies for downstream tasks using the proposed SCCI modelling method.

Fig. 3. Architecture of the skin colour disentangling model. (a) Overall architecture of the skin colour disentangling network (labels: input coloured lesion image, colour encoding network, colour embedding, colourless image, colour synthesis network, reproduced coloured image). (b) Randomized decolourization (labels: input coloured lesion image, RGB components, randomized non-decreasing mapping, colourless image).

2 Methodology

2.1 Model Architecture for Perceived Skin Colour Modelling

Since perceived skin colour captured in images (SCCI) is influenced by multiple entangled factors (e.g., skin tone, illumination, and camera settings), we model it with a disentanglement-by-compression framework adapted from [25] (retaining most of its architecture). As shown in fig. 3(a), the colour encoding network $e$ maps an input image $\mathbf{y}$ to a low-bitrate colour embedding $\mathbf{e} = e(\mathbf{y})$. A colour synthesis network $f$ then reconstructs $\hat{\mathbf{y}} = f(\mathbf{x}, \mathbf{e})$ from $\mathbf{e}$ and a colourless image $\mathbf{x}$ that preserves the geometry of $\mathbf{y}$ but suppresses its colour. By constraining $\mathbf{e}$ to be just sufficient for faithful reconstruction, $\mathbf{e}$ is encouraged to retain primarily global SCCI information. At test time, replacing $\mathbf{x}$ with the colourless image derived from another image enables colour transfer, while editing or sampling entries of $\mathbf{e}$ enables controlled colour manipulation.

Decolourization. Unlike [25], which assumes a separately provided $\mathbf{x}$ for each $\mathbf{y}$, we propose a randomized decolourization module to generate $\mathbf{x}$ from $\mathbf{y}$. A fixed linear greyscale conversion can leak luminance cues correlated with skin darkness and shading, which weakens control over perceived darkness. We therefore use a randomized pixel-wise mapping $g_\alpha : [0,1]^3 \to [0,1]$ with fixed endpoints

$$g_\alpha(\mathbf{0}) = 0, \qquad g_\alpha(\mathbf{1}) = 1, \tag{1}$$

where $\alpha$ is resampled for each training image in each epoch, and $g_\alpha$ is chosen to be mostly increasing in each channel.
In practice, a linear combination of monotonic quadratic terms is used to balance simplicity and diversity in concavity:

$$g_\alpha(\mathbf{c}) := \sum_{i=1}^{3} \sum_{j=i}^{3} \alpha_{ij}\, c_i c_j + \sum_{i=1}^{3} \sum_{j=i}^{3} \beta_{ij} \bigl(1 - (1 - c_i)(1 - c_j)\bigr), \tag{2}$$

where $\mathbf{c} = (c_1, c_2, c_3)$ is the RGB vector and $\alpha = (\alpha_{ij}, \beta_{ij})_{i \le j}$ is sampled such that $\sum_{i \le j} (\alpha_{ij} + \beta_{ij}) = 1$ and $\alpha_{ij}, \beta_{ij} \ge -\epsilon$ for a small $\epsilon$ chosen to balance flexibility and monotonicity. The sum constraint yields the endpoints in eq. (1): every term vanishes at $\mathbf{c} = \mathbf{0}$, and every term equals its coefficient at $\mathbf{c} = \mathbf{1}$.

2.2 Post-Processing for Colour Correction

Because the model is designed to capture SCCI (a global feature across the entire image), localized colour patterns (e.g., ink marks, scars, irritations) may undergo undesirable colour shifts during transfer or sampling. We correct the colour of such regions with a post-processing operator $P$ that suppresses changes at pixels that were already hard to reconstruct. Let $\hat{\mathbf{y}} = f(\mathbf{x}, \mathbf{e})$ with $\mathbf{e} = e(\mathbf{y})$, and let $\hat{\mathbf{y}}' = f(\mathbf{x}, \mathbf{e}')$ be the manipulated output, where $\mathbf{e}'$ is obtained from another image, sampling, or manual adjustment. We define a rejection weight $w$ by

$$w_{ij} := h(d_{ij}), \quad \text{where} \quad d_{ij} := \frac{1}{1 - \tau} \max\bigl(\lVert \mathbf{y}_{ij} - \hat{\mathbf{y}}_{ij} \rVert - \tau,\ 0\bigr), \tag{3}$$

$\tau$ is a small tolerance threshold, and $h : [0,1] \to [0,1]$ is monotone increasing. The post-processing operator $P$ accepts the change proposed by $f$ only where the reconstruction error $d_{ij}$ is small:

$$P(\hat{\mathbf{y}}') := \mathbf{y} + (1 - w) \odot (\hat{\mathbf{y}}' - \mathbf{y}). \tag{4}$$

2.3 Data Augmentation and Normalization for Downstream Tasks

A typical downstream ML model is trained on skin images with distribution $S$ and tested on $T$. Labels for $S$ are always available, whereas labels for $T$ are not available during training. In some scenarios, however, unlabelled examples from $T$ are available in advance (e.g., when a clinical site has collected some patient images but diagnoses are not yet available). It is well known that downstream performance can degrade when the SCCI distributions of $S$ and $T$ mismatch.

Training Data Augmentation. In physician education, exposure to the skin colours of the population that physicians will serve is essential for building an accurate mental model. Similarly, an ML model can benefit from a training set augmented to match the SCCI distribution of a target cohort:

$$S_{\text{aug}} := \Bigl\{ P \circ f\bigl(g_\alpha(\mathbf{y}^{(i)}),\ \mathbf{e}^{(i,k)}\bigr) : \mathbf{y}^{(i)} \sim S,\ \mathbf{e}^{(i,k)} \sim e(T) \Bigr\}, \tag{5}$$

where $f$ is trained on $S \cup T$ without labels and $\mathbf{e}^{(i,k)} \sim e(T)$ is sampled from the embedding distribution of the test set. Because the SCCI distribution of $T$ must be modelled, some unlabelled images from $T$ are needed during training. This mode suits deployment settings where unlabelled target-domain images are available before testing while diagnostic labels remain unavailable. The procedure modifies only the training set, requires no change to the test-time procedure, and can also be used to generate training examples for physician education.

Colour Normalization. In contrast, colour normalization is more ML-centric. It maps every image to a “standard” colour, reducing variability across devices and acquisition conditions in both training and evaluation:

$$S_{\text{nrm}} := \Bigl\{ P \circ f\bigl(g_\alpha(\mathbf{y}^{(i)}),\ \bar{\mathbf{e}}\bigr) : \mathbf{y}^{(i)} \sim S \Bigr\}, \qquad T_{\text{nrm}} := \Bigl\{ P \circ f\bigl(g_\alpha(\mathbf{y}^{(i)}),\ \bar{\mathbf{e}}\bigr) : \mathbf{y}^{(i)} \sim T \Bigr\}, \tag{6}$$

where $\bar{\mathbf{e}}$ is the mean colour embedding of the training set, used as the “standard”. Unlike augmentation, normalization supports online use (at the cost of running $f$ at test time of downstream tasks): each test image is processed independently without knowledge of the distribution of $T$ (and without needing examples from $T$ during training), and $f$ can be trained on $S$ alone.
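
The decolourization mapping of eq. (2) and the post-processing operator of eqs. (3) and (4) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the Dirichlet-based sampling of the coefficients, the default $\epsilon = 0.05$, the Euclidean norm over RGB channels, the clipping to $[0, 1]$, and all function names are choices made here for illustration. Only $\tau = 0.1$ and $h(d) = d^2$ come from the experimental settings reported in this paper.

```python
import numpy as np

# Channel pairs (i, j) with i <= j, matching the double sums in eq. (2).
PAIRS = [(i, j) for i in range(3) for j in range(i, 3)]


def sample_coefficients(rng, eps=0.05):
    """Sample (alpha_ij, beta_ij) with total sum 1 and every entry >= -eps.

    Assumption: a flat Dirichlet draw is rescaled and shifted. The paper
    states only the constraints, not the sampling distribution.
    """
    n = 2 * len(PAIRS)
    v = rng.dirichlet(np.ones(n))        # v >= 0 and sum(v) == 1
    coeffs = (1.0 + n * eps) * v - eps   # sum == 1 and min >= -eps
    return coeffs[: len(PAIRS)], coeffs[len(PAIRS):]


def decolourize(rgb, alpha, beta):
    """Apply g_alpha of eq. (2) pixel-wise to an (H, W, 3) image in [0, 1]."""
    grey = np.zeros(rgb.shape[:-1])
    for k, (i, j) in enumerate(PAIRS):
        ci, cj = rgb[..., i], rgb[..., j]
        grey += alpha[k] * ci * cj
        grey += beta[k] * (1.0 - (1.0 - ci) * (1.0 - cj))
    # Assumption: clip, because slightly negative coefficients can overshoot.
    return np.clip(grey, 0.0, 1.0)


def rejection_weight(y, y_hat, tau=0.1, h=np.square):
    """Rejection weight w of eq. (3), one value per pixel.

    Assumptions: Euclidean norm over the channel axis, and d clipped to
    [0, 1] so that it stays inside the domain of h.
    """
    err = np.linalg.norm(y - y_hat, axis=-1)
    d = np.maximum(err - tau, 0.0) / (1.0 - tau)
    return h(np.clip(d, 0.0, 1.0))


def post_process(y, y_hat, y_edit, tau=0.1, h=np.square):
    """Operator P of eq. (4): keep edits only where reconstruction was good."""
    w = rejection_weight(y, y_hat, tau, h)[..., None]  # broadcast over RGB
    return y + (1.0 - w) * (y_edit - y)
```

Here `y` is the original image, `y_hat` its reconstruction, and `y_edit` the manipulated output. Pixels that were reconstructed well keep the edited value, while poorly reconstructed pixels are pulled back to the original, which is the behaviour eq. (4) describes.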

3 Experiments and Results

Each model is trained on an H100 GPU for 50 epochs using the same loss functions, training procedure, and optimization settings as in [25], except that we set $\lambda_{\text{bpp\_g}} = 0.003$, $\lambda_{\text{diver}} = 0.05$, and $\lambda_{\text{color}} = 0.1$. Different downstream tasks may use different training sets. For qualitative experiments, we use the augmentation model trained on the training and test sets in [16] and ISIC-2020 [18] (both without labels). For post-processing, we set $\tau = 0.1$ and $h(d) = d^2$. The code will be released upon acceptance.

3.1 Qualitative Results of Skin Colour Matching and Sampling

We selected several challenging examples from SkinCap [27] and ISIC-2020 [18] as test cases and generated results by transferring the SCCI between them. As can be seen in fig. 1(a), our model effectively transfers the SCCI without altering the skin structure or lesion appearance.

By computing the variance of each entry in the 256-dimensional latent vector $\mathbf{e}$, we identified a small number of active entries. The results of individually varying the first five active entries are shown in fig. 1(b); each shows a semi-interpretable physical factor. In addition, we independently sample each entry of $\mathbf{e}$ from the empirical marginal distribution of the SkinCap test set to augment a training image. The results, shown in fig. 1(c), indicate that the model produces diverse yet realistic skin colour variations.

3.2 Exploration of the Latent Space

Realistic physical meaning can be observed in the latent space. We captured sequences of trajectory-building sample photos (TBSPs) corresponding to three physical factors: (a) skin blood perfusion (via temperature change), (b) camera white balance settings, and (c) the colour temperature of lights.
For each factor, the TBSPs are fed into the colour encoding network to produce a sequence of latent vectors, which are connected in a piecewise-linear manner to form a sample trajectory (STra) in the latent space. To visualise the effect, we randomly select images and vary their colour latent $\mathbf{e}$ along a curve parallel to the STra. Results from one test image are shown in fig. 2(a,b,c) (animated examples can be found in the supplementary materials). The PCA plot of the STra for camera white balance settings (fig. 2(d)) traces a smooth curve, indicating that the model has learned a continuous representation of this physical factor.

Table 1. Accuracy of lesion malignancy classification models trained on different augmented or normalized training sets. Results of existing methods are obtained from the original authors.

| Index | Group | Aug./Norm. Method (Modification) | Acc. (std) |
|---|---|---|---|
| 1 | Baseline and Prior Arts | (Baseline) [16] | 0.561 (0.020) |
| 2 | Baseline and Prior Arts | Augmentation w/ Style Transfer [16] | 0.761 (0.018) |
| 3 | Proposed Augmentation and Normalization Methods | Proposed Augmentation (eq. (5)) | 0.772 (0.014) |
| 4 | Proposed Augmentation and Normalization Methods | Proposed Normalization (eq. (6)) | 0.764 (0.036) |
| 5 | Ablation Studies | Proposed Augmentation (eq. (5)) (w/ Flow Sampler) | 0.765 (0.028) |
| 6 | Ablation Studies | Proposed Augmentation (eq. (5)) (w/ Independent Sampler) | 0.759 (0.014) |
| 7 | Ablation Studies | Proposed Normalization (eq. (6)) (w/o post-processing) | 0.727 (0.009) |
| 8 | Ablation Studies | Proposed Normalization (eq. (6)) (w/ naive greyscale conversion) | 0.722 (0.015) |

Fig. 4. Qualitative ablation study results of transferring perceived skin colours. Rows and columns are defined in the same way as in fig. 1(a). (a) Without post-processing. (b) With naive decolourization method.

3.3 Dataset Augmentation and Colour Normalization for Lesion Classification

Our method is a general framework applicable to various downstream tasks.
Due to space constraints, we present only the results of training a lesion malignancy classification model. Note that the goal of these experiments is not to propose a state-of-the-art classifier, but to explore the potential of our augmentation and normalization methods. For fair comparison, we followed the same evaluation protocol as in [16] and obtained the training set (parts of DermNet NZ [5] and ISIC-2018 JID editorial images [11]) and test set (parts of Dermatology Atlas [14]) directly from the authors. For each augmented or normalized training set, we train a ResNet-50 [9] model for lesion malignancy classification using Adam with a learning rate of $10^{-3}$, repeating each experiment ten times and reporting the mean and standard deviation of accuracy. The colour model for data augmentation is trained on the unlabelled training and test sets for classification, while the colour normalization model is trained on the training set only (to improve representation quality, unlabelled ISIC-2020 [18] is added to the set). The models trained on the augmented (row 3 in table 1) and normalized (row 4) sets outperform the baseline (row 1) by a large margin (accuracy 0.772 and 0.764 versus 0.561). They also perform favourably compared to the prior augmentation method [16] (0.761), while providing significantly more interpretability in the representation space.

3.4 Ablation Study

Effects of Post-Processing. Certain non-skin features (e.g., scars and ink marks) that are faithfully preserved after post-processing (fig. 1(a)) are absent in the direct network output (fig. 4(a)). When post-processing is removed from the normalization pipeline, the downstream classifier performs noticeably worse than the full method (0.727 vs. 0.764; row 7 vs. row 4 in table 1).
We also computed the average LPIPS score [26] between the normalized and original images (after masking out non-lesion regions using masks provided by [17]), and found that removing post-processing worsens the score from 0.011 to 0.045 (lower means closer to the original).

Effects of Decolourization Methods. The naive alternative to the proposed decolourization method is the default greyscale conversion method in PyTorch [2]. In contrast to fig. 1(a), the model trained with the naive method produces results (fig. 4(b)) whose skin darkness resembles that of the target image (which should have provided only the lesion structure) rather than the source image (which should have provided the SCCI). This indicates that the naive conversion does not enable faithful skin colour transfer and can significantly hinder the generation of diverse augmentation results for education.

Effects of Latent Sampling Methods. Under ideal conditions (a very large training set and a highly flexible model), the entries of $\mathbf{e}$ may be assumed independent; in practice, however, weak correlations between entries are observed. We therefore compare several latent sampling strategies for the augmentation method; results are shown at the bottom of table 1. We tested three methods for sampling the latent vector $\mathbf{e}$ from the distribution of the test set: (row 3) directly reusing latents from the test set (by random selection), (row 5) fitting a normalizing flow model [6], and (row 6) sampling each entry independently from the empirical marginal distribution. All methods that model the marginal distribution of the latent vector with reasonable accuracy achieve comparable performance, while direct random selection performs best.

4 Conclusion

In this work, we presented a disentanglement-by-compression framework that learns a structured, manipulable latent representation of skin colour captured in images (SCCI) from unlabelled dermatology data.
A randomized decolourization mapping and a geometry-aligned post-processing step were proposed to improve faithfulness of the colour manipulation; ablation studies confirmed that both contribute measurably to downstream performance and perceptual fidelity. The learned latent space supports colour transfer, independent entry manipulation, and traversal along physically meaningful trajectories (e.g., blood perfusion, camera white balance), enabling training set augmentation, colour normalization, and educational visualization of skin conditions under varying SCCI. On a downstream benchmark, both augmentation and normalization strategies perform favourably against prior methods. This work is a step towards achieving equitable diagnosis, where our models can be reliably adapted across different clinical settings and populations.

References

1. Aggarwal, P., Papay, F.A.: Artificial intelligence image recognition of melanoma and basal cell carcinoma in racially diverse populations. Journal of Dermatological Treatment 33(4), 2257–2262 (2022). https://doi.org/10.1080/09546634.2021.1944970
2. Ansel, J., Yang, E., He, H., et al.: PyTorch 2: Faster Machine Learning Through Dynamic Python Bytecode Transformation and Graph Compilation. In: 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 2 (ASPLOS '24). ACM (Apr 2024). https://doi.org/10.1145/3620665.3640366, https://docs.pytorch.org/assets/pytorch2-2.pdf
3. Benmalek, A., Cintas, C., Tadesse, G.A., Daneshjou, R., Varshney, K.R., Dalila, C.: Evaluating the impact of skin tone representation on out-of-distribution detection performance in dermatology. In: Proceedings of the IEEE International Symposium on Biomedical Imaging (ISBI). pp. 1–5 (2024). https://doi.org/10.1109/ISBI56570.2024.10635847
4. Cong, C., Liu, S., Di Ieva, A., Pagnucco, M., Berkovsky, S., Song, Y.: Semi-supervised adversarial learning for stain normalisation in histopathology images. In: de Bruijne, M., Cattin, P.C., Cotin, S., Padoy, N., Speidel, S., Zheng, Y., Essert, C. (eds.) Medical Image Computing and Computer Assisted Intervention – MICCAI 2021. pp. 581–591. Springer International Publishing, Cham (2021)
5. DermNet NZ: DermNet NZ, https://dermnetnz.org/
6. Dinh, L., Sohl-Dickstein, J., Bengio, S.: Density estimation using real nvp. arXiv preprint arXiv:1605.08803 (2016)
7. Fitzpatrick, T.B.: Soleil et peau. J. Med. Esthet. 2, 33–34 (1975)
8. Ghorbani, A., Natarajan, V., Coz, D., Liu, Y.: DermGAN: Synthetic generation of clinical skin images with pathology. In: Machine learning for health workshop. pp. 155–170. PMLR (2020)
9. He, K., Zhang, X., Ren, S., Sun, J.: Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In: Proceedings of the IEEE international conference on computer vision. pp. 1026–1034 (2015)
10. Howard, J.J., Sirotin, Y.B., Tipton, J.L., Vemury, A.R.: Reliability and validity of image-based and self-reported skin phenotype metrics. IEEE Transactions on Biometrics, Behavior, and Identity Science 3(4), 550–560 (2021)
11. International Skin Image Collaboration: International skin image collaboration, https://www.isic-archive.com/
12. Iyatomi, H., Celebi, M.E., Schaefer, G., Tanaka, M.: Automated color calibration method for dermoscopy images. Computerized Medical Imaging and Graphics 35(2), 89–98 (2011). https://doi.org/10.1016/j.compmedimag.2010.08.003, https://www.sciencedirect.com/science/article/pii/S0895611110000832, Advances in Skin Cancer Image Analysis
13. Li, X., Chen, Q., Peng, X., Yu, K., Chen, X., Lu, Y.: Bitrate-controlled diffusion for disentangling motion and content in video. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 12904–12914 (2025)
14. Marques, B.P., Melo, C.C., Calheiros, D.B., Portugal, F.M., Junior, G.B.L., Marcolino, G.C., Amorim, H., Oliveira, I.C.D., Cruz, L.M.O., Filho, L.L.L., Botelho, M.C., Brandão, P.L.M., Prado, R., Pérez, R.H., Santana, S., Mendonça, T.A., Macedo, V.A.O.M., Nascimento, V.L.B., Inakanti, Y.: Dermatology atlas, https://www.atlasdermatologico.com.br/contributors.jsf
15. Monk, E.: The monk skin tone scale (2023). https://doi.org/10.31235/osf.io/pdf4c
16. Rezk, E., Eltorki, M., El-Dakhakhni, W.: Improving skin color diversity in cancer detection: Deep learning approach. JMIR Dermatology 5(3) (2022). https://doi.org/10.2196/39143, https://www.sciencedirect.com/science/article/pii/S2562095922000605
17. Rezk, E., Eltorki, M., El-Dakhakhni, W.: Leveraging artificial intelligence to improve the diversity of dermatological skin color pathology: protocol for an algorithm development and validation study. JMIR Research Protocols 11(3), e34896 (2022)
18. Rotemberg, V., Kurtansky, N., Betz-Stablein, B., Caffery, L., Chousakos, E., Codella, N., Combalia, M., Dusza, S., Guitera, P., Gutman, D., et al.: A patient-centric dataset of images and metadata for identifying melanomas using clinical context. Scientific data 8(1), 34 (2021)
19. Salvi, M., Branciforti, F., Veronese, F., Zavattaro, E., Tarantino, V., Savoia, P., Meiburger, K.M.: Dermocc-gan: A new approach for standardizing dermatological images using generative adversarial networks. Computer Methods and Programs in Biomedicine 225, 107040 (2022). https://doi.org/10.1016/j.cmpb.2022.107040, https://www.sciencedirect.com/science/article/pii/S0169260722004229
20. Schaefer, G., Rajab, M.I., Emre Celebi, M., Iyatomi, H.: Colour and contrast enhancement for improved skin lesion segmentation. Computerized Medical Imaging and Graphics 35(2), 99–104 (2011). https://doi.org/10.1016/j.compmedimag.2010.08.004, https://www.sciencedirect.com/science/article/pii/S0895611110000844, Advances in Skin Cancer Image Analysis
21. Tishby, N., Pereira, F.C., Bialek, W.: The information bottleneck method. arXiv preprint physics/0004057 (2000)
22. Tosta, T.A.A., Freitas, A.D., de Faria, P.R., Neves, L.A., Martins, A.S., do Nascimento, M.Z.: A stain color normalization with robust dictionary learning for breast cancer histological images processing. Biomedical Signal Processing and Control 85, 104978 (2023). https://doi.org/10.1016/j.bspc.2023.104978, https://www.sciencedirect.com/science/article/pii/S1746809423004111
23. Weir, V.R., Li, Y., Gillis, M.C., Kurtansky, N.R., Salvador, T., Halpern, A.C., Nelson, K.C., Lester, J.C., Rotemberg, V.: Evaluating skin tone scales for dermatologic dataset labeling: a prospective-comparative study. npj Digital Medicine 8(1), 787 (2025)
24. Xu, C., Sun, Y., Zhang, Y., Liu, T., Wang, X., Hu, D., Huang, S., Li, J., Zhang, F., Li, G.: Stain normalization of histopathological images based on deep learning: A review. Diagnostics 15(8), 1032 (2025)
25. Yang, W., Wang, Z., Wang, Z.: Towards a universal image degradation model via content-degradation disentanglement. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 12966–12975 (2025)
26. Zhang, R., Isola, P., Efros, A.A., Shechtman, E., Wang, O.: The unreasonable effectiveness of deep features as a perceptual metric. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 586–595 (2018)
27. Zhou, J., Sun, L., Xu, Y., Liu, W., Afvari, S., Han, Z., Song, J., Ji, Y., He, X., Gao, X.: Skincap: A multi-modal dermatology dataset annotated with rich medical captions (2024)
