Towards Controllable Video Synthesis of Routine and Rare OR Events

Purpose: Curating large-scale datasets of operating room (OR) workflow, encompassing rare, safety-critical, or atypical events, remains operationally and ethically challenging. This data bottleneck complicates the development of ambient intelligence …

Authors: Dominik Schneider, Lalithkumar Seenivasan, Sampath Rapuri

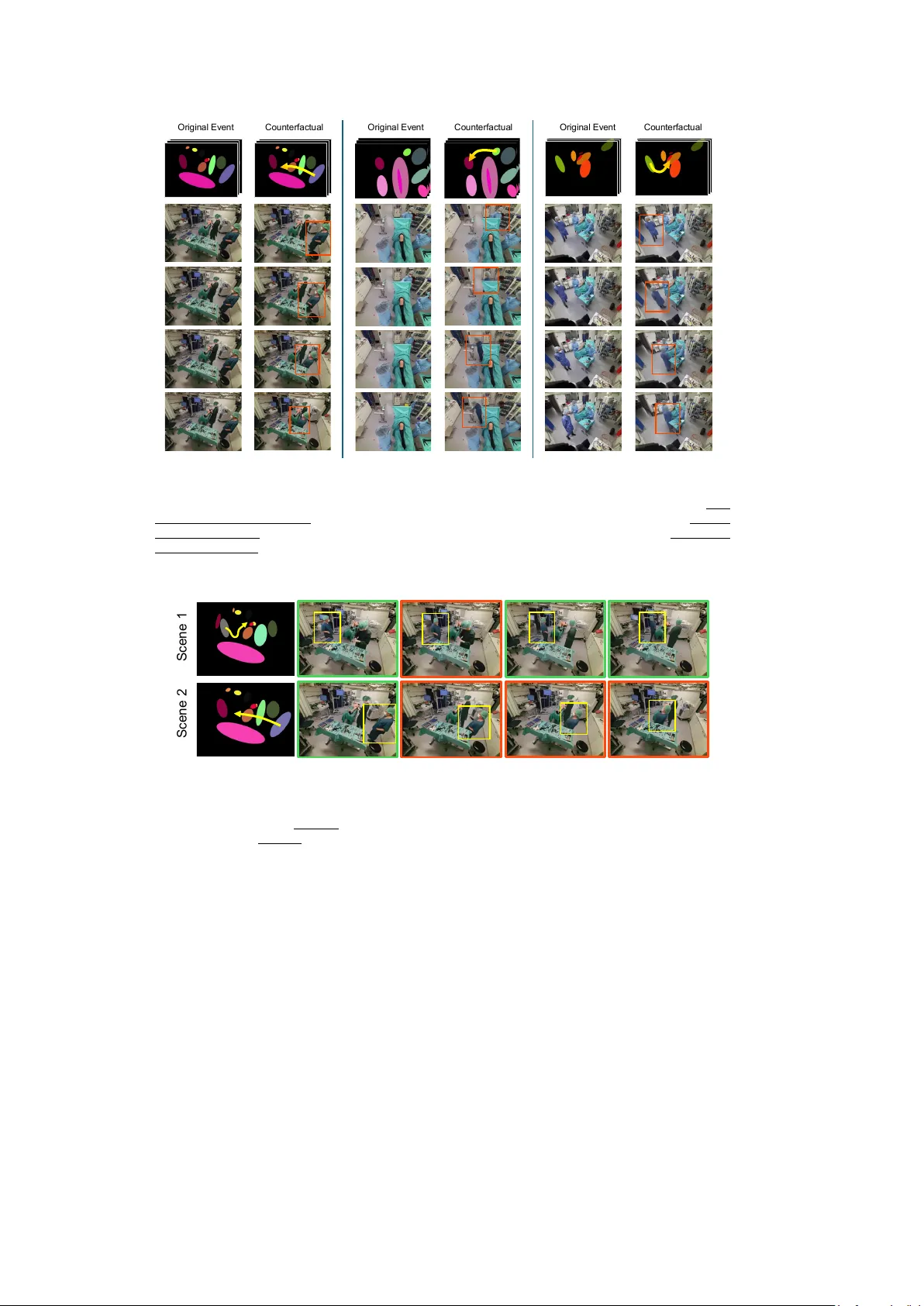

T o w ards Con trollable Video Syn thesis of Routine and Rare OR Ev en ts Dominik Sc hneider 1,2 † , Lalithkumar Seeniv asan 1 † , Sampath Rapuri 1 , Vishalroshan Anil 1 , Aiza Maksuto v a 1 , Yiqing Shen 1 , Jan Emily Mangulabnan 1 , Hao Ding 1 , Jose L. P orras 1,3 , Masaru Ishii 3 , Mathias Un b erath 1* 1* Johns Hopkins Univ ersity , Baltimore, 21211, MD, USA. 2* T echnical Univ ersit y Munich, Munic h, 80333, BY, German y . 3 Johns Hopkins Medical Institutions, Baltimore, 21287, MD, USA. *Corresp onding author(s). E-mail(s): un b erath@jh u.edu ; † Co-first author. Abstract Purp ose: Curating large-scale datasets of op erating room (OR) workflo w, encompassing rare, safet y-critical, or atypical even ts, remains op erationally and ethically c hallenging. This data b ottlenec k complicates the developmen t of am bient in telligence for detecting, understanding, and mitigating rare or safety- critical even ts in the OR. Metho ds: This w ork presents an OR video diffusion framework that enables con trolled synthesis of rare and safet y-critical ev ents. The framew ork integrates a geometric abstraction mo dule, a conditioning mo dule, and a fine-tuned diffusion mo del to first transform OR scenes in to abstract geometric representations, then condition the synthesis pro cess, and finally generate realistic OR even t videos. Using this framework, we also curate a synthetic dataset to train and v alidate AI mo dels for detecting near-misses of sterile-field violations. Results: In syn thesizing routine OR even ts, our metho d outperforms off-the-shelf video diffusion baselines, achieving low er FVD/LPIPS and higher SSIM/PSNR in b oth in- and out-of-domain datasets. Through qualitative results, w e illustrate its ability for controlled video synthesis of counterfactual even ts. An AI mo del trained and v alidated on the generated synthetic data achiev ed a RECALL of 70 . 13 % in detecting near safety-critical even ts. Finally , w e conduct an ablation study to quan tify p erformance gains from key design choices. 1 Conclusion: Our solution enables controlled syn thesis of routine and rare OR ev ents from abstract geometric representations. Beyond demonstrating its capa- bilit y to generate rare and safety-critical scenarios, we show its potential to supp ort the developmen t of am bient in telligence models. Keyw ords: OR Ev ent Generation, Am bien t Intelligence, OR Video Generation, Conditional Video Diffusion, Diffusion Mo dels 1 In tro duction With the op erating ro om (OR) b eing central to patient care [ 1 ] and hospital eco- nomics [ 2 ], driving efforts to ward OR ambien t in telligence can enhance hospital p erformance b oth clinically and financially . Clinically , enabling automated OR work- flo w analysis and optimization reduces intraoperative risk and impro ves patien t outcome: automated detection of safety-critical even ts (e.g., sterile-field breaches); shorter anesthesia exposure benefits patients [ 3 ]; eac h additional 60 min utes of surgery increases the o dds of surgical-site infection b y 37% [ 4 ]; impro v ed coordination is associ- ated with few er complications [ 5 ]; and shorter case duration impro v es OR throughput, increasing access to more patients in need of care. Financially , global surgical demand exceeds existing hospital capacity [ 6 , 7 ]. With constant demand for surgery and ORs generating roughly 60-70% of hospital reven ue [ 2 ] and accounting for 35-40% of hospi- tal expenses [ 2 ], enabling automated OR w orkflow optimization could increase surgical throughput, increasing hospital rev en ue. F urthermore, optimizing OR workflo w could p oten tially allo w for b etter resource utilization and op erational efficiency [ 8 ], reducing hospital costs. Although emerging AI solutions bring OR ambien t in telligence within reac h, progress is constrained b y the lack of comprehensive datasets that capture the full sp ectrum of OR even ts necessary for model developmen t. Curating rare, safety-critical, or atypical OR even ts at scale is op erationally and ethically challenging. In clinical practice, generating that data is practically difficult due to priv acy and access constraints, site and pro cedure v ariability , and the inherent rarit y of critical ev ents. Delib erately eliciting safety-critical rare ev ents – sterile field violations, equipment handoffs, or deviations from standard proto cols – for enriching the dataset is ethically imp ermissible and risks patien t harm. Manual curation and staged reenactments are not scalable, given the breadth of clinical v ariability , staffi ng limitations, and op erational disruption. There is a clear need for scalable metho ds to generate OR even ts on demand – with rich pro cedural v ariation and rare, safety-critical scenarios – to support the developmen t of OR am bient in telligence. Adv ancing controllable and scalable data curation metho ds, w e introduce an OR video-diffusion framework conditioned on an abstract geometric scene represen tation to curate synthetic videos of routine and rare OR even ts. The framework represen ts the OR workspace and even ts using simple geometric primitives: ellipsoids for p erson- nel, the patien t, and equipment. Given an initial OR scene and abstract geometric represen tations of the in tended OR even t as conditioning input, it generates synthetic 2 videos of the sp ecified ev en ts. The ev ent conditioning is modeled either from prior rou- tines derived from kno wn OR even ts or from user-defined tra jectories on the abstract geometric represen tations. Our key contributions are: • W e introduce an abstract, geometry conditioned OR diffusion framework with a no vel geometric abstraction and conditioning mo dule, enabling con trolled, scalable syn thesis of OR-even t videos via ellipsoid-based en tity representation and tra jectory sk etches. • W e demonstrate the video syn thesis of routine, rare, atypical, and safety-critical OR even ts (sterile-field violations) that would otherwise b e difficult to collect due to practical/ethical reasons. • W e curate a synthetic dataset and train AI mo dels to detect sterile-field violations, ac hieving 70% recall (sensitivity) and demonstrating the framework’s p oten tial to enable scalable data curation for am bient intelligence dev elopment. • Additionally , we augment the baseline fine-tuning with a Patc hGAN loss to improv e the lo cal realism and fidelity of the synthesized videos. 2 Related W ork Generativ e mo dels for general-purpose video syn thesis ha ve b een widely explored from early GAN-based [ 9 ] approaches to more mo dern diffusion mo del frameworks [ 10 ]. Among the general-purp ose video diffusion mo dels, Stable Video Diffusion (SVD) [ 11 ] is a p opular image-to-video diffusion mo del, incorp orating an image frame and an optional text prompt as conditioning inputs. In contrast, the W AN family of mo dels [ 12 ] represents a series of text-conditioned diffusion approac hes. While these mo d- els achiev e strong performance, they utilize natural-language prompts and/or single k eyframes as conditioning inputs, which lac k fine-grained control o ver ob ject p osition- ing, orien tation, and in teractions. In the surgical domain, generativ e mo dels ha ve been adopted for simulation [ 13 ], using con trollable conditioning inputs to guide the video generation process. Typically , these conditioning signals are class labels, text prompts, reference images or videos, or tra jectory information, essential for accurately mo del- ing complex and high-risk surgical workflo ws. T o our knowledge, no prior work has ac hieved controllable generation of the ambien t op erating ro om en vironment, including staff mo vemen t, equipment rep ositioning, and safet y-critical even ts. 3 Metho d Our proposed abstract, geometry conditioned OR diffusion framew ork reform ulates a video-to-video diffusion task into a controlled OR even t video generation task condi- tioned via abstract geometric scene represen tations. The framew ork incorporates three main mo dules: (i) geometric abstraction mo dule, (ii) conditioning mo dule, and (iii) diffusion module (Fig. 1 ). Giv en an initial OR scene, the geometric abstraction mo dule first transforms it into an abstract geometric scene representation. The conditioning mo dule generates a temp oral series of abstract geometric scene representations, either based on prior routines from known OR even ts or based on user-generated tra jecto- ries on the geometric represen tation of the initial scene. The diffusion module – built 3 D i f f u s i o n M o d u l e A c c e p t s e i t h e r R o u t in e O R E v e n t s o r U s e r - d e fi n e d C o u n t e r fa c t u a l E v e n t s G e o m e t r i c A b s t r a c t i o n M o d u l e R o u t i n e O R E v e n t C o u n t e r f a c t u a l O R E v e n t U s e r - d e f i n e d T r a j e c t o r i e s F o r C o u n t e r f a c t u a l E v e n t s I n i t i a l S c e n e R o u t i n e O R E v e n t R o u t i n e O R E v e n t C o n d i t i o n i n g C o u n t e r f a c t u a l O R E v e n t C o n d i t i o n i n g I n i t i a l O R S c e n e C o n d i t i o n i n g O R E v en t C o n d i t i o n i n g : C o u n t e r f ac t u al / R o u t i n e C o n d i t i o n i n g M o d u l e Fig. 1 abstract, geometry conditioned OR diffusion framework consists of three main mo dules: (i) Geometric Abstraction Mo dule conv erts the initial OR scene into an abstract geometric scene representation using ellipsoids. (ii) Conditioning Mo dule generates temp oral sequences of abstract geometric scenes through tw o pathw ays: from routine OR even ts (blue dash path), or from incorp o- rating user-defined tra jectories (dashdotted green path). (iii) Diffusion Mo dule synthesizes videos of OR even ts conditioned on the initial scene and the geometric sequences. on a video-to-video diffusion backbone – then uses the initial scene and the series of abstract geometric scene representations (video conditioning) as input to diffuse an OR ev ent. Abstract Geometric Scene Representation : The abstract geometric scene rep- resen tation can b e formulated as an implicit scene graph G = ( V , E ), where V = { v 1 , v 2 , . . . , v k } represen ts a set of k nodes corresp onding to OR en tities (OR person- nel, patient, and equipmen t), and edges ( E ) represent the implicit spatial and temp oral relationships betw een the no des. Eac h no de v j consists of geometric attributes g j ∈ R 6 and class information c j ∈ R 2 . The geometric attributes enco de (a) a 2-dimensional cen troid p osition, (b) a 3-dimensional ellipsoid representation capturing spatial spread (heigh t, width, and rotation angle), and (c) a 1-dimensional normalized relative depth v alue. The 2D class v ector c j ∈ R 2 represen ts the (R, G) color channel v alues for eac h en tity class, adopting the 36 semantic classes defined in the MMOR dataset [ 16 ]. Since the blue channel is reserv ed for depth enco ding, the red and green channels enco de S A M 2 V i d e o D e p t h A n yt h i n g A b s t r a c t G e o m e t r i c S c e n e R e p r e s e n t a t i o n (R , G ) C l a ss I n f o rm a t i o n (B ) D e p t h I n f o rma t i o n M a n u a l S e g m e n t a t i o n P r o m p t + Fig. 2 Geometric abstraction mo dule pip eline: Giv en an initial scene and segmentation point prompts, SAM2 [ 14 ] propagates instance segmen tation masks across video. Depth information is estimated using Video Depth Anything [ 15 ]. Each segmen ted instance is then approximated b y an ellipsoid parameterized by its centroid p osition and spatial spread (heigh t, width, rotation angle). The resulting Abstract Geometric Scene Representation encodes class information in the red and green channels, combined with normalized relative depth in the blue channel intensit y . 4 seman tic class information, providing visually distinct represen tations for each en tity t yp e. With implicit edges, spatial relationships such as proximit y can b e derived from pairwise distances b et ween 2D centroids in normalized image space. Spatial la yering (‘in-fron t-of ’, ‘o ccluded-b y’) is captured through relativ e depth v alues. T emp orally , no des representing the same ob ject across frames form implicit corresp ondences. Rendering abstract scene represen tation: Each scene represen tation ( G ) is rendered as a 2D image at 1024 × 768 resolution (Fig. 2 ), with no des depicted as ellipses on a blac k can v as. Eac h ellipse is p ositioned at its cen troid and scaled and rotated according to its spatial spread parameters (height, width, and rotation angle). Class information is enco ded in the red and green color channels, pro viding unique colors for each ob ject class, while normalized depth is encoded in the blue channel intensit y . (i) Geometric abstraction mo dule: Giv en an OR scene, the abstract geometric scene representation is created using a semi-automated pip eline that employs out-of- the-b o x SAM2 [ 14 ] and Video Depth An ything [ 15 ] mo dels. Firstly , entities in the scene and their geometric parameters (ellipsoids’ centroid p osition, heigh t, width, and rotation angle) are extracted using segmentation masks generated through SAM2 using man ual (inference)/groundtruth (training) prompts. The 1D normalized relative depth v alues are extracted using depth maps and are av eraged ov er an instance mask. The extracted features are then used to render the abstract scene representation. (ii) Conditioning mo dule: With the video generation formulated as video-to-video diffusion task, this mo dule curates a series of abstract scene representations (corre- sp onding to the num b er of frames in the diffused video) to condition the diffusion. Firstly , it employs the geometric abstraction mo dule on all the frames of template videos of a known OR even t. The resulting series of abstract representations is then used as conditioning on the initial scene to diffuse the synthetic OR even t. Alter- nativ ely , the mo dule also offers the flexibility to alter tra jectories of one or more en tities using user-defined tra jectories, to diffuse syn thetic at ypical/rare/safet y-critical OR ev ents. W e implement an in teractive tra jectory drawing to ol (using Op enCV, Pygame, and Tkin ter) that allo ws users to select ellipses from the abstract geometric represen tation by clicking on them, then sketc h desired mo vemen t paths by drawing freehand tra jectories. The tool captures wa yp oin ts along the drawn tra jectory , which A b st r a ct i o n M o d u l e C o n d i t i o n i n g f o r R o u t i n e O R E v e n t s C o n d i t i o n i n g f o r C o u n t e r f a c t u a l O R E v e n t s C o n d i t i o n i n g M o d u l e + Fig. 3 Interactiv e conditioning module for counterfactual even t generation. Given an input OR video sequence, the Abstraction mo dule con verts the scene in to an abstract geometric representation. A graphical user interface enables direct manipulation of these ellipsoids through drag-and-drop operations to sketc h desired tra jectories. The Conditioning Module transforms the original geometric sequence into a counterfactual even t by incorp orating the user-modified tra jectories. 5 are interpolated across the full video sequence and applied as translational offsets to the selected ellipse’s centroid p osition. Fig. 3 illustrates an example of video condi- tioning using user-defined tra jectories: OR-p ersonnel w alking around the instrument table, instead of mo ving tow ards the patien t. (iii) Diffusion mo dule: W e emplo y L TX-Video [ 17 ] – a transformer-based latent video diffusion model as the diffusion bac kb one mo del. Sp ecifically , w e fine-tuned using the In-Con text LoRA (IC-LoRA) pip eline, that allo ws for video-video diffusion b y conditioning on reference frames (abstract geometric scene representation). During fine-tuning, in addition to baseline implementation, we incorp orate Patc hGAN loss [ 18 ] to further improv e the fidelit y and realism of the synthesized video. The fine-tuned IC-LoRA weigh ts, trained sp ecifically for in-context conditioning, enable the mo del to in terpret these rendered visualizations as structural guidance during generation. 4 Exp erimen ts and Results 4.1 Exp erimen tal setup: (i) Dataset : W e emplo y t wo public datasets: MMOR [ 16 ] and 4DOR [ 19 ]. The diffu- sion mo del is trained and v alidated on videos from the MMOR dataset. With original videos av ailable at 1 fps, we temp orally interpolate it to 24 fps using the L TX’s k eyframe interpolation feature [ 17 ]. T o maintain segmen tation consistency across the in terp olated video, we employ SAM2 to segmen t OR entities based on the first-frame groundtruth annotations. Videos are pro cessed at 1024 × 768 resolution, with 97 frames eac h. The train/test split w as assigned on a video-wise basis: 338 videos for fine-tuning the framew ork and 50 videos for a detailed ablation study . F or baseline in- and out- of-domain c omparison against baseline conditional diffusion models, we used 6 videos MMOR testset and 6 videos from 4DOR. (ii) T raining and inference. Diffusion model training and inference: The IC-LoRA- adapted video diffusion model is trained on a single NVIDIA A100 GPU for 8000 steps. W e adopt the default h yp erparameters from L TX’s video style transfer configuration: LoRA rank and alpha of 128, learning rate of 2 × 10 − 4 , AdamW optimizer, and bfloat16 mixed precision training. Inference is p erformed using 50 denoising steps with a guid- ance scale of 3.5. During training, first-frame conditioning is provided in 20% of cases to encourage b oth conditional and unconditional generation capabilities. A t inference time, first-frame conditioning is p erformed exclusively , providing the initial frame of the target video alongside the complete rendered abstract scene representations. (iii) Ev aluation Metrics : (a) With Groundtruth videos: W e use FVD, SSIM, and PSNR, and LPIPS metrics. SSIM and PSNR are used to quantify the av erage video quality and degradation of the generated videos against the groundtruth videos. FVD summarizes set-level spatio-temporal realism. Additionally , to ev aluate structural accuracy and alignement with abstract conditioning, we use b ounding b o x IoU (BB IoU) and segmen tation mask IoU (Seg IoU). These metrics quantify controllabilit y b y measuring the spatial alignmen t betw een the conditioned ellipsoid tra jectories and the corresp onding en tit y p ositions in the generated video. W e prompt each instance in the groundtruth initial frame for generating segmentation masks using SAM2 and track 6 T able 1 Comparison of our framework against out-of-the-b o x baseline mo dels on in- and out-of-domain testsets. W AN [ 12 ] & L TX-base (L TX b ) [ 17 ]: T ext-conditioned generation using VLM descriptions of the groundtruth scene. SVD [ 11 ]: Image-to-video generation with low dynamic motion setting. Ours: Our prop osed framework. Metho d FVD ↓ SSIM ↑ PSNR ↑ LPIPS ↓ FVD ↓ SSIM ↑ PSNR ↑ LPIPS ↓ MMOR (In-Domain) 4DOR (Out-of-Domain) W AN [ 12 ] 1190.57 0.78 18.95 0.20 699.78 0.86 21.72 0.13 SVD [ 11 ] 5021.19 0.68 17.91 0.24 3790.73 0.74 19.86 0.16 L TX b [ 17 ] 2439.33 0.47 12.88 0.58 1135.26 0.46 13.10 0.58 Ours 689.88 0.86 23.21 0.13 265.25 0.90 25.87 0.07 G r o u n d t r u t h O u r s S V D L T X - B a se W A N - G e o m e t r i c I m g 2 V i d T e x t 2 V i d T e x t 2 V i d Fig. 4 Qualitative comparison of video synthesis metho ds on out-of-domain (4DOR) dataset. Groundtruth: Original video frames to reconstruct. W AN [ 12 ] & L TX-Base: T ext-conditioned gen- eration using VLM descriptions of the groundtruth scene. SVD: Image-to-video generation with low dynamic motion setting. Ours: Our proposed video synthesis using abstract geometric represen tation. them across b oth the real and generated video sequences. By comparing the result- ing segmentation masks and b ounding b o xes b et ween real and generated sequences, w e measure spatial alignment and comp onen t localization accuracy . (b) Without groundtruth videos: we employ DOVER [ 20 ] and Inception Score [ 21 ] to quantify per- formance. (c) Downstream near-miss detection task: W e prioritize recall (sensitivit y) o ver accuracy , as missing a true violation (false negative) p oses greater clinical risk than a false alarm (false positive), whic h simply prompts staff v erification. 4.2 Results (i) Baseline comparison: Firstly , we b enc hmark our abstract geometric conditioned OR diffusion framework on in- and out-of-domain testsets against out-of-the-box base- line mo dels: (i) SVD [ 11 ] that p erforms image to video diffusion, and (ii) W AN [ 12 ] and L TX-base [ 17 ] that condition video via text-prompt. Quantitativ ely (T able. 1 ), 7 T able 2 Quan titative assessment of our framework’s ability in curating synthetic rare/atypical/safet y-critical OR even ts. Method DOVER ↑ Inception Score ↑ DragNUW A [ 22 ] 0.31 1 . 04 ± 0 . 05 Ours 0.52 1 . 03 ± 0 . 01 T able 3 Detecting near safet y-critical even ts (near misses of sterile-field violation) using mo dels trained on synthetic data. Method Accuracy ↑ RECALL ↑ ResNet34 [ 23 ] 64.91 50.65 ViT-B/16 [ 24 ] 67.54 70.13 our framew ork – fine-tuned on a small trainset (338 videos; 97 frames eac h) – performs w ell on b oth in-domain and out-of-domain testsets. Fig. 4 shows the qualitative p er- formance of our framework against baseline mo dels on out-of-domain videos. These results demonstrate the effectiv eness of our abstract geometry conditioning OR video generation framework in conditioning the OR video synthesis at every frame, for each en tity . (ii) Syn thesizing rare/atypical/satefy-critical ev en ts: T o demonstrate the framew ork’s flexibilit y in controlled videos synthesis of rare/at ypical/safety-critical ev ents, which w ould otherwise b e difficult to generate without straining the workforce or risking patien t harm, we qualitatively (Fig. 5 ) and quantitativ ely (T able. 2 ) assess its p erformance. Quantitativ ely , we show that our framework p erforms b etter than out-of-the-b o x DragNUW A [ 22 ] – a baseline mo del that also conditions video genera- tion through user-defined sketc h. W e show that, by using an interactiv e conditioning to ol, where ellipsoids (geometric represen tation of OR en tities) can b e manipulated and dragged to generate new even ts, the framework can diffuse coun terfactual OR ev ents. Qualitatively , we observe that, while our framework allows for explicit spatial conditioning of entit y tra jectories, in some cases, it has also implicitly learned the in teractions b etw een en tities in the training distributions based on spatial proximit y . F or instance, when an OR p ersonnel is conditioned to mov e tow ards the instrument table, the framew ork diffuses an OR even t video, where the p erson is seen interacting with the table. (iii) Dev eloping OR am bient intelligence mo del for detecting near safety- critical ev ents from syn thetic data: Considering sterile-field violations can p oten tially compromise patient outcomes, w e define near misses in sterile-field vio- lations as scenarios in whic h non-sterile p ersonnel approac h the sterile field without making contact, and treat thes e instances as near safet y-critical even ts. Using our trained framework, we curate a synthetic dataset to train and v alidate AI mo dels for detecting near-misses of sterile-field violations. Using 20 of the 50 MMOR testset videos, w e curated 87 synthetic videos, depicting positive and negativ e samples for near-misses of sterile-filed violations. Image frames from 68 of these syn thetic videos w ere used to train the model. F rames from the remaining videos were used for mo del v alidation. The synthetic dataset comprises 678 training frames (252 p ositiv e, 426 negativ e) and 228 v alidation frames (77 p ositiv e, 151 negative). Positiv e samples rep- resen t frames where non-sterile p ersonnel are in close pro ximity to the sterile field, while negativ e samples represen ts a OR scene where non-sterile p ersonnel maintain a safe distance from the sterile field. The near-miss detection model is a p er-frame image 8 O r i g i n a l E v e n t C o u n t e r f a c t u a l O r i g i n a l E v e n t C o u n t e r f a c t u a l O r i g i n a l E v e n t C o u n t e r f a c t u a l Fig. 5 Controllable synthesis of safety-critical, interactions, and alternate OR even ts. Each col- umn pair shows a routine OR even t (left) with its abstract geometric representation (top), and a counterfactual even t (right) generated by providing a tra jectory for geometric conditioning. Left pair (safet y-critical even t): A non-sterile assistant approaches the sterile instrument table. Middle pair (Interaction): Personnel walking tow ard and reac hing for interaction with the table. Right pair (Alternate even t): Mo dified tra jectory where p ersonnel w alks directly tow ard the patient b ed instead of the original path around the room. S c e n e 1 S c e n e 2 Fig. 6 Left: abstract geometric conditioning. Right: synthesized video frames. Positiv e (red) and negative (green) training samples for near safety-critical event (near misses in sterile-field violation) detection from coun terfactual syn thetic data generated using our framew ork. Tw o scenes demonstrate near-miss progressions: Scene 1 shows non-sterile p ersonnel approaching then retreating from the instrument table. Scene 2 shows p ersonnel passing close to the instrument table. classifier that op erates on individual frames without tra jectory history , detecting near- misses based on spatial pro ximit y within each frame. Fig. 6 shows p ositiv e and negativ e samples of these frames generated from conditioning the tra jectories of entities using the framew ork. T able 3 summarizes the p erformance of classifiers trained and v alidated on these syn thetic samples for detecting near-misses of sterile-field violations. 9 T able 4 Ablation study of our framework with and without base (diffusion backbone and baseline fine-tuning), Seg (segmentation mask-based conditioning), E (ellipsoids-based conditioning), D (depth encoded ellpsoids) and L g (Patc hGAN loss integrated for finetuning). Method FVD ↓ SSIM ↑ PSNR ↑ LPIPS ↓ BB IoU ↑ Seg IoU ↑ Base Seg E D L g ✓ ✓ 347.88 0.88 25.34 0.09 0.96 0.95 ✓ ✓ 518.50 0.86 23.65 0.12 0.93 0.90 ✓ ✓ ✓ 532.05 0.86 23.74 0.12 0.93 0.90 ✓ ✓ ✓ ✓ 487.20 0.88 24.71 0.11 0.93 0.91 (iv) Ablation Study: W e p erform extensive ablation study using all 50 MMOR testset videos to v alidate the key comp onents of our framework. T able 4 compares three conditioning approaches: direct segmentation maps, ellipse rendering without depth, and our prop osed ellipse rendering with depth enco ding and adv ersarial train- ing. While segmentation map conditioning achiev es sup erior reconstruction metrics (FVD: 347.88), this comes at the cost of controllabilit y as segmentation masks are not easy to manipulate (such as fine-grained conditioning the lim bs) or mov e around. Our ellipse-based represen tation main tains strong absolute performance (SSIM > 0.88, and BBo x IoU > 0.93) while enabling flexibility in conditioning and scene composition. Adding Patc hGan loss ( L g ) further enhances p erformance to an FVD of 487.20 and segmen tation IoU of 0.91. 5 Discussion and Conclusion In this work, w e in tro duced an OR video diffusion framework that reformulates a video-to-video diffusion task as an OR ev ent diffusion model conditioned on abstract geometry scene represen tation. By abstracting the input scene and routine OR even ts in to a visualizable geometric representation, and coupling it with an in teractive condi- tioning mo dule, our framework offers a flexible and con trolled diffusion of OR even ts. This unlo c ks synthetic video generation of routine, atypical, rare, and safety-critical OR even ts, at scale, that otherwise are difficult/near-imp ossible to curate due to strain on the workforce and risk to patient outcome. W e demonstrate that our framework – fine-tuned on a small public dataset of 338 videos – outp erforms out-of-the-b o x baseline mo dels b oth quantitativ ely and qualitatively on small in-domain and out- of-domain test sets. W e also show case our framew ork’s controllabilit y in generating at ypical/rare/safety-critical OR even ts using abstract geometric scene conditioning. Additionally , w e show our framework’s p oten tial in generating synthetic data tow ards training AI models for detecting near safety-critical ev en ts – near misses of sterile-field violation. With this w ork serving as a groundwork tow ard scalable data curation for devel- oping OR am bient intelligence for OR workflo w analysis, k ey limitations exist that need to b e progressiv ely addressed. (i) Conditioning and c ontr ol lability tr ade off: W e selected ellipsoids as geometric primitives to enable in tuitiv e drag-and-drop tra jectory con trol while b eing robustly extractable from segmen tation masks, unlike articulated p ose representations that w ould require complex in terfaces and are prone to failure 10 in cluttered OR scenes. This design choice provides sufficien t granularit y for spatial- conditioning to enforce proximit y/mo vemen t of OR p ersonnel near OR devices, but is limited in enforcing explicit fine-grained articulation control (e.g., arm extension of OR p ersonnel when reac hing for instruments). In the curren t framework, the gen- erativ e mo del implicitly learns articulation and interaction priors from the training data distribution, guided b y spatial-proximit y conditioning. (ii) Gener alization and r obustness: Our framework demonstrated generalizability to out-of-domain dataset (4DOR) for routine even t synthesis. How ever, challenges remain in generating syn- thetic videos for significantly different en vironments due to v ariations in sterile attire colors, equipment configurations, and surgeries (e.g., op en surgery , emergency trauma) not represented in the MMOR training data. (iii) Clinic al validation and downstr e am utility: While clinical collab orators were consulted throughout the dev elopment stages, with this study b eing groundwork tow ards developing scalable syn thetic data gener- ation for OR ambien t intelligence, formal domain-exp ert ev aluation (e.g., structured ratings by indep enden t surgeons) is b ey ond the scop e of this work. The do wnstream am bient AI mo del – near critical-ev ent detection mo del – trained and v alidated on syn thetic data, serves as a pro of-of-concept. Comprehensive ev aluation on real OR images and the impact of am bient in telligence in enhancing hospital performance – clinical and financial – remains to be studied. F uture w ork includes: (i) further improving the video-diffusion mo del’s p erfor- mance. Although integrating a P atchGAN loss during fine-tuning has impro ved fidelit y , the resolution and clarity of moving entities degrade as the video progresses and deviates from the groundtruth even t; architectural mo difications to enhance tem- p oral fidelity and consistency are a promising direction. (ii) In tro ducing scalable, in tuitive conditioning for explicit fine-grained articulation conditioning (e.g., OR p er- sonnel reaching for instruments), extending b ey ond the current framew ork’s explicit spatial (tra jectory) conditioning. (iii) Reducing reliance on manual SAM2 prompts at inference b y developing a scalable data-curation pip eline that automates geometric abstraction via zero-shot en tity detection, enabling large-scale processing of public OR datasets. (iv) Comprehensive v alidation, including clinical v alidation, on framew ork generalizabilit y to real and diverse OR environmen ts. Declarations F unding: This work was funded b y the National Science F oundation, under Gran t No. 2239077. The conten t is solely the resp onsibilit y of the authors and do es not necessarily represen t the official views of the National Science F oundation. Comp eting in terests: The authors hav e no competing interests. Ethics appro v al: This is a computational study inv olving no human participan ts or animals and is based on publicly av ailable datasets. No ethical appro v al was required. Informed consent: Not applicable. Author contributions: The first t wo authors contributed equally to this w ork. References [1] Saeedian, M., Sep ehri, M.M., Jalalimanesh, A., Shadp our, P .: Op erating room 11 orc hestration by using agent-based sim ulation. P eriop erativ e care and op erating ro om management 15 , 100074 (2019) [2] Healey , T., El-Othmani, M.M., Healey , J., Peterson, T.C., Saleh, K.J.: Improving op erating room efficiency , part 1: general managerial and preop erativ e strategies. JBJS reviews 3 (10), 3 (2015) [3] Phan, K., Kim, J.S., Kim, J.H., Somani, S., Di’Capua, J., Do wdell, J.E., Cho, S.K.: Anesthesia duration as an indep enden t risk factor for early p ostoperative complications in adults undergoing electiv e acdf. Global spine journal 7 (8), 727– 734 (2017) [4] Cheng, H., Chen, B.P .-H., Soleas, I.M., F erko, N.C., Cameron, C.G., Hinoul, P .: Prolonged op erativ e duration increases risk of surgical site infections: a systematic review. Surgical infections 18 (6), 722–735 (2017) [5] Ko c h, A., Burns, J., Catchpole, K., W eigl, M.: Associations of workflo w disrup- tions in the op erating ro om with surgical outcomes: a systematic review and narrativ e synthesis. BMJ Qualit y & Safet y 29 (12), 1033–1045 (2020) [6] Meara, J.G., Leather, A.J., Hagander, L., Alkire, B.C., Alonso, N., Ameh, E.A., Bic kler, S.W., Conteh, L., Dare, A.J., Davies, J., et al. : Global surgery 2030: evidence and solutions for achieving health, w elfare, and economic developmen t. The lancet 386 (9993), 569–624 (2015) [7] W eiser, T.G., Regenbogen, S.E., Thompson, K.D., Haynes, A.B., Lipsitz, S.R., Berry , W.R., Gaw ande, A.A.: An estimation of the global volume of surgery: a mo delling strategy based on av ailable data. The lancet 372 (9633), 139–144 (2008) [8] Vladu, A., Ghitea, T.C., Daina, L.G., T , ˆ ırt , , D.P ., Daina, M.D.: Enhancing op erating ro om efficiency: The impact of computational algorithms on surgical sc heduling and team dynamics. In: Healthcare, vol. 12, p. 1906 (2024). MDPI [9] Saito, M., Matsumoto, E., Saito, S.: T emp oral generative adversarial nets with singular v alue clipping. In: Pro ceedings of the IEEE International Conference on Computer Vision, pp. 2830–2839 (2017) [10] Peebles, W., Xie, S.: Scalable diffusion mo dels with transformers. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 4195–4205 (2023) [11] Blattmann, A., Dockhorn, T., Kulal, S., Mendelevitc h, D., Kilian, M., Lorenz, D., Levi, Y., English, Z., V oleti, V., Letts, A., et al.: Stable video diffusion: Scaling laten t video diffusion mo dels to large datasets. arXiv preprint (2023) [12] W an, T., W ang, A., Ai, B., W en, B., Mao, C., Xie, C.-W., Chen, D., Y u, F., 12 Zhao, H., Y ang, J., et al.: W an: Op en and adv anced large-scale video generative mo dels. arXiv preprint arXiv:2503.20314 (2025) [13] Chen, T., Y ang, S., W ang, J., Bai, L., Ren, H., Zhou, L.: Surgsora: Ob ject-a ware diffusion mo del for con trollable surgical video generation. In: International Con- ference on Medical Image Computing and Computer-Assisted In terven tion, pp. 521–531 (2025). Springer [14] Ravi, N., Gab eur, V., Hu, Y.-T., Hu, R., Ryali, C., Ma, T., Khedr, H., R¨ adle, R., Rolland, C., Gustafson, L., et al.: Sam 2: Segment an ything in images and videos. arXiv preprin t arXiv:2408.00714 (2024) [15] Chen, S., Guo, H., Zhu, S., Zhang, F., Huang, Z., F eng, J., Kang, B.: Video depth an ything: Consisten t depth estimation for sup er-long videos. In: Pro ceedings of the Computer Vision and P attern Recognition Conference, pp. 22831–22840 (2025) [16] ¨ Ozso y , E., Pellegrini, C., Czempiel, T., T ristram, F., Y uan, K., Bani-Harouni, D., Ec k, U., Busam, B., Keic her, M., Nav ab, N.: Mm-or: A large multimodal operating ro om dataset for seman tic understanding of high-intensit y surgical environmen ts. In: Pro ceedings of the Computer Vision and P attern Recognition Conference, pp. 19378–19389 (2025) [17] HaCohen, Y., Chiprut, N., Brazo wski, B., Shalem, D., Moshe, D., Ric hardson, E., Levin, E., Shiran, G., Zabari, N., Gordon, O., et al.: Ltx-video: Realtime video laten t diffusion. arXiv preprint arXiv:2501.00103 (2024) [18] Isola, P ., Zh u, J.-Y., Zhou, T., Efros, A.A.: Image-to-image translation with condi- tional adversarial net works. In: Proceedings of the IEEE Conference on Computer Vision and P attern Recognition, pp. 1125–1134 (2017) [19] ¨ Ozso y , E., ¨ Ornek, E.P ., Eck, U., Czempiel, T., T ombari, F., Nav ab, N.: 4d- or: Semantic scene graphs for or domain mo deling. In: International Conference on Medical Image Computing and Computer-assisted Interv ention, pp. 475–485 (2022). Springer [20] W u, H., Zhang, E., Liao, L., Chen, C., Hou, J., W ang, A., Sun, W., Y an, Q., Lin, W.: Exploring video quality assessmen t on user generated conten ts from aes- thetic and technical p erspectives. In: Proceedings of the IEEE/CVF In ternational Conference on Computer Vision, pp. 20144–20154 (2023) [21] Salimans, T., Go o dfello w, I., Zarem ba, W., Cheung, V., Radford, A., Chen, X.: Impro ved techniques for training gans. Adv ances in neural information pro cessing systems 29 (2016) [22] Yin, S., W u, C., Liang, J., Shi, J., Li, H., Ming, G., Duan, N.: Dragnu w a: Fine- grained control in video generation b y integrating text, image, and tra jectory . 13 arXiv preprin t arXiv:2308.08089 (2023) [23] He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recogni- tion. In: Pro ceedings of the IEEE Conference on Computer Vision and P attern Recognition (CVPR), pp. 770–778 (2016) [24] Dosovitskiy , A., Beyer, L., Kolesniko v, A., W eissenborn, D., Zhai, X., Unterthiner, T., Dehghani, M., Minderer, M., Heigold, G., Gelly , S., Uszkoreit, J., Houlsby , N.: An image is w orth 16x16 w ords: T ransformers for image recognition at scale. arXiv preprin t arXiv:2010.11929 (2020) 14

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment