Archetypal Graph Generative Models: Explainable and Identifiable Communities via Anchor-Dominant Convex Hulls

Representation learning has been essential for graph machine learning tasks such as link prediction, community detection, and network visualization. Despite recent advances in achieving high performance on these downstream tasks, little progress has …

Authors: Nikolaos Nakis, Chrysoula Kosma, Panagiotis Promponas

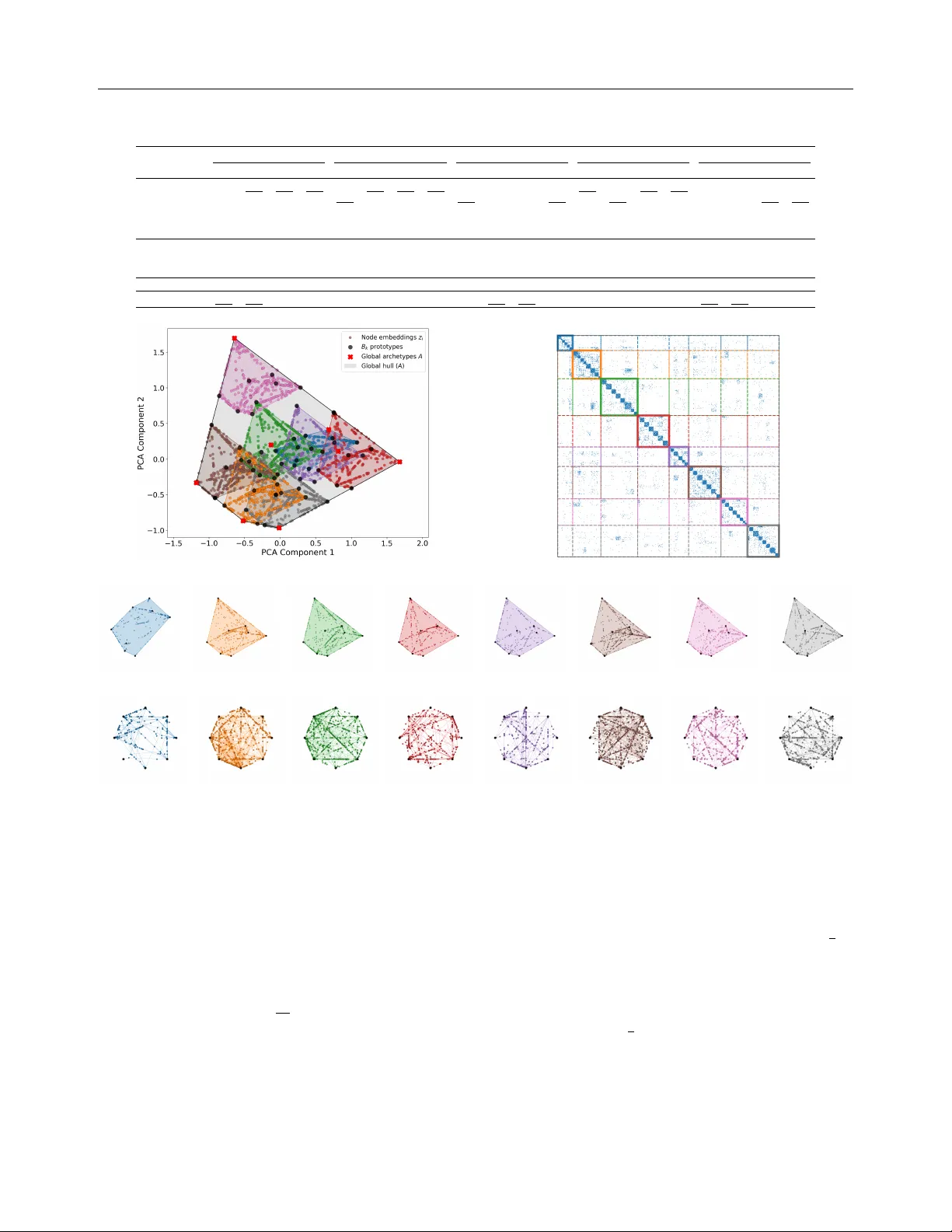

Nikolaos Nakis†, Chrysoula Kosma‡, Panagiotis Promponas†, Michail Chatzianastasis♣, Giannis Nikolentzos♠

† Yale Institute for Network Science, Yale University, USA
‡ Université Paris Saclay, Université Paris Cité, ENS Paris Saclay, CNRS, SSA, INSERM, Centre Borelli, France
♣ École Polytechnique, Paris, France
♠ Department of Informatics and Telecommunications, University of Peloponnese, Greece

Abstract

Representation learning has been essential for graph machine learning tasks such as link prediction, community detection, and network visualization. Despite recent advances in achieving high performance on these downstream tasks, little progress has been made toward self-explainable models. Understanding the patterns behind predictions is equally important, motivating recent interest in explainable machine learning. In this paper, we present GraphHull, an explainable generative model that represents networks using two levels of convex hulls. At the global level, the vertices of a convex hull are treated as archetypes, each corresponding to a pure community in the network. At the local level, each community is refined by a prototypical hull whose vertices act as representative profiles, capturing community-specific variation. This two-level construction yields clear multi-scale explanations: a node's position relative to the global archetypes and its local prototypes directly accounts for its edges. The geometry is well-behaved by design, while local hulls are kept disjoint by construction. To further encourage diversity and stability, we place principled priors, including determinantal point processes, and fit the model under MAP estimation with scalable subsampling.
Experiments on real networks demonstrate the ability of GraphHull to recover multi-level community structure and to achieve competitive or superior performance in link prediction and community detection, while naturally providing interpretable predictions.

Proceedings of the 29th International Conference on Artificial Intelligence and Statistics (AISTATS) 2026, Tangier, Morocco. PMLR: Volume 300. Copyright 2026 by the author(s).

1 Introduction

In recent years, machine learning on graphs has attracted considerable attention. This is not surprising, since data from many different domains can be modeled as graphs. For instance, molecules (Gilmer et al., 2017), proteins (Gligorijević et al., 2021), and computer programs (Cheng et al., 2021) are commonly represented as graphs. Graph learning approaches mainly map elements of the graph into a low-dimensional vector space while preserving the graph structure. A significant amount of research effort has been devoted to node embedding approaches, i.e., algorithms that map the nodes of a graph into a low-dimensional space (Cui et al., 2018). These methods typically use shallow neural network models to learn embeddings in an unsupervised manner, and the resulting embeddings can then serve as input for various downstream tasks. Graph neural networks (GNNs) are another family of graph learning algorithms, mainly applied to supervised learning problems. These models follow a message passing procedure, where each node updates its feature vector by aggregating the feature vectors of its neighbors (Wu et al., 2020).

Unsupervised learning and clustering methods play a central role in revealing latent structure and intrinsic organization in complex datasets. Among such approaches, Archetypal Analysis (AA) (Cutler and Breiman, 1994) provides a geometrically interpretable framework for representing data constrained to a $K$-dimensional polytope.
In AA, each observation is expressed as a convex combination of extremal points, the archetypes, which correspond to the vertices of the polytope and capture pure, representative profiles of the data. Beyond its original formulation, AA has been studied extensively from both machine learning and geometric perspectives (Mørup and Hansen, 2012; Damle and Sun, 2015), highlighting connections to matrix factorization and convex geometry. Its geometric interpretation has also provided insight into trade-offs and Pareto-optimal structure in biological systems (Shoval et al., 2012). Over the years, AA has been successfully applied to diverse domains such as computer vision (Chen et al., 2014) and population genetics (Gimbernat-Mayol et al., 2022), while significant effort has been devoted to improving its scalability and robustness (Eugster and Leisch, 2011; Bauckhage and Thurau, 2009). A comprehensive overview of methodological developments and applications is provided in the recent survey of Alcacer et al. (2025). More recently, AA has been generalized to relational settings (Nakis et al., 2025b, 2023a), enabling applications to network data.

One prominent application of graph learning algorithms is community detection (a.k.a. clustering), i.e., the problem of discovering clusters of nodes, with many edges joining nodes of the same cluster and comparatively few edges joining nodes of different clusters (Fortunato, 2010). In recent years, several graph learning algorithms have been proposed that can assign nodes to communities while maintaining high clustering quality (Li et al., 2018; Shchur and Günnemann, 2019; Chen et al., 2019b; Sun et al., 2020). Despite the success of these models in detecting communities in real-world networks, their lack of transparency still limits their application scope.
Similar concerns have been raised in various domains, including healthcare and criminal justice (Rudin, 2019), and have led to the development of the field of explainable artificial intelligence (XAI) (Ribeiro et al., 2016; Ying et al., 2019; Gautam et al., 2022). XAI builds methods that justify a model's predictions, thus increasing transparency, trustworthiness, and fairness. However, existing community detection approaches generally focus on optimizing clustering quality rather than on providing explanations for the learned communities. Moreover, current explainable graph learning methods mostly target node classification tasks, leaving the problem of interpretable community detection relatively underexplored. To the best of our knowledge, no existing approach provides a principled generative framework that jointly achieves accurate community detection and inherent interpretability.

In this paper, we introduce GraphHull, a novel generative framework for explainable graph representation learning. The method relies on archetypes (Cutler and Breiman, 1994), extreme profiles that summarize the diversity of structures found in a graph.
Our contributions are:
(i) Hierarchical geometric design, by proposing a two-level convex-hull architecture in which global archetypes define extreme community profiles and anchor-dominant local hulls introduce interpretable prototypes for community-specific variation;
(ii) Identifiability by construction, by proving that local hulls are non-overlapping, which guarantees unique node reconstructions and transparent community assignments;
(iii) Stable and scalable inference, by deriving a Lipschitz continuity result for the MAP objective that enables provably safe optimization, and by reducing likelihood evaluation from $O(N^2)$ to $O(|E|)$ via unbiased subsampling;
(iv) Principled diversity, through determinantal point process priors on both global and local archetypes that encourage a non-degenerate and expressive latent geometry; and
(v) Diverse empirical validation, via the competitive or superior performance of GraphHull on multiple real-world networks for link prediction and community detection, accompanied by interpretable multi-scale explanations.

Overall, our geometric design enforces non-overlapping convex hulls anchored at global archetypes, yielding community embeddings that are simultaneously unique, interpretable, and diverse. Code is available here: https://github.com/Nicknakis/GraphHull

2 Related Work

Graph learning for community detection. Community detection has been approached using both shallow embedding methods and deeper models such as GNNs. The standard pipeline embeds nodes into a low-dimensional vector space and then applies clustering algorithms like k-means. Unsupervised node representation learning methods include random walk–based approaches, matrix factorization, and autoencoders.
Although shallow embeddings were once considered less effective, methods such as DeepWalk (Perozzi et al., 2014) and node2vec (Grover and Leskovec, 2016a) have recently been shown to achieve the optimal detectability limit under the stochastic block model (Kojaku et al., 2024). General-purpose embeddings often fail to capture community structure, motivating approaches that jointly preserve graph structure and optimize clustering objectives, e.g., modularity (Sun et al., 2020). Other works introduce community embeddings alongside node embeddings (Cavallari et al., 2017), or use nonnegative matrix factorization to directly encode community structure (Li et al., 2018). GNNs have been applied to community detection, e.g., with belief-propagation-inspired message passing (Chen et al., 2019b; Wang et al., 2025), Bernoulli–Poisson models for overlapping communities (Shchur and Günnemann, 2019), and node classification formulations (Wang et al., 2021). Autoencoder approaches embed nodes and then cluster them (Yang et al., 2016; Tian et al., 2014; Xie et al., 2019), often with GNN encoders (Wang et al., 2017a; He et al., 2021), or unify embedding and clustering in a single framework (Wang et al., 2019; Chen et al., 2019a; Choong et al., 2018).

Explainable graph learning algorithms. Graph explainability methods fall into two categories: post-hoc approaches and self-explainable models. Post-hoc methods explain trained GNNs by identifying influential subgraphs (Ying et al., 2019; Yuan et al., 2020; Vu and Thai, 2020; Luo et al., 2020; Yuan et al., 2021; Lin et al., 2021; Henderson et al., 2021), but are sensitive to perturbations and thus less reliable (Li et al., 2024).
Self-explainable models are interpretable by design, often via motifs (Lin et al., 2020; Serra and Niepert, 2024; Miao et al., 2022) or prototypes (Zhang et al., 2022; Ragno et al., 2022; Dai and Wang, 2021), with recent work formalizing their expressivity (Chen et al., 2024) and providing synthetic data for benchmarking (Agarwal et al., 2023). Our approach is related to prototype-based methods, but instead learns archetypes. In signed networks, archetypal embeddings have also been used to obtain interpretable community structure (Nakis et al., 2025b,a).

3 Proposed Method

Preliminaries. Let $G = (V, E)$ be a simple undirected graph with $N = |V|$ nodes and adjacency matrix $\mathbf{Y} \in \{0,1\}^{N \times N}$, where $Y_{ij} = Y_{ji}$ and $Y_{ii} = 0$. Capital bold letters such as $\mathbf{X}$ denote matrices, small bold letters such as $\mathbf{x}$ denote vectors, and non-bold letters such as $x$ denote scalars. We next extend archetypal analysis to hierarchical nested structures by introducing a convex-hull formulation that captures latent structural organization in an inherently interpretable and generative manner, yielding GraphHull, illustrated in Figure 1. A high-level generative process of the model is given in Algorithm 1 (the detailed generative process is provided in the supplementary material).

Global archetypes. Given a graph $G$, we aim to embed nodes in a $D$-dimensional latent space that is constrained to a $(D-1)$-dimensional polytope. Each node's embedding is represented as a convex combination of the polytope's vertices, where each vertex corresponds to an archetype capturing a pure, extreme profile of the network (Nakis et al., 2023a, 2025b). Formally, we learn a matrix of archetypes

$$\mathbf{A} = [\mathbf{a}_1^\top; \ldots; \mathbf{a}_K^\top] \in \mathbb{R}^{K \times D}, \qquad K \le D, \tag{1}$$

where each row defines a corner of the polytope.
This geometric characterization couples communities with extreme archetypal profiles, yielding interpretable latent factors that explain network structure. Such an archetypal analysis of the network can successfully capture global characteristics, but it disregards potential structure within each archetype, motivating the need for a multi-scale extremal organization of the network.

Algorithm 1: Generative process
1: Global archetypes: Sample $\mathbf{A} \in \mathbb{R}^{K \times D}$ using the boxed SVD parameterization.
2: Local hulls: For each community $k = 1, \ldots, K$, construct prototypes $\mathbf{B}_k = \tilde{\mathbf{W}}_k \mathbf{A}$ with anchor-dominant rows (truncated Dirichlet, $\varepsilon$). If $\varepsilon < \tfrac{1}{2}$, then $\operatorname{conv}(\mathbf{B}_k) \cap \operatorname{conv}(\mathbf{B}_\ell) = \emptyset$ for $k \neq \ell$.
3: Diversity priors: Place DPP priors on the rows of $\mathbf{A}$ and of each $\mathbf{B}_k$ to encourage spread.
4: Communities and nodes: Draw community proportions $\boldsymbol{\pi}$, assignments $c_i \sim \mathrm{Cat}(\boldsymbol{\pi})$, and barycentric weights $\boldsymbol{\omega}_i \sim \mathrm{Dir}(\alpha_\omega)$, and set $\mathbf{z}_i = \boldsymbol{\omega}_i^\top \mathbf{B}_{c_i}$. Draw degree biases $g_i \sim \mathcal{N}(0, \tau_g^2)$.
5: Global scale: Draw $s \sim \mathrm{HalfNormal}(\tau_s)$.
6: Edges: For each $i < j$, sample $Y_{ij} \sim \mathrm{Bern}\big(\sigma(s\,\mathbf{z}_i^\top \mathbf{z}_j + g_i + g_j)\big)$.

A key design choice in our model is how to construct the global archetype matrix $\mathbf{A}$, which defines the vertices of the global convex hull in latent space. To ensure stability, we parameterize $\mathbf{A}$ in an SVD-like form,

$$\mathbf{A} = \mathbf{U}\,\mathrm{diag}(\boldsymbol{\sigma})\,\mathbf{V}^\top, \tag{2}$$

where $\mathbf{U} \in \mathbb{R}^{K \times K}$ and $\mathbf{V} \in \mathbb{R}^{D \times K}$ have orthonormal columns, and $\boldsymbol{\sigma} \in \mathbb{R}^K$ contains the singular values, constrained to lie within fixed bounds $[\sigma_{\min}, \sigma_{\max}]$. This construction has three advantages: (i) it produces archetypes that span approximately orthogonal directions, reducing the risk of degeneracy; (ii) the bounded singular values control the overall scale of inner products, preventing either collapse or explosion of the latent embeddings; and (iii) the separation of orientation (via $\mathbf{U}, \mathbf{V}$) and scale (via $\boldsymbol{\sigma}$) reduces redundant variability and stabilizes inference.
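To make Eq. (2) concrete, here is a minimal pure-Python sketch, not the authors' implementation. Two choices are our assumptions, since the text only says that $\boldsymbol{\sigma}$ is kept within fixed bounds: $\mathbf{U}$ and $\mathbf{V}$ are drawn by Gram-Schmidt on Gaussian vectors, and the hypothetical helper `boxed_sigma` maps unconstrained parameters into $[\sigma_{\min}, \sigma_{\max}]$ with a sigmoid. The defaults 0.3 and 1.5 are the bounds reported in the Results section. The final check uses $\det(\mathbf{A}\mathbf{A}^\top) = \prod_k \sigma_k^2$.

```python
import math
import random


def orthonormal_columns(num_rows, num_cols, rng):
    """Return num_cols orthonormal vectors of length num_rows (Gram-Schmidt on Gaussian draws)."""
    basis = []
    for _ in range(num_cols):
        v = [rng.gauss(0.0, 1.0) for _ in range(num_rows)]
        for b in basis:  # remove components along previously accepted directions
            proj = sum(vi * bi for vi, bi in zip(v, b))
            v = [vi - proj * bi for vi, bi in zip(v, b)]
        norm = math.sqrt(sum(vi * vi for vi in v))
        basis.append([vi / norm for vi in v])
    return basis  # basis[k] is the k-th column


def boxed_sigma(raw, s_min=0.3, s_max=1.5):
    """Hypothetical squashing of unconstrained parameters into [s_min, s_max] via a sigmoid."""
    return [s_min + (s_max - s_min) / (1.0 + math.exp(-r)) for r in raw]


def boxed_svd_archetypes(K, D, raw_sigma, rng):
    """A = U diag(sigma) V^T with U (K x K) and V (D x K) having orthonormal columns (Eq. 2)."""
    assert K <= D
    U = orthonormal_columns(K, K, rng)  # U[k][i] = U_{i,k}
    V = orthonormal_columns(D, K, rng)  # V[k][d] = V_{d,k}
    sigma = boxed_sigma(raw_sigma)
    A = [[sum(U[k][i] * sigma[k] * V[k][d] for k in range(K)) for d in range(D)]
         for i in range(K)]
    return A, sigma


def det(M):
    """Determinant by Gaussian elimination with partial pivoting."""
    M = [row[:] for row in M]
    n, result = len(M), 1.0
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        if M[p][c] == 0.0:
            return 0.0
        if p != c:
            M[c], M[p] = M[p], M[c]
            result = -result  # a row swap flips the sign of the determinant
        result *= M[c][c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n):
                M[r][j] -= f * M[c][j]
    return result


rng = random.Random(0)
K, D = 4, 6
A, sigma = boxed_svd_archetypes(K, D, [rng.gauss(0.0, 1.0) for _ in range(K)], rng)
gram = [[sum(x * y for x, y in zip(A[i], A[j])) for j in range(K)] for i in range(K)]
# det(A A^T) = prod(sigma_k^2) >= s_min^(2K) > 0, so the rows of A are linearly independent.
print(det(gram), math.prod(s * s for s in sigma))
```

Because the Gram determinant of the archetypes is the product of the squared singular values, any strictly positive lower bound on $\boldsymbol{\sigma}$ rules out linearly dependent archetypes, whatever $\mathbf{U}$ and $\mathbf{V}$ turn out to be.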
Enforcing $\sigma_{\min} > 0$ guarantees that the rows of $\mathbf{A}$ are linearly independent, so that $\mathbf{A}$ always spans a well-conditioned latent basis.

Anchor-dominant local hulls. We posit $K$ global archetypes collected in $\mathbf{A} \in \mathbb{R}^{K \times D}$, each serving as an extremal profile that defines a latent community in the network. To capture community-specific structure at a finer resolution, for each vertex (archetype) $k \in \{1, \ldots, K\}$ of the global hull we introduce a local prototypical hull $\mathbf{B}_k \in \mathbb{R}^{K \times D}$. Each node will be represented as a convex combination of the $K$ rows of some $\mathbf{B}_k$, yielding interpretable community-aware prototypes. The idea parallels prototype-based modeling in interpretable image generation (Gautam et al., 2022; Kjærsgaard et al., 2024), but here it is adapted to relational data, where both global and local structure must be inferred jointly. At the global level, $\mathbf{A}$ contains extremal archetypes that define pure community profiles. At the local level, each hull $\mathbf{B}_k$ refines a community by introducing vertices that act as prototypes (local archetypes), i.e., representative subprofiles within the community. For enhanced identifiability, each local hull $\mathbf{B}_k$ is anchored to a distinct global archetype $\mathbf{a}_k^\top$.

[Figure 1 labels: Input Network; Latent Archetypal Space; global archetypes; global hull; convex-combination vertices; latent variable; Communities, Nodes, Global Scale; Edge Generation; MAP Estimation; Output Network]
Figure 1: Visualization of the proposed archetypal graph generative model, named GraphHull.

We write

$$\mathbf{B}_k = \tilde{\mathbf{W}}_k \mathbf{A} = \begin{bmatrix} \mathbf{W}_k \\ \mathbf{e}_k^\top \end{bmatrix} \mathbf{A} \in \mathbb{R}^{K \times D}, \qquad k = 1, \ldots, K, \tag{3}$$

where the full barycentric coordinates (including the anchor) are given by $\tilde{\mathbf{W}}_k$, $\mathbf{e}_k^\top \in \mathbb{R}^{1 \times K}$ is the $k$-th standard basis row (so the last row of $\mathbf{B}_k$ equals the anchor $\mathbf{a}_k^\top$), and $\mathbf{W}_k \in \mathbb{R}^{(K-1) \times K}$ is a community-specific row-simplex matrix whose rows are convex weights over the global archetypes:

$$(\mathbf{W}_k)_{\ell,:} \in \Delta^{K-1} := \{\mathbf{w} \in \mathbb{R}^K : \mathbf{w} \ge \mathbf{0},\ \mathbf{1}^\top \mathbf{w} = 1\}, \qquad \ell = 1, \ldots, K-1.$$

Generatively, selecting the anchor can be regarded as sampling a global archetype without replacement and fixing it as a vertex of the local hull. The remaining $K-1$ vertices of $\mathbf{B}_k$ are constrained to be convex combinations of the global archetypes, ensuring that local extremes are explained hierarchically by the global structure, as prototypes. Thus, $\mathbf{B}_k$ has $K$ rows (vertices in $\mathbb{R}^D$): one fixed anchor at the global archetype $\mathbf{a}_k^\top$ and $K-1$ prototypes whose rows are convex combinations of $\{\mathbf{a}_j^\top\}_{j=1}^K$. Importantly, $\mathbf{B}_1, \ldots, \mathbf{B}_K$ are distinct (each has its own coefficient matrix $\mathbf{W}_k$) and are anchored to different global archetypes.

Non-overlapping local hulls by construction. A central property of our design is local hull identifiability: for each pair of communities $k \neq \ell$, the convex hulls should satisfy $\operatorname{conv}(\mathbf{B}_k) \cap \operatorname{conv}(\mathbf{B}_\ell) = \emptyset$. This disjointness ensures that every node embedding has a unique reconstruction: if two local hulls were to overlap, any point in the intersection could be expressed as a convex combination of prototypes from either hull, breaking the identifiability and interpretability of the representation. One could attempt to discourage overlap by adding priors that encourage hull separation, but such priors provide only a soft penalty and cannot guarantee non-overlap without careful tuning.
Instead, we ensure disjointness by construction, defining the local hulls $\mathbf{B}_k$ through barycentric coordinates $\mathbf{W}_k$ sampled from a truncated Dirichlet prior. Specifically, each row $\mathbf{w}^\top$ is drawn as $\mathbf{w} \sim \mathrm{Dir}(\alpha \mathbf{1}_K)$ conditioned on $w_k \ge 1 - \varepsilon$, where $k$ denotes the anchor coordinate of hull $k$. This truncation forces every local prototype in the hull anchored at the $k$-th global archetype ($\mathbf{a}_k^\top$) to retain at least mass $1 - \varepsilon$ on its anchor. For $\varepsilon < \tfrac{1}{2}$, this ensures that the anchor-dominant regions of different hulls are disjoint, so the induced convex hulls do not overlap (cf. Lemma 3.1; proof in the supplementary material).

Lemma 3.1 (Non-overlap of anchor-dominant local hulls). Let $\mathbf{A} \in \mathbb{R}^{K \times D}$ be affinely independent global archetypes. For each community $k \in \{1, \ldots, K\}$, let its local barycentric rows $\mathbf{w} \in \Delta^{K-1}$ be drawn from a truncated Dirichlet prior $\mathbf{w} \sim \mathrm{Dir}(\alpha \mathbf{1}_K)$ conditioned on $w_k \ge 1 - \varepsilon$, with $0 < \varepsilon < \tfrac{1}{2}$. Let $\tilde{\mathbf{W}}_k$ stack $K$ such rows, with the final row set to $\mathbf{e}_k^\top$, and define the local vertices $\mathbf{B}_k = \tilde{\mathbf{W}}_k \mathbf{A}$. Then, for any $k \neq \ell$, $\operatorname{conv}(\mathbf{B}_k) \cap \operatorname{conv}(\mathbf{B}_\ell) = \emptyset$.

In practice, we realize this truncated Dirichlet prior by parameterizing each row of $\mathbf{W}_k \in \mathbb{R}^{(K-1) \times K}$ directly. Let $\varepsilon \in (0, \tfrac{1}{2})$ bound the mass placed off the anchor. For rows $r = 1, \ldots, K-1$ we set

$$\begin{aligned}
(\mathbf{W}_k)_{r,:} &= (1 - s_{k,r})\,\mathbf{e}_k^\top + s_{k,r}\,\tilde{\mathbf{q}}_{k,r}^\top, \\
(\mathbf{W}_k)_{r,:} &\in \Delta^{K-1}, \qquad s_{k,r} = \varepsilon\, t_{k,r}, \qquad t_{k,r} \in (0, 1),
\end{aligned} \tag{4}$$

where $\mathbf{e}_k$ is the $k$-th basis vector and $\tilde{\mathbf{q}}_{k,r} \in \Delta^{K-1}$ is a probability vector supported only on $\{1, \ldots, K\} \setminus \{k\}$ (i.e., with zero mass on the anchor coordinate), on whose $K-1$ non-anchor entries we can place a Dirichlet prior $\tilde{\mathbf{q}}_{k,r} \sim \mathrm{Dir}(\alpha_q \mathbf{1}_{K-1})$. The final row is set to $\mathbf{e}_k^\top$, yielding $\tilde{\mathbf{W}}_k \in \mathbb{R}^{K \times K}$, and the local vertices are obtained as $\mathbf{B}_k = \tilde{\mathbf{W}}_k \mathbf{A} \in \mathbb{R}^{K \times D}$.
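The pure-Python sketch below mirrors Eq. (4) and a simple argument consistent with Lemma 3.1; it is an illustration, not the authors' code. Assumptions: indices are 0-based, the hyperparameters $\alpha_q = 1$ and $\mathrm{Beta}(2, 2)$ are placeholders, and the disjointness check works on barycentric coordinates with respect to $\mathbf{A}$ rather than on points in $\mathbb{R}^D$, which is legitimate because affinely independent archetypes give every point of their hull unique barycentric coordinates.

```python
import random


def dirichlet(alpha, n, rng):
    """Sample Dir(alpha * 1_n) by normalizing independent Gamma(alpha, 1) draws."""
    g = [rng.gammavariate(alpha, 1.0) for _ in range(n)]
    total = sum(g)
    return [x / total for x in g]


def anchor_dominant_rows(k, K, eps, rng, alpha_q=1.0, a=2.0, b=2.0):
    """Build the K x K matrix W~_k: K-1 rows from Eq. (4) plus the anchor row e_k.

    k is a 0-based anchor index; alpha_q, a, b are illustrative prior values (assumptions).
    """
    rows = []
    for _ in range(K - 1):
        t = rng.betavariate(a, b)               # t_{k,r} ~ Beta(a, b), in (0, 1)
        s = eps * t                             # shrink s_{k,r} = eps * t_{k,r} < eps
        q_off = dirichlet(alpha_q, K - 1, rng)  # mass on the K-1 non-anchor coordinates
        q = q_off[:k] + [0.0] + q_off[k:]       # q~_{k,r}: zero on the anchor coordinate
        row = [s * qj for qj in q]
        row[k] += 1.0 - s                       # (1 - s) e_k + s q~
        rows.append(row)
    rows.append([1.0 if j == k else 0.0 for j in range(K)])  # final row: the anchor e_k
    return rows


def random_hull_point(W_tilde, rng):
    """Barycentric coordinates (w.r.t. A) of a random point in conv(B_k) = conv(W~_k A)."""
    lam = dirichlet(1.0, len(W_tilde), rng)
    return [sum(w * row[j] for w, row in zip(lam, W_tilde)) for j in range(len(W_tilde[0]))]


K, eps = 5, 0.45
rng = random.Random(1)
W = [anchor_dominant_rows(k, K, eps, rng) for k in range(K)]
points = {k: [random_hull_point(W[k], rng) for _ in range(200)] for k in range(K)}

# Every point of hull k keeps at least 1 - eps > 1/2 of its mass on anchor k, so it cannot
# also carry more than 1/2 on another anchor; with unique barycentric coordinates,
# no point can belong to two hulls.
min_own = min(p[k] for k in range(K) for p in points[k])
max_other = max(p[other] for k in range(K) for p in points[k] for other in range(K) if other != k)
print(min_own, max_other)
```

Each row's anchor entry equals $1 - s_{k,r} > 1 - \varepsilon$, and convex combinations of rows cannot push the anchor coordinate below the smallest row value. For this reason, the sampled minimum own-anchor mass stays above $1 - \varepsilon$ and the largest off-anchor coordinate stays below $\varepsilon$.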
This anchor-dominant parameterization is equivalent to sampling from the truncated Dirichlet in Lemma 3.1, but it is simpler to implement in practice and preserves the disjointness guarantee. Finally, the strength of anchor dominance in Eq. (4) is controlled by the shrink variable $s_{k,r} = \varepsilon\, t_{k,r}$, where $t_{k,r} \in (0, 1)$ is given a Beta prior $t_{k,r} \sim \mathrm{Beta}(a, b)$.

Node representation. With the global and local archetypal structure in place, we can define the final node representation. Each node is assigned to exactly one global community/archetype, i.e., to one local hull. We encode this assignment with the one-hot matrix $\mathbf{M} \in \{0,1\}^{N \times K}$. Conceptually, each row $\mathbf{m}_i^\top$ corresponds to drawing the assignment $c_i$ from a categorical distribution over the $K$ global archetypes, so that $\mathbf{m}_i^\top$ is a one-hot vector indicating the unique hull to which node $i$ belongs: if node $i$ belongs to community $c_i$, then the $i$-th row of $\mathbf{M}$ is $\mathbf{m}_i^\top = \mathbf{e}_{c_i}^\top$. Within its community, the position of the node is given by barycentric coordinates $\boldsymbol{\omega}_i \in \Delta^{K-1}$ over the local archetypes $\mathbf{B}_{c_i} \in \mathbb{R}^{K \times D}$. Thus, the embedding of node $i$ is simply

$$\mathbf{z}_i = \boldsymbol{\omega}_i^\top \mathbf{B}_{c_i} \in \mathbb{R}^D. \tag{5}$$

Stacking all nodes yields the final representation matrix $\mathbf{Z} = [\mathbf{z}_1^\top; \ldots; \mathbf{z}_N^\top] \in \mathbb{R}^{N \times D}$, while $\boldsymbol{\Omega} = [\boldsymbol{\omega}_1^\top; \ldots; \boldsymbol{\omega}_N^\top] \in \mathbb{R}^{N \times K}$ collects the barycentric weights. For the node-specific barycentric weights $\boldsymbol{\omega}_i$ over the $K$ local archetypes of the assigned hull $\mathbf{B}_{c_i}$, we place a symmetric Dirichlet prior $\boldsymbol{\omega}_i \sim \mathrm{Dir}(\alpha_\omega \mathbf{1}_K)$ with $\alpha_\omega = 1$, which corresponds to the uniform distribution over the probability simplex. During training, we employ the Gumbel-Softmax (GS) relaxation (Jang et al., 2017) over the global assignment matrix $\mathbf{M} \in \{0,1\}^{N \times K}$, which provides a differentiable approximation to such categorical samples.

Edge likelihood. We focus on binary undirected graphs and model edges with a Bernoulli likelihood.
The formulation can be extended to weighted graphs using a Poisson likelihood and to signed graphs using a Skellam likelihood. Let $g_i \in \mathbb{R}$ be a node-specific degree bias, $\mathbf{z}_i \in \mathbb{R}^D$ the latent representation of node $i$, and $s > 0$ a global scale parameter controlling geometric sharpness. For an unordered node pair $(i, j)$ with $i < j$, the log-odds are

$$\eta_{ij} = s\,\langle \mathbf{z}_i, \mathbf{z}_j \rangle + g_i + g_j, \tag{6}$$

$$Y_{ij} \mid \mathbf{Z}, \mathbf{g}, s \sim \mathrm{Bernoulli}\big(\sigma(\eta_{ij})\big).$$

The complete log-likelihood over the set of unordered pairs $\mathcal{D} = \{(i, j) : 1 \le i < j \le N\}$ is

$$\log p(\mathbf{Y} \mid \mathbf{Z}, \mathbf{g}, s) = \sum_{(i,j) \in \mathcal{D}} \big[ Y_{ij}\,\eta_{ij} - \mathrm{softplus}(\eta_{ij}) \big], \qquad \mathrm{softplus}(x) = \log(1 + e^{x}). \tag{7}$$

We place a Gaussian prior $g_i \sim \mathcal{N}(0, \tau_g^2)$ on the degree biases and, since $s > 0$, we use a half-normal prior $s \sim \mathcal{N}_+(0, \tau_s^2)$.

Diversity of vertices within each local hull. An important characteristic of our model is the diversity and expressiveness of both the local hulls $\mathbf{B}_k$ and the global hull $\mathbf{A}$. To capture rich community structure, each hull should span a large and well-conditioned volume, rather than collapsing onto a small subspace or producing collinear vertices. To encourage this, we place a Determinantal Point Process (DPP) prior (Kulesza et al., 2012), in its L-ensemble formulation, on the set of local/global archetypes. The L-ensemble DPP requires a positive-semidefinite kernel matrix. For a convex hull, let $\boldsymbol{\Phi} \in \mathbb{R}^{K \times D}$ denote its $K$ vertices and define the row-normalized matrix $\boldsymbol{\Psi}$ with rows $\boldsymbol{\psi}_k = \boldsymbol{\phi}_k / \|\boldsymbol{\phi}_k\|_2$; we then form the Gram matrix $\mathbf{L} = \boldsymbol{\Psi}\boldsymbol{\Psi}^\top$. The log-prior contribution is

$$\log p_{\mathrm{DPP}}(\boldsymbol{\Phi}) = \log\det(\mathbf{L}) - \log\det(\mathbf{I} + \mathbf{L}). \tag{8}$$

This determinantal point process prior acts as a repulsive force among both the local and the global archetypes, discouraging degeneracy and collapse. Applied to the local hulls $\mathbf{B}_k$, it encourages the vertices to be diverse, so that each hull spans a rich volume within its community. Applied to the global archetypes $\mathbf{A}$, it spreads the global basis vectors apart, encouraging the communities themselves to be well separated.

Joint distribution and MAP objective.
Let $\boldsymbol{\Theta}$ denote the GraphHull parameters, with deterministic constraints $\mathbf{B}_k = \tilde{\mathbf{W}}_k \mathbf{A}$ and $\mathbf{z}_i$ given by Eq. (5). Up to constants, the joint distribution factorizes as

$$\begin{aligned}
\log p(\mathbf{Y}, \boldsymbol{\Theta}) ={}& \underbrace{\sum_{(i,j) \in \mathcal{D}} \big[ Y_{ij}\,\eta_{ij} - \mathrm{softplus}(\eta_{ij}) \big]}_{\text{Bernoulli edge likelihood}}
+ \underbrace{\sum_{i=1}^{N} (\alpha_\omega - 1) \sum_{k=1}^{K} \log \omega_{ik}}_{\text{Dirichlet prior on } \boldsymbol{\omega}_i} \\
&+ \underbrace{\sum_{k=1}^{K} \sum_{r=1}^{K-1} (\alpha_q - 1) \sum_{j \neq k} \log (\tilde{\mathbf{q}}_{k,r})_j}_{\text{non-anchor Dirichlet}}
+ \underbrace{\sum_{k=1}^{K} \sum_{r=1}^{K-1} \big[ (a - 1) \log t_{k,r} + (b - 1) \log(1 - t_{k,r}) \big]}_{\text{anchor shrinkage (Beta)}} \\
&+ \underbrace{\sum_{k=1}^{K} \big[ \log\det(\mathbf{L}_k) - \log\det(\mathbf{I} + \mathbf{L}_k) \big]}_{\text{local hull DPP prior}}
+ \underbrace{\big[ \log\det(\mathbf{L}) - \log\det(\mathbf{I} + \mathbf{L}) \big]}_{\text{global hull DPP prior}}
- \frac{1}{2\tau_g^2} \sum_{i=1}^{N} g_i^2 - \frac{s^2}{2\tau_s^2},
\end{aligned} \tag{9}$$

where $\mathbf{L}_k$ and $\mathbf{L}$ are the DPP kernels of Eq. (8) built from $\mathbf{B}_k$ and $\mathbf{A}$, respectively.

Naively, evaluating the Bernoulli-logistic edge likelihood scales as $O(N^2)$ due to all pairwise interactions. To achieve scalability, we exploit the fact that the first term involves only observed edges and can be computed in $O(|E|)$, while the log-partition term is estimated by uniform subsampling of non-edges, yielding an unbiased estimator. This reduces the per-iteration cost to $O(|E|)$. The DPP prior adds a smaller overhead. For each local hull $\mathbf{B}_k \in \mathbb{R}^{K \times D}$ (and for the global archetypes $\mathbf{A}$), we compute a Gram matrix and two log-determinants, which require $O(K^2 D + K^3)$ operations. Since there are $K$ local hulls plus one global term, the total DPP cost is $O(K^3 D + K^4)$. The remaining priors contribute lower-order costs. Thus, the overall per-iteration complexity of the method is $O(|E|) + O(K^3 D + K^4)$, dominated by the linear-in-edges term for large graphs when $K \ll N$.

Optimization and curvature.
Boxing the global archetype matrix not only improves identifiability but also controls curvature: Theorem 3.2 (proof in the supplementary material) shows that the gradient with respect to the barycentric weights is globally Lipschitz, so fixed-step projected gradient descent is safe. This helps explain the stable behavior we observe in practice.

Theorem 3.2 (Boxing ⇒ Lipschitz gradient in $\boldsymbol{\omega}$). Consider the negative Bernoulli-logistic loss

$$\mathcal{L}(\mathbf{Z}, \mathbf{g}, s) = \sum_{i<j} \big[ \mathrm{softplus}(\eta_{ij}) - Y_{ij}\,\eta_{ij} \big], \qquad \eta_{ij} = s\,\langle \mathbf{z}_i, \mathbf{z}_j \rangle + g_i + g_j,$$

… where $s > 0$ is the global scale parameter. In practice, $s$ is regularized by a half-normal prior in the MAP objective, which keeps the Lipschitz constant finite.

Interpretability and Self-Explainability. Following the literature on self-explainable models (SEM) (Gautam et al., 2022), we characterize GraphHull through complementary notions of interpretability and explainability. The model is interpretable because its latent components, namely global archetypes and anchor-dominant local prototypes organized as nested convex hulls, correspond to extremal structural roles in the graph. It is explainable because each prediction admits an explicit generative decomposition: every node has a unique barycentric representation within a local hull, communities are anchored at global archetypes, and edge log-odds decompose into interpretable geometric interactions and degree effects. Thus, explanations arise directly from the model's latent geometry rather than from post-hoc analysis. Moreover, GraphHull satisfies the core SEM predicates of transparency, diversity, and trustworthiness (Gautam et al., 2022). Transparency follows from the direct use of archetypes and prototypes in the generative mechanism. Diversity is enforced via affine independence, anchor-dominant disjoint hulls, and determinantal point process priors.
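The DPP term that supports this diversity claim is Eq. (8). The pure-Python sketch below evaluates it for a small vertex matrix; it is a minimal illustration, not the authors' implementation. It assumes the vertices have linearly independent directions, so that $\mathbf{L}$ is non-singular.

```python
import math


def log_det(M):
    """log|det M| via Gaussian elimination with partial pivoting (M assumed non-singular)."""
    M = [row[:] for row in M]
    n, total = len(M), 0.0
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]  # swaps only change the sign, which abs() below discards
        pivot = M[c][c]
        total += math.log(abs(pivot))
        for r in range(c + 1, n):
            f = M[r][c] / pivot
            for j in range(c, n):
                M[r][j] -= f * M[c][j]
    return total


def dpp_log_prior(Phi):
    """Eq. (8): log det(L) - log det(I + L), with L the Gram matrix of the row-normalized vertices."""
    Psi = []
    for row in Phi:
        norm = math.sqrt(sum(x * x for x in row))
        Psi.append([x / norm for x in row])
    K = len(Psi)
    L = [[sum(x * y for x, y in zip(Psi[i], Psi[j])) for j in range(K)] for i in range(K)]
    I_plus_L = [[L[i][j] + (1.0 if i == j else 0.0) for j in range(K)] for i in range(K)]
    return log_det(L) - log_det(I_plus_L)


spread = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 3.0]]   # mutually orthogonal vertices
clumped = [[1.0, 0.0, 0.0], [1.0, 0.1, 0.0], [1.0, 0.0, 0.1]]  # nearly collinear vertices
print(dpp_log_prior(spread), dpp_log_prior(clumped))
```

Writing the prior as $\sum_i \log\frac{\lambda_i}{1+\lambda_i}$ over the eigenvalues of $\mathbf{L}$ (whose trace is $K$ after row normalization) shows that it is maximized when the normalized vertices are mutually orthogonal ($\mathbf{L} = \mathbf{I}$), giving $-K \log 2$. Because of the normalization, it depends only on vertex directions and not on their lengths: the orthogonal example scores $-3\log 2 \approx -2.08$, while the nearly collinear one scores far lower.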
Trustworthiness stems from faithful generative decompositions and the boxed-SVD parameterization, which yields stable optimization with controlled curvature. Finally, as a generative SEM, GraphHull enjoys generative consistency: the same interpretable latent factors that explain predictions also govern the probabilistic data-generation process. The hierarchical identifiability of prototypes through global archetypes further strengthens this alignment between geometry, explanation, and generation.

4 Results

We extensively evaluate GraphHull against baseline graph representation learning methods on networks of varying sizes and structures.

Table 1: Statistics of networks. N: number of nodes, |E|: number of edges, K: number of communities.

| | LastFM | Citeseer | Pol | Cora | Dblp | AstroPh | GrQc | HepTh |
|---|---|---|---|---|---|---|---|---|
| N | 7,624 | 3,327 | 18,500 | 2,708 | 27,199 | 17,903 | 5,242 | 8,638 |
| \|E\| | 55,612 | 9,104 | 61,200 | 5,278 | 66,832 | 197,031 | 14,496 | 24,827 |
| K | 14 | 6 | 2 | 7 | – | – | – | – |

All experiments were conducted on an 8 GB Apple M2 machine. For GraphHull, we optimize the MAP objective of Eq. (9) using Adam (Kingma and Ba, 2014) with a learning rate in the range [0.01, 0.05]. Unless otherwise stated, we set the anchor strength to $\varepsilon = 0.45$ and the boxed SVD bounds to $[\sigma_{\min}, \sigma_{\max}] = [0.3, 1.5]$, and choose $K = D$ for simplicity. Detailed descriptions of the hyperparameters for all models are provided in the supplementary material.

Datasets and Baselines. We evaluate on multiple undirected citation, collaboration, and social networks, each treated as unweighted (see Table 1 for details). Citation networks include Cora (machine learning publications, 7 classes) and Citeseer (computer science publications, 6 classes) (Sen et al., 2008).
Collaboration networks include DBLP (co-authorship by research field) (Perozzi et al., 2017), AstroPh (astrophysics), GrQc (general relativity and quantum cosmology), and HepTh (high-energy physics) (Leskovec et al., 2007). We also use the social network LastFM (Asian users with 14 country labels) (Rozemberczki and Sarkar, 2020) and the socio-political retweet network Pol (labels denote political alignment) (Rossi and Ahmed, 2015). We compare against shallow embeddings, matrix factorization methods, mixed-membership models, and a GNN-based approach. Shallow embeddings include Node2Vec (Grover and Leskovec, 2016b) and Role2Vec (Ahmed et al., 2018). Factorization-based methods are NetMF (Qiu et al., 2018), GraRep (Cao et al., 2015), and RandNE (Zhang et al., 2018). Mixed-membership models include NNSED (Sun et al., 2017), MNMF (Wang et al., 2017b), and SymmNMF (Kuang et al., 2012). We also evaluate Dmon (Tsitsulin et al., 2023), which couples a GNN encoder with modularity maximization (for extended details, see the supplementary material). We emphasize shallow embeddings, since they were recently shown to reach the detectability limit under the stochastic block model (Kojaku et al., 2024).

Link prediction. For link prediction, we follow the widely adopted evaluation protocol of Perozzi et al. (2014) and Nakis et al. (2023b). Specifically, we randomly remove 50% of the edges while ensuring that the residual graph remains connected. The removed edges, together with an equal number of randomly sampled non-edges, form the positive and negative instances of the test set. The residual graph is then used to learn node embeddings. We evaluate performance on five benchmark networks, each over five runs and across multiple embedding dimensions ($D \in \{8, 16, 32, 64\}$).
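The protocol above does not spell out how connectivity is preserved when removing half of the edges. One simple option, which is our assumption and not necessarily what Perozzi et al. (2014) or Nakis et al. (2023b) implement, is to fix a random spanning tree and hold out edges only from the remaining non-tree edges. In the sketch below, test negatives are drawn uniformly from node pairs that are not edges of the full graph, and the function names `split_edges` and `is_connected` are hypothetical.

```python
import random


def split_edges(num_nodes, edges, frac=0.5, seed=0):
    """Hold out `frac` of the edges as positives, keep the residual graph connected,
    and sample an equal number of non-edges as negatives.

    Assumption: connectivity is preserved by fixing a random spanning tree
    (Kruskal-style union-find over shuffled edges) and removing only non-tree edges.
    The input graph is assumed connected and sparse enough to have n_test non-edges.
    """
    rng = random.Random(seed)
    edges = [tuple(sorted(e)) for e in edges]
    rng.shuffle(edges)
    parent = list(range(num_nodes))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    tree, non_tree = [], []
    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
            tree.append((u, v))
        else:
            non_tree.append((u, v))

    n_test = int(frac * len(edges))
    if n_test > len(non_tree):
        raise ValueError("too few non-tree edges to hold out this fraction")
    test_pos = non_tree[:n_test]  # non_tree inherits the random shuffle order
    train = tree + non_tree[n_test:]

    edge_set = set(edges)
    test_neg = set()
    while len(test_neg) < n_test:
        u, v = rng.randrange(num_nodes), rng.randrange(num_nodes)
        pair = (min(u, v), max(u, v))
        if u != v and pair not in edge_set:
            test_neg.add(pair)
    return train, test_pos, sorted(test_neg)


def is_connected(num_nodes, edges):
    """Depth-first search from node 0 over an undirected edge list."""
    adj = {i: [] for i in range(num_nodes)}
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    seen, stack = {0}, [0]
    while stack:
        for w in adj[stack.pop()]:
            if w not in seen:
                seen.add(w)
                stack.append(w)
    return len(seen) == num_nodes


# Toy ring lattice: 20 nodes, each linked to its 3 clockwise neighbours (60 edges).
ring = [(i, (i + d) % 20) for i in range(20) for d in (1, 2, 3)]
train, test_pos, test_neg = split_edges(20, ring)
print(len(train), len(test_pos), len(test_neg), is_connected(20, train))
```

On the toy ring lattice, 19 of the 60 edges form the spanning tree, so 30 of the remaining 41 can be held out while the 30-edge training graph stays connected. The spanning-tree trick trades some randomness (tree edges can never be held out) for a guarantee that no node becomes isolated.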
Table 2 reports the Area Under the Receiver Operating Characteristic Curve (AUC-ROC); Precision-Recall AUC (PR-AUC) scores are given in the supplementary material. Across runs, the variance was consistently on the order of $10^{-3}$ and is omitted for readability. Following Grover and Leskovec (2016a), dyadic features for the baselines are constructed using binary operators (average, Hadamard, weighted-$L_1$, weighted-$L_2$), and a logistic regression classifier with $L_2$ regularization is trained to make predictions. In contrast, predictions from GraphHull ($\varepsilon = 0.49$) are obtained directly from the model: we use the log-odds $\eta_{ij}$ of a test pair $\{i, j\}$ to compute link probabilities, with no additional classifier required. Importantly, GraphHull is tuned solely on training sets and remains blind to the test set, whereas many baseline results are reported under test-aware tuning. Despite this advantage for the baselines, GraphHull remains highly competitive with strong baselines across datasets and embedding dimensions, achieving comparable or superior performance in many settings while maintaining consistent link prediction accuracy.

Community detection. To assess community recovery, we use the four networks with ground-truth labels. For membership-aware models, including GraphHull, we set the latent dimension equal to the number of true communities and compare the inferred memberships with the ground truth. For graph representation learning (GRL) methods without memberships, we extract embeddings of the same dimensionality and apply k-means. We report Normalized Mutual Information (NMI) and Adjusted Rand Index (ARI), averaged over five runs. All baselines are tuned for their main hyperparameters, while GraphHull uses the same settings across datasets. The results in Table 3 show that GraphHull is consistently competitive with GRL baselines and outperforms membership-based methods, with the exception of Cora, where GRL with post-hoc clustering has an advantage.
Although GRL baselines lead on Cora, GraphHull is the most consistent method across datasets and metrics, outperforming membership-based methods and remaining competitive with GRL approaches.

Network visualization. We illustrate how GraphHull captures global archetypal and local prototypical structures in the HepTh network. In Figure 2, panel (a) shows the PCA projection of the latent space inferred by GraphHull. Each local hull $B_k$ is anchored in a distinct global archetype defined by the global hull $A$. Any apparent overlap in panel (a) arises only from projecting to two dimensions; in the full $D = 8$ space, all hulls are guaranteed to be non-overlapping. Panel (b) shows the adjacency matrix reordered by global hull assignments and, within each block, by the maximum prototype membership $\arg\max \omega_i$. This reveals clear block structure at both the global and local levels. Panels (c)–(j) present disentangled PCA projections of each local hull $B_k$. Finally, panels (k)–(r) show circular plots of the prototype membership spaces, where prototypes are positioned every $2\pi/K$ radians, illustrating node memberships $\omega_i$ together with the links between nodes inside each block. Overall, GraphHull extracts informative and robust network structures, combining global archetypal characterization with prototypical structure inside communities.

Table 2: AUC-ROC scores for representation sizes $D$ = 8, 16, 32, and 64, averaged over five runs. Each cell lists the scores for D = 8 / 16 / 32 / 64.

| Method | AstroPh | GrQc | HepTh | Cora | DBLP |
|---|---|---|---|---|---|
| Node2Vec | .943 / .954 / .961 / .962 | .928 / .932 / .937 / .936 | .879 / .882 / .888 / .892 | .761 / .760 / .766 / .777 | .920 / .923 / .931 / .941 |
| Role2Vec | .957 / .969 / .970 / .965 | .927 / .936 / .934 / .934 | .897 / .907 / .902 / .895 | .769 / .767 / .759 / .752 | .940 / .952 / .943 / .944 |
| NetMF | .904 / .928 / .946 / .955 | .835 / .882 / .882 / .883 | .778 / .797 / .802 / .793 | .698 / .675 / .674 / .654 | .791 / .817 / .829 / .842 |
| GraRep | .919 / .946 / .959 / .965 | .892 / .906 / .909 / .894 | .825 / .845 / .842 / .829 | .692 / .704 / .728 / .717 | .855 / .877 / .879 / .867 |
| RandNE | .867 / .888 / .900 / .907 | .787 / .826 / .854 / .877 | .718 / .770 / .812 / .839 | .604 / .641 / .676 / .701 | .741 / .795 / .836 / .865 |
| NNSED | .863 / .883 / .891 / .897 | .799 / .836 / .861 / .876 | .741 / .788 / .820 / .841 | .620 / .649 / .689 / .709 | .755 / .801 / .834 / .853 |
| MNMF | .877 / .916 / .938 / .954 | .881 / .905 / .918 / .918 | .810 / .844 / .864 / .875 | .694 / .718 / .700 / .680 | .859 / .898 / .919 / .931 |
| SymmNMF | .784 / .818 / .840 / .862 | .859 / .873 / .901 / .905 | .740 / .770 / .795 / .826 | .734 / .717 / .713 / .705 | .822 / .848 / .868 / .888 |
| Dmon | .845 / .852 / .857 / .861 | .822 / .845 / .856 / .866 | .786 / .799 / .804 / .807 | .723 / .744 / .777 / .788 | .774 / .785 / .788 / .791 |
| GraphHull | .945 / .954 / .960 / .965 | .923 / .931 / .940 / .945 | .874 / .885 / .895 / .904 | .753 / .790 / .791 / .803 | .930 / .934 / .945 / .948 |

Figure 2: GraphHull visualizations. (a) PCA projection of K = 8 hulls in D = 8 dimensions. (b) Reordered adjacency matrix: first by global hull assignments, then within blocks by $\arg\max \omega_i$. (c)–(j) PCA projections of the individual local hulls $B_1, \dots, B_8$. (k)–(r) Prototype membership spaces for each $B_k$, shown as circular plots (Cp) with prototypes evenly spaced on the circle and lines indicating links inside a hull (colors denote local hulls).

Effect of anchor strength $\varepsilon$. The parameter $\varepsilon$ controls the maximum spread of each hull; for $\varepsilon < 1/2$ it guarantees disjointness. Figure 3a shows link prediction performance as a function of $\varepsilon$. As $\varepsilon$ increases, predictive performance steadily improves in both AUC-ROC and PR-AUC. This trend continues even in the overlapping regime ($\varepsilon > 1/2$), where hulls may overlap and thereby better explain mixed memberships. Notably, at $\varepsilon \approx 0.45$ we achieve high predictive accuracy while remaining within the identifiable regime.
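A circular membership plot like panels (k)–(r) of Figure 2 can be laid out with a few lines of code. This sketch assumes each node is drawn at the membership-weighted average of prototype positions spaced evenly on the unit circle, and that a link is drawn only when both endpoints belong to the plotted hull. The placement rule and the helper names (`prototype_positions`, `node_position`, `within_hull_segments`) are our assumptions for illustration, not the figure's actual plotting code.

```python
import math


def prototype_positions(num_prototypes):
    """Evenly spaced points on the unit circle, one every 2*pi/num_prototypes radians."""
    step = 2 * math.pi / num_prototypes
    return [(math.cos(k * step), math.sin(k * step)) for k in range(num_prototypes)]


def node_position(omega, positions):
    """Convex combination of prototype positions, weighted by the memberships omega."""
    x = sum(w * px for w, (px, _) in zip(omega, positions))
    y = sum(w * py for w, (_, py) in zip(omega, positions))
    return x, y


def within_hull_segments(memberships, hull_of, edges, k, positions):
    """Line segments for edges whose two endpoints both belong to local hull k."""
    segments = []
    for u, v in edges:
        if hull_of[u] == k and hull_of[v] == k:
            segments.append((node_position(memberships[u], positions),
                             node_position(memberships[v], positions)))
    return segments
```

With four prototypes, a node with membership `[1, 0, 0, 0]` sits on its prototype at (1, 0), a node split evenly between the first two prototypes sits at (0.5, 0.5), and a uniform membership lands at the centre. Nodes on the circle are therefore the "on-vertex" exemplars of a hull.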
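The disjointness guarantee for $\varepsilon < 1/2$ can be checked numerically under an assumed construction. In this sketch, every prototype of local hull $B_k$ has barycentric coordinates over the $K$ global archetypes of the form $(1-\varepsilon)e_k + \varepsilon u$, with $u$ on the probability simplex. This is our assumption, not necessarily the paper's exact parameterization. Any convex combination of such prototypes keeps a weight of at least $1-\varepsilon$ on archetype $k$. When $\varepsilon < 1/2$, that weight exceeds $1/2$, so no point can belong to two local hulls. This holds because barycentric coordinates with respect to affinely independent archetypes are unique. The code works directly in barycentric coordinates, and all function names are hypothetical.

```python
import random


def simplex_point(n, rng):
    """Random point on the probability simplex (normalized exponential draws)."""
    draws = [rng.expovariate(1.0) for _ in range(n)]
    total = sum(draws)
    return [d / total for d in draws]


def anchored_prototype(k, num_archetypes, eps, rng):
    """Barycentric coordinates of one prototype of B_k: (1 - eps) * e_k + eps * u (assumed)."""
    u = simplex_point(num_archetypes, rng)
    return [(1.0 - eps) * (1.0 if j == k else 0.0) + eps * u[j]
            for j in range(num_archetypes)]


def check_disjointness(num_archetypes=8, protos_per_hull=4, eps=0.49, samples=200, seed=0):
    """Sample points inside every local hull; verify each is dominated by its own anchor.

    Returns (all_points_dominated_by_own_anchor, smallest_anchor_weight_seen).
    """
    rng = random.Random(seed)
    K = num_archetypes
    hulls = [[anchored_prototype(k, K, eps, rng) for _ in range(protos_per_hull)]
             for k in range(K)]
    min_anchor = 1.0
    for k, protos in enumerate(hulls):
        for _ in range(samples):
            w = simplex_point(len(protos), rng)  # convex weights over the hull's prototypes
            x = [sum(wm * p[j] for wm, p in zip(w, protos)) for j in range(K)]
            if max(range(K), key=x.__getitem__) != k:  # another archetype dominates
                return False, min_anchor
            min_anchor = min(min_anchor, x[k])
    return True, min_anchor
```

With the default $\varepsilon = 0.49$, the check succeeds and the smallest anchor weight is at least 0.51, up to rounding. For $\varepsilon > 1/2$, the same construction admits points such as $\tfrac12 e_k + \tfrac12 e_l$ in two hulls at once, which is the overlapping regime described above.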
We also investigate hull geometry as $\varepsilon$ varies. Figure 3b reports the effective log-volume across runs: volumes increase monotonically with $\varepsilon$, showing that hulls naturally expand as anchor dominance relaxes. Although the DPP prior discourages coincident prototypes, it does not enforce full affine independence within each hull.

Table 3: Normalized Mutual Information (NMI) and Adjusted Rand Index (ARI) scores, averaged over five runs.

| Method | Cora NMI | Cora ARI | Citeseer NMI | Citeseer ARI | LastFM NMI | LastFM ARI | Pol NMI | Pol ARI |
|---|---|---|---|---|---|---|---|---|
| Node2Vec | .460 ± .010 | .397 ± .024 | .223 ± .010 | .211 ± .025 | .591 ± .005 | .436 ± .009 | .727 ± .007 | .791 ± .008 |
| Role2Vec | .486 ± .001 | .417 ± .010 | .299 ± .016 | .279 ± .015 | .596 ± .001 | .455 ± .003 | .712 ± .003 | .772 ± .003 |
| NetMF | .463 ± .006 | .349 ± .006 | .223 ± .010 | .145 ± .016 | .605 ± .001 | .522 ± .001 | .145 ± .001 | .199 ± .001 |
| GraRep | .292 ± .001 | .192 ± .001 | .320 ± .001 | .320 ± .001 | .394 ± .001 | .259 ± .001 | .447 ± .001 | .492 ± .001 |
| RandNE | .020 ± .004 | .010 ± .004 | .015 ± .002 | .010 ± .003 | .063 ± .001 | .018 ± .001 | .003 ± .001 | .003 ± .001 |
| NNSED | .310 ± .031 | .229 ± .027 | .244 ± .011 | .201 ± .016 | .290 ± .006 | .143 ± .011 | .085 ± .043 | .136 ± .056 |
| MNMF | .294 ± .017 | .226 ± .018 | .144 ± .031 | .125 ± .030 | .473 ± .006 | .307 ± .012 | .599 ± .024 | .624 ± .030 |
| SymmNMF | .311 ± .001 | .204 ± .001 | .310 ± .006 | .289 ± .003 | .448 ± .020 | .329 ± .043 | .117 ± .006 | .153 ± .004 |
| Dmon | .350 ± .024 | .299 ± .042 | .271 ± .018 | .265 ± .027 | .518 ± .025 | .397 ± .049 | .714 ± .001 | .769 ± .001 |
| GraphHull | .422 ± .004 | .333 ± .008 | .347 ± .007 | .372 ± .009 | .616 ± .001 | .514 ± .003 | .757 ± .001 | .820 ± .001 |

Figure 3: Effect of $\varepsilon$: results on the HepTh dataset. (a) Predictive performance as a function of $\varepsilon$. (b) Effective log-volume of hulls as a function of $\varepsilon$. (c) Violin plots of local-hull singular values as a function of the anchor strength $\varepsilon$ under three priors: none (no DPP), standard DPP, and upweighted DPP ($\kappa = 5$).
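The gap between coincident and merely affinely dependent prototypes can be illustrated with a small NumPy sketch. Two ingredients are our assumptions. First, the DPP kernel $L_k$ is taken to be a Gaussian (RBF) kernel between prototypes. Second, hull degeneracy is measured through the singular values of the mean-centred prototype matrix, with the effective rank counting singular values above a relative tolerance. The log-prior uses the L-ensemble form $\log\det(\kappa L_k) - \log\det(I + \kappa L_k)$, where $\log\det(\kappa L_k) = m\log\kappa + \log\det L_k$ for $m$ prototypes. Function names are illustrative.

```python
import numpy as np


def rbf_kernel(prototypes, bandwidth=1.0):
    """Gaussian kernel matrix between prototypes (assumed choice of DPP kernel)."""
    P = np.asarray(prototypes, dtype=float)
    sq_dists = np.sum((P[:, None, :] - P[None, :, :]) ** 2, axis=-1)
    return np.exp(-sq_dists / (2.0 * bandwidth ** 2))


def dpp_log_prior(L, kappa=1.0):
    """L-ensemble log-probability of the full set: log det(kappa L) - log det(I + kappa L)."""
    m = L.shape[0]
    sign, logdet = np.linalg.slogdet(kappa * L)
    if sign <= 0:  # singular kernel (e.g. coincident prototypes): zero prior mass
        return -np.inf
    _, logdet_norm = np.linalg.slogdet(np.eye(m) + kappa * L)
    return float(logdet - logdet_norm)


def hull_singular_values(prototypes):
    """Singular values of the mean-centred prototype matrix (affine spread of the hull)."""
    P = np.asarray(prototypes, dtype=float)
    return np.linalg.svd(P - P.mean(axis=0), compute_uv=False)


def effective_rank(prototypes, rel_tol=1e-6):
    """Number of singular values above rel_tol times the largest one."""
    s = hull_singular_values(prototypes)
    if s.size == 0 or s[0] == 0.0:
        return 0
    return int(np.sum(s > rel_tol * s[0]))


collinear = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]]   # distinct but affinely dependent
triangle = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]    # affinely independent
coincident = [[0.0, 0.0], [0.0, 0.0], [1.0, 1.0]]  # two prototypes coincide
```

Here `effective_rank(collinear)` is 1 even though three prototypes could span two dimensions, yet `dpp_log_prior(rbf_kernel(collinear))` stays finite: the repulsion only penalizes prototypes that approach each other. For `coincident`, the kernel becomes singular and the prior drops to $-\infty$, or to a very large negative value under round-off.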
In practice, some communities form hulls of reduced effective rank, where a prototype is nearly an affine combination of the others. This reflects benign over-parameterization: the data do not require all directions. Such rank deficiencies are harmless: a redundant vertex lying within the hull of the others can be removed without changing the hull, which provides an interpretable estimate of a community's intrinsic dimension. When fewer redundant vertices are preferred, the DPP prior can be upweighted. Concretely, replacing $L_k$ with $\kappa L_k$ for $\kappa > 0$ scales the eigenvalues of the kernel, yielding the prior $\log\det(\kappa L_k) - \log\det(I + \kappa L_k)$. Larger $\kappa$ strengthens the repulsion between prototypes, discouraging redundant vertices without altering the feasible set of hulls. This effect is evident in Figure 3c: without a DPP prior, the singular values of the local hulls $B_k$ exhibit heavy tails near zero, producing unstable, rank-deficient hulls. Including a DPP prior almost entirely eliminates this issue, while upweighting with $\kappa = 5$ further suppresses rank deficiency.

Prototype interpretability. Examining the learned prototypes reveals clear structural roles. Across datasets, anchors generally correspond to dense cores, while non-anchor prototypes capture sparser or peripheral structures, highlighting diversity within each community. For example, in the HepTh network, anchors exhibit systematically higher clustering coefficients than non-anchor prototypes, whereas peripheral prototypes often attract large numbers of "on-vertex" exemplars despite low clustering values. These findings confirm that the anchor–prototype hierarchy yields interpretable profiles of structural organization (see the supplementary material for a detailed quantitative analysis).

5 Conclusion & Limitations

We introduced GraphHull, a principled generative framework for explainable graph representation learning.
By combining global archetypes with anchor-dominant local convex hulls, our approach yields embeddings that are identifiable, interpretable, and diverse by design. The geometry enforces disjointness and stability, while determinantal point process priors promote non-degenerate structure. Across multiple networks, GraphHull achieves competitive or superior performance on link prediction and community detection, while naturally providing multi-scale explanations of communities and prototypes. As with most generative models, its optimization is non-convex and depends on initialization, but in practice we find GraphHull to be stable under deterministic initializations based on the normalized Laplacian. Beyond graph analysis, the proposed hierarchical archetypal design is broadly applicable to other domains where transparent latent geometry is needed. We hope this work encourages further research into generative, geometry-aware models for interpretable machine learning.

Acknowledgements

We gratefully acknowledge the reviewers for their constructive feedback and insightful comments. C. K. is supported by the IdAML Chair hosted at ENS Paris-Saclay, Université Paris-Saclay.

References

C. Agarwal, O. Queen, H. Lakkaraju, and M. Zitnik. Evaluating explainability for graph neural networks. Scientific Data, 10(1):144, 2023.

N. K. Ahmed, R. Rossi, J. B. Lee, T. L. Willke, R. Zhou, X. Kong, and H. Eldardiry. Learning role-based graph embeddings. arXiv preprint arXiv:1802.02896, 2018.

A. Alcacer, I. Epifanio, S. Mair, and M. Mørup. A survey on archetypal analysis, 2025. URL https://arxiv.org/abs/2504.12392.

C. Bauckhage and C. Thurau. Making archetypal analysis practical. Pages 272–281, 09 2009. ISBN 978-3-642-03797-9. doi: 10.1007/978-3-642-03798-6_28.

S. Cao, W. Lu, and Q. Xu. GraRep: Learning graph representations with global structural information.
In Proceedings of the 24th ACM International on Conference on Information and Knowledge Management, pages 891–900, 2015.

S. Cavallari, V. W. Zheng, H. Cai, K. C.-C. Chang, and E. Cambria. Learning community embedding with community detection and node embedding on graphs. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, pages 377–386, 2017.

Y. Chen, J. Mairal, and Z. Harchaoui. Fast and robust archetypal analysis for representation learning. In 2014 IEEE Conference on Computer Vision and Pattern Recognition, pages 1478–1485, 2014. doi: 10.1109/CVPR.2014.192.

Y. Chen, Y. Bian, B. Han, and J. Cheng. How interpretable are interpretable graph neural networks? In Proceedings of the 41st International Conference on Machine Learning, pages 6413–6456, 2024.

Z. Chen, C. Chen, Z. Zhang, Z. Zheng, and Q. Zou. Variational graph embedding and clustering with Laplacian eigenmaps. In Proceedings of the 28th International Joint Conference on Artificial Intelligence, pages 2144–2150, 2019a.

Z. Chen, L. Li, and J. Bruna. Supervised community detection with line graph neural networks. In 7th International Conference on Learning Representations, 2019b.

X. Cheng, H. Wang, J. Hua, G. Xu, and Y. Sui. DeepWukong: Statically detecting software vulnerabilities using deep graph neural network. ACM Transactions on Software Engineering and Methodology, 30(3):1–33, 2021.

J. J. Choong, X. Liu, and T. Murata. Learning community structure with variational autoencoder. In Proceedings of the 2018 IEEE International Conference on Data Mining, pages 69–78, 2018.

P. Cui, X. Wang, J. Pei, and W. Zhu. A survey on network embedding. IEEE Transactions on Knowledge and Data Engineering, 31(5):833–852, 2018.

A. Cutler and L. Breiman. Archetypal analysis. Technometrics, 36(4):338–347, 1994.

E. Dai and S. Wang.
Towards self-explainable graph neural network. In Proceedings of the 30th ACM International Conference on Information and Knowledge Management, pages 302–311, 2021.

A. Damle and Y. Sun. A geometric approach to archetypal analysis and non-negative matrix factorization, 2015. URL .

M. J. Eugster and F. Leisch. Weighted and robust archetypal analysis. Computational Statistics and Data Analysis, 55(3):1215–1225, 2011. ISSN 0167-9473. doi: https://doi.org/10.1016/j.csda.2010.10.017.

S. Fortunato. Community detection in graphs. Physics Reports, 486(3-5):75–174, 2010.

S. Gautam, A. Boubekki, S. Hansen, S. A. Salahuddin, R. Jenssen, M. M. Höhne, and M. Kampffmeyer. ProtoVAE: a trustworthy self-explainable prototypical variational model. In Proceedings of the 36th International Conference on Neural Information Processing Systems, pages 17940–17952, 2022.

J. Gilmer, S. S. Schoenholz, P. F. Riley, O. Vinyals, and G. E. Dahl. Neural message passing for quantum chemistry. In Proceedings of the 34th International Conference on Machine Learning, pages 1263–1272, 2017.

J. Gimbernat-Mayol, A. Dominguez Mantes, C. D. Bustamante, D. Mas Montserrat, and A. G. Ioannidis. Archetypal analysis for population genetics. PLoS Computational Biology, 18(8):e1010301, 2022.

V. Gligorijević, P. D. Renfrew, T. Kosciolek, J. K. Leman, D. Berenberg, T. Vatanen, C. Chandler, B. C. Taylor, I. M. Fisk, H. Vlamakis, et al. Structure-based protein function prediction using graph convolutional networks. Nature Communications, 12(1):3168, 2021.

A. Grover and J. Leskovec. node2vec: Scalable feature learning for networks. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 855–864, 2016a.

A. Grover and J. Leskovec. node2vec: Scalable feature learning for networks.
In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 855–864, 2016b.

D. He, Y. Song, D. Jin, Z. Feng, B. Zhang, Z. Yu, and W. Zhang. Community-centric graph convolutional network for unsupervised community detection. In Proceedings of the 29th International Conference on International Joint Conferences on Artificial Intelligence, pages 3515–3521, 2021.

R. Henderson, D.-A. Clevert, and F. Montanari. Improving molecular graph neural network explainability with orthonormalization and induced sparsity. In Proceedings of the 38th International Conference on Machine Learning, pages 4203–4213, 2021.

E. Jang, S. Gu, and B. Poole. Categorical reparameterization with Gumbel-softmax. In 5th International Conference on Learning Representations, 2017.

D. P. Kingma and J. Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

R. Kjærsgaard, A. Boubekki, and L. Clemmensen. Pantypes: Diverse representatives for self-explainable models. In Proceedings of the 38th AAAI Conference on Artificial Intelligence, pages 13230–13237, 2024.

S. Kojaku, F. Radicchi, Y.-Y. Ahn, and S. Fortunato. Network community detection via neural embeddings. Nature Communications, 15(1):9446, 2024.

D. Kuang, C. Ding, and H. Park. Symmetric nonnegative matrix factorization for graph clustering. In Proceedings of the 2012 SIAM International Conference on Data Mining, pages 106–117, 2012.

A. Kulesza, B. Taskar, et al. Determinantal point processes for machine learning. Foundations and Trends® in Machine Learning, 5(2–3):123–286, 2012.

J. Leskovec, J. Kleinberg, and C. Faloutsos. Graph evolution: Densification and shrinking diameters. ACM Transactions on Knowledge Discovery from Data (TKDD), 1(1):2–es, 2007.

Y. Li, C. Sha, X. Huang, and Y. Zhang.
Community detection in attributed graphs: an embedding approach. In Proceedings of the 32nd AAAI Conference on Artificial Intelligence, pages 338–345, 2018.

Z. Li, S. Geisler, Y. Wang, S. Günnemann, and M. van Leeuwen. Explainable graph neural networks under fire. arXiv preprint arXiv:2406.06417, 2024.

C. Lin, G. J. Sun, K. C. Bulusu, J. R. Dry, and M. Hernandez. Graph neural networks including sparse interpretability. arXiv preprint arXiv:2007.00119, 2020.

W. Lin, H. Lan, and B. Li. Generative causal explanations for graph neural networks. In Proceedings of the 38th International Conference on Machine Learning, pages 6666–6679, 2021.

D. Luo, W. Cheng, D. Xu, W. Yu, B. Zong, H. Chen, and X. Zhang. Parameterized explainer for graph neural network. In Proceedings of the 34th International Conference on Neural Information Processing Systems, pages 19620–19631, 2020.

S. Miao, M. Liu, and P. Li. Interpretable and generalizable graph learning via stochastic attention mechanism. In Proceedings of the 39th International Conference on Machine Learning, pages 15524–15543, 2022.

M. Mørup and L. K. Hansen. Archetypal analysis for machine learning and data mining. Neurocomputing, 80:54–63, 2012. ISSN 0925-2312. doi: https://doi.org/10.1016/j.neucom.2011.06.033. URL https://www.sciencedirect.com/science/article/pii/S0925231211006060. Special Issue on Machine Learning for Signal Processing 2010.

N. Nakis, A. Celikkanat, L. Boucherie, C. Djurhuus, F. Burmester, D. M. Holmelund, M. Frolcová, and M. Mørup. Characterizing polarization in social networks using the signed relational latent distance model. In Proceedings of the 26th International Conference on Artificial Intelligence and Statistics, pages 11489–11505, 2023a.

N. Nakis, A. Çelikkanat, S. L. Jørgensen, and M. Mørup.
A hierarchical block distance model for ultra low-dimensional graph representations, 2023b. URL .

N. Nakis, C. Kosma, A. Brativnyk, M. Chatzianastasis, I. Evdaimon, and M. Vazirgiannis. The signed two-space proximity model for learning representations in protein–protein interaction networks. Bioinformatics, 41(6):btaf204, 2025a.

N. Nakis, C. Kosma, G. Nikolentzos, M. Chatzianastasis, I. Evdaimon, and M. Vazirgiannis. Signed graph autoencoder for explainable and polarization-aware network embeddings. In Proceedings of the 28th International Conference on Artificial Intelligence and Statistics, pages 496–504, 2025b.

B. Perozzi, R. Al-Rfou, and S. Skiena. DeepWalk: Online learning of social representations. In Proceedings of the 20th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 701–710, 2014.

B. Perozzi, V. Kulkarni, H. Chen, and S. Skiena. Don't walk, skip! Online learning of multi-scale network embeddings. In Proceedings of the 2017 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining, pages 258–265, 2017.

J. Qiu, Y. Dong, H. Ma, J. Li, K. Wang, and J. Tang. Network embedding as matrix factorization: Unifying DeepWalk, LINE, PTE, and Node2Vec. In Proceedings of the 11th ACM International Conference on Web Search and Data Mining, pages 459–467, 2018.

A. Ragno, B. La Rosa, and R. Capobianco. Prototype-based interpretable graph neural networks. IEEE Transactions on Artificial Intelligence, 5(4):1486–1495, 2022.

M. T. Ribeiro, S. Singh, and C. Guestrin. "Why should I trust you?" Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 1135–1144, 2016.

R. A. Rossi and N. K. Ahmed. The network data repository with interactive graph analytics and visualization.
In AAAI, 2015. URL https://networkrepository.com.

B. Rozemberczki and R. Sarkar. Characteristic functions on graphs: Birds of a feather, from statistical descriptors to parametric models, 2020. URL https://arxiv.org/abs/2005.07959.

C. Rudin. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature Machine Intelligence, 1(5):206–215, 2019.

P. Sen, G. Namata, M. Bilgic, L. Getoor, B. Gallagher, and T. Eliassi-Rad. Collective classification in network data. AI Magazine, 2008.

G. Serra and M. Niepert. L2XGNN: learning to explain graph neural networks. Machine Learning, 113(9):6787–6809, 2024.

O. Shchur and S. Günnemann. Overlapping community detection with graph neural networks. arXiv preprint arXiv:1909.12201, 2019.

O. Shoval, G. Shinar, Y. Hart, O. Ramote, A. Mayo, E. Dekel, K. Kavanagh, and U. Alon. Evolutionary trade-offs, Pareto optimality, and the geometry of phenotype space. Science (New York, N.Y.), 336:1157–60, 04 2012. doi: 10.1126/science.1217405.

B.-J. Sun, H. Shen, J. Gao, W. Ouyang, and X. Cheng. A non-negative symmetric encoder-decoder approach for community detection. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, pages 597–606, 2017.

H. Sun, F. He, J. Huang, Y. Sun, Y. Li, C. Wang, L. He, Z. Sun, and X. Jia. Network embedding for community detection in attributed networks. ACM Transactions on Knowledge Discovery from Data, 14(3):1–25, 2020.

F. Tian, B. Gao, Q. Cui, E. Chen, and T.-Y. Liu. Learning deep representations for graph clustering. In Proceedings of the 28th AAAI Conference on Artificial Intelligence, pages 1293–1299, 2014.

A. Tsitsulin, J. Palowitch, B. Perozzi, and E. Müller. Graph clustering with graph neural networks, 2023. URL .

M. N. Vu and M. T. Thai.
PGM-Explainer: probabilistic graphical model explanations for graph neural networks. In Proceedings of the 34th International Conference on Neural Information Processing Systems, pages 12225–12235, 2020.

C. Wang, S. Pan, G. Long, X. Zhu, and J. Jiang. MGAE: Marginalized graph autoencoder for graph clustering. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, pages 889–898, 2017a.

C. Wang, S. Pan, R. Hu, G. Long, J. Jiang, and C. Zhang. Attributed graph clustering: a deep attentional embedding approach. In Proceedings of the 28th International Joint Conference on Artificial Intelligence, pages 3670–3676, 2019.

H. Wang, Y. Zhang, Z. Zhao, Z. Cai, X. Xia, and X. Xu. Simple yet effective heuristic community detection with graph convolution network. Scientific Reports, 15(1), Nov. 2025. ISSN 2045-2322. doi: 10.1038/s41598-025-22860-z. URL http://dx.doi.org/10.1038/s41598-025-22860-z.

X. Wang, P. Cui, J. Wang, J. Pei, W. Zhu, and S. Yang. Community preserving network embedding. In Proceedings of the 31st AAAI Conference on Artificial Intelligence, 2017b.

X. Wang, J. Li, L. Yang, and H. Mi. Unsupervised learning for community detection in attributed networks based on graph convolutional network. Neurocomputing, 456:147–155, 2021.

Z. Wu, S. Pan, F. Chen, G. Long, C. Zhang, and S. Y. Philip. A comprehensive survey on graph neural networks. IEEE Transactions on Neural Networks and Learning Systems, 32(1):4–24, 2020.

Y. Xie, X. Wang, D. Jiang, and R. Xu. High-performance community detection in social networks using a deep transitive autoencoder. Information Sciences, 493:75–90, 2019.

L. Yang, X. Cao, D. He, C. Wang, X. Wang, and W. Zhang. Modularity based community detection with deep learning. In Proceedings of the 25th International Joint Conference on Artificial Intelligence, pages 2252–2258, 2016.

R. Ying, D. Bourgeois, J. You, M. Zitnik, and J. Leskovec. GNNExplainer: generating explanations for graph neural networks. In Proceedings of the 33rd International Conference on Neural Information Processing Systems, pages 9244–9255, 2019.

H. Yuan, J. Tang, X. Hu, and S. Ji. XGNN: Towards model-level explanations of graph neural networks. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 430–438, 2020.

H. Yuan, H. Yu, J. Wang, K. Li, and S. Ji. On explainability of graph neural networks via subgraph explorations. In Proceedings of the 38th International Conference on Machine Learning, pages 12241–12252, 2021.

Z. Zhang, P. Cui, H. Li, X. Wang, and W. Zhu. Billion-scale network embedding with iterative random projection. In Proceedings of the 2018 IEEE International Conference on Data Mining, pages 787–796, 2018.

Z. Zhang, Q. Liu, H. Wang, C. Lu, and C. Lee. ProtGNN: Towards self-explaining graph neural networks. In Proceedings of the 36th AAAI Conference on Artificial Intelligence, pages 9127–9135, 2022.

Checklist

1. For all models and algorithms presented, check if you include:
   - (a) A clear description of the mathematical setting, assumptions, algorithm, and/or model. Yes
   - (b) An analysis of the properties and complexity (time, space, sample size) of any algorithm. Yes
   - (c) (Optional) Anonymized source code, with specification of all dependencies, including external libraries. Yes, as a supplementary file
2. For any theoretical claim, check if you include:
   - (a) Statements of the full set of assumptions of all theoretical results. Yes
   - (b) Complete proofs of all theoretical results. Yes, in the supplementary material
   - (c) Clear explanations of any assumptions. Yes
3. For all figures and tables that present empirical results, check if you include:
   - (a) The code, data, and instructions needed to reproduce the main experimental results (either in the supplemental material or as a URL). Yes, in the supplementary material
   - (b) All the training details (e.g., data splits, hyperparameters, how they were chosen). Yes
   - (c) A clear definition of the specific measure or statistics and error bars (e.g., with respect to the random seed after running experiments multiple times). Yes
   - (d) A description of the computing infrastructure used (e.g., type of GPUs, internal cluster, or cloud provider). Yes
4. If you are using existing assets (e.g., code, data, models) or curating/releasing new assets, check if you include:
   - (a) Citations of the creator, if your work uses existing assets. Yes
   - (b) The license information of the assets, if applicable. Not Applicable
   - (c) New assets either in the supplemental material or as a URL, if applicable. Not Applicable
   - (d) Information about consent from data providers/curators. Not Applicable
   - (e) Discussion of sensitive content if applicable, e.g., personally identifiable information or offensive content. Not Applicable
5. If you used crowdsourcing or conducted research with human subjects, check if you include:
   - (a) The full text of instructions given to participants and screenshots. Not Applicable
   - (b) Descriptions of potential participant risks, with links to Institutional Review Board (IRB) approvals if applicable. Not Applicable
   - (c) The estimated hourly wage paid to participants and the total amount spent on participant compensation. Not Applicable
