SOM-VQ: Topology-Aware Tokenization for Interactive Generative Models


Authors: Alessandro Londei, Denise Lanzieri, Matteo Benati

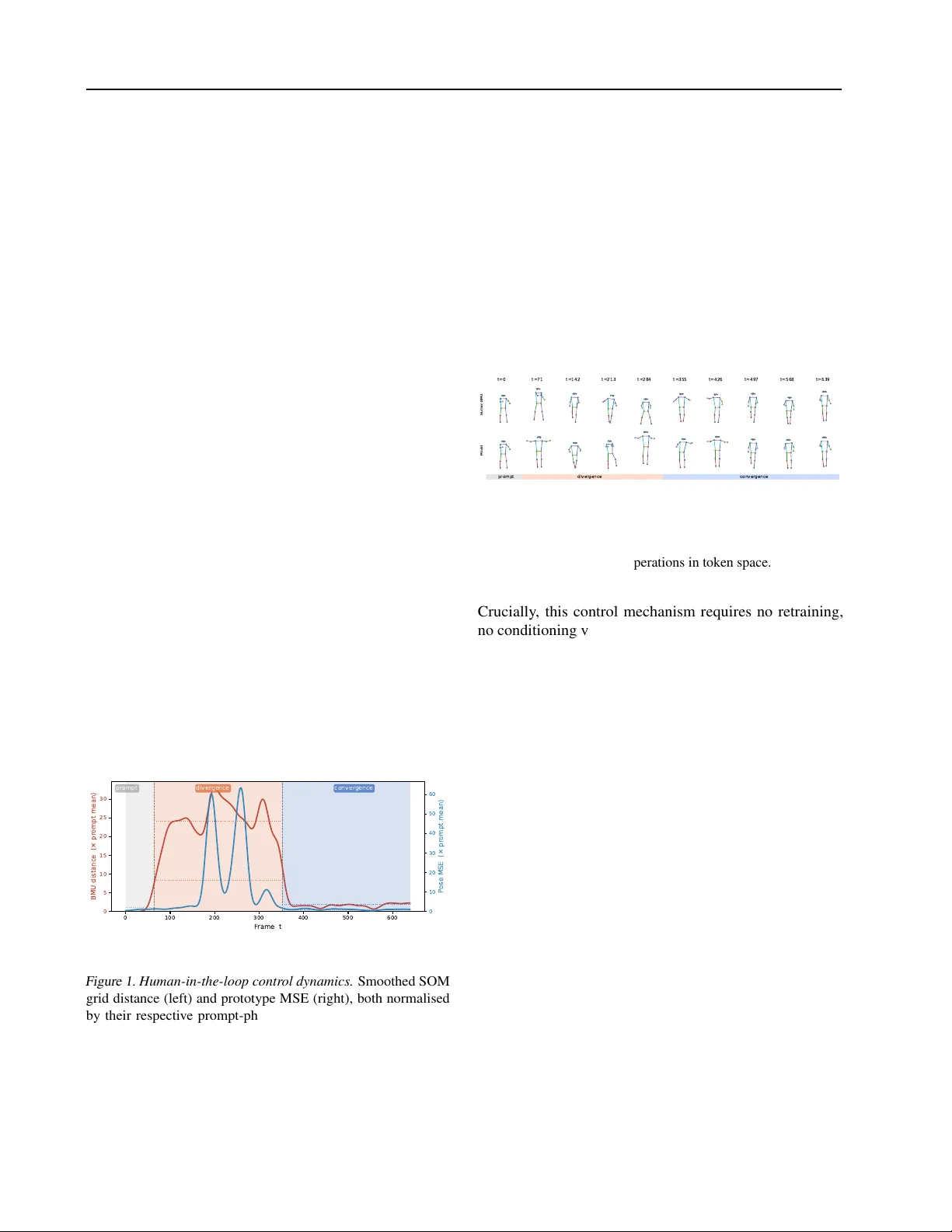

Alessandro Londei¹, Denise Lanzieri¹, Matteo Benati¹ ²

Abstract

Vector-quantized representations enable powerful discrete generative models but lack semantic structure in token space, limiting interpretable human control. We introduce SOM-VQ, a tokenization method that combines vector quantization with Self-Organizing Maps to learn discrete codebooks with explicit low-dimensional topology. Unlike standard VQ-VAE, SOM-VQ uses topology-aware updates that preserve neighborhood structure: nearby tokens on a learned grid correspond to semantically similar states, enabling direct geometric manipulation of the latent space. We demonstrate that SOM-VQ produces more learnable token sequences in the evaluated domains while providing an explicit navigable geometry in code space. Critically, the topological organization enables intuitive human-in-the-loop control: users can steer generation by manipulating distances in token space, achieving semantic alignment without frame-level constraints. We focus on human motion generation, a domain where kinematic structure, smooth temporal continuity, and interactive use cases (choreography, rehabilitation, HCI) make topology-aware control especially natural, and demonstrate controlled divergence from and convergence toward reference sequences through simple grid-based sampling. SOM-VQ provides a general framework for interpretable discrete representations applicable to music, gesture, and other interactive generative domains.

¹ Sony Computer Science Laboratories - Rome, Joint Initiative CREF-SONY, Centro Ricerche Enrico Fermi, Via Panisperna 89/A, 00184, Rome, Italy. ² Department of Computer, Automatic and Management Engineering, Sapienza University, Via Ariosto 25, Rome, Italy. Correspondence to: Alessandro Londei <alessandro.londei@sony.com>.

1. Introduction

Discrete representations have become foundational to modern generative models, enabling scalable autoregressive architectures across image (Razavi et al., 2019; Esser et al., 2021), video (Yan et al., 2021), audio (Dhariwal et al., 2020), and motion synthesis (Petrovich et al., 2022; Guo et al., 2022). Vector quantization (VQ) techniques, popularized by VQ-VAE (van den Oord et al., 2017), provide a principled approach to learning discrete latent spaces: an encoder maps continuous inputs to latent vectors, which are quantized to the nearest codebook entry, and a decoder reconstructs the input from the discrete representation. Training minimizes

$$\mathcal{L}_{\text{VQ-VAE}} = \|x - \hat{x}\|^2 + \|\mathrm{sg}[z_e] - z_q\|^2 + \beta\,\|z_e - \mathrm{sg}[z_q]\|^2, \tag{1}$$

where $\mathrm{sg}[\cdot]$ denotes the stop-gradient operator. Recent variants have refined this framework through residual quantization (Lee et al., 2022), finite scalar quantization (Mentzer et al., 2023), and improved codebook learning (Yu et al., 2023).

Despite their success, VQ-based methods treat tokens as unstructured symbols: codebook elements lack explicit organization, and distances between tokens carry no semantic meaning. This poses challenges for controllable generation, where users seek to guide model outputs through intuitive, semantically grounded interventions. Current approaches to control rely on conditioning (Dhariwal and Nichol, 2021), classifier-free guidance (Ho and Salimans, 2022), or explicit constraints (Karunratanakul et al., 2023): indirect mechanisms that offer limited access to the model's internal representation structure and may compromise generation coherence (Liu et al., 2023). Interactive control would benefit from representations in which geometric relationships in latent space correspond to semantic relationships in data space.
Self-Organizing Maps (SOMs) (Kohonen, 2001) provide precisely this property: through competitive learning with neighborhood-based updates, SOMs arrange prototypes on a low-dimensional grid such that proximity on the grid reflects semantic similarity. SOMs have seen limited adoption in deep learning (Fortuin et al., 2019), partly because they lack the stability and discrete structure that make VQ effective for large-scale generation. Human motion is a particularly apt domain for topology-aware tokenization: its kinematic structure and temporal continuity mean that semantically similar poses are genuinely close in data space, and its interactive use cases (choreography, rehabilitation, HCI) demand precisely the real-time relational steering that a navigable token geometry enables.

We introduce SOM-VQ, a topology-aware tokenization method that unifies the stability of vector quantization with the neighborhood preservation of SOMs. SOM-VQ learns discrete codebooks with explicit low-dimensional topology: tokens that are nearby on a learned grid correspond to semantically related states, enabling direct geometric manipulation of the latent space. Key properties include:

• Stable discrete tokenization compatible with autoregressive models, avoiding codebook collapse through topology-aware EMA updates
• An explicit navigable token space whose grid geometry reflects semantic similarity, enabling control operations not possible with unstructured VQ codebooks
• Geometric controllability, enabling human-in-the-loop steering via token-space distance manipulation without frame-level constraints

We validate SOM-VQ on human motion generation, showing that it preserves topological structure and enables intuitive control through divergence/convergence experiments. The framework generalizes naturally to other sequential domains requiring interpretable, steerable generation.

2. Self-Organizing Map Vector Quantization

2.1. Background: VQ-VAE

VQ-VAE learns discrete representations through three components: an encoder $f_\theta: \mathbb{R}^D \to \mathbb{R}^d$ mapping inputs to continuous latents, a codebook $\mathcal{C} = \{e_k\}_{k=1}^{K}$ of discrete prototypes, and a decoder $g_\phi: \mathbb{R}^d \to \mathbb{R}^D$ for reconstruction. Given input $x$, the encoder produces $z_e = f_\theta(x)$, which is quantized to the nearest codebook entry:

$$k^* = \arg\min_k \|z_e - e_k\|^2, \qquad z_q = e_{k^*}. \tag{2}$$

The decoder reconstructs $\hat{x} = g_\phi(z_q)$, with gradients passed through the quantizer by a straight-through estimator. Training optimizes Equation 1, whose commitment term $\beta\,\|z_e - \mathrm{sg}[z_q]\|^2$ prevents encoder drift, and codebook entries are updated via an exponential moving average (EMA) of their assigned latents.

While effective for generation, VQ-VAE treats codebook entries as unordered symbols: the assignment in Eq. 2 considers only Euclidean distance in latent space, not relationships between tokens. Consequently, the token indices carry no geometry of their own: tokens $k_i$ and $k_j$ with adjacent indices need not have nearby embeddings $e_{k_i}$ and $e_{k_j}$, and nearby embeddings may receive arbitrary, unrelated indices. This precludes using token-space geometry for semantic control.

2.2. SOM-VQ: Topology-Aware Tokenization

SOM-VQ addresses this by endowing the codebook with explicit topological structure. The key insight is to arrange codebook entries on a low-dimensional grid and couple their updates through spatial neighborhoods, as in Self-Organizing Maps (Kohonen, 2001).

Grid organization. Each codebook entry $e_k \in \mathbb{R}^d$ is associated with a fixed grid coordinate $c_k \in \mathbb{R}^2$ (we use 2D grids, though higher dimensions are possible). For a codebook of size $K$, we arrange entries on a $\sqrt{K} \times \sqrt{K}$ grid with integer coordinates. Token assignment remains nearest-neighbor in latent space (Eq. 2), preserving discrete semantics.

Two-stage codebook update. Unlike VQ-VAE's per-entry EMA, SOM-VQ performs a two-stage update that combines SOM topology preservation with VQ commitment.
For each input $z_e$ with best-matching unit (BMU) $k^*$:

Stage 1 (SOM topology): all codebook entries receive neighborhood-weighted updates

$$e_k \leftarrow e_k + \eta\, h\big(\|c_k - c_{k^*}\|\big)\,(z_e - e_k), \tag{3}$$

where $\eta$ is a topology learning rate and $h(\cdot)$ is a Gaussian neighborhood kernel:

$$h(d) = \exp\!\left(-\frac{d^2}{2\sigma^2}\right). \tag{4}$$

The bandwidth $\sigma$ is annealed from $\sigma_{\text{start}}$ to $\sigma_{\text{end}}$ over training epochs, progressively localizing updates (Kohonen, 2001).

Stage 2 (VQ commitment): the BMU receives an additional EMA update

$$e_{k^*} \leftarrow (1 - \alpha)\, e_{k^*} + \alpha\, z_e, \tag{5}$$

where $\alpha$ is the VQ commitment rate. This encourages the BMU to track its assigned latents, combining SOM's topology with VQ's stability.

Training objective. The encoder and decoder are trained end-to-end via gradient descent on

$$\mathcal{L}_{\text{SOM-VQ}} = \underbrace{\|x - \hat{x}\|^2}_{\text{reconstruction}} + \underbrace{\beta\,\|z_e - \mathrm{sg}[z_q]\|^2}_{\text{commitment}}, \tag{6}$$

while the codebook is updated via the two-stage procedure above (see Algorithm 1 in the supplement). As in VQ-VAE, the commitment term in Eq. 6 prevents encoder drift.

Properties. The neighborhood updates in Eq. 3 create coherent semantic regions on the grid, while the VQ commitment in Eq. 5 prevents codebook collapse. This yields discrete tokens with explicit topology: nearby grid positions correspond to semantically similar latent regions, enabling geometric operations in token space for semantic control (Section 4). Typical hyperparameters: $\eta = 0.2$, $\alpha = 0.05$, $\sigma_{\text{start}} = 4.0$, $\sigma_{\text{end}} = 0.8$.

Table 1. Cross-domain comparison at 32 × 32 (K = 1024). SOM-VQ produces the most learnable sequences (lowest Seq-PPL) and organizes the codebook geometrically (Distortion), unlike VQ and VQ-VAE, which lack grid structure (n/a). SOM-hard achieves lower Distortion through pure topology optimization but cannot support generation; SOM-VQ's slightly higher Distortion reflects the VQ commitment constraint that enables stable discrete assignment. VQ-VAE uses a larger architecture (hidden = 512 vs. 256) and dead-code reset. Act. = percentage of active codes. Full statistics in Appendix Table 2.

| Domain | Method | Act. (%) | Trust ↑ | Cont. ↑ | MSE ↓ | Seq-PPL ↓ | Dist. ↓ |
|---|---|---|---|---|---|---|---|
| Lorenz | VQ | 99 | 0.999 | 0.960 | 0.005 | 678 | n/a |
| Lorenz | SOM-hard | 95 | 0.998 | 0.942 | 0.006 | 679 × | 0.041 |
| Lorenz | VQ-VAE | 99 | 0.999 | 0.961 | 0.003 | 672 | n/a |
| Lorenz | SOM-VQ | 71 | 0.998 | 0.942 | 0.007 | 617 | 0.062 |
| AIST++ | VQ | 61 | 0.940 | 0.898 | 0.294 | 719 | n/a |
| AIST++ | SOM-hard | 72 | 0.932 | 0.897 | 0.342 | 742 × | 0.093 |
| AIST++ | VQ-VAE | 61 | 0.978 | 0.950 | 0.200 | 727 | n/a |
| AIST++ | SOM-VQ | 61 | 0.937 | 0.895 | 0.319 | 679 | 0.111 |

3. Experimental Validation

We evaluate SOM-VQ on two domains of contrasting complexity: the Lorenz attractor (Lorenz, 1963) (3D chaotic dynamics) and AIST++ (Li et al., 2021) (51D motion capture). We compare against VQ (van den Oord et al., 2017), SOM-hard (pure SOM without VQ commitment), and VQ-VAE (van den Oord et al., 2017; Razavi et al., 2019). All methods use K = 1024 codes (a 32 × 32 grid for the SOM-based methods). Topology is measured by trustworthiness and continuity (Venna and Kaski, 2006), which quantify point-level neighborhood preservation, and by distortion, which quantifies global codebook geometry. Sequence learnability is measured by GRU validation perplexity (Seq-PPL); lower values indicate more structured sequences. Results are mean ± std over 5 seeds. Full details are in Appendix A.

3.1. Main Results

Table 1 shows that SOM-VQ achieves the lowest sequence perplexity across both domains, indicating that topological organization produces more learnable token sequences. Its point-level topology (Trust 0.937–0.998, Cont 0.895–0.942) closely matches that of VQ and SOM-hard. The key distinctions are (1) the lowest Seq-PPL in both domains (617 vs. 672–679 on Lorenz; 679 vs. 719–742 on AIST++), corresponding to 6–9% reductions, and (2) organized codebook geometry (Distortion). While VQ and VQ-VAE lack geometric structure, SOM-VQ maintains grid organization despite the VQ commitment constraint. VQ-VAE's better reconstruction likely reflects its larger architecture and unconstrained optimization.
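The 6–9% figure can be checked directly from the Seq-PPL column of Table 1. The short script below copies those values (SOM-hard is included for completeness, even though it cannot support generation) and computes the relative perplexity reduction of SOM-VQ against each baseline.

```python
# Seq-PPL values copied from Table 1 (K = 1024).
SEQ_PPL = {
    "Lorenz": {"VQ": 678, "SOM-hard": 679, "VQ-VAE": 672, "SOM-VQ": 617},
    "AIST++": {"VQ": 719, "SOM-hard": 742, "VQ-VAE": 727, "SOM-VQ": 679},
}


def relative_reduction(baseline_ppl, ours_ppl):
    """Fraction by which ours_ppl is lower than baseline_ppl."""
    return (baseline_ppl - ours_ppl) / baseline_ppl


reductions = {}
for domain, rows in SEQ_PPL.items():
    for method, ppl in rows.items():
        if method != "SOM-VQ":
            r = relative_reduction(ppl, rows["SOM-VQ"])
            reductions[(domain, method)] = r
            print(f"{domain:7s} vs {method:8s}: {100 * r:.1f}%")
```

The reductions range from about 5.6% (AIST++, vs. VQ) to 9.1% (Lorenz, vs. SOM-hard), which rounds to the quoted 6–9%.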
SOM-VQ's code utilization on Lorenz (71%) is lower than that of VQ and VQ-VAE (99%), whereas on AIST++ all three use 61% of the codes. We attribute the Lorenz gap to topology-aware allocation: unused grid positions tend to correspond to low-density regions, reflecting manifold structure rather than enforcing uniform coverage.

Why topology may improve learnability. SOM-VQ enforces that neighboring grid positions correspond to semantically similar latents, encouraging token sequences to follow more connected paths through the grid. Autoregressive models can exploit this regularity, which is consistent with the lower Seq-PPL observed in our experiments.

3.2. Scaling and Cross-Domain Behavior

Grid ablations (Appendix Tables 4 and 5) reveal domain-dependent scaling. Lorenz improves monotonically from 8 × 8 to 32 × 32: MSE decreases roughly 6.7× (0.047 → 0.007) and Trust rises (0.983 → 0.998). AIST++ plateaus at 32 × 32: scaling further to 48 × 48 or 64 × 64 yields no meaningful improvement (MSE ≈ 0.32, Trust ≈ 0.94), while utilization drops to 38% and then 23%, indicating that quality saturates at roughly 600–700 codes (61% of 1024 ≈ 625 active codes at 32 × 32). This suggests that the optimal grid size should match data complexity, with the drop in utilization providing a practical diagnostic.

Related structured codebooks include product quantization (Jégou et al., 2011) and residual VQ (Zeghidour et al., 2021), which improve capacity through compositional (product or residual) structure but lack explicit topology. VQ-GAN (Esser et al., 2021) achieves strong generation through adversarial training but operates in unstructured discrete spaces. SOM-VQ's contribution is imposing a low-dimensional geometric structure that enables interpretable control, as demonstrated in Section 4.

3.3. Ablation: isolating the contribution of topological structure

To rule out architectural confounds, we compare three capacity-matched variants with identical architecture (hidden = 256): VQ-EMA, VQ-EMA with dead-code reset, and SOM-VQ using its standard two-phase training schedule.
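The dead-code reset used by the VQ-EMA + reset variant can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it uses the EMA codebook update described by van den Oord et al. (2017) with decay 0.99 (decayed cluster sizes and sums), and treats a code as "dead" when it receives no assignments during an epoch, at which point it is reinitialized from a random training latent, as the appendix describes. The function names, batch granularity, and epsilon are hypothetical choices.

```python
import random


def nearest(z, codebook):
    """Index of the codebook entry closest to z (squared Euclidean distance)."""
    return min(range(len(codebook)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(z, codebook[k])))


def ema_update(batch, codebook, counts, sums, decay=0.99, eps=1e-5):
    """One VQ-EMA step on a batch; returns the code indices assigned in it."""
    dim = len(codebook[0])
    n_k = [0] * len(codebook)
    s_k = [[0.0] * dim for _ in codebook]
    assigned = []
    for z in batch:
        k = nearest(z, codebook)
        assigned.append(k)
        n_k[k] += 1
        s_k[k] = [a + b for a, b in zip(s_k[k], z)]
    for k in range(len(codebook)):
        counts[k] = decay * counts[k] + (1 - decay) * n_k[k]
        sums[k] = [decay * m + (1 - decay) * s for m, s in zip(sums[k], s_k[k])]
        codebook[k] = [m / (counts[k] + eps) for m in sums[k]]  # e_k = sum_k / count_k
    return assigned


def reset_dead_codes(codebook, counts, sums, used, latents, rng=random):
    """Reinitialize every code that received no assignments from a random latent."""
    for k in range(len(codebook)):
        if k not in used:
            codebook[k] = list(rng.choice(latents))
            counts[k] = 1.0
            sums[k] = list(codebook[k])


def train_vq_ema_reset(latents, K, epochs=5, batch_size=32, seed=0):
    rng = random.Random(seed)
    codebook = [list(rng.choice(latents)) for _ in range(K)]
    counts = [1.0] * K                    # accumulators start consistent with the codebook
    sums = [list(e) for e in codebook]
    for _ in range(epochs):
        data = latents[:]
        rng.shuffle(data)
        used = set()
        for start in range(0, len(data), batch_size):
            used.update(ema_update(data[start:start + batch_size], codebook, counts, sums))
        reset_dead_codes(codebook, counts, sums, used, latents, rng)  # once per epoch
    return codebook
```

Because the reset draws from the data, it keeps utilization near 100% regardless of how the codes are arranged; SOM-VQ has no such step and relies on the neighbourhood updates alone.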
On Lorenz, SOM-VQ, which uses no reset mechanism, achieves lower Seq-PPL than both VQ variants (829 vs. 1014 and 1017), consistent with neighbourhood pressure providing implicit codebook regularisation on structured low-dimensional data. On AIST++, both capacity-matched VQ variants achieve lower Seq-PPL (28 and 36 vs. 61), suggesting that the Seq-PPL advantage in Table 1 is partially attributable to the richer evaluation protocol of the main experiments; SOM-VQ's organised codebook geometry (Distortion) remains a property unavailable to either VQ variant. Full results are in Table 3 (supplementary).

Relationship to SOM-VAE. SOM-VAE (Fortuin et al., 2019) integrates SOM structure within a VAE to obtain interpretable latent representations for time-series clustering. However, it does not employ VQ-style commitment with exponential moving average updates, nor is it designed to produce stable discrete tokens for autoregressive generative modeling. In contrast, our method maintains persistent symbol identity through explicit VQ commitment and enables controlled perturbations of token activations within a structured, discrete latent manifold; such generative control requires stable symbol identities across training and inference.

3.4. Limitations

While we validate across two complementary domains, a broader evaluation across audio, images, or other modalities would strengthen generalizability claims. The VQ-VAE comparison involves architectural confounds (network capacity, dead-code mechanisms) that merit further controlled ablation beyond the capacity-matched study of Section 3.3. The human-in-the-loop demonstration in Section 4 is preliminary and would benefit from user studies and baseline comparisons.

4. Human-in-the-Loop Control

The topological structure of SOM-VQ enables intuitive control for interactive generation.
We demonstrate this through a preliminary experiment in which a generated motion sequence is steered to diverge from, and later converge toward, a reference performance.

We train a 32 × 32 SOM-VQ tokenizer on AIST++ and an LSTM (Hochreiter and Schmidhuber, 1997) on the resulting tokens. At inference, a validation sequence serves as the reference. After a prompt, generation proceeds under topology-aware sampling: during divergence, next-token sampling is biased toward grid positions distant from the human (reference) BMU; during convergence, sampling favors closer positions. Concretely, each token occupies a grid position $(i, j)$; to diverge, we bias sampling toward tokens with $\|c_{\text{model}} - c_{\text{human}}\| > \tau$, and to converge, toward tokens with $\|c_{\text{model}} - c_{\text{human}}\| < \tau$. This requires only Euclidean distances on the 2D grid.

[Figure 1 plot: horizontal axis Frame t; vertical axes BMU distance (× prompt mean) and Pose MSE (× prompt mean); phases: prompt, divergence, convergence.]

Figure 1. Human-in-the-loop control dynamics. Smoothed SOM grid distance (left) and prototype MSE (right), both normalised by their respective prompt-phase means, across the three interaction phases. Both metrics rise during divergence and return toward baseline during convergence, indicating that the topological steering operates consistently in grid space and in pose space simultaneously.

Figure 1 shows that the Gaussian-smoothed prototype MSE and grid distance both increase during divergence and decrease during convergence, suggesting that topological guidance enables controlled departure and recovery. Figure 2 shows the corresponding poses: during divergence, the model departs from the reference while remaining plausible; during convergence, reconstructions progressively realign.

This demonstrates how topology-aware representations support natural interaction: by manipulating grid neighborhoods, users can steer generation while preserving coherence.
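The divergence/convergence rule can be sketched as a reweighting of the model's next-token distribution. The paper does not specify how the bias is applied, so this sketch rests on hypothetical choices: tokens map to grid cells in row-major order, the bias is an additive logit bonus `strength` for tokens on the requested side of the threshold τ, and `logits` stands in for the LSTM's next-token scores.

```python
import math
import random


def grid_coord(k, side):
    """Row-major mapping from token index k to its integer grid position (i, j)."""
    return divmod(k, side)


def biased_probs(logits, human_bmu, side, tau, mode, strength=4.0):
    """Next-token probabilities with a logit bonus for tokens whose grid distance
    to the reference BMU is > tau (mode='diverge') or < tau (mode='converge')."""
    if mode not in ("diverge", "converge"):
        raise ValueError("mode must be 'diverge' or 'converge'")
    hi, hj = grid_coord(human_bmu, side)
    adjusted = []
    for k, logit in enumerate(logits):
        i, j = grid_coord(k, side)
        d = math.hypot(i - hi, j - hj)  # Euclidean distance on the 2D grid
        favoured = d > tau if mode == "diverge" else d < tau
        adjusted.append(logit + (strength if favoured else 0.0))
    m = max(adjusted)  # subtract the max before exponentiating, for stability
    weights = [math.exp(a - m) for a in adjusted]
    total = sum(weights)
    return [w / total for w in weights]


def biased_sample(logits, human_bmu, side, tau, mode, strength=4.0, rng=random):
    """Draw one token index from the biased distribution."""
    probs = biased_probs(logits, human_bmu, side, tau, mode, strength)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Toy 4x4 grid with a flat model distribution; the reference BMU is token 0 at (0, 0).
flat = [0.0] * 16
p_conv = biased_probs(flat, human_bmu=0, side=4, tau=2.0, mode="converge", strength=10.0)
print(sum(p_conv[k] for k in (0, 1, 4, 5)))  # mass on the cells closer than tau
```

With `strength=0.0` the function returns the model's unmodified distribution; letting `strength` grow without bound approaches a hard mask that forbids tokens on the wrong side of τ.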
Though preliminary, this experiment motivates applications in choreography, music improvisation, and embodied agent control that require interpretable real-time steering.

[Figure 2 panels: rows Human BMU (reference) and Model; frames t = 0, 71, 142, 213, 284, 355, 426, 497, 568, 639; phases: prompt, divergence, convergence.]

Figure 2. Generated poses under topology-guided control. Top: reference. Bottom: model generation.

The grid enables semantic control through geometric operations in token space. Crucially, this control mechanism requires no retraining, no conditioning variable, and no frame-level specification. Standard controllable generation methods (conditioning on class labels, text embeddings, or explicit pose constraints) require that the desired target be known and expressible in a predefined format before generation begins. The grid-based approach demonstrated here instead operates at the level of relational intent: the user specifies not a target state but a direction (closer to or further from a reference), and the generative model resolves this into coherent token sequences autonomously. This distinction matters for creative and interactive applications where the user's goal evolves in real time and cannot be pre-specified, which is precisely the setting of live choreography, musical improvisation, or collaborative embodied agents. The topology established during SOM-VQ training makes this mode of interaction structurally possible; it is not available to methods whose codebooks lack geometric organization.

5. Conclusions

We introduced SOM-VQ, a topology-aware vector quantization method that combines the stability of VQ-based tokenization with the geometric structure of Self-Organizing Maps. By endowing discrete tokens with an explicit low-dimensional topology, SOM-VQ enables interpretable control over generative processes and supports human-in-the-loop interaction through simple geometric operations in token space.
SOM-VQ produces more learnable and structured token sequences than the baselines in our main comparison, including VQ-VAE, as evidenced by lower sequence perplexity in both evaluated domains (Table 1), and it uniquely provides a navigable grid geometry that makes semantic control directly accessible without retraining.

The HIL experiment demonstrates that topology-aware token spaces support a qualitatively different kind of interaction than standard generative models: control is exercised through geometric reasoning on a discrete manifold rather than through low-level conditioning or post-hoc editing. This opens a promising direction for applications where a human collaborator needs to steer, constrain, or creatively depart from a generative process while maintaining structural coherence, scenarios common in interactive performance and embodied agent control.

References

Prafulla Dhariwal and Alexander Nichol. Diffusion models beat GANs on image synthesis. NeurIPS, 2021.
Prafulla Dhariwal et al. Jukebox: A generative model for music. arXiv preprint, 2020.
Patrick Esser, Robin Rombach, and Björn Ommer. Taming transformers for high-resolution image synthesis. CVPR, 2021.
Vincent Fortuin et al. SOM-VAE: Interpretable discrete representation learning on time series. ICLR, 2019.
Chuan Guo et al. Generating diverse and natural 3D human motions from text. CVPR, 2022.
Jonathan Ho and Tim Salimans. Classifier-free diffusion guidance. arXiv preprint, 2022.
Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.
Hervé Jégou, Matthijs Douze, and Cordelia Schmid. Product quantization for nearest neighbor search. IEEE Transactions on Pattern Analysis and Machine Intelligence, 33:117–128, 2011.
Korrawe Karunratanakul et al. Guided motion diffusion for controllable human motion synthesis. ICCV, 2023.
Teuvo Kohonen. Self-Organizing Maps. Springer, 2001.
Doyup Lee et al. Autoregressive image generation using residual quantization. CVPR, 2022.
Ruilong Li, Shan Yang, David A. Ross, and Angjoo Kanazawa. AI Choreographer: Music conditioned 3D dance generation with AIST++. ICCV, 2021.
Xihui Liu et al. More control for free! Image synthesis with semantic diffusion guidance. WACV, 2023.
Edward N. Lorenz. Deterministic nonperiodic flow. Journal of the Atmospheric Sciences, 20(2):130–141, 1963. doi:10.1175/1520-0469(1963)020<0130:DNF>2.0.CO;2.
Fabian Mentzer et al. Finite scalar quantization: VQ-VAE made simple. arXiv preprint, 2023.
Mathis Petrovich et al. TEMOS: Generating diverse human motions from textual descriptions. ECCV, 2022.
Ali Razavi, Aäron van den Oord, and Oriol Vinyals. Generating diverse high-fidelity images with VQ-VAE-2. NeurIPS, 2019.
Aäron van den Oord, Oriol Vinyals, and Koray Kavukcuoglu. Neural discrete representation learning. NeurIPS, 2017.
Jarkko Venna and Samuel Kaski. Local multidimensional scaling with controlled tradeoff between trustworthiness and continuity. Proceedings of the Workshop on Self-Organizing Maps, 2006.
Wilson Yan et al. VideoGPT: Video generation using VQ-VAE and transformers. arXiv preprint arXiv:2104.10157, 2021.
Lijun Yu et al. Language model beats diffusion – tokenizer is key to visual generation. arXiv preprint arXiv:2310.05737, 2023.
Neil Zeghidour, Alejandro Luebs, Ahmed Omran, Jan Skoglund, and Marco Tagliasacchi. SoundStream: An end-to-end neural audio codec. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 30:495–507, 2021.

A. Supplementary Material

Experimental Details

Datasets and preprocessing. Lorenz attractor: We generate 400 trajectories of 300 timesteps each using the standard parameters σ = 10, ρ = 28, β = 8/3 and step size dt = 0.01 (here σ and β are the Lorenz system parameters, unrelated to the SOM bandwidth and the commitment weight). Each timestep is represented by a 6D feature vector $(x, y, z, \dot{x}, \dot{y}, \dot{z})$, with velocities computed by finite differences. We apply a temporal window of 4 frames and, after z-score normalization, reduce to 8D via PCA. Train/val/test splits are 70%/15%/15%.

AIST++: Motion sequences are preprocessed with root centering (hip midpoint), frontal alignment (yaw normalization via shoulder orientation), and a canonical coordinate-system transformation. Each pose is a 51D vector (17 COCO joints × 3 coordinates). Sequences are segmented into non-overlapping 240-frame windows. After z-score normalization, we reduce to 32D via PCA. Train/val/test splits are 70%/15%/15% over 400 sequences.

Architecture and training. All methods use encoder/decoder networks with 2-layer MLPs, ReLU activations, and a latent dimension matching the PCA output (8D for Lorenz, 32D for AIST++). Hidden size scales with codebook size: 128 for K ≤ 64, 256 for 64 < K ≤ 1024, and 512 for K > 1024. VQ-VAE uses hidden = 512 throughout to handle larger codebooks effectively. Training uses Adam (lr = 10⁻³, λ = 0.25), batch size 256, for 50 epochs.

SOM-VQ training procedure. Training proceeds in two phases. (1) SOM pre-training: 10 epochs of pure SOM updates to establish the initial topology, with the neighborhood bandwidth annealed from $\sigma_{\text{start}} = \sqrt{K}/2$ to $\sigma_{\text{end}} = 1.0$ and topology learning rate $\eta = 0.2$. (2) Joint training: 50 epochs of combined encoder/decoder optimization with two-stage codebook updates (SOM topology + VQ commitment), using fixed $\eta = 0.2$, $\alpha = 0.05$ (EMA rate), and $\sigma = 1.0$ (fixed narrow bandwidth). This two-phase schedule ensures that the grid structure is established before encoder optimization begins, preventing encoder drift from disrupting topology formation.

Hyperparameter selection. The EMA rate α = 0.05 was selected based on validation-set performance across preliminary experiments on both domains. While α = 0.03 achieves slightly lower Lorenz Seq-PPL (597 vs. 617), we report α = 0.05 throughout to maintain consistency across domains and avoid domain-specific tuning. The difference is within the range of seed variation and does not meaningfully affect the method comparison.

Evaluation metrics. Trustworthiness $T(k)$ and continuity $C(k)$ measure $k$-nearest-neighbor preservation between the continuous latent space and the discrete embedding (Venna and Kaski, 2006):

$$T(k) = 1 - \frac{2}{nk(2n - 3k - 1)} \sum_{i=1}^{n} \sum_{j \in V_k(i) \setminus U_k(i)} \big(r_Z(i, j) - k\big), \tag{7}$$

$$C(k) = 1 - \frac{2}{nk(2n - 3k - 1)} \sum_{i=1}^{n} \sum_{j \in U_k(i) \setminus V_k(i)} \big(r_E(i, j) - k\big), \tag{8}$$

where $U_k(i)$ and $V_k(i)$ are the $k$-NN sets of point $i$ in the original and embedded spaces, respectively, and $r_Z(i, j)$ and $r_E(i, j)$ are the ranks of $j$ among the neighbors of $i$ in the original and embedded spaces. We use $k = 10$ for $K \le 256$, $k = 12$ for $K = 1024$, and $k = 15$ for $K > 1024$.

Distortion measures global codebook geometry: the mean ratio of inter-code distances to expected distances under uniform grid spacing. Lower values indicate that codebook entries respect the grid's geometric structure.

Sequence perplexity (Seq-PPL): We train a single-layer GRU with embedding dimension 64 and hidden dimension 128, using Adam (lr = 3 × 10⁻⁴) for 10 epochs with batch size 64. Perplexity is $\exp(\mathcal{L}_{\text{CE}})$, where $\mathcal{L}_{\text{CE}}$ is the mean cross-entropy on validation tokens.

Baselines. VQ (van den Oord et al., 2017): standard vector quantization with exponential moving average (α = 0.99). SOM-hard: self-organizing map with a Gaussian neighborhood kernel, annealed bandwidth, and learning rate η = 0.2; it has no VQ commitment and is stable for encoding but cannot support generation due to representational drift. VQ-VAE (van den Oord et al., 2017; Razavi et al., 2019): learned encoder/decoder with hidden = 512, codebook EMA, and dead-code reset when utilization drops below a threshold (unused codes are reinitialized from random training samples). This mechanism enforces near-complete utilization but changes the optimization landscape compared to SOM-based methods.
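The Lorenz preprocessing described under Datasets and preprocessing can be sketched as follows. Several details are not stated in the text and are assumptions here: integration uses a fourth-order Runge–Kutta step, initial states are drawn uniformly from a box around the attractor with no burn-in, velocities are forward differences, and 4-frame windows are formed by concatenation with stride 1. The z-score normalization and PCA to 8D are omitted to keep the example dependency-free.

```python
import random

SIGMA, RHO, BETA, DT = 10.0, 28.0, 8.0 / 3.0, 0.01  # Lorenz parameters from the text


def lorenz_rhs(s):
    x, y, z = s
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(s, dt=DT):
    """One fourth-order Runge-Kutta step (the integrator is an assumption)."""
    def shift(a, b, h):
        return tuple(ai + h * bi for ai, bi in zip(a, b))
    k1 = lorenz_rhs(s)
    k2 = lorenz_rhs(shift(s, k1, dt / 2))
    k3 = lorenz_rhs(shift(s, k2, dt / 2))
    k4 = lorenz_rhs(shift(s, k3, dt))
    return tuple(si + dt / 6 * (a + 2 * b + 2 * c + d)
                 for si, a, b, c, d in zip(s, k1, k2, k3, k4))


def trajectory(n_steps=300, rng=random):
    """n_steps frames of 6D features (x, y, z, dx/dt, dy/dt, dz/dt)."""
    s = (rng.uniform(-15, 15), rng.uniform(-15, 15), rng.uniform(5, 40))  # assumed range
    states = [s]
    for _ in range(n_steps):  # one extra state so every frame has a forward difference
        s = rk4_step(s)
        states.append(s)
    return [p + tuple((q - r) / DT for q, r in zip(nxt, p))
            for p, nxt in zip(states[:-1], states[1:])]


def windows(frames, width=4):
    """Concatenate `width` consecutive 6D frames into 6*width-D vectors (stride 1 assumed)."""
    return [sum(frames[t:t + width], ()) for t in range(len(frames) - width + 1)]


rng = random.Random(0)
dataset = [windows(trajectory(rng=rng)) for _ in range(400)]
print(len(dataset), len(dataset[0]), len(dataset[0][0]))  # 400 297 24
```

Under these assumptions each trajectory yields 297 windows of 24 dimensions, which the pipeline in the text would then normalize and project to 8D.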
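Equations (7) and (8) and the Seq-PPL definition can be sketched in plain Python. This is a brute-force illustration for small n, not the evaluation code behind the tables; for real data, scikit-learn's `sklearn.manifold.trustworthiness(X, X_embedded, n_neighbors=...)` computes T(k), and continuity follows by swapping the two arguments. How the paper ranks ties among points that share a BMU in the discrete embedding is not stated; the sketch breaks ties by point index, which is an assumption.

```python
import math


def neighbour_ranks(points):
    """ranks[i][j] = rank of point j among the neighbours of point i (1 = nearest).
    Ties in distance are broken by point index (an assumption)."""
    n = len(points)
    ranks = []
    for i in range(n):
        order = sorted((j for j in range(n) if j != i),
                       key=lambda j: (math.dist(points[i], points[j]), j))
        ranks.append({j: r for r, j in enumerate(order, start=1)})
    return ranks


def trustworthiness_continuity(Z, E, k):
    """T(k) and C(k) from Eqs. (7)-(8); Z = original points, E = embedded points."""
    n = len(Z)
    if not 1 <= k < (2 * n - 1) / 3:
        raise ValueError("k too large for the normalisation in Eqs. (7)-(8)")
    r_Z, r_E = neighbour_ranks(Z), neighbour_ranks(E)
    U = [{j for j, r in r_Z[i].items() if r <= k} for i in range(n)]  # k-NN in original space
    V = [{j for j, r in r_E[i].items() if r <= k} for i in range(n)]  # k-NN in embedded space
    norm = 2.0 / (n * k * (2 * n - 3 * k - 1))
    T = 1 - norm * sum(r_Z[i][j] - k for i in range(n) for j in V[i] - U[i])
    C = 1 - norm * sum(r_E[i][j] - k for i in range(n) for j in U[i] - V[i])
    return T, C


def perplexity(target_probs):
    """exp of the mean cross-entropy (natural log) of the observed validation tokens."""
    mean_nll = -sum(math.log(p) for p in target_probs) / len(target_probs)
    return math.exp(mean_nll)


pts = [(0.0,), (1.0,), (3.0,), (6.0,), (10.0,)]
swapped = [(0.0,), (10.0,), (3.0,), (6.0,), (1.0,)]  # points 1 and 4 exchanged
print(trustworthiness_continuity(pts, pts, k=1))      # (1.0, 1.0)
print(trustworthiness_continuity(pts, swapped, k=1))
print(perplexity([1 / 1024] * 50))                    # close to 1024
```

The last line is a useful reference point for the Seq-PPL column: a predictor that is uniform over K = 1024 codes has perplexity 1024, so values below that measure how much sequential structure the GRU extracts.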
Full Method Comparison

Table 2 reports complete statistics at 32 × 32, including distortion (global codebook geometry, measured via mean inter-code distance relative to expected uniform grid spacing) and standard deviations over 5 seeds.

Table 2. Full method comparison at 32 × 32 (K = 1024), mean ± std over 5 seeds. Distortion (n/a) is undefined for unstructured codebooks.

| Domain | Method | Act. (%) | Trust ↑ | Cont. ↑ | MSE ↓ | Seq-PPL ↓ | Dist. ↓ |
|---|---|---|---|---|---|---|---|
| Lorenz | VQ | 99 | 0.999 ± 0.000 | 0.960 ± 0.001 | 0.005 ± 0.000 | 678 ± 12 | n/a |
| Lorenz | SOM-hard | 95 | 0.998 ± 0.000 | 0.942 ± 0.001 | 0.006 ± 0.000 | 679 × | 0.041 ± 0.004 |
| Lorenz | VQ-VAE | 99 | 0.999 ± 0.000 | 0.961 ± 0.001 | 0.003 ± 0.000 | 672 ± 9 | n/a |
| Lorenz | SOM-VQ | 71 | 0.998 ± 0.000 | 0.942 ± 0.001 | 0.007 ± 0.001 | 617 ± 8 | 0.062 ± 0.006 |
| AIST++ | VQ | 61 | 0.940 ± 0.006 | 0.898 ± 0.004 | 0.294 ± 0.039 | 719 ± 18 | n/a |
| AIST++ | SOM-hard | 72 | 0.932 ± 0.011 | 0.897 ± 0.003 | 0.342 ± 0.050 | 742 × | 0.093 ± 0.003 |
| AIST++ | VQ-VAE | 61 | 0.978 ± 0.001 | 0.950 ± 0.001 | 0.200 ± 0.027 | 727 ± 15 | n/a |
| AIST++ | SOM-VQ | 61 | 0.937 ± 0.008 | 0.895 ± 0.004 | 0.319 ± 0.041 | 679 ± 11 | 0.111 ± 0.005 |

Capacity-Matched Ablation

Table 3. Capacity-matched ablation at 32 × 32 (K = 1024), mean ± std over 5 seeds. All variants share identical architecture (hidden = 256). VQ-EMA + reset reinitialises dead codes each epoch; SOM-VQ uses a two-phase schedule matching the main experiments (10 epochs of pure SOM pre-training, 50 epochs of joint training) with no reset. Without dead-code reset, SOM-VQ achieves lower Seq-PPL than both VQ variants on Lorenz, consistent with neighbourhood pressure providing implicit codebook regularisation; on AIST++, both VQ variants achieve lower Seq-PPL. Distortion (n/a) is undefined for unstructured codebooks.

| Domain | Method | Act. (%) | Trust ↑ | Cont. ↑ | MSE ↓ | Seq-PPL ↓ | Dist. ↓ |
|---|---|---|---|---|---|---|---|
| Lorenz | VQ-EMA | 100 | 0.999 ± 0.000 | 0.999 ± 0.000 | 0.041 ± 0.001 | 1014 ± 4 | n/a |
| Lorenz | VQ-EMA + reset | 100 | 0.999 ± 0.000 | 0.999 ± 0.000 | 0.046 ± 0.003 | 1017 ± 3 | n/a |
| Lorenz | SOM-VQ | 76 | 0.999 ± 0.000 | 0.999 ± 0.000 | 0.074 ± 0.016 | 829 ± 23 | 0.210 ± 0.005 |
| AIST++ | VQ-EMA | 90 | 0.936 ± 0.003 | 0.941 ± 0.003 | 0.948 ± 0.535 | 28 ± 3 | n/a |
| AIST++ | VQ-EMA + reset | 99 | 0.932 ± 0.003 | 0.941 ± 0.004 | 1.179 ± 0.501 | 36 ± 4 | n/a |
| AIST++ | SOM-VQ | 98 | 0.906 ± 0.004 | 0.916 ± 0.004 | 1.149 ± 0.470 | 61 ± 5 | 0.350 ± 0.007 |

Grid Size Ablations

Table 4. SOM-VQ grid size ablation on the Lorenz attractor, mean ± std over 5 seeds. Trust, MSE, and Distortion improve monotonically with grid size (continuity is roughly constant); utilization decreases due to topology-aware selection.

| Grid | K | Act. (%) | Trust | Cont. | MSE | Dist. |
|---|---|---|---|---|---|---|
| 8 × 8 | 64 | 89 | 0.983 ± 0.002 | 0.941 ± 0.004 | 0.047 ± 0.004 | 0.202 ± 0.013 |
| 16 × 16 | 256 | 81 | 0.996 ± 0.001 | 0.943 ± 0.002 | 0.016 ± 0.002 | 0.117 ± 0.004 |
| 32 × 32 | 1024 | 71 | 0.998 ± 0.000 | 0.942 ± 0.001 | 0.007 ± 0.001 | 0.062 ± 0.006 |

Table 5. SOM-VQ grid size ablation on AIST++, mean ± std over 5 seeds. Quality plateaus at 32 × 32; utilization drops sharply beyond it, indicating saturation relative to dataset complexity.

| Grid | K | Act. (%) | Trust | Cont. | MSE | Dist. |
|---|---|---|---|---|---|---|
| 16 × 16 | 256 | 89 | 0.915 ± 0.007 | 0.876 ± 0.007 | 0.351 ± 0.047 | 0.263 ± 0.008 |
| 32 × 32 | 1024 | 61 | 0.937 ± 0.008 | 0.895 ± 0.004 | 0.319 ± 0.041 | 0.111 ± 0.005 |
| 48 × 48 | 2304 | 38 | 0.945 ± 0.008 | 0.904 ± 0.006 | 0.324 ± 0.043 | 0.057 ± 0.001 |
| 64 × 64 | 4096 | 23 | 0.945 ± 0.006 | 0.905 ± 0.006 | 0.321 ± 0.039 | 0.035 ± 0.001 |

Training Algorithm

Algorithm 1. SOM-VQ Joint Training (Phase 2)
Require: latent vectors {z_i}, i = 1..N; grid coordinates {c_k}, k = 1..K
Require: topology learning rate η, EMA rate α, fixed bandwidth σ
Initialize codebook {e_k} from 10-epoch SOM pre-training
for epoch t = 1 to T do
    for each z_i in random order do
        k* ← argmin_k ‖z_i − e_k‖²
        for each codebook entry e_k do
            d ← ‖c_k − c_{k*}‖
            h ← exp(−d² / (2σ²))
            e_k ← e_k + η · h · (z_i − e_k)        ▷ SOM topology
        end for
        e_{k*} ← (1 − α) · e_{k*} + α · z_i        ▷ VQ commitment
    end for
end for

Note: This algorithm describes Phase 2 (joint training). Phase 1 consists of 10 epochs of pure SOM updates with annealed bandwidth to establish the initial topology before encoder training begins.
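Algorithm 1 and the Phase 1 pre-training can be turned into a small runnable sketch. It operates on a fixed set of latent vectors, so the encoder/decoder gradient step of Eq. (6) that runs alongside Phase 2 is omitted. The random codebook initialization and the exponential shape of the Phase 1 bandwidth schedule (from σ_start = √K/2 to σ_end = 1.0) are assumptions, since the text gives only the endpoints. Pure Python keeps the example self-contained but is far too slow for K = 1024 on real data.

```python
import math
import random


def grid_coords(side):
    """Integer coordinates c_k of a side x side grid, in row-major order."""
    return [(i, j) for i in range(side) for j in range(side)]


def bmu(z, codebook):
    """Best-matching unit: index of the nearest codebook entry (Eq. 2)."""
    return min(range(len(codebook)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(z, codebook[k])))


def som_vq_epoch(latents, codebook, coords, eta, sigma, alpha=None, rng=random):
    """One pass in random order, updating `codebook` in place. alpha=None gives
    pure SOM updates (Phase 1); otherwise the BMU also gets the commitment update."""
    order = list(range(len(latents)))
    rng.shuffle(order)
    for idx in order:
        z = latents[idx]
        k_star = bmu(z, codebook)
        ci, cj = coords[k_star]
        for k, (i, j) in enumerate(coords):
            h = math.exp(-((i - ci) ** 2 + (j - cj) ** 2) / (2 * sigma ** 2))  # Eq. 4
            codebook[k] = [e + eta * h * (zc - e) for e, zc in zip(codebook[k], z)]  # Eq. 3
        if alpha is not None:
            codebook[k_star] = [(1 - alpha) * e + alpha * zc
                                for e, zc in zip(codebook[k_star], z)]  # Eq. 5


def train_codebook(latents, side, pre_epochs=10, joint_epochs=50,
                   eta=0.2, alpha=0.05, sigma_end=1.0, seed=0):
    rng = random.Random(seed)
    dim = len(latents[0])
    coords = grid_coords(side)
    codebook = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in coords]  # assumed init
    sigma_start = side / 2  # sqrt(K) / 2
    for t in range(pre_epochs):  # Phase 1: annealed bandwidth, no commitment
        frac = t / max(pre_epochs - 1, 1)
        sigma = sigma_start * (sigma_end / sigma_start) ** frac
        som_vq_epoch(latents, codebook, coords, eta, sigma, rng=rng)
    for _ in range(joint_epochs):  # Phase 2 (Algorithm 1): fixed narrow bandwidth + commitment
        som_vq_epoch(latents, codebook, coords, eta, sigma_end, alpha=alpha, rng=rng)
    return codebook, coords


data_rng = random.Random(1)
latents = [[data_rng.random(), data_rng.random()] for _ in range(200)]
codebook, coords = train_codebook(latents, side=4, pre_epochs=3, joint_epochs=3)
adjacent = [math.dist(codebook[a], codebook[b])
            for a, (i, j) in enumerate(coords) for b, (p, q) in enumerate(coords)
            if abs(i - p) + abs(j - q) == 1]
all_pairs = [math.dist(codebook[a], codebook[b])
             for a in range(len(codebook)) for b in range(a + 1, len(codebook))]
print(sum(adjacent) / len(adjacent), sum(all_pairs) / len(all_pairs))
```

On this toy 2D data, grid-adjacent codes end up closer to each other than the average pair of codes, which is the neighbourhood-preservation property that the token-space control in Section 4 relies on.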
