MIP Candy: A Modular PyTorch Framework for Medical Image Processing

Medical image processing demands specialized software that handles high-dimensional volumetric data, heterogeneous file formats, and domain-specific training procedures. Existing frameworks either provide low-level components that require substantial…

Authors: Tianhao Fu, Yucheng Chen

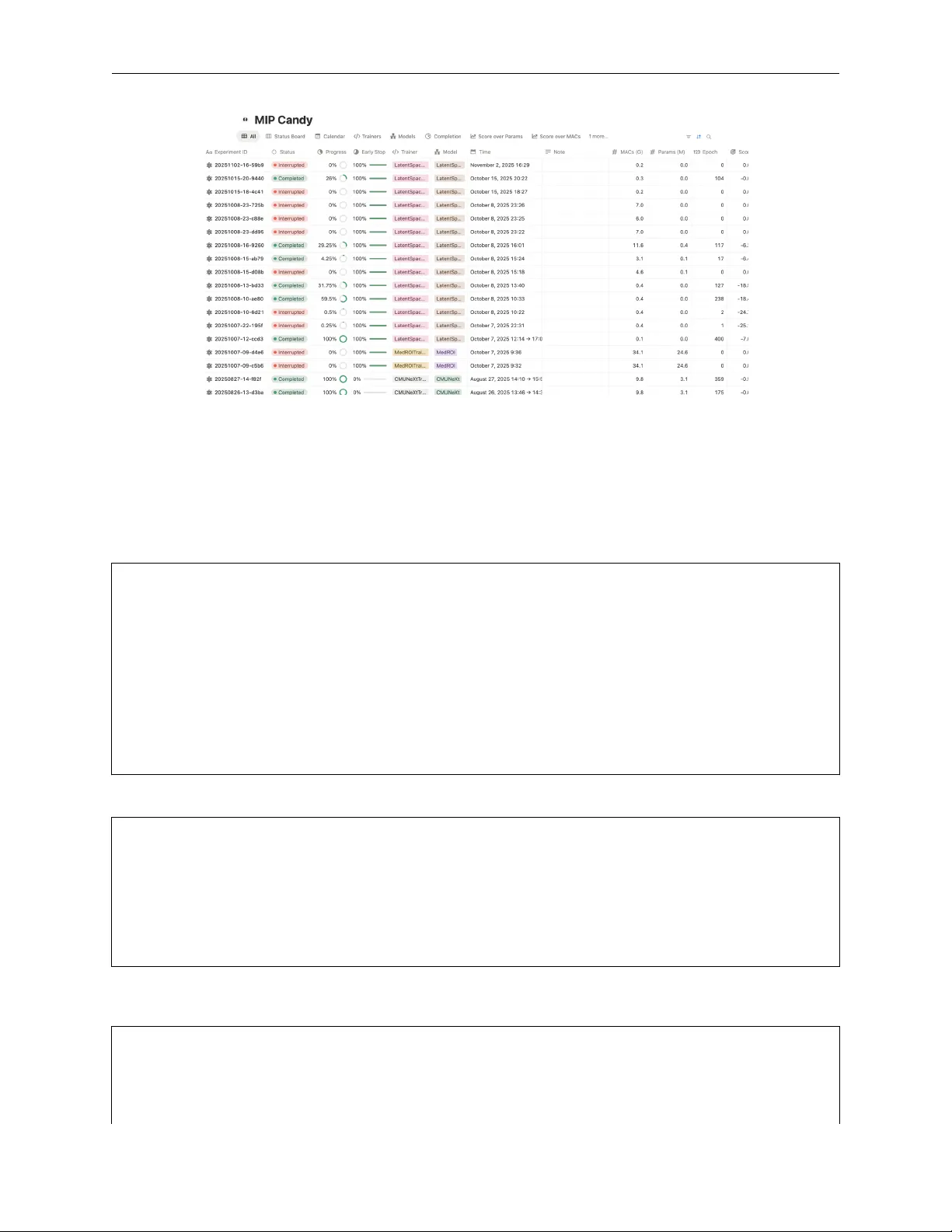

M I P C A N DY : A M O D U L A R P Y T O R C H F R A M E W O R K F O R M E D I C A L I M AG E P R O C E S S I N G T E C H N I C A L R E P O RT Tianhao Fu ∗ Univ ersity of T oronto, T oronto, ON, Canada V ector Institute, T oronto, ON, Canada Project Neura, T oronto, ON, Canada UTMIST , T oronto, ON, Canada terry.fu@projectneura.org Y ucheng Chen ∗ Project Neura, T oronto, ON, Canada Amplimit, T oronto, ON, Canada steven.chen@projectneura.org A B S T R AC T Medical image processing demands specialized software that handles high-dimensional v olumetric data, heterogeneous file formats, and domain-specific training procedures. Existing frame works either provide lo w-lev el components that require substantial integration effort or impose rigid, monolithic pipelines that resist modification. W e present MIP Candy (MIPCandy), a freely av ailable, PyT orch- based framew ork designed specifically for medical image processing. MIPCandy pro vides a complete, modular pipeline spanning data loading, training, inference, and ev aluation, allowing researchers to obtain a fully functional process w orkflow by implementing a single method— build_network — while retaining fine-grained control ov er every component. Central to the design is LayerT , a deferred configuration mechanism that enables runtime substitution of con volution, normalization, and acti vation modules without subclassing. The framework further offers b uilt-in k -fold cross-v alidation, dataset inspection with automatic region-of-interest detection, deep supervision, exponential mo ving av erage, multi-frontend experiment tracking (W eights & Biases, Notion, MLflo w), training state recov ery , and validation score prediction via quotient regression. An extensible b undle ecosystem provides pre-built model implementations that follow a consistent trainer–predictor pattern and integrate with the core frame work without modification. MIPCandy is open-source under the Apache- 2.0 license and requires Python 3.12 or later . Source code and documentation are av ailable at https://github.com/ProjectNeura/MIPCandy . 1 Introduction Medical image segmentation—the task of assigning a semantic label to each v oxel in a clinical scan—is a fundamental step in computer -aided diagnosis, treatment planning, and longitudinal disease monitoring. Unlike natural images, medical data are typically stored in domain-specific formats (NIfTI, DICOM, MHA) that encode acquisition metadata such as vox el spacing, orientation, and modality . V olumes are often three-dimensional, high-resolution, and acquired with anisotropic spacing, making both the data handling and the model training substantially more in volved than in standard computer vision pipelines. Meanwhile, expert annotations are scarce and e xpensive , placing a premium on training strategies—cross-v alidation, data augmentation, deep supervision—that extract maximal information from limited labels. General-purpose deep learning framew orks such as PyT orch [P aszke et al., 2019] and T ensorFlo w [Abadi et al., 2016] provide the computational substrate b ut lack medical-imaging-specific functionality . Building a segmentation pipeline from scratch on top of these frameworks requires implementing format-aw are data loading, geometry-preserving transforms, specialized loss functions, sliding window inference for large volumes, and reproducible experiment management—a significant engineering effort that is duplicated across research groups. ∗ Equal contribution. MIP Candy T E C H N I C A L R E P O RT Sev eral domain-specific frameworks ha ve been de veloped to address this gap, ranging from comprehensive component libraries such as MON AI [Cardoso et al., 2022] and T orchIO [Pérez-García et al., 2021] to fully automated pipelines such as nnU-Net [Isensee et al., 2021]. Howe ver , existing solutions tend to ward one of two e xtremes: they either provide low-le vel components that require substantial assembly effort or impose monolithic pipelines that resist modification. W e revie w these approaches in Section 2. W e present MIP Candy (hereafter MIPCandy), a PyT orch-based framework designed to occupy the middle ground between these extremes. MIPCandy provides a complete pipeline—from data loading and dataset inspection through training and inference to ev aluation—yet e very component is independently usable and replaceable. A researcher can obtain a fully functional segmentation w orkflow by implementing a single abstract method, build_network , on top of the provided SegmentationTrainer preset; alternati vely , indi vidual modules such as the metric functions, the visualization utilities, or the dataset classes can be adopted incrementally into an existing PyT orch codebase. The principal contributions of this w ork are as follows: 1. LayerT , a deferred module configuration mechanism that enables runtime substitution of con volution, normal- ization, and activ ation layers without subclassing (Section 4.2). 2. A hierarchical training framework with pre-configured segmentation presets, deep supervision, exponential moving a verage, training state recov ery , and multi-frontend experiment tracking (Section 4.3). 3. A dataset inspection system that automatically computes foreground bounding boxes, class distributions, and intensity statistics, enabling region-of-interest-based patch sampling (Section 4.1). 4. V alidation score prediction via quotient re gression, which fits a rational function to the validation trajectory and estimates both the maximum achiev able score and the optimal stopping epoch (Section 4.3). 5. An e xtensible b undle ecosystem that packages model architectures, trainers, and predictors into self-contained, reusable units (Section 5). MIPCandy requires Python 3.12 or later and mak es deliberate use of modern language features—type aliases (PEP 613), pattern matching, the Self type, and the @override decorator—to improv e readability and catch errors at de velopment time. The frame work is released under the Apache-2.0 license at https://github.com/ProjectNeura/MIPCandy . 2 Related W ork Existing software for medical image segmentation can be broadly or ganized into three categories: general-purpose deep learning framew orks, domain-specific component libraries, and end-to-end segmentation pipelines. General-purpose frameworks. PyT orch [Paszke et al., 2019] and T ensorFlow [Abadi et al., 2016] provide the foundational building blocks—automatic differentiation, GPU-accelerated tensor operations, and modular neural network layers—on which all contemporary medical imaging tools are b uilt. Higher -level wrappers such as PyT orch Lightning [Falcon and The PyT orch Lightning team, 2019] reduce boilerplate by standardizing the training loop, checkpoint management, and distrib uted training. Howe ver , none of these frameworks are aware of the particularities of medical data: volumetric file formats, vox el spacing, anisotropic resolution, or the class-imbalance and small-dataset regimes that are characteristic of clinical annotations. Researchers building on these framew orks must therefore implement format-aw are data loading, geometry-preserving transforms, specialized loss functions, and reproducible experiment management from scratch—an engineering ef fort that is duplicated across groups. Domain-specific component libraries. MON AI [Cardoso et al., 2022] is the most widely adopted medical imaging library for PyT orch. It provides a large collection of transforms (spatial, intensity , crop/pad, with both array and dictionary interfaces), netw ork architectures, loss functions, and metrics, together with Ignite-based training engines. MON AI follows an opt-in, compositional design: indi vidual components can be imported independently and composed with vanilla PyT orch code. This flexibility , howe ver , comes at the cost of assembly ef fort. Constructing a complete training pipeline in MON AI requires the user to select and configure each component—data loaders, transform chains, network, optimizer , loss, metric, engine, and ev ent handlers—and wire them together manually . There is no single entry point that produces a working segmentation w orkflow with researched defaults. T orchIO [Pérez-García et al., 2021] focuses on a narrower scope: efficient loading, preprocessing, augmentation, and patch-based sampling of medical images. It integrates well with PyT orch’ s DataLoader and supports queue-based patch e xtraction for lar ge 3D v olumes. T orchIO is complementary to, rather than competiti ve with, pipeline frame works; it addresses data handling but does not pro vide training loops, experiment management, or e valuation utilities. 2 MIP Candy T E C H N I C A L R E P O RT T able 1: Feature comparison of activ e medical image segmentation frame works. Feature nnU-Net MON AI T orchIO MIPCandy Complete training pipeline ✓ – – ✓ One-method setup ✓ – – ✓ Modular / individually usable – ✓ ✓ ✓ Custom architecture swap Hard Manual N/A build_network Deep supervision ✓ Manual N/A One flag EMA support – Manual N/A One flag T raining state recovery ✓ Manual N/A Built-in Real-time metric visualization – V ia handlers N/A Built-in Prediction previe ws – – N/A Built-in Score prediction / ETC – – – ✓ Multi-frontend tracking T ensorBoard T ensorBoard N/A W andB / Notion / MLflow Dataset inspection & R OI Internal – – inspect() Patch-based sampling ✓ ✓ ✓ ✓ k -fold cross-validation ✓ – – ✓ Bundle / model ecosystem – MON AI Bundles – ✓ End-to-end segmentation pipelines. nnU-Net [Isensee et al., 2021] occupies the opposite end of the spectrum. Giv en a dataset in a prescribed format, it automatically determines the preprocessing strategy , network topology , training schedule, and post-processing, achieving state-of-the-art results on a wide range of benchmarks [Isensee et al., 2024]. This automation, ho wev er , comes at the cost of modularity . The pipeline’ s components—data augmentation, architecture selection, loss function, and training loop—are tightly coupled and not designed to be used independently . Substituting a custom network architecture, loss function, or training strate gy requires modifying nnU-Net’ s internal code rather than composing external modules. Furthermore, the training process provides limited real-time visibility: intermediate predictions, per-epoch metric trajectories, and estimated time to completion are not surfaced to the user during training. MIST [Celaya et al., 2024] is a more recent end-to-end framework that similarly automates preprocessing and training for 3D medical image segmentation, though with a simpler , more configurable pipeline than nnU-Net. Earlier efforts. NiftyNet [Gibson et al., 2018], built on T ensorFlow , was among the first open-source platforms dedicated to medical image analysis, providing configurable pipelines for segmentation, regression, and image gen- eration. DL TK [Pawlo wski et al., 2017] offered reference deep learning implementations for medical imaging, and DeepNeuro [Beers et al., 2021] targeted neuroimaging workflo ws. All three projects are now lar gely unmaintained and incompatible with current versions of their underlying frame works. Positioning . T able 1 summarizes the capabilities of the most rele vant acti ve frame works. MIPCandy is designed to combine the completeness of an end-to-end pipeline with the modularity of a component library . Like nnU-Net, it provides a fully configured training workflo w with researched defaults—a working segmentation pipeline can be obtained by implementing a single method ( build_network ). Like MON AI, every component is independently usable and replaceable. Unlike both, MIPCandy emphasizes training transpar ency : per-epoch metric curves, input–label– prediction pre views, v alidation score prediction with estimated time to completion, and multi-frontend experiment tracking are built into the training loop rather than requiring e xternal configuration. 3 Design Philosophy MIPCandy is guided by four design principles that together shape the frame work’ s API, implementation, and extension model. PyT orch-nativ e. Every trainable component in MIPCandy is a standard nn.Module ; ev ery dataset is a standard torch.utils.data.Dataset . Loss functions, normalization layers, padding operators, and deep supervision wrap- pers are all nn.Module subclasses that compose with the rest of the PyT orch ecosystem without adaptation. As a consequence, any e xisting PyT orch utility—distributed data parallelism, automatic mixed precision, torch.compile — can be applied to MIPCandy components without modification. 3 MIP Candy T E C H N I C A L R E P O RT Opt-in and incremental. No module assumes that the rest of the framework is present. A researcher can adopt a single component—a loss function, a dataset class, a metric—into an existing codebase and later inte grate additional modules as needed. Composition ov er inheritance. MIPCandy fa vors runtime configuration o ver class proliferation. The LayerT mecha- nism (Section 4.2) stores a module type together with its constructor arguments and instantiates the module on demand, enabling users to swap con volution, normalization, or acti vation layers by passing diff erent LayerT instances rather than defining ne w subclasses. The same compositional approach appears throughout: DeepSupervisionWrapper wraps any loss module, BinarizedDataset wraps any supervised dataset, and TrainerToolbox bundles model, optimizer , scheduler , and criterion into a flat dataclass. Minimal API surface. The common case should require no configuration. SegmentationTrainer ships with a pre-configured optimizer , scheduler , and loss that selects the appropriate v ariant based on the number of classes. A complete training run can be launched with trainer.train(100) ; all optional keyw ord arguments hav e researched defaults. Con versely , every def ault is overridable via class attrib utes or method overrides. 4 System Architectur e MIPCandy is organized into nine loosely coupled modules, summarized in T able 2. Each module can be imported and used independently; the training frame work, for example, has no compile-time dependency on the e valuation module, and the metrics module depends only on PyT orch tensors. T able 2: MIPCandy module ov erview . Module Responsibility mipcandy.data Multi-format I/O, dataset classes, k -fold cross-v alidation, trans- forms, dataset inspection, visualization mipcandy.layer LayerT configuration, device management, checkpoint I/O, WithNetwork and WithPaddingModule base classes mipcandy.training Trainer base class, TrainerToolbox dataclass, experiment management, validation score prediction mipcandy.presets SegmentationTrainer preset with pre-configured loss, opti- mizer , scheduler , and deep supervision mipcandy.inference Predictor base class, parse_predictant utility , file-lev el pre- diction and export mipcandy.evaluation Evaluator class, EvalResult container with per-case and ag- gregate metrics mipcandy.metrics Dice-family metrics: binary_dice , dice_similarity_coefficient , soft_dice mipcandy.frontend Experiment tracking frontends: W eights & Biases, Notion, MLflow , and hybrid combinations mipcandy.common Building blocks: con volution blocks, loss functions, learning rate schedulers, quotient regression The remainder of this section describes each module in detail. 4.1 Data Pipeline Multi-format I/O. MIPCandy reads and writes medical images via SimpleITK [Y aniv et al., 2018], supporting NIfTI, MetaImage, and raster formats. The load_image() function performs automatic format detection, optional isotropic resampling, and direct device placement. For intermediate storage, fast_save() and fast_load() use the safetensors format [Hugging Face, 2023], pro viding zero-copy deserialization. Dataset hierarch y . All datasets inherit from a generic base that extends torch.utils.data.Dataset and provides device management, k -fold splitting, and a path-export interface. Ke y implementations include NNUNetDataset (nnU-Net raw format with multimodal support), BinarizedDataset (multiclass-to-binary wrapper), and composition utilities for merging datasets. Every dataset e xposes a fold() method for k -fold cross-v alidation with configurable splitting strategies. 4 MIP Candy T E C H N I C A L R E P O RT Dataset inspection. The inspect() function scans a supervised dataset and records per-case foreground bounding boxes, class distrib utions, and intensity statistics. From these annotations the framew ork computes a statistical for e ground shape and deri ves a region-of-interest (R OI) shape for patch-based training. RandomROIDataset samples random patches with configurable foreground o versampling (default: 33% of patches contain foreground). 4.2 LayerT Configuration System Neural network architectures are typically parameterized by the choice of con volution, normalization, and acti vation layers. The standard approaches to making these choices configurable are either to accept many constructor arguments or to require subclassing for each combination. Both scale poorly: a network that supports 2D and 3D con volutions, batch and group normalization, and multiple acti vations would need 2 × 2 × k subclasses under an inheritance-based approach. LayerT solves this by storing a module type together with its constructor k eyword ar guments as a lightweight descriptor . The module is instantiated only when assemble() is called, at which point positional and keyw ord arguments are merged with the stored def aults: from mipcandy.layer import LayerT from torch import nn # Define layer configurations conv = LayerT(nn.Conv2d) norm = LayerT(nn.BatchNorm2d, num_features= "in_ch" ) act = LayerT(nn.ReLU, inplace=True) # Instantiate at build time conv_module = conv.assemble(64, 128, 3, padding=1) # nn.Conv2d(64, 128, 3, padding=1) norm_module = norm.assemble(in_ch=128) # nn.BatchNorm2d(128) act_module = act.assemble() # nn.ReLU(inplace=True) The string "in_ch" acts as a deferr ed parameter : it is resolved to the inte ger value passed to assemble() , allowing a single descriptor to adapt to different channel counts. MIPCandy uses LayerT perv asively; for e xample, ConvBlock2d accepts LayerT arguments for con volution, normalization, and activ ation, with pre-configured defaults that can be ov erridden at construction time: from mipcandy.common import ConvBlock2d from mipcandy.layer import LayerT from torch import nn # Default: Conv2d + BatchNorm2d + ReLU block = ConvBlock2d(64, 128, 3, padding=1) # Custom: Conv2d + GroupNorm + GELU block = ConvBlock2d( 64, 128, 3, padding=1, norm=LayerT(nn.GroupNorm, num_groups=8, num_channels= "in_ch" ), act=LayerT(nn.GELU), ) 4.3 T raining Framework T rainer and T rainerT oolbox. The Trainer base class manages the training lifecycle. Training state is encapsulated in a TrainerToolbox dataclass that bundles the model, optimizer , scheduler, criterion, and an optional EMA [Polyak and Juditsky, 1992] model. The toolbox is constructed from b uilder methods ( build_network , build_optimizer , etc.) that subclasses ov erride to customize each component. Each training run produces a timestamped experiment folder containing checkpoints, per -epoch metrics (CSV), progress plots, log files, and worst-case prediction pre views (see Section 6). Before the first epoch, a sanity check v alidates the output shape and reports MA Cs and parameter count. T raining state is serialized ev ery epoch, enabling seamless recovery after interruptions. SegmentationT rainer preset. SegmentationTrainer extends Trainer with pre-configured defaults: a combined Dice–cross-entropy loss [Sudre et al., 2017] that selects the binary or multiclass variant automatically , SGD with 5 MIP Candy T E C H N I C A L R E P O RT momentum 0.99 and Nesterov acceleration, a polynomial learning rate scheduler, and gradient clipping. When the deep_supervision flag is set, the criterion is wrapped in a DeepSupervisionWrapper [Lee et al., 2015] with auto-computed weights w i = 2 − i . EMA via PyT orch’ s AveragedModel can be enabled with a single flag. V alidation score. MIPCandy defines the v alidation score as the negated combined loss: s = −L val . This con vention maps e very loss function to a unified “higher is better” scale, so that best-checkpoint selection, early stopping, and score prediction all use a single comparison direction ( s new > s best ) regardless of the underlying criterion. The framew ork then fits a quotient regression model—a rational function P ( x ) /Q ( x ) —to the v alidation score trajectory , estimating the maximum achiev able score and the epoch at which it will be reached (ETC). Frontend integrations. Experiment tracking uses a pluggable Frontend protocol; shipped implementations cov er W eights & Biases [Biewald, 2020], Notion, and MLflo w [Zaharia et al., 2018], with a factory for combining multiple frontends. The visual aspects of training transparency—console output, metric plots, prediction pre views, and frontend screenshots— are presented in Section 6. 4.4 Infer ence and Evaluation The Predictor class mirrors the trainer’ s WithNetwork interface: the user implements build_network() and the framew ork handles lazy checkpoint loading, de vice placement, and padding. A unified parse_predictant() function accepts file paths, directories, tensors, or datasets, normalizing them into a common format. Predictors support single-image, batch, and file-lev el output ( .png for 2D, .mha for 3D). The Evaluator class accepts arbitrary metric functions and produces an EvalResult container with per-case and aggregate scores, supporting e valuation from datasets, raw tensors, or end-to-end predict-and-e valuate workflo ws. MIP- Candy provides Dice-family metrics— binary_dice , dice_similarity_coefficient , and soft_dice —cov ering boolean, one-hot, and soft-probability formats. The same functions serve dual roles as both loss components and ev aluation metrics. 5 Bundle Ecosystem While the core framew ork provides the infrastructure for training, inference, and ev aluation, specific network archi- tectures and their associated configurations are distrib uted as bundles —self-contained packages that plug into the framew ork without modifying it. Bundle structure. Each bundle follows a consistent three-file pattern: • Model : an nn.Module subclass implementing the architecture, plus builder functions ( make_unet2d , make_unet3d , etc.) that construct common configurations. • T rainer : a class extending SegmentationTrainer that overrides build_network() (and optionally build_padding_module() , build_optimizer() , or backward() ). • Pr edictor : a class extending Predictor that overrides build_network() . The only mandatory o verride is build_network() , which receives the shape of a single input tensor and returns an nn.Module . All other training infrastructure—loss, optimizer, scheduler, checkpointing, metric tracking, deep supervision—is inherited from the preset. Integration. Bundles depend on the core frame work through its public API and use LayerT , presets, and data pipeline classes directly . No monkey-patching or re gistration is required. Bundle-specific behavior (e.g., custom normalization selection based on batch size, architecture-specific deep supervision) is expressed through standard method o verrides. Extensibility . The b undle mechanism is not limited to model architectures. Augmentation pipelines, loss functions, and task-specific workflo ws can all be packaged as bundles. At the time of writing, MIPCandy ships with b undles for U-Net [Ronneberger et al., 2015], UNet++ [Zhou et al., 2018], V -Net [Milletari et al., 2016], CMUNeXt [T ang et al., 2024], MedNeXt [Roy et al., 2023], and UNETR [Hatamizadeh et al., 2022], co vering both 2D and 3D segme ntation tasks. 6 MIP Candy T E C H N I C A L R E P O RT (a) Combined loss and validation score. (b) V alidation score trajectory . Figure 1: T raining progress plots automatically generated by MIPCandy during a U-Net training run on the PH2 dermoscopy dataset. The validation score is the negated combined loss (Section 4.3); higher values indicate better performance. 6 T raining T ransparency A recurring frustration in medical image segmentation research is the opacity of the training process. Many framew orks report only a final score after training completes, leaving the researcher with little insight into how the model e volv ed, which cases are problematic, or whether training should be stopped early . MIPCandy treats training visibility as a first-class design goal: e very training run automatically produces a rich set of artifacts that allo w the researcher to monitor , diagnose, and communicate results without additional code. Console output and metric r eporting. During training, MIPCandy prints a structured summary after each epoch via the Rich library [McGugan, 2019], including the current epoch, all tracked losses, validation scores, learning rate, epoch duration, and—when av ailable—the estimated time of completion (ETC). After each validation pass, a per -case metric table is displayed, highlighting the worst-performing case so that the researcher can immediately identify failure modes. Appendix A (Figure 5) sho ws a representativ e console screenshot when resuming a pre viously interrupted training run, illustrating both the recov ery mechanism and the per-epoch metric reporting. T raining progr ess visualization. At the end of each epoch, MIPCandy updates a set of metric curve plots sa ved to the experiment folder . These include combined loss and v alidation score on a single progress plot, as well as individual plots for each loss component (Dice, cross-entropy), per -class Dice scores, learning rate schedule, and epoch duration. Researchers can monitor these plots in real time via any file vie wer or integrate them into slide decks and lab notebooks. Figure 1 shows the progress plot and v alidation score curve from a U-Net trained on PH2 for 90 epochs. Prediction pr eviews and worst-case tracking. After each v alidation epoch, the framework identifies the worst- performing validation case (by v alidation score) and sa ves a set of pre view images: the raw input, the ground-truth label, the model’ s prediction, and two o verlay composites—the e xpected overlay (ground truth superimposed on the input) and the actual ov erlay (prediction superimposed on the input). By always displaying the worst case rather than a random or cherry-picked example, this mechanism ensures that the researcher’ s attention is directed to the most informativ e failure mode. Figure 2 sho ws these previe ws from a 2D skin lesion segmentation experiment. For 3D volumes, visualize3d() renders the label and prediction as interacti ve PyV ista [Sulli van and Kaszynski, 2019] meshes with automatic downsampling, as sho wn in Figure 3. V alidation score pr ediction. After a configurable warm-up period (def ault: 20 epochs), MIPCandy fits a quotient regression model to the validation score trajectory and extrapolates the maximum achiev able score and the epoch at which it will be reached. From these estimates the frame work computes an ETC (Estimated T ime of Completion) that is displayed after each validation epoch. This allows researchers to make informed decisions about early stopping, hyperparameter adjustment, or resource allocation without waiting for the full training run to complete. A full console screenshot illustrating both the recovery mechanism and the per-epoch output is provided in Appendix A. 7 MIP Candy T E C H N I C A L R E P O RT (a) Input image. (b) Expected (GT ov erlay). (c) Actual (prediction ov erlay). Figure 2: W orst-case prediction previe ws automatically saved during training. The framework identifies the v alidation case with the lowest score and generates o verlays comparing the ground truth (b) and model prediction (c) against the input image (a). This example is from a U-Net trained on PH2. (a) BraTS ground-truth label (4 classes). (b) P ANTHER [Betancourt T arifa et al., 2025] predicted seg- mentation. Figure 3: 3D volume pre views rendered via PyV ista. MIPCandy automatically generates 3D visualizations of labels and predictions for volumetric se gmentation tasks. Frontend integrations. For team-le vel experiment management, MIPCandy integrates with external tracking services via a lightweight Frontend protocol. Shipped frontends include W eights & Biases [Biew ald, 2020], Notion, and MLflow [Zaharia et al., 2018], and the create_hybrid_frontend() factory allo ws simultaneous logging to multiple services. Figure 4 shows a Notion database populated by MIPCandy , providing a persistent, shareable experiment ledger . T raining state reco very . Long-running 3D training jobs are frequently interrupted by hardware failures, preemption, or resource limits. MIPCandy serializes the full training state—optimizer , scheduler , criterion state dictionaries, and a state orb recording epoch, best score, and all training arguments—at every epoch. Training can be resumed via recover_from() followed by continue_training() , restoring the exact state and continuing from the interrupted epoch (Figure 5). 7 Case Studies This section demonstrates MIPCandy w orkflows on representati ve segmentation tasks, illustrating both the minimal code required and the artifacts produced by the frame work. 8 MIP Candy T E C H N I C A L R E P O RT Figure 4: Notion frontend integration. MIPCandy automatically logs experiment metadata, progress, and scores to a Notion database. 7.1 2D Skin Lesion Segmentation The follo wing script performs binary segmentation on the PH2 dermoscopy dataset [Mendonça et al., 2013] using a U-Net bundle. The complete pipeline—data loading, k -fold splitting, trainer configuration, and training—requires 8 lines of code: import torch from torch.utils.data import DataLoader from mipcandy.data import NNUNetDataset from mipcandy_bundles.unet import UNetTrainer device = "cuda" if torch.cuda.is_available() else "cpu" train, val = NNUNetDataset(folder= "Dataset501_PH2" , split= "Tr" ).fold(fold=0) trainer = UNetTrainer( "experiments" , DataLoader(train, batch_size=2, shuffle=True), DataLoader(val, batch_size=1), device=device, ) trainer.num_classes = 1 trainer.train(100) W ithout a bundle, the same workflo w requires implementing a single method on SegmentationTrainer : from typing import override from torch import nn from mipcandy.presets import SegmentationTrainer class MyTrainer(SegmentationTrainer): @override def build_network(self, example_shape: tuple [ int , ...]) -> nn.Module: from mipcandy_bundles.unet import make_unet2d return make_unet2d(example_shape[0], self.num_classes) Upon completion, the experiment folder contains model checkpoints, per-epoch metrics (CSV), all training curve plots shown in Section 6, and w orst-case previe w images. Ev aluation on a held-out test set is equally concise: from mipcandy.evaluation import Evaluator from mipcandy.metrics import binary_dice from mipcandy_bundles.unet import UNetPredictor predictor = UNetPredictor( "experiments/UNetTrainer/20240901-1234" , example_shape=(3, 384, 384), device= "cuda" ) 9 MIP Candy T E C H N I C A L R E P O RT evaluator = Evaluator(binary_dice) result = evaluator.predict_and_evaluate( "test_images/" , "test_labels/" , predictor) print (result.mean_metrics) 7.2 3D V olumetric Segmentation For 3D tasks, MIPCandy’ s dataset inspection system automates the determination of patch shapes and foreground sampling rates. The follo wing script trains a multiclass 3D segmentation model on the BraTS 2021 brain tumor dataset [Baid et al., 2021] with deep supervision and R OI-based patch sampling: import torch from torch.utils.data import DataLoader from mipcandy.data import NNUNetDataset from mipcandy.data.inspection import inspect, RandomROIDataset from mipcandy_bundles.unet import UNetTrainer device = "cuda" if torch.cuda.is_available() else "cpu" dataset = NNUNetDataset(folder= "Dataset320_BRaTS" , split= "Tr" ) train_full, val_full = dataset.fold(fold=0) # Inspect dataset to compute ROI shape and class distribution annotations = inspect(train_full) train = RandomROIDataset(annotations, batch_size=2) val = RandomROIDataset( inspect(val_full), batch_size=1, oversample_rate=0 ) trainer = UNetTrainer( "experiments" , DataLoader(train, batch_size=2, shuffle=True), DataLoader(val, batch_size=1), device=device, ) trainer.num_dims = 3 trainer.num_classes = 4 trainer.deep_supervision = True trainer.train(200, early_stop_tolerance=20) The inspect() call scans the training set to compute per-case foreground bounding boxes, class distributions, and intensity statistics. RandomROIDataset uses these annotations to sample patches of a statistically determined shape, with 33% of patches forced to contain foreground voxels. Deep supervision is enabled by setting a single flag; the trainer automatically wraps the loss function, computes scale-dependent weights, and generates multi-resolution tar gets. The frame work produces 3D previe w renderings (Figure 3), per-class Dice curv es, and all other transparency artifacts described in Section 6. 8 Conclusion W e have presented MIPCandy , a modular , PyT orch-native framew ork for medical image segmentation that prioritizes four qualities: fle xibility in swapping components, transpar ency during training, usability through minimal-code setup, and extensibility via a b undle ecosystem. The framework provides a complete pipeline from data loading through training and inference to ev aluation. A functional segmentation workflo w can be obtained by implementing a single method— build_network —while all infrastructure (loss selection, optimizer configuration, checkpointing, metric tracking, deep supervision, EMA, validation score prediction, and experiment tracking) is handled by the frame work with researched def aults. At the same time, e very component is independently usable and replaceable, allowing incremental adoption into e xisting PyT orch codebases. The ke y technical contributions— LayerT for compositional module configuration, built-in training transparency with worst-case tracking and v alidation score prediction, dataset inspection with ROI-based patch sampling, and the b undle ecosystem—address practical pain points in medical image se gmentation research. Unlike fully aut omated pipelines that treat training as a black box, MIPCandy ensures that the researcher retains full visibility into and control over e very stage of the process. 10 MIP Candy T E C H N I C A L R E P O RT MIPCandy is open-source under the Apache-2.0 license and is acti vely dev eloped. Future work includes expanding the metric library with surface-distance metrics (Hausdorf f distance, av erage symmetric surface distance), adding sliding window inference for large volumes, supporting semi-supervised and self-supervised learning paradigms, and e xtending the bundle ecosystem with task-specific b undles for detection and registration. References Adam Paszk e, Sam Gross, Francisco Massa, Adam Lerer , James Bradbury , Gregory Chanan, T revor Killeen, Zeming Lin, Natalia Gimelshein, Luca Antiga, et al. Pytorch: An imperativ e style, high-performance deep learning library . Advances in Neural Information Pr ocessing Systems , 32, 2019. Martín Abadi, Paul Barham, Jianmin Chen, Zhifeng Chen, Andy Da vis, Jeffre y Dean, Matthieu Devin, Sanjay Ghemawat, Geof frey Irving, Michael Isard, et al. T ensorflow: A system for large-scale machine learning. OSDI , 16: 265–283, 2016. M Jorge Cardoso, W enqi Li, Richard Bro wn, Nic Ma, Eric Kerfoot, Y iheng W ang, Benjamin Murrey , Andriy Myronenko, Can Zhao, Dong Y ang, et al. MONAI: An open-source framew ork for deep learning in healthcare. arXiv pr eprint arXiv:2211.02701 , 2022. Fernando Pérez-García, Rachel Sparks, and Sébastien Ourselin. T orchIO: A Python library for efficient loading, preprocessing, augmentation and patch-based sampling of medical images in deep learning. Computer Methods and Pr ograms in Biomedicine , 208:106236, 2021. Fabian Isensee, P aul F Jaeger , Simon AA K ohl, Jens Petersen, and Klaus H Maier-Hein. nnU-Net: a self-configuring method for deep learning-based biomedical image segmentation. Nature Methods , 18(2):203–211, 2021. W illiam F alcon and The PyT orch Lightning team. PyT orch Lightning, 2019. URL https://github.com/ Lightning- AI/pytorch- lightning . Fabian Isensee, T assilo W ald, Constantin Ulrich, Michael Baumgartner , Saikat Roy , Klaus H Maier-Hein, and Paul F Jaeger . nnU-Net revisited: A call for rigorous validation in 3D medical image segmentation. In Medical Image Computing and Computer-Assisted Intervention – MICCAI 2024 , v olume 15009 of Lecture Notes in Computer Science , pages 488–498. Springer , 2024. Adrian Celaya, Evan Lim, Rachel Glenn, Brayden Mi, Alex Balsells, Dawid Schellingerhout, Tuck er Netherton, Caroline Chung, Beatrice Riviere, and Da vid Fuentes. MIST: A simple and scalable end-to-end 3D medical imaging segmentation frame work. arXiv pr eprint arXiv:2407.21343 , 2024. Eli Gibson, W enqi Li, Carole Sudre, Lucas Fidon, Dzhoshkun I Shakir , Guotai W ang, Zach Eaton-Rosen, Robert Gray , T om Doel, Y ipeng Hu, et al. NiftyNet: a deep-learning platform for medical imaging. Computer Methods and Pr ograms in Biomedicine , 158:113–122, 2018. Nick Pa wlowski, Sofia Ira Ktena, Matthew CH Lee, Bernhard Kainz, Daniel Rueckert, Ben Glocker , and Martin Rajchl. DL TK: State of the art reference implementations for deep learning on medical images. arXiv pr eprint arXiv:1711.06853 , 2017. Andre w Beers, James Bro wn, Ken Chang, Katharina Hoebel, Elizabeth Gerstner , Bruce Rosen, and Jayashree Kalpathy- Cramer . DeepNeuro: an open-source deep learning toolbox for neuroimaging. Neur oinformatics , 19:127–140, 2021. Ziv Y aniv , Bradley C Lo wekamp, Hans J Johnson, and Richard Beare. SimpleITK image-analysis notebooks: a collaborativ e en vironment for education and reproducible research. Journal of Digital Imaging , 31:290–303, 2018. Hugging Face. Safetensors: A simple, safe and fast file format for storing tensors, 2023. URL https://github.com/ huggingface/safetensors . Boris T Polyak and Anatoli B Juditsky . Acceleration of stochastic approximation by averaging. SIAM Journal on Contr ol and Optimization , 30(4):838–855, 1992. Carole H Sudre, W enqi Li, T om V ercauteren, Sebastien Ourselin, and M Jorge Cardoso. Generalised Dice overlap as a deep learning loss function for highly unbalanced segmentations. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support , pages 240–248. Springer , 2017. Chen-Y u Lee, Saining Xie, Patrick Gallagher , Zhengyou Zhang, and Zhuo wen T u. Deeply-supervised nets. In Artificial Intelligence and Statistics , pages 562–570, 2015. Lukas Biew ald. Experiment tracking with W eights and Biases, 2020. URL https://www.wandb.com/ . Matei Zaharia, Andre w Chen, Aaron Davidson, Ali Ghodsi, Sue Ann Hong, Andy K onwinski, Siddharth Murching, T omas Nykodym, Paul Ogilvie, Mani Parkhe, et al. Accelerating the machine learning lifecycle with MLflo w. IEEE Data Engineering Bulletin , 41(4):39–45, 2018. 11 MIP Candy T E C H N I C A L R E P O RT Olaf Ronneberger , Philipp Fischer , and Thomas Brox. U-Net: Con volutional networks for biomedical image segmenta- tion. In International Confer ence on Medical Imag e Computing and Computer-Assisted Intervention , pages 234–241. Springer , 2015. Zongwei Zhou, Md Mahfuzur Rahman Siddiquee, Nima T ajbakhsh, and Jianming Liang. UNet++: A nested U-Net architecture for medical image segmentation. In Deep Learning in Medical Imag e Analysis and Multimodal Learning for Clinical Decision Support , volume 11045 of Lectur e Notes in Computer Science , pages 3–11. Springer , 2018. doi:10.1007/978-3-030-00889-5_1. Fausto Milletari, Nassir Nav ab, and Seyed-Ahmad Ahmadi. V -Net: Fully con volutional neural networks for volumetric medical image segmentation. In 2016 F ourth International Conference on 3D V ision (3D V) , pages 565–571. IEEE, 2016. doi:10.1109/3D V .2016.79. Fenghe T ang, Jianrui Ding, Quan Quan, Lingtao W ang, Chunping Ning, and S. Ke vin Zhou. CMUNeXt: An efficient medical image segmentation network based on lar ge kernel and skip fusion. In 2024 IEEE International Symposium on Biomedical Imaging (ISBI) , pages 1–5. IEEE, 2024. doi:10.1109/ISBI56570.2024.10635609. Saikat Roy , Gregor Köhler , Constantin Ulrich, Michael Baumgartner , Jens Petersen, Fabian Isensee, Paul F . Jäger, and Klaus H. Maier -Hein. MedNeXt: T ransformer-dri ven scaling of con vnets for medical image segmentation. In Medical Image Computing and Computer Assisted Intervention – MICCAI 2023 , v olume 14223 of Lecture Notes in Computer Science , pages 405–415. Springer , 2023. doi:10.1007/978-3-031-43901-8_39. Ali Hatamizadeh, Y ucheng T ang, V ishwesh Nath, Dong Y ang, Andriy Myronenko, Bennett Landman, Holger R. Roth, and Daguang Xu. UNETR: T ransformers for 3D medical image segmentation. In 2022 IEEE/CVF W inter Confer ence on Applications of Computer V ision (W ACV) , pages 1748–1758. IEEE, 2022. doi:10.1109/W A CV51458.2022.00181. W ill McGugan. Rich: A Python library for rich text and beautiful formatting in the terminal. https://github.com/ Textualize/rich , 2019. C. Bane Sulliv an and Alexander A. Kaszynski. PyV ista: 3D plotting and mesh analysis through a streamlined interface for the V isualization T oolkit (VTK). Journal of Open Sour ce Software , 4(37):1450, 2019. doi:10.21105/joss.01450. Amparo Soeli Betancourt T arifa, Faisal Mahmood, Uf fe Bernchou, and Peter Jan K oopmans. P ANTHER challenge: Public training dataset, 2025. T eresa Mendonça, Pedro M Ferreira, Jor ge S Marques, André RS Marcal, and Jorge Rozeira. PH2 – a dermoscopic image database for research and benchmarking. In International Confer ence of the IEEE Engineering in Medicine and Biology Society , pages 5437–5440, 2013. Ujjwal Baid, Satyam Ghodasara, Suyash Mohan, Michel Bilello, Evan Calabrese, Errol Colak, Ke yvan Farahani, Jayashree Kalpathy-Cramer, Felipe C Kitamura, Sarthak Pati, et al. The RSN A-ASNR-MICCAI BraTS 2021 benchmark on brain tumor segmentation and radiogenomic classification. arXiv preprint , 2021. A Console Output Figure 5 sho ws the full console output when resuming a previously interrupted training run via recover_from() and continue_training() . The framework restores the optimizer , scheduler , and training tracker from a pre vious checkpoint and resumes from the interrupted epoch. Each epoch produces a structured metric summary , a per -case validation table with per -class label and prediction statistics, and aggregated validation metrics. The worst-performing validation case is highlighted, and the estimated time of completion (ETC) is displayed based on quotient regression of the validation trajectory . 12 MIP Candy T E C H N I C A L R E P O RT MIPCandy T raining Recovery [ 2026 0 2 23 14:30:00 ] 2 0260223 14 8 [ 2026 0 2 23 14:30:00 100 000 ] 42 [ 2026 0 2 23 14:30:00 600 000 ] ( 3 3 84 384 ) [ 2026 0 2 23 14:30:00 700 000 ] [ 2026 0 2 23 14:30:02 700 000 ] [ 2026 0 2 23 14:30:02 800 000 ] 54.3 34. 5 [ 2026 0 2 23 14:30:02 900 000 ] ( 1 3 84 384 ) [ 2026 0 2 23 14:30:03 ] ⠋ [ 2026 0 2 23 14:30:04 500 000 ] 0.2 987 [ 0.1923 0. 4123 ] ( -0.0258 ) [ 2026 0 2 23 14:30:04 520 000 ] 0.8612 [ 0.8023 0.906 7 ] ( 0.0189 ) [ 2026 0 2 23 14:30:04 540 000 ] 0.1378 [ 0.0912 0.1923 ] ( -0.0156 ) Metric MeanV alue Span Diff [ 2026 0 2 23 14:30:04 560 000 ] 9 31.8 ⠋ [ 2026 0 2 23 14:30:36 360 000 ] -0.2987 ( 0.0258 ) [ 2026 0 2 23 14:30:36 460 000 ] -0 .2067 63 [ 2026 0 2 23 14:30:36 560 000 ] 4156.8 02 23 15 :39:53 Case ID softdice bceloss d ice %label class0 %lab elclass1 %o utputclass0 % outputclass 1 [ 2026 0 2 23 14:30:36 660 000 ] 5 [ 2026 0 2 23 14:30:36 760 000 ] 0.89 65 [ 0.7956 0.9 423 ] ( 0.0097 ) [ 2026 0 2 23 14:30:36 780 000 ] 0.108 7 [ 0.0678 0.20 23 ] ( -0.0068 ) [ 2026 0 2 23 14:30:36 800 000 ] 0.8860 [ 0 .7789 0.9334 ] ( 0.0098 ) [ 2026 0 2 23 14:30:36 820 000 ] 0 0.8475 [ 0.762 3 0.9234 ] ( 0.0 000 ) [ 2026 0 2 23 14:30:36 840 000 ] 1 0.1525 [ 0.076 6 0.2377 ] ( 0.0 000 ) [ 2026 0 2 23 14:30:36 860 000 ] 0 0.8453 [ 0.76 89 0.9101 ] ( -0. 0056 ) [ 2026 0 2 23 14:30:36 880 000 ] 1 0.1547 [ 0.08 99 0.2311 ] ( 0. 0056 ) Metric Mea nValue Span Dif f [ 2026 0 2 23 14:30:36 900 000 ] ( -0.3245 -0.2987 ) [ 2026 0 2 23 14:30:37 ] 9 44.7 [ 2026 0 2 23 14:30:37 100 000 ] -0.2 987 Figure 5: Console interface during training state reco very . The output sho ws a single epoch after recov ery: sanity check, training metrics with a structured summary table, per-case v alidation metrics with per-class statistics, score prediction with ETC, and checkpoint management. 13

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment