Training-Free Intelligibility-Guided Observation Addition for Noisy ASR

Automatic speech recognition (ASR) degrades severely in noisy environments. Although speech enhancement (SE) front-ends effectively suppress background noise, they often introduce artifacts that harm recognition. Observation addition (OA) addressed t…

Authors: Haoyang Li, Changsong Liu, Wei Rao

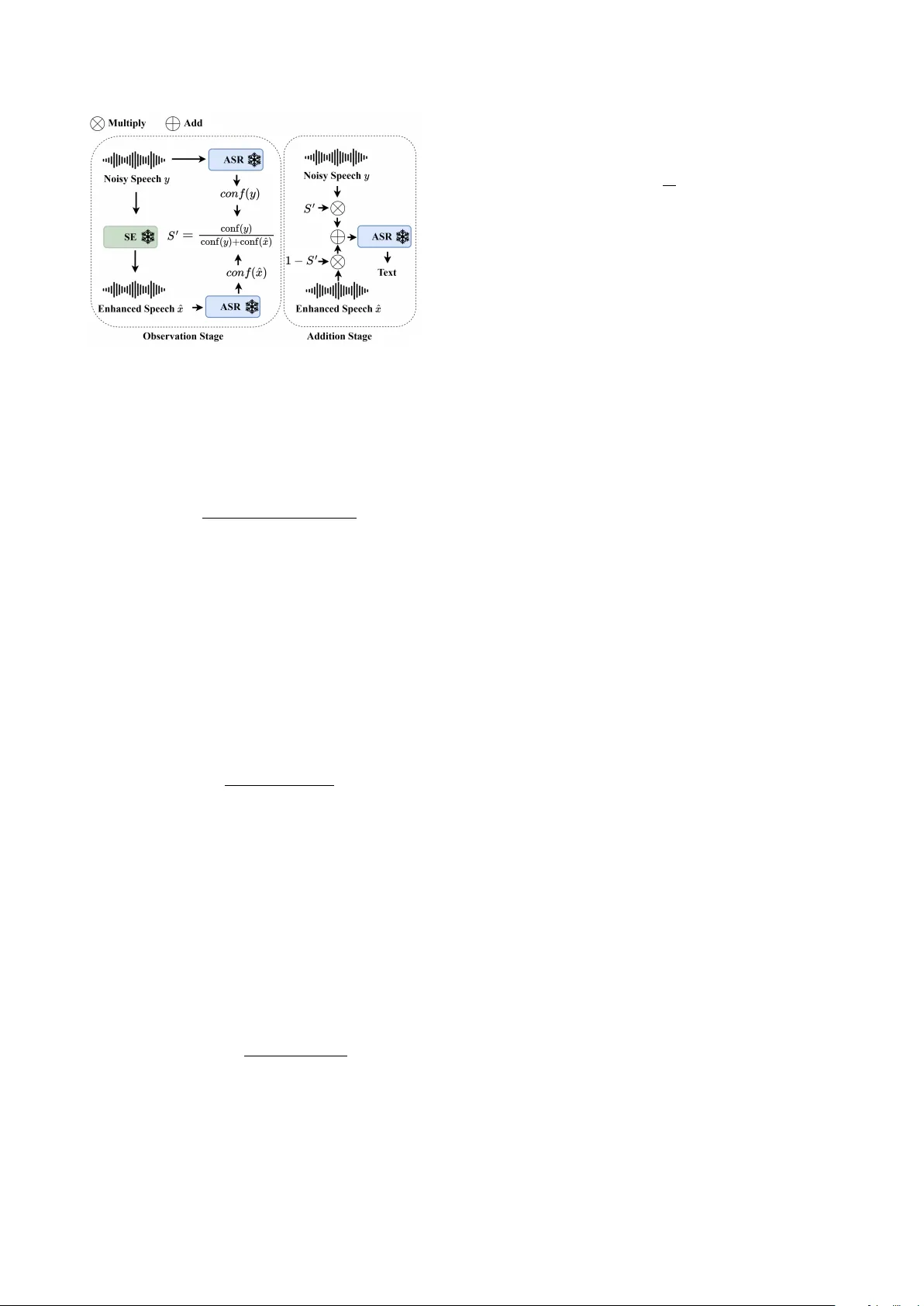

T raining-Fr ee Intelligibility-Guided Observ ation Addition f or Noisy ASR Haoyang Li 1 , Changsong Liu 1 , W ei Rao 1 , Hao Shi 2 , Sakriani Sakti 3 , ∗ , Eng Siong Chng 1 , ∗ 1 Nanyang T echnological Uni versity , Singapore 2 Independent Researcher 3 Nara Institute of Science and T echnology , Japan li0078ng@e.ntu.edu.sg Abstract Automatic speech recognition (ASR) degrades se verely in noisy en vironments. Although speech enhancement (SE) front-ends effecti vely suppress background noise, they often introduce ar- tifacts that harm recognition. Observation addition (O A) ad- dressed this issue by fusing noisy and SE enhanced speech, im- proving recognition without modifying the parameters of the SE or ASR models. This paper proposes an intelligibility-guided O A method, where fusion weights are derived from intelligi- bility estimates obtained directly from the backend ASR. Un- like prior O A methods based on trained neural predictors, the proposed method is training-free, reducing complexity and en- hances generalization. Extensiv e experiments across diverse SE-ASR combinations and datasets demonstrate strong robust- ness and improvements over existing O A baselines. Additional analyses of intelligibility-guided switching-based alternatives and frame versus utterance-le vel OA further v alidate the pro- posed design. Index T erms : noise-robust automatic speech recognition, speech enhancement post-processing, observation addition 1. Introduction Automatic speech recognition (ASR) aims to transform spo- ken audio signals into their corresponding textual transcriptions. The performance of ASR degrades significantly in the presence of background noise [1–3]. Speech enhancement (SE), which suppresses background noise to estimate cleaner speech [4–7], is widely adopted as a front-end preprocessing step for noise- robust ASR [8–10]. Despite effecti vely suppressing background noise, SE can introduce artifact errors that negati vely impact downstream ASR [11, 12]. This trade-off means SE may de- grade recognition performance, particularly for ASR models al- ready trained for noise robustness. Prior works [13–16] hav e explored joint training of SE and ASR systems to align the enhancement objecti ve with recogni- tion performance, as SE is typically optimized for signal-le vel metrics that do not correlate strongly with intelligibility [17]. Howe ver , joint training increases computational cost and is in- feasible when the SE and ASR cannot be integrated or are un- known. Moreover , it introduces a trade-off: optimizing for ASR may degrade perceptual speech quality [18] of the SE system. Early studies [11,18] have identified SE-induced artif acts as a primary cause of ASR performance degradation, and demon- strated that Observation Addition (O A) can effecti vely mitigate such artifacts and improve recognition accuracy . O A is a simple SE post-processing method that, giv en a noisy speech signal y and its enhanced version ˆ x = S E ( y ) , interpolates the two along * These authors contributed equally as senior authors. the time dimension using a weighting coefficient S ′ ∈ [0 , 1] (Eq. 1). In contrast to joint SE-ASR training approaches, OA requires no modification to the underlying SE or ASR models and enables explicit control over the trade-off between enhance- ment strength and recognition performance by adjusting S ′ . ¯ x = S ′ × y + (1 − S ′ ) × ˆ x (1) The effectiv eness of O A depends critically on the weight- ing coefficient S ′ , which should be adaptively determined to accommodate varying acoustic conditions. Previous works [19–21] use predicted normalized signal quality scores (e.g. DNSMOS [22]) of the noisy input y as S ′ . This reduces the weight of the enhanced signal ˆ x when y is relativ ely clean, and vice v ersa. Howev er, these approaches do not account for the sev erity of SE-induced artif acts in ˆ x , which may bias S ′ tow ard the enhanced signal even when substantial artifacts are present, or con versely underutilize ˆ x when enhancement is reliable. Re- cent works [21, 23, 24] use neural predictors to estimate S ′ , trained with labeled data deri ved from recognition metrics such as CER or WER. Howev er, these approaches require ground- truth transcriptions to compute CER/WER, which are often un- av ailable in real-world noisy scenarios. Furthermore, labeling data via ASR and training an additional neural-based predictor increases engineering complexity and may introduce general- ization issues across datasets, SE and ASR systems. In this work, we present a simple yet ef fecti ve O A frame- work that balances S ′ using speech intelligibility-based met- ric scores computed from y and ˆ x , which are directly obtained from backend ASR systems at inference time. By av oiding the training of a dedicated neural-based S ′ predictor , the pro- posed method significantly reduces system complexity and im- prov es practicality over prior approaches. Despite its simplicity , the proposed O A method outperforms existing O A approaches across extensi ve experiments spanning di verse SE models, ASR systems, and datasets. Comparati ve ev aluations against alterna- tiv e Switch-based and frame-lev el strategies further v alidate the effecti veness of the proposed design, establishing the proposed O A as a conv enient and broadly applicable SE post-processing method for improving ASR in noisy conditions. 2. Methodology 2.1. Intelligibility-Guided Observation Addition W e aim to design a robust OA coefficient S ′ to combine the noisy speech y and enhanced speech ˆ x , guided by two de- sign considerations. (1) S ′ should depend on both y and ˆ x to adaptiv ely balance their complementary information, and (2) S ′ should be driv en by speech intelligibility rather than signal-lev el quality , as the goal is to improv e ASR performance. Figure 1: The pr oposed Confidence-guided O A pipeline. CER and WER measured by an ASR system serve as direct indicators of speech intelligibility . In an idealized setting where the WERs of both the noisy speech y and the enhanced speech ˆ x are available, an intelligibility-based weighting factor can be constructed by normalizing their in verse error rates: S ′ = 1 / WER( y ) 1 / WER( y ) + 1 / WER( ˆ x ) . (2) This formulation assigns higher weight to the signal with lower expected recognition error while constraining S ′ ∈ [0 , 1] . For numerical stability , a small constant ϵ = 1 × 10 − 8 is added to the WERs to prev ent division by zero. In practical scenarios, ground-truth transcriptions are un- av ailable and WER cannot be directly computed. W e therefore adopt ASR confidence as a practical approximation of speech intelligibility , as it is estimated directly by the ASR system and reflects the model’ s internal uncertainty in recognition. Figure 1 illustrates the proposed confidence-based OA framew ork. Dur- ing inference, confidence scores for both the noisy speech y and the enhanced speech ˆ x are directly computed by the frozen backend ASR system. The O A coefficient S ′ is then defined as: S ′ = conf ( y ) conf ( y ) + conf ( ˆ x ) , (3) Like wise, ϵ is added to prev ent zero division. The final output is obtained via Eq. (1) and decoded by the backend ASR. The conf () computation v aries by ASR. W e consider three popular ASR systems in this study: Whisper [25], Parakeet [26, 27] and W a v2V ec2-CTC [28]. These ASRs are diverse, offering representative examples while keeping the framew ork general. Whisper outputs, by default, an average log-probability ¯ ℓ k for each decoded segment. W e le verage this decoding statis- tic to deriv e an utterance-level confidence. Specifically , we ex- ponentiate ¯ ℓ k to obtain the segment’ s geometric mean token probability . T o account for segments of different lengths, the utterance-lev el confidence is computed as a tok en-weighted a v- erage ov er all K segments: conf ( x ) = P K k =1 T k exp( ¯ ℓ k ) P K k =1 T k , (4) where T k is the number of tokens in segment k . For Parakeet and W a v2V ec2-CTC, token confidence C n is deriv ed from the posterior distribution using Tsallis entropy ( q = 0 . 33 ), followed by exponential normalization. The utterance-lev el confidence is defined as the geometric mean ov er all N token confidences: conf ( x ) = exp 1 N N X n =1 log C n ! . (5) In Parakeet, token confidence C n is computed directly at each decoding step. whereas in W av2V ec2-CTC, frame-level confidences are aggregated into tok en confidence via min pool- ing ov er greedy CTC spans. 2.2. Intelligibility-Guided Switching As a simpler alternative to O A, we consider a hard switching strategy guided by intelligibility score, in which only the signal with higher ASR confidence is chosen: ¯ x = ( y , conf ( y ) ≥ conf ( ˆ x ) , ˆ x, otherwise . (6) This discrete switching approach serves as a straightforw ard al- ternativ e to compare with OA ’ s weighted combination method. 2.3. Frame-level Observ ation Addition While prior w orks [20, 21, 23, 24], Sec. 2.1 and 2.2 mainly focus on utterance-lev el OA, where S ′ is a single scalar applied uni- formly across all frames, we also consider a more fine-grained frame-lev el O A formulation in which S ′ is defined as a vector of frame-wise O A coefficients. Specifically , frame-le vel confi- dence is obtained using the pretrained W av2V ec2-CTC model. Due to the fixed con volutional strides of W a v2V ec2, each en- coder frame corresponds to a constant number of input sam- ples. This ensures that frame indices of y and ˆ x are temporally aligned and hav e equal duration, which makes frame-wise in- terpolation well defined. Similar to Sec. 2.1, we compute per- frame confidence S ′ t by applying an exponentially normalized transformation to the Tsallis entropy of the posterior distribu- tion, yielding an O A coefficient via Eq. (3) at the frame lev el. 3. Experiments 3.1. Datasets W e ev aluate the proposed OA method under both in-domain and out-of-domain conditions using two datasets, with all au- dio sampled at 16 kHz. For in-domain experiments, SE models are trained on the training split of V oiceBank-DEMAND [29], and OA is ev aluated on the corresponding test set. V oiceBank- DEMAND is a widely adopted SE benchmark that provides paired clean speech and synthetically corrupted noisy signals. Although drawn from the same domain, the test set includes speakers, noise types and SNR lev els not seen during training, introducing a controlled mismatch. For out-of-domain evaluation, we apply SE models trained on V oiceBank-DEMAND to Channel 5 of the CHiME-4 test set [30]. CHiME-4 is a widely used benchmark for distant- talking speech recognition, comprising recordings captured by a multi-microphone tablet array in real-world noisy environ- ments. The selected test partition contains 1,320 real noisy ut- terances recorded across four everyday acoustic scenes: bus, cafe, pedestrian area, and street junction, along with 1,320 cor - responding synthetically corrupted noisy signals. This out-of- domain setting reflects realistic deployment scenarios, where O A is performed using SE models trained under conditions that differ substantially from those encountered at test time. T able 1: WER of differ ent utterance-level post-pr ocessing methods. ˆ x is obtained by GR-KAN-based MPSENet. Method V oicebank+Demand CHIME-4 Simu CHIME-4 Real Whisper Parakeet W av2V ec2 Whisper Parakeet W av2V ec2 Whisper Parakeet W av2V ec2 Noisy y 3.12 2.18 11.39 5.36 5.24 25.82 6.48 6.22 42.24 Enhanced ˆ x 2.34 1.52 8.09 8.00 7.91 20.70 15.75 14.72 30.91 SNR-O A no-clip [20] 2.40 1.79 8.01 – – – – – – SNR-O A clip [20] 2.51 1.69 9.12 – – – – – – DNSMOS-O A [21] 2.36 1.60 7.88 5.27 5.00 17.09 11.56 9.75 26.26 Classifier-O A 2 class [23] 2.31 1.30 7.71 7.12 6.76 18.59 12.88 11.87 27.73 Classifier-O A 3 class [23] 2.37 1.57 8.28 5.06 4.88 16.90 6.18 5.37 24.85 Conf-Switch (Eq. 6) 2.01 1.26 7.64 5.93 5.32 19.48 7.55 6.07 28.58 Conf-O A (Eq. 3) 2.24 1.35 7.61 4.97 4.78 16.73 5.86 5.55 24.03 WER-O A (Eq. 2) 1.55 1.03 6.56 4.48 4.19 15.50 5.36 4.85 23.43 T able 2: WER of differ ent utterance-level post-pr ocessing methods. ˆ x is obtained by Demucs. Method V oicebank+Demand CHIME-4 Simu CHIME-4 Real Whisper Parakeet W av2V ec2 Whisper Parakeet W av2V ec2 Whisper Parakeet W av2V ec2 Noisy y 3.12 2.18 11.39 5.36 5.24 25.82 6.48 6.22 42.24 Enhanced ˆ x 3.91 2.64 10.63 28.69 27.42 48.07 29.65 27.58 58.99 SNR-O A no-clip [20] 3.68 2.69 9.88 – – – – – – SNR-O A clip [20] 2.94 1.92 10.21 – – – – – – DNSMOS-O A [21] 3.12 2.43 9.93 6.82 6.66 25.20 17.75 16.33 45.97 Classifier-O A 2 class [23] 3.43 2.52 10.01 8.16 7.35 28.31 10.90 10.87 40.21 Classifier-O A 3 class [23] 2.76 2.10 9.77 5.70 5.48 23.08 6.86 6.61 36.01 Conf-Switch (Eq. 6) 3.02 2.22 9.75 5.76 5.58 25.76 7.23 6.26 41.65 Conf-O A (Eq. 3) 2.76 2.11 9.69 5.64 5.40 22.87 6.60 6.10 35.84 WER-O A (Eq. 2) 2.36 1.71 8.62 5.05 4.94 21.91 5.97 5.74 34.96 T able 3: WER of O A (Sec.2.1) and Switch (Sec.2.2) on CHIME4, ˆ x is obtained by GR-KAN-based MP-SENet. ASR is P arakeet. Method Confidence-Correct Miscalibrated Ambiguous O A W in Switch Win T ie O A W in Switch W in T ie O A W in Switch Win T ie # Samples 38 (4.42%) 67 (7.80%) 754 (87.78%) 130 (54.17%) 4 (1.67%) 106 (44.17%) 42 (2.73%) 21 (1.36%) 1478 (95.91%) Noisy y 21.19 12.16 6.66 9.58 22.02 13.40 19.12 7.25 3.24 Enhanced ˆ x 24.77 14.53 24.98 12.06 26.97 16.11 19.12 7.25 3.24 Conf-Switch (Eq. 6) 16.23 7.16 5.79 16.02 31.39 15.25 19.12 7.25 3.24 Conf-O A (Eq. 3) 5.97 16.52 5.79 5.25 40.50 15.25 8.15 16.20 3.24 3.2. Models 3.2.1. SE W e emplo y both time-domain and time-frequency (TF) domain SE systems, representing the two main categories in SE. For time-domain SE, we use the causal Demucs [4], a 1D CNN and LSTM-based model. For TF-domain SE, we use the GR- KAN–based MP-SENet from [31], a state-of-the-art non-causal SE. The tw o SE systems cov er different architectures, input do- mains, causalities, and performance lev els. 3.2.2. ASR W e ev aluate the proposed O A method using three ASR systems with diverse architectures and robustness levels. Whisper-lar ge [25] and Parakeet [26, 27] are employed as strong, noise-robust ASR models, representing lar ge-scale sequence-to-sequence and TDT -based transducer framew orks, respecti vely . For Whis- per , we use the Whisper-lar ge model, while for Parakeet we adopt the parakeet-tdt-0.6b-v2 model 1 . In addition, we include a wa v2vec2-lar ge ASR model [28] fine-tuned on the full Lib- riSpeech [32] labeled dataset 2 , which serv es as a comparati vely weaker and more noise-sensitiv e CTC-based baseline. This setup allows us to e v aluate OA under contrasting conditions where either noisy or enhanced speech may be preferred. 3.3. Implementation 3.3.1. SE training For Demucs, we use the causal version with depth 5, hidden dimension of 48 at depth 1. Kernel, stride and resample factor are set to 8, 4, 4, respectively . T raining uses batch 16, LR 3e-4, adam optimizer for 500 epochs. For GR-KAN MP-SENet, we use the same model configuration as in [31], and trained for 200 epochs at batch 4. Optimizer uses AdamW ( β 1 = 0.8 and β 2 = 0.99). LR is set to 5e-4 and decays by 0.99 every epoch. 1 https://huggingface.co/n vidia/parakeet-tdt-0.6b-v2 2 https://huggingface.co/facebook/wa v2vec2-large-960h 3.4. Baselines W e compare the proposed methods with O A solutions based on speech quality [20, 21] and intelligibility [23], which share the O A formulation in Eq. 1, differing only in how S ′ is computed. SNR-O A clip and SNR-OA no-clip [20]. S ′ is defined as the normalized SNR of the noisy speech y , based on the observa- tion that ASR performance on y typically improves at higher SNRs. Although the original method uses a neural SNR esti- mator to handle unknown SNRs in practice, we directly use the ground-truth SNR av ailable in V oiceBank-DEMAND to ev alu- ate the method’ s upper-bound performance without confound- ing estimation errors. Following the original w ork, S ′ is clipped to [0 . 6 , 1] (SNR-O A clip ). we also report results without clipping (SNR-O A no-clip ) for fair comparison with other O A methods that do not apply this constraint. DNSMOS-O A [21] . S ′ is defined as the average of the normalized B AK and SIG scores of y , where BAK and SIG are DNSMOS components for background noise and signal quality . As in the SNR-based method, we directly use DNSMOS scores without training a separate predictor . Classifier -OA 2class [23] and Classifier -O A 3class . In [23], a neural-based binary classifier is trained with labels defined as class = ( 0 , d ( x t , y t ) < d ( x t , ˆ x t ) , 1 , otherwise , (7) where d ( · , · ) denotes the edit distance, x t , y t , ˆ x t are the transcript of ground-truth, noisy speech and enhanced speech, respectiv ely . S ′ is defined as ˆ p 0 , the posterior probabili- ties of class 0. Ho wever , this binary formulation, denoted by Classifier -OA 2 class , assigns tie cases to class 1, intro- ducing bias. Therefore, we introduced a 3-class variant (Classifier-O A 3 class ), and S ′ is equiv alent to ˆ p 0 + ˆ p 2 × 0 . 5 : class = 0 , d ( x t , y t ) < d ( x t , ˆ x t ) , 1 , d ( x t , y t ) > d ( x t , ˆ x t ) , 2 , d ( x t , y t ) == d ( x t , ˆ x t ) , (8) The model architecture follows [23] and training is conducted on V oiceBank-DEMAND, with enhanced speech generated by GR-KAN MP-SENet (T able 1) and Demucs (T able 2), and train labels obtained using Whisper-lar ge. WER is used as the edit distance d ( · , · ) . 4. Results and discussion 4.1. Comparison with previous O A methods T ables 1 and 2 compare v arious O A methods. WER-O A (Eq. 2) consistently achie ves the lo west WER in all test cases, support- ing the design of intelligibility-guided OA in Section 2.1. The proposed Conf-O A (Eq. 3) achiev es the best overall practical performance, confirming ASR confidence as a reliable alterna- tiv e in the O A task. W e note three cases in T able 2 (CHiME- 4 Simu+Whisper , CHiME-4 Simu+P arakeet, and CHiME-4 Real+Whisper) where Conf-OA slightly exceeds the WER of the noisy speech. This occurs when the relative performance gap between noisy and enhanced speech is large, making the weaker signal insuf ficient to improve the stronger one. Nev- ertheless, Conf-OA still outperforms other baselines, demon- strating greater robustness. W e also observe that our 3-class classifier variant (Classifier-O A 3 class ) generally outperforms the original 2-class classifier (Classifier-O A 2 class ). Howe ver , it still requires training an explicit model, unlike the proposed training-free Conf-O A approach, which is much simpler by de- sign and yield more robust performance. 4.2. Analysis of O A and Switching T able 3 further analyzes the behavior of Conf-OA (Eq. 3) ver- sus Conf-Switch (Eq. 6). Evaluation is performed on CHiME- 4 Simu+Real, using GR-KAN MP-SENet for SE and Para- keet for ASR. T est data are grouped as: 1) Ambiguous : noisy and enhanced speech have equal WER; 2) Confidence-Correct : higher-WER speech has lo wer confidence; 3) Miscalibrated : higher-WER speech has higher confidence. Each group is fur- ther split by whether O A outperforms Switch or ties. The benefit of OA is most evident in the miscalibrated cases, where OA equals or outperforms Switch in nearly all in- stances. Notably , under the O A W in sub-group which accounts for more than half of all miscalibrated cases, OA generally im- prov es WER over both the noisy and enhanced speech, whereas Switch generally degrades recognition. This is because, under miscalibration, Switch alw ays selects the higher-WER speech, while OA can partially compensate for the weaker signal by lev eraging the stronger one. Another observation from the am- biguous cases is that OA rarely affects recognition when the noisy and enhanced speech hav e identical WER. 4.3. Does performance impr ove with frame-level O A? T able 4: WER of utterance- (Section 2.1) and frame-level O A (Section 2.3) on Chime4 Simu/Real partition. ASR is W av2V ec2. Method GR-KAN MP-SENet Demucs Simu Real Simu Real Utterance-lev el OA 16.73 24.03 22.87 35.84 Frame-lev el OA 16.87 25.30 25.19 37.76 T able 4 compares utterance-level (Section 2.1) and frame- lev el (Section 2.3) using Conf-OA (Eq. 3). W e observe perfor- mance degradation with frame-level O A. This is likely because frame-lev el fusion introduces inconsistent weighting across ad- jacent frames, disrupting the temporal continuity expected by the ASR. In contrast, utterance-lev el O A preserves global con- sistency , resulting in more stable recognition performance. 5. Conclusion This work proposes an intelligibility-guided SE post-processing framew ork for noise-robust ASR. By performing O A with fu- sion weights deriv ed from backend ASR confidence scores, the proposed method effecti vely balances noisy and enhanced speech, leading to recognition improv ements across multiple SE-ASR systems and datasets, and outperforming existing O A approaches. Importantly , the method operates in an inference- only manner, av oiding the additional training stage required by prior neural-based O A methods, reducing system complexity . Further analyses of switching-based and frame-lev el v ariants provide additional evidence for the effecti veness of the pro- posed confidence-based, utterance-lev el OA strategy . Ov erall, this work provides an effecti ve, easy-to-implement, and broadly applicable solution for improving ASR rob ustness in noisy con- ditions without modifying existing SE or ASR models. 6. References [1] T . V irtanen, R. Singh, and B. Raj, T echniques for noise rob ustness in automatic speech r ecognition . John W iley & Sons, 2012. [2] J. Li, L. Deng, Y . Gong, and R. Haeb-Umbach, “ An ov erview of noise-robust automatic speech recognition, ” IEEE/ACM T ransac- tions on Audio, Speech, and Language Pr ocessing , vol. 22, no. 4, pp. 745–777, 2014. [3] A. Rodrigues, R. Santos, J. Abreu, P . Bec ¸ a, P . Almeida, and S. Fer- nandes, “ Analyzing the performance of asr systems: The ef fects of noise, distance to the device, age and gender , ” in Pr oceedings of the XX International Confer ence on Human Computer Inter ac- tion , 2019, pp. 1–8. [4] A. Defossez, G. Synnaev e, and Y . Adi, “Real time speech enhancement in the wa veform domain, ” arXiv preprint arXiv:2006.12847 , 2020. [5] Y .-X. Lu, Y . Ai, and Z.-H. Ling, “Mp-senet: A speech enhance- ment model with parallel denoising of magnitude and phase spec- tra, ” arXiv preprint , 2023. [6] H. Li, J. Q. Y ip, T . Fan, and E. S. Chng, “Speech enhancement using continuous embeddings of neural audio codec, ” in ICASSP 2025-2025 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2025, pp. 1–5. [7] H. Li, N. Hou, Y . Hu, J. Y ao, S. M. Siniscalchi, and E. S. Chng, “ Aligning generative speech enhancement with human preferences via direct preference optimization, ” arXiv preprint arXiv:2507.09929 , 2025. [8] M. Delcroix, T . Y oshioka, A. Ogawa, Y . Kubo, M. Fujimoto, N. Ito, K. Kinoshita, M. Espi, S. Araki, T . Hori et al. , “Strate- gies for distant speech recognitionin reverberant en vironments, ” EURASIP Journal on Advances in Signal Pr ocessing , vol. 2015, no. 1, p. 60, 2015. [9] A. Nicolson and K. K. Paliw al, “Deep xi as a front-end for robust automatic speech recognition, ” in 2020 IEEE Asia-P acific Confer - ence on Computer Science and Data Engineering (CSDE) . IEEE, 2020, pp. 1–6. [10] Y . Y ang, A. Pandey , and D. W ang, “T owards decoupling frontend enhancement and backend recognition in monaural robust asr, ” Computer Speech & Languag e , vol. 95, p. 101821, 2026. [11] K. Iwamoto, T . Ochiai, M. Delcroix, R. Ikeshita, H. Sato, S. Araki, and S. Katagiri, “How bad are artifacts?: Analyzing the impact of speech enhancement errors on asr, ” arXiv pr eprint arXiv:2201.06685 , 2022. [12] Y . Hu, N. Hou, C. Chen, and E. S. Chng, “Interactiv e feature fu- sion for end-to-end noise-robust speech recognition, ” in ICASSP 2022-2022 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2022, pp. 6292–6296. [13] Z.-Q. W ang and D. W ang, “ A joint training framework for ro- bust automatic speech recognition, ” IEEE/A CM Tr ansactions on Audio, Speech, and Langua ge Pr ocessing , vol. 24, no. 4, pp. 796– 806, 2016. [14] T . Menne, R. Schl ¨ uter , and H. Ney , “Investigation into joint op- timization of single channel speech enhancement and acoustic modeling for robust asr , ” in ICASSP 2019-2019 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2019, pp. 6660–6664. [15] Y . K oizumi, S. Karita, A. Narayanan, S. Panchapagesan, and M. Bacchiani, “Snri target training for joint speech enhancement and recognition, ” arXiv preprint , 2021. [16] D. Ma, N. Hou, H. Xu, E. S. Chng et al. , “Multitask-based joint learning approach to robust asr for radio communication speech, ” in 2021 Asia-P acific Signal and Information Pr ocessing Associa- tion Annual Summit and Conference (APSIP A ASC) . IEEE, 2021, pp. 497–502. [17] S.-J. Chen, A. S. Subramanian, H. Xu, and S. W atanabe, “Build- ing state-of-the-art distant speech recognition using the chime-4 challenge with a setup of speech enhancement baseline, ” arXiv pr eprint arXiv:1803.10109 , 2018. [18] K. Iwamoto, T . Ochiai, M. Delcroix, R. Ikeshita, H. Sato, S. Araki, and S. Katagiri, “How does end-to-end speech recog- nition training impact speech enhancement artifacts?” in ICASSP 2024-2024 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2024, pp. 11 031– 11 035. [19] Y .-W . Chen, J. Hirschberg, and Y . Tsao, “Noise robust speech emotion recognition with signal-to-noise ratio adapting speech en- hancement, ” arXiv preprint , 2023. [20] K.-C. W ang, Y .-J. Li, W .-L. Chen, Y .-W . Chen, Y .-C. W ang, P .- C. Y eh, C. Zhang, and Y . Tsao, “Bridging the gap: Integrating pre-trained speech enhancement and recognition models for ro- bust speech recognition, ” in 2024 32nd Eur opean Signal Pr ocess- ing Confer ence (EUSIPCO) . IEEE, 2024, pp. 426–430. [21] Z. Cui, C. Cui, T . W ang, M. He, H. Shi, M. Ge, C. Gong, L. W ang, and J. Dang, “Reducing the gap between pretrained speech en- hancement and recognition models using a real speech-trained bridging module, ” in ICASSP 2025-2025 IEEE International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2025, pp. 1–5. [22] C. K. Reddy , V . Gopal, and R. Cutler, “Dnsmos: A non-intrusive perceptual objective speech quality metric to evaluate noise sup- pressors, ” in ICASSP 2021-2021 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2021, pp. 6493–6497. [23] H. Sato, T . Ochiai, M. Delcroix, K. Kinoshita, N. Kamo, and T . Moriya, “Learning to enhance or not: Neural network- based switching of enhanced and observed signals for overlap- ping speech recognition, ” in ICASSP 2022-2022 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2022, pp. 6287–6291. [24] S. Huang, Y . Du, J. Y ang, D. Zhang, X. Jia, J. Deng, J. Kang, and R. Zheng, “Overlap-adapti ve hybrid speaker diarization and asr-a ware observation addition for misp 2025 challenge, ” arXiv pr eprint arXiv:2505.22013 , 2025. [25] A. Radford, J. W . Kim, T . Xu, G. Brockman, C. McLeav ey , and I. Sutskev er, “Robust speech recognition via large-scale weak supervision, ” in International conference on machine learning . PMLR, 2023, pp. 28 492–28 518. [26] D. Rekesh, N. R. Koluguri, S. Kriman, S. Majumdar, V . Noroozi, H. Huang, O. Hrinchuk, K. Puvvada, A. Kumar , J. Balam et al. , “Fast conformer with linearly scalable attention for efficient speech recognition, ” in 2023 IEEE A utomatic Speech Recognition and Understanding W orkshop (ASRU) . IEEE, 2023, pp. 1–8. [27] H. Xu, F . Jia, S. Majumdar, H. Huang, S. W atanabe, and B. Gins- bur g, “Ef ficient sequence transduction by jointly predicting tokens and durations, ” in International Confer ence on Machine Learn- ing . PMLR, 2023, pp. 38 462–38 484. [28] A. Baevski, Y . Zhou, A. Mohamed, and M. Auli, “wav2v ec 2.0: A frame work for self-supervised learning of speech repre- sentations, ” Advances in neural information pr ocessing systems , vol. 33, pp. 12 449–12 460, 2020. [29] C. V alentini-Botinhao, X. W ang, S. T akaki, and J. Y amagishi, “In vestigating rnn-based speech enhancement methods for noise- robust te xt-to-speech. ” in SSW , 2016, pp. 146–152. [30] E. V incent, S. W atanabe, J. Barker , and R. Marxer , “The 4th chime speech separation and recognition challenge, ” URL: http://spandh. dcs. shef. ac. uk/chime challenge/(last accessed on 1 August, 2018) , 2016. [31] H. Li, Y . Hu, C. Chen, S. M. Siniscalchi, S. Liu, and E. S. Chng, “From kan to gr-kan: Adv ancing speech enhancement with kan- based methodology , ” arXiv pr eprint arXiv:2412.17778 , 2024. [32] V . Panayotov , G. Chen, D. Pove y , and S. Khudanpur , “Lib- rispeech: an asr corpus based on public domain audio books, ” in 2015 IEEE international confer ence on acoustics, speech and signal pr ocessing (ICASSP) . IEEE, 2015, pp. 5206–5210.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment