SoK: Agentic Skills -- Beyond Tool Use in LLM Agents

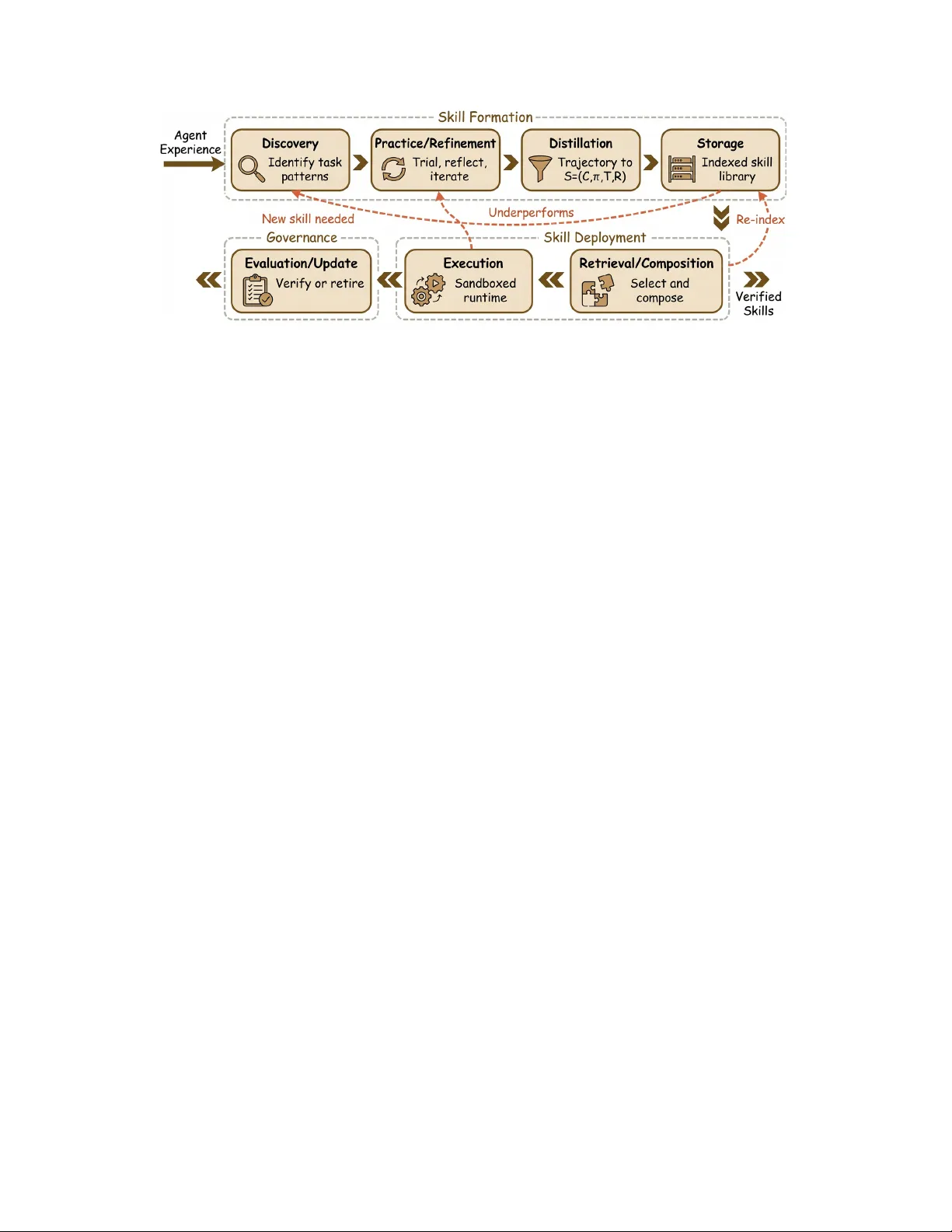

Agentic systems increasingly rely on reusable procedural capabilities, a.k.a. *agentic skills*, to execute long-horizon workflows reliably. These capabilities are callable modules that package procedural knowledge with explicit applicability …

Authors: Yanna Jiang, Delong Li, Haiyu Deng
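The abstract describes a skill as a callable module that packages procedural knowledge with explicit applicability. The sketch below makes that idea concrete, but it is only an illustration under stated assumptions. The abstract is truncated, so this is not the paper's formalization. The names `Skill`, `applies_to`, `steps`, `select_skill`, and `file_bug_report` are hypothetical. Applicability is assumed to be a boolean predicate over a plain dictionary context, and procedural knowledge is assumed to be an ordered list of templated steps.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


# Hypothetical representation (not the paper's definition): a skill bundles
# a procedure with an explicit applicability predicate.
@dataclass
class Skill:
    name: str
    applies_to: Callable[[Dict[str, str]], bool]  # assumed: when the skill may be invoked
    steps: List[str]  # assumed: procedural knowledge as ordered, templated steps

    def __call__(self, context: Dict[str, str]) -> List[str]:
        # Refuse to run outside the declared applicability
        if not self.applies_to(context):
            raise ValueError(f"skill {self.name!r} not applicable")
        # Instantiate each step template with values from the context
        return [step.format(**context) for step in self.steps]


def select_skill(skills: List[Skill], context: Dict[str, str]) -> Optional[Skill]:
    # Return the first skill whose applicability predicate holds, else None
    for skill in skills:
        if skill.applies_to(context):
            return skill
    return None


# Hypothetical example skill
file_bug = Skill(
    name="file_bug_report",
    applies_to=lambda ctx: ctx.get("task") == "report_bug",
    steps=["open tracker for {repo}", "create issue titled '{title}'", "attach logs"],
)

ctx = {"task": "report_bug", "repo": "demo", "title": "crash on start"}
chosen = select_skill([file_bug], ctx)
plan = chosen(ctx) if chosen else []
print(plan)
```

In this sketch, keeping the applicability check separate from the procedure lets a planner filter candidate skills before calling any of them. It also makes each skill refuse to execute when its declared conditions do not hold.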