DreamBarbie: Text to Barbie-Style 3D Avatars

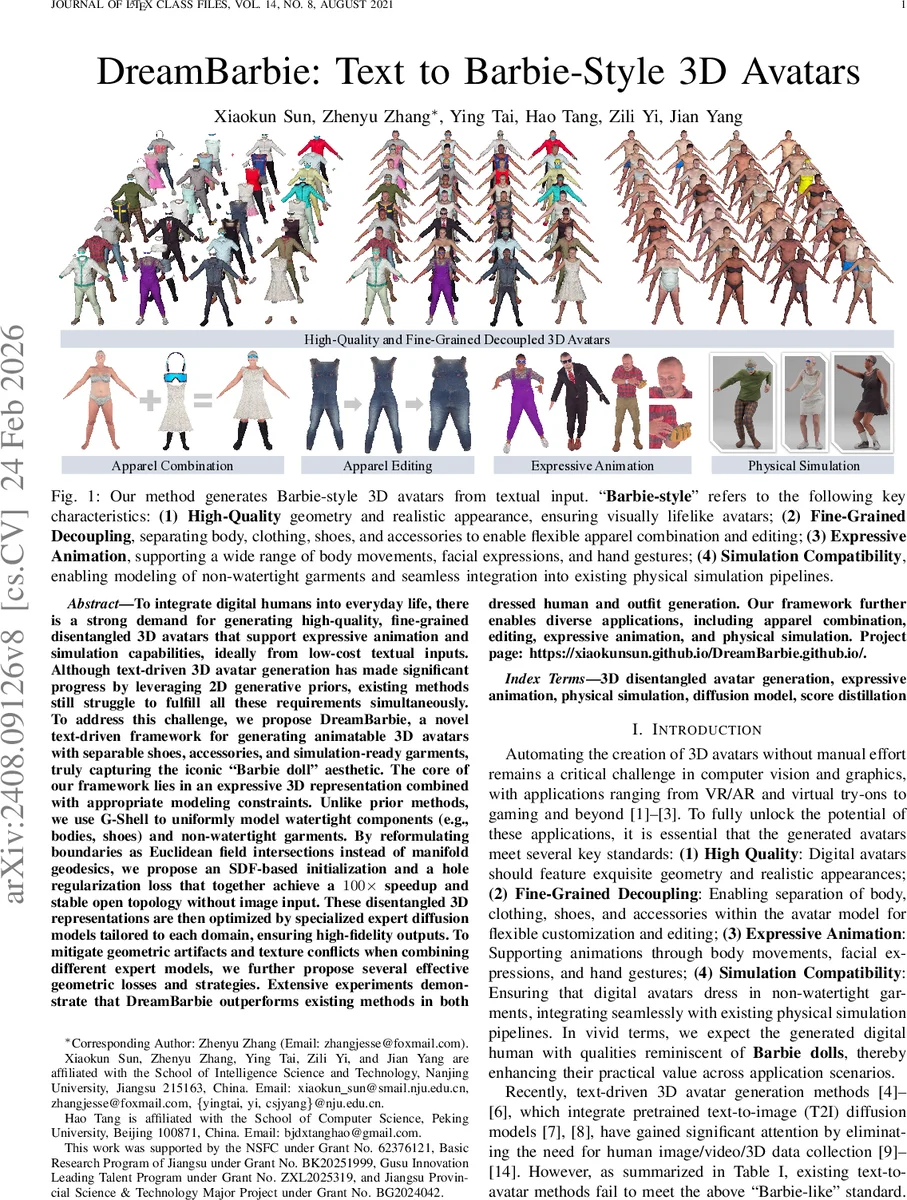

To integrate digital humans into everyday life, there is a strong demand for generating high-quality, fine-grained disentangled 3D avatars that support expressive animation and simulation capabilities, ideally from low-cost textual inputs. Although text-driven 3D avatar generation has made significant progress by leveraging 2D generative priors, existing methods still struggle to fulfill all these requirements simultaneously. To address this challenge, we propose DreamBarbie, a novel text-driven framework for generating animatable 3D avatars with separable shoes, accessories, and simulation-ready garments, truly capturing the iconic ``Barbie doll’’ aesthetic. The core of our framework lies in an expressive 3D representation combined with appropriate modeling constraints. Unlike prior methods, we use G-Shell to uniformly model watertight components (e.g., bodies, shoes) and non-watertight garments. By reformulating boundaries as Euclidean field intersections instead of manifold geodesics, we propose an SDF-based initialization and a hole regularization loss that together achieve a 100x speedup and stable open topology without image input. These disentangled 3D representations are then optimized by specialized expert diffusion models tailored to each domain, ensuring high-fidelity outputs. To mitigate geometric artifacts and texture conflicts when combining different expert models, we further propose several effective geometric losses and strategies. Extensive experiments demonstrate that DreamBarbie outperforms existing methods in both dressed human and outfit generation. Our framework further enables diverse applications, including apparel combination, editing, expressive animation, and physical simulation. Project page: https://xiaokunsun.github.io/DreamBarbie.github.io/.

💡 Research Summary

DreamBarbie introduces a novel text‑driven pipeline that can automatically generate “Barbie‑style” 3D avatars—high‑quality, finely disentangled digital humans that support expressive animation and physical simulation—using only a textual prompt. The authors identify four essential criteria for such avatars: (1) high‑fidelity geometry and realistic appearance, (2) fine‑grained decoupling of body, clothing, shoes, and accessories, (3) expressive animation capability (full body pose, facial expressions, hand gestures), and (4) compatibility with simulation pipelines, especially for non‑watertight garments. Existing text‑to‑3D methods either rely on implicit NeRF representations, which lack explicit structure for animation and simulation, or on explicit meshes such as 3DGS or SMPL‑X, which struggle to capture delicate details and cannot model open surfaces needed for draped clothing. Hybrid approaches like DMTet improve detail but cannot represent non‑watertight surfaces.

To overcome these limitations, DreamBarby leverages two key innovations. First, it adopts G‑Shell, a hybrid representation that extends DMTet by introducing a manifold signed distance field (mSDF) on a watertight template. This allows simultaneous modeling of watertight components (body, shoes, accessories) and non‑watertight garments within a single framework. The authors reformulate surface boundaries as Euclidean field intersections rather than manifold geodesics, and propose an SDF‑based initialization together with a hole‑preserving regularization loss. This eliminates the need for multi‑view image supervision, stabilizes gradients, and yields a 100× speed‑up compared with prior implicit‑only pipelines.

Second, DreamBarbie replaces the common practice of using a single general text‑to‑image diffusion model for all parts with a set of expert diffusion models, each specialized for a specific domain (human body, clothing, shoes, accessories). The pipeline consists of three stages: (1) Human Body Generation – a human‑specific diffusion model guided by a novel SMPL‑X‑evolving prior loss produces a realistic base mesh and pose; (2) Apparel Generation – each garment, shoe, or accessory is initialized using domain‑specific priors and refined with G‑Shell under several geometric constraints (surface‑normal alignment, inter‑part collision avoidance, hole regularization); (3) Unified Texture Refinement – the assembled avatar is jointly fine‑tuned to harmonize textures and lighting across parts. Throughout, Score Distillation Sampling (SDS) provides the primary text‑alignment signal, while the mSDF enables accurate distance field estimation for open surfaces.

Extensive experiments demonstrate that DreamBarbie outperforms prior NeRF, 3DGS, SMPL‑X, and hybrid methods on metrics such as F‑score (geometry), LPIPS (texture fidelity), and CLIP‑based text similarity. Notably, the generated garments are simulation‑ready: they are non‑watertight yet maintain clean topology, allowing direct integration with physics‑based draping simulators. The disentangled representation also enables downstream applications such as outfit swapping, style editing, expressive motion retargeting, and physical simulation of clothing dynamics.

In summary, DreamBarbie is the first system to combine G‑Shell’s unified watertight/non‑watertight modeling with domain‑specific diffusion guidance, achieving a full set of “Barbie‑like” qualities from a single textual description. The work opens avenues for more interactive, controllable, and physically plausible avatar creation, and suggests future extensions toward broader accessory categories, real‑time rendering, and user‑in‑the‑loop style customization.

Comments & Academic Discussion

Loading comments...

Leave a Comment