GSNR: Graph Smooth Null-Space Representation for Inverse Problems

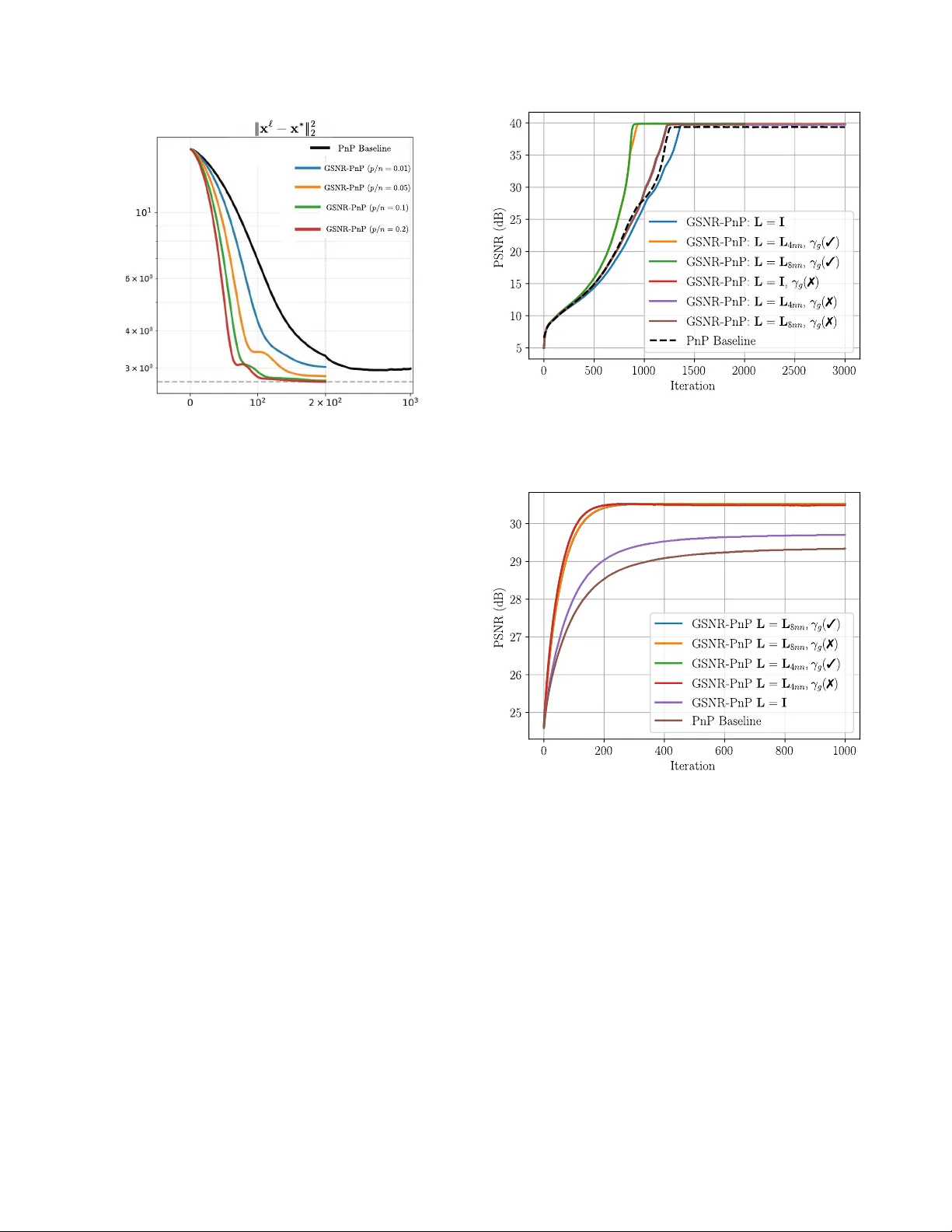

Inverse problems in imaging are ill-posed: because the sensing matrix has a non-trivial null-space, infinitely many solutions are consistent with the measurements. Common image priors promote solutions on the general image manifold, such as spa…
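The null-space ambiguity mentioned in the abstract can be illustrated with a small numerical sketch. This is not the authors' method. The sensing matrix `H`, its dimensions, and all variable names are hypothetical choices made only for illustration. The snippet builds two different signals that yield the same measurements by adding a null-space component to one of them.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sensing matrix: 3 measurements of a 5-dimensional signal,
# so the null space of H has dimension at least 2.
H = rng.standard_normal((3, 5))
x = rng.standard_normal(5)
y = H @ x  # noiseless measurements

# Orthogonal projector onto the null space of H: P_n = I - H^+ H,
# where H^+ is the Moore-Penrose pseudoinverse.
P_n = np.eye(5) - np.linalg.pinv(H) @ H

# Adding any null-space component leaves the measurements unchanged.
x_alt = x + P_n @ rng.standard_normal(5)

measurements_match = np.allclose(H @ x_alt, y)
signals_differ = not np.allclose(x_alt, x)
```

Both `measurements_match` and `signals_differ` should evaluate to `True` here: the measurements alone cannot distinguish `x` from `x_alt`, which is why some prior on the null-space component is needed.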

Authors: Romario Gualdrón-Hurtado, Roman Jacome, Rafael S. Suarez