The Sim-to-Real Gap in MRS Quantification: A Systematic Deep Learning Validation for GABA

Magnetic resonance spectroscopy (MRS) is used to quantify metabolites in vivo and estimate biomarkers for conditions ranging from neurological disorders to cancers. Quantifying low-concentration metabolites such as GABA ($\gamma$-aminobutyric acid) is cha…

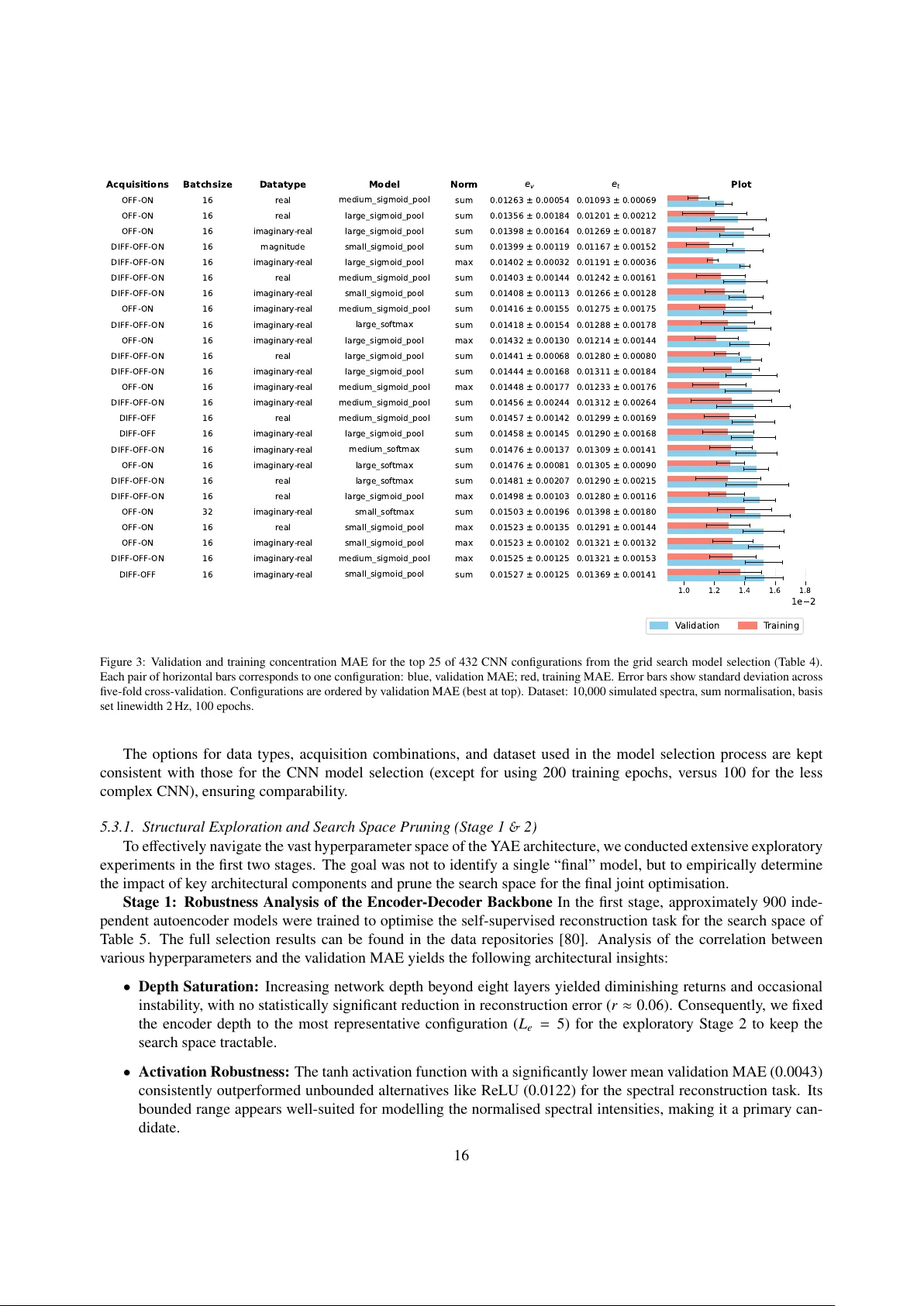

Authors: Zien Ma, S. M. Shermer, Oktay Karakuş