Training Deep Stereo Matching Networks on Tree Branch Imagery: A Benchmark Study for Real-Time UAV Forestry Applications

Autonomous drone-based tree pruning needs accurate, real-time depth estimation from stereo cameras. Depth is computed from disparity maps using $Z = f B/d$, so even small disparity errors cause noticeable depth mistakes at working distances. Building…

Authors: Yida Lin, Bing Xue, Mengjie Zhang

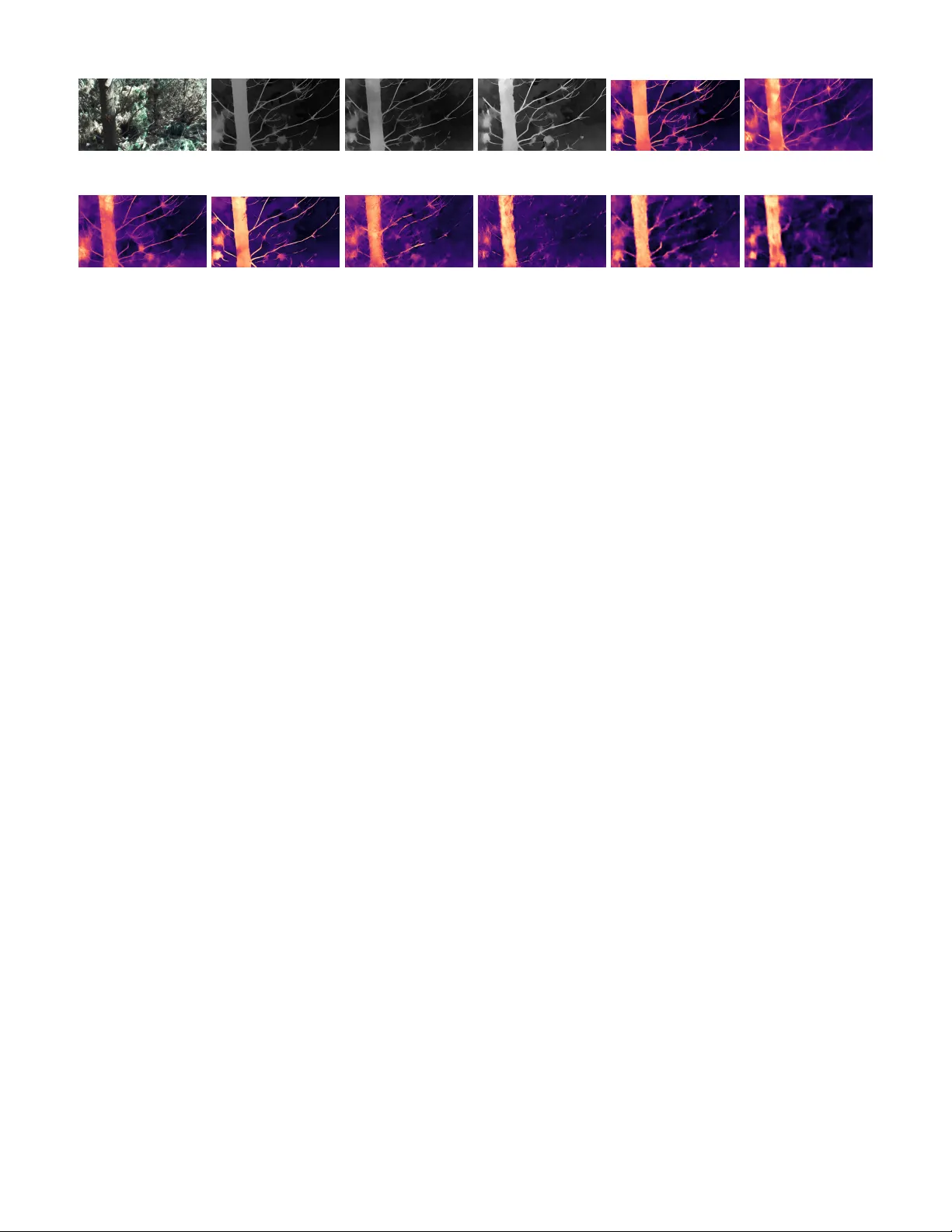

T raining Deep Stereo Matching Networks on T ree Branch Imagery: A Benchmark Study for Real-T ime U A V F orestry Applications Y ida Lin, Bing Xue, Mengjie Zhang Centr e for Data Science and Artificial Intelligence V ictoria University of W ellington, W ellington, New Zealand linyida @ myvuw .ac.nz, bing.xue @ vuw .ac.nz, mengjie.zhang @ vuw .ac.nz Sam Schofield, Richard Green Department of Computer Science and Softwar e Engineering University of Canterb ury , Canterb ury , New Zealand sam.schofield @ canterbury .ac.nz, richard.green @ canterbury .ac.nz Abstract —A utonomous drone-based tr ee pruning needs accu- rate, real-time depth estimation fr om stereo cameras. Depth is computed from disparity maps using Z = f B /d , so even small disparity errors cause noticeable depth mistakes at working distances. Building on our earlier work that identified DEFOM- Stereo as the best reference disparity generator for vegetation scenes, we present the first study to train and test ten deep stereo matching networks on real tree branch images. W e use the Canterbury T r ee Branches dataset—5,313 stereo pairs from a ZED Mini camera at 1080P and 720P—with DEFOM-generated disparity maps as training targets. The ten methods cov er step- by-step refinement, 3D conv olution, edge-aware attention, and lightweight designs. Using per ceptual metrics (SSIM, LPIPS, V iTScore) and structural metrics (SIFT/ORB feature match- ing), we find that BANet-3D produces the best overall quality (SSIM = 0.883, LPIPS = 0.157), while RAFT -Stereo scores highest on scene-level understanding (ViTScor e = 0.799). T esting on an NVIDIA Jetson Orin Super (16 GB, independently powered) mounted on our drone shows that AnyNet reaches 6.99 FPS at 1080P—the only near -real-time option—while BANet-2D gives the best quality–speed balance at 1.21 FPS. W e also compare 720P and 1080P processing times to guide resolution choices for for estry drone systems. Index T erms —Stereo matching, disparity estimation, depth estimation, deep learning, U A V for estry , real-time inference, autonomous pruning I . I N T RO D U C T I O N Radiata pine ( Pinus r adiata ) is the main plantation tree in Ne w Zealand, where forestry adds NZ$3.6 billion (1.3%) to the national economy . Trees need re gular pruning to pro- duce high-quality wood, but pruning by hand is dangerous due to fall risks and chainsa w injuries. Drones that prune automatically [1], [2] offer a safer option, yet they need centimeter-le vel depth accurac y to position cutting tools at 1–2 m distances. In a stereo camera system, depth is not measured directly . Instead, it comes from disparity maps — images that record how far each pixel shifts horizontally between the left and right camera views. Forest scenes are much harder than city or indoor environments because they contain thin overlapping branches, repeating textures, sharp depth changes, and lar ge lighting dif ferences. These challenges require training on ve getation-specific data rather than using general-purpose pretrained models. Disparity Maps and Depth Recovery . A disparity map D ∈ R H × W stores, for each pixel ( x, y ) in the left image, how far its matching pixel ( x − D ( x, y ) , y ) is shifted in the right image. Depth Z is then calculated by triangulation: Z ( x, y ) = f · B D ( x, y ) (1) where f is the focal length and B is the distance between the two cameras (the baseline). Since depth is in v ersely proportional to disparity , even small disparity errors lead to increasingly large depth errors at longer distances. W ith our ZED Mini camera ( B = 63 mm), a 1-pixel disparity mistake at 1.5 m branch distance produces roughly 2.3 cm of depth error at 1080P resolution. This highlights why accurate disparity prediction is the main goal of this work. Once disparity is known, exact depth follows directly from Eq. (1) and the camera’ s calibration settings. In our earlier benchmark study [3], we found that DEFOM- Stereo [4] is the best method for producing reference disparity maps (pseudo-ground-truth) on ve getation scenes. W e tested it without any task-specific training across several standard datasets, and it ranked first on average (rank 1.75 across ETH3D [15], KITTI [16], and Middlebury [17]) with no major failures. This consistency makes DEFOM well-suited for creating training labels. The current paper uses those DEFOM-generated disparity maps as training targets to build and ev aluate stereo matching models designed for v egetation scenes. Deep stereo methods perform well on standard benchmarks when trained on synthetic data like Scene Flo w [5], but their accuracy drops sharply on forestry images. Our zero-shot generalization study [26] confirmed this gap: methods trained only on synthetic data sho w large performance differences when applied to vegetation scenes. This happens because synthetic city scenes look v ery dif ferent from real vegetation. T raining on forest-specific data would fix this, but collecting accurate disparity maps with LiD AR in forest canopies is not practical—branches cause heavy blocking and their thin, complex shapes are hard to scan. Our approach bypasses this problem: we use DEFOM’ s high-quality predictions as reference labels, allowing large-scale training on real forestry data without costly LiD AR equipment. Three main questions driv e this study . F irst , which network design produces the best disparity maps—as measured by perceptual and structural metrics—after training on vegetation data? Second , which design offers the best balance between quality and processing speed for drone deployment on low- power hardware? Thir d , ho w does image resolution (1080P vs. 720P) af fect processing speed and real-world usability on drones with limited computing resources? T o answer these questions, we train and test ten deep stereo methods on the Canterbury T ree Branches dataset: RAFT - Stereo [6] (step-by-step refinement), PSMNet [7] and Gwc- Net [8] (3D con volution networks), MoCha-Stereo [9] (motion and channel attention), BANet-2D and BANet-3D [10] (edge- aware attention with 2D/3D cost processing), IGEV -R T [11] (fast geometry-based refinement), DeepPruner [12] (search space reduction), DCVSMNet [13] (dual cost volume), and AnyNet [14] (multi-stage prediction). All models are trained with DEFOM reference labels and tested on held-out scenes using perceptual and structural metrics. W e also run all models on an NVIDIA Jetson Orin Super mounted on our test drone to measure real-world processing speed at both 1080P and 720P . Our contrib utions are: • First vegetation-focused stereo benchmark : W e create the Canterbury T ree Branches dataset with DEFOM refer- ence labels as a training and testing resource for forestry use, removing the need for costly LiD AR data collection. • Broad ten-method comparison : W e train and test ten stereo methods from six design families using percep- tual (SSIM, LPIPS, V iTScore) and structural (SIFT/ORB feature matching) metrics. B ANet-3D emerges as the top- quality method. • Quality–speed trade-off analysis : W e map out the best trade-off boundary between disparity quality and pro- cessing speed, finding that only AnyNet, BANet-2D, and B ANet-3D of fer unbeatable combinations. • Real-world drone deployment : W e show practical re- sults on an independently-powered NVIDIA Jetson Orin Super (16 GB) with live ZED Mini stereo input at 1080P and 720P , providing speed profiles and deplo yment guide- lines for autonomous pruning. I I . R E L A T E D W O R K A. Deep Ster eo Matc hing Ar chitectur es Deep learning has changed how stereo matching works by enabling end-to-end trainable systems. Early methods used 3D con volution networks: DispNet [5] showed that netw orks can directly predict disparity , GC-Net [18] added 3D con volutions to process matching costs, and PSMNet [7] used multi-scale pooling to capture conte xt at dif ferent lev els. GwcNet [8] introduced group-wise correlation to build matching costs more ef ficiently . Step-by-step refinement methods, inspired by motion es- timation, handle large disparity ranges through repeated up- dates. RAFT -Stereo [6] uses recurrent updates with multi- scale matching and reaches top accuracy , though at high computing cost. IGEV -Stereo [11] combines repeated updates with geometry-based features; its faster version, IGEV -R T , reduces the number of update steps to speed things up while keeping reasonable quality . Edge-aware attention methods add boundary-sensitiv e filter- ing to the matching process. B ANet [10] comes in both 2D and 3D versions, performing well by respecting depth edges through ef ficient bilateral operations. Lightweight models focus on running fast on limited hard- ware. AnyNet [14] predicts disparity in stages from coarse to fine. DeepPruner [12] narro ws down the search area to sav e computation. DCVSMNet [13] uses two separate cost volumes for matching. MoCha-Stereo [9] applies motion and channel attention to improv e matching accurac y . These designs are especially rele vant for drones, where computing power is tightly limited. B. Pseudo-Gr ound-T ruth for Ster eo T raining T raining stereo networks requires dense disparity labels for every pixel. These are usually obtained with LiD AR scanners [16] or structured light systems [17]. Howe ver , such equipment cannot work well in many real settings—especially forest canopies, where branches block the scanner and pre vent accurate measurements. An alternativ e is to use high-quality predictions from strong existing models as training labels (pseudo-ground- truth). Large-scale models like DEFOM-Stereo [4] and Depth Anything [19] generalize well across different scenes, making them good candidates for label generation. In our earlier work [3], [26], we compared sev eral stereo methods and confirmed that DEFOM giv es the most consistent results on ve getation scenes. C. Ster eo V ision for UA V F or estry Drone-based stereo vision has been used for na vigation [20], 3D mapping [21], and obstacle avoidance [22]. Recent studies on branch detection [1], [24], segmentation [25], and disparity parameter optimization using genetic algorithms [27] show that deep learning and stereo cameras ha ve clear potential for forestry tasks. Still, most existing systems use traditional matching algorithms or pretrained models without adapting them to vegetation. This work fills that gap by training modern stereo networks directly on tree imagery . D. Real-T ime Stereo on Embedded Platforms Running stereo networks on small embedded computers means balancing accuracy against speed. NVIDIA Jetson boards have become the standard choice for drone percep- tion [23], and they support T ensorR T for faster processing. Earlier work showed that real-time stereo is possible at lower resolutions [14], but getting both high resolution and real-time speed remains hard. In this work, we systematically test all ten network designs at both 720P and 1080P on Jetson hardware with its own power supply , matching the conditions of actual drone flights. I I I . M E T H O D O L O G Y A. Pr oblem F ormulation Stereo matching estimates the per -pixel disparity from a pair of aligned stereo images. Giv en left and right images I L , I R ∈ R H × W × 3 , the task is to produce a disparity map D ∈ R H × W where each pixel ( x, y ) in the left image matches pixel ( x − D ( x, y ) , y ) in the right image. Disparity and depth follow the triangulation formula (Eq. (1)). Because depth Z is in versely related to disparity D , objects close to the camera (like branches at 1–2 m) produce large disparity values and fine depth detail, while distant background gi ves small disparity with limited depth precision. For our ZED Mini stereo camera with baseline B = 63 mm, operating at 1080P ( f ≈ 700 px) and 720P ( f ≈ 350 px), this relationship provides sub-centimeter depth resolution at the 1– 2 m range critical for pruning. W e therefore train networks f θ to predict disparity , and then conv ert to metric depth using Eq. (1). B. Dataset Canterbury T ree Branches Dataset : W e use 5,313 stereo pairs taken with a ZED Mini camera (63 mm baseline) on a drone in Canterb ury , Ne w Zealand (March–October 2024). The camera captures synchronized left and right images at 1920 × 1080 (1080P) and 1280 × 720 (720P). W e train and ev aluate quality at 1080P , and measure processing speed at both resolutions. The images were carefully chosen from hundreds of thousands of raw captures by filtering for motion blur , e xposure quality , alignment accuracy , and scene variety . Pseudo-Ground-T ruth Generation : Follo wing our earlier study [3], we run DEFOM-Stereo [4] on all 5,313 pairs to produce reference disparity maps. DEFOM was chosen for its consistent performance across datasets (av erage rank 1.75 across ETH3D, KITTI, and Middlebury) and its strong results on vegetation scenes. These outputs serve as the training targets for all ten methods. T rain/V alidation/T est Split : W e divide the data into train- ing (4,250 pairs, 80%), validation (531 pairs, 10%), and test (532 pairs, 10%) sets. The splits cov er different times and locations to prev ent the models from memorizing specific scenes or lighting conditions. C. Evaluated Methods W e pick ten methods from six design f amilies for training and testing (T able I). D. T r aining Pr otocol All models start from weights pretrained on Scene Flow [5] and are then fine-tuned on our Tree Branches training set. W e keep the same settings across methods where possible: • Optimizer : AdamW with weight decay 10 − 4 • Learning rate : 10 − 4 with cosine annealing • Batch size : 4 (adjusted per GPU memory) • T raining epochs : 100 with early stopping (patience 10) • Input resolution : 512 × 256 crops during training T ABLE I: Evaluated Stereo Matching Methods Method T ype Key Feature RAFT -Stereo [6] Iterativ e Recurrent refinement PSMNet [7] 3D CNN Spatial pyramid pooling GwcNet [8] 3D CNN Group-wise correlation MoCha-Stereo [9] Attention Motion-channel attention B ANet-2D [10] Bilateral Attn. 2D cost filtering B ANet-3D [10] Bilateral Attn. 3D cost filtering IGEV -R T [11] Iterativ e (R T) Geometry encoding volume DeepPruner [12] PatchMatch Differentiable pruning DCVSMNet [13] Dual CV Dual cost volume AnyNet [14] Hierarchical Anytime prediction • Data augmentation : Random crops, color jitter, horizon- tal flip Loss Function : W e train with smooth L1 loss, which mea- sures the difference between predicted and reference disparity values: L = 1 N N X i =1 smooth L 1 ( d i − ˆ d i ) (2) where ˆ d i is the DEFOM reference value. For methods that produce outputs at multiple scales (PSMNet, GwcNet, BANet- 3D), we add weighted losses at each scale. E. Evaluation Metrics Instead of only checking pixel-by-pixel error , we use several complementary metrics that capture both visual quality and structural accuracy of the predicted disparity maps compared to the DEFOM reference. Structural Similarity (SSIM) [28] checks how well the brightness, contrast, and structure of predicted maps match the reference. Higher SSIM means better preservation of disparity gradients and boundaries between regions. Learned P erceptual Image P atch Similarity (LPIPS) [29] uses deep network features to measure how dif ferent two images look to a human. Lower LPIPS means the prediction looks more like the reference, catching fine details that simple pixel comparisons miss. V iTScore measures high-le vel similarity using V ision T ransformer features. Higher V iTScore means the predicted map keeps the overall geometric layout—branch shapes, depth layers, and blocked regions—intact. Featur e Matching Ratios : W e detect SIFT and ORB keypoints in both predicted and reference maps, then count how many match successfully (using the Lo we ratio test). Higher match ratios mean the prediction faithfully reproduces structural features like edges, corners, and texture patterns. This matters for later tasks such as branch detection and distance measurement. Inference Latency : W e record average frames per second (FPS) and per-frame delay on the tar get embedded board, which is essential for real-time drone use. F . Har dwar e Platforms T raining : NVIDIA Quadro R TX 6000 GPU (24 GB VRAM) with PyT orch 2.6.0. On-Drone Deployment : NVIDIA Jetson Orin Super (16 GB shared memory), mounted on our test drone and powered by its own dedicated battery , separate from the flight battery . This separate power design prev ents the computing load from draining the flight reserv es, av oiding performance drops during long flights. The Jetson takes li ve stereo input from the ZED Mini camera at both 1080P (1920 × 1080) and 720P (1280 × 720). I V . E X P E R I M E N TA L R E S U L T S A. Compr ehensive Quality and Speed Evaluation T able II sho ws the quality and speed results for all ten methods trained on the Canterbury Tree Branches dataset with DEFOM reference labels. Quality scores come from the held- out test set (532 pairs, 1080P), and speed numbers are from the NVIDIA Jetson Orin Super at 1080P . 1) Quality Analysis: Edge-A ware Attention Methods : B ANet-3D delivers the best disparity quality on four out of fiv e metrics: highest SSIM (0.883), lowest LPIPS (0.157), and highest SIFT (0.274) and ORB (0.162) match ratios. Its 3D cost filtering keeps depth edges sharp and preserv es thin branch details, producing maps that closely match the DEFOM reference both visually and structurally . BANet-2D is a lighter version with solid quality (SSIM = 0.816, LPIPS = 0.245) and runs 1.7 × faster . Step-by-Step Refinement Methods : RAFT -Stereo scores highest on V iTScore (0.799) and second on SIFT matching (0.245), showing that repeated updates capture the o verall depth layout and scene structure well. Ho we ver , its SSIM (0.763) is near the bottom, meaning that while the big- picture depth is good, pix el-lev el smoothness suf fers. IGEV - R T , designed for faster processing, reaches moderate qual- ity (SSIM = 0.794, V iTScore = 0.558) but still only manages 0.24 FPS at 1080P—far from real-time. 3D Conv olution Methods : PSMNet gets the second-best LPIPS (0.212) and third-best V iTScore (0.786), sho wing that its multi-scale pooling captures context well for vegetation. GwcNet performs similarly (SSIM = 0.811, V iTScore = 0.750) and runs slightly faster . Attention-Based : MoCha-Stereo reaches the second- highest SSIM (0.848), showing that its motion and channel attention handles the repeating textures and fine details com- mon in tree scenes. Lightweight Methods : AnyNet is the fastest (6.99 FPS, 143 ms delay) but at a clear quality cost—it has the worst V iTScore (0.196) and highest LPIPS (0.434). Deep- Pruner sho ws the weakest quality overall (SSIM = 0.720, LPIPS = 0.542), suggesting that its search space reduction approach may throw away important matches in complex ve getation. 2) Infer ence Speed Analysis: Only AnyNet (6.99 FPS) comes close to real-time at 1080P on the Jetson Orin Super . B ANet-2D reaches 1.21 FPS, which is enough for tasks that can tolerate some delay , such as approach planning or station- ary branch assessment. All other methods run belo w 1 FPS at full resolution, so they would need either lo wer resolution or offline processing to be practical. B. Resolution-Dependent Latency Comparison Fig. 1 compares processing times at 720P (1280 × 720) and 1080P (1920 × 1080) on the Jetson Orin Super , using li ve video from the ZED Mini camera during drone flights. Fig. 1: Inference latency comparison between 720P and 1080P modes on NVIDIA Jetson Orin Super . Live stereo input from ZED Mini camera. Lower latency is better; dashed line indicates 10 FPS real- time threshold. Dropping from 1080P to 720P cuts the pixel count by 56%, which gives noticeable speed gains for most methods. AnyNet benefits the most, getting much closer to usable real- time speeds at 720P . Heavy methods like RAFT -Stereo and PSMNet stay too slo w ev en at the lo wer resolution, showing that network design matters more than resolution for on-drone speed. C. Quality–Speed T rade-off and P areto Analysis Fig. 2 plots quality (SSIM) against speed (FPS) for all ten methods, sho wing which ones offer the best combinations. Key Finding : Only three methods sit on the best trade- off line: BANet-3D (highest quality , 0.71 FPS), B ANet-2D (balanced, 1.21 FPS), and AnyNet (fastest, 6.99 FPS). Every other method is outperformed—it is either slo wer and lower - quality than one of these three, or offers no unique advantage. This gi ves clear guidance for deplo yment: • When quality matters most (offline mapping, detailed inspection): use BANet-3D. • When balance is needed (approach planning, slo w-speed manoeuvres): use BANet-2D. • When speed is critical (closed-loop control, obstacle av oidance): use AnyNet, accepting lo wer disparity qual- ity . D. Qualitative Results Fig. 3 shows visual comparisons on test scenes with thin branches, blocked areas, and different lighting. Each row T ABLE II: Comprehensive Evaluation: Disparity Quality Metrics and Inference Speed on NVIDIA Jetson Orin Super (1080P) Method SSIM ↑ LPIPS ↓ V iTScore ↑ SIFT Ratio ↑ ORB Ratio ↑ FPS ↑ Latency (ms) ↓ B ANet-3D 0.883 0.157 0.790 0.274 0.162 0.71 1408 MoCha-Stereo 0.848 0.221 0.701 0.111 0.044 0.14 7143 B ANet-2D 0.816 0.245 0.724 0.171 0.072 1.21 826 GwcNet 0.811 0.250 0.750 0.157 0.047 0.23 4348 PSMNet 0.810 0.212 0.786 0.181 0.080 0.11 9091 IGEV -R T 0.794 0.297 0.558 0.071 0.020 0.24 4167 DCVSMNet 0.769 0.347 0.509 0.047 0.012 0.36 2778 AnyNet 0.766 0.434 0.196 0.065 0.012 6.99 143 RAFT -Stereo 0.763 0.235 0.799 0.245 0.108 0.07 14286 DeepPruner 0.720 0.542 0.397 0.039 0.011 0.34 2941 All methods trained on T ree Branches training set with DEFOM pseudo-ground-truth. Quality metrics evaluated on test set (532 pairs, 1080P). FPS measured on NVIDIA Jetson Orin Super at 1080P . Bold indicates best per column. SIFT/ORB Ratio = proportion of successfully matched keypoints (Lowe ratio test). ↑ : higher is better; ↓ : lower is better . 0 2 4 6 8 0 . 7 0 . 75 0 . 8 0 . 85 0 . 9 BANet-3D BANet-2D AnyNet RAF T -Stereo PSMNet MoC ha-Stereo GwcNet IGEV -R T De epPruner DCVSMNet FPS on Jetson Orin Super (1080P) → SSIM → ⋆ Pareto-optimal • Dominated Fig. 2: Quality–speed trade-of f (SSIM vs. FPS) for all ten methods on the Jetson Orin Super at 1080P . Red stars and dashed line mark the Pareto frontier: BANet-3D (best quality), BANet-2D (balanced), AnyNet (fastest). Blue circles indicate dominated methods. displays the left input image, the DEFOM reference disparity map, and predictions from the ten trained methods. Branch Structur e Pr eservation : BANet-3D reproduces the fine branch details from the DEFOM reference most accurately , which aligns with its top SIFT/ORB match scores. RAFT -Stereo keeps the overall depth layout (matching its high V iTScore) but creates local distortions near thin branches. AnyNet heavily blurs fine structures, mer ging nearby branches and losing sharp depth edges. Sky and Background Regions : All trained methods pro- duce consistent disparity values in the featureless sky areas. B ANet-3D and MoCha-Stereo show the cleanest transitions between sky and tree crown edges. DeepPruner and DCVSM- Net produce visible bleeding ef fects at object borders. E. F ield Deployment on U A V Platform W e run all ten trained models on our test drone with an NVIDIA Jetson Orin Super (16 GB, its own po wer supply) and ZED Mini camera in real outdoor conditions. Unlike the offline test set, this e valuation uses li ve video at both 1920 × 1080 and 1280 × 720 during actual flights over pine plantation canopies, testing how well the models handle ne w , unseen data in real time. Po wer Usage : AnyNet dra ws the least po wer ( ∼ 12 W), which helps extend flight time. Methods with heavy 3D processing (RAFT -Stereo, PSMNet, B ANet-3D) use 10–20 W more (83–167% increase), which noticeably shortens a vailable flight time—an important concern since ev en the Jetson’ s dedicated battery has limited capacity . Heat Management : Running RAFT -Stereo and PSMNet continuously causes the Jetson to overheat and slow down after about 8 minutes. AnyNet and BANet-2D keep steady speed throughout 30-minute flights without heat problems, confirming the y are suitable for extended field use. V . D I S C U S S I O N A. Benefits of V e getation-Specific T raining Our results show that training stereo networks on tree imagery with DEFOM reference labels produces strong, task- specific models. B ANet-3D scores best across multiple metrics (SSIM = 0.883, LPIPS = 0.157, SIFT Ratio = 0.274, ORB Ra- tio = 0.162), sho wing that its edge-aw are attention with 3D cost processing handles the typical challenges of v egetation well: thin ov erlapping branches, repeating leaf textures, and sharp depth changes. Reference Label Quality : The strong results across all ten methods (SSIM from 0.720 to 0.883) confirm that DEFOM predictions work well as training labels. Se veral methods reach SSIM abov e 0.80, which shows that carefully chosen model predictions [3] can replace costly LiDAR data for training ve getation-specific stereo netw orks. From Disparity to Depth : Since depth is inv ersely related to disparity (Eq. (1)), the quality of disparity maps directly affects depth accuracy . High SSIM and lo w LPIPS mean that both nearby objects (high disparity) and distant ones (low disparity) are reproduced well, giving reliable depth estimates across the full working range. B. Ar chitectur e Insights for F or estry Applications Edge-A ware Attention W orks Best : B ANet-3D’ s consis- tent lead across metrics suggests that edge-sensiti ve filtering is especially useful for vegetation, where depth boundaries (a) Left Image (b) Ground Truth (c) B ANet-3D (d) RAFT -Stereo (e) PSMNet (f) MoCha (g) B ANet-2D (h) GwcNet (i) IGEV -R T (j) DeepPruner (k) DCVSMNet (l) AnyNet Fig. 3: Qualitative comparison of disparity maps on a representati ve test scene. (a) Left input image. (b) DEFOM pseudo-ground-truth. (c)–(l) Predictions from ten trained methods. B ANet-3D best preserves thin branch details and depth boundaries, while AnyNet ov er-smooths fine structures. RAFT -Stereo captures large-scale depth layering but exhibits local artifacts. are dense and follow complex branch shapes. The 3D v ersion clearly outperforms BANet-2D (SSIM 0.883 vs. 0.816), con- firming that processing the full 3D cost v olume captures depth relationships that 2D filtering misses. Rankings Depend on the Metric : An important obser- vation is that method rankings shift considerably between metrics. RAFT -Stereo gets the best V iTScore (0.799) but only 9th-best SSIM (0.763), while MoCha-Stereo gets 2nd-best SSIM (0.848) but 6th-best V iTScore (0.701). This gap shows that pixel-lev el similarity (SSIM), visual quality (LPIPS), and scene-level structure (V iTScore) measure quite different things—which is why we use multiple metrics in this study . 3D Con volution Methods : PSMNet’ s strong LPIPS (0.212, 2nd best) and V iTScore (0.786, 3rd best) sho w that its multi- scale pooling captures context well for vegetation. GwcNet performs similarly but slightly lower , consistent with their relativ e standings on other benchmarks. Best T rade-off Options : Out of ten methods, only B ANet- 3D, BANet-2D, and AnyNet offer unbeatable quality–speed combinations. This greatly simplifies the choice for drone stereo systems: practitioners only need to pick among these three based on how much delay their application can tolerate. C. Practical Deployment Considerations Resolution Choice : The 720P versus 1080P speed com- parison (Fig. 1) gives practical guidance. For closed-loop pruning control needing more than 5 FPS, AnyNet at 720P is essentially the only option. For pre-approach planning at 1– 2 FPS, B ANet-2D at 720P offers much higher quality . This three-way trade-off between resolution, quality , and speed should guide system design. Separate Po wer Supply : Our Jetson Orin Super runs on its own battery , separate from the drone’ s flight battery . This is important because heavy methods (RAFT -Stereo: ∼ 32 W , PSMNet: ∼ 24 W) would otherwise cut into flight time signifi- cantly . Even with separate po wer , the dedicated battery still has limits, so lo wer-po wer methods (An yNet: ∼ 12 W) allo w longer operation—a key factor for commercial surveys cov ering large plantation areas. Heat Issues : Running heavy methods at full load makes the Jetson overheat and slow down after 8–10 minutes. Lighter methods keep steady performance through entire flights, re- moving the need for comple x heat management strategies. D. Limitations Reference Label Ceiling : Since our models learn from DEFOM predictions, they cannot be more accurate than DE- FOM itself. The metrics we use (SSIM, LPIPS, V iTScore, SIFT/ORB) measure ho w closely models match DEFOM output, not absolute geometric accuracy . Future work should compare selected models against LiD AR measurements on sample scenes. Limited Species and Conditions : The Canterbury T ree Branches dataset covers only Radiata pine ( Pinus radiata ) under Ne w Zealand conditions. Whether the results hold for other tree types, climates, or seasons needs further testing and expanded datasets. No T ensorR T Optimization : All speed measurements use standard PyT orch. T ensorR T can speed things up by 2–5 × on Jetson hardware; AnyNet with T ensorR T could lik ely exceed 15 FPS at 1080P , making true real-time use possible. W e leav e this optimization for future work. V I . C O N C L U S I O N This paper presents the first study to train and ev aluate ten deep stereo matching networks on real tree branch im- ages for drone-based autonomous pruning. Using DEFOM- generated disparity maps as training targets—where disparity records horizontal pixel correspondence and depth follows from Z = f B /d —we test ten methods from six design families on the Canterbury T ree Branches dataset. W e ev aluate using perceptual (SSIM, LPIPS, V iTScore) and structural (SIFT/ORB feature matching) metrics, with speed testing on an independently-po wered NVIDIA Jetson Orin Super . Our main findings are: • B ANet-3D gives the best quality : It leads on four of fiv e quality metrics (SSIM = 0.883, LPIPS = 0.157, SIFT Ratio = 0.274, ORB Ratio = 0.162). Its edge-aware atten- tion with 3D cost processing fits v egetation scenes with many depth edges well. • Three methods offer unbeatable trade-offs : Only B ANet-3D (best quality), BANet-2D (balanced), and AnyNet (fastest) sit on the best trade-off boundary; all other methods are outperformed. • Resolution affects speed : Comparing 720P and 1080P processing on the Jetson Orin Super provides practical guidance for choosing the right resolution–quality–speed balance for different pruning tasks. • Confirmed in the field : T esting on a liv e drone with independently-powered Jetson and ZED Mini sho ws that AnyNet and B ANet-2D keep steady performance through full-length flights without overheating. W e plan to publicly release the Canterb ury T ree Branches dataset with DEFOM reference labels and trained model weights to support further research in drone-based forestry . Future work will e xplore T ensorR T optimization for f aster pro- cessing, self-supervised learning techniques, frame-to-frame consistency for video input, and combining these models with branch detection and se gmentation systems [1], [25] to build complete autonomous pruning systems. A C K N OW L E D G M E N T S This research was supported by the Royal Society of Ne w Zealand Marsden Fund and the Ministry of Business, Inno va- tion and Employment. W e thank the forestry research stations for data collection access. R E F E R E N C E S [1] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “Deep learning- based depth map generation and YOLO-integrated distance estimation for radiata pine branch detection using drone stereo vision, ” in Proc. Int. Conf. Imag e V is. Comput. Ne w Zealand (IVCNZ) , Christchurch, New Zealand, 2024, pp. 1–6. [2] D. Steininger, J. Simon, A. Trondl, and M. Murschitz, “TimberV ision: A multi-task dataset and framework for log-component segmentation and tracking in autonomous forestry operations, ” in Pr oc. IEEE/CVF Winter Conf. Appl. Comput. V is. (W A CV) , 2025. [3] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “T owards gold- standard depth estimation for tree branches in UA V forestry: Benchmark- ing deep stereo matching methods, ” arXiv preprint , 2026. [4] H. Jiang, Z. Lou, L. Ding, R. Xu, M. T an, W . Jiang, and R. Huang, “DEFOM-Stereo: Depth foundation model based stereo matching, ” arXiv pr eprint arXiv:2501.09466 , 2025. [5] N. Mayer, E. Ilg, P . Hausser, P . Fischer , D. Cremers, A. Dosovitskiy , and T . Brox, “ A large dataset to train con volutional networks for disparity , optical flow , and scene flow estimation, ” in Proc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2016, pp. 4040–4048. [6] L. Lipson, Z. T eed, and J. Deng, “RAFT -Stereo: Multilevel recurrent field transforms for stereo matching, ” in Pr oc. Int. Conf. 3D V is. (3DV) , 2021, pp. 218–227. [7] J.-R. Chang and Y .-S. Chen, “Pyramid stereo matching network, ” in Pr oc. IEEE Conf . Comput. V is. P attern Recognit. (CVPR) , 2018, pp. 5410–5418. [8] X. Guo, K. Y ang, W . Y ang, X. W ang, and H. Li, “Group-wise correlation stereo network, ” in Proc. IEEE Conf . Comput. V is. P attern Recognit. (CVPR) , 2019, pp. 3273–3282. [9] Z. Chen, W . Long, H. Y ao, Y . Zhang, B. Luo, Z. Cao, and Z. Dong, “MoCha-Stereo: Motif channel attention network for stereo matching, ” in Proc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2024, pp. 5253–5262. [10] V . T ankovich, C. Hane, Y . Zhang, A. Ber, S. Fanello, and S. Izadi, “HITNet: Hierarchical iterativ e tile refinement network for real-time stereo matching, ” in Proc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2021, pp. 14362–14372. [11] G. Xu, X. W ang, X. Ding, and X. Y ang, “Iterative geometry encoding volume for stereo matching, ” in Pr oc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2023, pp. 21919–21928. [12] S. Duggal, S. W ang, W .-C. Ma, R. Hu, and R. Urtasun, “DeepPruner: Learning ef ficient stereo matching via differentiable P atchMatch, ” in Pr oc. IEEE Int. Conf. Comput. V is. (ICCV) , 2019, pp. 4384–4393. [13] M. T ahmasebi, S. Huq, K. Meehan, and M. McAfee, “DCVSMNet: Double cost volume stereo matching netw ork, ” Neur ocomputing , vol. 618, p. 129002, 2025. [14] Y . W ang, Z. Lai, G. Huang, B. H. W ang, L. V an Der Maaten, M. Camp- bell, and K. Q. W einber ger , “ An ytime stereo image depth estimation on mobile devices, ” in Proc. IEEE Int. Conf. Robot. A utom. (ICRA) , 2019, pp. 5893–5900. [15] T . Sch ¨ ops, J. L. Sch ¨ onberger , S. Galliani, T . Sattler, K. Schindler, M. Pollefeys, and A. Geiger , “ A multi-view stereo benchmark with high-resolution images and multi-camera videos, ” in Pr oc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2017, pp. 2538–2547. [16] A. Geiger , P . Lenz, and R. Urtasun, “ Are we ready for autonomous driving? The KITTI vision benchmark suite, ” in Proc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2012, pp. 3354–3361. [17] D. Scharstein, H. Hirschm ¨ uller , Y . Kitajima, G. Krathwohl, N. Ne ˇ si ´ c, X. W ang, and P . W estling, “High-resolution stereo datasets with subpixel- accurate ground truth, ” in Pr oc. German Conf . P attern Recognit. (GCPR) , 2014, pp. 31–42. [18] A. K endall, H. Martirosyan, S. Dasgupta, P . Henry , R. K ennedy , A. Bachrach, and A. Bry , “End-to-end learning of geometry and context for deep stereo regression, ” in Pr oc. IEEE Int. Conf. Comput. V is. (ICCV) , 2017, pp. 66–75. [19] L. Y ang, B. Kang, Z. Huang, X. Xu, J. Feng, and H. Zhao, “Depth anything: Unleashing the power of large-scale unlabeled data, ” in Proc. IEEE Conf. Comput. V is. P attern Recognit. (CVPR) , 2024, pp. 10371– 10381. [20] F . Fraundorfer, L. Heng, D. Honegger , G. H. Lee, L. Meier , P . T anskanen, and M. Pollefeys, “V ision-based autonomous mapping and exploration using a quadrotor MA V , ” in Pr oc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IR OS) , 2012, pp. 4557–4564. [21] F . Nex and F . Remondino, “U A V for 3D mapping applications: A revie w , ” Appl. Geomat. , vol. 6, no. 1, pp. 1–15, 2014. [22] A. J. Barry and R. T edrake, “Pushbroom stereo for high-speed na vigation in cluttered en vironments, ” in Proc. IEEE Int. Conf. Robot. Autom. (ICRA) , 2015, pp. 3046–3052. [23] W . Liu, Z. W ang, X. Liu, N. Zeng, Y . Liu, and F . E. Alsaadi, “ A survey of deep neural network architectures and their applications, ” Neur ocomputing , v ol. 234, pp. 11–26, Apr . 2017. [24] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “YOLO and SGBM integration for autonomous tree branch detection and depth estimation in radiata pine pruning applications, ” in Proc. Int. Conf. Ima ge V is. Comput. New Zealand (IVCNZ) , W ellington, New Zealand, 2025, pp. 1–6. [25] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “Performance ev aluation of deep learning for tree branch segmentation in autonomous forestry systems, ” in Proc. Int. Conf . Image V is. Comput. New Zealand (IVCNZ) , W ellington, New Zealand, 2025, pp. 1–6. [26] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “Generalization ev aluation of deep stereo matching methods for U A V -based forestry applications, ” arXiv pr eprint arXiv:2512.03427 , 2025. [27] Y . Lin, B. Xue, M. Zhang, S. Schofield, and R. Green, “Genetic algorithms for parameter optimization for disparity map generation of radiata pine branch images, ” in Pr oc. Int. Conf. Image V is. Comput. New Zealand (IVCNZ) , W ellington, New Zealand, 2025, pp. 1–6. [28] Z. W ang, A. C. Bo vik, H. R. Sheikh, and E. P . Simoncelli, “Image quality assessment: From error visibility to structural similarity , ” IEEE T rans. Image Process. , vol. 13, no. 4, pp. 600–612, Apr . 2004. [29] R. Zhang, P . Isola, A. A. Efros, E. Shechtman, and O. W ang, “The unreasonable effectiv eness of deep features as a perceptual metric, ” in Pr oc. IEEE Conf . Comput. V is. P attern Recognit. (CVPR) , 2018, pp. 586–595.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment