Bellman Value Decomposition for Task Logic in Safe Optimal Control

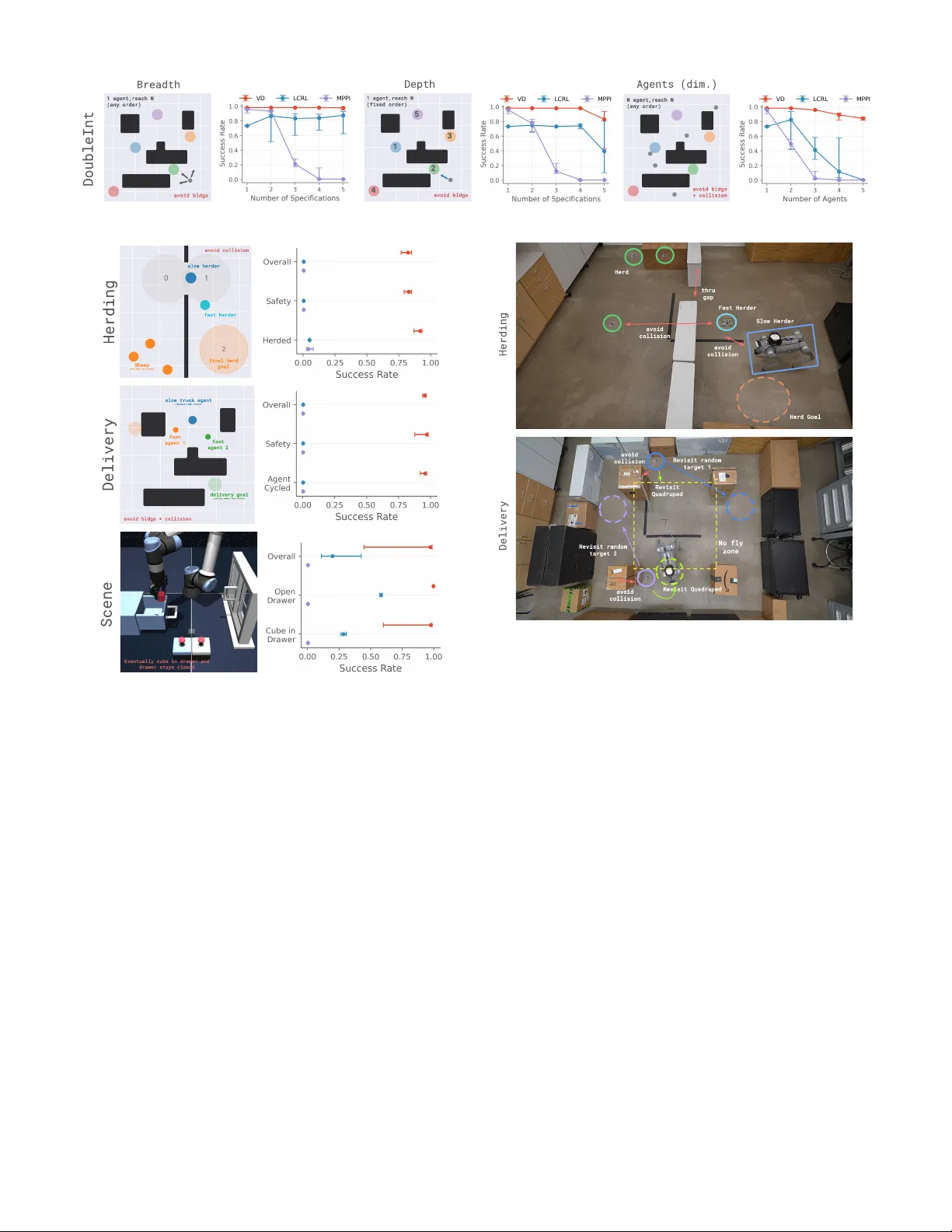

Real-world tasks involve nuanced combinations of goal and safety specifications. In high dimensions, the challenge is exacerbated: formal automata become cumbersome, and combining sparse rewards tends to require laborious tuning. In this wor…

Authors: William Sharpless, Oswin So, Dylan Hirsch