Less is More: Convergence Benefits of Fewer Data Weight Updates over Longer Horizon

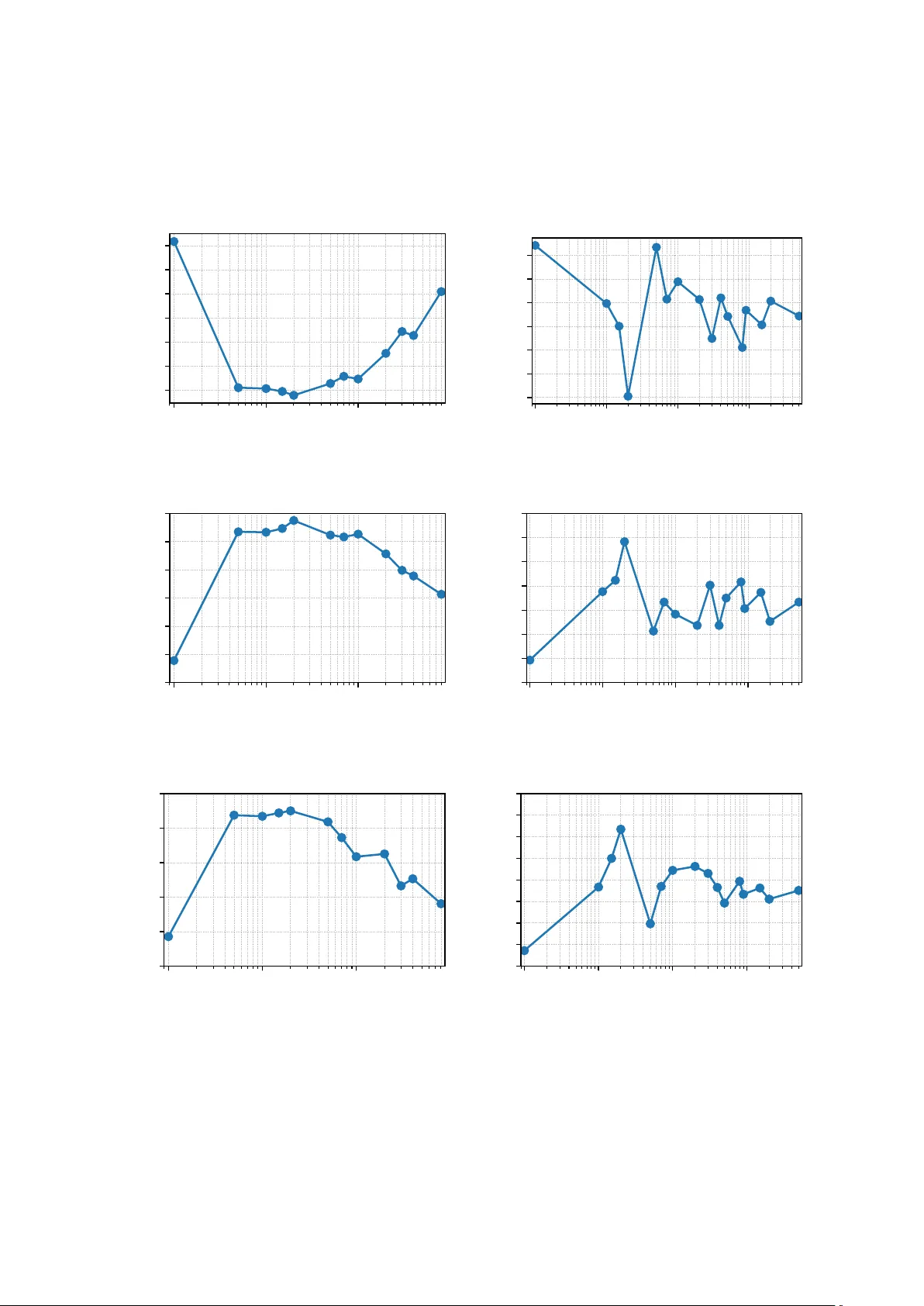

Data mixing, the strategic reweighting of training domains, is a critical component in training robust machine learning models. This problem is naturally formulated as a bilevel optimization task, where the outer loop optimizes domain weights to mini…

Authors: Rudrajit Das, Neel Patel, Meisam Razaviyayn
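The bilevel structure described in the abstract can be illustrated with a small toy sketch. Everything below is an assumption for illustration and is not the authors' algorithm. The hypothetical helper `bilevel_data_mixing` assigns each domain a quadratic loss (θ - c_j)². It assumes the outer objective is a quadratic validation loss (θ - v)², because the abstract is truncated before naming it. The inner loop runs `horizon` gradient-descent steps on the weighted mixture. The hypergradient with respect to the weights is obtained by forward-mode differentiation through those unrolled steps. The weights are then updated by exponentiated gradient (mirror descent) so they stay on the probability simplex.

```python
import math


def bilevel_data_mixing(centers, target, num_outer, horizon, lr=0.1, meta_lr=0.5):
    """Toy bilevel data mixing (hypothetical helper, not the paper's method).

    Assumptions: domain j has loss (theta - centers[j])**2 and the outer
    objective is the validation loss (theta - target)**2.
    """
    k = len(centers)
    w = [1.0 / k] * k  # domain weights, start uniform on the simplex
    theta = 0.0        # scalar model parameter, carried across outer rounds
    for _ in range(num_outer):
        # Forward-mode sensitivities d theta / d w_j, reset each round because
        # theta at the start of the round is treated as a constant.
        dtheta = [0.0] * k
        for _ in range(horizon):
            grads = [2.0 * (theta - c) for c in centers]  # per-domain gradients
            total_w = sum(w)
            # Differentiate theta_{t+1} = theta_t - lr * sum_i w_i * grads_i
            # with respect to each w_j, using theta_t before the step.
            dtheta = [
                d - lr * (grads[j] + total_w * 2.0 * d)
                for j, d in enumerate(dtheta)
            ]
            theta -= lr * sum(wi * g for wi, g in zip(w, grads))
        # Chain rule: d val_loss / d w_j = 2 (theta - target) * d theta / d w_j.
        hypergrad = [2.0 * (theta - target) * d for d in dtheta]
        # Exponentiated-gradient (mirror descent) step keeps w on the simplex.
        w = [wi * math.exp(-meta_lr * g) for wi, g in zip(w, hypergrad)]
        s = sum(w)
        w = [wi / s for wi in w]
    return w, theta


if __name__ == "__main__":
    weights, theta = bilevel_data_mixing([0.0, 2.0], 1.5, num_outer=20, horizon=10)
    print(weights, theta)
```

In this toy, `horizon` sets how many inner model updates occur between consecutive weight updates, and `num_outer` sets how many weight updates are made. Those are the two quantities the title contrasts. The sketch only shows where that trade-off lives in a bilevel loop and does not reproduce the paper's convergence analysis.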