Quantum Hamiltonian Learning using Time-Resolved Measurement Data and its Application to Gene Regulatory Network Inference

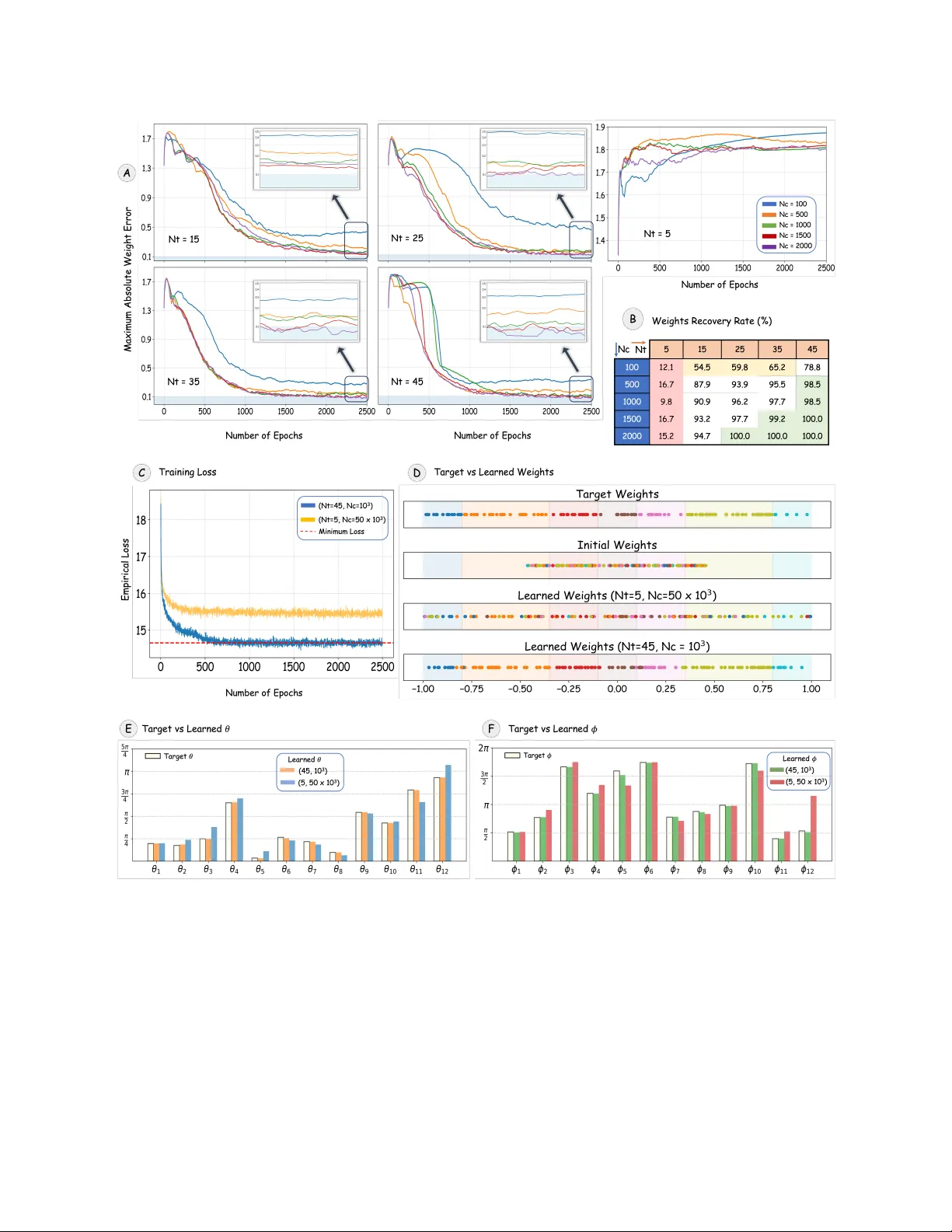

We present a new Hamiltonian-learning framework that uses time-resolved measurement data from a fixed local informationally complete positive operator-valued measure (IC-POVM), and we apply it to inferring gene regulatory networks. We introduce the quantum Hamiltonian-based gene-expression model (QHGM), in …

Authors: Mohammad Aamir Sohail, Ranga R. Sudharshan, S. S
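To make "time-resolved measurement data from a fixed local IC-POVM" concrete, here is a minimal toy sketch. It is not the QHGM or the authors' learning procedure. It assumes a single qubit, uses the tetrahedral SIC-POVM as one standard choice of IC-POVM, and takes a hypothetical Hamiltonian H = (ω/2)σ_z. The helper names (`povm_elements`, `evolve`, `bloch_from_probs`, `estimate_omega`) and the phase-fitting estimator are illustrative assumptions. The sketch simulates outcome probabilities at several times, inverts each set of probabilities to a Bloch vector, and fits ω from the precession of that vector.

```python
import numpy as np

# Pauli matrices and identity
I2 = np.eye(2, dtype=complex)
SX = np.array([[0, 1], [1, 0]], dtype=complex)
SY = np.array([[0, -1j], [1j, 0]], dtype=complex)
SZ = np.array([[1, 0], [0, -1]], dtype=complex)

# Bloch vectors of the tetrahedral SIC-POVM (assumed choice of local IC-POVM)
TETRA = np.array([
    [0.0, 0.0, 1.0],
    [2 * np.sqrt(2) / 3, 0.0, -1 / 3],
    [-np.sqrt(2) / 3, np.sqrt(2 / 3), -1 / 3],
    [-np.sqrt(2) / 3, -np.sqrt(2 / 3), -1 / 3],
])

def povm_elements():
    """E_k = (I + n_k . sigma) / 4; the four effects sum to the identity."""
    return [(I2 + n[0] * SX + n[1] * SY + n[2] * SZ) / 4 for n in TETRA]

def evolve(rho0, H, t):
    """rho(t) = U rho0 U^dagger with U = exp(-i H t), via eigendecomposition of H."""
    evals, evecs = np.linalg.eigh(H)
    U = evecs @ np.diag(np.exp(-1j * evals * t)) @ evecs.conj().T
    return U @ rho0 @ U.conj().T

def outcome_probs(rho, elems):
    """Born rule: p_k = Tr(rho E_k)."""
    return np.array([np.trace(rho @ E).real for E in elems])

def bloch_from_probs(p):
    """Linear inversion for the tetrahedral SIC: r = 3 * sum_k p_k n_k."""
    return 3 * p @ TETRA

def estimate_omega(times, omega_true, shots=None, seed=0):
    """Hypothetical toy learner: recover omega in H = (omega/2) sigma_z."""
    rng = np.random.default_rng(seed)
    H = 0.5 * omega_true * SZ
    rho0 = 0.5 * (I2 + SX)  # |+><+|, Bloch vector (1, 0, 0)
    elems = povm_elements()
    phases = []
    for t in times:
        p = outcome_probs(evolve(rho0, H, t), elems)
        if shots is not None:  # optional finite-shot (multinomial) sampling noise
            p = rng.multinomial(shots, p / p.sum()) / shots
        r = bloch_from_probs(p)
        phases.append(np.arctan2(r[1], r[0]))  # azimuth advances as omega * t
    slope, _ = np.polyfit(times, np.unwrap(phases), 1)
    return slope
```

For example, `estimate_omega(np.linspace(0.0, 2.0, 41), 1.3)` recovers ω = 1.3 from noiseless probabilities, and passing `shots=2000` gives a noisy estimate close to it. The fixed POVM is never changed between time points. All information about H comes from how the outcome statistics vary with t, which is the kind of data the abstract describes. A multi-qubit or many-parameter Hamiltonian would need a different estimator.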