Making Conformal Predictors Robust in Healthcare Settings: a Case Study on EEG Classification

Quantifying uncertainty in clinical predictions is critical for high-stakes diagnosis tasks. Conformal prediction offers a principled approach by providing prediction sets with theoretical coverage guarantees. However, in practice, patient distributi…

Authors: Arjun Chatterjee, Sayeed Sajjad Razin, John Wu, Siddhartha Laghuvarapu, Jathurshan Pradeepkumar, Jimeng Sun

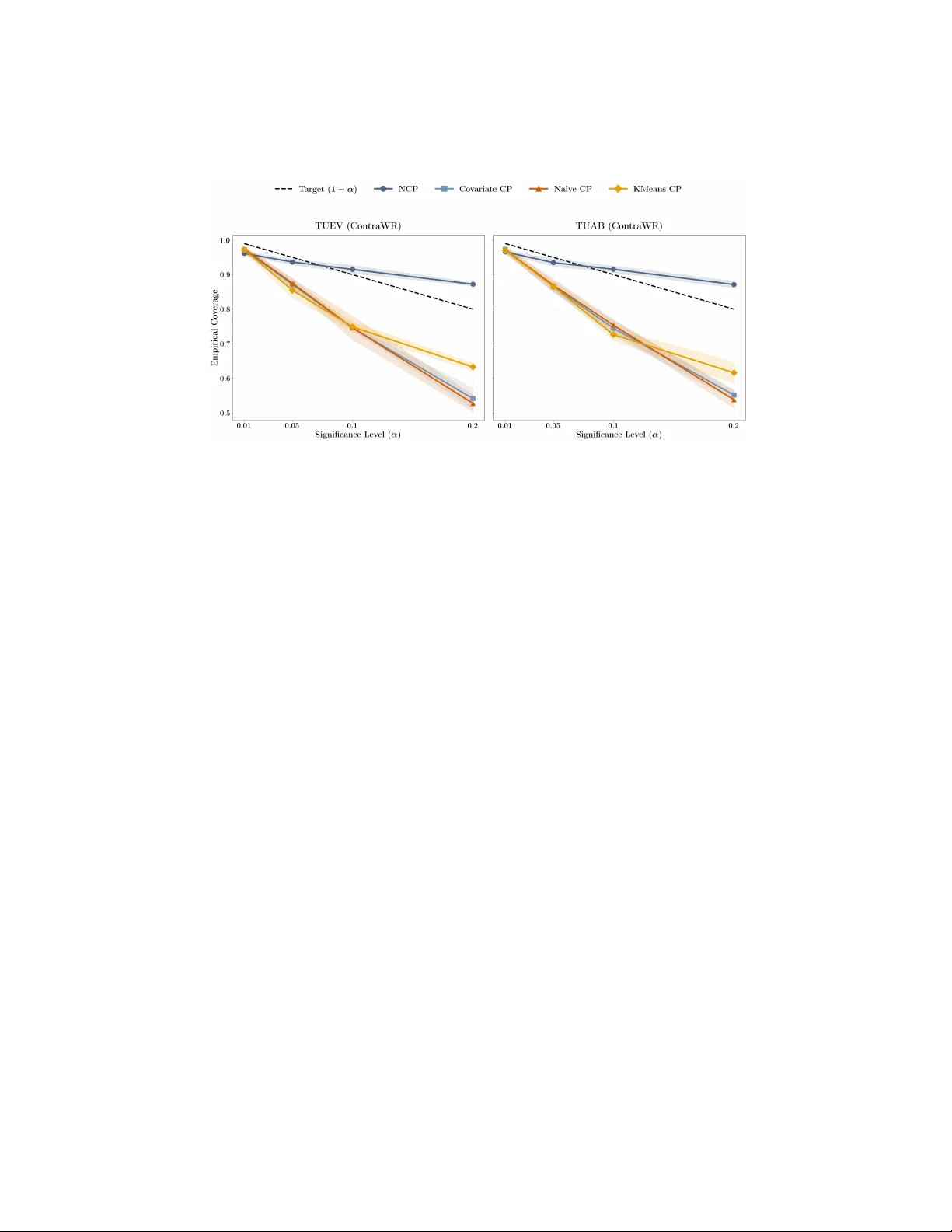

Arjun Chatterjee 1,2,*, Sayeed Sajjad Razin 2,3, John Wu 1,2,*, Siddhartha Laghuvarapu 1,2, Jathurshan Pradeepkumar 1,2, and Jimeng Sun 1

1 University of Illinois Urbana-Champaign, Urbana, IL 61801, USA, {arjunc4,johnwu3}@illinois.edu

2 PyHealth

3 Bangladesh University of Engineering and Technology

Abstract. Quantifying uncertainty in clinical predictions is critical for high-stakes diagnosis tasks. Conformal prediction offers a principled approach by providing prediction sets with theoretical coverage guarantees. However, in practice, patient distribution shifts violate the i.i.d. assumptions underlying standard conformal methods, leading to poor coverage in healthcare settings. In this work, we evaluate several conformal prediction approaches on EEG seizure classification, a task with known distribution shift challenges and label uncertainty. We demonstrate that personalized calibration strategies can improve coverage by over 20 percentage points while maintaining comparable prediction set sizes. Our implementation is available via PyHealth, an open-source healthcare AI framework: https://github.com/sunlabuiuc/PyHealth.

Keywords: Conformal Prediction · Distribution Shift · EEG Signal Classification · Uncertainty Quantification · Robustness

1 Introduction

For many healthcare tasks, uncertainty is often intrinsic to the problem itself. Given a set of clinical observations in a patient profile, there are often many plausible outcomes and explanations. This is exemplified by EEG classification tasks, where labels are typically derived from group voting [2][7]. Here, expert neurologists read EEG snippets and attempt to identify EEG types based on pre-existing domain knowledge.
However, because of measurement noise and patient-to-patient variance, such human annotations are not free from noise. As a consequence, it is not uncommon to see experts disagree on waveform readings within annotated EEG datasets [2][10], as represented in Figure 1. Single-class predictions hide this uncertainty and can be overconfident or misleading when experts would disagree. As such, it is crucial for machine learning models to properly account for this intrinsic level of uncertainty and noise.

Fig. 1. EEG Classification Challenges. EEG tasks often violate key aspects of a typical machine learning pipeline. (a) Their annotation process consistently leaves room for uncertainty, which is then passed on to the models. (b) Training, validation, and test distributions are not independent and identically distributed (i.i.d.) because of differences in patient distribution [16]. Both issues make EEG classification a very challenging machine learning problem.

Conformal prediction [8] provides a direct approach to resolving this problem. In conformal prediction [1], given samples of high uncertainty, prediction sets are produced rather than typical single point predictions. However, conformal prediction assumes independent and identically distributed (i.i.d.) calibration and test distributions, a scenario that many healthcare applications [16] fail to satisfy. In EEG classification, distribution shifts are highly prevalent, whether due to patient differences, measurement environments, or other common causes [13]. In these cases, we show that conventional conformal prediction techniques undercover, falling short of the nominal coverage level. EEG classification is typically framed as supervised classification (binary or multiclass) over fixed-length segments. Models are trained to predict event type or clinical label from raw or preprocessed signals.
The i.i.d. assumption fails to hold when the calibration and test sets differ in patient cohort or recording conditions.

Nonetheless, building robust conformal predictors is not only required for the safe deployment of these models but is also the only way to make such models practical, given that their training distributions are intrinsically filled with uncertainty. As such, we seek a conformal prediction approach that is robust to patient distribution shifts.

In our work, we explore a variety of conformal prediction approaches for improving the coverage of our models. We use a base classifier trained in a typical setting, ContraWR [17], on two EEG datasets, TUAB [7] and TUEV [10]. Our contributions are as follows: (1) with a neighborhood conformal prediction (NCP) approach, we can improve coverage by more than 20 percentage points; (2) under covariate shift, we provide theoretical results that demonstrate that NCP yields provably better coverage; (3) we show that prediction set size remains relatively constant; and (4) we make our approach directly modular through PyHealth, enabling anyone to replicate our approach in their own clinical predictive modeling setups.

2 Methodology

2.1 Conformal Prediction Framework

Conformal prediction (CP) is a distribution-free framework for constructing prediction sets (or intervals) that quantify uncertainty around a point prediction by calibrating a model's errors on a held-out calibration set [14]. Given a nonconformity score (a measure of how "atypical" a candidate label is for an input) and assuming exchangeability of the calibration and test examples (e.g., i.i.d. data), CP outputs a prediction set $\hat{C}(X)$ such that the marginal coverage guarantee holds:

$$\Pr\big(Y \in \hat{C}(X)\big) \ge 1 - \alpha,$$

for a user-chosen miscoverage level $\alpha \in (0, 1)$.
This guarantee is model-agnostic and holds for any underlying predictor, provided the exchangeability assumption is satisfied; however, it is a marginal guarantee (averaged over the joint distribution of $(X, Y)$) rather than a conditional guarantee for each individual $X$.

In practice, we often instantiate CP via split conformal prediction: the data are partitioned into a training set (used to fit a predictor) and a calibration set (used only to compute nonconformity scores). At test time, a prediction set is formed by comparing candidate labels to a single global threshold given by the $(1 - \alpha)$ quantile of the calibration scores, yielding finite-sample marginal coverage under exchangeability.

2.2 Neighborhood Conformal Prediction

In medical settings, patient-to-patient variability induces strong heterogeneity in the conditional distribution of outcomes given covariates, so global (population-average) calibration can be overly conservative for some subgroups and insufficiently informative for others. Neighborhood conformal prediction (NCP) addresses this issue by localizing calibration to a patient's neighborhood in representation space, aiming for neighborhood-level reliability, i.e., prediction sets that are calibrated for patients similar to the current patient, which is often the clinically relevant notion of safety [3].

Algorithmically, NCP constructs adaptive prediction sets by using a test-point-specific calibration distribution formed from nearby calibration examples in the representation space of a (pre-trained) classifier [3]. Let $\mathcal{D}_{\mathrm{cal}} = \{(x_i, y_i)\}_{i=1}^{n}$ be a calibration set, let $\Phi(\cdot)$ denote a feature extractor (e.g., the penultimate-layer embedding of a deep network), and let $F_\theta$ be a predictor that outputs class probabilities $p_\theta(y \mid x)$.
Define a nonconformity score (one common choice for classification) as

$$V(x, y) := 1 - p_\theta(y \mid x),$$

and compute calibration scores $V_i := V(x_i, y_i)$ for all $(x_i, y_i) \in \mathcal{D}_{\mathrm{cal}}$. For a test input $x$, NCP constructs a weighted empirical distribution over calibration scores by assigning a weight $w_i(x) \ge 0$ to each calibration point based on its similarity to $x$ (e.g., via $k$-nearest neighbors in $\Phi$-space or a kernel on $\|\Phi(x) - \Phi(x_i)\|$), and normalizing:

$$\tilde{w}_i(x) := \frac{w_i(x)}{\sum_{j=1}^{n} w_j(x)}.$$

Then, for a target miscoverage level $\alpha \in (0, 1)$, define the weighted $(1 - \alpha)$ quantile

$$\hat{q}_{1-\alpha}(x) := \inf\Big\{ q : \sum_{i=1}^{n} \tilde{w}_i(x)\, \mathbf{1}\{V_i \le q\} \ge 1 - \alpha \Big\}.$$

Finally, the NCP prediction set is

$$\hat{C}_{\mathrm{NCP}}(x) := \{\, y \in \mathcal{Y} : V(x, y) \le \hat{q}_{1-\alpha}(x) \,\}.$$

Compared to split conformal prediction (which uses a single global quantile), NCP replaces the global calibration distribution with a test-point-specific neighborhood distribution, producing locally adaptive set sizes while retaining the standard (marginal) conformal coverage guarantee under exchangeability [3].

2.3 Conformal Prediction under Covariate Shift

In medical applications, patient populations can differ across hospitals, devices, and time periods, which changes the distribution of observed covariates (e.g., demographics, comorbidities, acquisition conditions, or other associated features). If the outcome mechanism remains stable once we condition on the measured covariates, then the conditional distribution of labels given features remains invariant even though the feature distribution shifts: this is precisely the covariate shift setting. Formally, covariate shift assumes

$$P_{\mathrm{cal}}(X) \ne P_{\mathrm{test}}(X), \qquad P_{\mathrm{cal}}(Y \mid X) = P_{\mathrm{test}}(Y \mid X).$$

Under covariate shift, standard conformal prediction calibrated on $P_{\mathrm{cal}}$ need not achieve the nominal coverage on $P_{\mathrm{test}}$.
A principled correction is to use weighted conformal prediction with importance weights proportional to the density ratio $w(x) = p_{\mathrm{test}}(x) / p_{\mathrm{cal}}(x)$ [12]. However, estimating this ratio is not trivial in practice, which we demonstrate in our experiments.

Neighborhood CP and reduced coverage error under shift. Neighborhood-based methods aim to reduce sensitivity to covariate shift by producing prediction sets that are closer to conditionally calibrated, which reduces the alignment between the shift in $X$ and local under/over-coverage. Below, we formalize this intuition via a covariate-shift decomposition of the test-domain coverage error and show that reducing variability of the conditional coverage function (as NCP is designed to do) yields a smaller coverage error bound than standard split conformal prediction.

Theorem 1 (Coverage error decomposition under covariate shift). Let $P_{\mathrm{cal}}$ and $P_{\mathrm{test}}$ satisfy the covariate shift assumption

$$P_{\mathrm{cal}}(Y \mid X) = P_{\mathrm{test}}(Y \mid X),$$

and let $w(X) = p_{\mathrm{test}}(X) / p_{\mathrm{cal}}(X)$ be the density ratio (assume $P_{\mathrm{test}} \ll P_{\mathrm{cal}}$). For any prediction set function $\hat{C}(\cdot)$, define its conditional coverage function and local slack under $P_{\mathrm{cal}}$ as

$$c(x) := P_{\mathrm{cal}}\big(Y \in \hat{C}(X) \mid X = x\big), \qquad s(x) := c(x) - (1 - \alpha).$$

Then the test-domain marginal coverage error satisfies

$$P_{\mathrm{test}}\big(Y \in \hat{C}(X)\big) - (1 - \alpha) = \mathrm{Cov}_{P_{\mathrm{cal}}}\big(w(X),\, s(X)\big).$$

In particular, if NCP achieves smaller slack variability than standard split conformal prediction (SCP), e.g. $\mathrm{Var}_{P_{\mathrm{cal}}}(s_{\mathrm{NCP}}(X)) \le \mathrm{Var}_{P_{\mathrm{cal}}}(s_{\mathrm{SCP}}(X))$, then the bound on its worst-case coverage error (over shifts with fixed $\mathrm{Var}_{P_{\mathrm{cal}}}(w(X))$) is no larger, by Cauchy–Schwarz:

$$\big|\mathrm{Cov}(w, s_{\mathrm{NCP}})\big| \le \sqrt{\mathrm{Var}(w)\,\mathrm{Var}(s_{\mathrm{NCP}})} \le \sqrt{\mathrm{Var}(w)\,\mathrm{Var}(s_{\mathrm{SCP}})}.$$
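As an illustration of the constructions in Sections 2.1 and 2.2, the sketch below contrasts the single global threshold of split conformal prediction with the test-point-specific weighted quantile of NCP. It is a minimal NumPy sketch under stated assumptions, not the PyHealth implementation: the function names are hypothetical, the neighborhood weights are taken to be uniform over the k nearest calibration embeddings (one of the options mentioned in Section 2.2), and the global threshold uses the common finite-sample level ceil((n + 1)(1 - alpha)) / n.

```python
import numpy as np


def split_cp_threshold(cal_scores, alpha):
    """Global threshold for split CP (hypothetical helper).

    Assumption: uses the common finite-sample rank ceil((n + 1) * (1 - alpha)).
    """
    n = len(cal_scores)
    k = int(np.ceil((n + 1) * (1 - alpha)))
    if k > n:
        # Too few calibration points for this alpha: every label is kept.
        return np.inf
    return np.sort(cal_scores)[k - 1]


def weighted_quantile(scores, weights, alpha):
    """Smallest q with sum_i w~_i * 1{V_i <= q} >= 1 - alpha."""
    order = np.argsort(scores)
    sorted_scores = scores[order]
    norm_weights = weights[order] / weights.sum()
    cdf = np.cumsum(norm_weights)
    # Small tolerance guards against floating-point round-off in the cumsum.
    idx = np.searchsorted(cdf, 1 - alpha - 1e-12, side="left")
    return sorted_scores[min(idx, len(sorted_scores) - 1)]


def ncp_threshold(phi_x, cal_phi, cal_scores, alpha, k=50):
    """Test-point-specific threshold from the k nearest calibration embeddings.

    Assumption: uniform weights on the k nearest neighbors, zero elsewhere.
    """
    dists = np.linalg.norm(cal_phi - phi_x, axis=1)
    neighbors = np.argsort(dists)[:k]
    weights = np.zeros(len(cal_scores))
    weights[neighbors] = 1.0
    return weighted_quantile(cal_scores, weights, alpha)


def prediction_set(probs_x, threshold):
    """Labels y whose score V(x, y) = 1 - p(y | x) is at most the threshold."""
    return np.flatnonzero(1.0 - probs_x <= threshold)


# Toy data: two well-separated groups with different score levels.
cal_phi = np.array([[0.0], [0.1], [5.0], [5.1]])
cal_scores = np.array([0.1, 0.2, 0.8, 0.9])
global_q = split_cp_threshold(cal_scores, alpha=0.5)
local_q_low = ncp_threshold(np.array([0.05]), cal_phi, cal_scores, alpha=0.5, k=2)
local_q_high = ncp_threshold(np.array([5.05]), cal_phi, cal_scores, alpha=0.5, k=2)
```

In this toy setup the local thresholds differ between the two groups while the global threshold is shared, which is the behavior that lets NCP adapt set sizes to a test point's neighborhood.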
2.4 Theory: Covariate Shift and Neighborhood Conformal Prediction

This section provides a proof sketch for Theorem 1 (coverage error decomposition under covariate shift) and explains why neighborhood conformal prediction can be more stable than standard split conformal prediction under shifts in the covariate distribution.

2.5 Setup

Let $P_{\mathrm{cal}}$ denote the calibration (source) distribution over $(X, Y)$ and $P_{\mathrm{test}}$ denote the test (target) distribution. We assume covariate shift: $P_{\mathrm{cal}}(Y \mid X) = P_{\mathrm{test}}(Y \mid X)$ but $P_{\mathrm{cal}}(X) \ne P_{\mathrm{test}}(X)$. Assume absolute continuity $P_{\mathrm{test}} \ll P_{\mathrm{cal}}$ and define the density ratio (importance weight)

$$w(x) := \frac{p_{\mathrm{test}}(x)}{p_{\mathrm{cal}}(x)}.$$

Let $\hat{C}(\cdot)$ be any (possibly data-dependent) prediction set function constructed using calibration data. Define the conditional coverage function (with respect to $P_{\mathrm{cal}}$)

$$c(x) := P_{\mathrm{cal}}\big(Y \in \hat{C}(X) \mid X = x\big),$$

and the local calibration slack

$$s(x) := c(x) - (1 - \alpha).$$

Proof of the covariate-shift decomposition. By the law of total probability under $P_{\mathrm{test}}$, and because the conditional distribution of $Y$ given $X$ is the same under both distributions (so the conditional coverage under $P_{\mathrm{test}}$ is also $c(x)$),

$$P_{\mathrm{test}}\big(Y \in \hat{C}(X)\big) = \mathbb{E}_{P_{\mathrm{test}}}[c(X)].$$

Using the density ratio to rewrite the expectation under $P_{\mathrm{cal}}$ gives

$$\mathbb{E}_{P_{\mathrm{test}}}[c(X)] = \mathbb{E}_{P_{\mathrm{cal}}}[w(X)\, c(X)].$$

Substituting $c(X) = (1 - \alpha) + s(X)$ yields

$$\mathbb{E}_{P_{\mathrm{cal}}}[w(X)\, c(X)] = (1 - \alpha)\, \mathbb{E}_{P_{\mathrm{cal}}}[w(X)] + \mathbb{E}_{P_{\mathrm{cal}}}[w(X)\, s(X)].$$

Since $\mathbb{E}_{P_{\mathrm{cal}}}[w(X)] = 1$, we obtain

$$P_{\mathrm{test}}\big(Y \in \hat{C}(X)\big) - (1 - \alpha) = \mathbb{E}_{P_{\mathrm{cal}}}[w(X)\, s(X)].$$

Finally, using the identity $\mathbb{E}[WS] = \mathrm{Cov}(W, S) + \mathbb{E}[W]\, \mathbb{E}[S]$ gives

$$\mathbb{E}_{P_{\mathrm{cal}}}[w(X)\, s(X)] = \mathrm{Cov}_{P_{\mathrm{cal}}}\big(w(X),\, s(X)\big) + \mathbb{E}_{P_{\mathrm{cal}}}[w(X)]\, \mathbb{E}_{P_{\mathrm{cal}}}[s(X)].$$

Under (approximate) marginal validity on the calibration distribution, $\mathbb{E}_{P_{\mathrm{cal}}}[s(X)] \approx 0$, yielding the decomposition in Theorem 1.
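The algebra in this proof can be checked numerically on a small discrete example. The sketch below uses made-up values (three covariate regions with hypothetical calibration and test probabilities and conditional coverages, none of which come from the experiments) and verifies the exact identity from the final step, in which the test-domain coverage error equals the covariance term plus the mean calibration slack. It also checks the Cauchy–Schwarz bound on the covariance term.

```python
import numpy as np

# Hypothetical three-region covariate space (illustrative values only).
p_cal = np.array([0.5, 0.3, 0.2])    # P_cal(X = x)
p_test = np.array([0.2, 0.3, 0.5])   # P_test(X = x)
c = np.array([0.95, 0.90, 0.80])     # conditional coverage c(x), shared under covariate shift
alpha = 0.1

w = p_test / p_cal                   # density ratio w(x)
s = c - (1 - alpha)                  # local slack s(x)


def e_cal(values):
    """Expectation under P_cal for a function given by its values per region."""
    return float(np.sum(p_cal * values))


# Left-hand side: test-domain marginal coverage error.
test_coverage_error = float(np.sum(p_test * c)) - (1 - alpha)

# Right-hand side: covariance term plus mean slack on the calibration distribution.
cov_ws = e_cal(w * s) - e_cal(w) * e_cal(s)
mean_slack = e_cal(s)

# Cauchy-Schwarz bound on the covariance term.
var_w = e_cal(w ** 2) - e_cal(w) ** 2
var_s = e_cal(s ** 2) - e_cal(s) ** 2
cs_bound = float(np.sqrt(var_w * var_s))

print(f"coverage error      : {test_coverage_error:+.4f}")
print(f"Cov(w, s) + E_cal[s]: {cov_ws + mean_slack:+.4f}")
print(f"|Cov(w, s)| vs bound: {abs(cov_ws):.4f} <= {cs_bound:.4f}")
```

Here the test distribution upweights the under-covered region, so the covariance is negative and the predictor undercovers on the test domain even though it is slightly over-covered on average under the calibration distribution.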
Why neighborhood calibration reduces coverage error. The decomposition shows that the test-domain coverage error is driven by the alignment between the covariate shift, quantified by $w(X)$, and the local slack $s(X)$. Standard split conformal prediction uses a single global threshold and may yield highly variable $s(X)$ across the feature space, so shifts that upweight under-covered regions can induce large negative covariance. Neighborhood conformal prediction instead estimates a local score quantile using nearby calibration examples. This local adaptation aims to reduce the magnitude and variability of $s(X)$ across $x$, which in turn reduces $\mathrm{Cov}(w, s)$; by Cauchy–Schwarz,

$$|\mathrm{Cov}(w, s)| \le \sqrt{\mathrm{Var}(w)\,\mathrm{Var}(s)},$$

so shrinking $\mathrm{Var}(s)$ yields a tighter bound on worst-case test-domain coverage error for a given shift magnitude.

3 Experiments and Results

Datasets. We evaluated our method on two benchmark tasks derived from the TUH EEG Corpus [7]: the TUEV [4] and TUAB [6] datasets. The TUEV dataset targets EEG event classification formulated as a multiclass classification problem, with clinical EEG recordings annotated across six event types: spike-and-sharp waves (SPSW), generalized periodic epileptiform discharges (GPED), periodic lateralized epileptiform discharges (PLED), eye movements (EYEM), artifacts (ARTF), and background activity (BCKG). In contrast, TUAB is designed for abnormal EEG detection, a binary classification task of normal versus abnormal brain activity. TUEV comprises 113,353 labeled segments after task formulation, while TUAB comprises 409,455. For both datasets, we globally split the combined data into 60%, 10%, 15%, and 15% for the training, validation, calibration, and test sets, respectively. For TUEV, this yielded 17,003 samples in each of the calibration and test sets, while for TUAB, this yielded 61,419 samples in each of the calibration and test sets.

Fig. 2. Empirical Coverage. The dotted line represents the target coverage $1 - \alpha$. Personalized approaches (K-means CP and NCP) outperform their non-personalized counterparts (Naive CP and Covariate CP). While higher coverage is generally preferable, achieving target coverage is ideal. However, no approach consistently attains target coverage across all $\alpha$ values; only NCP achieves target coverage at higher $\alpha$.

Models. We utilize ContraWR [17] for our problem. ContraWR is a fully CNN-based architecture that initially converts biosignals into multi-channel spectrogram representations and then processes them using a ResNet-based 2D CNN. We train ContraWR from scratch and evaluate it across five different random seeds with our selected conformal approaches.

Conformal Approaches. We explore four conformal prediction approaches in our experiments. Naive CP, the traditional split conformal prediction (CP) method [8][1], calibrates conformal predictors using a calibration set assumed to be i.i.d. with the training and test distributions. Covariate CP [11] addresses covariate shift between calibration and test sets by weighting each conformity score with a likelihood ratio. In practice, this weight adjustment is estimated through kernel density estimation [5]. K-means CP takes a naive approach to personalized conformal prediction by deriving each test sample's conformal threshold from only the calibration samples in its nearest cluster, rather than using the entire calibration set. NCP (Neighborhood Conformal Prediction) [3] extends this idea by constructing sample-specific calibration sets through k-nearest neighbors, then weighting each neighbor's conformity score by its relevance during calibration.
Together, these methods span both personalized (K-means CP, NCP) and non-personalized (Naive CP, Covariate CP) calibration strategies.

Fig. 3. Average Prediction Set Sizes. Smaller prediction set sizes indicate better calibration. Personalized approaches achieve superior coverage (Figure 2) while maintaining smaller prediction sets at lower $\alpha$ values. Conversely, at higher $\alpha$ values, personalized methods increase prediction set sizes to improve coverage. Notably, these increases remain modest in magnitude.

Covariate CP does not significantly improve coverage over Naive CP. We find that applying a covariate shift adjustment between calibration and test sets does not improve coverage in this EEG domain. While such adjustments prove effective in other domains [5], the naive application of kernel density estimation fails to improve downstream coverage, since estimating the likelihood ratio is a difficult problem in high dimensions. This suggests that distribution shifts in EEG data are more complex than simple covariate shifts.

Personalized conformal approaches improve empirical coverage over non-personalized approaches. Figure 2 shows that personalized approaches achieve higher coverage than non-personalized baselines, particularly at higher $\alpha$ values where prediction sets are typically smaller. Notably, NCP achieves 25% greater coverage than Naive CP at $\alpha = 0.2$ with only a modest increase in prediction set size (Figure 3).

Empirical coverage remains imperfect. While NCP surpasses the target coverage line at most $\alpha$ values in Figure 2, it falls short at $\alpha = 0.01$, a regime typically associated with high-risk scenarios. Consequently, while substantially more useful than non-personalized methods, NCP cannot yet guarantee $1 - \alpha$ coverage and may not be suitable for deployment in safety-critical applications.
Nonetheless, personalized conformal approaches represent a substantial step forward in making conformal prediction practical for real-world scenarios like EEG classification.

4 Future Work

Several directions warrant further investigation.

Extension to other healthcare domains. We selected EEG classification as our case study due to the unique distribution shift challenges illustrated in Figure 1. Given the prevalence of domain generalization challenges across healthcare tasks [16][15], applying personalized conformal predictors to diverse healthcare domains could illuminate the types of distribution shifts prevalent in healthcare data and whether these approaches generalize broadly.

Leveraging more powerful embedding representations. NCP's performance depends on identifying the most relevant neighbors for calibration. Incorporating more powerful EEG foundation models with specialized pre-training, such as TFM-Tokenizer [9], which better characterizes signal motifs in embedding space, could provide a direct path toward achieving target coverage guarantees.

Enabling broader adoption. We make all implementations easily available through `pip install pyhealth`. By lowering barriers to entry, we hope to incentivize further exploration of conformal approaches in healthcare AI, a domain where prediction guarantees are crucial for safe deployment.

Disclosure of Interests. There are no competing interests.

References

1. Angelopoulos, A.N., Bates, S.: A gentle introduction to conformal prediction and distribution-free uncertainty quantification (2022)
2. Ge, W., Jing, J., An, S., Herlopian, A., Ng, M., Struck, A.F., Appavu, B., Johnson, E.L., Osman, G., Haider, H.A., et al.: Deep active learning for interictal ictal injury continuum EEG patterns. Journal of Neuroscience Methods 351, 108966 (2021)
3. Ghosh, S., Belkhouja, T., Yan, Y., Doppa, J.R.: Improving uncertainty quantification of deep classifiers via neighborhood conformal prediction: Novel algorithm and theoretical analysis (2023)
4. Harati, A., Golmohammadi, M., Lopez, S., Obeid, I., Picone, J.: Improved EEG event classification using differential energy. In: 2015 IEEE Signal Processing in Medicine and Biology Symposium (SPMB). pp. 1–4. IEEE (2015)
5. Laghuvarapu, S., Lin, Z., Sun, J.: CoDrug: Conformal drug property prediction with density estimation under covariate shift. Advances in Neural Information Processing Systems 36, 37728–37747 (2023)
6. Lopez, S., Suarez, G., Jungreis, D., Obeid, I., Picone, J.: Automated identification of abnormal adult EEGs. In: 2015 IEEE Signal Processing in Medicine and Biology Symposium (SPMB). pp. 1–5. IEEE (2015)
7. Obeid, I., Picone, J.: The Temple University Hospital EEG data corpus. Frontiers in Neuroscience 10, 196 (2016)
8. Papadopoulos, H., Vovk, V., Gammerman, A.: Conformal prediction with neural networks. In: 19th IEEE International Conference on Tools with Artificial Intelligence (ICTAI 2007). vol. 2, pp. 388–395. IEEE (2007)
9. Pradeepkumar, J., Piao, X., Chen, Z., Sun, J.: Tokenizing single-channel EEG with time-frequency motif learning (2026)
10. Shah, V., von Weltin, E., Lopez, S., McHugh, J.R., Veloso, L., Golmohammadi, M., Obeid, I., Picone, J.: The Temple University Hospital seizure detection corpus (2018)
11. Tibshirani, R.J., Barber, R.F., Candes, E.J., Ramdas, A.: Conformal prediction under covariate shift (2020)
12. Tibshirani, R.J., Foygel Barber, R., Candès, E.J., Ramdas, A.: Conformal prediction under covariate shift. In: Advances in Neural Information Processing Systems (NeurIPS) (2019)
13. Tveter, M., Tveitstøl, T., Hatlestad-Hall, C., Hammer, H.L., Haraldsen, I.R.H.: Uncertainty in deep learning for EEG under dataset shifts. Artificial Intelligence in Medicine p. 103374 (2026)
14. Vovk, V., Gammerman, A., Shafer, G.: Algorithmic Learning in a Random World. Springer (2005)
15. Wu, Z., Yao, H., Liebovitz, D., Sun, J.: An iterative self-learning framework for medical domain generalization. In: Thirty-seventh Conference on Neural Information Processing Systems (2023), https://openreview.net/forum?id=PHKkBbuJWM
16. Yang, C., Westover, M.B., Sun, J.: ManyDG: Many-domain generalization for healthcare applications. arXiv preprint arXiv:2301.08834 (2023)
17. Yang, C., Xiao, D., Westover, M.B., Sun, J.: Self-supervised EEG representation learning for automatic sleep staging (2023)
