Scale-PINN: Learning Efficient Physics-Informed Neural Networks Through Sequential Correction

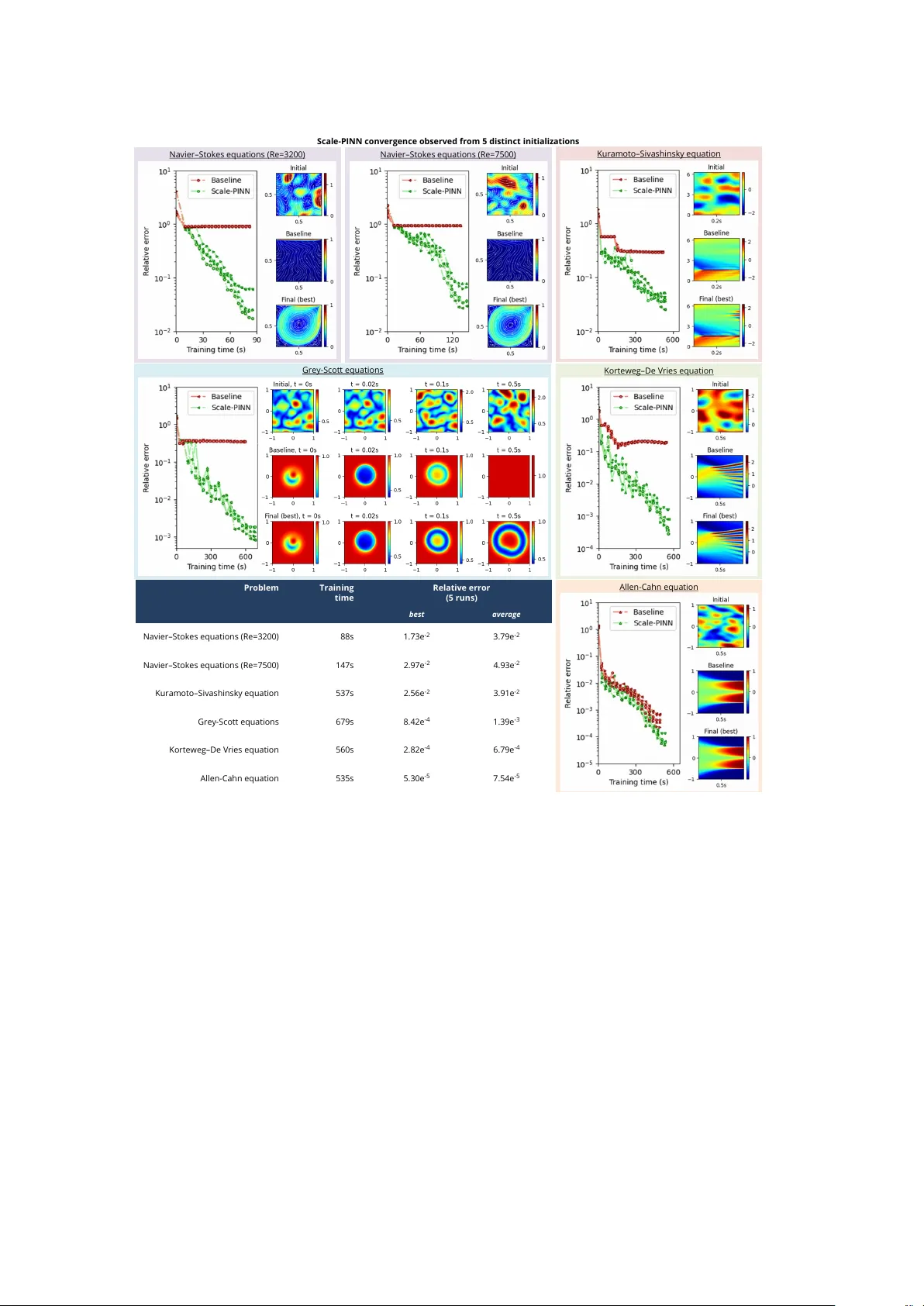

Physics-informed neural networks (PINNs) have emerged as a promising mesh-free paradigm for solving partial differential equations, yet adoption in science and engineering is limited by slow training and modest accuracy relative to modern numerical s…

Authors: Pao-Hsiung Chiu, Jian Cheng Wong, Chin Chun Ooi