A Reinforcement Learning-based Transmission Expansion Framework Considering Strategic Bidding in Electricity Markets

Transmission expansion planning in electricity markets is tightly coupled with the strategic bidding behaviors of generation companies. This paper proposes a Reinforcement Learning (RL)-based co-optimization framework that simultaneously learns trans…

Authors: Tomonari Kanazawa, Hikaru Hoshino, Eiko Furutani

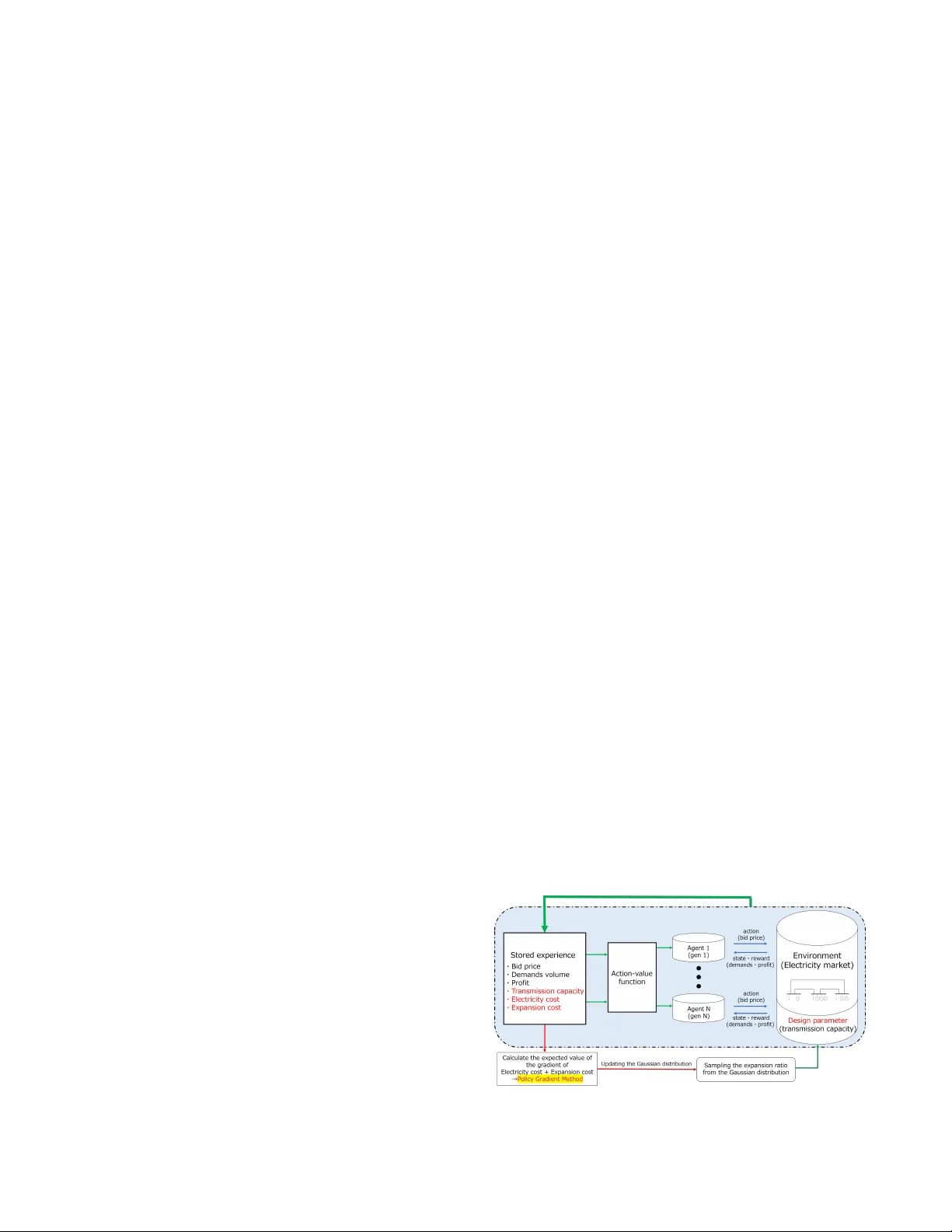

A Reinforcement Learning-based T ransmission Expansion Frame work Considering Strate gic Bidding in Electricity Markets T omonari Kanazaw a, Hikaru Hoshino, Eiko Furutani Department of Electrical Materials and Engineering University of Hyogo Himeji, Japan { er25q006@guh, hoshino@eng, furutani@eng } .u-hyogo.ac.jp Abstract —T ransmission expansion planning in electricity mar - kets is tightly coupled with the strategic bidding behaviors of gen- eration companies. This paper proposes a Reinforcement Lear n- ing (RL)-based co-optimization framework that simultaneously learns transmission investment decisions and generator bidding strategies within a unified training process. Based on a multi- agent RL framew ork for market simulation, the proposed method newly introduces a design policy lay er that jointly optimizes continuous/discrete transmission expansion decisions together with strategic bidding policies. Through iterative interaction between market clearing and in vestment design, the framework effectively captur es their mutual influence and achie ves consistent co-optimization of expansion and bidding decisions. Case studies on the IEEE 30-bus system are provided for proof-of-concept validation of the proposed co-optimization framework. Index T erms —T ransmission Expansion, Electricity Markets, Reinfor cement Learning, Multi-Agent Systems. I . I N T RO D U C T I O N The liberalization of electricity markets has led to increasing decentralization in in vestment and operation decisions among generators and transmission operators [1]. Generation is con- trolled by priv ate Generation Companies (GENCOs), whose decisions are driven by their aim to maximize their profits. T ransmission dev elopment and system (and market) operation are planned by independent entities, System Operators (SOs) and Market Operators (MOs). Under such competitive environ- ments, transmission system operators are required to conduct pr oactive planning [2] that accounts for uncertainties in fu- ture generation and market outcomes. T raditional multi-lev el optimization models hav e addressed transmission expansion planning together with market operation (see, e.g., [3], [4]), yet these models typically assume rational market equilibrium and hav e limited flexibility to fully represent strategic bidding behaviors and price volatility . Recent studies hav e applied deep Reinforcement Learning (RL) to planning of power systems in uncertain and decen- tralized environments. As revie wed in [5], existing studies hav e used RL in two distinct ways: operational planning and expansion planning. The first direction uses RL to simulate operation and market behavior , where agents representing GENCOs learn strategic bidding policies that reproduce real- istic price formation and market equilibria (see, e.g., [6]–[8]). These models capture short-term interactions among agents and enable analysis of market efficienc y , price volatility , and regulatory impacts. The second direction uses RL or related learning techniques for expansion planning and in v estment, such as transmission expansion problems [9]–[11]. In these studies, RL serves as a high-lev el optimizer that explores design or inv estment decisions over long-term horizons, of- ten neglecting operational details into simplified cost/reward functions. Howe v er , these two research lines hav e largely dev eloped in parallel. Operational optimization or market- simulation approaches using RL provide realistic operational dynamics b ut typically assume fixed network configurations, while RL-based expansion planning approaches ignore how strategic bidding behaviors affect inv estment outcomes. In practice, transmission expansion planning is inherently cou- pled with market behavior: network upgrades influence loca- tional prices and dispatch patterns, and con v ersely , bidding strategies and congestion patterns determine the economic value of transmission expansions. T o bridge this research gap, this paper proposes an RL- based co-optimization frame work that incorporates strate gic bidding and transmission expansion within a unified learning process. Based on the multi-agent deep RL framew ork for market simulations as reported in [8], we extend it to jointly optimize transmission in vestment decision as design variables that co-e volv e with agent policies. This type of RL-based co-optimization of system design and its operational policy has been proposed primariry in the robotics community (see, e.g., [12], [13]) and recently applied to energy systems appli- cations [14], [15]. T o the best of our knowledge, this paper is the first to propose an RL-based co-optimization framework in the context of transmission expansion planning. Through case studies on the IEEE 30-bus system, we demonstrate that the proposed framework captures the interaction between market dynamics and in vestment decisions, yielding cost- efficient e xpansion plans that are consistent with strategic market behavior . The rest of this paper is organized as follows. In Sec. II, the formulation of the transmission expansion planning problem studied in this paper is introduced. In Sec. III, the proposed RL-based co-optimization framew ork is presented. In Sec. IV, numerical studies on the IEEE 30-bus system are described followed by conclusion in Sec. V. I I . P RO B L E M F O R M U L ATI O N This study considers a two-le vel decision process that in- cludes short-term market operation and long-term transmission in vestment. The formulation below defines the underlying market model and in vestment v ariables that form the basis of the proposed RL-based co-optimization framew ork. A. Market Model W e consider a day-ahead electricity market consisting of N g generating units (agents) participating through strategic bids. Each agent i aims to maximize its o wn profit by deciding a bid price λ bid i ( t ) at each time step t based on observed states such as local demand and past market outcomes (the detailed modeling of bidding behavior is described in Sec. III-A). Giv en all submitted bids, the market operator performs DC Optimal Power Flo w (DC-OPF) to clear the market under power balance and line-flow constraints at each time step t : min { P i ,θ n } N g X i =1 λ bid i ( t ) P i ( t ) (1) s.t. X i ∈G n P i ( t ) − D n ( t ) = X ( n,m ) ∈L B nm ( θ n ( t ) − θ m ( t )) , ∀ n ∈ N b , (2) | B nm ( θ n ( t ) − θ m ( t )) | ≤ L nm , ∀ ( n, m ) ∈ L , (3) 0 ≤ P i ( t ) ≤ P max i , ∀ i ∈ { 1 , . . . , N g } , (4) where N b , L , and G n are the sets of nodes (buses), lines, and generators connected to b us n ∈ N b , respectiv ely; D n is the demand at b us n ; θ n is the voltage angle at bus n ; B nm is the line susceptance; L nm is the transmission capacity between buses n and m ; and P max i is the maximum generation capacity of unit i . Solving (1)–(4) yields the cleared generation P cleared i ( t ) and nodal prices λ cleared i ( t ) , which reflect network congestion and marginal operating costs. The total operational system cost at time t is then defined as C oper ( t ) = N g X i =1 λ cleared i ( t ) P cleared i ( t ) , (5) representing the total payment to generators in the cleared market. Although a single-block bidding structure is assumed here for simplicity , the formulation can be readily extended to multi-block or piece wise-linear bidding schemes, as com- monly adopted in day-ahead markets. B. T ransmission Expansion In this study , transmission expansion planning is considered for a single target year rather than a multi-stage horizon. That is, inv estment decisions are ev aluated under representativ e operating conditions of a given year , and the resulting network configuration is assumed to remain fixed during the market simulations. Let each transmission line ℓ ∈ L have a base capacity L base ℓ , while in Sec. II-A the pair ( n, m ) refers to the sending and receiving buses of each line. W e consider two types of formulations of a continuous expansion model that adjusts line capacities and a discrete siting model that determines whether to upgrade candidate lines: ∆ L ℓ ∈ R + , (continuous capacity expansion) , (6) z ℓ ∈ { 0 , 1 } , (discrete expansion decision) . (7) The effecti v e line capacity is then expressed as L ℓ = L base ℓ + z ℓ ∆ L ℓ , (8) where either z ℓ or ∆ L ℓ is fixed depending on the case study in Sec. IV. The in vestment cost for transmission expansion is modeled as: C exp = X ℓ ∈L c ℓ z ℓ ∆ L ℓ , (9) where c ℓ is the expantion cost per unit capacity of the line ℓ per year . The ov erall optimization objectiv e is to minimize the total cost including the expansion cost, defined as J = W anu T X t =1 C oper ( t ) + C exp , (10) where W anu stands for the annualization factor depending on the total time steps T . Such formulations are consistent with those employed in open-source capacity expansion models such as PyPSA [16] and other optimization framew orks. I I I . R L - B AS E D C O - O P T I M I Z AT I O N F R A M E W O R K The overvie w of the proposed co-optimization frame work is shown in Fig. 1. The upper part of the figure (highlighted in blue) represents the multi-agent RL en vironment used for market simulation and is described in Sec. III-A. The lower part illustrates the extension that enables simultaneous opti- mization of transmission capacity as explained in Sec. III-B. Fig. 1: Overvie w of the proposed co-optimization framework A. Market Simulation Using Multi-agent RL The competitiv e electricity market is modeled as a partially observable multi-agent en vironment largely based on [8]. Each GENCO, with a single generating unit, acts as an autonomous agent submitting strategic bids to maximize its profit. At each time step t , agent i observes a local state giv en by o i ( t ) = [ P load ( t ) , λ bid i ( t − 1) , ω ⊤ ] ⊤ , (11) where P load ( t ) := P n ∈N b D n ( t ) stands for the total system demand, and λ bid i ( t − 1) is the bid submitted at the pre vious step. The vector ω represents the set of transmission design parameters that remain fixed during each episode, and its role in the co-optimization is detailed in Sec. III-B. Based on the observation o i ( t ) , the agent i selects a normalized action a i ( t ) ∈ (0 , 1) , and the bid price λ bid i ( t ) is calculated as λ bid i ( t ) = λ cost i ( t )( α i a i ( t ) + 1) (12) where λ cost i is the marginal generation cost of unit i , and α i > 0 is a scaling parameter that defines the upper limit of λ bid i . When a i ( t ) = 0 , the agent bids truthfully at its marginal cost λ bid i ( t ) = c i ( t ) , whereas larger a i ( t ) corresponds to higher bids. T o av oid unrealistic temporal fluctuations in bidding, the bid prices are further constrained following [8] as max t λ bid i ( t ) min t λ bid i ( t ) ≤ 1 . 5 , (13) 0 . 9 ≤ λ bid i ( t ) λ bid i ( t − 1) ≤ 1 . 1 . (14) After all agents submit their bids, the market operator clears the market as described in Sec. II-A, and each agent receiv es a rew ard r i calculated as r i ( t ) = ( λ cleared i ( t ) − λ cost i ( t )) P cleared i ( t ) , (15) and updates its policy to maximize the long-term return G i = X t γ t r i ( t ) , (16) where γ ∈ (0 , 1) is the discount factor . T o address the coupled and non-stationary nature of multi- agent bidding, we employ the Multi-Agent Deep Determinis- tic Policy Gradient (MADDPG) algorithm. Each agent pos- sesses an actor network π i ( o i ; θ π i ) that maps local obser- vations to continuous bid actions, and a centralized critic Q i ( o , a ; θ Q i ) that ev aluates the joint action–value function using all agents’ observations o = ( o 1 , . . . , o N g ) and actions a = ( a 1 , . . . , a N g ) . During training, the centralized critic miti- gates non-stationarity , while each actor learns its decentralized policy for execution. After each market clearing, experience tuples ( o t , a t , r t , o t +1 ) are stored in a replay buf fer and used for off-polic y updates of both actor and critic networks. This procedure allows the agents to learn Nash-like bidding equi- libria consistent with network congestion and market clearing. Algorithm 1 Co-optimization of Bidding and Expansion 1: Initialize θ π i , θ Q i and parameter µ 2: f or episode = 1 to N do 3: Sample design ω ∼ p µ ( ω ) 4: Simulate market episode with MADDPG bidding 5: Compute episodic returns G i 6: Update actor and critic parameters θ π i , θ Q i 7: if episode mo d N up = 0 then 8: Update µ ← µ + α ∇ µ E [ G total ] 9: end if 10: end for B. Co-optimization of T ransmission Capacity T o enable co-optimization of transmission expansion, we treat the design vector ω = [ ω 1 , . . . , ω |L| ] ⊤ as a random variable sampled at the beginning of each episode. Its sampling follows a design policy parametrized by µ = { µ ℓ } ℓ ∈L : p µ ( ω ) = Y ℓ ∈L p µ ℓ ( ω ℓ ) , (17) where µ ℓ is the learnable parameter gov erning the distribution of the design variable for line ℓ . In the continuous case, ω ℓ corresponds to the capacity increment ∆ L ℓ , and we use a Gaussian distribution N ( µ ℓ , σ 2 ℓ ) as the design policy: p µ ℓ ( ω ℓ ) = 1 p 2 π σ 2 ℓ exp − ( ω ℓ − µ ℓ ) 2 2 σ 2 ℓ , (18) where σ ℓ is a fixed standard deviation controlling exploration. In the discrete case, ω ℓ corresponds to the binary line-upgrade decision z ℓ ∈ { 0 , 1 } , for which we use a Bernoulli distrib ution: p µ ℓ ( ω ℓ ) = µ ℓ δ ( ω ℓ − 1) + (1 − µ ℓ ) δ ( ω ℓ ) , (19) where the probability density is expressed using Dirac delta function δ ( · ) for notational consistency , e ven though a discrete random v ariable is normally described by a probability mass function. The objecti ve of the design-policy update is to minimize the total cost, and thus the episodic return G total is defined as G total = − W anu T X t =1 C oper ( t ) + C exp . (20) Based on this objective, the design-policy parameter µ is updated using the policy-gradient theorem [17]: ∇ µ E [ G total ] = E ∇ µ ln p µ ( ω )( G total − ¯ G total ) , (21) where ∇ µ is the gradient operator with respect to µ , and ¯ G total is a moving-av erage of G total introduced to reduce the variance of the gradient estimator . The expectation is approximated by the sample a verage over the most recent N up episode, and p µ can be replaced with a probability mass function for discrete v ariables. The ov erall learning flow is summarized in Algorithm 1. I V . N U M E R I C A L E X P E R I M E N T S This section ev aluates the proposed framework through proof-of-concept examples on the IEEE 30-bus system. After presenting the system settings in Sec. IV -A, two types of experiments are conducted. In Sec. IV -B, we compare the proposed method with a con ventional two-stage benchmark using PyPSA [16] under continuous expansion for two selected lines. In Sec. IV -C, we examine a more realistic setup in volving discrete siting decisions ov er multiple candidate lines. A. System Settings The topology of the IEEE 30-bus system is shown in Fig. 2. The generators at bus 2 (Gen 1), bus 23 (Gen 2), and bus 27 (Gen 3) are considered as strategic bidders that maximize their profits, and all other generators submit their true marginal cost. The marginal generation cost is assumed constant ov er the output range, and is set to $50 / MWh for strategic bidders and $55 / MWh for other generators. In this system, a relatively large demand is concentrated in the upper-right area, and the lines connecting to this region are selected as candidates for expansion. The baseline transmission capacities of lines 1-2, 3-4, 6-10, and 9-10 are set to 20 MW , while the capacities of lines 4-12 and 27-28 are set to 10 MW . The capacity expansion cost is assumed to be $100,000/MW/year . The market simulations are performed with an hourly resolution ov er T = 48 intervals for illustration, though longer horizons are readily scalable within the RL framew ork. The bidding agents are trained with MADDPG, where each actor network consists of six fully connected layers and each critic network consists of four layers. All hidden layers has 128 units and use ReLU activ ation. Adam learning rates are set to 10 − 7 (actor) and 10 − 5 (critic), with discount γ = 0 . 99 , target-netw ork smoothing τ = 5 × 10 − 3 , replay buf fer size 2 × 10 4 , and minibatch size 64. Actions are bounded in [0 , 1] , and the parameter α i in (12) is set to 1. B. Case Study 1: Comparison with PyPSA Benchmark This subsection compares the proposed RL-based co- optimization with a benchmark using PyPSA as a standard capacity expansion model. Continuous capacity expansion is considered for two selected lines (4-12 and 27-28). Since PyPSA does not account for strategic bidding in its transmis- sion planning, a two-stage benchmark is considered to emulate the con ventional sequential process. Specifically , we compare: • T wo-stage benchmark : (Stage1) Use PyPSA to compute continuous capacity expansions ∆ L ℓ under e xogenous bidding assumptions (e.g., “high-submission” and “lo w- submission” regimes); (Stage2) Giv en those capacities, simulate the multi-agent market with RL to obtain the realized operational outcomes. • Proposed RL-based co-optimization : Learn bidding policies and continuous expansion ∆ L ℓ simultaneously within the unified RL framew ork described in Sec. III. W e first present the results of the two-stage benchmark. The low-submission scenario assumes that all strategic gen- erators bid at their marginal cost of $50 / MWh , while the Fig. 2: T opology of IEEE 30-bus system high-submission scenario assumes more aggressiv e bidding at $90 / MWh determined based on preliminary RL simulations. The former leads to no expansion in PyPSA, while the latter installs 81 . 4 MW on Line 4-12, with Line 27-28 unchanged. The resulting market outcomes obtained by running multi- agent RL under these capacities are summarized in Fig. 3 and T able I 1 . The gray and purple bars in Fig. 3 show the total operational cost and expansion cost giv en by PyPSA in Stage 1, while the stacked colored bars indicate the realized generator r evenues (rather than pr ofit ) under the subsequent market simulation. Under the low-submission assumption, the lack of expansion results in se vere congestion, resulting in a substantially higher realized total cost compared with the PyPSA estimate. Under the high-submission assumption, the expansion on Line 4-12 greatly reduces congestion, and the realized cost remains close to the PyPSA estimate. A slight increase is observed because, in PyPSA, the $90/MWh bids of Gen 2 and Gen 3 pre vent them from being dispatched at all, whereas in the RL simulation they sometimes bid abov e the $55/MWh marginal cost of the non-strategic generators (as shown in T able I) and are dispatched at these higher prices, increasing the realized total cost. In contrast to the two-stage benchmark, the proposed RL- based co-optimization jointly learns both the bidding strategies and the transmission expansion. The learned design results in an expansion of 76 . 1 MW on Line 4-12. Compared with the two-stage benchmark, particularly the high-submission case, the co-optimized solution achiev es a slight reduction in expansion cost. The two-stage approach assumes uniformly high bids for all strategic generators and therefore installs more capacity than necessary , whereas the proposed method internalizes the strate gic behaviors and selects a more ef fi- cient capacity level. While such integrated planning could in principle be formulated using multi-lev el optimization such as [3], [4], the multi-agent RL-based approach offers superior scalability to longer simulated time horizons and can readily 1 Due to stochastic exploration in RL training, the market outcomes exhibit variance across runs. For fair comparison, the figure and table report a rep- resentativ e run in which all three strategic generators successfully conv erged to stable bidding policies, ensuring that the underlying learning dynamics are consistent across the evaluated cases. T ABLE I: Summary of market simulation outcomes in case studies Case Scenario A verage bid price [$/MWh] Profit [M$] Operational cost Expansion cost Gen 1 Gen 2 Gen 3 Gen 1 Gen 2 Gen 3 [M$] [M$] T wo-stage (lo w-submission) 93.7 58.1 69.8 26.2 0.66 1.62 138.39 0 1 T wo-stage (high-submission) 93.0 58.0 63.7 5.42 0.274 1.24 119.71 8.14 Proposed co-optimization (continuous) 91.5 60.1 62.4 5.72 0.483 1.43 120.09 7.61 2 Proposed co-optimization (discrete) 75.6 94.2 84.6 4.82 0 0.0351 118.00 10.00 Fig. 3: T otal cost comparison and component breakdown accommodate more complex settings such as multi-market en vironments. C. Case Study: Discrete Siting Decisions T o demonstrate the applicability of the proposed frame work to more realistic planning problems, we next consider a discrete siting case. The six candidate lines introduced in Sec. IV -A are included as binary expansion decisions, and the additional capacity is fixed at 50 MW for all lines. Under this setting, the design policy learns to upgrade two candidate lines 1–2 and 4-12. Although the e xpansion cost increases relati ve to the results in case 1 (Sec. IV -B), due to the additional upgrade of Line 1-2, the operational cost decreases sufficiently to yield a total cost that remains comparable to Case 1. A key factor behind this operational cost reduction is the change in Gen 1’ s bidding beha vior induced by the upgrade of line 1-2. In Case 1, ev en with the e xpansion of line 4-12, Gen 1 still enjoyed significant market power , and thus maintained high bid prices around $90/MWh. By contrast, the additional capacity on line 1-2 reduces congestion on this corridor Gen 1’ s ability to ex ert such market power . These results demonstrate that the proposed framew ork can identify effecti ve expansion sites by internalizing the impact of strategic market behavior . V . C O N C L U S I O N S This paper presented an RL-based framew ork for co- optimization of strategic bidding and transmission expansion planning in electricity markets. By extending a multi-agent RL algorithm with a learnable continuous/discrete design policy , the proposed method enables simultaneous learning of bidding strategies and in vestment decisions while capturing their mutual influence. The ef fectiv eness of the proposed framew ork has been shown based on case studies on the IEEE 30-bus system. The proposed framew ork pro vides a promising foundation for future applications to larger systems, multi- market en vironments, and integrated generation-transmission co-planning. R E F E R E N C E S [1] S. G ´ omez and L. Olmos, “Coordination of generation and transmission expansion planning in a liberalized electricity context—coordination schemes, risk management, and modelling strategies: A re vie w , ” Sustain- able Energy T echnologies and Assessments , v ol. 64, p. 103731, 2024. [2] E. E. Sauma and S. S. Oren, “Proactiv e planning and valuation of transmission in vestments in restructured electricity markets, ” Journal of Re gulatory Economics , vol. 30, no. 3, pp. 358–387, 2006. [3] E. E. Sauma and S. S. Oren, “Economic criteria for planning transmis- sion in vestment in restructured electricity markets, ” IEEE T ransactions on P ower Systems , vol. 22, no. 4, pp. 1394–1405, 2007. [4] L. P . Garces, A. J. Conejo, R. Garcia-Bertrand, and R. Romero, “ A bilev el approach to transmission expansion planning within a market en vironment, ” IEEE T ransactions on P ower Systems , vol. 24, no. 3, pp. 1513–1522, 2009. [5] G. Pes ´ antez, W . Guam ´ an, J. C ´ ordov a, M. T orres, and P . Benalcazar, “Reinforcement learning for ef ficient power systems planning: A review of operational and expansion strategies, ” Ener gies , vol. 17, no. 9, 2024. [6] Y . Y e, D. Qiu, M. Sun, D. Papadaskalopoulos, and G. Strbac, “Deep reinforcement learning for strategic bidding in electricity markets, ” IEEE T ransactions on Smart Grid , vol. 11, no. 2, pp. 1343–1355, 2020. [7] Y . Liang, C. Guo, Z. Ding, and H. Hua, “ Agent-based modeling in electricity market using deep deterministic policy gradient algorithm, ” IEEE T ransactions on P ower Systems , vol. 35, no. 6, pp. 4180–4192, 2020. [8] Y . Du, F . Li, H. Zandi, and Y . Xue, “ Approximating nash equilibrium in day-ahead electricity market bidding with multi-agent deep reinforce- ment learning, ” Journal of Modern P ower Systems and Clean Energy , vol. 9, no. 3, pp. 534–544, 2021. [9] W . MingKui, C. ShaoRong, Z. Quan, Z. Xu, Z. Hong, and W . Y uHong, “Multi-objectiv e transmission network expansion planning based on reinforcement learning, ” in 2020 IEEE Sustainable P ower and Energy Confer ence (iSPEC) , pp. 2348–2353, 2020. [10] Y . W ang, L. Chen, H. Zhou, X. Zhou, Z. Zheng, Q. Zeng, L. Jiang, and L. Lu, “Flexible transmission network expansion planning based on dqn algorithm, ” Ener gies , v ol. 14, no. 7, 2021. [11] J. Dong, J. Cao, Y . Lu, Y . Zhang, J. Li, C. Xu, D. Zheng, and S. Han, “Transmission expansion planning: A deep learning approach, ” Sustainable Energy , Grids and Networks , v ol. 41, p. 101585, 2025. [12] C. Schaff, D. Y unis, A. Chakrabarti, and M. R. W alter, “Jointly learning to construct and control agents using deep reinforcement learning, ” in 2019 International Conference on Robotics and Automation (ICRA) , pp. 9798–9805, 2019. [13] T . Chen, Z. He, and M. Ciocarlie, “Hardware as policy: Mechanical and computational co-optimization using deep reinforcement learning, ” in 2020 Confer ence on Robot Learning , pp. 1158–1173, 2021. [14] M. Cauz, A. Bolland, C. Ballif, and N. W yrsch, “Reinforcement learning for efficient design and control co-optimisation of energy systems, ” in ICML 2024 AI for Science W orkshop , p. 68, 2024. [15] T . Mantani, H. Hoshino, T . Kanazawa, and E. Furutani, “Sizing of bat- tery considering renew able energy bidding strategy with reinforcement learning, ” in 2025 IEEE P ower & Ener gy Society General Meeting , 2025. [16] T . Brown, J. H ¨ orsch, and D. Schlachtberger , “PyPSA: Python for power system analysis, ” Journal of Open Resear ch Softwar e , vol. 6, no. 1, 2018. [17] R. S. Suttton and A. G. Barto, Reinforcement Learning: An Introduction . MIT Press, 2nd ed., 2018.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment