Self-Configurable Mesh-Networks for Scalable Distributed Submodular Bandit Optimization

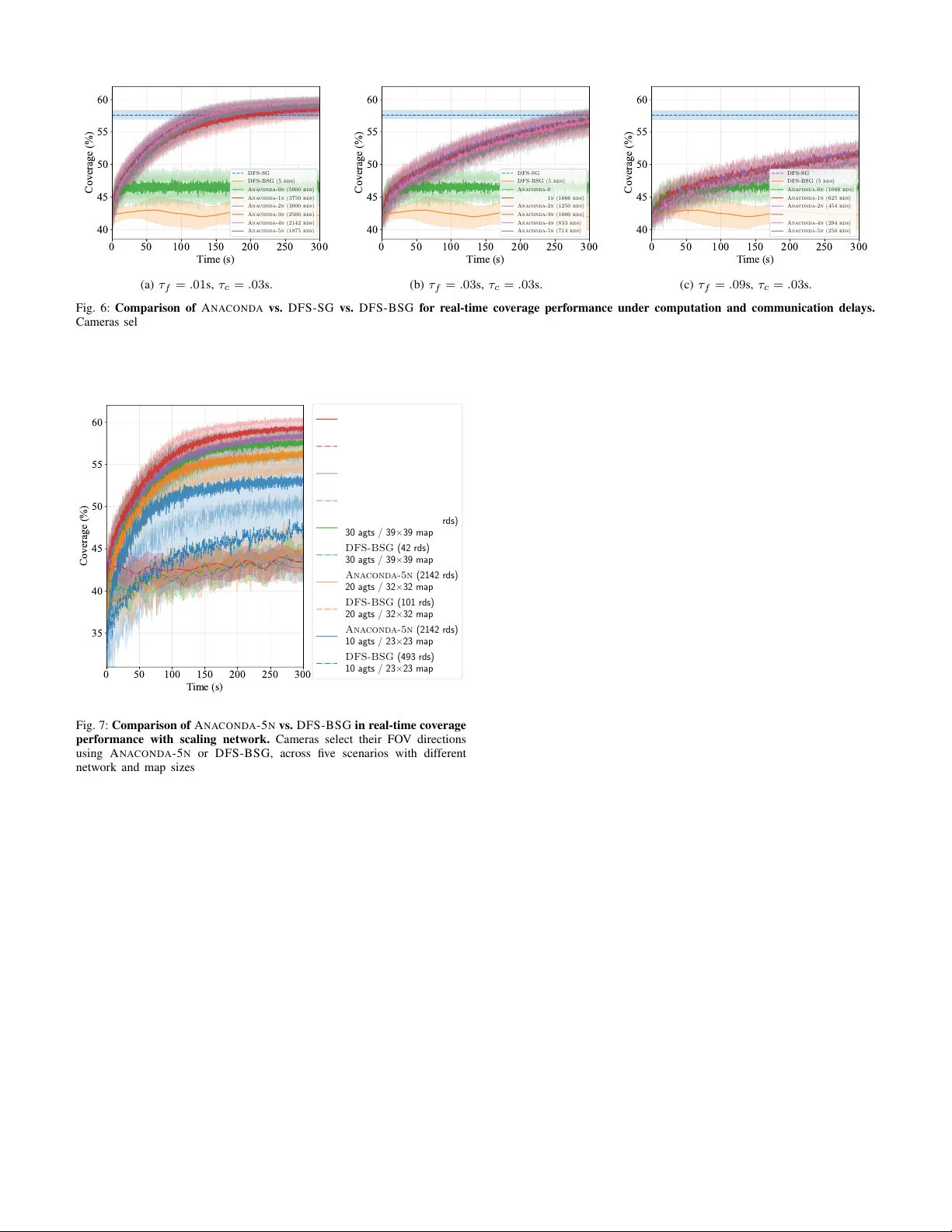

We study how to scale distributed bandit submodular coordination under realistic communication constraints on bandwidth, data rate, and connectivity. We are motivated by multi-agent tasks of active situational awareness in unknown, partially-observab…

Authors: Zirui Xu, Vasileios Tzoumas