Incremental Learning of Sparse Attention Patterns in Transformers

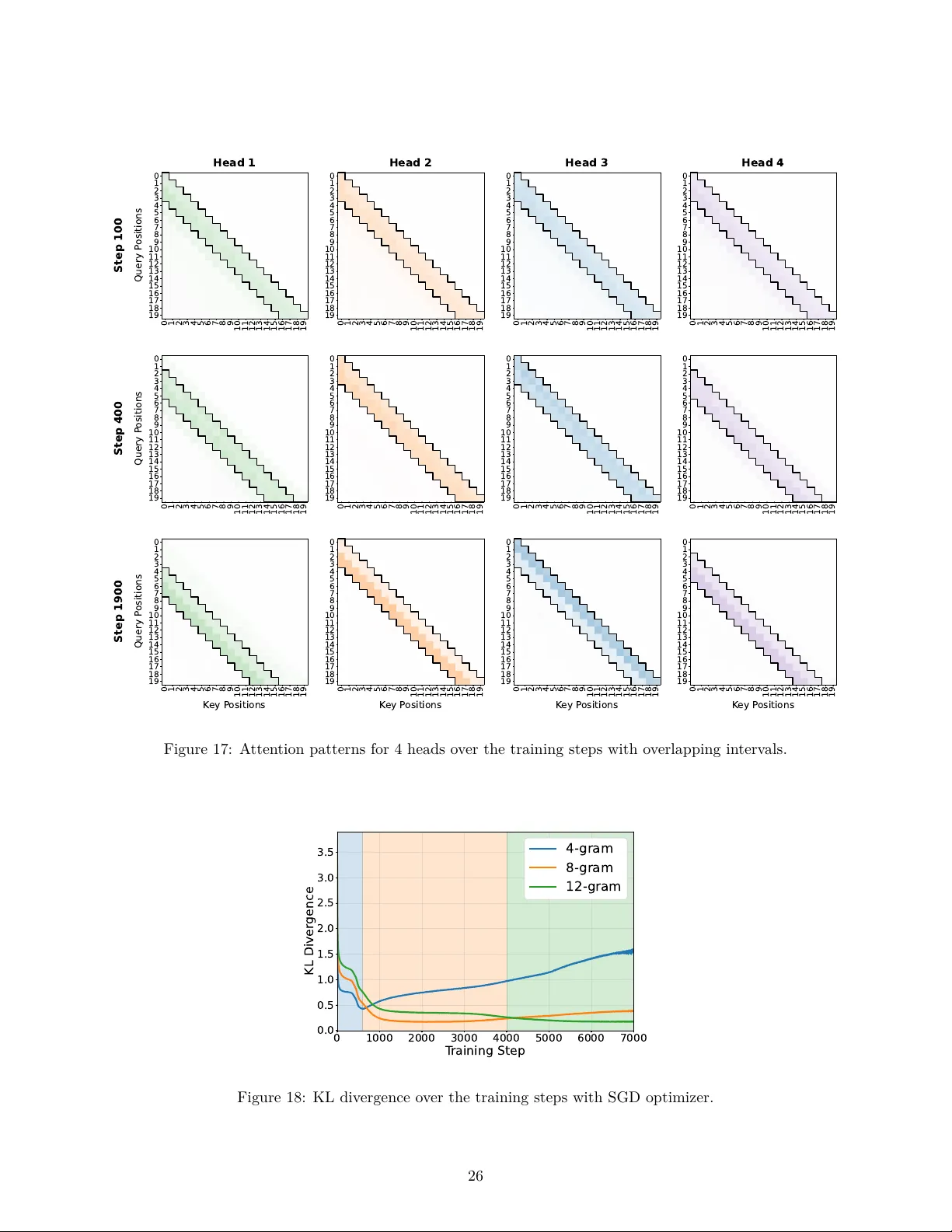

This paper introduces a high-order Markov chain task to investigate how transformers learn to integrate information from multiple past positions with varying statistical significance. We demonstrate that transformers learn this task incrementally: ea…
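
To make the task setting concrete, here is a minimal sketch of a high-order Markov chain in which the next token depends on several past positions with different weights. It is an illustration under stated assumptions, not the paper's construction: it uses a mixture-transition-style sampler, and the names `make_transition_matrix`, `sample_sequence`, and `lag_weights` are hypothetical.

```python
import random


def make_transition_matrix(vocab_size, rng):
    """Build a random row-stochastic matrix; row i is a distribution over the next token."""
    rows = []
    for _ in range(vocab_size):
        weights = [rng.random() for _ in range(vocab_size)]
        total = sum(weights)
        rows.append([w / total for w in weights])
    return rows


def sample_sequence(length, vocab_size, lag_weights, seed=0):
    """Sample a sequence from an order-k chain, where k = len(lag_weights).

    Assumption (not from the paper): at each step one lag j in 1..k is drawn
    with probability proportional to lag_weights[j - 1], and the next token is
    drawn from that lag's own transition matrix, conditioned on the token j
    steps back. A larger weight makes that past position more influential.
    """
    rng = random.Random(seed)
    order = len(lag_weights)
    matrices = [make_transition_matrix(vocab_size, rng) for _ in range(order)]
    lags = list(range(1, order + 1))

    # Seed the chain with `order` uniformly random tokens.
    seq = [rng.randrange(vocab_size) for _ in range(order)]
    while len(seq) < length:
        lag = rng.choices(lags, weights=lag_weights)[0]
        prev_token = seq[-lag]
        next_token = rng.choices(range(vocab_size), weights=matrices[lag - 1][prev_token])[0]
        seq.append(next_token)
    return seq[:length]


if __name__ == "__main__":
    # Lag 1 dominates, lag 2 is unused, lags 3 and 4 contribute weakly.
    print(sample_sequence(length=20, vocab_size=4, lag_weights=[0.6, 0.0, 0.3, 0.1]))
```

In this sketch, setting some entries of `lag_weights` to zero gives a sparse dependency pattern, and unequal non-zero entries give past positions of differing importance.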

Authors: Oğuz Kaan Yüksel, Rodrigo Alvarez Lucendo, Nicolas Flammarion