Predictable Compression Failures: Order Sensitivity and Information Budgeting for Evidence-Grounded Binary Adjudication

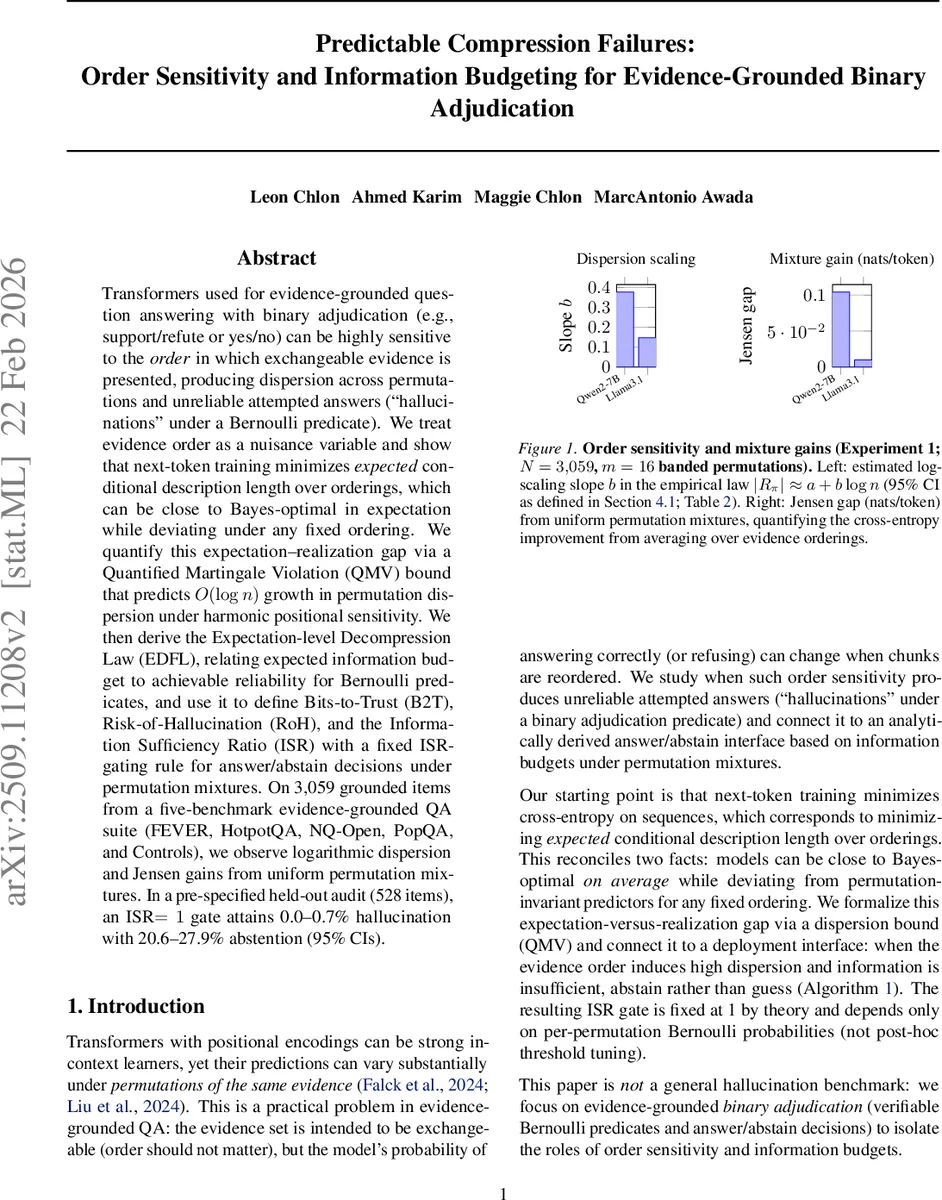

Transformers used for evidence-grounded question answering with binary adjudication (e.g., support/refute or yes/no) can be highly sensitive to the order in which exchangeable evidence is presented, producing dispersion across permutations and unreliable attempted answers (``hallucinations’’ under a Bernoulli predicate). We treat evidence order as a nuisance variable and show that next-token training minimizes expected conditional description length over orderings. This objective can be close to Bayes-optimal in expectation while deviating under any fixed ordering. We quantify this expectation–realization gap via a Quantified Martingale Violation (QMV) bound that predicts $\mathcal{O}(\log n)$ growth in permutation dispersion under harmonic positional sensitivity. We then derive the Expectation-level Decompression Law (EDFL), relating expected information budget to achievable reliability for Bernoulli predicates, and use it to define \emph{Bits-to-Trust} (B2T), \emph{Risk-of-Hallucination} (RoH), and the \emph{Information Sufficiency Ratio} (ISR), together with a fixed ISR-gating rule for answer/abstain decisions under permutation mixtures. On 3,059 grounded items from a five-benchmark evidence-grounded QA suite (FEVER, HotpotQA, NQ-Open, PopQA, and Controls), we observe logarithmic dispersion and Jensen gains from uniform permutation mixtures. In a pre-specified held-out audit (528 items), an ISR $= 1$ gate attains 0.0–0.7% hallucination with 20.6–27.9% abstention (95% confidence intervals).

💡 Research Summary

This paper investigates a practical yet under‑explored failure mode of transformer‑based evidence‑grounded question answering systems that produce binary adjudications (e.g., support/refute or yes/no). Although the evidence set is meant to be exchangeable, the positional encodings of modern transformers make the model’s output highly sensitive to the order in which the same evidence chunks are presented. Consequently, the same instance can yield different probabilities of a correct answer—or even an outright hallucination—simply by permuting the evidence.

The authors treat the evidence ordering as a nuisance variable and show that next‑token training implicitly minimizes the expected conditional description length over all possible orderings. In expectation, the model can be close to Bayes‑optimal, yet for any fixed permutation it may deviate substantially. To quantify this expectation‑realization gap, they introduce the Permutation‑induced residual (R_{\pi}=q_{\pi}-\bar{q}) where (q_{\pi}) is the model’s Bernoulli probability for a given permutation and (\bar{q}) is the average over permutations. Under a bounded total‑variation assumption on the logit’s sensitivity to adjacent rank changes, Theorem 1 (Quantified Martingale Violation, QMV) bounds the expected absolute residual by a quarter of the sum of per‑chunk total variations.

When the model’s logit can be expressed as a weighted sum of a decaying positional function (Assumption 2), the bound simplifies dramatically. If the decay is harmonic ((\alpha=1)), Theorem 2 proves an explicit (O(\log n)) upper bound on permutation‑induced dispersion, matching the empirical law (|R_{\pi}|\approx a+b\log n) observed in experiments. Faster decay ((\alpha>1)) yields constant dispersion, while slower decay ((\alpha<1)) leads to polynomial growth.

The paper then bridges these dispersion results to an information‑theoretic perspective. By viewing next‑token training as minimizing cross‑entropy, the expected KL divergence between the reference distribution (P) and the model’s conditional distribution (S_{\pi}) can be interpreted as an expected information budget (\bar{\Delta}=E_{\pi}

Comments & Academic Discussion

Loading comments...

Leave a Comment