A Stochastic Gradient Descent Approach to Design Policy Gradient Methods for LQR

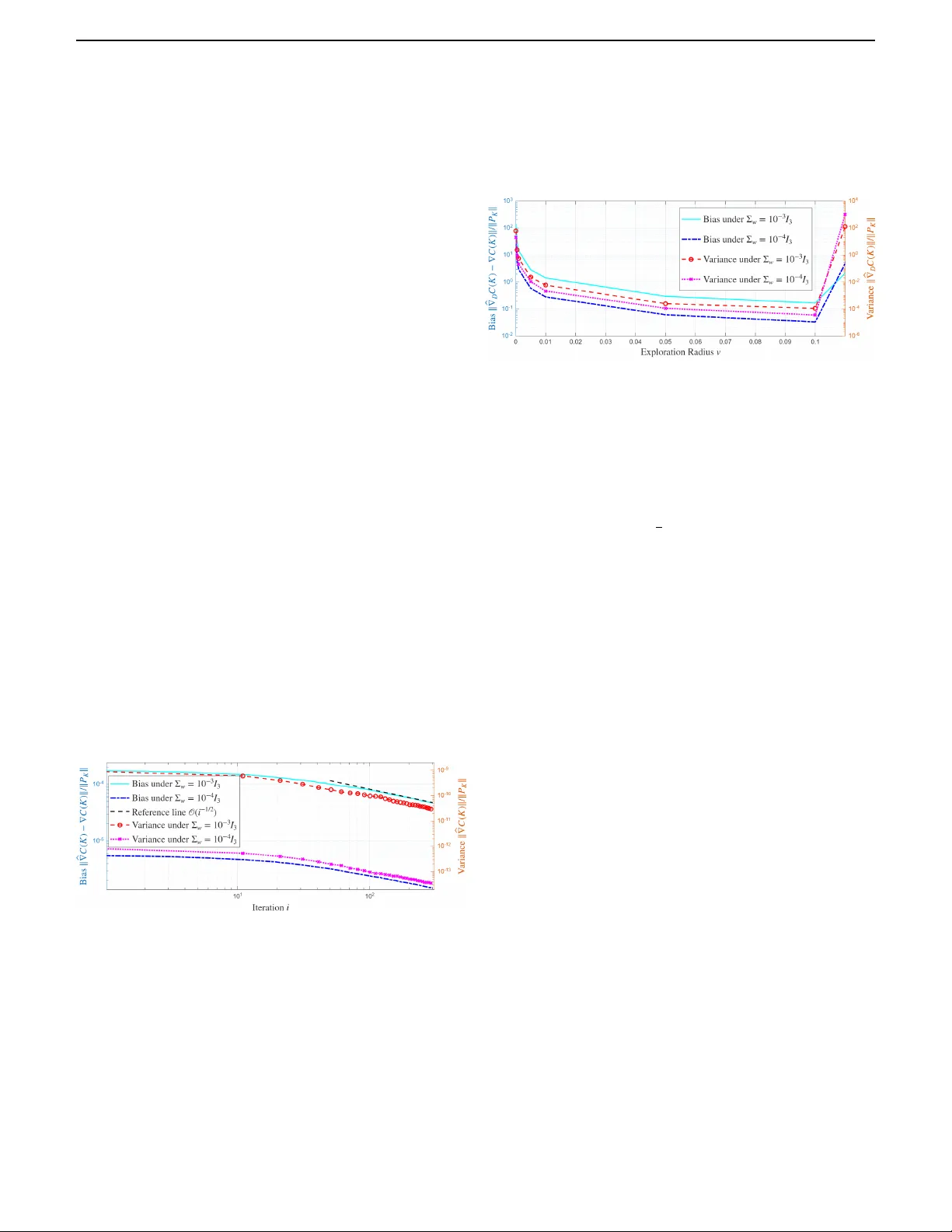

In this work, we propose a stochastic gradient descent (SGD) framework for designing data-driven policy gradient descent algorithms for the linear quadratic regulator (LQR) problem. We consider two alternative schemes for estimating the policy gradient from st…

Authors: Bowen Song, Simon Weissmann, Mathias Staudigl
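The abstract is cut off before it names the two gradient-estimation schemes, so the sketch below is not either of them. It is a generic illustration of treating LQR policy optimization as a stochastic program and running SGD on it. It assumes a known discrete-time model `x_{t+1} = A x_t + B u_t`, a linear policy `u = -K x`, and the cost `E[sum_t x_t^T Q x_t + u_t^T R u_t]` with `x0 ~ N(0, I)`. Because it uses `A` and `B`, it is model-based rather than data-driven. Each step samples a mini-batch of initial states, forms an unbiased estimate of `E[x0 x0^T]`, and substitutes it into the standard closed-form gradient `2((R + B^T P_K B)K - B^T P_K A) Sigma_K`. The function names (`dlyap`, `cost_and_grad`, `sgd_lqr`), the step size, and the example matrices are hypothetical.

```python
import numpy as np


def dlyap(F, M):
    """Solve X = M + F X F^T for X by Kronecker vectorization (needs rho(F) < 1)."""
    n = F.shape[0]
    x = np.linalg.solve(np.eye(n * n) - np.kron(F, F), M.reshape(-1))
    return x.reshape(n, n)


def cost_and_grad(K, A, B, Q, R, S0):
    """Return trace(P_K S0) and its gradient 2 E_K Sigma_K for the policy u = -K x.

    S0 plays the role of E[x0 x0^T]. Both outputs are linear in S0, so
    plugging in an unbiased estimate of S0 gives unbiased cost and gradient.
    """
    F = A - B @ K                          # closed-loop matrix
    if np.max(np.abs(np.linalg.eigvals(F))) >= 1.0:
        raise ValueError("K is not stabilizing")
    P = dlyap(F.T, Q + K.T @ R @ K)        # P_K = Q + K^T R K + F^T P_K F
    Sigma = dlyap(F, S0)                   # Sigma_K = S0 + F Sigma_K F^T
    E = (R + B.T @ P @ B) @ K - B.T @ P @ A
    return float(np.trace(P @ S0)), 2.0 * E @ Sigma


def sgd_lqr(K0, A, B, Q, R, step, iters, batch, rng):
    """Plain SGD on C(K) = E[x0^T P_K x0] with x0 ~ N(0, I) resampled each step."""
    K = K0.copy()
    n = A.shape[0]
    for _ in range(iters):
        X0 = rng.standard_normal((batch, n))   # mini-batch of initial states
        S0_hat = X0.T @ X0 / batch             # unbiased estimate of E[x0 x0^T] = I
        _, g = cost_and_grad(K, A, B, Q, R, S0_hat)
        K = K - step * g                       # stochastic gradient step
    return K


if __name__ == "__main__":
    # Hypothetical example system; K0 = 0 is stabilizing because A is stable.
    A = np.array([[0.9, 0.2], [0.0, 0.8]])
    B = np.array([[0.0], [1.0]])
    Q, R = np.eye(2), np.eye(1)
    K0 = np.zeros((1, 2))
    K = sgd_lqr(K0, A, B, Q, R, step=5e-4, iters=2000, batch=16,
                rng=np.random.default_rng(0))
    print("initial cost:", cost_and_grad(K0, A, B, Q, R, np.eye(2))[0])
    print("final cost:  ", cost_and_grad(K, A, B, Q, R, np.eye(2))[0])
    print("K:", K)
```

The gradient is linear in `S0`, so the sketch averages the outer products `X0^T X0 / batch` instead of averaging per-sample gradients. The two approaches give the same result, but this one needs only one pair of Lyapunov solves per step. The factor `E_K` vanishes at the optimal gain for every `S0`, so the gradient noise also shrinks as the iterates approach the optimum.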