Expected Shortfall Regression via Optimization

To provide a comprehensive summary of the tail distribution, the expected shortfall is defined as the average over the tail above (or below) a certain quantile of the distribution. The expected shortfall regression captures the heterogeneous covariat…
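The definition above can be illustrated with the unconditional empirical expected shortfall: take the empirical τ-quantile and average the observations at or beyond it. For a continuous Y and the upper tail, this targets E[Y | Y ≥ Q_Y(τ)]. This is a minimal sketch of the definition only. It is not the authors' regression method. The helper name `empirical_es` is hypothetical, and the inverse-CDF quantile convention (the ⌈τn⌉-th smallest value) is an assumption. Other quantile conventions can give slightly different values in small samples.

```python
import math


def empirical_es(values, tau, upper=True):
    """Empirical expected shortfall at level tau (hypothetical helper).

    Assumed convention: q is the ceil(tau * n)-th smallest value
    (inverse-CDF quantile). The function then averages the chosen tail:
      upper=True  -> mean of values >= q (upper tail)
      upper=False -> mean of values <= q (lower tail)
    """
    if not 0 < tau < 1:
        raise ValueError("tau must lie strictly between 0 and 1")
    ys = sorted(values)
    if not ys:
        raise ValueError("values must be non-empty")
    n = len(ys)
    k = max(1, math.ceil(tau * n))  # 1-based rank of the empirical quantile
    q = ys[k - 1]
    tail = [v for v in ys if v >= q] if upper else [v for v in ys if v <= q]
    return sum(tail) / len(tail)


if __name__ == "__main__":
    data = list(range(1, 11))  # 1, 2, ..., 10
    print(empirical_es(data, 0.75))               # upper tail: mean of 8, 9, 10 -> 9.0
    print(empirical_es(data, 0.25, upper=False))  # lower tail: mean of 1, 2, 3 -> 2.0
```

Unlike the quantile at the same level, the tail average responds to how extreme the tail observations are. Doubling the largest value in `data` moves the upper-tail result but leaves the 0.75-quantile unchanged.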

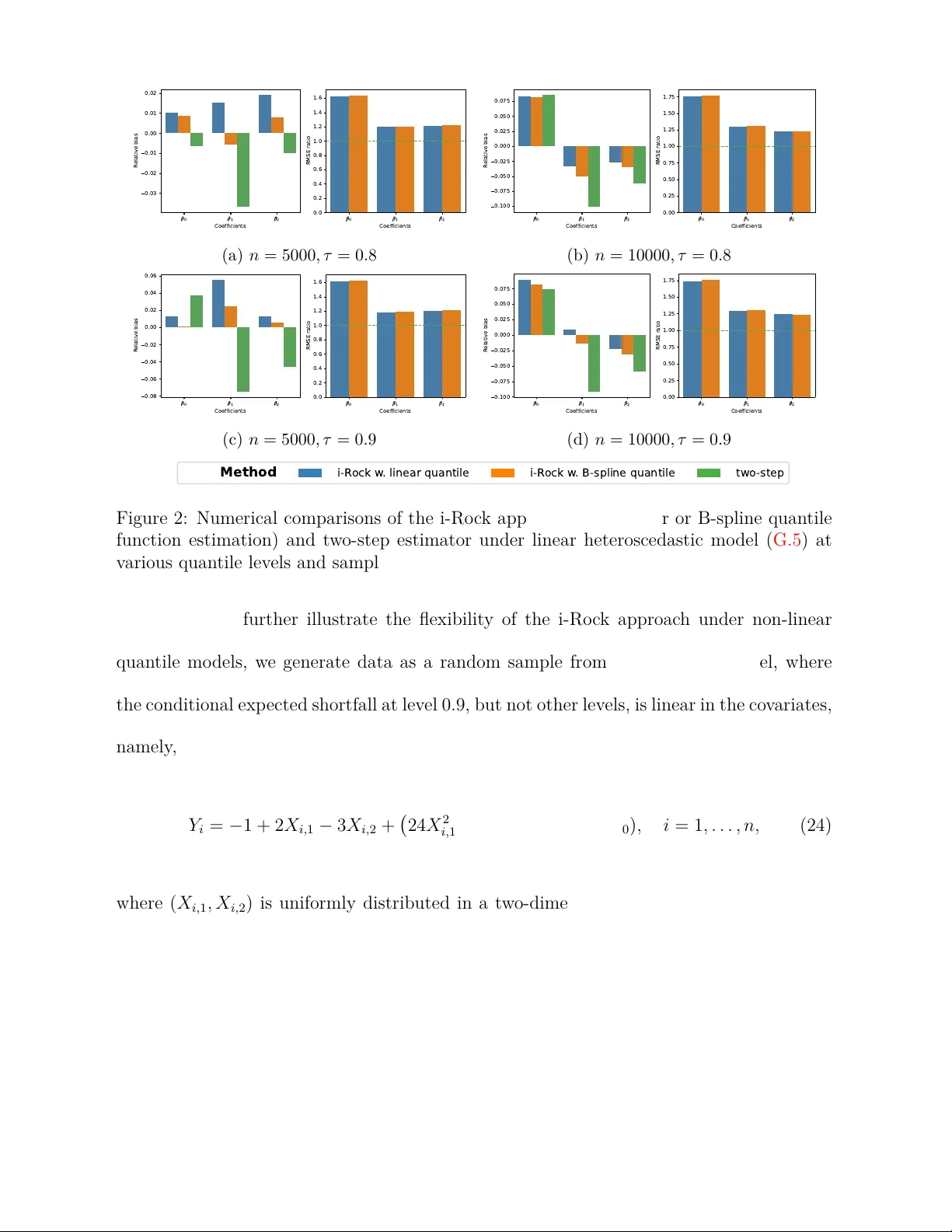

Authors: Yuanzhi Li, Shushu Zhang, Xuming He