TIACam: Text-Anchored Invariant Feature Learning with Auto-Augmentation for Camera-Robust Zero-Watermarking

Camera recapture introduces complex optical degradations, such as perspective warping, illumination shifts, and Moiré interference, that remain challenging for deep watermarking systems. We present TIACam, a text-anchored invariant feature learning f…

Authors: Abdullah All Tanvir, Agnibh Dasgupta, Xin Zhong

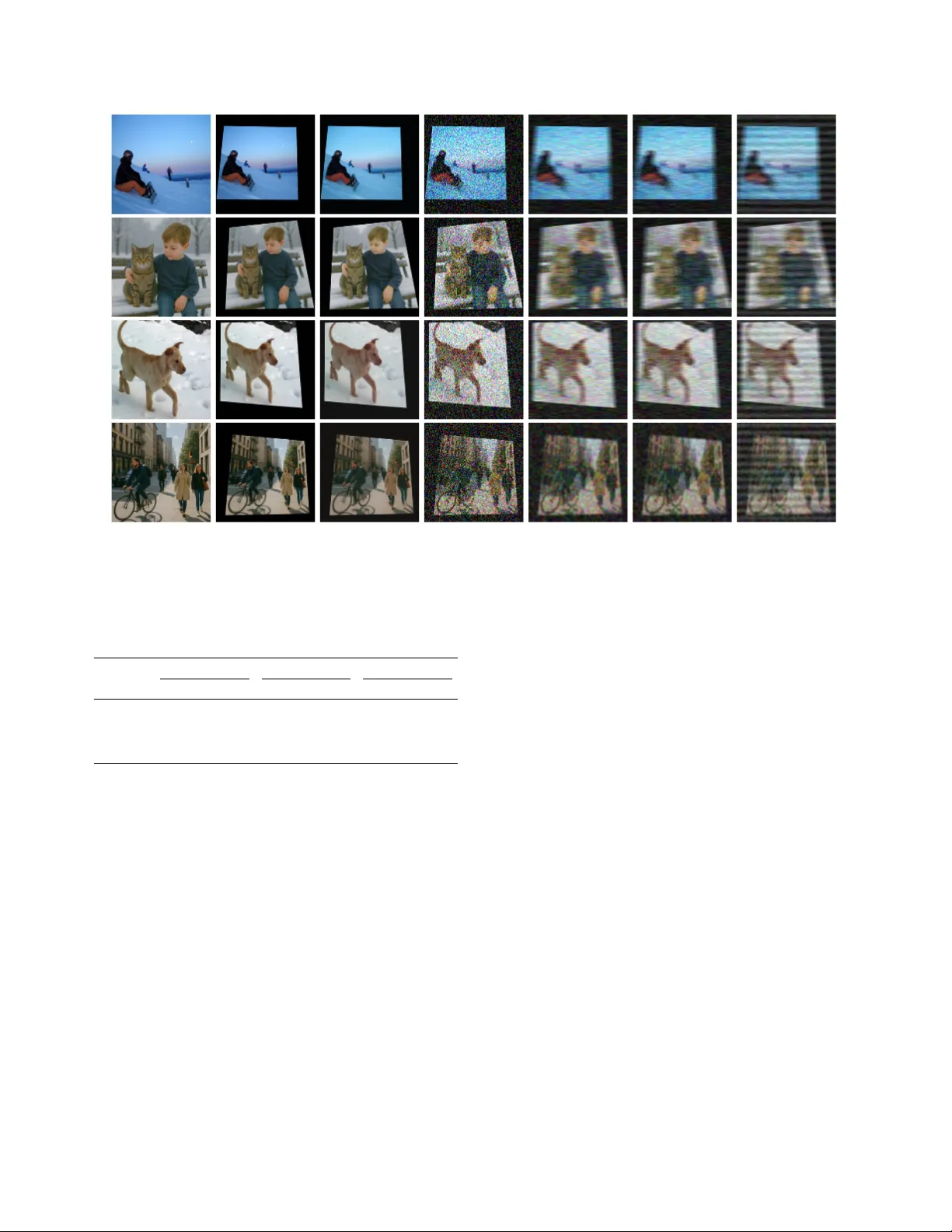

TIA Cam: T ext-Anchor ed In variant F eatur e Learning with A uto-A ugmentation f or Camera-Rob ust Zer o-W atermarking Abdullah All T an vir Agnibh Dasgupta Xin Zhong Department of Computer Science Uni versity of Nebraska Omaha atanvir@unomaha.edu adasgupta@unomaha.edu xzhong@unomaha.edu Abstract Camera r ecaptur e intr oduces complex optical degr adations, such as perspective warping , illumination shifts, and Moir ´ e interfer ence, that r emain challenging for deep watermark- ing systems. W e pr esent TIACam, a text-anc hored in vari- ant featur e learning fr amework with auto-augmentation for camera-r obust zero-watermarking . The method integr ates thr ee ke y innovations: (1) a learnable auto-augmentor that discovers camera-like distortions thr ough dif fer entiable ge- ometric, photometric, and Moir ´ e operator s; (2) a te xt- anchor ed in variant feature learner that enfor ces semantic consistency via cr oss-modal adversarial alignment between image and text; and (3) a zer o-watermarking head that binds binary messag es in the invariant featur e space with- out modifying image pixels. This unified formulation jointly optimizes invariance , semantic alignment, and watermark r ecoverability . Extensive experiments on both synthetic and r eal-world camera captur es demonstrate that TIA Cam achie ves state-of-the-art featur e stability and watermark ex- traction accuracy , establishing a principled bridge between multimodal in variance learning and physically r obust zer o- watermarking. Keyw ords: T ext-anchored representation learning, in vari- ant feature learning, auto-augmentation, camera robustness, zero-watermarking. 1. Introduction Image watermarking is a cov ert technique for embedding hidden watermark information into digital images to ensure copyright protection, content authentication, and o wnership verification. T raditional watermarking methods modify the image either in the spatial or transform domain, balanc- ing imperceptibility: the degree to which the watermark remains visually in visible, and robustness: the ability to correctly extract the watermark after image distortions. In contrast, zero-watermarking eliminates direct image modi- fication by associating a watermark with intrinsic features Figure 1. Concept of the proposed TIA Cam. extracted from the original image [ 8 ]. This paradigm pre- serves visual imperceptibility while enabling reliable wa- termark v erification or e xtraction through feature matching. Recent advances in deep learning hav e greatly expanded the scope of both embedding-based and zero-watermarking schemes, where neural networks automatically learn dis- criminativ e and rob ust features that support watermark ex- traction under div erse transformations. Among the applications, watermark extraction from camera-captured images remains particularly challenging. Unlike synthetic distortions such as rotation or blur , cam- era recapture introduces compound and spatially coupled degradations such as perspecti ve warping, illumination vari- ation, sensor noise, and color imbalance (see Fig. 1 ). T o address these ef fects, recent learning-based watermarking methods [ 6 , 24 ] train with a fixed “camera noise” layer that approximates physical distortions. This strategy improv es camera robustness by augmenting manually simulated dis- tortions directly into the training process. In parallel, an- other line of robust watermarking research le verages pre- trained visual feature extractors, such as those from self- supervised (SSL) contrastiv e learning frameworks [ 3 ], to achiev e robustness without direct noise modeling. These approaches draw inspiration from the broader goal of repre- sentation learning, capturing high-level semantics inv ariant to pixel v ariations. Howe ver , e xisting methods face sev eral limitations. First, manually designing camera noise layers is inherently restrictiv e: real-world optical distortions are environment- dependent, non-linear, and contextually coupled, making them difficult to simulate through fixed augmentations. Sec- ond, although pretrained SSL models provide some repre- sentations, they are not explicitly optimized for w atermark- ing; feature rob ustness emer ges as a side effect rather than a targeted objectiv e. Finally , ev en with these advances, water- mark extraction accuracy under real camera capture remains a major unresolved challenge. T o this end, we propose TIA Cam ( T ext-Anchored I n variant learning with A uto-augmentation for Cam era ro- bustness), a unified framework that learns camera-robust in variant features for zero-watermarking. Our approach introduces three key contributions: (1) a learnable auto- augmentor that automatically discovers realistic camera- like distortions through differentiable noise modules; (2) a text-anc hored in variant featur e learner that enforces se- mantic stability across distortions via cross-modal adversar - ial training; and (3) a zer o-watermarking head is adopted to bind binary messages to the in variant feature space, achiev- ing high watermark recov ery accurac y under synthetic and real-world camera captures. By jointly learning in vari- ance and semantic alignment, TIACam adv ances both the theoretical understanding and the practical robustness of camera-based zero-watermarking. 2. Related W ork Deep learning–based image w atermarking has primarily fo- cused on enhancing rob ustness. For example, HiDDeN [ 30 ] introduced an end-to-end encoder–decoder framework that learns invisible perturbations to embed data in images, achieving robustness against distortions such as blur , crop- ping, and JPEG compression. MBRS [ 15 ] enhanced JPEG robustness by mixing real and simulated compression dur- ing training and inte grating squeeze-and-excitation and dif- fusion modules within an encoder–decoder frame work. D WSF [ 11 ] proposed a dispersed embedding and synchro- nization–fusion strategy for high-resolution and arbitrary- size images, improving robustness against geometric and combined attacks. MuST [ 28 ] dev eloped a multi-source tracing watermarking scheme that detects and resynchro- nizes multiple embedded watermarks to trace composite im- ages back to their original sources. WOF A [ 21 ] designed a hierarchical embedding–extraction model with a compre- hensiv e distortion layer to withstand partial image theft, enabling watermark recovery from arbitrary image frag- ments. W atermark extraction from camera-captured images has been particularly challenging due to complex, coupled degradations arising from sensor noise, illumination vari- ation, and perspectiv e misalignment [ 6 , 24 ]. T o address this, proposals such as StegaStamp [ 24 ] and PIMoG [ 6 ] in- corporate manually designed camera-like noise layers into the encoder-decoder training process, simulating projec- tion, blur , color shifts, and compression to mimic real cap- ture conditions. While these approaches improv e camera robustness through manual noise augmentation, their per- formance remains constrained by the limited di versity and realism of handcrafted distortion models, which struggle to generalize across heterogeneous capture en vironments. Recent studies on deep robust watermarking and zero- watermarking ha ve shifted to ward using robust features that remain stable under distortions rather than directly embed- ding watermarks in pixel space. A common strategy is to lev erage pre-trained networks as fixed feature extrac- tors, assuming that their learned representations are inher- ently robust to perturbations. Some works [ 8 , 12 , 13 , 19 ] adopt con volutional or transformer-based backbones to ex- tract semantic features from the host image and fuse them with encoded watermark patterns through simple correla- tion schemes. More recent approaches [ 7 , 27 ] extend this idea by exploiting self-supervised models such as DINO [ 3 ] to embed or v erify watermarks in pretrained feature spaces, gaining additional rob ustness to common distortions. How- ev er , feature stability arises passi vely from pre-training ob- jectiv es rather than being explicitly optimized for water- marking robustness. T o mov e beyond reliance on pre-trained representations, recent works hav e started to explicitly train in variant fea- tures for watermarking. One line of research introduces text-guided in variance [ 1 ], where cosine similarity between image and caption embeddings is minimized to maintain se- mantic consistency across visual transformations. Another, ex emplified by In vZW [ 25 ], formulates zero-watermarking as a distortion-adv ersarial task in which a discriminator dis- tinguishes clean from distorted image features. In contrast, the proposed TIA Cam establishes a novel unified adversar - ial dynamic that jointly learns camera-like distortions, en- forces cross-modal semantic alignment, and achiev es ro- bustness surpassing both text-only and distortion-only in- variance paradigms. 3. TIA Cam As shown in Fig. 2 , the proposed framework comprises three modules, an Auto-Augmentor, a T ext-Anchored In- variant Feature Learner , and a Zero-W atermarking Head, working jointly in a loop. The Auto-Augmentor generates realistic camera degradations through differentiable cam- era noise operators, producing learnable and augmented im- age variants. The T ext-Anchored In v ariant Feature Learner aligns both the original and augmented images with their textual descriptions via a CLIP backbone and a lightweight in variant feature extractor–discriminator pair . Its training combines adversarial objectiv es against the discriminator (for semantic alignment) and against the Auto-Augmentor (for robustness). The learned in variant features, stable un- Figure 2. Overview of the proposed TIACam. Given an input image x and its positive anchor caption T with a negativ e caption ˜ T , a distorted image ˆ x = T aug ( x ) is generated using the learned auto-augmentor T aug ( · ) . All inputs are encoded by the CLIP encoders to obtain 768-D features, which are refined by the in variant feature extractor f θ ( · ) into 1024-D in variant representations. Paired samples ( f θ ( x ) , g τ ( T )) , ( f θ ( ˆ x ) , g τ ( T )) , and ( f θ ( ˆ x ) , g τ ( ˜ T )) are used to train a discriminator D ψ ( · ) that distinguishes real from fake associations, while f θ is adv ersarially optimized both against D ψ for semantic alignment and against T aug for robustness. For zero-watermarking, the in variant feature f θ ( x ) is projected onto reference codes C , and w atermark bits are predicted as ˆ W = σ ( f θ ( x ) ⊤ C ) for reliable e xtraction. der camera-induced perturbations, are then used by the Zero-W atermarking Head to bind binary watermark mes- sages to latent feature signatures, enabling reliable extrac- tion without modifying image pixels. 3.1. Learned A uto-A ugmentation T o simulate realistic distortions encountered in camera- captured or physically altered images, we design a fully dif- ferentiable Auto-Augmentor composed of learnable mod- ules for geometric, photometric, additiv e, filtering, com- pression, and Moir ´ e noise transformations (see Fig. 2 ). Each module is implemented as a parameterized neural op- erator , enabling gradients to flo w through the entire aug- mentation pipeline during adversarial training. Unlike tradi- tional fixed augmentations, the proposed Auto-Augmentor dynamically learns distortion distributions that most chal- lenge feature inv ariance, thereby modeling realistic camera variations. Geometric Module. This module applies spatial trans- formations to capture camera motion, rotation, scaling, and projection effects. Follo wing the differentiable per- spectiv e transformation layer from [ 16 ], we define x ′ = T geo ( x ; θ geo ) , T geo ( x )( u, v ) = x ( A [ u, v , 1] ⊤ ) , where A ∈ R 3 × 3 is a learnable perspective matrix. This formulation allows continuous updates of parameters such as shearing, stretching, or viewpoint shifts through gradient descent. Photometric Module. This module models illumination changes via dif ferentiable brightness, contrast, and gamma transformations: T photo ( x ′′ ) = α · x ′′ γ + β , where α , γ , and β (scalar or per-channel) are learnable parameters represent- ing contrast, gamma, and brightness. Bounded optimization of these parameters ensures physically plausible color trans- formations. Additive Noise Module. Sensor-lik e degradation is intro- duced through differentiable additi ve noise: T noise ( x ′′′ ) = x ′′′ + σ · z , z ∼ N (0 , 1) , σ > 0 , where the reparameter - ization trick maintains gradient flo w through the stochastic operation. For discrete artifacts (e.g., salt-and-pepper), a Gumbel-softmax approximation [ 14 ] is employed to ensure differentiability . Filtering Module. simulate optical blur and lens smear - ing, a learnable con volutional kernel K performs x ′′ = T filter ( x ′ ) = K ∗ x ′ , where K represents Gaussian or motion blur kernels constrained by a normalization loss for numer- ical stability . Gradients propagate through K , enabling the network to learn distortion kernels that maximally reduce feature consistency . Compression Module. T o approximate lossy compres- sion artifacts such as quantization and blocking, we imple- ment a differentiable surrogate of JPEG compression [ 30 ]. Giv en input x , the discrete cosine transform D ( x ) is fol- lowed by a smooth quantization function [ 23 ]: Q ( z ) = ⌊ z ⌋ + σ ( α ( z − ⌊ z ⌋ )) − 0 . 5 , where σ ( · ) is the sigmoid func- tion and α controls smoothness. A trainable frequency- domain mask M ∈ [0 , 1] H × W further modulates D ( x ) as D ′ ( x ) = M ⊙ D ( x ) , allowing the model to learn adaptiv e compression patterns that reflect real-world bandwidth or codec distortions. Moir ´ e Distortion Module. T o emulate the interference patterns that arise from sensor–display misalignment, we introduce a differentiable Moir ´ e generator: T moire ( x ′′′ ) = x ′′′ + α · sin 2 π ( f x u + f y v ) + ϕ , where ( u, v ) are pixel coor- dinates, ( f x , f y ) denote spatial frequencies sampled from a learnable range, and ϕ is a random phase of fset. The ampli- tude α is a trainable parameter that controls pattern strength, while ( f x , f y ) are differentiable with respect to the augmen- tation loss. This formulation enables gradient-based opti- mization of pattern frequency and intensity , allowing the augmenter to reproduce realistic periodic interference ef- fects observed in camera recapture. Modular Composition. The full augmentation func- tion is defined as a sequential composition of six dif- ferentiable modules: T aug = T comp ◦ T filter ◦ T add ◦ T photo ◦ T geo ◦ T moire , ˆ x = T aug ( x ; Θ) , where Θ = { θ geo , α, β , γ , σ , M , α moire } denotes all learnable parame- ters across modules. During training, T aug is optimized ad- versarially to generate distortions that most disrupt feature alignment, while the in variant feature e xtractor f θ is trained to preserv e semantic consistenc y . This adversarial interplay enables the model to discover which distortions matter and how to remain stable under them , capturing the complex div ersity of real camera and en vironmental perturbations. 3.2. T ext-Anchored Inv ariant Featur e Learning Modeling camera distortions is inherently difficult because real-world degradations are compound - perspective shifts, compression, lighting changes, and sensor noise often co- occur in unpredictable ways. In the feature space, rather than modeling e very possible distortion explicitly , we ap- proach the problem from the ground up: learning what re- mains stable, the semantic meaning of the image. If a wa- termark is embedded not in pixel values b ut in the meaning of the image, it will remain intact as long as the meaning is preserved, a property naturally satisfied under most cam- era distortions. This motiv ates our central feature proposal: a model that can match multimodality media (in our case, images and texts) rob ustly across distortions has, in a sense, learned to “understand” the content. Hence, the watermark can be embedded within this in variant feature space that captures the meaning of the image rather than in the raw image domain. Proposed Semantic Inv ariance Principle. W e define a composite feature extractor f θ that consists of a frozen CLIP image encoder and a trainable in variant feature ex- tractor built on top of it. Given an input image I and its text anchor T , the image and text embeddings are obtained as F = f θ ( I ) and E = g τ ( T ) , where g τ is the CLIP text encoder . For a content-preserving transformation T (e.g., camera recapture, compression, illumination shift), the ex- tractor is trained to satisfy F ≈ f θ ( T ( I )) , ensuring that both the original and distorted images share a consistent, semantics-preserving representation. The text embedding E serves as a stable anchor that captures the core meaning while discarding transient visual details. Because caption- like text anchors are abstract yet content-specific, they pro- vide a high-le vel inv ariant axis for aligning visual features across distortions. This formulation follows the Information Bottleneck (IB) principle [ 26 ], which seeks representations that pre- serve task-relev ant semantics while discarding nuisance variations. Here, the visual feature F serves as a bottle- neck between the raw image and its semantic anchor E , optimized as max I ( F ; E ) − β I ( F ; I ) , where I ( · ; · ) de- notes mutual information. Maximizing I ( F ; E ) enforces semantic consistency with the text description, while pe- nalizing I ( F ; I ) reduces sensitivity to lo w-level appearance changes. This encourages F to encode the image’ s in variant meaning rather than its unstable visual realization. Adversarial Cross-Modal Alignment T raining. W e im- plement the semantic–inv ariance principle as a cross–modal min–max game that discriminates between matched and mismatched image–text pairs. Giv en a batch B = { ( I , T + ) } with each image I and its positi ve text anchor T + , we sample a semantically related but incorrect cap- tion T − as a negativ e text anchor . A distorted image view I ′ = A ϕ ( I ) is generated by the Auto-Augmentor . Embed- dings are obtained as F = f θ ( I ) , F ′ = f θ ( I ′ ) , E + = g τ ( T + ) , E − = g τ ( T − ) , where f θ denotes the composite image feature extractor (CLIP encoder plus in variant feature extractor) and g τ is the frozen CLIP text encoder . A pair discriminator D ψ receiv es an image–te xt feature pair and predicts whether they con vey the same seman- tic content ( real ) or not ( fake ). This formulation enforces instance-lev el alignment, binding each image feature to its positiv e text anchor while repelling negati ves. It opera- tionalizes the proposed semantic in variance principle: the representation must remain tethered to its true te xtual mean- ing e ven when visual distortions alter lo w-level appearance. By discriminating at the pair level, the model preserves se- mantic identity under distortion without collapsing modali- ties into a single embedding space. The discriminator is trained to classify ( F , E + ) and ( F ′ , E + ) as real pairs and ( F , E − ) and ( F ′ , E − ) as fake pairs: L disc = E ( F,E + ) , ( F ′ ,E + ) h log D ψ ( F , E + ) + log D ψ ( F ′ , E + ) i + E ( F,E − ) , ( F ′ ,E − ) h log 1 − D ψ ( F , E − ) + log 1 − D ψ ( F ′ , E − ) i . (1) During adversarial training, f θ is optimized to fool D ψ by minimizing the opposite objectiv e: L adv = E ( F,E + ) , ( F ′ ,E + ) h log 1 − D ψ ( F , E + ) + log 1 − D ψ ( F ′ , E + ) i , (2) forcing image features to align tightly with the correct text anchors while remaining separable from neg ativ e ones. The ov erall adversarial optimization alternates GAN-style up- dates: max ψ L disc , min θ L feat := λ adv L adv . (3) In each iteration, we (i) update D ψ using Eq. ( 1 ) to im- prov e pair discrimination, and (ii) update f θ using Eq. ( 2 ) to strengthen feature alignment with the positi ve text an- chor . This pair-based adversarial training teaches the in- variant extract or to preserve semantic meaning while ignor - ing distortion-specific cues, yielding image features that are both text-anchored and distortion-in variant. Architectur e. The feature extractor f θ consists of a frozen CLIP image encoder follo wed by a trainable in variant feature extractor composed of three residual blocks and a projection head. Each residual block contains two linear layers with batch normalization, dropout, and a skip connection, formulated as h l +1 = σ ( BN 2 ( W 2 ( σ ( BN 1 ( W 1 h l )))) + h l ) , where σ ( · ) denotes the ReLU acti vation. The projection head maps the out- put to a 1024-dimensional embedding, serving as the final in variant feature representation. The discriminator D ψ is implemented as a lightweight T ransformer encoder that receiv es an image–text feature pair ( z I , z T ) and predicts whether they correspond to the same semantic content. It comprises L = 4 Trans- former blocks with H = 8 attention heads and a hid- den dimension of 512, each including multi-head self- attention, feed-forward layers with GELU activ ation, and residual normalization. A learnable [CLS] token is prepended to the sequence of projected embeddings, x = [ t cls ; W z I ; W z T ] , and the output from the final [CLS] to- ken is passed through a linear head to produce binary logits: D ψ ( z I , z T ) = softmax ( W o x cls ) . This design balances ef- ficiency and expressiv eness, enabling the discriminator to capture fine-grained semantic correspondence between im- age and text pairs. A uto-A ugmentor and Feature Extractor Adversarial T raining. The Auto-Augmentor T aug ( · ; Θ) and feature ex- tractor f θ are trained in an adversarial fashion to jointly model realistic camera distortions and learn rob ust and in- variant representations. Giv en an image x and its distorted counterpart ˆ x = T aug ( x ; Θ) , the extractor produces feature embeddings F = f θ ( x ) and F ′ = f θ ( ˆ x ) . The augmenter seeks to generate distortions that maximally disrupt feature consistency , while the extractor learns to minimize this dis- crepancy and restore semantic alignment. This process is formulated as a min–max optimization: min θ max Θ L in v ( F ′ , F ) − λ sem L sem ( x, ˆ x ) , (4) where L in v measures the cosine dissimilarity between in- variant features, and L sem enforces perceptual fidelity through a frozen V iT encoder E ( · ) : L in v ( F ′ , F ) = 1 − cos( F ′ , F ) , and L sem ( x, ˆ x ) = P i 1 − cos( E i ( x ) , E i ( ˆ x )) . During training, we alternate gradient ascent on Θ to maxi- mize L in v − λ sem L sem , forcing the augmenter to discover in- creasingly challenging yet semantically faithful distortions, and gradient descent on θ to minimize L in v , compelling f θ to become in variant to those perturbations. T o pre vent triv- ial co-adaptation, gradients through f θ are stopped during the augmenter step, and parameters in T aug (e.g., blur ra- dius, compression strength, gamma value) are clamped to physically plausible ranges. As the Auto-Augmentor learns to generate progressi vely stronger camera-like distortions, the feature extractor f θ si- multaneously learns to neutralize them. This adv ersarial dy- namic yields semantically stable and distortion-robust fea- tures that remain consistent ev en under complex capture conditions such as screen photographing, mobile recapture, or multi-stage compression. Full System T raining. In the full framework, this Auto- Augmentor–Extractor adversarial loop operates jointly with the Cross-Modal Alignment between Image and T ext rep- resentations. The overall system alternates among three coordinated updates: (i) training the discriminator D ψ to distinguish matched and mismatched image–text pairs, (ii) updating T aug to produce adversarial yet semantically valid perturbations, and (iii) updating f θ to align inv ariant image features with their correct te xt anchors while resisting cam- era distortions. This three-way optimization ensures that the learned representation is simultaneously semantically in variant, distortion-rob ust, and camera-realistic. 3.3. Zero-W atermarking with In variant Features The right block of Fig. 2 illustrates a learning-based zero- watermarking head on top of our proposed in variant feature extractor f θ . W e adopt the work on learning to associate zero-watermarks in feature space [ 25 ], where the original image is ne ver modified. Instead, we register a compact reference signature that binds a binary watermark message to the image’ s in variant representation. At test time, the same frozen f θ is applied to a (possibly camera-captured) image to extract the message. Featur e Encoding. Given an image x , we extract an in- variant feature map F = f θ ( x ) ∈ R H × W × C , and transform it to a v ector via a lightweight head Ψ (global a verage pool- ing followed by a linear projection): ˜ F = Ψ( F ) ∈ R d . During watermark registration, θ is frozen; only the small head Ψ and a reference codebook are optimized. Reference Signature and Prediction. Let W ∈ { 0 , 1 } k denote the target k -bit message. W e maintain a learnable reference matrix C ∈ R k × d , whose i -th row C i acts as a directional code for bit W i . The predicted bit probability is ˆ W i = σ ˜ F · C i , i = 1 , . . . , k , where σ ( · ) is the sigmoid function. Registration Objective. The registration is a small con vex-lik e optimization over C and the linear projection Ψ ; inference reduces to a dot product and threshold. W e optimize C (and Ψ ) by minimizing a binary cross-entropy with an ℓ 2 regularizer on C : L W = − k X i =1 h W i log ˆ W i + (1 − W i ) log(1 − ˆ W i ) i , (5) L C = ∥ C ∥ 2 2 , L total = L W + λ C L C , (6) with gradient updates C ← C − η ∂ L total ∂ C , Ψ ← Ψ − η ∂ L total ∂ Ψ . (7) This optimization is performed per image–message pair: giv en a single image and a single k -bit watermark, we learn a dedicated reference signature ( C, Ψ) for that pair only: no minibatching, no modification to the image, and no updates to f θ . Extraction. Gi ven a potentially distorted (e.g., camera- captured) image x ′ , we compute ˜ F ′ = Ψ f θ ( x ′ ) , ˆ W ′ i = σ ˜ F ′ · C i , (8) and recov er the binary message via thresholding ˜ W i = ( 1 , ˆ W ′ i ≥ 0 . 5 , 0 , ˆ W ′ i < 0 . 5 . (9) Robust recovery follows from the distortion inv ariance of f θ , which ensures ˜ F ′ ≈ ˜ F even after severe photometric, geometric, or compression artifacts. 4. Experiment and Analysis This section presents the empirical validation of the pro- posed TIA Cam framework. W e organize the analysis into three parts. First, we ev aluate the inv ariance and semantic quality of the learned features (Section 4.2 ), demonstrat- ing that our te xt-anchored representation remains stable un- der di verse distortions. Second, we assess the robustness of our watermarking system across both synthetic and camera- captured conditions (Section 4.3 ). Finally , we conduct ab- lation studies (Section 4.4 ) to analyze the roles of text guid- ance, and in variant feature learner . 4.1. Experimental Setup Datasets. W e conduct experiments on multiple datasets to ev aluate both inv ariance learning and watermarking ro- bustness. For text–image alignment, we jointly use V isual Genome [ 17 ] and Flickr30k [ 22 ], which together provide div erse textual granularity: re gion-level phrases from V i- sual Genome and full-sentence captions from Flickr30k. In addition, we test on ImageNet [ 5 ], MSCOCO [ 20 ], and Caltech-256 [ 9 ] to assess generalization across domains and resolutions. The proposed in variant feature extractor is trained on the combined V isual Genome and Flickr30k datasets. For V isual Genome, each region-lev el word set (e.g., { person, wearing, fedora } ) is con verted into a natural- language template, “This is an image of a person wear- ing a fedor a. ” , ensuring grammatical coherence and seman- tic alignment with the corresponding visual regions. This mixed training setup provides both localized and sentence- lev el supervision, enabling more stable multimodal feature learning. Implementation Details. The framework follo ws the ov erall scheme illustrated in Fig. 2 . It is implemented in PyT orch and trained on an NVIDIA R TX 4090 GPU. The learning rate is set to 1 × 10 − 4 with the Adam optimizer . All images are resized to 128 × 128 × 3 . Remarkably , the auto- augmentor modules are pretrained on their corresponding target distortions: geometric, photometric, additiv e noise, filtering, compression, and Moir ´ e interference, using 10k random synthetic image pairs per type. Each learns its transformation via mean squared error (MSE) and struc- tural similarity (SSIM) losses, and this pretraining pro vides meaningful initialization, ensuring physically plausible and semantically coherent updates. Subsequently , in adversarial training, the auto-augmentor is tuned to generate distortions that maximally perturb feature alignment, while the feature extractor f θ learns to counteract them. 4.2. Featur e In variance and Semantic Evaluation W e next ev aluate the inv ariance and semantic quality of the learned representations. The analysis proceeds in two stages: (1) feature robustness, which quantifies the stability of representations under di verse distortions, and (2) seman- tic transferability , which measures how well the learned in- variant features preserv e discriminati ve meaning when used for downstream tasks. T able 1. Cosine similarity between features of original and dis- torted images. Distortion SimCLR BYOL Barlow VICReg VIbCReg TIACam Additiv e 0.82 0.88 0.79 0.83 0.89 0.97 Photometric 0.84 0.84 0.81 0.76 0.88 0.93 Perspectiv e 0.87 0.85 0.87 0.83 0.88 0.95 JPEG 0.79 0.80 0.87 0.81 0.73 0.98 Moir ´ e 0.85 0.83 0.84 0.89 0.87 0.97 Filtering 0.88 0.88 0.89 0.87 0.88 0.98 All 0.74 0.71 0.74 0.77 0.77 0.94 Featur e Robustness. T able 1 compares the cosine sim- ilarity between features of original and distorted images across six distortion types, av eraged ov er the testing set of the ev aluated datasets. TIA Cam consistently achieves the highest similarity values among self-supervised baselines, including SimCLR [ 4 ], BYOL [ 10 ], Barlow T wins [ 29 ], VICReg [ 2 ], and VIbCReg [ 18 ], indicating superior inv ari- ance to additi ve noise, photometric variation, perspectiv e changes, and JPEG compression. Even under the compound distortion setting ( All ), TIA Cam maintains stable represen- tations with minimal degradation. These results verify that our text-anchored and auto-augmentation–guided training effecti vely enforces distortion-in variant feature learning. Furthermore, the cosine similarity values can be inter- preted intuiti vely: semantically consistent image pairs yield positiv e cosine values close to 1, whereas unrelated or mis- matched pairs (e ven those visually similar in color or te x- ture) produce negati ve values approaching –1. As shown in Fig. 3 , the proposed in variant feature space clearly dis- tinguishes between true and false pairs, indicating strong semantic alignment. Across all testing data, positive im- age pairs consistently maintain high similarity (av erage +98 . 4% ), while negati ve pairs exhibit strong separation (av erage − 47 . 2% ), confirming the discriminativ e stability of our learned features. Figure 3. From left to right: example image, its camera-distorted version, and an unrelated ne gati ve image. Semantic T ransferability . T o examine whether in vari- ant features also preserve semantic meaning, we freeze the learned encoder and train a linear classifier probe on four benchmark datasets: CIF AR-100, Imagenette, MSCOCO, and Caltech-256 (15k train / 5k test). As reported in Fig- ure 4 , TIACam attains the highest T op-1 and T op-5 ac- curacies across all datasets and distortion types. For in- stance, on CIF AR-100 under additi ve and JPEG noise, TIA- Cam improv es T op-1 accuracy by o ver 7% compared to the best baseline, and on lar ge-scale datasets such as Caltech- 256, it maintains o ver 80% T op-1 accuracy e ven under joint distortions. This demonstrates that the proposed model not only learns in variant representations b ut also preserves their semantic discriminability , enabling robust generaliza- tion across content and domain variations. 4.3. W atermarking under Camera Distortions W e next ev aluate the robustness of TIA Cam ’ s watermark extraction under real-world capture conditions, where opti- cal artifacts and complex image transformations often de- grade con ventional watermarking systems. As illustrated in Fig. 3 , we consider three representative scenarios: screen camera capture, print camera capture, and screenshot (all with v aried backgrounds), to comprehensiv ely assess both camera-induced and user-generated distortions. T able 2 summarizes the results. Remarkbly , unlike prior meth- ods [ 24 ] that rely on a detection stage to localize the wa- Figure 4. Linear probe (T op 1 & 5 accurac y) on CIF AR-100, Ima- genette, MSCOCO, and Caltech-256 under six camera distortions. termarked region before extraction, our approach directly processes the entire image. Owing to the high robustness of the learned in variant feature space, TIA Cam achiev es re- liable extraction without explicit localization. This design choice simplifies deployment and still delivers state-of-the- art accuracy in challenging real cases; incorporating a de- tection step would further enhance performance. Screen Camera. W e first ev aluate the pre valent case of display recapture, where watermarked images are displayed on a monitor and re-captured using a mobile camera. This setting introduces comple x degradations including perspec- tiv e warping, illumination shifts, sensor noise, and color imbalance. Evaluated on ov er three hundreds of screen- captured testing images, TIA Cam achiev es nearly perfect extraction with 99% and 98% bit accuracy for 30- and 100- bit messages, respectiv ely . Competing methods such as HiDDeN [ 30 ], PIMoG [ 6 ], and StegaStamp [ 24 ] show sub- stantial degradation, highlighting that TIA Cam’ s feature- space inv ariance provides robustness beyond pixel-le vel watermarking. Print Camera. W e next examine robustness under real physical recapture, where printed images are re- photographed under div erse lighting and viewpoint con- ditions. Across ov er 200 printed-and-recaptured samples, TIA Cam maintains accurate reco very: 96.6% and 95.1% for 30- and 100-bit messages, respecti vely , significantly outper- forming all baselines. These results demonstrate that the proposed TIACam features preserve alignment across imag- ing pipelines, ensuring consistent watermark integrity even after multiple optical transformations. Screenshots. T o further assess resilience to user- generated edits, we applied the augmentation strategy of [ 7 ] to a large set of screenshots and meme-style crops with varied backgrounds. TIA Cam achieves 97.4% and 95.2% bit accuracy for 30- and 100-bit messages, re- spectiv ely , demonstrating exceptional robustness against uncontrolled distortions and semantic edits. Even without region detection, the model successfully extracts water- marks from entire images, confirming the discriminative power of our in variant feature space. Figure 5. Examples of real-world distortions ev aluated in this study . The first row sho ws screen-camera captures, where images are re-photographed from different monitors using different mobile cameras. The second row shows print-camera captures, obtained by printing images on paper and re-capturing them under varying lighting and viewpoints. The third row sho ws screenshots, representing user-generated distortions such as compositional clutter . T able 2. Bit accuracy (BA, in %) comparison of HiDDeN, PI- MoG, StegaStamp, and TIA Cam under three real-world distortion types: Screen Camera, Print Camera, and Screenshots, for 30-bit and 100-bit message lengths. Results averaged over three random seeds; variance belo w 0.3%. Method Screen Camera Print Camera Scr eenshots 30 bits 100 bits 30 bits 100 bits 30 bits 100 bits HiDDeN 70.6% 68.8% 67.1% 65.7% 74.5% 70.6% PIMoG 82.3% 80.1% 75.7% 72.3% 79.7% 78.6% StegaStamp 93.8% 91.2% 92.2% 91.3% 93.7% 93.9% TIA Cam 99.1% 98.2% 96.6% 95.1% 97.4% 95.2% 4.4. Ablation Studies Effectiveness of TIA Cam Featur e Extractor . W e per- form ablation experiments to validate that the robustness of TIACam does not originate from the pretrained CLIP backbone but from our proposed inv ariant feature learning framew ork. Specifically , we compare (1) CLIP Baseline, which directly uses the CLIP image encoder , and (2) CLIP + TIA Cam Inv ariant Feature Extractor, which incorporates our proposed refinement module trained under adversarial auto-augmentation. W e ev aluate feature robustness on 10k testing images from each of four datasets: V isual Genome, Flickr , MSCOCO, and ImageNet, under all six distortions randomly sampled from the camera-distortion pipeline. The av erage cosine similarity between features of original and distorted images is reported in T able 3 . TIA Cam substan- tially enhances stability , improving cosine similarity by ap- proximately 13–15% across datasets. This confirms that the learned in variance arises from our framework rather than from CLIP’ s pretrained representations. Distinctiveness of TIA Cam Image Feature Space. T o examine the balance between inv ariance and distinctive- ness, we design extreme cases where two visually differ - ent images share the same caption, such as “A photo of cat, T able 3. Ablation on TIA Cam feature extractor . Higher cosine similarity indicates stronger in variance under distortion. Dataset CLIP Only CLIP + TIA Cam F eature V isual Genome 0.78 0.92 Flickr 0.84 0.93 MSCOCO 0.76 0.89 ImageNet 0.82 0.93 person, and park bench” in Fig. 6 . W e embed a 100-bit wa- termark using Image 1 and attempt extraction from Image 2 and from the text embedding directly . T o quantify this phenomenon, we generate 200 image pairs using text-to-image synthesis with identical captions. Only Image 1 yields perfect recovery (100% bit accuracy), while extraction from Image 2 and text features drops to an av erage of 84%. The a verage cosine similarity between Im- age 1 and 2 in variant features is 0.73, indicating that our model maintains strong feature distinctiv eness ev en under identical te xt descriptions. During training, the text anchors hav e mixed granularity , which encourages the model to learn both inv ariance to distortions and separability across distinct visual instances. These results confirm that TIA- Cam ef fectiv ely balances semantic in variance and visual in- dividuality: achieving text-guided consistency without col- lapsing visually distinct samples. Figure 6. Extreme case test of feature distincti veness. Both images are generated with the same caption, “A photo of cat, person, and park benc h” , yet their in variant features exhibit a cosine similarity of only 0.73. 5. Conclusion W e presented TIACam, a framew ork for camera-robust zero-watermarking. It features a nov el learnable auto- augmentor that models camera-like distortions, a novel text- anchored in variant feature extractor trained in a three-way adversarial loop, and demonstrated state-of-the-art robust- ness across real camera and related distortions. References [1] Muhammad Ahtesham and Xin Zhong. T ext-Guided Im- age In variant Feature Learning for Rob ust Image W atermark- ing. https :/ /arxiv. org/ abs/2503 .13805 , 2025. arXiv Preprint. 2 [2] Adrien Bardes, Jean Ponce, and Y ann LeCun. V i- CReg: V ariance-In variance-Co variance Regularization for Self-Supervised Learning. https://arxiv.org/abs / 2105.04906 , 2021. arXi v Preprint. 7 [3] Mathilde Caron, Hugo T ouvron, Ishan Misra, Herv ´ e J ´ egou, Julien Mairal, Piotr Bojanowski, and Armand Joulin. Emerg- ing Properties in Self-Supervised V ision Transformers. In ICCV , pages 9650–9660, 2021. 1 , 2 [4] T ing Chen, Simon Kornblith, Mohammad Norouzi, and Ge- offre y Hinton. A Simple Framew ork for Contrasti ve Learn- ing of V isual Representations. In ICML , pages 1597–1607, 2020. 6 [5] Jia Deng, W ei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. ImageNet: A Large-Scale Hierarchical Image Database. In 2009 IEEE Confer ence on Computer V ision and P attern Recognition , pages 248–255, 2009. 6 [6] Han Fang, Zhaoyang Jia, Zehua Ma, Ee-Chien Chang, and W eiming Zhang. PIMoG: An Effecti ve Screen-Shooting Noise-Layer Simulation for Deep-Learning-Based W ater- marking Network. In ACM MM , pages 2267–2275, 2022. 1 , 2 , 7 [7] Pierre Fernandez, Alexandre Sablayrolles, T eddy Furon, Herv ´ e J ´ egou, and Matthijs Douze. W atermarking Images in Self-Supervised Latent Spaces. In ICASSP 2022 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pages 3054–3058, 2022. 2 , 7 [8] Atoany Fierro-Radilla, Mariko Nakano-Miyatake, Manuel Cedillo-Hernandez, Laura Cleofas-Sanchez, and Hector Perez-Meana. A Robust Image Zero-W atermarking Using Con volutional Neural Networks. In IWBF , pages 1–5, 2019. 1 , 2 [9] G Griffin, A Holub, and P Perona. Caltech 256 (1.0)[data set]. CaltechD A TA. doi , 10:D1, 2022. 6 [10] Jean-Bastien Grill, Florian Strub, Florent Altch ´ e, Corentin T allec, Pierre Richemond, Elena Buchatskaya, Carl Doersch, Bernardo A vila Pires, Zhaohan Guo, and Mohammad Ghesh- laghi Azar . Bootstrap Y our Own Latent: A New Approach to Self-Supervised Learning. Advances in Neural Information Pr ocessing Systems , 33:21271–21284, 2020. 6 [11] Hengchang Guo, Qilong Zhang, Junwei Luo, Feng Guo, W enbin Zhang, Xiaodong Su, and Minglei Li. Practical Deep Dispersed W atermarking W ith Synchronization and Fusion. In Proceedings of the 31st ACM International Conference on Multimedia , pages 7922–7932, 2023. 2 [12] Baoru Han, Han W ang, Dawei Qiao, Jia Xu, and T ianyu Y an. Application of zero-watermarking scheme based on swin transformer for securing the metav erse healthcare data. IEEE journal of biomedical and health informatics , 28(11): 6360–6369, 2024. 2 [13] Lingqiang He, Zhouyan He, T ing Luo, and Y ang Song. Shrinkage and Redundant Feature Elimination Network- Based Rob ust Image Zero-W atermarking. Symmetry , 15(5): 964, 2023. 2 [14] Eric Jang, Shixiang Gu, and Ben Poole. Categori- cal Reparametrization With Gumbel-Softmax. In Inter- national Confer ence on Learning Repr esentations (ICLR 2017) , 2017. 3 [15] Zhaoyang Jia, Han Fang, and W eiming Zhang. MBRS: En- hancing Robustness of DNN-Based W atermarking by Mini- Batch of Real and Simulated JPEG Compression. In ACM MM , pages 41–49, 2021. 2 [16] Nishan Khatri, Agnibh Dasgupta, Y ucong Shen, Xin Zhong, and Frank Y . Shih. Perspective Transformation Layer. In 2022 International Conference on Computational Science and Computational Intelligence (CSCI) , pages 1395–1401, 2022. 3 [17] Ranjay Krishna, Y uke Zhu, Oliver Groth, Justin Johnson, Kenji Hata, Joshua Kravitz, Stephanie Chen, Y annis Kalan- tidis, Li-Jia Li, and David A. Shamma. V isual Genome: Con- necting Language and V ision Using Crowdsourced Dense Image Annotations. International J ournal of Computer V i- sion , 123(1):32–73, 2017. 6 [18] Daesoo Lee and Erlend Aune. VIBCReg: V ariance- In variance-Better-Co variance Regularization for Self- Supervised Learning on Time Series. https : / / arxiv . org / abs / 2109 . 00783 , 2021. arXiv Preprint. 7 [19] Can Li, Hua Sun, Changhong W ang, Sheng Chen, Xi Liu, Y i Zhang, Na Ren, and Deyu T ong. ZWNet: A Deep-Learning- Powered Zero-W atermarking Scheme W ith High Rob ustness and Discriminability for Images. Applied Sciences , 14(1): 435, 2024. 2 [20] Tsung-Y i Lin, Michael Maire, Serge J. Belongie, Lubomir D. Bourdev , Ross B. Girshick, James Hays, Pietro Perona, Dev a Ramanan, Piotr Doll ´ ar , and C. Lawrence Zitnick. Microsoft COCO: Common Objects in Context. https: // arxiv. org/abs/1405.0312 , 2014. arXi v Preprint. 6 [21] Gaozhi Liu, Silu Cao, Zhenxing Qian, Xinpeng Zhang, Sheng Li, and W anli Peng. W atermarking One for All: A Robust W atermarking Scheme Against Partial Image Theft. In Proceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , pages 8225–8234, 2025. 2 [22] Bryan A Plummer , Liwei W ang, Chris M Cerv antes, Juan C Caicedo, Julia Hockenmaier, and Svetlana Lazeb- nik. Flickr30k entities: Collecting region-to-phrase corre- spondences for richer image-to-sentence models. In Pr o- ceedings of the IEEE international confer ence on computer vision , pages 2641–2649, 2015. 6 [23] Stanislav Semenov . Smooth Approximations of the Round- ing Function. https : / / arxiv . org / abs / 2504 . 19026 , 2025. arXi v Preprint. 3 [24] Matthew T ancik, Ben Mildenhall, and Ren Ng. StegaStamp: In visible Hyperlinks in Physical Photographs. In CVPR , pages 2117–2126, 2020. 1 , 2 , 7 [25] Abdullah All T anvir and Xin Zhong. InvZW : Inv ariant Fea- ture Learning via Noise-Adv ersarial T raining for Robust Im- age Zero-W atermarking. https : / / arxiv . org / abs / 2506.20370 , 2025. arXi v Preprint. 2 , 5 [26] Naftali Tishby and Noga Zaslavsk y . Deep Learning and the Information Bottleneck Principle. In 2015 IEEE Information Theory W orkshop (ITW) , pages 1–5, 2015. 4 [27] V edran V ukoti ´ c, V ivien Chappelier , and T eddy Furon. Are Classification Deep Neural Networks Good for Blind Image W atermarking? Entr opy , 22(2):198, 2020. 2 [28] Guanjie W ang, Zehua Ma, Chang Liu, Xi Y ang, Han Fang, W eiming Zhang, and Nenghai Y u. MUST: Robust Image W atermarking for Multi-Source Tracing. In AAAI , pages 5364–5371, 2024. 2 [29] Jure Zbontar , Li Jing, Ishan Misra, Y ann LeCun, and St ´ ephane Deny . Barlow T wins: Self-Supervised Learning via Redundancy Reduction. In International Confer ence on Machine Learning , pages 12310–12320, 2021. 6 [30] Jiren Zhu, Russell Kaplan, Justin Johnson, and Li Fei-Fei. HiDDeN: Hiding Data With Deep Networks. In ECCV , pages 657–672, 2018. 2 , 3 , 7 TIA Cam: T ext-Anchor ed In variant F eature Learning with A uto-A ugmentation f or Camera-Robust Zero-W atermarking Supplementary Material S1. T raining Data Diversity and Caption Examples T o illustrate the div ersity of text-image supervision used during training, we provide qualitative examples from the V isual Genome and Flickr30k datasets in Fig. 1 and Fig. 2 . V isual Genome contains short, object-centric re- gion descriptions, often listing entities or attributes, while Flickr30k provides full natural sentences with richer struc- ture and contextual detail. Despite this difference in cap- tion granularity and linguistic style, TIA Cam trains stably on the combined dataset and produces consistent in variant features. This demonstrates that the model is not tied to a particular caption format and that its learned feature space generalizes across heterogeneous annotation styles, help- ing reduce dataset-specific bias and improving robustness in multimodal settings. S2. Realism of the Learned A uto-A ugmentor Distortions T o illustrate the realism and div ersity of the distortions learned by our auto-augmentor , Fig. 3 presents represen- tativ e examples. Each row shows the original input fol- lowed by distortions synthesized by the learned modules, including perspective deformation, photometric shifts, addi- tiv e noise, filtering artifacts, JPEG degradation, and Moir ´ e interference. These visualizations sho w that the auto-augmentor pro- duces distortions characteristic of real camera pipelines: such as chromatic imbalance, sensor noise, edge warping, and Moir ´ e patterns, rather than simple digital corruptions. The close resemblance between these synthesized distor- tions and actual screen- and print-recapture ef fects supports our claim that the learned augmentations ef fectively model camera perturbations, enabling TIA Cam to learn in variance that transfers robustly to real-w orld camera scenarios. S3. Additional Ablation Study: Learned A uto- A ugmentor vs. Manual Distortions T o assess the impact of the learnable auto-augmentor , we replace it with a fixed set of hand-crafted distortions while keeping the feature extractor and training objectives un- changed. W e then compare cosine similarity between in- variant features from original and distorted images. As shown in T ables 1 and 2 , the manual augmentor produces noticeably lower similarity across both synthetic distor- tions and real capture settings (screen camera, print camera, and screenshots). In contrast, the learned auto-augmentor consistently achie ves much higher similarity , indicating stronger distortion-in variant feature alignment. T able 1. Cosine similarity between original and distorted in variant features across six distortion types. Distortion T ype Manual Augmentor (Fixed) A uto-A ugmentor (Ours) Additiv e Noise 0.84 0.98 Photometric 0.86 0.96 Perspectiv e 0.78 0.92 JPEG Compression 0.88 0.97 Moir ´ e Pattern 0.76 0.89 Filtering 0.89 0.98 T able 2. Cosine similarity between original and distorted in variant features across Screen Camera, Print Camera, Screenshot Distortion T ype Manual A ugmentor (Fixed) A uto-Augmentor (Ours) Screen Camera 0.82 0.91 Print Camera 0.76 0.87 Screenshot 0.78 0.85 W e further compare watermark extraction performance under real camera distortions. T able 3 reports bit accu- racy for 30-, 100-, and 200-bit payloads under randomly se- lected six distortions out of Additi ve, Photometric, Perspec- tiv e, JPEG, Moir ´ e, and Filtering Noise. Consistent with the feature-lev el results, models trained with manual distortions degrade as the message length increases, while the learned auto-augmentor maintains high accuracy across all payload sizes. T able 3. Bit accuracy (%) under randomly applied 6 distortions. Model V ariant 30-bit 100-bit 200-bit Manual Augmentor (Fixed) 91.2 89.5 89.3 Auto-Augmentor (Ours) 98.7 96.4 96.1 T able 4 provides a breakdown by ev aluating the same two models under three specific capture pipelines: screen camera, print camera, and screenshots. The manual aug- mentor exhibits notable drops, especially under print- camera and high-bit scenarios, while the auto-augmentor consistently achiev es strong accuracy across all conditions. T able 4. Bit accuracy (%) of Manual Augmentor and Auto Aug- mentor under screen camera, print camera, and screenshot distor- tions (30-bit and 100-bit). Method Screen Camera Print Camera Screenshots 30 bits 100 bits 30 bits 100 bits 30 bits 100 bits Manual Augmentor 93.3% 92.6% 90.1% 87.4% 92.7% 87.9% A uto A ugmentor (Ours) 99.1% 98.4% 96.9% 95.3% 97.6% 96.5% These results show that the learned auto-augmentor pro- vides stronger and more realistic distortion modeling than fixed operators. By better approximating physical camera artifacts, the auto-augmentor enables TIACam to learn more Figure 1. Example of V isual Gnome Training Data used in TIA Cam. Figure 2. Example of Flickr training data used in TIACam. The first row sho ws the images, the second row contains the corresponding captions, and the third row presents paraphrased v ersions of those captions. stable in variant features and substantially improves real- world watermark rob ustness. S4. Comprehensiv e Comparison with Existing Digital and Zero-W atermarking Methods The main paper focuses on camera-based ev aluations, where TIA Cam shows clear adv antages o ver learning-based watermarking baselines. For completeness, we further com- pare TIA Cam with a broader set of digital watermarking and zero-watermarking systems under digital appearance distor- tions, geometric transformations, and real camera capture. These supplementary results confirm that the robustness of TIA Cam extends well beyond the camera setting. T able 5 reports bit accuracy under a range of common digital perturbations. While pretrained feature-based zero- watermarking approaches remain reliable under mild set- tings, they degrade substantially under geometric or se vere appearance changes such as rotation, heavy cropping, blur, and screenshot artifacts. In contrast, TIA Cam maintains consistently high accuracy across all digital distortions, highlighting the benefit of learning distortion-in variant fea- tures rather than relying solely on pretrained representa- tions. W e next compare watermarking systems under geomet- ric transformations in T able 6 . Non-camera-oriented dig- ital watermarking approaches (DWSF , MuST , WOF A) ex- perience substantial performance drops as geometric sev er- ity increases, particularly under large rotations and scale changes. TIA Cam maintains high accuracy across all ge- T able 5. Bit accuracy (%) under digital distortions. Distortion ZBW [ 27 ] WSSL [ 7 ] TIACam Identity 1.00 1.00 1.00 Rotation (25°) 0.27 1.00 1.00 Crop (0.5) 1.00 1.00 0.98 Crop (0.1) 0.02 0.98 1.00 Resize (0.7) 1.00 1.00 1.00 Blur (2.0) 0.25 1.00 1.00 JPEG (50) 0.96 0.97 0.99 Brightness (2.0) 0.99 0.96 0.97 Contrast (2.0) 1.00 1.00 1.00 Hue (0.25) 1.00 1.00 1.00 Screenshot 0.86 0.97 0.99 ometric settings, demonstrating strong inv ariance to spatial misalignment and confirming the stability of the learned in- variant feature space. T able 6. Bit accuracy (%) under geometric distortions. Distortion D WSF [ 11 ] MuST [ 28 ] WOF A [ 21 ] TIA Cam Translation 10% 52.97 50.00 91.97 93.9 Translation 25% 49.87 49.98 93.25 92.1 Translation 50% 49.92 49.74 87.93 90.3 Rotation 15° 50.21 49.79 95.26 99.2 Rotation 30° 49.74 49.73 94.24 97.8 Rotation 45° 49.60 49.82 90.63 95.5 Scaling ± 10% 53.30 49.98 95.72 97.4 Scaling ± 20% 51.78 49.99 95.50 96.1 Scaling ± 25% 51.08 50.00 95.02 93.4 Finally , T able 7 ev aluates insertion-based watermarking methods (DWSF , WOF A, MuST) under real camera distor- tions. Although these systems are effecti ve under purely digital perturbations, they degrade sharply in real capture scenarios. TIA Cam consistently achiev es higher extrac- tion accurac y across bit lengths and distortion types, further Figure 3. Examples of auto-augmented images. demonstrating its strong generalization to physical-world artifacts. T able 7. Bit accuracy (%) of D WSF , WOF A, MuST , and TIA Cam under screen camera, print camera, and screenshot distortions (30- bit and 100-bit). Method Screen Camera Print Camera Screenshots 30 bits 100 bits 30 bits 100 bits 30 bits 100 bits DWSF 77.4% 71.8% 69.4% 65.1% 64.9% 62.8% MuST 81.8% 80.6% 74.9% 72.3% 65.3% 64.1% WOF A 86.7% 81.1% 71.4% 70.2% 67.7% 64.8% TIA Cam 99.1% 98.2% 96.6% 95.1% 97.4% 95.2% Overall, these supplementary ev aluations demonstrate that TIACam provides state-of-the-art robustness not only in camera-based watermarking but also across a broad spectrum of digital and geometric distortions, confirming the general effecti veness of its distortion-in variant feature learning framew ork. S5. Architectural Details Architectur e of the A uto-A ugmentation Modules. TIA- Cam auto-augmentor contains six learnable distortion mod- ules: Additiv e, Photometric, Filtering, JPEG, Moir ´ e, and Perspectiv e, that jointly approximate realistic camera degra- dations. The Additiv e and Photometric modules share the same architecture: a 512-dimensional latent vector is expanded into a 8 × 8 × 128 tensor through a fully- connected layer, followed by four Con vTranspose2d up- sampling blocks that generate a full-resolution residual map using ReLU acti vations and a final T anh layer . The Filtering module uses a 3-layer MLP (hidden size 256) to predict a k × k point-spread function ( k = 3 ), enforced to be non- negati ve via softplus and normalized to sum to one, then applied via depth-wise con volution. Both JPEG and Moir ´ e modules adopt a U-Net with four encoder le vels (64, 128, 256, 512 channels) and a 1024-channel bottleneck, paired with symmetric transposed-con volution decoders and skip connections; each predicts a residual that is added to the input image, enabling artifact-specific learning (compres- sion ringing for JPEG, aliasing patterns for Moir ´ e). Finally , the Perspecti ve module uses a ResNet-18 encoder (with the classification layer removed), followed by a 3-layer MLP (256 → 128 → 9 units) that regresses a 3 × 3 homogra- phy matrix to simulate geometric warping. T ogether , these six differentiable modules capture noise, blur , photometric variation, JPEG compression, moir ´ e interference, and per- spectiv e distortions observed in real camera pipelines. Architectur e of the Featur e Extractor and Discrimi- nator . TIACam uses a lightweight MLP-based feature extractor together with a transformer-based discriminator to learn in variant alignment between image–text represen- tations. The feature extractor takes a 768-dimensional CLIP embedding and processes it through an initial Lin- ear–BatchNorm–ReLU–Dropout layer that expands the rep- resentation to 1024 units. This is followed by three Resid- ualBlocks, each containing two Linear layers with Batch- Norm1d, ReLU activ ation, dropout (0.1), and a skip con- nection. A fusion block (Linear–BN–ReLU–Dropout) re- fines the intermediate representation, after which a pro- jection head first reduces the dimensionality to 512 (Lin- ear–BN–ReLU–Dropout) and then maps it to the final 1024- dimensional in variant space through a Linear–BatchNorm layer . Optional ℓ 2 normalization is applied at the out- put. Overall, the feature extractor forms a 6-layer residual MLP designed to stabilize training and produce distortion- in variant embeddings. The discriminator jointly processes the e xtracted image and text features to classify whether the pair originates from a real or adversarially generated match. Both embeddings are projected into a shared 512-dimensional hidden space via a Linear layer . A learnable [CLS] token is prepended, forming a 3-tok en sequence: [CLS] , image token, and text token. This sequence is passed through four stacked Trans- formerBlocks, each consisting of LayerNorm, an 8-head MultiheadAttention module, and a feed-forward MLP with GELU acti v ation and dropout, with residual connections af- ter both attention and MLP sub-layers. A final LayerNorm is applied to the output sequence, and the representation of the [CLS] token is fed into a fully connected layer to produce the 2-way classification logits. This combined ar- chitecture enables robust adversarial learning by aligning in variant features through the extractor while enforcing se- mantic consistency through the discriminator . S6. Detailed Linear Pr obe Results T able 8 provides the full numerical results corresponding to the linear probe comparisons summarized in Fig. 4 of the main paper . W e report T op-1 and T op-5 accuracy for SimCLR, BYOL, Barlow T wins, VICReg, VIbCReg, and TIA Cam across four datasets: CIF AR-100, Imagenette, MSCOCO, and Caltech-256, under six distortion types drawn from our camera-style pipeline (additiv e noise, pho- tometric shift, perspective warp, JPEG compression, Moir ´ e interference, and filtering noise). These expanded results confirm the trend observed in the main paper: TIACam consistently achieves the highest lin- ear separability across all datasets and distortion categories. In particular, TIA Cam shows large gains under geometric and appearance-heavy distortions, demonstrating that the learned inv ariant features retain strong semantic structure ev en under sev ere perturbations. S7. Semantic Sensitivity under In variance This experiment complements the “same-caption” analysis in the main paper by examining additional scenario: two visually similar images that differ only in subtle semantic details of their captions. Among 200 such image-caption pairs (e.g., “a photo of cat, child, park bench” vs. “a photo of dog, child, park bench” in Fig. 4 ), TIACam maintains strong alignment for true image-text pairs, achieving an a verage cosine similarity of 0.91. In contrast, ev en small changes in the caption reduce similarity to 0.70 on av erage. This behavior confirms that TIACam is not merely in- variant to distortions but also sensitiv e to semantic cues provided by te xt anchors. The in variant feature space pre- serves visual robustness while retaining the ability to dis- ambiguate fine-grained linguistic dif ferences, ensuring that each image remains both uniquely represented and seman- tically grounded. Figure 4. Semantic disambiguation using controlled captions. Although the two images are visually similar, the model distin- guishes them through small caption differences (e.g., “cat” vs. “dog”), yielding higher similarity for matching image–text pairs and lower similarity for mismatched ones. S8. Failur e Cases and Limitations TIA Cam is designed for content-preserving distortions: perturbations that may alter the visual appearance but do not change the underlying semantic meaning of the image, which is typically the case for real camera-based watermark extraction. Howe ver , when this assumption is violated, the in variant feature space may no longer be recoverable, lead- ing to failures in watermark e xtraction. Figure 5. Example failure case caused by sev ere occlusion. More than tw o-thirds of the image content is co vered, removing or alter - ing the semantic cues required by TIA Cam to recover the in variant representation. Because the watermark is bound to the image’ s un- derlying semantic meaning, a distortion that destroys or changes that meaning essentially yields a new image, making successful extraction impossible. A representati ve failure mode occurs under sev ere oc- clusion or content remov al. When a substantial portion of the image is blocked (empirically , more than two-thirds of the content; see Fig. 5 ), the semantic cues required by the T able 8. Linear e valuation (T op-1 / T op-5 accuracy) on CIF AR-100, Imagenette, MSCOCO, and Caltech-256 under six distortion types. Dataset Method Additive Photometric Perspectiv e JPEG Moir ´ e Filtering T op-1 T op-5 T op-1 T op-5 T op-1 T op-5 T op-1 T op-5 T op-1 T op-5 T op-1 T op-5 CIF AR-100 SimCLR 72.1 83.3 71.0 79.1 70.5 86.7 62.4 76.5 70.2 81.9 71.3 86.8 BY OL 75.5 89.2 73.4 88.1 72.6 87.3 61.7 78.0 74.0 86.2 73.9 87.1 Barlow T wins 71.8 88.7 69.6 85.7 72.9 86.9 65.8 77.6 75.5 87.0 78.2 86.6 VICReg 74.2 84.5 70.9 90.4 73.9 87.4 65.1 78.6 71.3 83.7 78.1 87.5 VIbCReg 72.9 88.5 72.9 88.4 76.9 87.4 66.1 82.6 76.3 81.7 77.1 87.5 TIA Cam 81.5 95.1 77.7 94.2 83.8 93.6 73.2 85.0 83.9 89.8 82.6 93.4 Imagenette SimCLR 65.3 80.0 73.1 83.2 72.2 87.5 73.0 88.1 71.7 86.8 72.5 87.3 BY OL 72.2 86.1 72.0 83.0 73.0 88.2 73.8 88.9 72.4 87.1 73.2 87.9 Barlow T wins 72.7 87.5 74.6 86.6 72.5 87.8 73.4 88.3 72.0 87.0 72.8 87.5 VICReg 75.6 90.3 74.4 87.4 73.4 88.5 74.1 89.0 72.7 87.4 73.5 88.1 VIbCReg 74.3 85.1 72.6 84.4 76.9 87.4 66.1 88.6 76.3 81.7 74.7 84.5 TIA Cam 82.0 95.4 81.2 94.6 80.3 93.9 81.0 94.2 79.8 93.1 80.5 93.6 MSCOCO SimCLR 71.5 87.6 70.7 86.9 70.2 86.3 71.0 84.1 77.0 81.8 70.8 86.5 BY OL 72.3 88.2 71.4 87.5 70.8 86.8 71.6 87.6 79.5 86.2 71.3 86.9 Barlow T wins 71.9 87.9 71.1 87.2 70.5 86.6 74.3 83.4 75.2 86.0 71.0 86.7 VICReg 74.7 88.5 71.7 87.8 71.0 87.1 75.9 85.9 78.7 83.5 71.5 87.2 VIbCReg 71.7 82.1 76.1 87.7 69.9 82.2 74.3 84.8 77.2 83.7 73.8 84.7 TIA Cam 80.8 94.3 79.9 93.6 79.0 92.8 79.6 93.2 81.4 92.0 79.1 92.6 Caltech-256 SimCLR 72.0 87.2 74.2 86.5 70.0 83.0 70.8 86.7 69.8 85.6 70.5 86.3 BY OL 71.8 89.8 78.0 87.1 73.6 86.5 71.4 88.3 70.2 86.0 71.0 86.6 Barlow T wins 70.5 86.5 77.7 86.9 70.3 87.3 71.1 85.1 68.9 85.8 70.7 86.5 VICReg 72.2 90.1 73.4 87.5 72.9 83.8 71.7 87.6 70.5 86.2 71.2 86.9 VIbCReg 73.7 87.7 74.1 85.1 73.9 87.4 73.3 87.5 68.2 83.7 72.5 84.5 TIA Cam 80.2 94.0 79.3 93.2 78.6 92.5 79.0 93.0 77.9 91.8 78.7 92.3 text anchor are no longer visible. In such cases, the feature extractor receiv es insufficient information to reco ver the in- tended in variant representation, causing the extracted fea- tures to drift away from the original manifold and leading to reduced or failed watermark decoding. This limitation is consistent with the design of TIA- Cam: the watermark is associated with the underlying se- mantics of the image rather than its pixel-lev el appearance. When those semantics are lar gely removed or destroyed, the model can no longer align the extracted feature with the reg- istered in variant signature. As a result, TIA Cam remains robust under content-preserving distortions but cannot re- cov er once the semantic content itself is lost or altered. In such cases, the distortion essentially produces a different new image in terms of meaning, and the model cannot align it with the original in variant signature.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment