Orthogonal polynomials on path-space

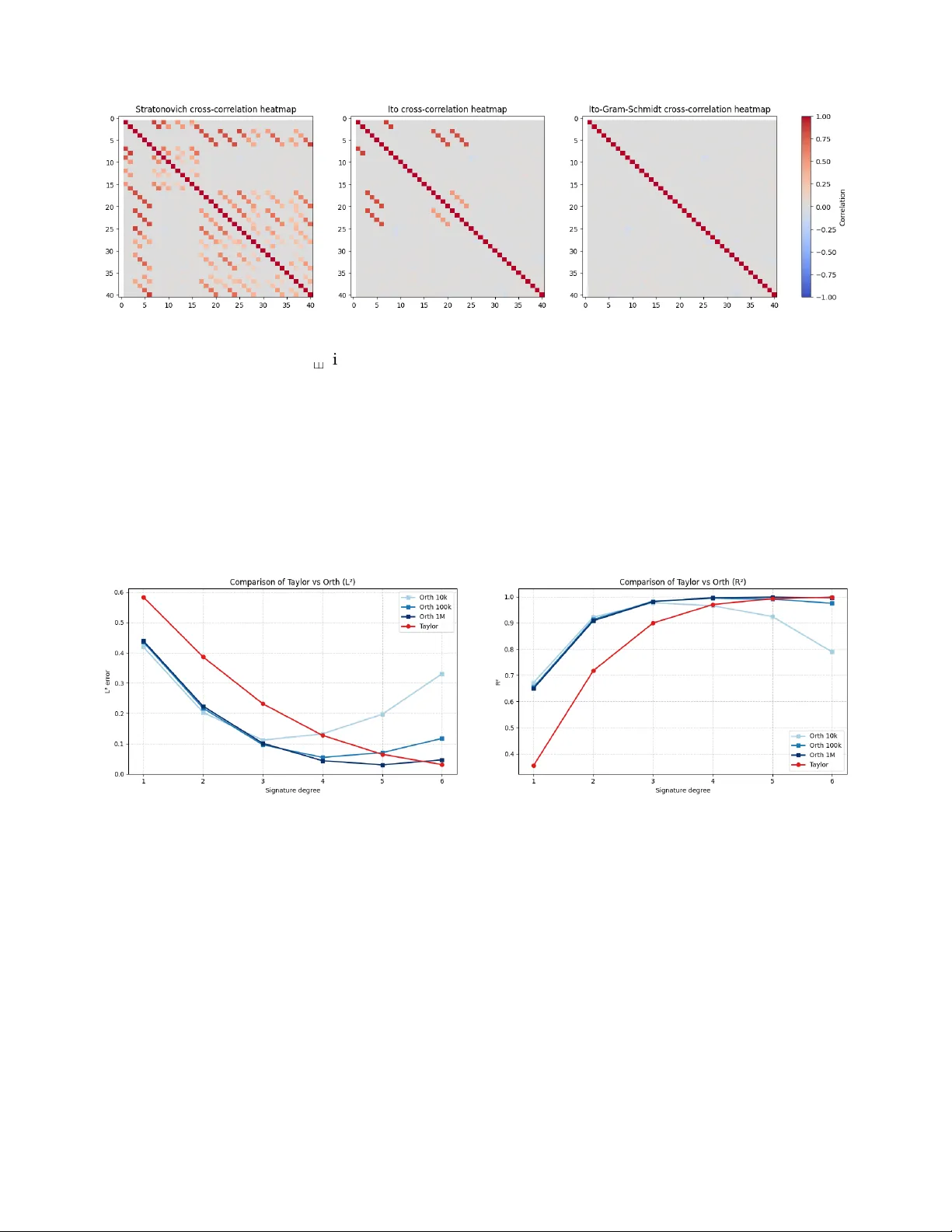

We consider the orthogonalisation of the signature of a stochastic process as the analogue of orthogonal polynomials on path-space. Under an infinite radius of convergence assumption, we prove density of linear functions on the signature in $L^p$ fun…

Authors: Ilya Chevyrev, Emilio Ferrucci, Darrick Lee