Depth-PC: A Visual Servo Framework Integrated with Cross-Modality Fusion for Sim2Real Transfer

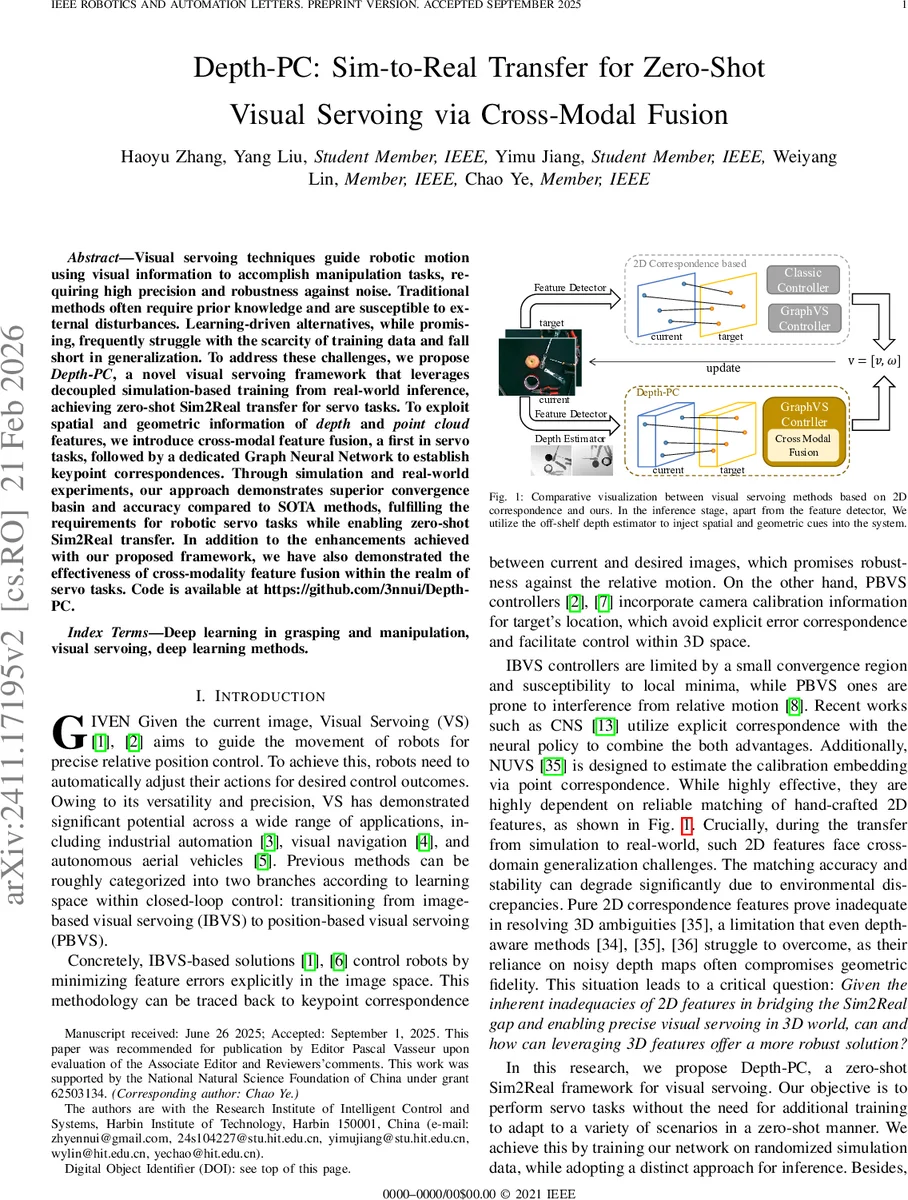

Visual servoing techniques guide robotic motion using visual information to accomplish manipulation tasks, requiring high precision and robustness against noise. Traditional methods often require prior knowledge and are susceptible to external disturbances. Learning-driven alternatives, while promising, frequently struggle with the scarcity of training data and fall short in generalization. To address these challenges, we propose Depth-PC, a novel visual servoing framework that leverages decoupled simulation-based training from real-world inference, achieving zero-shot Sim2Real transfer for servo tasks. To exploit spatial and geometric information of depth and point cloud features, we introduce cross-modal feature fusion, a first in servo tasks, followed by a dedicated Graph Neural Network to establish keypoint correspondences. Through simulation and real-world experiments, our approach demonstrates superior convergence basin and accuracy compared to SOTA methods, fulfilling the requirements for robotic servo tasks while enabling zero-shot Sim2Real transfer. In addition to the enhancements achieved with our proposed framework, we have also demonstrated the effectiveness of cross-modality feature fusion within the realm of servo tasks. Code is available at https://github.com/3nnui/Depth-PC.

💡 Research Summary

Depth‑PC introduces a novel visual‑servoing framework that achieves zero‑shot Sim2Real transfer by decoupling training (pure simulation) from inference (real‑world deployment). The core idea is to enrich the visual input with explicit 3D geometric cues—depth maps and point‑cloud data—and to fuse these modalities through a dedicated feature‑fusion pipeline before feeding them to a graph neural network (GNN) that predicts the 6‑DOF end‑effector velocity.

Data Generation and Pre‑processing

The authors use objects from the YCB‑Video dataset. For each object, a dense point cloud is obtained and then processed with the Hidden Points Removal (HPR) operator to emulate occlusions and visibility constraints that occur in real scenes. Random rotations, translations, and camera pose perturbations are applied, and the visible points are projected onto the image plane to obtain 2D keypoints together with normalized depth values (Z). Data augmentation introduces mismatched points, missing points, and uniform noise, thereby mimicking real‑world sensor imperfections. A graph is then built: intra‑cluster edges connect points within the same spatial cluster, while inter‑cluster edges link cluster centroids, capturing both local and global spatial relationships.

Cross‑Modal Feature Fusion

The fusion module consists of two components. First, a Feature Alignment Layer (FAL) maps the 2D coordinates and depth values into a common embedding space. Second, a Cluster Cross‑Attention mechanism computes an attention score matrix between the positional embeddings of each cluster and the depth embeddings of all points. Row‑wise softmax yields a depth‑to‑position attention matrix, and column‑wise softmax yields the opposite. The fused representation for a cluster is the concatenation of the depth‑weighted depth embedding and the position‑weighted positional embedding. This design preserves rich 3D information while keeping computational cost lower than full‑scale cross‑attention. The depth branch is also passed through a small MLP to produce depth embeddings (φ_Z) that later serve as distance‑correction factors.

Graph Neural Network and Velocity Head

The fused node embeddings and the edge embeddings are processed by a GNN (based on the architecture of

Comments & Academic Discussion

Loading comments...

Leave a Comment