Hybrid combinations of parametric and empirical likelihoods

This paper develops a hybrid likelihood (HL) method based on a compromise between parametric and nonparametric likelihoods. Consider the setting of a parametric model for the distribution of an observation $Y$ with parameter $θ$. Suppose there is als…

Authors: Nils Lid Hjort, Ian W. McKeague, Ingrid Van Keilegom

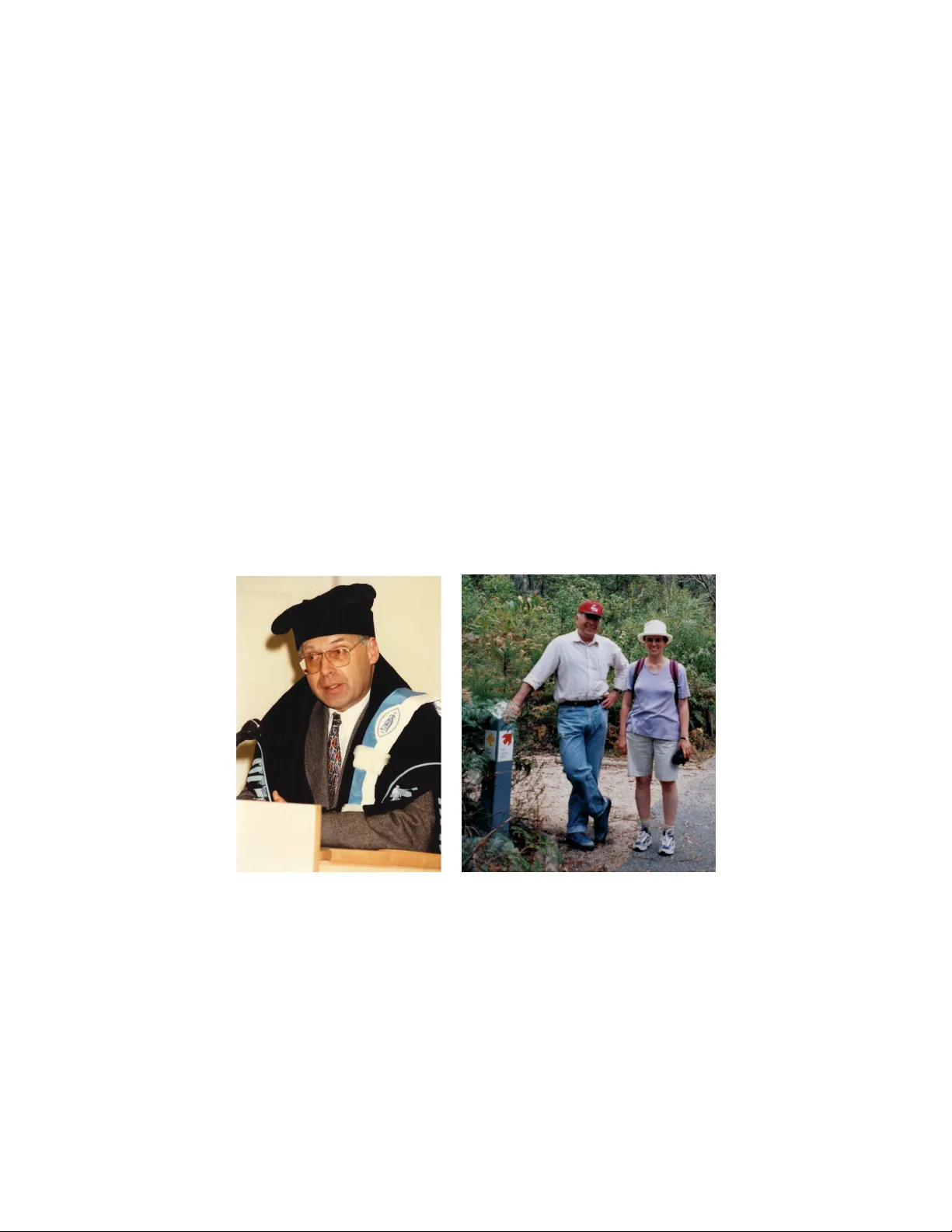

Nils Lid Hjort¹, Ian W. McKeague², and Ingrid Van Keilegom³

University of Oslo, Columbia University, and KU Leuven

July 2017

Abstract: This paper develops a hybrid likelihood (HL) method based on a compromise between parametric and nonparametric likelihoods. Consider the setting of a parametric model for the distribution of an observation $Y$ with parameter $\theta$. Suppose there is also an estimating function $m(\cdot,\mu)$ identifying another parameter $\mu$ via ${\rm E}\,m(Y,\mu)=0$, at the outset defined independently of the parametric model. To borrow strength from the parametric model while obtaining a degree of robustness from the empirical likelihood method, we formulate inference about $\theta$ in terms of the hybrid likelihood function $H_n(\theta)=L_n(\theta)^{1-a}R_n(\mu(\theta))^a$. Here $a\in[0,1)$ represents the extent of the compromise, $L_n$ is the ordinary parametric likelihood for $\theta$, $R_n$ is the empirical likelihood function, and $\mu$ is considered through the lens of the parametric model. We establish asymptotic normality of the corresponding HL estimator and a version of the Wilks theorem. We also examine extensions of these results under misspecification of the parametric model, and propose methods for selecting the balance parameter $a$.

Key words and phrases: Agnostic parametric inference, Focus parameter, Semiparametric estimation, Robust methods

Some personal reflections on Peter

We are all grateful to Peter for his deeply influential contributions to the field of statistics, in particular to the areas of nonparametric smoothing, bootstrap, empirical likelihood (what this paper is about), functional data, high-dimensional data, measurement errors, etc., many of which were major breakthroughs in the area. His services to the profession were also exemplary and exceptional.
It seems that he could simply not say ‘no’ to the many requests for recommendation letters, thesis reports, editorial duties, departmental reviews, and various other requests for help, and as many of us have experienced, he handled all this with an amazing speed, thoroughness, and efficiency. We will also remember Peter as a very warm, gentle, and humble person, who was particularly supportive of young people.

¹ N.L. Hjort is supported via the Norwegian Research Council funded project FocuStat.
² I.W. McKeague is partially supported by NIH Grant R01GM095722.
³ I. Van Keilegom is financially supported by the European Research Council (2016-2021, Horizon 2020, grant agreement No. 694409), and by IAP research network grant nr. P7/06 of the Belgian government (Belgian Science Policy).

Nils Lid Hjort: I have many and uniformly warm remembrances of Peter. We had met and talked a few times at conferences, and then Peter invited me for a two-month stay in Canberra in 2000. This was both enjoyable, friendly, and fruitful. I remember fondly not only technical discussions and the free-flowing of ideas on blackboards (and since Peter could think twice as fast as anyone else, that somehow improved my own arguing and thinking speed, or so I’d like to think), but also the positive, widely international, upbeat, but unstressed working atmosphere. Among the pluses for my Down Under adventures were not merely meeting kangaroos in the wild while jogging and singing Schnittke, but teaming up with fellow visitors for several good projects, in particular with Gerda Claeskens; another sign of Peter’s deep role in building partnerships and teams around him, by his sheer presence. Then Peter and Jeannie visited us in Oslo for a six-week period in the autumn of 2003.
For their first day there, at least Jeannie was delighted that I had put on my Peter Hall t-shirt and that I gave him a Hall of Fame wristwatch. For these Oslo weeks he was therefore elaborately introduced at seminars and meetings as Peter Hall of Fame; everyone understood that all other Peter Halls were considerably less famous. A couple of project ideas we developed together, in the middle of Peter’s dozens and dozens of other ongoing real-time projects, are still in my drawers and files, patiently awaiting completion. Very few people can be as quietly and undramatically supremely efficient and productive as Peter. Luckily most of us others don’t really have to, as long as we are doing decently well a decent proportion of the time. But once in a while, in my working life, when deadlines are approaching and I’ve lagged far behind, I put on my Peter Hall t-shirt, and think of him. It tends to work.

Figure 1: (a) Peter at the occasion of his honorary doctorate at the Institute of Statistics in Louvain-la-Neuve in 1997; (b) Peter and Ingrid Van Keilegom in Tidbinbilla Nature Reserve near Canberra in 2002 (picture taken by Jeannie Hall).

Ingrid Van Keilegom: I first met Peter in 1995 during one of Peter’s many visits to Louvain-la-Neuve (LLN). At that time I was still a graduate student at Hasselt University. Two years later, in 1997, Peter obtained an honorary doctorate from the Institute of Statistics in LLN (at the occasion of the fifth anniversary of the Institute), during which I discovered that Peter was not only a giant in his field but also a very human, modest, and kind person. Figure 1 (a) shows Peter at his acceptance speech. Later, in 2002, soon after I started working as a young faculty member in LLN, Peter invited me to Canberra for six weeks, a visit of which I have extremely positive memories.
I am very grateful to Peter for having given me the opportunity to work with him there. During this visit Peter and I started working on two papers, and although Peter was very busy with many other things, it was difficult to stay on top of all new ideas and material that he was suggesting and adding to the papers, day after day. At some point during this visit Peter left Canberra for a 10-day visit to London, and I (naively) thought I could spend more time on broadening my knowledge on the two topics Peter had introduced to me. However, the next morning I received a fax of 20 pages of hand-written notes, containing a difficult proof that Peter had found during the flight to London. It took me the full next 10 days to unravel all the details of the proof! Although Peter was very focused and busy with his work, he often took his visitors on a trip during the weekends. I enjoyed very much the trip to the Tidbinbilla Nature Reserve near Canberra, together with him and his wife Jeannie. A picture taken in this park by Jeannie is seen in Figure 1 (b).

After the visit to Canberra, Peter and I continued working on other projects and, in around 2004, Peter visited LLN for several weeks. I picked him up in the morning from the airport in Brussels. He came straight from Canberra and had been more or less 30 hours underway. I supposed without asking that he would like to go to the hotel to take a rest. But when we were approaching the hotel, Peter insisted that I would drive immediately to the Institute in order to start working straight away. He spent the whole day at the Institute discussing with people and working in his office, before going finally to his hotel! I always wondered where he found the energy, the motivation and the strength to do this. He will be remembered by many of us as an extremely hard working person, and as an example to all of us.

1 Introduction

For modelling data there are usually many options, ranging from purely parametric, semiparametric, to fully nonparametric. There are also numerous ways in which to combine parametrics with nonparametrics, say estimating a model density by a combination of a parametric fit with a nonparametric estimator, or by taking a weighted average of parametric and nonparametric quantile estimators. This article concerns a proposal for a bridge between a given parametric model and a nonparametric likelihood-ratio method. We construct a hybrid likelihood function, based on the usual likelihood function for the parametric model, say $L_n(\theta)$, with $n$ referring to sample size, and the empirical likelihood function for a given set of control parameters, say $R_n(\mu)$, where the $\mu$ parameters in question are “pushed through” the parametric model, leading to $R_n(\mu(\theta))$, say. Our hybrid likelihood $H_n(\theta)$, defined in (2) below, is used for estimating the parameter vector of the working model; we term the $\widehat\theta_{\rm hl}$ in question the maximum hybrid likelihood estimator. This in turn leads to estimates of other quantities of interest. If $\psi$ is such a focus parameter, expressed via the working model as $\psi=\psi(\theta)$, then it is estimated using $\widehat\psi_{\rm hl}=\psi(\widehat\theta_{\rm hl})$.

If the working parametric model is correct, these hybrid estimators lose a certain amount in terms of efficiency, when compared to the usual maximum likelihood estimator. We show, however, that the efficiency loss under ideal model conditions is typically a small one, and that the hybrid estimator often outperforms the maximum likelihood when the working model is not correct. Thus the hybrid likelihood is seen to offer parametric robustness, or protection against model misspecification, by borrowing strength from the empirical likelihood, via the selected control parameters.
Though our construction and methods can be lifted to e.g. regression models, see Section S.5 in the supplementary material, it is practical to start with the simpler i.i.d. framework, both for conveying the basic ideas and for developing theory. Thus, let $Y_1,\ldots,Y_n$ be i.i.d. observations, stemming from some unknown density $f$. We wish to fit the data to some parametric family, say $f_\theta(y)=f(y,\theta)$, with $\theta=(\theta_1,\ldots,\theta_p)^{\rm t}\in\Theta$, where $\Theta$ is an open subset of $\mathbb{R}^p$. The purpose of fitting the data to the model is typically to make inference about certain quantities $\psi=\psi(f)$, termed focus parameters, but without necessarily trusting the model fully. Our machinery for constructing robust estimators for these focus parameters involves certain extra parameters, which we term control parameters, say $\mu_j=\mu_j(f)$ for $j=1,\ldots,q$. These are context-driven parameters, selected to safeguard against certain types of model misspecification, and may or may not include the focus parameters. Suppose in general terms that $\mu=(\mu_1,\ldots,\mu_q)$ is identified via estimating equations, ${\rm E}_f\,m_j(Y,\mu)=0$ for $j=1,\ldots,q$. Now consider

$$R_n(\mu)=\max\Bigl\{\prod_{i=1}^n(nw_i)\colon \sum_{i=1}^n w_i=1,\ \sum_{i=1}^n w_i\,m(Y_i,\mu)=0,\ \text{each } w_i>0\Bigr\}. \tag{1}$$

This is the empirical likelihood function for $\mu$, see Owen (2001), with further discussions in e.g. Hjort et al. (2009) and Schweder & Hjort (2016, Ch. 11). One might e.g. choose $m(Y,\mu)=g(Y)-\mu$ for suitable $g=(g_1,\ldots,g_q)$, in which case the empirical likelihood machinery gives confidence regions for the parameters $\mu_j={\rm E}_f\,g_j(Y)$.
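As a concrete illustration of (1), the following minimal Python sketch evaluates $\log R_n(\mu)$ for the scalar choice $m(y,\mu)=y-\mu$. It relies on the standard Lagrange-multiplier form of the maximisation, $w_i=1/[n\{1+\lambda(Y_i-\mu)\}]$ with $\lambda$ solving $\sum_i (Y_i-\mu)/\{1+\lambda(Y_i-\mu)\}=0$ (see Owen, 2001). The function name `log_el_mean`, the bisection solver, and the toy data are assumptions made here for illustration and are not taken from the paper.

```python
import math

def log_el_mean(y, mu, iters=200):
    """Return (log R_n(mu), weights) for m(y, mu) = y - mu.

    Hypothetical helper: solves sum_i m_i / (1 + lam * m_i) = 0 for the
    Lagrange multiplier lam by bisection, then sets
    w_i = 1 / (n * (1 + lam * m_i)).
    """
    n = len(y)
    m = [yi - mu for yi in y]
    if not (min(m) < 0.0 < max(m)):
        # mu is outside the open range of the data: no positive weights exist
        return -math.inf, None
    # Every 1 + lam * m_i stays positive exactly on this open interval.
    lo, hi = -1.0 / max(m), -1.0 / min(m)
    for _ in range(iters):
        lam = 0.5 * (lo + hi)
        score = sum(mi / (1.0 + lam * mi) for mi in m)
        if score > 0.0:   # score decreases in lam, so the root lies to the right
            lo = lam
        else:
            hi = lam
    lam = 0.5 * (lo + hi)
    w = [1.0 / (n * (1.0 + lam * mi)) for mi in m]
    return sum(math.log(n * wi) for wi in w), w

# Toy data (made up for the illustration).
y = [0.2, 1.1, 1.9, 2.4, 3.0, 3.3, 4.1, 5.0]
ybar = sum(y) / len(y)
log_r_at_mean, _ = log_el_mean(y, ybar)   # R_n equals 1 at the sample mean
log_r, w = log_el_mean(y, 2.0)            # R_n drops below 1 away from it
print(log_r_at_mean, log_r, sum(w))
```

The returned weights satisfy both constraints in (1) up to solver tolerance, which gives a quick sanity check on any implementation of this kind.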
We can now introduce the hybrid likelihood (HL) function

$$H_n(\theta)=L_n(\theta)^{1-a}R_n(\mu(\theta))^a, \tag{2}$$

where $L_n(\theta)=\prod_{i=1}^n f(Y_i,\theta)$ is the ordinary likelihood, $R_n(\mu)$ is the empirical likelihood for $\mu$, but here computed at the value $\mu(\theta)$, which is $\mu$ evaluated at $f_\theta$, and with $a$ being a balance parameter in $[0,1)$. The associated maximum HL estimator is $\widehat\theta_{\rm hl}$, the maximiser of $H_n(\theta)$. If $\psi=\psi(f)$ is a parameter of interest, it is estimated as $\widehat\psi_{\rm hl}=\psi(f(\cdot,\widehat\theta_{\rm hl}))$. This means first expressing $\psi$ in terms of the model parameters, say $\psi=\psi(f(\cdot,\theta))=\psi(\theta)$, and then plugging in the maximum HL estimator. The general approach (2) works for multidimensional vectors $Y_i$, so the $g_j$ functions could e.g. be set up to reflect covariances. For one-dimensional cases, options include $m_j(Y,\mu_j)=I\{Y\le\mu_j\}-j/q$ ($j=1,\ldots,q-1$) for quantile inference.

The hybrid method (2) provides a bridge from the purely parametric to the nonparametric empirical likelihood. The $a$ parameter dictates the degree of balance. One can view (2) as a way for the empirical likelihood to borrow strength from a parametric family, and, alternatively, as a means of robustifying ordinary parametric model fitting by incorporating precision control for one or more $\mu_j$ parameters. There might be practical circumstances to assist one in selecting good $\mu_j$ parameters, or good estimating equations, or these may be singled out at the outset of the study as being quantities of primary interest.

Example 1. Let $f_\theta$ be the normal density with parameters $(\xi,\sigma^2)$, and take $m_j(y,\mu_j)=I\{y\le\mu_j\}-j/4$ for $j=1,2,3$. Then (2), with the ensuing $\mu_j(\xi,\sigma)=\xi+\sigma\Phi^{-1}(j/4)$ for $j=1,2,3$, can be seen as estimating the normal parameters in a way which modifies the parametric ML method by taking into account the wish to have good model fit for the three quartiles.
Alternatively, it can be viewed as a way of making inference for the three quartiles, borrowing strength from the normal family in order to hopefully do better than simply using the three empirical quartiles.

Example 2. Let $f_\theta$ be the Beta family with parameters $(b,c)$, where ML estimates match moments for $\log Y$ and $\log(1-Y)$. Add to these the functions $m_j(y,\mu_j)=y^j-\mu_j$ for $j=1,2$. Again, this is Beta fitting with modification for getting the mean and variance about right, or moment estimation borrowing strength from the Beta family.

Example 3. Take your favourite parametric family $f(y,\theta)$, and for an appropriate data set specify an interval or region $A$ that actually matters. Then use $m(y,p)=I\{y\in A\}-p$ as the ‘control equation’ above, with $p=P\{Y\in A\}=\int_A f(y,\theta)\,{\rm d}y$. The effect is to push the parametric ML estimates, softly or not softly depending on the size of $a$, so as to make sure that the empirical binomial estimate $\widehat p=n^{-1}\sum_{i=1}^n I\{Y_i\in A\}$ is not far from the estimated $p(A,\widehat\theta)=\int_A f(y,\widehat\theta)\,{\rm d}y$. This can also be extended to using a partition of the sample space, say $A_1,\ldots,A_k$, with control equations $m_j(y,p)=I\{y\in A_j\}-p_j$ for $j=1,\ldots,k-1$ (there is redundancy if trying to include also $m_k$). It will be seen via our theory that the hybrid likelihood estimation strategy in this case is large-sample equivalent to maximising

$$(1-a)\ell_n(\theta)-\tfrac12 an\,r(Q_n(\theta))=(1-a)\sum_{i=1}^n\log f(Y_i,\theta)-\tfrac12 an\,\frac{Q_n(\theta)}{1+Q_n(\theta)},$$

where $r(w)=w/(1+w)$ and $Q_n(\theta)=\sum_{j=1}^k\{\widehat p_j-p_j(\theta)\}^2/\widehat p_j$. Here $\widehat p_j$ is the direct empirical binomial estimate of $P\{Y\in A_j\}$ and $p_j(\widehat\theta)$ is the model-based estimate.

In Section 2 we explore the basic properties of HL estimators and the ensuing $\widehat\psi_{\rm hl}=\psi(\widehat\theta_{\rm hl})$, under model conditions.
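To make the construction (2) concrete, here is a minimal Python sketch of maximum HL estimation in a deliberately simple setting that is assumed for this illustration and is not one of the paper's examples: an exponential working model $f(y,\theta)=\theta^{-1}\exp(-y/\theta)$ and a single control parameter $\mu={\rm E}\,Y^2$, so that $m(y,\mu)=y^2-\mu$ and $\mu(\theta)=2\theta^2$. The helper names, the toy data, and the crude grid-plus-golden-section maximiser are likewise hypothetical choices.

```python
import math

def log_el(m, iters=100):
    """log R_n for scalar estimating-function values m_i = m(Y_i, mu)."""
    if not (min(m) < 0.0 < max(m)):
        return -math.inf                      # constraint set in (1) is empty
    lo, hi = -1.0 / max(m), -1.0 / min(m)     # keeps every 1 + lam * m_i > 0
    for _ in range(iters):                    # bisection for the multiplier
        lam = 0.5 * (lo + hi)
        if sum(mi / (1.0 + lam * mi) for mi in m) > 0.0:
            lo = lam
        else:
            hi = lam
    lam = 0.5 * (lo + hi)
    return -sum(math.log(1.0 + lam * mi) for mi in m)

def log_hl(theta, y, a):
    """h_n(theta) = (1 - a) * log L_n(theta) + a * log R_n(mu(theta))."""
    loglik = sum(-math.log(theta) - yi / theta for yi in y)
    if a == 0.0:
        return loglik                         # avoids 0 * (-inf)
    mu = 2.0 * theta ** 2                     # E Y^2 under the exponential model
    return (1.0 - a) * loglik + a * log_el([yi * yi - mu for yi in y])

def hl_estimate(y, a, grid=200, iters=60):
    """Crude maximiser of h_n: grid scan, then golden-section refinement.

    The search range is where mu(theta) lies inside the range of the Y_i^2;
    it is assumed wide enough to contain the maximiser.
    """
    lo, hi = min(y) / math.sqrt(2.0), max(y) / math.sqrt(2.0)
    xs = [lo + (hi - lo) * (k + 0.5) / grid for k in range(grid)]
    k = max(range(grid), key=lambda j: log_hl(xs[j], y, a))
    left, right = xs[max(k - 1, 0)], xs[min(k + 1, grid - 1)]
    g = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iters):
        c, d = right - g * (right - left), left + g * (right - left)
        if log_hl(c, y, a) < log_hl(d, y, a):
            left = c
        else:
            right = d
    return 0.5 * (left + right)

# Toy data (made up for the illustration).
y = [0.1, 0.3, 0.4, 0.7, 0.9, 1.2, 1.6, 2.1, 2.9, 4.3]
theta_ml = hl_estimate(y, 0.0)    # a = 0 gives the ML estimate, the sample mean
theta_hl = hl_estimate(y, 0.5)    # equal weight on the two log-likelihoods
theta_mom = math.sqrt(sum(yi * yi for yi in y) / len(y) / 2.0)  # matches E Y^2
print(theta_ml, theta_hl, theta_mom)
```

In this toy setting the HL estimate falls between the moment-matching value and the ML estimate, which is the "soft push" described in Example 3; a production implementation would use a proper optimiser and a multivariate EL solver.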
Results here entail that the efficiency loss is typically small, and of size $O(a^2)$ in terms of the balance parameter $a$. In Section 3 we study the behaviour of HL in $O(1/\sqrt n)$ neighbourhoods of the parametric model. It turns out that the HL estimator enjoys certain robustness properties, as compared to the ML estimator. Section 4 examines aspects related to fine-tuning the balance parameter $a$ of (2), and we provide a recipe for its selection. An illustration of our HL methodology is given in Section 5, involving fitting a Gamma model to data of Roman era Egyptian life-lengths, a century BC.

Finally, coming back to the work of Peter Hall, a nice overview of all papers of Peter on EL can be found in Chang et al. (2017). We like to mention in particular the paper by DiCiccio et al. (1989), in which the features and behaviour of parametric and empirical likelihood functions are compared. We mention that Peter also made very influential contributions to the somewhat related area of likelihood tilting, see e.g. Choi et al. (2000).

2 Behaviour of HL under the parametric model

The aim of this section is to explore asymptotic properties of the HL estimator under the parametric model $f(\cdot)=f(\cdot,\theta_0)$ for an appropriate true $\theta_0$. We establish the local asymptotic normality of HL, the asymptotic normality of the estimator $\widehat\theta_{\rm hl}$, and a version of the Wilks theorem. The HL estimator $\widehat\theta_{\rm hl}$ maximises

$$h_n(\theta)=\log H_n(\theta)=(1-a)\ell_n(\theta)+a\log R_n(\mu(\theta)) \tag{3}$$

over $\theta$ (assumed here to be unique), where $\ell_n(\theta)=\log L_n(\theta)$. We need to analyse the local behaviour of the two parts of $h_n(\cdot)$.

Consider the localised empirical likelihood $R_n(\mu(\theta_0+s/\sqrt n))$, where $s$ belongs to some arbitrary compact $S\subset\mathbb{R}^p$. For simplicity we write $m_{i,n}(s)=m(Y_i,\mu(\theta_0+s/\sqrt n))$.
Also, consider the functions $G_n(\lambda,s)=\sum_{i=1}^n 2\log\{1+\lambda^{\rm t}m_{i,n}(s)/\sqrt n\}$ and $G_n^*(\lambda,s)=2\lambda^{\rm t}V_n(s)-\lambda^{\rm t}W_n(s)\lambda$ of the $q$-dimensional $\lambda$, where $V_n(s)=n^{-1/2}\sum_{i=1}^n m_{i,n}(s)$ and $W_n(s)=n^{-1}\sum_{i=1}^n m_{i,n}(s)m_{i,n}(s)^{\rm t}$. Hence $G_n^*$ is the two-term Taylor expansion of $G_n$.

We now re-express the EL statistic in terms of Lagrange multipliers $\widehat\lambda_n$, which is pure analysis, not yet having anything to do with random variables, per se: $-2\log R_n(\mu(\theta_0+s/\sqrt n))=\max_\lambda G_n(\lambda,s)=G_n(\widehat\lambda_n(s),s)$, with $\widehat\lambda_n(s)$ the solution to $\sum_{i=1}^n m_{i,n}(s)/\{1+\lambda^{\rm t}m_{i,n}(s)/\sqrt n\}=0$ for all $s$. This basic translation from the EL definition via Lagrange multipliers is contained in Owen (2001, Ch. 11); for a detailed proof, along with further discussion, see Hjort et al. (2009, Remark 2.7).

The following lemma is crucial for understanding the basic properties of HL. The proof is in Section S.1 in the supplementary material. For any matrix $A=(a_{j,k})$, $\|A\|=(\sum_{j,k}a_{j,k}^2)^{1/2}$ denotes the Euclidean norm.

Lemma 1. For a compact $S\subset\mathbb{R}^p$, suppose that (i) $\sup_{s\in S}\|V_n(s)\|=O_{\rm pr}(1)$; (ii) $\sup_{s\in S}\|W_n(s)-W\|\to_{\rm pr}0$, where $W={\rm Var}\,m(Y,\mu(\theta_0))$ is of full rank; (iii) $n^{-1/2}\sup_{s\in S}\max_{i\le n}\|m_{i,n}(s)\|\to_{\rm pr}0$. Then, the maximisers $\widehat\lambda_n(s)={\rm argmax}_\lambda\,G_n(\lambda,s)$ and $\lambda_n^*(s)={\rm argmax}_\lambda\,G_n^*(\lambda,s)=W_n^{-1}(s)V_n(s)$ are both $O_{\rm pr}(1)$ uniformly in $s\in S$, and

$$\sup_{s\in S}\bigl|\max_\lambda G_n(\lambda,s)-\max_\lambda G_n^*(\lambda,s)\bigr|=\sup_{s\in S}\bigl|G_n(\widehat\lambda_n(s),s)-G_n^*(\lambda_n^*(s),s)\bigr|\to_{\rm pr}0.$$

Note that we have an explicit expression for the maximiser of $G_n^*(\cdot,s)$, hence also its maximum, $\max_\lambda G_n^*(\lambda,s)=V_n(s)^{\rm t}W_n^{-1}(s)V_n(s)$.
It follows that in situations covered by Lemma 1, $-2\log R_n(\mu(\theta_0+s/\sqrt n))=V_n(s)^{\rm t}W_n^{-1}(s)V_n(s)+o_{\rm pr}(1)$, uniformly in $s\in S$. Also, by the Law of Large Numbers, condition (ii) of Lemma 1 is valid if $\sup_s\|W_n(s)-W_n(0)\|\to_{\rm pr}0$. If $m$ and $\mu$ are smooth, then the latter holds using the Mean Value Theorem. For the quantile example, Example 1, we can use results on the oscillation behaviour of empirical distributions (see Stute (1982)).

For our Theorem 1 below we need assumptions on the $m(y,\mu)$ function involved in (1), and also on how $\mu=\mu(f_\theta)=\mu(\theta)$ behaves close to $\theta_0$. In addition to ${\rm E}\,m(Y,\mu(\theta_0))=0$, we assume that

$$\sup_{s\in S}\|V_n(s)-V_n(0)-\xi_n s\|=o_{\rm pr}(1), \tag{4}$$

with $\xi_n$ of dimension $q\times p$ tending in probability to $\xi_0$. Suppose for illustration that $m(y,\mu(\theta))$ has a derivative at $\theta_0$, and write $m(y,\mu(\theta_0+\varepsilon))=m(y,\mu(\theta_0))+\xi(y)\varepsilon+r(y,\varepsilon)$, for the appropriate $\xi(y)=\partial m(y,\mu(\theta_0))/\partial\theta$, a $q\times p$ matrix, and with a remainder term $r(y,\varepsilon)$. This fits with (4), with $\xi_n=n^{-1}\sum_{i=1}^n\xi(Y_i)\to_{\rm pr}\xi_0={\rm E}\,\xi(Y)$, as long as $n^{-1/2}\sum_{i=1}^n r(Y_i,s/\sqrt n)\to_{\rm pr}0$ uniformly in $s$. In smooth cases we would typically have $r(y,\varepsilon)=O(\|\varepsilon\|^2)$, making the mentioned term of size $O_{\rm pr}(1/\sqrt n)$. On the other hand, when $m(y,\mu(\theta))=I\{y\le\mu(\theta)\}-\alpha$, we have $V_n(s)-V_n(0)=f(\mu(\theta_0),\theta_0)s+O_{\rm pr}(n^{-1/4})$ uniformly in $s$ (see Stute (1982)).

We rewrite the log-HL in terms of a local $1/\sqrt n$-scale perturbation around $\theta_0$:

$$A_n(s)=h_n(\theta_0+s/\sqrt n)-h_n(\theta_0)=(1-a)\{\ell_n(\theta_0+s/\sqrt n)-\ell_n(\theta_0)\}+a\{\log R_n(\mu(\theta_0+s/\sqrt n))-\log R_n(\mu(\theta_0))\}. \tag{5}$$

Below we show that $A_n(s)$ converges weakly to a quadratic limit $A(s)$, uniformly in $s$ over compacta, which then leads to our most important insights concerning HL-based estimation and inference. By the multivariate Central Limit Theorem,

$$\begin{pmatrix}U_{n,0}\\ V_{n,0}\end{pmatrix}=\begin{pmatrix}n^{-1/2}\sum_{i=1}^n u(Y_i,\theta_0)\\ n^{-1/2}\sum_{i=1}^n m(Y_i,\mu(\theta_0))\end{pmatrix}\to_d\begin{pmatrix}U_0\\ V_0\end{pmatrix}\sim{\rm N}_{p+q}(0,\Sigma),\quad\text{where }\Sigma=\begin{pmatrix}J&C\\ C^{\rm t}&W\end{pmatrix}. \tag{6}$$

Here, $u(y,\theta)=\partial\log f(y,\theta)/\partial\theta$ is the score function, $J={\rm Var}\,u(Y,\theta_0)$ is the Fisher information matrix of dimension $p\times p$, $C={\rm E}\,u(Y,\theta_0)m(Y,\mu(\theta_0))^{\rm t}$ is of dimension $p\times q$, and $W={\rm Var}\,m(Y,\mu(\theta_0))$ as before. The $(p+q)\times(p+q)$ variance matrix $\Sigma$ is assumed to be positive definite. This ensures that the parametric and empirical likelihoods do not “tread on one another’s toes”, i.e. that the $m_j(y,\mu(\theta))$ functions are not in the span of the score functions, and vice versa.

Theorem 1. Suppose that smoothness conditions on $\log f(y,\theta)$ hold, as spelled out in Section S.2; the conditions of Lemma 1 are in force, along with condition (4) with the appropriate $\xi_0$, for each compact $S\subset\mathbb{R}^p$; and that $\Sigma$ has full rank. Then, for each compact $S$, $A_n(s)$ converges weakly to $A(s)=s^{\rm t}U^*-\tfrac12 s^{\rm t}J^*s$, in the function space $\ell^\infty(S)$ endowed with the uniform topology, where $U^*=(1-a)U_0-a\xi_0^{\rm t}W^{-1}V_0$ and $J^*=(1-a)J+a\xi_0^{\rm t}W^{-1}\xi_0$. Here $U^*\sim{\rm N}_p(0,K^*)$, with variance matrix

$$K^*=(1-a)^2J+a^2\xi_0^{\rm t}W^{-1}\xi_0-a(1-a)(CW^{-1}\xi_0+\xi_0^{\rm t}W^{-1}C^{\rm t}).$$

The theorem, proved in Section S.2 of the supplementary material, is valid for each fixed balance parameter $a$ in (2), with $J^*$ and $K^*$ also depending on $a$. We discuss ways of fine-tuning $a$ in Section 4.
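A small numerical check may help fix ideas. The Python sketch below evaluates $J^*$ and $K^*$ of Theorem 1 in a scalar case ($p=q=1$), under a setting assumed purely for illustration: an exponential working model with mean $\theta_0$ and the control parameter $\mu={\rm E}\,Y^2=2\theta^2$, with $m(y,\mu)=y^2-\mu$. Using ${\rm E}\,Y^k=k!\,\theta_0^k$, one finds $J=1/\theta_0^2$, $W={\rm Var}\,Y^2=20\theta_0^4$ and $C={\rm E}\,u(Y,\theta_0)Y^2=4\theta_0$; since $V_n(s)-V_n(0)=-\sqrt n\{\mu(\theta_0+s/\sqrt n)-\mu(\theta_0)\}$ here, condition (4) gives $\xi_0=-4\theta_0$. The quadratic limit $A(s)$ is maximised at $(J^*)^{-1}U^*$, whose variance is the sandwich $(J^*)^{-1}K^*(J^*)^{-1}$, so the code also reports that quantity. The function name `hl_matrices` is hypothetical.

```python
def hl_matrices(a, theta0=1.0):
    """Scalar J* and K* of Theorem 1 for the assumed exponential example."""
    J = 1.0 / theta0 ** 2        # Fisher information of the exponential model
    W = 20.0 * theta0 ** 4       # Var(Y^2) = E Y^4 - (E Y^2)^2
    C = 4.0 * theta0             # E u(Y, theta0) * m(Y, mu(theta0))
    xi0 = -4.0 * theta0          # limit in condition (4): -d mu / d theta
    j_star = (1 - a) * J + a * xi0 ** 2 / W
    k_star = ((1 - a) ** 2 * J + a ** 2 * xi0 ** 2 / W
              - a * (1 - a) * 2 * C * xi0 / W)
    return j_star, k_star

for a in (0.0, 0.1, 0.25, 0.5):
    j_star, k_star = hl_matrices(a)
    sandwich = k_star / j_star ** 2      # variance of (J*)^{-1} U*
    print(a, j_star, k_star, sandwich)
```

In this toy case the sandwich simplifies to $\theta_0^2\{1+0.16a^2/(1-0.2a)^2\}$, so it exceeds the ML variance $J^{-1}=\theta_0^2$ only by a term of order $a^2$.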
The $p\times q$-dimensional block component $C$ of the variance matrix $\Sigma$ of (6) can be worked with and represented in different ways. Suppose that $\mu$ is differentiable at $\theta=\theta_0$, and denote the vector of partial derivatives by $\partial\mu/\partial\theta$, with derivatives at $\theta_0$, and with this matrix arranged as a $p\times q$ matrix, with columns $\partial\mu_j(\theta_0)/\partial\theta$ for $j=1,\ldots,q$. From $\int m(y,\mu(\theta))f(y,\theta)\,{\rm d}y=0$ for all $\theta$ follows the $q\times p$-dimensional equation

$$\int m^*(y,\mu(\theta_0))f(y,\theta_0)\,{\rm d}y\,\Bigl(\frac{\partial\mu}{\partial\theta}\Bigr)^{\rm t}+\int m(y,\mu(\theta_0))f(y,\theta_0)u(y,\theta_0)^{\rm t}\,{\rm d}y=0,$$

where $m^*(y,\mu)=\partial m(y,\mu)/\partial\mu$, in case $m$ is differentiable with respect to $\mu$. This means $C=-(\partial\mu/\partial\theta)\,{\rm E}_\theta\,m^*(Y,\mu(\theta_0))$. If $m(y,\mu)=g(y)-\mu$, for example, corresponding to parameters $\mu={\rm E}\,g(Y)$, we have $C=\partial\mu/\partial\theta$. Also, using (4) we have $\xi_0=-(\partial\mu/\partial\theta)^{\rm t}$, of dimension $q\times p$. Applying Theorem 1 yields $U^*=(1-a)U_0+a(\partial\mu/\partial\theta)W^{-1}V_0$, along with

$$J^*=(1-a)J+a\frac{\partial\mu}{\partial\theta}W^{-1}\Bigl(\frac{\partial\mu}{\partial\theta}\Bigr)^{\rm t}\quad\text{and}\quad K^*=(1-a)^2J+\{1-(1-a)^2\}\frac{\partial\mu}{\partial\theta}W^{-1}\Bigl(\frac{\partial\mu}{\partial\theta}\Bigr)^{\rm t}. \tag{7}$$

For the following corollary of Theorem 1, we need to introduce the random function $\Gamma_n(\theta)=n^{-1}\{h_n(\theta)-h_n(\theta_0)\}$ along with its population version

$$\Gamma(\theta)=-(1-a)\,{\rm KL}(f_{\theta_0},f_\theta)-a\,{\rm E}\log\bigl(1+\xi(\theta)^{\rm t}m(Y,\mu(\theta))\bigr), \tag{8}$$

with $\widehat\theta_{\rm hl}$ as the argmax of $\Gamma_n(\cdot)$. Here ${\rm KL}(f,f_\theta)=\int f\log(f/f_\theta)\,{\rm d}y$ is the Kullback–Leibler divergence, in this case from $f_{\theta_0}$ to $f_\theta$, and with $\xi(\theta)$ the solution of ${\rm E}\,m(Y,\mu(\theta))/\{1+\xi^{\rm t}m(Y,\mu(\theta))\}=0$ (that this solution exists and is unique is a consequence of the proof of Corollary 1 below).

Corollary 1. Under the conditions of Theorem 1 and under conditions (A1)–(A3) given in Section S.3 of the supplementary material, (i) there is consistency of $\widehat\theta_{\rm hl}$ towards $\theta_0$; (ii) $\sqrt n(\widehat\theta_{\rm hl}-\theta_0)\to_d(J^*)^{-1}U^*\sim{\rm N}_p\bigl(0,(J^*)^{-1}K^*(J^*)^{-1}\bigr)$; and (iii) $2\{h_n(\widehat\theta_{\rm hl})-h_n(\theta_0)\}\to_d(U^*)^{\rm t}(J^*)^{-1}U^*$.

These results allow us to construct confidence regions for $\theta_0$ and confidence intervals for its components. Of course we are not merely interested in the individual parameters of a model, but in certain functions of them, namely focus parameters. Assume $\psi=\psi(\theta)=\psi(\theta_1,\ldots,\theta_p)$ is such a parameter, with $\psi$ differentiable at $\theta_0$ and denote $c=\partial\psi(\theta_0)/\partial\theta$. The HL estimator for this $\psi$ is the plug-in $\widehat\psi_{\rm hl}=\psi(\widehat\theta_{\rm hl})$. With $\psi_0=\psi(\theta_0)$ as the true parameter value, we then have via the delta method that

$$\sqrt n(\widehat\psi_{\rm hl}-\psi_0)\to_d c^{\rm t}(J^*)^{-1}U^*\sim{\rm N}(0,\kappa^2),\quad\text{where }\kappa^2=c^{\rm t}(J^*)^{-1}K^*(J^*)^{-1}c. \tag{9}$$

The focus parameter $\psi$ could, for example, be one of the components of $\mu=\mu(\theta)$ used in the EL part of the HL, say $\mu_j$, for which $\sqrt n(\widehat\mu_{j,{\rm hl}}-\mu_{0,j})$ has a normal limit with variance $(\partial\mu_j/\partial\theta)^{\rm t}(J^*)^{-1}K^*(J^*)^{-1}\,\partial\mu_j/\partial\theta$, in terms of $\partial\mu_j/\partial\theta=\partial\mu_j(\theta_0)/\partial\theta$.

Armed with Corollary 1, we can set up Wald and likelihood-ratio type confidence regions and tests for $\theta$, and confidence intervals for $\psi$. Consistent estimators $\widehat J^*$ and $\widehat K^*$ of $J^*$ and $K^*$ would then be required, but these are readily obtained via plug-in. Also, an estimate of $J^*$ is typically obtained via the Hessian of the optimisation algorithm used to find the HL estimator in the first place.

In order to investigate how much is lost in efficiency when using the HL estimator under model conditions, consider the case of small $a$. We have $J^*=J+aA_1$ and $K^*=J+aA_2+O(a^2)$, with $A_1=\xi_0^{\rm t}W^{-1}\xi_0-J$ and $A_2=-2J-CW^{-1}\xi_0-\xi_0^{\rm t}W^{-1}C^{\rm t}$.
For the class of functions of the form $m(y,\mu)=g(y)-T(\mu)$, corresponding to $\mu=T^{-1}({\rm E}\,g(Y))$, we have $A_2=2A_1$. It is assumed that $T(\cdot)$ has a continuous inverse at $\mu(\theta)$ for $\theta$ in a neighbourhood of $\theta_0$. Writing $(J^*)^{-1}K^*(J^*)^{-1}$ as $(J^{-1}-aJ^{-1}A_1J^{-1})(J+aA_2)(J^{-1}-aJ^{-1}A_1J^{-1})+O(a^2)$, therefore, one finds that this is $J^{-1}+O(a^2)$, which in particular means that the efficiency loss is very small when $a$ is small.

3 Hybrid likelihood outside model conditions

In Section 2 we investigated the hybrid likelihood estimation strategy under the conditions of the parametric model. Under suitable conditions, the HL is consistent and asymptotically normal, with a certain mild loss of efficiency under model conditions, compared to the parametric ML method, the special case $a=0$. In the present section we investigate the behaviour of the HL outside the conditions of the parametric model, which is now viewed as a working model. It turns out that HL often outperforms ML by reducing model bias, which in mean squared error terms might more than compensate for a slight increase in variability. This in turn calls for methods for fine-tuning the balance parameter $a$ in our basic hybrid construction (2), and we shall deal with this problem too, in Section 4.

Our framework for investigating such properties involves extending the working model $f(y,\theta)$ to an $f(y,\theta,\gamma)$ model, where $\gamma=(\gamma_1,\ldots,\gamma_r)$ is a vector of extra parameters. There is a null value $\gamma=\gamma_0$ which brings this extended model back to the working model. We now examine behaviour of the ML and the HL schemes when $\gamma$ is in the neighbourhood of $\gamma_0$. Suppose in fact that

$$f_{\rm true}(y)=f(y,\theta_0,\gamma_0+\delta/\sqrt n), \tag{10}$$

with the $\delta=(\delta_1,\ldots,\delta_r)$ parameter dictating the relative distance from the null model.
In this framework, suppose an estimator $\widehat\theta$ has the property that

$$\sqrt n(\widehat\theta-\theta_0)\to_d{\rm N}_p(B\delta,\Omega), \tag{11}$$

with a suitable $p\times r$ matrix $B$ related to how much the model bias affects the estimator of $\theta$, and limit variance matrix $\Omega$. Then a parameter $\psi=\psi(f)$ of interest can in this wider framework be expressed as $\psi=\psi(\theta,\gamma)$, with true value $\psi_{\rm true}=\psi(\theta_0,\gamma_0+\delta/\sqrt n)$. The spirit of these investigations is that the statistician uses the working model with only $\theta$ present, without knowing the extension model or the size of the $\delta$ discrepancy. The ensuing estimator for $\psi$ is hence $\widehat\psi=\psi(\widehat\theta,\gamma_0)$. The delta method then leads to

$$\sqrt n(\widehat\psi-\psi_{\rm true})\to_d{\rm N}(b^{\rm t}\delta,\tau^2), \tag{12}$$

with $b=B^{\rm t}\,\partial\psi/\partial\theta-\partial\psi/\partial\gamma$ and $\tau^2=(\partial\psi/\partial\theta)^{\rm t}\,\Omega\,\partial\psi/\partial\theta$, and with partial derivatives evaluated at the working model, i.e. at $(\theta_0,\gamma_0)$. The limiting mean squared error, for such an estimator of $\psi$, is ${\rm mse}(\delta)=(b^{\rm t}\delta)^2+\tau^2$. Among the consequences of using the narrow working model when it is moderately wrong, at the level of $\gamma=\gamma_0+\delta/\sqrt n$, is the bias $b^{\rm t}\delta$. The size of this bias depends on the focus parameter, and it may be zero for some foci, even when the model is incorrect.

We now examine both the ML and the HL methods in this framework, exhibiting the associated $B$ and $\Omega$ matrices and hence the mean squared errors, via (12). Consider the parametric ML estimator $\widehat\theta_{\rm ml}$ first. To present the necessary results, consider the $(p+r)\times(p+r)$ Fisher information matrix

$$J_{\rm wide}=\begin{pmatrix}J_{00}&J_{01}\\ J_{10}&J_{11}\end{pmatrix} \tag{13}$$

for the $f(y,\theta,\gamma)$ model, computed at the null values $(\theta_0,\gamma_0)$. In particular, the $p\times p$ block $J_{00}$, corresponding to the model with only $\theta$ and without $\gamma$, is equal to the earlier $J$ matrix of (6) and appearing in Theorem 1 etc. Here one can demonstrate, under appropriate mild regularity conditions, that $\sqrt n(\widehat\theta_{\rm ml}-\theta_0)\to_d{\rm N}_p(J_{00}^{-1}J_{01}\delta,J_{00}^{-1})$.
Just as (12) followed from (11), one finds for $\widehat\psi_{\rm ml}=\psi(\widehat\theta_{\rm ml})$ that

$$\sqrt n(\widehat\psi_{\rm ml}-\psi_{\rm true})\to_d{\rm N}(\omega^{\rm t}\delta,\tau_0^2), \tag{14}$$

featuring $\omega=J_{10}J_{00}^{-1}\,\partial\psi/\partial\theta-\partial\psi/\partial\gamma$ and $\tau_0^2=(\partial\psi/\partial\theta)^{\rm t}J_{00}^{-1}\,\partial\psi/\partial\theta$. See also Hjort & Claeskens (2003) and Claeskens & Hjort (2008, Chs. 6, 7) for further details, discussion, and precise regularity conditions.

In such a situation, with a clear interest parameter $\psi$, we use the HL to get $\widehat\psi_{\rm hl}=\psi(\widehat\theta_{\rm hl},\gamma_0)$. We work out what happens with $\widehat\theta_{\rm hl}$ in this framework, generalising what is found in the previous section. Introduce $S(y)=\partial\log f(y,\theta_0,\gamma_0)/\partial\gamma$, the score function in direction of these extension parameters, and let $K_{01}=\int f(y,\theta_0)m(y,\mu(\theta_0))S(y)^{\rm t}\,{\rm d}y$, of dimension $q\times r$, along with $L_{01}=(1-a)J_{01}-a\xi_0^{\rm t}W^{-1}K_{01}$, of dimension $p\times r$, and with transpose $L_{10}=L_{01}^{\rm t}$. This is the mean shift of $U^*=(1-a)U_0-a\xi_0^{\rm t}W^{-1}V_0$ from Theorem 1 under (10), and when $m(y,\mu)=g(y)-\mu$ it equals $(1-a)J_{01}+a(\partial\mu/\partial\theta)W^{-1}K_{01}$. The following is proved in Section S.4.

Theorem 2. Assume data stem from the extended $(p+r)$-dimensional model (10), and that the conditions listed in Corollary 1 are in force. For the HL method, with the focus parameter $\psi=\psi(f)$ built into the construction (2), results (11)–(12) hold, with $B=(J^*)^{-1}L_{01}$ and $\Omega=(J^*)^{-1}K^*(J^*)^{-1}$.

The limiting distribution for $\widehat\psi_{\rm hl}=\psi(\widehat\theta_{\rm hl})$ can again be read off, just as (12) follows from (11):

$$\sqrt n(\widehat\psi_{\rm hl}-\psi_{\rm true})\to_d{\rm N}(\omega_{\rm hl}^{\rm t}\delta,\tau_{0,{\rm hl}}^2), \tag{15}$$

with $\omega_{\rm hl}=L_{10}(J^*)^{-1}\,\partial\psi/\partial\theta-\partial\psi/\partial\gamma$ and $\tau_{0,{\rm hl}}^2=(\partial\psi/\partial\theta)^{\rm t}(J^*)^{-1}K^*(J^*)^{-1}\,\partial\psi/\partial\theta$. The quantities involved in these large-sample properties of the HL estimator depend on the balance parameter $a$ employed in the basic HL construction (2). For $a=0$ we are back to the ML, with (15) specialising to (14). As $a$ moves away from zero, more emphasis is placed on the EL part, in effect pushing $\theta$ so $n^{-1}\sum_{i=1}^n m(Y_i,\mu(\theta))$ gets closer to zero.
The result is typically a lower bias $|\omega_{\rm hl}(a)^{\rm t}\delta|$, compared to $|\omega^{\rm t}\delta|$, and a slightly larger standard deviation $\tau_{0,{\rm hl}}$, compared to $\tau_0$. Thus selecting a good value of $a$ is a bias-variance balancing game, which we discuss in the following section.

4 Fine-tuning the balance parameter

The basic HL construction of (2) first entails selecting context relevant control parameters $\mu$, and then a focus parameter $\psi$. A special case is that of using the focus $\psi$ as the single control parameter. In each case, there is also the balance parameter $a$ to decide upon. Ways of fine-tuning the balance are discussed here.

Balancing robustness and efficiency. By allowing the empirical likelihood to be combined with the likelihood from a given parametric model, one may buy robustness, via the control parameters $\mu$ in the HL construction, at the expense of a certain mild loss of efficiency. One way to fine-tune the balance, after having decided on the control parameters, is to select $a$ so that the loss of efficiency under the conditions of the parametric working model is limited by a fixed, small amount, say 5%. This may be achieved by comparing the inverse Fisher information matrix $J^{-1}$, associated with the ML estimator, to the sandwich matrix $(J_a^*)^{-1} K_a^* (J_a^*)^{-1}$, for the HL estimator. Both matrices come from the corollaries of Section 2, see e.g. (7), and we have added the subscript $a$ for emphasis. If there is special interest in some focus parameter $\psi$, one may select $a$ so that
$$\kappa_a = \{c^{\rm t} (J_a^*)^{-1} K_a^* (J_a^*)^{-1} c\}^{1/2} \le (1 + \varepsilon)\kappa_0 = (1 + \varepsilon)(c^{\rm t} J^{-1} c)^{1/2}, \tag{16}$$
with $\varepsilon$ the required threshold. With $\varepsilon = 0.05$, for example, one ensures that confidence intervals are only 5% wider than those based on the ML, but with the additional security of having controlled well for the $\mu$ parameters in the process, e.g. for robustness reasons. Pedantically speaking, in (9) there is really a $c_a = \partial\psi(\theta_{0,a})/\partial\theta$ also depending on $a$, associated with the limit in probability $\theta_{0,a}$ of the HL estimator, but when discussing efficiencies at the parametric model, $\theta_{0,a}$ is the same as the true $\theta_0$, so $c_a$ is the same as $c = \partial\psi(\theta_0)/\partial\theta$. A concrete illustration of this approach is in the following section.

Features of the mse(a). The methods above, as with (16), rely on the theory developed in Section 2, under the conditions of the parametric working model. In what follows we need the theory given in Section 3, examining the behaviour of the HL estimator in a neighbourhood around the working model. Results there can first be used to examine the limiting mse properties for the ML and the HL estimators, where it will be seen that the HL can often behave better; a slightly larger variance is compensated for by a smaller modelling bias. Secondly, the mean squared error curve, as a function of the balance parameter $a$, can be estimated from data. This leads to the idea of selecting $a$ to be the minimiser of this estimated risk curve, pursued below.

For a given focus parameter $\psi$, the limit mse when using the HL with parameter $a$ is found from (15):
$${\rm mse}(a) = \{\omega_{\rm hl}(a)^{\rm t}\delta\}^2 + \tau_{0,{\rm hl}}(a)^2. \tag{17}$$
The first exercise is to evaluate this curve, as a function of the balance parameter $a$, in situations with given model extension parameter $\delta$. The mse($a$) at $a = 0$ corresponds to the mse for the ML estimator. If mse($a$) is smaller than mse(0), for some $a$, then the HL is doing a better job than the ML.
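The efficiency rule (16) can be sketched numerically as follows. This is a minimal illustration in Python, assuming the user can supply a function `kappa(a)` returning $\kappa_a$; how $\kappa_a$ is computed from $J_a^*$ and $K_a^*$ is model specific and not shown. The names `select_balance` and `toy_kappa`, the grid, and the toy curve are hypothetical and serve only to show the mechanics.

```python
from typing import Callable, Sequence


def select_balance(
    kappa: Callable[[float], float],
    eps: float = 0.05,
    grid: Sequence[float] = tuple(i / 100 for i in range(100)),
) -> float:
    """Return the largest a on the grid with kappa(a) <= (1 + eps) * kappa(0).

    This mirrors rule (16): the HL standard deviation may exceed the ML
    standard deviation kappa(0) by at most a factor 1 + eps.
    """
    bound = (1.0 + eps) * kappa(0.0)
    admissible = [a for a in grid if kappa(a) <= bound]
    # Fall back to a = 0 (pure ML) if no grid point satisfies the bound.
    return max(admissible) if admissible else 0.0


def toy_kappa(a: float) -> float:
    """Hypothetical kappa curve, NOT derived from any model in the paper."""
    return 0.17 * (1.0 + 0.25 * a * a)


a_star = select_balance(toy_kappa, eps=0.05)
print(a_star)
```

A grid scan is used rather than root finding because it does not require $\kappa_a$ to be monotone in $a$; with a monotone curve a bisection would do equally well.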
Figure 2(a) displays the root-mse($a$) curve in a simple setup, where the parametric start model is Beta($\theta$, 1), with density $\theta y^{\theta - 1}$, and the focus parameter used for the HL construction is $\psi = {\rm E}\,Y^2$, which is $\theta/(\theta + 2)$ under model conditions. The extended model, under which we examine the mse properties of the ML and the HL, is the Beta($\theta$, $\gamma$), with $\gamma = 1 + \delta/\sqrt{n}$ in (10). The $\delta$ for this illustration is chosen to be $Q^{1/2} = (J^{11})^{1/2}$, from (18) below, which may be interpreted as one standard deviation away from the null model. The root-mse($a$) curve, computed via numerical integration, shows that the HL estimator $\hat\theta_{\rm hl}/(\hat\theta_{\rm hl} + 2)$ does better than the parametric ML estimator $\hat\theta_{\rm ml}/(\hat\theta_{\rm ml} + 2)$, unless $a$ is close to 1. Similar curves are seen for other $\delta$, for other focus parameters, and for more complex models. Occasionally, mse($a$) is increasing in $a$, indicating in such cases that ML is better than HL, but this typically happens only when the model discrepancy parameter $\delta$ is small, i.e. when the working model is nearly correct.

Figure 2: (a) The dotted horizontal line indicates the root-mse for the ML estimator, and the full curve the root-mse for the HL estimator, as a function of the balance parameter $a$ in the HL construction. (b) The root-fic($a$), as a function of the balance parameter $a$, constructed on the basis of $n = 100$ simulated observations, from a case where $\gamma = 1 + \delta/\sqrt{n}$, with $\delta$ described in the text.

It is of interest to note that $\omega_{\rm hl}(a)$ in (15) starts out for $a = 0$ at $\omega = J_{10} J_{00}^{-1}\,\partial\psi/\partial\theta - \partial\psi/\partial\gamma$ in (14), associated with the ML method, but then it decreases in size towards zero, as $a$ grows from zero to one.
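To make the ML benchmark of this Beta illustration concrete, the following Python sketch evaluates $\omega$, $\tau_0$ and the limiting root-mse of (14) for the Beta($\theta$, 1) model inside Beta($\theta$, $\gamma$), with $\delta = Q^{1/2}$. It relies on the standard Fisher information matrix of the Beta family, expressed via the trigamma function. The value $\theta_0 = 1$ is an assumption made here for illustration only (the text does not state which value was used), and the helper names `trigamma` and `beta_ml_summary` are hypothetical. The HL curve itself needs $J^*$, $K^*$ and $L_{01}$ and is not attempted.

```python
import math


def trigamma(x: float) -> float:
    """Trigamma function for x > 0, via recurrence plus an asymptotic series."""
    acc = 0.0
    while x < 6.0:  # shift the argument upwards: psi1(x) = psi1(x + 1) + 1/x^2
        acc += 1.0 / (x * x)
        x += 1.0
    x2 = 1.0 / (x * x)
    # psi1(x) ~ 1/x + 1/(2x^2) + 1/(6x^3) - 1/(30x^5) + 1/(42x^7) - 1/(30x^9)
    tail = (1.0 / 6.0 - x2 * (1.0 / 30.0 - x2 * (1.0 / 42.0 - x2 / 30.0))) * x2 / x
    return acc + 1.0 / x + 0.5 * x2 + tail


def beta_ml_summary(theta0: float):
    """Return (omega, tau0, root_mse) for the ML estimator of psi = E Y^2."""
    gamma0 = 1.0
    t_sum = trigamma(theta0 + gamma0)
    # Blocks of the 2 x 2 Fisher information matrix J_wide at (theta0, gamma0).
    j00 = trigamma(theta0) - t_sum
    j01 = -t_sum
    j11 = trigamma(gamma0) - t_sum
    q = 1.0 / (j11 - j01 * j01 / j00)  # Q = J^{11}, as in (18)
    delta = math.sqrt(q)               # "one standard deviation" discrepancy
    # psi(theta, gamma) = E Y^2 under Beta(theta, gamma), and its partials.
    s = theta0 + gamma0
    psi = theta0 * (theta0 + 1.0) / (s * (s + 1.0))
    dpsi_dtheta = psi * (1.0 / theta0 + 1.0 / (theta0 + 1.0) - 1.0 / s - 1.0 / (s + 1.0))
    dpsi_dgamma = -psi * (1.0 / s + 1.0 / (s + 1.0))
    omega = j01 / j00 * dpsi_dtheta - dpsi_dgamma
    tau0 = math.sqrt(dpsi_dtheta ** 2 / j00)
    root_mse = math.sqrt((omega * delta) ** 2 + tau0 ** 2)
    return omega, tau0, root_mse


omega, tau0, root_mse = beta_ml_summary(1.0)
print(omega, tau0, root_mse)
```

With $\gamma_0 = 1$ the block $J_{00}$ reduces to $1/\theta_0^2$, the Fisher information of the Beta($\theta$, 1) model, which gives a quick sanity check on the trigamma helper.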
Hence, when HL employs only a small part of the ordinary log-likelihood in its construction, the consequent $\hat\psi_{{\rm hl},a}$ has small bias, but potentially a bigger variance than ML. The HL may thus be seen as a debiasing operation, for the control and focus parameters, in cases where the parametric model $f(\cdot, \theta)$ cannot be fully trusted.

Estimation of mse(a). Concrete evaluation of the mse($a$) curves of (17) shows that the HL scheme typically is worthwhile, in that the mse is lower than that of the ML, for a range of $a$ values. To find a good value of $a$ from data, a natural idea is to estimate the mse($a$) and then pick its minimiser. For mse($a$), the ingredients $\omega_{\rm hl}(a)$ and $\tau_{0,{\rm hl}}(a)$ involved in (15) may be estimated consistently via plug-in of the relevant quantities. The difficulty lies with the $\delta$ part, and more specifically with $\delta\delta^{\rm t}$ in $\omega_{\rm hl}(a)^{\rm t}\delta\delta^{\rm t}\omega_{\rm hl}(a)$. For this parameter, defined on the $O(1/\sqrt{n})$ scale via $\gamma = \gamma_0 + \delta/\sqrt{n}$, the essential information lies in $D_n = \sqrt{n}(\hat\gamma_{\rm ml} - \gamma_0)$, via parametric ML estimation in the extended $f(y, \theta, \gamma)$ model. As demonstrated and discussed in Claeskens & Hjort (2008, Chs. 6–7), in connection with construction of their Focused Information Criterion (FIC), we have
$$D_n \to_d D \sim {\rm N}_r(\delta, Q), \quad \text{with } Q = J^{11} = (J_{11} - J_{10} J_{00}^{-1} J_{01})^{-1}. \tag{18}$$
The factor $\delta$ in the $O(1/\sqrt{n})$ construction cannot be estimated consistently. Since $DD^{\rm t}$ has mean $\delta\delta^{\rm t} + Q$ in the limit, we estimate squared bias parameters of the type $(b^{\rm t}\delta)^2 = b^{\rm t}\delta\delta^{\rm t} b$ using $\{b^{\rm t}(D_n D_n^{\rm t} - \hat Q) b\}_+$, in which $\hat Q$ estimates $Q = J^{11}$, and $x_+ = \max(x, 0)$. We construct the $r \times r$ matrix $\hat Q$ from estimating and then inverting the full $(p + r) \times (p + r)$ Fisher information matrix $J_{\rm wide}$ of (13). This leads to estimating mse($a$) using
$${\rm fic}(a) = \{\hat\omega_{\rm hl}(a)^{\rm t}(D_n D_n^{\rm t} - \hat Q)\hat\omega_{\rm hl}(a)\}_+ + \hat\tau_{0,{\rm hl}}(a)^2 = \bigl[\hat\omega_{\rm hl}(a)^{\rm t}\{n(\hat\gamma - \gamma_0)(\hat\gamma - \gamma_0)^{\rm t} - \hat Q\}\hat\omega_{\rm hl}(a)\bigr]_+ + \hat\tau_{0,{\rm hl}}(a)^2.$$
Figure 2(b) displays such a root-fic curve, the estimated root-mse($a$). Whereas the root-mse($a$) curve shown in Figure 2(a) comes from considerations and numerical investigation of the extended $f(y, \theta, \gamma)$ model alone, pre-data, the root-fic($a$) curve is constructed for a given dataset. The start model and its extension are as with Figure 2(a), a Beta($\theta$, 1) inside a Beta($\theta$, $\gamma$), with $n = 100$ simulated data points using $\gamma = 1 + \delta/\sqrt{n}$ with $\delta$ chosen as for Figure 2(a). Again, the HL method was applied, using the second moment $\psi = {\rm E}\,Y^2$ as both control and focus. The estimated risk is smallest for $a = 0.41$.

5 An illustration: Roman era Egyptian life-lengths

A fascinating dataset on $n = 141$ life-lengths from Roman era Egypt, a century BC, is examined in Pearson (1902), where he compares life-length distributions from two societies, two thousand years apart. The data are also discussed, modelled and analysed in Claeskens & Hjort (2008, Ch. 2).

Figure 3: (a) The q-q plot shows the ordered life-lengths $y_{(i)}$ plotted against the ML-estimated gamma quantile function $F^{-1}(i/(n + 1), \hat b, \hat c)$. (b) The curve $\hat p_a$, with the probability $p = P\{Y \in [9.5, 20.5]\}$ estimated via the HL estimator, is displayed, as a function of the balance parameter $a$.
At balance position $a = 0.61$, the efficiency loss is 10% compared to the ML precision under ideal gamma model conditions.

Here we have fitted the data to the Gamma($b$, $c$) distribution, first using the ML, with parameter estimates (1.6077, 0.0524). The q-q plot of Figure 3(a) displays the points $(F^{-1}(i/(n + 1), \hat b, \hat c), y_{(i)})$, with $F^{-1}(\cdot, b, c)$ denoting the quantile function of the Gamma and $y_{(i)}$ the ordered life-lengths, from 1.5 to 96. We learn that the gamma distribution does a decent job for these data, but that the fit is not good for the longer lives. There is hence scope for the HL for estimating and assessing relevant quantities in a more robust and indeed controlled fashion than via the ML. Here we focus on
$$p = p(b, c) = P\{Y \in [L_1, L_2]\} = \int_{L_1}^{L_2} f(y, b, c)\,{\rm d}y,$$
for age groups $[L_1, L_2]$ of interest. The hybrid log-likelihood is hence
$$h_n(b, c) = (1 - a)\ell_n(b, c) + a \log R_n(p(b, c)),$$
with $R_n(p)$ being the EL associated with $m(y, p) = I\{y \in [L_1, L_2]\} - p$. We may then, for each $a$, maximise this function and read off both the HL estimates $(\hat b_a, \hat c_a)$ and the consequent $\hat p_a = p(\hat b_a, \hat c_a)$. Figure 3(b) displays this $\hat p_a$, as a function of $a$, for the age group [9.5, 20.5]. For $a = 0$ we have the ML based estimate 0.251, and with increasing $a$ there is more weight to the EL, which has the point estimate 0.171.

To decide on a good balance, recipes of Section 4 may be appealed to. The simplest of these, relatively speaking, is that associated with (16), where we numerically compute $\kappa_a = \{c^{\rm t}(J^*)^{-1} K^* (J^*)^{-1} c\}^{1/2}$ for each $a$, at the ML position in the parameter space of $(b, c)$, and with $J^*$ and $K^*$ from (7). The loss of efficiency $\kappa_a/\kappa_0$ is quite small for small $a$, and is at level 1.10 for $a = 0.61$.
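For an indicator-type estimating function such as $m(y, p) = I\{y \in [L_1, L_2]\} - p$, the EL part of the hybrid log-likelihood has a closed form, assuming the usual definition of $R_n$ as the maximum of $\prod_i n w_i$ over probability weights satisfying $\sum_i w_i m(y_i, p) = 0$. With $k$ observations inside the interval and $\hat p = k/n$, one gets $\log R_n(p) = k \log(p/\hat p) + (n - k)\log\{(1 - p)/(1 - \hat p)\}$. The Python sketch below implements this and the convex combination with a user-supplied parametric log-likelihood value. The function names and the toy data are hypothetical and are not the Egyptian life-length data.

```python
import math
from typing import Sequence


def log_el_interval(y: Sequence[float], lo: float, hi: float, p: float) -> float:
    """log R_n(p) for m(y, p) = I{lo <= y <= hi} - p (closed form)."""
    n = len(y)
    k = sum(1 for v in y if lo <= v <= hi)
    if not 0 < k < n:
        raise ValueError("need observations both inside and outside the interval")
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    p_hat = k / n
    # Optimal EL weights: p / k inside the interval, (1 - p) / (n - k) outside.
    return k * math.log(p / p_hat) + (n - k) * math.log((1.0 - p) / (1.0 - p_hat))


def hybrid_loglik(loglik_value: float, log_el_value: float, a: float) -> float:
    """h_n = (1 - a) * l_n + a * log R_n, for a balance parameter a in [0, 1)."""
    return (1.0 - a) * loglik_value + a * log_el_value


# Toy data (hypothetical): three of eight values fall in [9.5, 20.5].
toy_y = [3.0, 12.0, 15.0, 25.0, 40.0, 18.0, 60.0, 7.0]
at_p_hat = log_el_interval(toy_y, 9.5, 20.5, 0.375)  # p equal to k/n
at_quarter = log_el_interval(toy_y, 9.5, 20.5, 0.25)
print(at_p_hat, at_quarter)
```

Because $\log R_n(p)$ is zero at $p = \hat p$ and negative elsewhere, the EL term pulls the model-based $p(b, c)$ towards the empirical proportion as $a$ increases, without any inner Lagrange optimisation in this special case.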
For $a = 0.61$, where confidence intervals are stretched 10% compared to the gamma-model-based ML solution, we find $\hat p_a$ equal to 0.232, with estimated standard deviation $\hat\kappa_a/\sqrt{n} = 0.188/\sqrt{n} = 0.016$. Similarly the HL machinery may be put to work for other age intervals, for each such using $p = P\{Y \in [L_1, L_2]\}$ as both control and focus, and for models other than the gamma. We may employ the HL with a collection of control parameters, like age groups, before landing on inference for a focus parameter; see Example 3. The more elaborate recipe of selecting $a$, developed in Section 4 and using fic($a$), can also be used here.

6 Further developments and the Supplementary Material

Various concluding remarks and extra developments are placed in the article's Supplementary Material section. In particular, proofs of Lemma 1, Theorems 1 and 2 and Corollary 1 are given there. Other material involves extension of the basic HL construction to regression type data, in Section S.5; log-HL-profiling operations and a deviance function, leading to a full confidence curve for a focus parameter, in Section S.6; an implicit goodness-of-fit test for the parametric vehicle model, in Section S.7; and a related but different hybrid likelihood construction, in Section S.8.

References

Chang, J., Guo, J. & Tang, C. Y. (2017). Peter Hall's contribution to empirical likelihood. Statistica Sinica xx, xx–xx.
Choi, E., Hall, P. & Presnell, B. (2000). Rendering parametric procedures more robust by empirically tilting the model. Biometrika 87, 453–465.
Claeskens, G. & Hjort, N. L. (2008). Model Selection and Model Averaging. Cambridge: Cambridge University Press.
DiCiccio, T., Hall, P. & Romano, J. (1989). Comparison of parametric and empirical likelihood functions. Biometrika 76, 465–476.
Ferguson, T. S. (1996). A Course in Large Sample Theory. Melbourne: Chapman & Hall.
Hjort, N. L. & Claeskens, G.
(2003). Frequentist model average estimators [with discussion]. Journal of the American Statistical Association 98, 879–899.
Hjort, N. L., McKeague, I. W. & Van Keilegom, I. (2009). Extending the scope of empirical likelihood. Annals of Statistics 37, 1079–1111.
Hjort, N. L. & Pollard, D. (1994). Asymptotics for minimisers of convex processes. Tech. rep., Department of Mathematics, University of Oslo.
Molanes López, E., Van Keilegom, I. & Veraverbeke, N. (2009). Empirical likelihood for non-smooth criterion function. Scandinavian Journal of Statistics 36, 413–432.
Owen, A. (2001). Empirical Likelihood. London: Chapman & Hall/CRC.
Pearson, K. (1902). On the change in expectation of life in man during a period of circa 2000 years. Biometrika 1, 261–264.
Schweder, T. & Hjort, N. L. (2016). Confidence, Likelihood, Probability: Statistical Inference with Confidence Distributions. Cambridge: Cambridge University Press.
Sherman, R. P. (1993). The limiting distribution of the maximum rank correlation estimator. Econometrica 61, 123–137.
Stute, W. (1982). The oscillation behavior of empirical processes. Annals of Probability 10, 86–107.
van der Vaart, A. W. (1998). Asymptotic Statistics. Cambridge: Cambridge University Press.
van der Vaart, A. W. & Wellner, J. A. (1996). Weak Convergence and Empirical Processes. New York: Springer-Verlag.

Supplementary material to 'Hybrid combinations of parametric and empirical likelihoods'

Nils Lid Hjort, Ian W. McKeague, and Ingrid Van Keilegom
University of Oslo, Columbia University, and KU Leuven

This supplementary material contains the following sections. Sections S.1, S.2, S.3, S.4 give the technical proofs of Lemma 1, Theorem 1, Corollary 1 and Theorem 2. Then Section S.5 crucially indicates how the HL methodology can be lifted from the i.i.d.
case to regression type models, whereas a Wilks type theorem based on HL-profiling, useful for constructing confidence curves for focus parameters, is developed in Section S.6. An implicit goodness-of-fit test for the parametric working model is identified in Section S.7. Finally Section S.8 describes an alternative hybrid approach, related to, but different from the HL. This alternative method is first-order equivalent to the HL method inside $O(1/\sqrt{n})$ neighbourhoods of the parametric vehicle model, but not at farther distances.

S.1 Proof of Lemma 1

The proof is based on techniques and arguments related to those of Hjort et al. (2009), but with necessary extensions and modifications. For the maximiser of $G_n(\cdot, s)$, write $\hat\lambda_n(s) = \|\hat\lambda_n(s)\|\,u(s)$ for a vector $u(s)$ of unit length. With arguments as in Owen (2001, p. 220),
$$\|\hat\lambda_n(s)\|\,\{u(s)^{\rm t} W_n(s) u(s) - E_n(s)\,u(s)^{\rm t} V_n(s)\} \le u(s)^{\rm t} V_n(s),$$
with $E_n(s) = n^{-1/2} \max_{i \le n} \|m_{i,n}(s)\|$, which tends to zero in probability uniformly in $s$ by assumption (iii). Also from assumption (i), $\sup_{s \in S} |u(s)^{\rm t} V_n(s)| = O_{\rm pr}(1)$. Moreover, $u(s)^{\rm t} W_n(s) u(s) \ge e_{n,\min}(s)$, the smallest eigenvalue of $W_n(s)$, which converges in probability to the smallest eigenvalue of $W$, and this is bounded away from zero by assumption (ii). It follows that $\|\hat\lambda_n(s)\| = O_{\rm pr}(1)$ uniformly in $s$. Also, $\lambda_n^*(s) = W_n(s)^{-1} V_n(s)$ is bounded in probability uniformly in $s$. Via $\log(1 + x) = x - \tfrac12 x^2 + \tfrac13 x^3 h(x)$, where $|h(x)| \le 2$ for $|x| \le \tfrac12$, write
$$G_n(\lambda, s) = 2\lambda^{\rm t} V_n(s) - \tfrac12 \lambda^{\rm t} W_n(s)\lambda + r_n(\lambda, s) = G_n^*(\lambda, s) + r_n(\lambda, s).$$
For arbitrary $c > 0$, consider any $\lambda$ with $\|\lambda\| \le c$.
Then we find
$$|r_n(\lambda, s)| \le \tfrac23 \sum_{i=1}^n |\lambda^{\rm t} m_{i,n}(s)/\sqrt{n}|^3\,|h(\lambda^{\rm t} m_{i,n}(s)/\sqrt{n})| \le \tfrac43 E_n(s)\,\|\lambda\|\,\lambda^{\rm t} W_n(s)\lambda \le \tfrac43 E_n(s)\,c^3 e_{n,\max}(s),$$
in terms of the largest eigenvalue of $W_n(s)$, as long as $c E_n(s) \le \tfrac12$. Choose $c$ big enough to have both $\hat\lambda_n(s)$ and $\lambda_n^*(s)$ inside this ball for all $s$ with probability exceeding $1 - \varepsilon'$, for a preassigned small $\varepsilon'$. Then,
$$\begin{aligned}
P\Bigl\{\sup_{s \in S} |\max_\lambda G_n(\lambda, s) - \max_\lambda G_n^*(\lambda, s)| \ge \varepsilon\Bigr\}
&\le P\Bigl\{\sup_{s \in S} \sup_{\|\lambda\| \le c} |G_n(\lambda, s) - G_n^*(\lambda, s)| \ge \varepsilon\Bigr\} \\
&\le P\Bigl\{\tfrac43 c^3 \sup_{s \in S} (E_n(s)\,e_{n,\max}(s)) \ge \varepsilon\Bigr\} + P\Bigl\{\sup_{s \in S} \|\hat\lambda_n(s)\| > c\Bigr\} \\
&\quad + P\Bigl\{\sup_{s \in S} \|\lambda_n^*(s)\| > c\Bigr\} + P\Bigl\{c \sup_{s \in S} E_n(s) > \tfrac12\Bigr\}.
\end{aligned}$$
The lim-sup of the probability sequence on the left hand side is hence bounded by $4\varepsilon'$. We have proven that $\sup_{s \in S} |\max_\lambda G_n(\lambda, s) - \max_\lambda G_n^*(\lambda, s)| \to_{\rm pr} 0$.

S.2 Proof of Theorem 1

We work with the two components of (5) separately. First, with $U_n = n^{-1/2} \sum_{i=1}^n u(Y_i, \theta_0)$, which tends to $U_0 \sim {\rm N}_p(0, J)$, cf. (6),
$$\ell_n(\theta_0 + s/\sqrt{n}) - \ell_n(\theta_0) = s^{\rm t} U_n - \tfrac12 s^{\rm t} J s + \varepsilon_n(s), \quad \text{with } \sup_{s \in S} |\varepsilon_n(s)| \to_{\rm pr} 0, \tag{19}$$
under various sets of mild regularity conditions. If $\log f(y, \theta)$ is concave in $\theta$, no other conditions are required, beyond finiteness of the Fisher information matrix $J$, see Hjort & Pollard (1994). Without concavity, but assuming the existence of third order derivatives $D_{i,j,k}(y, \theta) = \partial^3 \log f(y, \theta)/\partial\theta_i \partial\theta_j \partial\theta_k$, it is straightforward via Taylor expansion to verify (19) under the condition that $\sup_{\theta \in \mathcal{N}} \max_{i,j,k} |D_{i,j,k}(Y, \theta)|$ has finite mean, with $\mathcal{N}$ a neighbourhood around $\theta_0$. This condition is met for most of the usually employed parametric families.
We finally point out that (19) can be established without third order derivatives, with a mild continuity condition on the second derivatives, see e.g. Ferguson (1996, Ch. 18).

Secondly, we shall see that Lemma 1 may be applied, implying
$$\log R_n(\mu(\theta_0 + s/\sqrt{n})) = -\tfrac12 V_n(s)^{\rm t} W_n(s)^{-1} V_n(s) + o_{\rm pr}(1), \tag{20}$$
uniformly in $s \in S$. For this to be valid it is in view of Lemma 1 sufficient to check condition (i) of that lemma (we assumed conditions (ii) and (iii)). Here (i) follows using (4), since
$$\sup_s \|V_n(s)\| = \sup_s \|V_n(0) + \xi_n s\| + o_{\rm pr}(1) \to_d \sup_s \|V_0 + \xi_0 s\|.$$
Hence, $\sup_s \|V_n(s)\| = O_{\rm pr}(1)$. From these efforts we find
$$\begin{aligned}
\log R_n(\mu(\theta_0 + s/\sqrt{n})) - \log R_n(\mu(\theta_0))
&\to_d -\tfrac12 (V_0 + \xi_0 s)^{\rm t} W^{-1} (V_0 + \xi_0 s) + \tfrac12 V_0^{\rm t} W^{-1} V_0 \\
&= -V_0^{\rm t} W^{-1} \xi_0 s - \tfrac12 s^{\rm t} \xi_0^{\rm t} W^{-1} \xi_0 s.
\end{aligned}$$
This convergence also takes place jointly with (19), in view of (6), and we arrive at the conclusion of the theorem.

S.3 Proof of Corollary 1

Corollary 1 is valid under the following conditions, where $\Gamma(\cdot)$ is defined in (8):

(A1) For all $\varepsilon > 0$, $\sup_{\|\theta - \theta_0\| > \varepsilon} \Gamma(\theta) < \Gamma(\theta_0)$.

(A2) The class $\{y \mapsto \frac{\partial}{\partial\theta} \log f(y, \theta) : \theta \in \Theta\}$ is $P$-Donsker (see e.g. van der Vaart & Wellner (1996, Ch. 2)).

(A3) Conditions (C0)–(C2) and (C4)–(C6) in Molanes López et al. (2009) are valid, with their function $g(X, \mu_0, \nu)$ replaced by our function $m(Y, \mu(\theta))$, with $\theta$ playing the role of $\nu$, except that instead of demanding boundedness of our function $m(Y, \mu)$ we assume merely that the class
$$\Bigl\{y \mapsto \frac{m(y, \mu)\,m(y, \mu)^{\rm t}}{\{1 + \xi^{\rm t} m(y, \mu)\}^2}\Bigr\},$$
with $\mu$ and $\xi$ in a neighbourhood of $\mu(\theta_0)$ and 0, is $P$-Donsker (this is a much milder condition than boundedness).
First note that $\Gamma_n(\theta)$ can be written as
$$\Gamma_n(\theta) = (1 - a)\,n^{-1} \sum_{i=1}^n \{\log f(Y_i, \theta) - \log f(Y_i, \theta_0)\} - a\,n^{-1} \sum_{i=1}^n \log\{1 + \hat\xi(\theta)^{\rm t} m(Y_i, \mu(\theta))\},$$
where $\hat\xi(\theta)$ is the solution of
$$n^{-1} \sum_{i=1}^n \frac{m(Y_i, \mu(\theta))}{1 + \xi^{\rm t} m(Y_i, \mu(\theta))} = 0.$$
Note that this corresponds with the formula for $\log R_n$ given below Lemma 1, but with $\lambda(\theta)/\sqrt{n}$ relabelled as $\xi(\theta)$. That the $\hat\xi(\theta)$ solution is unique follows from considerations along the lines of Molanes López et al. (2009, p. 415).

To prove the consistency part, we make use of Theorem 5.7 in van der Vaart (1998). It suffices by condition (A1) to show that $\sup_\theta |\Gamma_n(\theta) - \Gamma(\theta)| \to_{\rm pr} 0$, which we show separately for the ML and the EL part. For the parametric part we know that $n^{-1}\ell_n(\theta) - {\rm E} \log f(Y, \theta)$ is $o_{\rm pr}(1)$ uniformly in $\theta$ by condition (A2). For the EL part, the proof is similar to the proof of Lemma 4 in Molanes López et al. (2009) (except that no rate is required here and that the convergence is uniform in $\theta$), and hence details are omitted.

Next, to prove statement (ii) of the corollary, we make use of Theorems 1 and 2 in Sherman (1993) about the asymptotics for the maximiser of a (not necessarily concave) criterion function, and the results in Molanes López et al. (2009), who use the Sherman (1993) paper to establish asymptotic normality and a version of the Wilks theorem in an EL context with nuisance parameters. For the verification of the conditions of Theorem 1 (which shows root-$n$ consistency of $\hat\theta_{\rm hl}$) and Theorem 2 (which shows asymptotic normality of $\hat\theta_{\rm hl}$) in Sherman (1993), we consider separately the ML part and the EL part. We note that Theorem 1 in Sherman (1993) requires consistency of the estimator, which we here have established by arguments above.
For the EL part all the work is already done using our Theorem 1 and Lemmas 1–6 in Molanes López et al. (2009), which are valid under condition (A3). Next, the conditions of Theorems 1 and 2 in Sherman (1993) for the ML part follow using standard arguments from parametric likelihood theory and condition (A2). It now follows that $\hat\theta_{\rm hl}$ is asymptotically normal, and its asymptotic variance is equal to $(J^*)^{-1} K^* (J^*)^{-1}$ using Theorem 1.

Finally, claim (iii) of the corollary follows from a combination of Theorem 1 with $s = \sqrt{n}(\hat\theta_{\rm hl} - \theta_0)$ and the convergence in distribution of $\sqrt{n}(\hat\theta_{\rm hl} - \theta_0)$ to $(J^*)^{-1} U^*$. Indeed,
$$2\{h_n(\hat\theta_{\rm hl}) - h_n(\theta_0)\} \to_d 2\{(U^*)^{\rm t} (J^*)^{-1} U^* - \tfrac12 (U^*)^{\rm t} (J^*)^{-1} J^* (J^*)^{-1} U^*\} = (U^*)^{\rm t} (J^*)^{-1} U^*,$$
and this finishes the proof of the corollary.

S.4 Proof of Theorem 2

To prove Theorem 2, we revisit several previous arguments for the $A_n(\cdot) \to_d A(\cdot)$ part of Theorem 1, but now needing to extend these to the case of the model departure parameter $\delta$ being present. First, we have
$$\ell_n(\theta_0 + s/\sqrt{n}) - \ell_n(\theta_0) = U_n^{\rm t} s - \tfrac12 s^{\rm t} J_n s + o_{\rm pr}(1) \to_d (U + J_{01}\delta)^{\rm t} s - \tfrac12 s^{\rm t} J_{00} s.$$
This is essentially since $U_n = n^{-1/2} \sum_{i=1}^n u(Y_i, \theta_0)$ now is seen to have mean $J_{01}\delta$, but the same variance, up to the required order. We need a parallel result for $V_{n,0} = n^{-1/2} \sum_{i=1}^n m(Y_i, \mu(\theta_0))$ under $f_{\rm true}$. Here
$${\rm E}_{\rm true}\, m(Y, \mu(\theta_0)) = \int m(y, \mu(\theta_0))\, f(y, \theta_0)\,\{1 + S(y)^{\rm t}\delta/\sqrt{n} + o(1/\sqrt{n})\}\,{\rm d}y = 0 + K_{01}\delta/\sqrt{n} + o(1/\sqrt{n}),$$
yielding $V_{n,0} \to_d V_0 + K_{01}\delta$.
Along with some further details, this leads to the required extension of the $A_n \to_d A$ part of Theorem 1 and its proof, to the present local neighbourhood model state of affairs:
$$A_n(s) = h_n(\theta_0 + s/\sqrt{n}) - h_n(\theta_0) \to_d A(s) = s^{\rm t} U^*_{\rm plus} - \tfrac12 s^{\rm t} J^* s,$$
with $J^*$ as defined earlier and with
$$U^*_{\rm plus} = (1 - a)(U + J_{01}\delta) - a\,\xi_0^{\rm t} W^{-1} (V_0 + K_{01}\delta) = U^* + L_{01}\delta.$$
Following and then modifying the technical details of the proof of Corollary 1, we arrive at
$$\sqrt{n}(\hat\theta_{\rm hl} - \theta_0) \to_d (J^*)^{-1}(U^* + L_{01}\delta) \sim {\rm N}_p((J^*)^{-1} L_{01}\delta, (J^*)^{-1} K^* (J^*)^{-1}),$$
as required.

S.5 The HL for regression models

Our HL machinery can be lifted from the i.i.d. framework to regression. The following example illustrates the general idea. Consider the normal linear regression model for data $(x_i, y_i)$, with covariate vector $x_i$ of dimension say $d$, and with $y_i$ having mean $x_i^{\rm t}\beta$. The ML solution is associated with the estimating equation ${\rm E}\,m(Y, X, \beta) = 0$, where $m(y, x, \beta) = (y - x^{\rm t}\beta)x$. The underlying regression parameter can be expressed as $\beta = ({\rm E}\,XX^{\rm t})^{-1}\,{\rm E}\,XY$, involving also the covariate distribution. Consider now a subvector $x_0$, of dimension say $d_0 < d$, and the associated estimating function $m_0(y, x, \gamma) = (y - x_0^{\rm t}\gamma)x_0$. This invites the HL construction $(1 - a)\ell_n(\beta) + a \log R_n(\gamma(\beta))$. Here $\ell_n(\beta)$ is the ordinary parametric log-likelihood; $R_n(\gamma)$ is the EL associated with $m_0$; and $\gamma(\beta)$ is $({\rm E}\,X_0 X_0^{\rm t})^{-1}\,{\rm E}\,X_0 Y$ seen through the lens of the smaller regression, where ${\rm E}\,X_0 Y = {\rm E}\,X_0 X^{\rm t}\beta$. This leads to inference about $\beta$ where it is taken into account that regression with respect to the $x_0$ components is of particular importance.

S.6 Confidence curve for a focus parameter

For a focus parameter $\psi = \psi(\theta)$, consider the profiled log-hybrid-likelihood function $h_{n,{\rm prof}}(\psi) = \max\{h_n(\theta) : \psi(\theta) = \psi\}$.
Note that $h_{n,\max} = h_n(\hat\theta_{\rm hl})$ is also the same as $h_{n,{\rm prof}}(\hat\psi_{\rm hl})$. We shall find use for the hybrid deviance function associated with $\psi$,
$$\Delta_n(\psi) = 2\{h_{n,{\rm prof}}(\hat\psi_{\rm hl}) - h_{n,{\rm prof}}(\psi)\}.$$
Essentially relying on Theorem 1, which involves matrices $J^*$ and $K^*$ and the limit variable $U^* \sim {\rm N}_p(0, K^*)$, we show below that
$$\Delta_n(\psi_0) \to_d \Delta = \frac{\{c^{\rm t} (J^*)^{-1} U^*\}^2}{c^{\rm t} (J^*)^{-1} c} \sim k\chi_1^2, \tag{21}$$
where $k = c^{\rm t} (J^*)^{-1} K^* (J^*)^{-1} c \,/\, c^{\rm t} (J^*)^{-1} c$. Here $c = \partial\psi(\theta_0)/\partial\theta$, as in (9). Estimating this $k$ via plug-in then leads to the full confidence curve ${\rm cc}(\psi) = \Gamma_1(\Delta_n(\psi)/\hat k)$, see Schweder & Hjort (2016, Chs. 2–3), often improving on the usual symmetric normal-approximation based confidence intervals. Here $\Gamma_1(\cdot)$ is the distribution function of the $\chi_1^2$.

To show (21), we go via a profiled version of $A_n(s)$ in (5), namely $B_n(t) = h_{n,{\rm prof}}(\psi_0 + t/\sqrt{n}) - h_{n,{\rm prof}}(\psi_0)$, where $\psi_0 = \psi(\theta_0)$. For $B_n(t)$ and $\Delta_n(\psi)$ we have the following.

Theorem 3. Assume the conditions of Theorem 1 are in force. With $\psi_0 = \psi(\theta_0)$ the true parameter value, and $c = \partial\psi(\theta_0)/\partial\theta$, we have $B_n(t) \to_d B(t) = \{c^{\rm t} (J^*)^{-1} U^* t - \tfrac12 t^2\}/c^{\rm t} (J^*)^{-1} c$. Also,
$$\Delta_n(\psi_0) = 2 \max B_n \to_d \Delta = 2 \max B = \frac{\{c^{\rm t} (J^*)^{-1} U^*\}^2}{c^{\rm t} (J^*)^{-1} c}.$$

It is clear that $\Delta \sim k\chi_1^2$, with the $k$ given above. Proving the theorem is achieved via Theorem 1, along the lines of a similar type of result for log-likelihood profiling given in Schweder & Hjort (2016, Section 2.4), and we leave out the details.

Remark 1. The special case of $a = 0$ for the HL construction corresponds to parametric ML estimation, and results reached above specialise to the classical results $\sqrt{n}(\hat\theta_{\rm ml} - \theta_0) \to_d {\rm N}_p(0, J^{-1})$, $2\{\ell_{n,\max} - \ell_n(\theta_0)\} \to_d \chi_p^2$, and $\sqrt{n}(\hat\psi_{\rm ml} - \psi_0) \to_d {\rm N}(0, c^{\rm t} J^{-1} c)$.
Theorem 3 is then the Wilks theorem for the profiled log-likelihood function. The other extreme case is that of $a \to 1$, with the EL applied to $\mu = \mu(\theta)$. Here Theorem 1 yields $U^* = -\xi_0^{\rm t} W^{-1} V_0$, and with both $J^*$ and $K^*$ equal to $\xi_0^{\rm t} W^{-1} \xi_0$. This case corresponds to a version of the classic EL chi-squared result, now filtered through the parametric model, and with $-2 \log R_n(\mu(\theta_0)) \to_d (U^*)^{\rm t} (J^*)^{-1} U^* \sim \chi_p^2$. Also, $\sqrt{n}(\hat\psi_{\rm el} - \psi_0) \to_d {\rm N}(0, \kappa^2)$, with $\kappa^2 = c^{\rm t} (\xi_0^{\rm t} W^{-1} \xi_0)^{-1} c$; here $\hat\psi_{\rm el} = \psi(\hat\theta_{\rm el})$ in terms of the EL estimator, the maximiser of $R_n(\mu(\theta))$.

S.7 An implied goodness-of-fit test for the parametric model

Methods developed in Section 4, in particular those associated with estimating the mean squared error of the final estimator, lend themselves nicely to a goodness-of-fit test for the parametric working model, as follows. We accept the parametric model if the fic($a$) criterion of Section 4 tells us that $\hat a = 0$ is the best balance, and if $\hat a > 0$ the model is rejected. This model test can be accurately examined, by working out an expression for the derivative of fic($a$) at $a = 0$, say $\hat Z_n^0$; we reject the model if $\hat Z_n^0 > 0$ (since then and only then is $\hat a$ positive). Here $\hat Z_n^0$ is the estimated version of the limit experiment variable $Z^0$, which we shall identify below, as a function of $D \sim {\rm N}_r(\delta, Q)$, cf. (18).

Let us write $\omega_{\rm hl}(a) = \omega + a\nu + O(a^2)$. Since $\tau_{0,{\rm hl}}(a)^2 = \tau_0^2 + O(a^2)$, the derivative of
$${\rm fic}(a) = (\omega + a\nu)^{\rm t} (DD^{\rm t} - Q)(\omega + a\nu) + \tau_0^2 + O(a^2)$$
with respect to $a$, at zero, is seen to be $Z^0 = 2\omega^{\rm t}(DD^{\rm t} - Q)\nu$. Hence the limit experiment version of the test is to reject the parametric model if $(\omega^{\rm t} D)(\nu^{\rm t} D) > \omega^{\rm t} Q \nu$, or
$$Z = \frac{\omega^{\rm t} D}{(\omega^{\rm t} Q \omega)^{1/2}}\,\frac{\nu^{\rm t} D}{(\nu^{\rm t} Q \nu)^{1/2}} > \rho = \frac{\omega^{\rm t} Q \nu}{(\omega^{\rm t} Q \omega)^{1/2} (\nu^{\rm t} Q \nu)^{1/2}}.$$
Under the null hypothesis of the model, $Z$ is equal in distribution to $X_1 X_2$, where $(X_1, X_2)$ is a binormal pair, with zero means, unit variances, and correlation $\rho$. The implied significance level, of the implied goodness-of-fit test, is hence $\alpha = P\{X_1 X_2 > \rho\}$, which can be read off via numerical integration or simulation, for a given $\rho$. The $\nu$ quantity can be identified with a bit of algebraic work, and then estimated consistently from the data. We note that for the special case of $m(y, \mu) = g(y) - \mu$, and with focus on this mean parameter $\mu = {\rm E}\,g(Y)$, then $\nu$ becomes proportional to $\omega$. The test above is then equivalent to rejecting the model if $(\hat\omega^{\rm t} D_n)^2/\hat\omega^{\rm t} \hat Q \hat\omega > 1$, which under the null model happens with probability converging to $\alpha = P\{\chi_1^2 > 1\} = 0.317$.

S.8 A related hybrid estimation method

In earlier sections we have motivated and developed theory for the hybrid likelihood and the HL estimator. A crucial factor has been the quadratic approximation (20). The latter is essentially valid within a $O(1/\sqrt{n})$ neighbourhood around the true data generating mechanism, and has yielded the results of Sections 2 and 4. A related though different strategy is however to take this quadratic approximation as the starting point. The suggestion is then to define the alternative hybrid estimator as the maximiser $\tilde\theta$ of
$$N_n(\theta) = (1 - a)\ell_n(\theta) - \tfrac12 a\,V_n(\theta)^{\rm t} W_n(\theta)^{-1} V_n(\theta). \tag{22}$$
Under and close to the parametric working model, the HL estimator $\hat\theta$ and the new-HL estimator $\tilde\theta$ are first-order equivalent, in the sense of $\sqrt{n}(\hat\theta - \tilde\theta) \to_{\rm pr} 0$. Of course we could have put up (22) without knowing or caring about EL or HL in the first place, and with different balance weights. But here we are naturally led to the balance weights $1 - a$ for the log-likelihood and $-\tfrac12 a$ for the quadratic form, from the HL construction.
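As a rough sketch of how (22) might be evaluated in practice, the Python code below applies it to the Beta($\theta$, 1) working model with the single control parameter $\mu(\theta) = {\rm E}\,Y^2 = \theta/(\theta + 2)$, so that $m(y, \mu) = y^2 - \mu$. Several choices here are assumptions made for illustration: $V_n(\theta) = n^{-1/2}\sum_i m(Y_i, \mu(\theta))$ and the uncentred $W_n(\theta) = n^{-1}\sum_i m(Y_i, \mu(\theta))^2$, the golden-section search (which presumes a unimodal criterion on the bracket), the bracket itself, and the simulated data. The function names are hypothetical.

```python
import math
import random
from typing import Callable, Sequence


def new_hl_criterion(theta: float, y: Sequence[float], a: float) -> float:
    """N_n(theta) of (22) for Beta(theta, 1) with control mu = E Y^2."""
    n = len(y)
    loglik = n * math.log(theta) + (theta - 1.0) * sum(math.log(v) for v in y)
    mu = theta / (theta + 2.0)           # E Y^2 under Beta(theta, 1)
    m = [v * v - mu for v in y]          # estimating function values
    v_n = sum(m) / math.sqrt(n)          # assumed scaling: n^{-1/2} * sum
    w_n = sum(x * x for x in m) / n      # assumed uncentred second moment
    return (1.0 - a) * loglik - 0.5 * a * v_n * v_n / w_n


def golden_max(f: Callable[[float], float], lo: float, hi: float,
               tol: float = 1e-8) -> float:
    """Golden-section search for a maximiser of a unimodal f on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    c = hi - inv_phi * (hi - lo)
    d = lo + inv_phi * (hi - lo)
    fc, fd = f(c), f(d)
    while hi - lo > tol:
        if fc > fd:                      # maximiser lies in [lo, d]
            hi, d, fd = d, c, fc
            c = hi - inv_phi * (hi - lo)
            fc = f(c)
        else:                            # maximiser lies in [c, hi]
            lo, c, fc = c, d, fd
            d = lo + inv_phi * (hi - lo)
            fd = f(d)
    return 0.5 * (lo + hi)


random.seed(1)
y = [random.betavariate(2.0, 1.0) for _ in range(200)]  # simulated data

theta_ml_closed = -len(y) / sum(math.log(v) for v in y)  # ML for Beta(theta, 1)
theta_a0 = golden_max(lambda t: new_hl_criterion(t, y, 0.0), 0.05, 20.0)
theta_half = golden_max(lambda t: new_hl_criterion(t, y, 0.5), 0.05, 20.0)
print(theta_ml_closed, theta_a0, theta_half)
```

For $a = 0$ the criterion reduces to the log-likelihood, so the numerical maximiser should agree with the closed-form ML estimate, which gives a simple check of the search routine. No inner Lagrange optimisation is needed for any $a$.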
The advantage of (22) is partly that it is easier computationally, without a layer of Lagrange maximisation for each $\theta$. More importantly, it manages well also outside the $O(1/\sqrt{n})$ neighbourhoods of the working model. The new-HL estimator tends under weak regularity conditions to the maximiser $\theta_0$ of the limit function of $N_n(\theta)/n$, which may be written

$$N(\theta) = (1 - a) \int f \log f_\theta \,{\rm d}y - \tfrac12 a\, v_\theta^{\rm t} (\Sigma_\theta + v_\theta v_\theta^{\rm t})^{-1} v_\theta,$$

in terms of $v_\theta = {\rm E}_f\, m(Y, \mu(\theta))$ and $\Sigma_\theta = {\rm Var}_f\, m(Y, \mu(\theta))$. Note next that

$$(A + xx^{\rm t})^{-1} = A^{-1} - \frac{A^{-1} x x^{\rm t} A^{-1}}{1 + x^{\rm t} A^{-1} x},$$

for invertible $A$ and vector $x$ of appropriate dimension. This leads to the identity

$$x^{\rm t} (A + xx^{\rm t})^{-1} x = \frac{x^{\rm t} A^{-1} x}{1 + x^{\rm t} A^{-1} x}.$$

Hence the $\theta_0$ associated with the new-HL method is the minimiser of the statistical distance function

$$d_a(f, f_\theta) = (1 - a)\,{\rm KL}(f, f_\theta) + \tfrac12 a\, \frac{v_\theta^{\rm t} \Sigma_\theta^{-1} v_\theta}{1 + v_\theta^{\rm t} \Sigma_\theta^{-1} v_\theta} \tag{23}$$

from the real $f$ to the modelled $f_\theta$; here ${\rm KL}(f, f_\theta) = \int f \log(f/f_\theta)\,{\rm d}y$ is the Kullback–Leibler distance. For $a$ close to zero, the new-HL method is essentially maximising the log-likelihood function, which amounts to attempting to minimise the KL divergence. For $a$ close to 1, the method amounts to minimising an empirical version of $v_\theta^{\rm t} \Sigma_\theta^{-1} v_\theta$, which means making $v_\theta = {\rm E}_f\, m(Y, \mu(\theta))$ close to zero. This is also what the empirical likelihood is aiming at.

References

Ferguson, T.S. (1996). A Course in Large Sample Theory. Chapman & Hall, Melbourne.

Hjort, N.L., McKeague, I.W. and Van Keilegom, I. (2009). Extending the scope of empirical likelihood. Annals of Statistics, 37, 1079–1111.

Hjort, N.L. and Pollard, D. (1994). Asymptotics for minimisers of convex processes. Technical report, Department of Mathematics, University of Oslo.

Molanes López, E., Van Keilegom, I. and Veraverbeke, N. (2009).
Empirical likelihood for non-smooth criterion function. Scandinavian Journal of Statistics, 36, 413–432.

Owen, A. (2001). Empirical Likelihood. Chapman & Hall/CRC, London.

Schweder, T. and Hjort, N.L. (2016). Confidence, Likelihood, Probability: Statistical Inference with Confidence Distributions. Cambridge University Press, Cambridge.

Sherman, R.P. (1993). The limiting distribution of the maximum rank correlation estimator. Econometrica, 61, 123–137.

van der Vaart, A.W. (1998). Asymptotic Statistics. Cambridge University Press, Cambridge.

van der Vaart, A.W. and Wellner, J.A. (1996). Weak Convergence and Empirical Processes. Springer-Verlag, New York.
