DM4CT: Benchmarking Diffusion Models for Computed Tomography Reconstruction

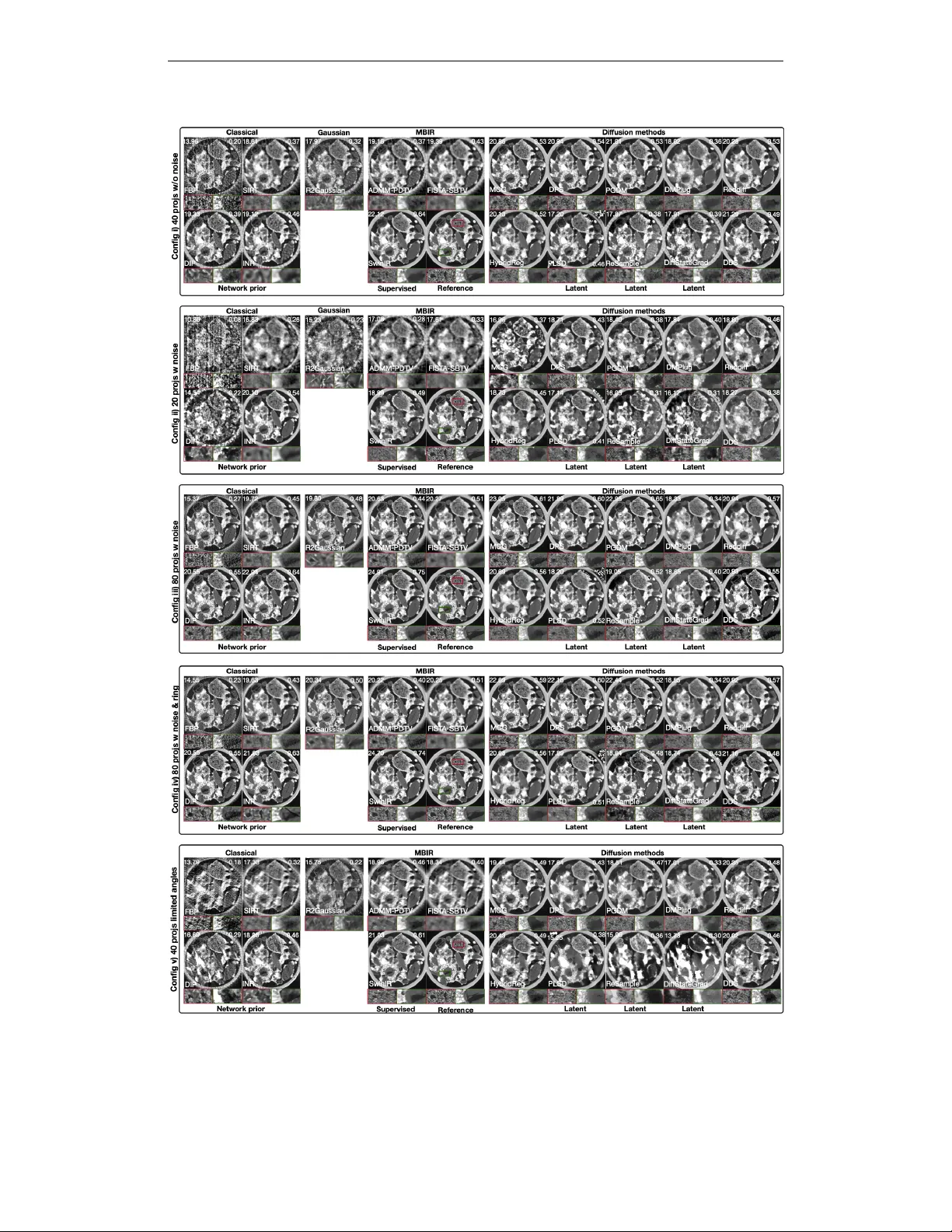

Diffusion models have recently emerged as powerful priors for solving inverse problems. While computed tomography (CT) is theoretically a linear inverse problem, it poses many practical challenges. These include correlated noise, artifact structures,…

Authors: Jiayang Shi, Daniel M. Pelt, K. Joost Batenburg